SpaceX-Anthropic Deal Highlights Compute as AI’s Revenue Bottleneck

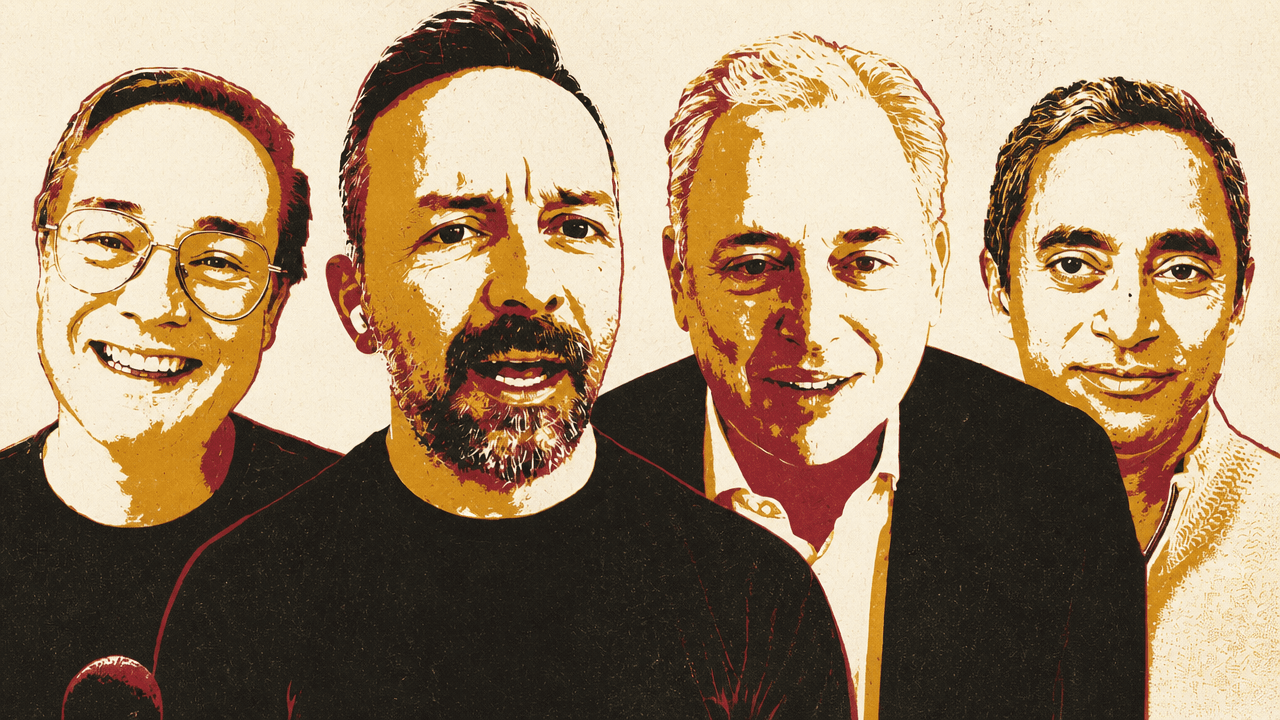

Jason Calacanis, Chamath Palihapitiya, David Sacks, Brad Gerstner · All-In Podcast · Friday, May 8, 2026 · 22 min read

The All-In panel used SpaceX’s compute deal with Anthropic to argue that frontier AI is now being constrained less by demand than by access to power, GPUs and data-center capacity. David Sacks warned that Anthropic’s reported revenue trajectory could make it a historic monopoly if sustained, while Brad Gerstner pushed back that the market is still too early and competitive for pre-emptive regulation. The discussion turned on whether AI safety concerns justify coordination with government or risk becoming an “FDA for AI,” and whether the AI boom will ultimately show up as measurable productivity and profit for customers buying tokens.

Compute, not demand, is setting the pace of frontier AI

The SpaceX-Anthropic compute agreement was treated as more than a capacity lease. Chamath Palihapitiya framed it as confirmation that frontier AI revenue is being governed by supply, not demand. In his view, the revenue performance of Anthropic and OpenAI “has nothing to do with demand” and “is entirely to do with the supply constraints that exist in data centers, and specifically in power.” If those companies had “infinite power,” he said, their revenue curves would probably be even more parabolic.

The agreement shown on screen said Anthropic had signed a deal with SpaceX to use all of the compute capacity at Colossus 1. The document described “more than 300 megawatts of new capacity” and “over 220,000 NVIDIA GPUs” coming within the month, with the additional capacity intended to improve Claude Pro and Claude Max. A second on-screen document said Claude Code’s five-hour rate limits would double for Pro, Max, Team, and seat-based Enterprise plans; peak-hour limit reductions would be removed for Pro and Max accounts; and API rate limits for Claude Opus models would be raised.

For Brad Gerstner, the transaction clarified a possible new layer in SpaceX’s business. He cited a Shaun Maguire post describing SpaceX as a “five-layer cake”: launch, connectivity, compute/hyperscaler, applications/models, and other bets. Gerstner said the Anthropic agreement derisks the compute layer because SpaceX can monetize capacity even before xAI fully converts its own model investments into revenue.

That point mattered because, as Gerstner described it, one question in the SpaceX roadshow has been whether xAI’s revenue trajectory can justify the scale of infrastructure commitments. He estimated the Anthropic deal could generate an incremental $4 billion to $5 billion of revenue this year, on top of analyst estimates in the mid-$20 billions. That was Gerstner’s projection, not a disclosed deal figure. In his view, the revenue would help offset infrastructure costs and subsidize investment in the next generation of Grok.

David Sacks made the same balance-sheet argument in more direct terms. SpaceX already has profitable space, telecommunications, and Starlink businesses, he said, but xAI had “huge losses” because frontier training clusters require large capital commitments before the model produces substantial revenue. The problem is sharper because, in his view, “right now all the revenue is in enterprise, which is to say coding,” and xAI does not yet have a coding product comparable to the leading players. Leasing capacity to Anthropic, Sacks said, turns some of those CapEx commitments into revenue.

The speakers were loose about the corporate boundary among SpaceX, xAI, Colossus, and what Jason Calacanis called “Elon Web Services.” They did not establish a formal new hyperscaler entity. What they described was an emerging Elon-controlled compute business built from data centers, power access, factory-building ability, and AI demand.

They repeatedly returned to Musk’s advantage in securing power and building physical infrastructure. Palihapitiya said Musk “somehow saw the tea leaves before most people,” built to scale, secured power, and now owns a critical asset that can “kingmake” in AI. Gerstner put it more operationally: “there’s nobody better on planet Earth than Elon at converting electrons to tokens.”

Calacanis extended the logic into the broader “Elon Web Services” thesis. He compared the opportunity to AWS, Azure, and Google Cloud, pointing to their large revenue bases and implied standalone valuations. He argued that Musk’s core competencies at Tesla and SpaceX — factories, batteries, energy, satellite connectivity — map unusually well to AI infrastructure. Data centers, he said, are “basically big giant factories,” and Musk has already shown unusual ability to build factories, deploy batteries, and coordinate energy systems.

The on-screen materials reinforced that argument. A table showed cloud annualized revenue estimates for AWS, Azure, and GCP, with AWS around $120 billion, Azure above $100 billion within Microsoft Cloud, and GCP around $80 billion.

| Cloud | Annualized revenue | Growth | Operating margin | Standalone valuation estimate |
|---|---|---|---|---|
| AWS | ~$120B | 20–24% | ~30% | $1.5T–$2.0T |
| Azure | ~$100B+ within $54.5B/qtr Microsoft Cloud | ~40% | ~45% | $1.5T–$2.5T |
| GCP | ~$80B | 63% | ~-17% | $700B–$1.3T |

A Tesla factory floor-space chart showed multiple large Tesla and SpaceX sites. A battery deployment chart showed Tesla’s annual battery deployments reaching 46.7 GWh in 2025, with visible text describing “10-year growth ~1,300x,” cumulative deployments since 2016 of about 127 GWh, and 2025 energy revenue of $12.8 billion.

Calacanis’s more speculative extension was distributed compute: compute in Powerwalls, homes, cars, and eventually orbit. The deal announcement, he noted, also said Anthropic expressed interest in partnering on “multiple gigawatts of orbital AI compute capacity.” A separate visual showed a Realtor.com/DFN article about PulteGroup, NVIDIA, and Span testing “mini data centers” in suburban backyards, which Palihapitiya called “super cool.” The shared premise was that resistance to giant data centers may push compute into more distributed forms; the specific Powerwall and home-compute architecture was Calacanis’s projection.

The valuation thesis stayed tied to that infrastructure argument. Gerstner said SpaceX could trade at “40 to 50 times revenue” in an IPO because Musk has the “largest TAM in the world” and a future pipeline of innovation that no other operator can match. A Polymarket visual shown during the discussion displayed a “SpaceX IPO Closing Market Cap” market with “2.0T+” at 60%. Calacanis described the “Elon variable” as a premium investors assign because of future product creation.

Palihapitiya pushed back on the framing that Apple, Google, Meta, or Amazon are being penalized. In his view, those companies are fairly valued; Musk’s companies receive a premium because “everybody else has stopped innovating.” He went further with his own prediction that Tesla and SpaceX would eventually merge into “all things Elon” or “Elon Corp,” possibly by the end of the year or the middle of the following year. That was presented as his expectation, not as a disclosed plan. His broader point was that markets appear willing to award Musk an innovation premium while treating other large technology companies as mature platforms that know how to “draw more blood from the stone” but no longer create the same broad social benefit.

That compute bottleneck set up the next argument: if access to power and GPUs determines revenue, then the company with the steepest revenue curve may not merely be growing fast. It may be moving toward a market structure that invites monopoly and regulatory-capture questions before the market has settled.

Anthropic’s growth gave Sacks grounds for a monopoly warning

David Sacks used Anthropic’s reported growth to make the strongest claim in the discussion: if its current trajectory persists, Anthropic could become “the most powerful monopoly ever created in human history.” He said Anthropic had grown roughly 10x per year for three years, and that from January 1 to March 31 it grew from roughly $10 billion of ARR to $30 billion. In April, he said, it increased again to $44 billion of ARR. Sacks called that kind of growth at that scale something “nobody in Silicon Valley has ever seen.”

Unless something about their current trajectory changes, Anthropic will be the most powerful monopoly ever created in human history.

The claim was not that Anthropic already had an established monopoly. Sacks’s point was trajectory. If Anthropic exits the year at roughly $100 billion of ARR and continues compounding, he argued, the question becomes whether it reaches $1 trillion of ARR in 2027. That would put it beyond the revenue scale of the largest technology companies, which he said are generally in the hundreds of billions of dollars of annual revenue and growing far more slowly.
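The growth multiples implied by those figures can be checked with simple arithmetic. The sketch below uses only the ARR numbers Sacks cited; the year-end and 2027 values are his hypothetical scenario, not reported results, and the variable and function names are illustrative.

```python
# ARR figures in billions of USD, as cited by Sacks in the discussion.
ARR_JAN_1 = 10.0
ARR_MAR_31 = 30.0
ARR_APRIL = 44.0
# Hypothetical scenario values from Sacks's argument, not reported results.
ARR_YEAR_END_SCENARIO = 100.0
ARR_2027_SCENARIO = 1000.0


def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    return end / start


q1_multiple = growth_multiple(ARR_JAN_1, ARR_MAR_31)
april_multiple = growth_multiple(ARR_MAR_31, ARR_APRIL)
rest_of_year_multiple = growth_multiple(ARR_APRIL, ARR_YEAR_END_SCENARIO)
multiple_2027 = growth_multiple(ARR_YEAR_END_SCENARIO, ARR_2027_SCENARIO)

print(f"Jan 1 to Mar 31:        {q1_multiple:.2f}x")
print(f"Mar 31 to April:        {april_multiple:.2f}x")
print(f"April to year-end case: {rest_of_year_multiple:.2f}x")
print(f"Year-end case to $1T:   {multiple_2027:.2f}x")
```

On these inputs, the $1 trillion scenario requires another full 10x year on top of a $100 billion base, which is the step Gerstner questioned.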

Chamath Palihapitiya supplied a comparison: Apple at $420 billion of 2025 revenue, Microsoft at $300 billion, Alphabet at $390 billion, Amazon at $700 billion, NVIDIA at $190 billion, Meta at $185 billion, and Tesla at $110 billion, for a total of about $2.3 trillion to $2.35 trillion. If Sacks’s trillion-dollar Anthropic scenario came true, he said, “it won’t be the Mag 7, it’ll be the Mag 1.”
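As a quick check on that total, the following sketch sums the seven revenue figures as Palihapitiya stated them and compares the result with the hypothetical $1 trillion scenario. The figures are his round numbers from the show, not audited results, and the names are illustrative.

```python
# 2025 revenue in billions of USD, as cited by Palihapitiya.
revenue_2025_billions = {
    "Apple": 420,
    "Microsoft": 300,
    "Alphabet": 390,
    "Amazon": 700,
    "NVIDIA": 190,
    "Meta": 185,
    "Tesla": 110,
}

# Sacks's hypothetical 2027 ARR for Anthropic, in billions of USD.
anthropic_scenario_billions = 1000

mag7_total = sum(revenue_2025_billions.values())
scenario_share_pct = anthropic_scenario_billions / mag7_total * 100

print(f"Mag 7 combined revenue: ${mag7_total / 1000:.3f} trillion")
print(f"Scenario as a share of that total: {scenario_share_pct:.1f}%")
```

The sum lands inside the $2.3 trillion to $2.35 trillion range he gave, and the scenario would equal a little under half of the group's combined 2025 revenue.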

Brad Gerstner was more cautious. He said “Dario on Dwarkesh” had put combined AI revenue among market leaders at about $1 trillion in 2029, not that a single company would reach that level in 2027. Gerstner agreed that the total addressable market is enormous and that Anthropic’s curve had surprised people, but he warned that the numbers were hard to reconcile from compute supply alone. If Anthropic expects roughly 5 gigawatts by the end of the year and 10 gigawatts by the end of the next, he said, “it’s kind of hard to get to those numbers for a single company.”

Sacks’s counter was that the coding market alone may be large enough to support enormous AI revenue. His understanding was that roughly $1 trillion a year is spent on software developers and related software creation. He did not claim Anthropic would capture that whole market. Instead, he argued that coding tokens could expand the market by increasing the amount of code generated, potentially doubling the software market from $1 trillion to $2 trillion.

Competitive response is the major limit on the monopoly scenario. Sacks said Anthropic had been the “porcupine” focused on one thing while other labs behaved like “foxes” doing many things: image generation, Sora-like products, fantasy character chatbots, and other projects that now look less central. He argued the market has revealed coding and the products built on coding tokens — agents, co-work, and enterprise automation — as the immediate revenue engine. OpenAI, he said, has already pivoted, with strong reports around Codex based on GPT-5.5 and a new base model called Spud. Google, he added, has strong coding talent, and Musk has tied up with Cursor.

A chart shown during the discussion compared OpenAI Codex and Anthropic Claude Code under the title “Codex Installs Skyrocketed in Early May.” The visible values showed OpenAI Codex ranging from roughly 9.9M to 13.1M in the March and April weeks shown, then jumping to 86.1M in the May 3 week; Claude Code ranged from roughly 3.1M to 7.2M across the same period. The chart’s labels used “weekly aggregate installs,” but the visual did not clarify the measurement beyond those labels.

| Week | OpenAI Codex visible value | Anthropic Claude Code visible value |
|---|---|---|
| Mar 15 | 9.9M | 3.6M |
| Mar 22 | 10.2M | 3.7M |
| Mar 29 | 10.8M | 3.3M |
| Apr 5 | 10.2M | 3.1M |
| Apr 12 | 12.9M | 3.1M |
| Apr 19 | 13.1M | 4.1M |
| Apr 26 | 11.3M | 5.8M |
| May 3 | 86.1M | 7.2M |

Sacks treated the chart as evidence that OpenAI could take share, while still emphasizing that the leader on an exponential curve has the easier task: it needs to maintain inertia; challengers need to change something.
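The size of the May jump is easier to see as week-over-week multiples. This is a minimal sketch using the values visible on the chart, in millions. Because the chart did not define "weekly aggregate installs" further, the output describes only the plotted numbers; the function name is illustrative.

```python
weeks = ["Mar 15", "Mar 22", "Mar 29", "Apr 5", "Apr 12", "Apr 19", "Apr 26", "May 3"]
# Values visible on the chart, in millions.
codex = [9.9, 10.2, 10.8, 10.2, 12.9, 13.1, 11.3, 86.1]
claude_code = [3.6, 3.7, 3.3, 3.1, 3.1, 4.1, 5.8, 7.2]


def week_over_week(values):
    """Return each week's value divided by the prior week's value."""
    return [curr / prev for prev, curr in zip(values, values[1:])]


codex_wow = week_over_week(codex)
claude_wow = week_over_week(claude_code)

# The first week has no prior week, so multiples start at the second label.
for week, c, a in zip(weeks[1:], codex_wow, claude_wow):
    print(f"{week:>6}: Codex {c:.2f}x, Claude Code {a:.2f}x")
```

On these values, Codex's final-week multiple is far larger than any earlier weekly move for either product, while Claude Code's rise is steadier across the last three weeks shown.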

Gerstner resisted the monopoly frame altogether. He said it was “ridiculous” to talk about monopoly when AI had “not even left the starting gate.” Five months earlier, he noted, many people thought OpenAI would run away with the market. Google has substantial AI revenue. Amazon, Google, Microsoft, and other incumbents generate huge free cash flow to fund investment. Anthropic and OpenAI, by contrast, remain “fledgling” and “fragile” relative to the platform companies around them. His policy conclusion was simple: let the companies compete and keep Washington out of preemptive winner-picking.

Sacks’s concern was that Washington historically wakes up late to monopoly formation. Once a company has 80% of a market, he said, regulators finally notice. He was not calling for competition to be blocked; he was cautioning that safety rhetoric can become a tool for regulatory capture before the market has fully formed.

To make that point, he offered a Standard Oil analogy. Imagine, he said, if John D. Rockefeller had been better at public relations and named Standard Oil “Safe Oil.” Kerosene could light a house or burn it down; in the wrong hands it could torch a city or make a bomb. A safety-minded Rockefeller could have called for a new government agency to regulate kerosene safety, with testing, licensing, and “common sense regulation.” The public debate might have focused on wick thickness and dangerous independent refiners, while missing that Rockefeller was building the most powerful monopoly of his era.

Sacks’s application to AI was explicit: some “safetyist” policy proposals look like regulatory capture, because they would strengthen the moat around a monopoly or duopoly and slow competitors. He pointed to Anthropic banning OpenClaw from using its models as an example he considered anti-competitive. Palihapitiya did not endorse an enforcement action, but said he would “double click” on it.

That concern over safety rhetoric became the bridge to the policy fight. The issue was not whether powerful cyber models create real risks. Sacks, Gerstner, and Palihapitiya all accepted that some guardrails and coordination may be needed. The dispute was whether the answer should be targeted cooperation or a standing approval regime in Washington.

The ‘FDA for AI’ fight turned on coordination versus approval

A New York Times report shown on screen said President Trump was considering an executive order to create an AI working group, with tech executives and government officials examining “potential oversight procedures,” including a possible review process for new AI models. The visible excerpt said the administration was discussing “a formal government review process for new A.I. models.”

The reported catalyst was Anthropic’s Mythos model. A visual excerpt said Mythos was powerful enough at identifying software vulnerabilities that it could lead to a cybersecurity “reckoning,” and that Anthropic declined to release it publicly. Another excerpt said the White House wanted to avoid political repercussions if a devastating AI-enabled cyberattack occurred.

The analogy that triggered the backlash came from Kevin Hassett on Fox Business. Hassett said the administration was studying a possible executive order to provide a “clear roadmap” for future AIs that could “potentially create vulnerabilities,” so that they would be “released into the wild after they’ve been proven safe,” “just like an FDA drug.” Scott Bessent, in a separate Fox News clip, used different language: the government’s job was to maintain safety while allowing AI companies to innovate, and there was “a very important calculus here between innovation and safety.”

Brad Gerstner said Hassett’s FDA analogy “muddied the waters.” He said he had spoken to Hassett after the clip aired and asked whether FDA was the right analogy. According to Gerstner, Hassett said he meant that the government needed to see models so it could coordinate, harden systems, and prepare intelligence and cyber agencies — not that Washington should approve every model before release. Gerstner said he could not find anyone “on the right” who supported an approval regime.

His objection to an approval regime was categorical. If every model must be shared with a Washington agency and pre-approved before release, he said, the result would be bureaucracy, winner-picking, and a burden on the very competition that has put the United States ahead. He supported better coordination, faster cyber review capacity inside government, and a finite feedback window, but not “an FDA of models sitting in Washington.”

David Sacks agreed that no senior official supported an “FDA for AI.” He called that framing “fake news” and said the idea came from commentary and media gloss rather than White House policy. He pointed to a statement from White House Chief of Staff Susie Wiles shown on screen, which said Trump was “not in the business of picking winners and losers,” and that the goal was to deploy the best and safest technology rapidly while defeating threats.

Sacks also rejected the idea that the administration had adopted a purely laissez-faire posture. He said the White House had released a National AI Regulatory Framework on March 20, which he had worked on, including a four-page bulleted list of legislation the administration would support. The distinction, he said, was between “specific solutions to specific problems” and “a giant power grab by Washington that would squash innovation.”

The specific problem he accepted was cyber. Sacks said Mythos is not unique; OpenAI also has a cyber-capable model, and within three to six months all major frontier labs, including Chinese models, will have similar capabilities. The same tools used for cyber defense can be used for cyber offense. That means systems need to be hardened, code bases need to be scanned, and vulnerabilities need to be patched before hackers acquire or reproduce the capabilities.

His preferred response was not pre-release approval. It was rapid deployment of these models to “good guys”: the U.S. cybersecurity industry, including public companies such as Palo Alto Networks and CrowdStrike and a long tail of startups doing AI-powered penetration testing and related work. For firms with weaker internal security, these companies could become vendors using frontier cyber models as force multipliers.

Chamath Palihapitiya asked whether powerful models should have a KYC wrapper. Gerstner clarified for listeners that KYC means “know your customer.” Sacks said basic KYC makes sense during preview periods for highly capable models: before handing out API access to a model like Mythos, a lab should know whether the user is a company, a known actor, or possibly a state-sponsored attacker. He emphasized that both Anthropic and OpenAI had acted responsibly by not releasing their most capable cyber models publicly, which made pre-release approvals a solution to a problem that did not exist.

Gerstner added that labs already track API use because of anti-distillation efforts, and suspicious activity is flagged and shared with government. In some cases, he said, it may be valuable to allow questionable users to interact with systems so defenders can observe what they are trying to extract. The practical issue, in his account, is not that there is no monitoring; it is that coordination can improve.

Sacks’s larger warning was that real cyber risk can be used opportunistically by AI doomers and ideologues. He invoked “never let a crisis go to waste”: the cyber issue is real and must be handled over the next three to six months, but some actors want to convert it into a permanent Washington approval infrastructure. A Bernie Sanders post shown on screen welcomed the idea that the Trump administration might be “beginning to face reality” about “out-of-control AI.” Sacks read Sanders’s reaction as evidence that the FDA frame appeals to people who want to slow or stop AI progress.

AI’s political problem is not just fear of models; it is fear of who benefits

Chamath Palihapitiya explained the regulatory pressure as cultural and political rather than technical. He said there has been a “profound vibe shift” in attitudes toward tech, Silicon Valley, “tech oligarchs,” and AI. Main Street already feels it, and Washington is beginning to absorb it. Some form of oversight is coming, he argued; it would be worse under Democrats, but even Republicans are likely to impose some oversight.

The reason, in his account, is that the public mostly hears the negative case for AI. The industry has “horrible messaging” and has not spent enough time or money articulating the upside in a way that creates broad support. At the same time, the prospect of “a few winners and many, many, many potential losers” is politically destabilizing. Tech leaders, he said, should be reinvesting in America at a scale that blunts the backlash. They are not doing enough, or they are communicating it poorly.

What you’re seeing is the build up of antibodies.

Jason Calacanis agreed that humans are naturally better at imagining dangers than benefits: deepfakes, robotics, self-driving cars replacing jobs, bioweapons, and cyberattacks. He argued that the industry needs a counter-story built around concrete benefits. His first proposal was broad-based giving tied to the AI wealth creation cycle. NVIDIA, SpaceX, Anthropic, OpenAI, and similar companies, he said, could commit a portion of IPO proceeds or stock to Invest America accounts or similar vehicles for every American child. He called the idea “IPO-K” — an IPO for kids — and contrasted it with the Giving Pledge, which he dismissed as late-life virtue signaling.

His second proposal was to focus AI’s benefits on healthcare, education, and the cost pressures facing the bottom half of Americans. People worry about income, healthcare, housing, and their children’s education. Calacanis said capitalists should be willing to study minimum wage increases in countries such as New Zealand, Sweden, Switzerland, and Australia, and consider modest company-by-company increases that lift the bottom third of society. He also argued that universal healthcare, if designed well, could remove a burden from companies and increase consumer capacity in an economy driven heavily by household spending.

David Sacks resisted the pivot from AI to minimum wage and universal healthcare. On universal healthcare, he said the issue is not desirability but cost, quoting P.J. O’Rourke: “If you think healthcare is expensive now, just wait until you make it free.” But he accepted the broader premise that AI is unpopular. His counter was that AI is not salient. A chart shown during the discussion ranked artificial intelligence 29th out of 39 issues in “current importance,” far below cost of living, the economy, inflation, immigration, taxes, corruption, healthcare, and education.

Sacks’s political argument was that the effects of AI are more popular than AI itself. The top voter concerns are cost of living and the economy, and he said AI is deflationary, helps with cost of living, and is creating an economic boom. He claimed AI accounted for 75% of GDP growth in Q1 and said the impact is not confined to Silicon Valley startups. It includes, in his telling, construction, blue-collar jobs, and 25% to 30% wage increases for construction workers.

Brad Gerstner strongly agreed. If Bernie Sanders had his way and shut down data centers, he said, the U.S. would have negative GDP growth, a stock market down 15% to 20%, and rising unemployment. He argued that AI already delivers net benefits through economic growth, employment, and productivity, but also agreed with Palihapitiya that the industry must deliver and communicate local benefits. His example was Abilene, Texas: if a company puts a data center there, perhaps electricity should be free for local households.

On healthcare and education, Gerstner was skeptical of top-down solutions. He pointed to charts showing the categories with the highest inflation — hospital services, college tuition, medical care, childcare — as categories with heavy government involvement. A visual sourced to the Bureau of Labor Statistics and Carpe Diem AEI showed hospital services, college tuition and fees, textbooks, medical care, and childcare rising far above overall inflation from 2000 to 2022, while software, toys, TVs, and cellphone services became more affordable. Gerstner’s conclusion was that markets, not more government control, are more likely to produce abundance in healthcare and education through AI coaches and related tools.

Calacanis turned that into a critique of regulation in the very sectors where Americans feel pain. Founders and venture capitalists often avoid housing, education, minimum wage-related labor models, and healthcare because regulation makes those sectors “kryptonite.” In his view, if government cleared away barriers and allowed founders to build in those verticals, the industry could address the sources of public anxiety more directly.

The political argument therefore fed back into the market argument. If the AI boom is to avoid backlash, it cannot only produce revenue for model companies and infrastructure providers. The speakers disagreed on the mechanism, but they returned to the same practical test: whether AI’s benefits become measurable and visible beyond the frontier labs.

The AI trade now depends on whether token spending becomes measurable profit

Hyperscaler growth supplied the public-market backdrop. A Jamin Ball post shown on screen listed AWS at about a $150 billion run rate growing 28% year over year, Azure at about $108 billion growing 39%, and Google Cloud at $80 billion growing 63%. Jason Calacanis noted that Azure and Google Cloud include some software products, but said the growth numbers were still extraordinary. Collectively, he said, the three added roughly $30 billion of annualized run-rate revenue.

| Cloud business | Run rate | Year-over-year growth |
|---|---|---|
| AWS | ~$150B | 28% |
| Azure | ~$108B | 39% |
| Google Cloud | $80B | 63% |

Brad Gerstner said the macro picture had defied repeated warnings: tariffs were supposed to cause hyperinflation and destroy GDP; conflicts in Venezuela and Iran were supposed to do the same. Instead, he said, GDP was accelerating, the 10-year Treasury was around 4.3%, inflation was under control, and AI compute investment was contributing massively to U.S. growth. The S&P 500 was up only 8% on the year, which he said did not look like a bubble. He described Meta at 17 times fully taxed GAAP earnings, NVIDIA at 19 times, Microsoft at 20 times, Google at 24 times, and memory names such as SK Hynix, Samsung, and Micron at five, six, and seven times earnings. Those figures were presented as Gerstner’s market read.

His investment pivot came when private-market AI revenue began to appear. Entering the year, he said, the major question was whether AI revenues would show up against the enormous infrastructure spend. If Anthropic’s revenue had not shown up, and hyperscalers had not reaccelerated, he believed the market would be down 10% to 15% because investors would question the ROI on infrastructure. When the numbers began appearing in December and January, Gerstner said he moved from medium exposure to large exposure, with 80% or more of exposure in compute, AI, memory, and related areas.

David Sacks attributed the AI boom to Trump administration policy. In Sacks’s characterization, Trump rescinded Biden-era policies that would have required models trained above certain compute thresholds to be approved in Washington and would have required licensing for GPU sales worldwide unless narrow exemptions applied. Trump, he said, declared that the U.S. had to win the AI race and unleashed companies to do so. Sacks also emphasized energy: Trump’s “drill, baby, drill” posture and willingness to let AI companies become energy companies are, in his view, foundational to data center buildout without forcing firms to compete directly with consumers for grid power.

Calacanis agreed with the broad American exceptionalism and business-friendly policy argument, while objecting to the administration’s tariffs and war decisions. Gerstner replied that it was hard to imagine a more favorable situation for the United States: markets at highs, bond markets controlled, private companies such as SpaceX, OpenAI, and Anthropic approaching enormous scale, and the U.S. widening its gap over the rest of the world in AI.

Chamath Palihapitiya introduced the main caution. In the short term, he said, the companies “making the new thing” — NVIDIA, memory makers, Anthropic, SpaceX, OpenAI — need to be valued and show value. But eventually, the buyers of tokens need measurable benefit. He said there is “not a scintilla of evidence” that AI has lifted S&P 500 operating margins. In the next two or three years, he expects a fork: either operating expenses shrink and margins rise through workforce and cost reductions, or revenues grow while margins expand and operating expenses remain flat or even increase. The distinction matters politically and economically because the first path implies labor displacement, while the second implies growth through productivity.

The people that are paying for all these tokens need to see an actual benefit.

Sacks accepted the framework but was more optimistic. He connected it to Gerstner’s earlier infrastructure point: investors first needed to see ROI from infrastructure in the form of model revenue, and that revenue has appeared. The next question is whether the customers buying coding tokens see ROI. Sacks argued that continued month-over-month enterprise spend suggests they do. He expects the ROI to “trickle down from infrastructure to model to application to end user,” producing a productivity wave.

Calacanis was more emphatic, drawing analogies to the PC, internet, cloud, and mobile transitions. He said each cycle began with questions about whether adoption would pay off and later became obvious. Inside his own investment firm, he said three people are building internal interfaces and products that a 22-person firm would traditionally have bought as SaaS. In startup land, he argued, the ROI is already visible: companies are shipping software, getting more done with less capital, and hiring fewer employees than they otherwise would.

Palihapitiya pushed back. Startups may be seeing value, but broad-market profits cannot be “willed” upward. Anheuser-Busch still has to sell more beer; Nike still has to sell more shoes; medical-device companies still have to sell more hips and knees. Experimentation is not the same as proven financial value. Companies need to trace “I spent X and I made Y,” with Y greater than X and margin expansion resulting. He said that has not yet appeared in global GDP, global productivity, or S&P 500 margins.

Gerstner offered evidence on the other side but admitted the attribution question remains open. Azure grew 39% and Google Cloud 63%, while combined Mag 5 headcount growth over three years was about 3%, and operating margins are expanding. He said S&P 500 operating margins rose from about 11% in 2023 to 11.8% in Q1 2024 and 13% this year — a 200 basis point improvement. Palihapitiya said he would bet “dollars to donuts” that the margin expansion is not AI, but the same financial engineering that supported earnings growth over the prior decade. Gerstner conceded it is legitimate to ask whether margin expansion is durable and how much is actually AI, but said the anecdotes he hears from companies growing revenue without adding headcount “map” to the AI productivity thesis.
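The basis-point figure follows directly from the margin levels Gerstner cited. The sketch below shows the conversion (one percentage point equals 100 basis points) and the relative change. It says nothing about how much of the change is attributable to AI, which is the point in dispute, and the function names are illustrative.

```python
def basis_point_change(start_pct: float, end_pct: float) -> int:
    """Convert a change in percentage points to whole basis points."""
    return round((end_pct - start_pct) * 100)


def relative_change_pct(start_pct: float, end_pct: float) -> float:
    """Return the change as a percentage of the starting margin."""
    return (end_pct - start_pct) / start_pct * 100


# S&P 500 operating margins in percent, as cited by Gerstner.
margin_2023 = 11.0
margin_q1_2024 = 11.8
margin_this_year = 13.0

total_bps = basis_point_change(margin_2023, margin_this_year)
first_leg_bps = basis_point_change(margin_2023, margin_q1_2024)
relative_pct = relative_change_pct(margin_2023, margin_this_year)

print(f"2023 to this year: {total_bps} bps ({relative_pct:.1f}% relative increase)")
print(f"2023 to Q1 2024:   {first_leg_bps} bps")
```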

The labor-market evidence did not settle the argument. Sacks said operating efficiency has improved while unemployment remains near historic lows, around the low 4% range. He also cited a Wall Street Journal headline, shown through one of his own posts, saying new college graduate hiring was up 5.6% year over year and youth unemployment for degreed 20- to 24-year-olds fell to 5.3% from 8.9%. Calacanis replied that labor force participation complicates the picture: at 61.9% in March, it remained below the pre-COVID 63.3% he cited. He said the job-market evidence is mixed and too early to settle. Sacks rejected that as a dodge, saying Calacanis calls it “too soon to tell” whenever data refutes his narrative.