Agents Move From Model Capability To Operational Control

Google DeepMind, OpenAI, Vercel, SAP, Adaptive ML, and CME’s compute-futures plan all point to the same applied-AI shift: agents are being designed around the conditions that let them operate safely in real workflows. The open questions are less about whether models can act and more about reference, permissions, memory, business context, feedback, evaluation, and compute exposure.

AI is leaving the chat box

Applied AI’s most concrete movement today is not a bigger answer box. It is the attempt to let agents share a user’s frame of reference and act through software interfaces without becoming unbounded operators.

Google DeepMind researcher Adrien Baranes and OpenAI’s Ari Weinstein are approaching that problem from opposite sides of the interface. Baranes’s experimental pointer prototype treats the cursor as a reference layer for Gemini. When a user says “this,” “that,” or “here,” the pointer supplies the object: a note, calendar event, image, ingredient list, map location, or underlying app element. Weinstein’s Codex demo treats local Mac applications as an action surface. Codex can see interfaces, click, type, and continue work in the background, but it uses a separate cursor and requires app-by-app approval before it can see or type into specific software.

The distinction matters. Baranes is working on shared attention: how a person and model agree on what object is being discussed. In his examples, the user does not need to name every ingredient in a ratatouille recipe or formally specify the destination field for a shopping list. Pointing and speech together bind the vague instruction to precise screen objects and app data. A calendar example follows the same logic: “Can you make this 8 PM?” works because “this” resolves to the event currently indicated, including the structured data behind the visible card.

OpenAI’s Codex computer-use demo is about bounded action. Weinstein says Codex can use graphical applications built for humans by seeing, clicking, and typing. But the demo repeatedly emphasizes limits: macOS Accessibility and Screenshots permissions are required; the user grants access to local apps case by case; Codex uses a cursor separate from the human’s own pointer; and it can work inside one app without viewing or interacting with the rest of the desktop. Weinstein contrasts this with computer-use systems that “take over your entire computer.”

The pointer and the separate cursor are complementary primitives. One tells the model what the user means. The other constrains what the agent can do. A system that understands “this clause,” “that meeting,” or “the image over there” still needs scoped authority before it changes a document, sends a message, adjusts a setting, or manipulates a spreadsheet. A system that has permission to act still needs a reliable way to know what object the user intended.

The product direction is broader than either demonstration. Baranes frames the pointer prototype as a step toward a more collaborative operating environment, where the model and user share a canvas “like if I was working with another person.” Weinstein frames Codex computer use as the missing local-app layer for a coding agent that already had files, commands, and online integrations. Both are careful, in different ways, to show that the interface problem is not solved by raw model capability. The agent needs an interface grammar: shared reference, visible action, and bounded permission.

Neither demonstration should be treated as a finished AI operating system. Baranes’s work is explicitly an experimental research prototype. Codex computer use is a product demo for Mac, with Windows support described as coming later. But together they point to the same operational bottleneck. Once an AI system leaves the chat box and enters the user’s actual software environment, capability is inseparable from control.

The agent needs a computer

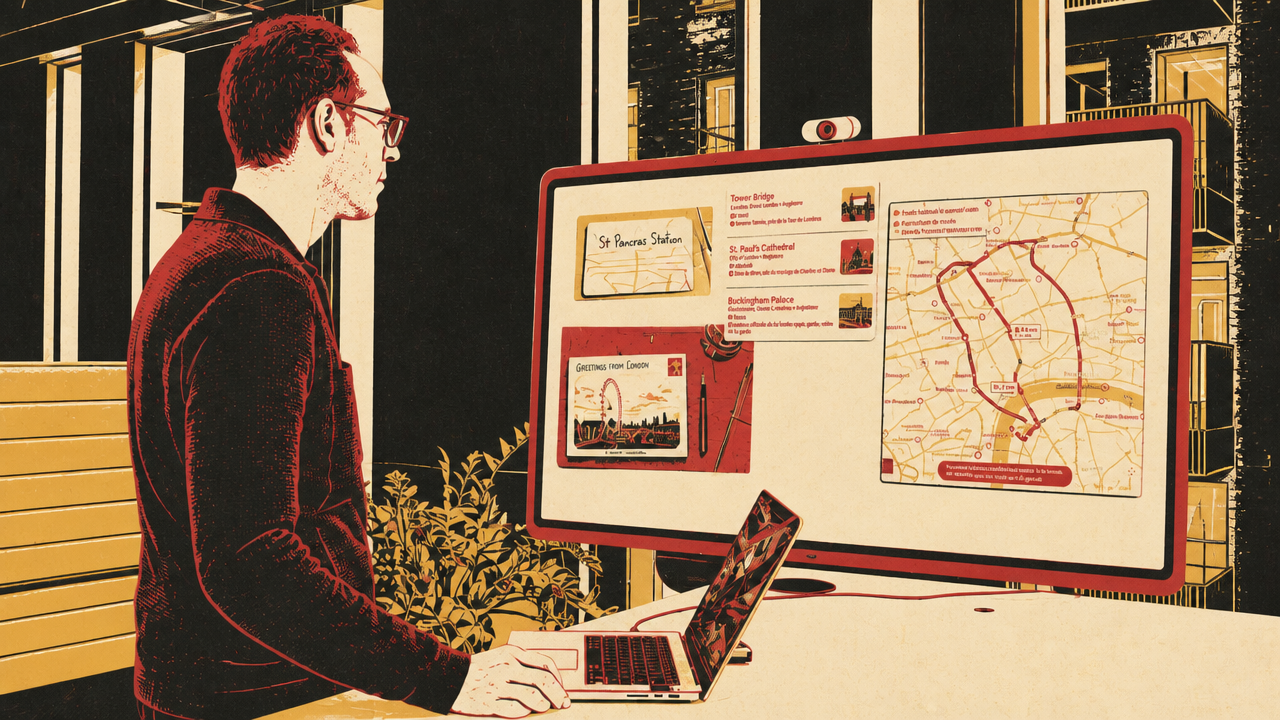

If interface control lets an agent act in human software, persistent state lets it sustain work over time. Nico Albanese’s Vercel workshop gives the day’s clearest architecture for that shift: an agent runtime and tools are not enough; the agent needs “a computer,” meaning a sandboxed environment with a file system where it can write plans, store research, run commands, maintain memory, and reuse artifacts.

The useful idea is not storage for its own sake. Albanese says Vercel’s internal D0 data agent already had broad access to internal systems, including data tools, backend systems, an admin panel, Salesforce, and other company tools. Before receiving a file system and instructions for using it, it might run several tools and produce a somewhat hallucinated answer. Afterward, its behavior changed. It wrote an objective and plan into a scratchpad, checked off steps, kept research in a directory, and repeatedly reread the artifact that stated what it was supposed to do.

That turns memory from a hidden product feature into an inspectable workbench. In the workshop, a memories.md file is read before each run and injected into the agent’s instructions. The agent can add important facts, edit them, clear them, and use shell commands to inspect its own working directory. Albanese’s “memory is a file in the sandbox” claim is deliberately plain: the agent’s memory is not an opaque profile store but an artifact the agent and application can read.

sandbox persistence changes the agent’s operating model. Vercel’s named sandboxes can spin down after inactivity, snapshot the file system, and later resume from the same named state. Application code can therefore treat the remote environment as a durable computer tied to a user or session. The agent can write code, store files, and come back later without rebuilding its context from scratch.

The broader pattern is compact:

| Layer | What it gives the agent | Control problem it raises |

|---|---|---|

| UI control | Ability to click, type, and operate human software | Which apps and objects may the agent touch? |

| Persistent state | Plans, memories, research, and files across sessions | What should be saved, forgotten, or trusted? |

| Reusable scripts | Durable procedures for repeated tasks | When should the agent reuse, revise, or discard them? |

| Sub-agents | Isolated work on long or exploratory tasks | What summary returns to the main thread? |

The weather-script example shows both the promise and fragility. Albanese asks for London weather using Python. The agent creates a script, fetches and parses weather data, stores the script in a scripts directory, and documents it in memories.md so it can be reused. When asked for San Francisco weather, the script initially resolves the location incorrectly to Quebec-Ouest, Canada. The agent notices the mismatch and suggests a more specific location string. The durable capability exists, but it still needs validation and operating policy.

The same is true of memory. When Albanese first tells the agent to save facts, it writes down trivial greetings. The file-backed mechanism works; the policy is wrong. He then refines the instructions to distinguish durable user preferences, project context, corrections, and work facts from greetings or small talk. The hard part moves from “can the agent write to memory?” to “what counts as memory?”

That is the more general lesson for applied AI systems. Giving an agent a persistent workbench makes it less dependent on one long prompt and one fragile context window. It can externalize work, inspect prior artifacts, create scripts, and isolate subtasks. But every new capability introduces a governance question: what the agent may run, what it may store, how it should compact context, which artifacts it should reuse, and when a sub-agent’s lossy summary is safe enough for the main thread.

Albanese’s long-run example also pushes against an overly simple view of context management. He says a coding-agent run lasting nearly 105 minutes used only 32% of GPT-5.4’s context window without compaction, and that aggressive message trimming can damage cache behavior or lose critical constraints. His preferred mitigation is not always summarization. It is often isolation: send a sub-agent to explore a bounded objective, then return a compact result to the main thread.

The architectural throughline is clear. A local-app agent can operate a desktop. A sandboxed agent can accumulate procedures. A reliable agent needs both action surfaces and persistent state, with a memory policy explicit enough that the system can be inspected, corrected, and reused.

Enterprise agents need context and correction

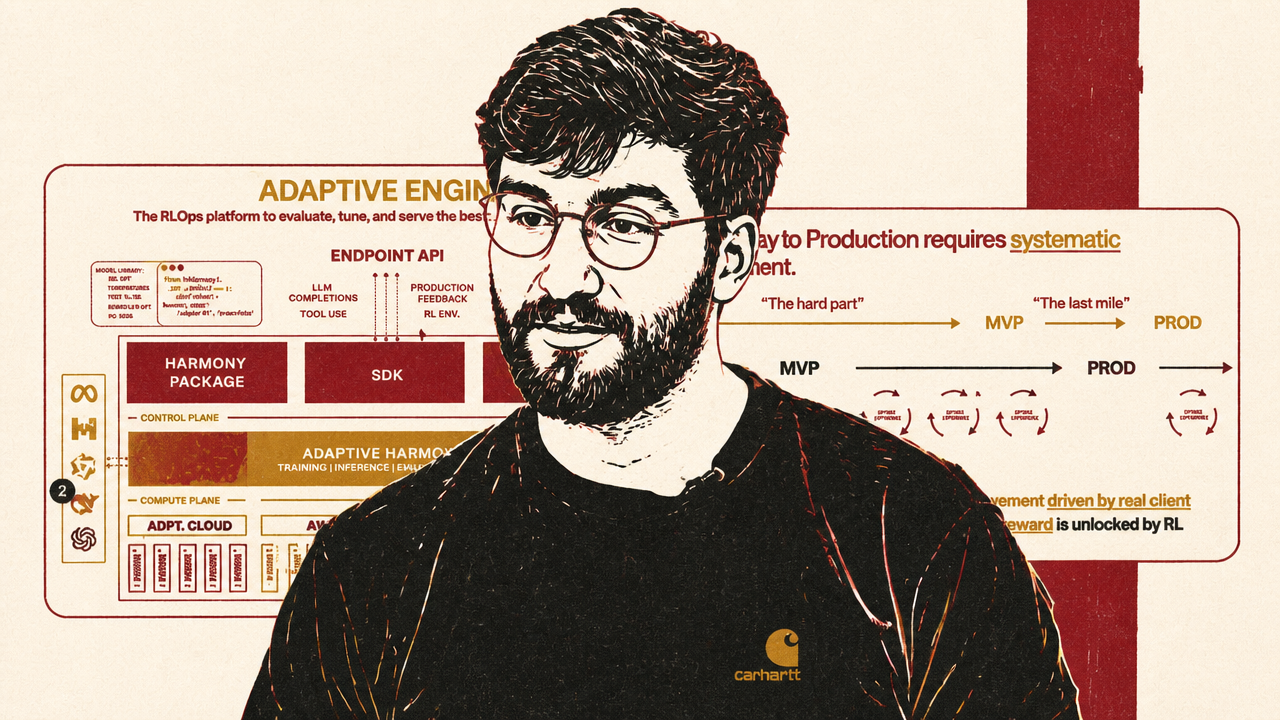

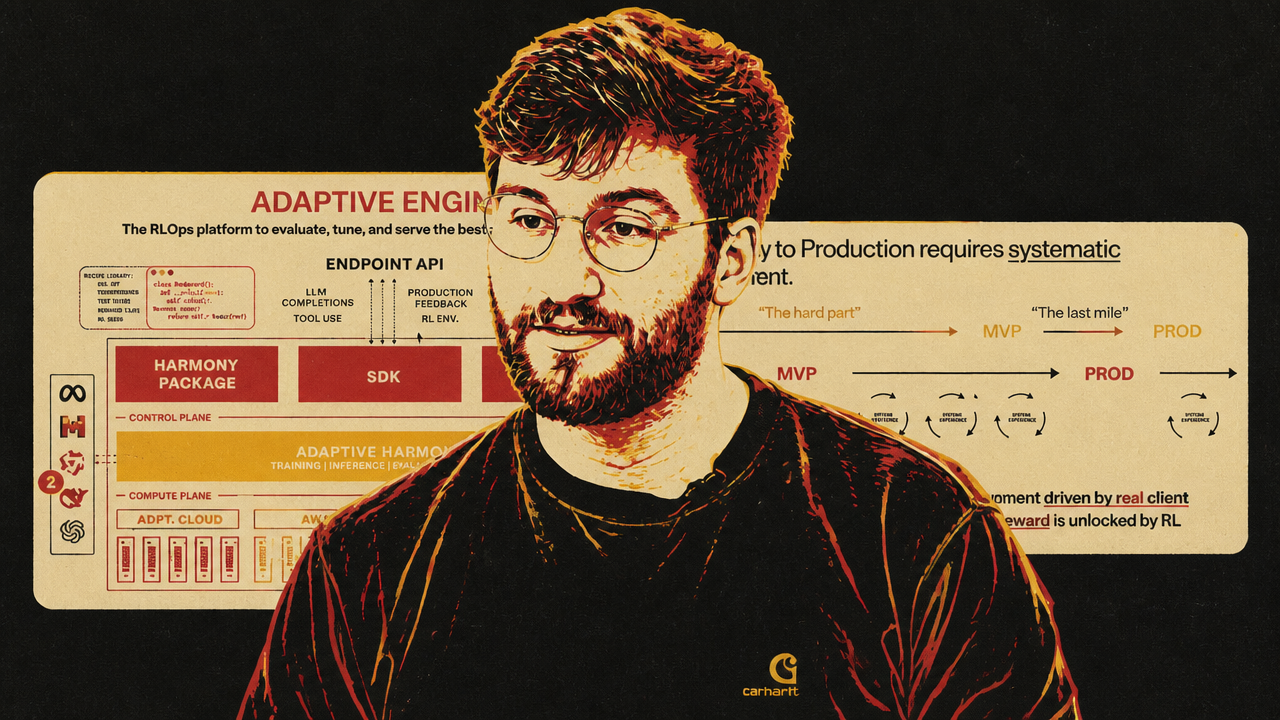

The enterprise AI debate is narrowing from “which base model?” to “what operating system surrounds it?” SAP chief executive Christian Klein and Adaptive ML co-founder Alessandro Cappelli diagnose different missing loops in business deployments. Klein says agents need ERP context: the business data, process relationships, and field-level structure generic models do not know. Cappelli says enterprise pilots fail because defects, user feedback, production outcomes, and business KPIs do not systematically reach the model.

Klein’s argument is that SAP’s advantage is not a proprietary large language model. He says customers can use “any commodity LLM” or bring their own model. SAP’s claimed differentiation is the context layer around ERP data and processes. He describes ERP as “the brain of every company” and says SAP can give agents the business context required to be accurate, compliant, and reliable across functions such as finance, commerce, inventory, procurement, and supply chain.

The proof points Klein cited are operational rather than benchmark-driven. He said JPMorgan Chase can close its books 30% faster, H&M used a personalized commerce agent to improve turnover, and inventory was reduced 10% through coordination among demand, inventory, and procurement agents. Those claims support SAP’s central pitch: the value is not just an assistant making one employee faster, but agents coordinating business processes through the system where those processes already live.

Cappelli’s diagnosis starts one step later. A credible demo or MVP is not the hard endpoint. He cites a slide attributed to MIT’s 2025 State of AI in Business saying 95% of GenAI pilots fail to reach production, and argues that the failure is often a feedback problem. Prompting can patch a defect and create another one. Instruction fine-tuning can produce an improved dataset-driven model, but it does not answer what happens after launch, when real users, costs, latency constraints, defects, and business outcomes arrive continuously.

For Cappelli, reinforcement learning is the mechanism that can convert those signals into improvement. He describes enterprise AI as a lifecycle: observe defects, define rewards, use business KPIs and production feedback, train models against enterprise-specific environments, and repeat. Agents make this more urgent because they consume more tokens, execute more complex flows, touch tools and live systems, and leave less room for error. A summarizer may produce text for review; an agent may change records or act in customer-facing workflows.

The two arguments are not identical, but they fit together. SAP’s context loop asks whether the agent knows enough about how the business works. Adaptive ML’s improvement loop asks whether the system can learn from what happens when the agent operates.

| Enterprise bottleneck | SAP’s diagnosis | Adaptive ML’s diagnosis |

|---|---|---|

| Missing knowledge | Generic models lack company processes, ERP fields, and cross-functional context | Models lack enterprise-specific environments, rewards, and feedback |

| Reliability mechanism | Harmonized business context around agents | Reinforcement-learning-style improvement loops |

| Where value is claimed | Finance close, commerce personalization, inventory and procurement coordination | Production systems shaped by defects, KPIs, user feedback, and tool outcomes |

| Model stance | Customers can bring commodity LLMs | Open and smaller specialized models can be trained against enterprise rewards |

SAP’s competitive tension is that its partners are also potential competitors. Klein names AWS, Microsoft, Nvidia, Databricks, and Snowflake as partners in harmonizing data beyond SAP systems, while insisting that SAP’s “right to win” sits in ERP process context. His line that “agents can’t compensate for broken data models” is the practical bridge: even a strong model fails if the data and process layer are incoherent.

Adaptive ML’s tension is operational complexity. Cappelli concedes that reinforcement learning is hard. Algorithms such as PPO require orchestration of multiple models and infrastructure layers. His platform claim is that enterprises should define rubrics, outcomes, environments, and reward signals while an RLOps layer handles the training machinery. That is a vendor claim, not a settled industry conclusion. But the underlying problem is not vendor-specific: post-launch feedback has to travel somewhere more systematic than an ad hoc prompt edit.

The shared implication is that enterprise AI reliability is becoming less about the base model in isolation. A business agent needs relevant context before it acts and a correction mechanism after it acts. Without the first, it may misunderstand the process. Without the second, it may repeat failures in production while the organization treats the MVP as if it were the finish line.

Evaluation has to become continuous

Vincent Koc’s evaluation argument is the natural continuation of the enterprise-feedback problem. If agents change with prompts, tools, context, traces, users, and even harnesses around them, then a fixed pre-deployment evaluation suite cannot remain the sole control mechanism.

Koc’s phrase for the failure mode is “eval calcification”: the test suite hardens around yesterday’s product while production behavior keeps moving. The product may still pass compliance checks, regression cases, and known tool-path tests. But new users, new usage patterns, changed prompts, different tools, and unexpected edge cases can push risk into the part of behavior the suite does not cover.

He frames the industry’s current testing habit as narrower than mature software engineering. Conventional systems use examples, unit tests, regression suites, CI/CD, observability, and sometimes chaos engineering. AI teams often lean on static benchmarks, hand-curated test sets, and offline pre-deployment evals. That can catch known failures. It is weaker at detecting when the product’s user base, tool use, cost profile, or agent behavior changes after launch.

The link to Cappelli’s feedback-loop argument is direct. Cappelli says production feedback, business outcomes, defects, and rewards must reach the model. Koc says traces and telemetry should also reshape the evaluation system itself. Both reject the idea that launch ends the model lifecycle.

eval calcification is especially dangerous for agents because the stable majority of behavior can disguise the moving edge. Koc uses an 80/20 framing: perhaps 80% of agent behavior is stable and covered by existing tests, while the changing 20% contains the weird user path, new customer segment, or unexpected use case that can damage the business. He does not present that split as a formal law. It is an operating intuition: the margin can create outsized risk.

Koc does not argue that handcrafted tests are useless. Compliance checks still matter. Regression tests still matter. Known tool paths still matter. A bank asking a system a crafted set of compliance questions has a real need. The argument is insufficiency: static tests are necessary but not enough when an agent adapts across users and contexts.

His proposed direction is “always-on” evaluation and optimization. Production traces should make stale tests visible. If new customers ask different questions, or if the agent starts invoking tools differently, those traces should surface the shift and help revise the suite. Telemetry should not only populate dashboards for humans to inspect later; it can become part of the correction loop for agentic systems. Koc also points to adaptive testing research that dynamically selects informative test items rather than running a constant set for every model or condition.

The deeper shift is from evaluating only examples to evaluating intent. Koc organizes recent AI application building into prompt engineering, context engineering, and intent engineering. Prompt engineering was trial-and-error wordsmithing. Context engineering made systems more decomposable through RAG, tools, long context windows, MCP servers, and modular agent components. Intent engineering, in his framing, is harder because systems can begin optimizing toward a user’s or organization’s desired end state. The evaluation target then becomes not only “did it answer this exact question?” but “is it moving toward the right operational outcome, with the right ambiguity, tone, cost, safety, and tool behavior?”

That raises more questions than it resolves. How should an organization define personality inside an agent? How should it define ambiguity? How should two different users’ experiences be compared if the agent adapts to each? Koc’s answer points toward rubrics, traces, telemetry, adaptive tests, and agents helping maintain evaluation artifacts. It is a direction of travel, not a completed methodology.

The practical lesson for applied AI teams is conservative rather than radical. Keep fixed tests for known risks. Add production traces and telemetry to detect where the test suite is going stale. Treat evaluation as a living system tied to the product’s intended end state. An agent that can adapt in production needs an evaluation process that can adapt with it.

The resource layer is getting financialized

The same operability problem now appears at the infrastructure layer. Enterprises want agents that can act reliably, remember work, use business context, improve from feedback, and pass continuous evaluation. Those systems depend on compute. CME Group and Silicon Data are trying to turn that dependency into a futures market.

Bloomberg’s Katherine Doherty describes CME and Silicon Data’s plan as an effort to make computing power tradable through futures contracts based on a compute-price index. The index is the first layer: a way to put a number on the price of compute. The futures contract would add the ability to hedge future price moves. Caroline Hyde frames the move through CME chief Terry Duffy’s phrase that compute is “the new oil.”

The analogy is straightforward but incomplete. A futures contract lets a participant agree to buy or sell something later at a fixed price. For oil, metals, and agricultural commodities, the reference commodity is familiar. For AI compute, the benchmark has to be defined around GPU processing power, GPU hours, or a similar measure. Doherty says the index is where those details begin: what processing power is measured, how it is priced today, and how that reference can support forward exposure.

- Index firstSilicon Data creates a compute-price index to make GPU processing capacity more observable.

- Futures planCME and Silicon Data work toward futures contracts that would let companies and investors hedge future compute-price moves.

- Market testRegulatory approval, benchmark design, and liquidity determine whether compute futures become usable.

This is the market-level version of the applied-AI control stack. At the interface layer, agents need bounded control. At the architecture layer, they need persistent workspaces. At the enterprise layer, they need context and correction. At the evaluation layer, they need continuous measurement. At the infrastructure layer, the input they consume may need forward pricing and hedging.

CME’s involvement matters because it brings institutional derivatives infrastructure to an area that has been treated largely as procurement, cloud capacity planning, and strategic infrastructure competition. Doherty says Silicon Data’s index was intended as one pillar of a broader ecosystem, not merely a tool for comparing compute offerings. CME adds a large U.S. derivatives exchange with existing traders and institutional customers. The prospective participant base would include commercial users of compute that want to hedge costs and financial traders that view compute as a commodity exposure.

The unresolved questions are concrete. Regulators still need to approve the contracts. The benchmark needs enough clarity around GPU processing power to support trading. Liquidity has to develop. Commercial hedgers and financial participants both need reasons to show up. Other exchanges may follow, but multiple venues only help if the market becomes deep enough to be useful.

The compute-futures plan should not be read as proof that AI infrastructure has become a mature commodity market. It is an attempt to build the pricing and hedging layer for a constrained input. That attempt is itself revealing. Once AI systems become operational infrastructure rather than isolated demos, their inputs become strategic risks. Compute stops being only a cloud bill and starts looking like an exposure that companies may want to price forward, hedge, and trade.