Enterprise GenAI Pilots Fail When Feedback Cannot Reach the Model

Alessandro Cappelli, co-founder and chief customer officer of Adaptive ML, argues that enterprise generative AI pilots fail to reach production because companies lack a systematic way to turn defects, user feedback, business metrics and production signals into model improvement. In a talk on Fortune 500 deployments, he says prompting and instruction fine-tuning can produce credible demos, but reinforcement learning is the mechanism needed to train models and agents against enterprise-specific environments, rewards and KPIs. His case is that agents make this feedback loop more urgent, because they consume more tokens, touch live systems and leave less room for error.

The failure point is not the demo

Alessandro Cappelli argues that the production failure rate for enterprise generative AI is better understood as a feedback problem than as a deployment problem. He is making that argument as co-founder and Chief Customer Officer of Adaptive ML, which he describes as an RLOps platform vendor for large enterprises including AT&T, Manulife, and CCS. The number he puts on the table is stark: a slide attributed to MIT's 2025 State of AI in Business says 95% of GenAI pilots fail to reach production. Cappelli's explanation is that many organizations still treat the MVP as the hard part and production as a “last mile” execution step. In his view, that timeline is wrong.

The MVP, Cappelli says, is only “the first mile.” A demo can be built on a proprietary frontier model or on an open-source model adjusted with instruction fine-tuning. Both can produce something credible in front of internal stakeholders. But neither, in his account, gives an enterprise a systematic way to absorb defects, business outcomes, user feedback, and production signals into a continuously improving model.

The defect loop is where he sees proprietary-model deployments breaking down. If testing reveals a failure, the main available move is to change the system prompt. That may fix one behavior while creating another. Cappelli describes this as lacking a “scientific, systematic” way to improve and monitor the model. Instruction fine-tuning has its own version of the same issue: the team can keep iterating on datasets, but that is expensive and does not answer the operating question after launch. “Will you keep creating a new dataset every single week?” he asks.

His alternative framing is a model lifecycle rather than a last mile. The work after an MVP is continuous retraining, refinement, and improvement driven by client feedback, business metrics, and environment rewards. Reinforcement learning is the mechanism he argues can integrate those signals in a systematic way.

Getting to an MVP is not easy, but it's just the first mile. What actually the real journey, the real marathon is to get from an MVP to production and beyond.

Cappelli is not presenting reinforcement learning as merely one post-training option among several. He describes it as the missing layer between open-source models that can be made to demo and models that can be operated in production by large enterprises. He traces that view to his earlier work on Falcon, which he says was one of the most widely adopted open-source models around three years ago. The founding team behind Adaptive ML, he says, concluded that the gap between open-source production deployments and proprietary frontier-lab systems was reinforcement learning.

Reinforcement learning changes both performance and operating economics

Alessandro Cappelli distinguishes reinforcement learning from prompting and supervised fine-tuning by saying all three can steer model behavior, but they are not equally effective. A slide citing Ouyang et al. 2022 shows reinforcement learning reaching roughly the same human-preference performance as supervised fine-tuning with a model around 100 times smaller. Cappelli's interpretation is that RL has “outsized performance”: it can deliver comparable behavior with smaller models, and that smaller-model path matters because enterprise AI systems have to survive cost and latency constraints, not just benchmark comparisons.

The operating claim has several parts. Smaller specialized models are cheaper to serve. At Fortune 500 scale, seemingly narrow features can cost millions of dollars when deployed broadly. Cappelli's example is AT&T summarizing every transcript between a customer and an agent. That summarization use case alone, he says, costs millions. If an enterprise can train a much smaller model than a general-purpose system such as ChatGPT or Sonnet for that workload, the token economics change.

Smaller models also lower latency. Not every use case requires speed, but some production systems have hard latency thresholds. For a speech-to-speech customer support system, half a second is already awkward; ideally the system responds in roughly a third of a second. In Cappelli's view, that is not realistic with very large language models. It pushes teams toward smaller open models in the approximate 10B-parameter family, such as recent Gemma, Mistral, or Qwen models.

The third operating benefit, in Cappelli's framing, is ownership. He says enterprises own the data they give to the model because it is trained on their own business data, and they own the solution. He ties that ownership to operational stability: a company does not have to worry that a vendor's latest model update will shift performance “underneath your feet.”

Adaptive ML's slide makes larger deployment claims around specialization: “50–90% lower costs” with smaller models and fewer tokens, “better than frontier accuracy” with agents tailored to the business, “7x lower latency” with sub-500ms response times for real-time interactions, and ownership over data, model, and safety guardrails. Cappelli uses those claims to argue that specialization is not only a quality strategy; it is the path by which deployment becomes economically viable.

Agents make the feedback problem harder and more important

Alessandro Cappelli says the reinforcement-learning case applies to ordinary enterprise workloads such as summarization, classification, and OCR. But agents, in his account, raise the stakes because they intensify every production constraint at once. They use more tokens. They involve more complex flows. They have less room for error because they may access data and change records in systems used by employees or customers.

The cost problem compounds accordingly. If summarization at enterprise scale can already cost millions, Cappelli asks what happens when an agent uses 10 times as many tokens. The reliability problem also changes: the system is no longer only producing text for a human to inspect. It may be using tools and acting against live databases.

This is where he argues RL's original design becomes relevant. Reinforcement learning was made to train agents in environments. In the enterprise-agent setting, Adaptive ML's diagram places a mock user, model, tools, model output, reward, and business outcomes or KPIs inside a dynamic environment. The same slide notes that LLMs as judges can supply part of the reward definition. The picture matters because it is not a static prompt-response setup: the model acts, tools respond, an environment produces outcomes, and the reward turns those outcomes into training signal.

Cappelli describes two cases. If a company already has an agent workflow, as he says Manulife did, Adaptive ML can plug a trainable model into that existing environment. If the environment does not yet exist, it can be built by mocking the tools and, where needed, mocking the user.

The mock user can itself be an LLM, especially for chatbot or tool-using agent scenarios. The reward can come from business outcomes, KPIs, or LLM judges that encode what success means for the enterprise. Cappelli gives examples: Was the agent helpful? Was it useful? Did it use tone and vocabulary consistent with business guidelines?

That environment framing is important because it turns agent behavior into something trainable in a controlled setup. A model can act in the environment, receive a reward signal, and improve against the task definition. Cappelli's core claim is that agents are not an exception to the RL thesis; they widen RL's advantage because the problem is closer to the kind of environment-based training RL was built for.

Synthetic data is a byproduct of environment training, not the starting requirement

Alessandro Cappelli describes a common enterprise objection: teams do not have the data required to get to an MVP or production. The problem is worse for agents because the relevant training data usually does not exist “in the wild.” There is no general web-scale dataset that captures an enterprise-specific agent using enterprise-specific tools in the way a company needs.

His answer is to invert the data premise. If a team has an environment and a reward, then it has built a synthetic data pipeline as a byproduct. The environment can generate trajectories. The reward can identify which trajectories are good and which are not. From there, teams can use rejection sampling to create a dataset that bootstraps the first model training run.

This does not mean proprietary enterprise data becomes irrelevant. Cappelli argues that many companies lack the exact data needed to train agents but still have data that can improve the environment. Real transcripts between customers and human agents, for instance, can be used to make a mock user more realistic. The mock user can be trained to behave like the actual customer population: impatient, repetitive, confused, angry, or frightened.

His example is medical supply. In that setting, people may call while panicked. A correct agent behavior might not be to complete the transaction autonomously; it might be to escalate to a human or call 911. Cappelli uses this to make a broader point: the “dirty” texture of real conversations is useful. It can be used to shape simulated users and scenarios so that the model is trained against the conditions it will actually face.

The data strategy, then, is not to wait for a perfect agent dataset. It is to construct an environment, define rewards, use that environment to generate trajectories, and use proprietary data to make the environment more faithful to the enterprise's real operating conditions.

The human-in-the-loop work moves from annotation to reward design

Alessandro Cappelli distinguishes his view of human feedback from expensive annotation campaigns. RL became prominent in the LLM world through RLHF, reinforcement learning from human feedback, which OpenAI described in connection with ChatGPT. But “human in the loop” can hide a costly process that organizations do not want to run. In Cappelli's experience, annotation campaigns are either expensive or not useful because people do not want to do them.

He still wants humans in the loop, but in a different place. The human role is to define the reward signal: rubrics, judge prompts, scenario definitions, and alignment with the business's view of success. Some rewards are systematic. Code can run or fail. Syntax can be correct or incorrect. Some rewards come directly from KPIs and business outcomes. Cappelli cites CCS, a medical supply company, as using a customer support system that tries to maximize containment rate: the percentage of calls handled end to end by the model. That percentage can be directly optimized.

Other requirements are more open-ended. Was the tone correct? Were business requirements followed? For those, Cappelli says LLMs as judges can be used. The human contribution is to define the rubric and the system prompt for the judge, and to define scenarios that reflect what the business sees in practice. He says this work can take from a few minutes to two hours, rather than weeks, and does not need to be repeated dozens of times as an annotation campaign would.

The human in the loop is helping just by defining the rubrics, defining the system prompt to these LLMs as judges and defining the scenarios.

The question period sharpened this distinction. An audience member asked about production feedback of the kind Cursor had described publicly for tab completion: whether a completion is accepted or not. The questioner contrasted that with a more traditional LLM RL setup, where one might run several rollouts for a prompt, select outputs that work, and train on those samples. The question was whether a single implicit production signal can be trained on directly as a reward signal, or whether teams should replay the problem to generate variations.

Cappelli's answer separated early sparse feedback from later production-scale feedback. When the feedback is not from production and consists of perhaps 10 to 20 examples, Adaptive ML generally uses it to improve the LLM judge: this output is good, that one is bad, and the judge's description of the task should be adjusted accordingly. Once a system reaches production, there may be thousands of such feedback events. At that point, the data can be used to train reward models rather than relying only on prompted large judges such as Qwen 235B.

For implicit feedback, the right approach depends on the use case. Because the data is available, Cappelli says the team can build a reward model accordingly and run different training scenarios against an evaluation to see which produces the best performance. He does not present a single universal rule for whether one implicit feedback signal is enough or whether replay is needed; he frames it as an empirical choice under a defined evaluation.

The platform claim is that RL needs an operating system, not just an algorithm

Alessandro Cappelli closes the argument by conceding the main objection: reinforcement learning is hard. It is not as simple as changing a system prompt, and it is not as simple as building a dataset for instruction fine-tuning. He points to PPO, one of the best-known RL algorithms, as requiring orchestration of four large language models at the same time. That operational complexity is the reason, he says, Adaptive ML built Adaptive Engine as an RLOps platform.

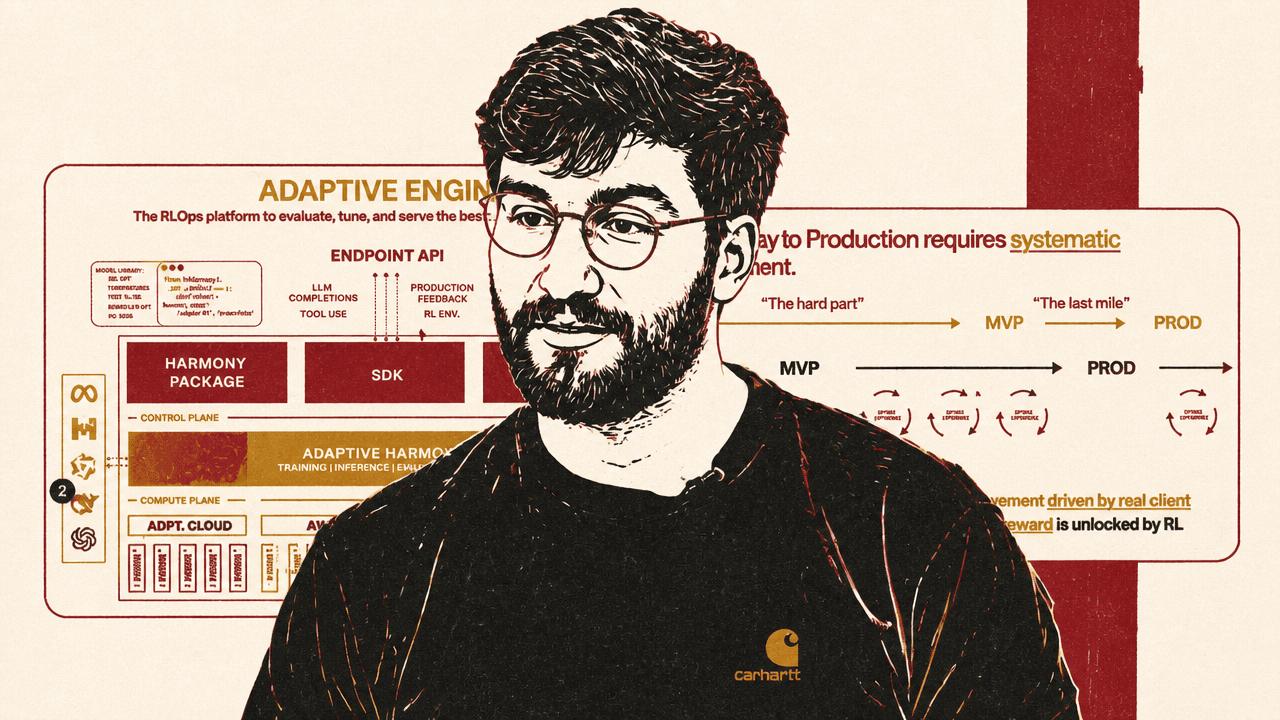

The platform is described as covering evaluation, tuning, serving, observability, production feedback, RL environments, SDKs, UI, training, inference, GPU management, and deployment across Adaptive's cloud, AWS, Azure, or on-prem infrastructure. Cappelli says the goal is not merely to accelerate training. It is to accelerate the entire model lifecycle: observe behavior, evaluate it, identify defects before and after production, and act on them systematically.

The architecture slide presents Adaptive Engine as a layer connecting endpoint APIs, LLM observability, production feedback, evaluation and RL environments, an SDK, a platform UI, and training and inference infrastructure. A zoomed view highlights “state-of-the-art RL pipelines” with pre-built modular recipes supporting synthetic data, verifiable rewards, and production feedback. In Cappelli's explanation, that is the platform answer to the complexity of RL: the enterprise defines rubrics and outcomes, while Adaptive handles the machinery of the training recipe.

Adaptive's approach, as Cappelli describes it, builds on open models. He names recent Gemma, Mistral, and Qwen models as examples of base models enterprises can specialize. The presentation says Adaptive Engine is powered by open models and can specialize open models “of all sizes and modalities” with RL; it also shows Meta, Mistral AI, and Hugging Face in that context. The point is not that one particular base model solves the enterprise problem. It is that open models can be specialized against the business's own data, environment, and reward signals.

The product abstraction Cappelli emphasizes is pre-built modular RL recipes. Rather than asking enterprise teams to implement algorithms such as PPO or GRPO themselves, Adaptive exposes recipes supporting synthetic data, verifiable rewards, and production feedback. In his framing, the enterprise defines rubrics and outcomes; the platform handles the reinforcement-learning machinery.

That is where the talk's strongest claim sits. Prompting and instruction fine-tuning can create demos. Cappelli's contention is that reinforcement learning is the mechanism that turns defects, feedback, KPIs, judge rubrics, and environment rewards into a repeatable improvement loop.