The Mouse Pointer Becomes a Reference Tool for AI Interfaces

Google DeepMind researcher Adrien Baranes argues that the mouse pointer can become more than a tool for selecting and clicking. In an experimental prototype, he presents the cursor as an AI-mediated reference layer: a way for Gemini to connect words such as “this,” “that,” and “here” to the precise objects, app data, and screen content a user is indicating. The aim is to make pointing function as shared context between a person and an AI system across documents, calendars, maps, and images.

The pointer becomes a machine-readable reference layer

Adrien Baranes frames the mouse pointer as one of computing’s most durable interfaces: a constant presence across websites, documents, and workflows for more than half a century. The research question he poses is not whether the pointer can be made visually different, but whether it can become context-aware: an AI model behind the pointer, such as Gemini, “listening to us, paying attention to the screen,” and interpreting what the user means in the way another person might.

“What if behind the pointer there was an AI model, like Gemini, actually listening to us, paying attention to the screen, and trying to interpret whatever we’re saying, like another person would?”

The consequence for interface design is specific: this is not a smarter cursor skin, but an attempt to turn pointing into a machine-readable reference layer across applications. The project is an experimental AI-enabled pointer designed to understand three things at once: what the user is pointing at, why that object matters, and how to act on it.

Baranes describes the work as grounded in prototyping and user experimentation, with the aim of understanding people’s needs and creating systems that satisfy them. Its core premise is familiar from human collaboration. Words such as “this,” “that,” “here,” and “there” only work when the listener can see or infer what they refer to. The prototype tries to give an AI system that same shared reference.

One interface shows the problem directly: a user works beside a shopping list and a text editor, while a tooltip identifies the hovered object as TEXT_NOTES_841. The instruction is simply, “make this sentence more formal.” The language leaves the referent implicit; the pointer supplies it.

A recipe example makes the same point with multiple objects. A ratatouille recipe lists ingredients including a courgette, aubergine, yellow pepper, and red onion beside an empty shopping list. The user points to ingredients and asks, “Could you get these two ingredients, and also this one, and add them to my shopping list here?” The system replies, “Done.” The user does not have to name every item or specify the destination as a formal field. The pointer and model cooperate to connect deictic speech to the relevant parts of the screen.
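One way to picture that cooperation is to pair each deictic word with whatever the pointer was resting on when the word was spoken. The sketch below is illustrative only: the source does not describe how the prototype aligns speech and pointing, so the time-based matching, the `Hover` and `Word` types, and identifiers such as `ingredient_courgette` and `shopping_list` are all assumptions.

```python
from dataclasses import dataclass

# Words treated as pointing references (an illustrative, incomplete set).
DEICTIC_WORDS = {"this", "that", "here", "there"}


@dataclass(frozen=True)
class Hover:
    """A period during which the pointer rested on one object (hypothetical)."""
    object_id: str
    start: float  # seconds since the utterance began
    end: float


@dataclass(frozen=True)
class Word:
    """A transcribed word with the time it was spoken (hypothetical)."""
    text: str
    time: float


def gap(hover: Hover, t: float) -> float:
    """Return 0 if t falls inside the hover, else the distance to its nearest edge."""
    if hover.start <= t <= hover.end:
        return 0.0
    return min(abs(t - hover.start), abs(t - hover.end))


def bind_references(words: list[Word], hovers: list[Hover]) -> list[tuple[str, str]]:
    """Pair each deictic word with the object hovered closest in time."""
    bindings = []
    for word in words:
        token = word.text.lower().strip(".,?!")
        if token in DEICTIC_WORDS and hovers:
            nearest = min(hovers, key=lambda h: gap(h, word.time))
            bindings.append((token, nearest.object_id))
    return bindings


# Hypothetical utterance and pointer trace, loosely modeled on the recipe example.
words = [Word("Add", 0.2), Word("this", 0.5), Word("to", 0.8),
         Word("my", 0.9), Word("list", 1.1), Word("here.", 1.6)]
hovers = [Hover("ingredient_courgette", 0.3, 0.9),
          Hover("shopping_list", 1.3, 2.0)]
bindings = bind_references(words, hovers)
```

With this trace, “this” binds to the hovered ingredient and “here” to the shopping list. Plural phrases such as “these two ingredients” would need to collect several hovers per phrase, which this sketch does not attempt.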

The prototype acts on objects below the visible interface

The initial prototype works by using pointer position to expose the object behind the visible interface. Adrien Baranes explains that when the user hovers over a note, the AI-enabled pointer knows “the data that’s behind the scene.” In the text-editing example, the visible poem is paired with underlying HTML for the hovered element: a div with the id text_notes_841, styled in blue at 16 pixels. When the user types, “Make this orange,” the word “this” is not left as a vague natural-language reference. Typing the word “this,” Baranes explains, adds the actual text node to the prompt.

The visible code reads in part: <div id="text_notes_841" style="color: blue; font-size: 16px;">. The pointer is not only indicating a place on the screen; it is helping identify the underlying object the system can act on.
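The idea of adding the hovered element to the prompt can be sketched in a few lines. This is a minimal illustration, not the prototype's implementation: the `HoveredElement` type, the `build_prompt` function, the prompt layout, and the placeholder note text are all hypothetical, and only the id and style values come from the example above.

```python
import html
import re
from dataclasses import dataclass, field


@dataclass
class HoveredElement:
    """Minimal stand-in for the element under the pointer (hypothetical type)."""
    tag: str
    element_id: str
    style: dict = field(default_factory=dict)
    text: str = ""

    def to_html(self) -> str:
        """Serialize the element so it can be embedded in a prompt."""
        style = " ".join(f"{key}: {value};" for key, value in self.style.items())
        return (
            f'<{self.tag} id="{html.escape(self.element_id)}" '
            f'style="{html.escape(style)}">{html.escape(self.text)}</{self.tag}>'
        )


def build_prompt(instruction: str, element: HoveredElement) -> str:
    """Append the hovered element when the instruction contains "this" or "that"."""
    if not re.search(r"\b(this|that)\b", instruction, flags=re.IGNORECASE):
        return instruction  # nothing to resolve, so send the instruction unchanged
    return f"{instruction}\n\nReferenced element:\n{element.to_html()}"


# The id and style mirror the example above; the text is a placeholder.
note = HoveredElement(
    tag="div",
    element_id="text_notes_841",
    style={"color": "blue", "font-size": "16px"},
    text="(note text)",
)
prompt = build_prompt("Make this orange", note)
```

Under these assumptions, the model receives both the instruction and the markup it refers to, so a change such as setting the color to orange can target a concrete element rather than a guess about what “this” means.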

That mechanism extends beyond text. Baranes says the pointer can “dig through all of the layers of data,” combining voice, text, and image understanding. A calendar example shows a poster for The Thinking Game advertising “Friday April 17th at 20:30” beside a calendar draft for the same event. The user asks, “Can you make this 8 PM?” The event’s start time changes to 20:00, and the system responds, “I’ve updated the draft to start at 8:00 PM.”

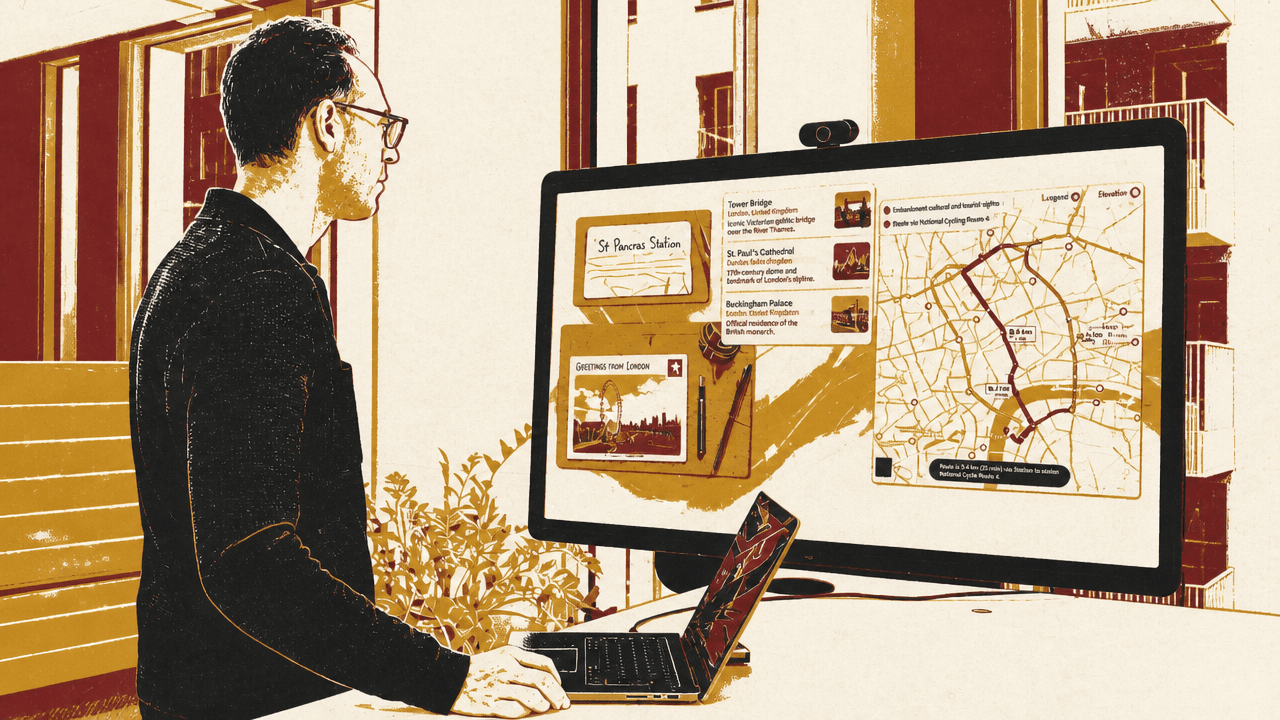

The maps example applies the same idea across applications. One panel contains a handwritten note reading “St Pancras train station, London, UK”; another shows a postcard image of the London Eye; a map panel displays a route. The user asks, “Can you show me how to go from this place to that place?” The system responds with directions between the two locations. The interaction does not rely on a form field, a pasted address, or a single-app command. It combines pointing, language, and visual interpretation across multiple windows.

Shared attention is the larger interface ambition

The most expansive version of the prototype is not limited to a conventional mouse demonstration. Adrien Baranes uses head tracking in one example. A restaurant menu appears on one side of the interface and a watercolor-style illustration of a blue parrot on the other. The user says: “Hey Gemini. So, can you generate an image based on this whole menu here? I would like you to use the style in this image.” The pointer highlights the bird image, whose visible identifier is image_234.

The system replies that it is generating the image. Baranes says the result transfers the menu’s content and the bird image’s style into a new image. His point is not simply that Gemini can generate images. It is that generation can be directed through the shared screen: the user indicates one source for content and another source for style by pointing, while the spoken command describes the relationship between them.

Baranes’s broader interface model is “a new type of operating system”: AI surfaces content the user might find useful; the user points back at that content; both sides share attention and a canvas “like if I was working with another person.” In that framing, the pointer is no longer just a device for selecting and clicking. It becomes a way for the human and the model to establish what they are jointly looking at, what aspect of it matters, and what should happen next.

The work is explicitly described as an experimental research project. Its demonstrated direction is specific: make the long-static pointer into an AI-mediated reference tool that can bind vague human instructions to precise objects, structured app data, and cross-window actions.