Agents Push Applied AI From Model Capability To Operating Capacity

Today’s sources frame agents less as standalone model breakthroughs than as systems that need infrastructure, pricing, permissions, feedback loops, and engineering discipline around them. Bloomberg’s reporting on compute supply, Perplexity’s digital-labor pitch, Replit’s agent revenue story, and production guidance from Pydantic, Raindrop, and Matt Pocock all point to the same constraint: turning agent demos into repeatable work.

Agents are becoming an infrastructure problem before they become a labor market fact

The day’s applied-AI throughline starts below the product layer. If agents are going to become persistent workers rather than impressive demos, the constraint is not only whether the model can reason. It is whether the industry can supply the compute, power, financing, orchestration, and deployment capacity required to run those workers at scale.

Bloomberg’s AI-infrastructure segment put that plainly through Arm, Anthropic, SpaceX, and CoreWeave. Arm chief executive Rene Haas argued that agentic workloads increase CPU demand because agents do not only need accelerators for model inference; they also need orchestration, scheduling, and fast response coordination inside data centers. In Haas’s account, that is CPU work. Arm’s data-center business had doubled year over year, and demand for its new Arm AGI CPU had moved from roughly $1 billion in visible orders to $2 billion in five weeks. His line was not that demand is fragile. It was that supply is the management problem.

The same constraint showed up in Anthropic’s reported compute lease from SpaceX. Seth Fiegerman described the arrangement as unusual because Elon Musk has criticized Anthropic and xAI competes with it. The reason it still makes sense, in Bloomberg’s account, is immediate capacity. SpaceX’s Colossus 1 data center exists now. Anthropic needs compute now. On-screen notes said the deal gives Anthropic access to more than 300 megawatts of compute power and helps it raise product usage limits. That is smaller than the multi-gigawatt facilities being discussed elsewhere, but the point is availability, not theoretical scale.

CoreWeave’s position fit the same pattern. Bloomberg’s Dina Bass said the demand signal for AI compute remains strong across hyperscalers and AI companies, and CoreWeave has been adding customers such as Anthropic, Meta, and Jane Street. But the investor question has shifted toward financing and expansion: how the company pays to acquire, build, and develop enough capacity, and whether it can diversify beyond concentrated early customers while moving up the services stack.

The useful synthesis is that if agents become labor, compute becomes payroll infrastructure. A digital worker still needs chips, racks, power, memory, networking, cloud contracts, and capital expenditure. If a company wants agents to run continuously across email, code, spreadsheets, data warehouses, browsers, internal systems, and customer workflows, then the “labor” metaphor quickly becomes an infrastructure claim.

That does not settle which providers win. Arm is making a CPU-orchestration argument. SpaceX is monetizing available capacity. Anthropic is buying access where it can get it. CoreWeave is trying to finance growth and diversify customers. But the sources agree on the broad shape: applied AI’s bottlenecks are moving from model possibility toward system capacity.

Perplexity’s agent pitch is software priced like labor

Perplexity gave the day’s clearest business-language version of the same shift. Dmitry Shevelenko, Perplexity’s chief business officer, framed computer-use agents as “digital labor”: not another feature tier, but work capacity that users may pay for when it performs economically useful tasks. In his account, customers are beginning to think less in terms of software spend and more in terms of payroll-like leverage — a team of digital agents or digital workers that has to justify its cost through output.

That framing matters because it explains several product choices that otherwise look disconnected: broad permissions, workflow packaging, model orchestration, persistent machines, and metered compute credits. Labor-like software has to operate across the user’s real work surface. It may need calendar, email, documents, internal data, code repositories, local files, and browser sessions. It may also have highly variable cost. One task may cost cents; another, such as long-running video or complex computer-use work, may cost far more. A flat subscription strains under that range.

Perplexity’s proposed commercial answer is a membership-plus-usage model. Shevelenko compared it to Costco: users pay a high-margin membership to enter, then buy what they need inside. Computer credits are the metered layer. The claim is not that every AI task should be expensive, but that agents doing work create variable compute costs and therefore need pricing that can follow usage.
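
To make the pricing logic concrete, here is a minimal sketch of a membership-plus-credits bill. Every number in it (the membership fee, the price per credit, the credits per task) is invented for illustration and is not Perplexity’s actual pricing; the `Task` type and `monthly_bill` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    credits: int  # hypothetical metered cost of one run, in credits


def monthly_bill(tasks: list[Task], membership_cents: int, cents_per_credit: int) -> int:
    """Return the monthly charge in cents: fixed membership plus metered usage."""
    usage_cents = sum(task.credits for task in tasks) * cents_per_credit
    return membership_cents + usage_cents


# All figures below are made up to show the spread between light and heavy use.
light_month = [Task("email digest", 2)] * 20
heavy_month = light_month + [Task("long-running video job", 400)] * 3

light_bill = monthly_bill(light_month, membership_cents=2000, cents_per_credit=5)
heavy_bill = monthly_bill(heavy_month, membership_cents=2000, cents_per_credit=5)
```

Under these assumed numbers, three heavy jobs dominate the month’s bill, which is the range a single flat price would have to absorb.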

| Agent requirement | Perplexity’s framing | Operational implication |
|---|---|---|
| Access | Digital workers need to reach real workflows | Users must grant permissions to email, calendar, files, apps, or internal data |
| Packaging | Blank prompts create friction | Workflow templates package tasks such as financial modeling, taxes, and client preparation |
| Model choice | No single model is best at every step | Perplexity positions itself as an orchestrator across Opus, GPT, Gemini, Grok, Sonnet, and open models |
| Persistence | Some tasks need always-on availability | Mac Minis provide local access and continuity, though inference is not currently local |
| Pricing | Work costs vary by task | Membership plus compute credits replaces a purely flat-rate model |

The trust bargain is heavy. Alex Kantrowitz read aloud the Google permissions he had granted for a digest workflow: access to contacts, calendars, Gmail, Workspace directory data, and calendar editing. Shevelenko responded that the agent does not act independently in the feared sense; the user initiates tasks, and companies can start with read-only access or no connectors. But he did not deny the core point. The more useful the agent, the more it resembles an employee with access rights. That shifts trust from abstract model confidence to product design: permissions, transparency, staged adoption, review, and boundaries.

Perplexity’s argument also pushes back on a narrow consumer-usage reading of AI. Kantrowitz raised signs of flattening consumer AI usage and noted that Perplexity’s traffic remains far below ChatGPT’s. Shevelenko answered that he watches revenue more closely than monthly active users because MAUs can reflect novelty. He said Perplexity had crossed $500 million in ARR after starting the year below $250 million, and he argued that the relevant users are often prosumers using AI for work, side businesses, analysis, or project leverage rather than casual search.

The most important point is not whether Perplexity’s particular package wins. It is that the company is making explicit what many agent companies imply: agents will be judged by completed work, not by novelty sessions. That makes the product question less “Can the model use a browser?” and more “Can the system safely, repeatedly, and economically complete tasks users would otherwise assign to employees, agencies, analysts, or engineers?”

Replit shows the market-creation version of the agent story

Replit supplied the concrete case study: an agentic workflow that, according to founder Amjad Masad, turned years of platform ambition into sudden revenue pull. Masad said Replit’s revenue run-rate rose from roughly $2.5 million to $250 million between 2024 and 2025, with Replit Agent producing about $1 million of ARR on its first day and reaching $2 million by its second. He described the launch not as a smooth growth curve, but as a market-creation event.

The distinction matters. Replit had long wanted to be more than a coding environment. A 2015 “Master Plan” slide described tools for teachers and students, an AI-assisted interface that blurred learning and building, and a platform to learn, build, explore, and host applications. Masad’s account is that the old platform thesis became practical only when AI could collapse the workflow around software creation: setting up an environment, installing packages, configuring a database, debugging, deploying, hosting, and eventually monetizing.

That is where Replit’s story rhymes with Perplexity’s. Users do not merely want output from a model. They want an outcome. In Replit’s case, the outcome is not “generate code.” It is “make the app run.” Masad said AI was already strong at writing code, but code alone was not enough to create software. Replit Agent’s claimed breakout came from joining code generation to the surrounding production workflow.

| Layer of work | Why code generation alone is insufficient | Replit Agent’s claimed role |
|---|---|---|
| Environment | Users still need runtimes, packages, and configuration | Set up the working development context |
| Application logic | The model can generate code but may need iteration | Write and debug code in the project |
| Data | Apps often require persistence and database setup | Create or configure databases |
| Deployment | A local artifact is not a usable product | Deploy the application to the cloud |
| Business use | Builders often need payments or internal workflows | Integrate services such as Stripe and support business automation |

Replit’s most revealing internal test was whether non-engineers could use it. Masad described watching Jeff Burk, Replit’s head of partnerships, as a proxy customer: smart, but unable to configure Python. Burk failed at first, then eventually built the app. Masad took that as the signal that the agent had crossed from developer tool into market expansion.

The operating constraint then changed. Before Agent, Replit’s problem was finding demand. After launch, Masad said, the company had to catch up to demand. Enterprises were already using Replit internally and asking for deals. The company had to build sales, support, adoption capacity, and platform capacity. In his account, Replit went from a technical product-search problem to an organizational throughput problem.

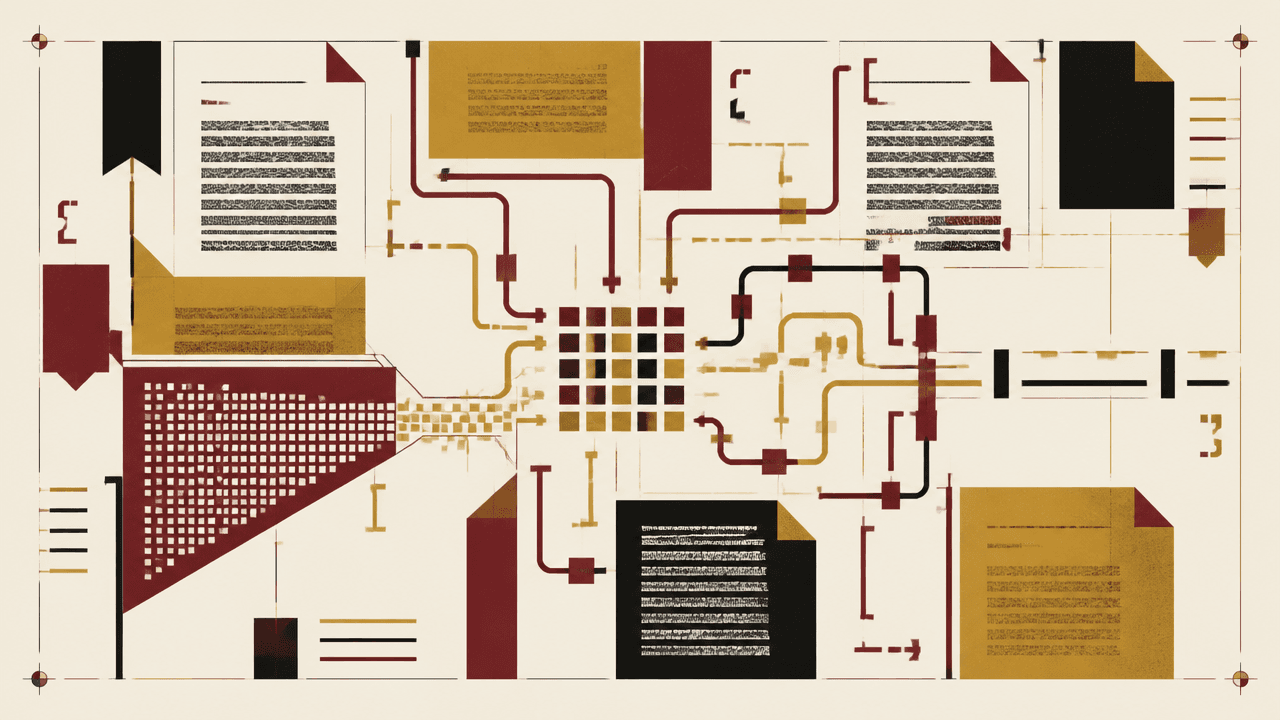

This is the useful lesson for applied AI beyond Replit. Agentic products become economically meaningful when they compress a full workflow, not when they show isolated capability. Perplexity’s “digital labor” theory and Replit’s revenue story are different forms of the same argument. The agent is valuable when it turns intent into completed work through the messy middle: permissions, context, tools, environment, deployment, review, and iteration.

The production bottleneck is feedback, control, and semantic observability

The harder part begins after agents are launched. Pydantic’s Samuel Colvin and Raindrop’s Zubin Kumar, Danny Gollapalli, and Ben Hylak approached production agents from different angles, but their claims fit together: teams need control loops, not one-time eval checklists.

Colvin’s focus was the ability to change a deployed agent safely. In his Pydantic AI and Logfire workshop, he argued that production agents need traces, evals, and typed managed variables that can alter prompts, models, max tokens, and other parameters without redeploying the application. Standard observability can show what happened. Colvin’s workflow is about turning observed behavior into controlled change.

The operational mechanism was managed variables: typed configuration objects, defined as Pydantic models, editable from Logfire. In the demo, an AgentConfig included instructions, model, and max tokens. Colvin changed the instruction to reply in French, then German, and changed the model field from an Anthropic model to GPT-4.1, without treating application deployment as the only control surface. His broader point was that prompt management is too narrow a frame. Agent configuration includes prompts, models, tools, context strategy, compaction behavior, and other knobs.
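
The shape of that idea can be sketched without any of the real tooling. The example below is an assumption-laden stand-in: a stdlib dataclass replaces the Pydantic model, and `ManagedVariable` is a hypothetical in-process holder, not Logfire’s API. The model names are placeholders. What it tries to show is the pattern of validated, versioned edits to a typed config while the application keeps running.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AgentConfig:
    """Typed agent settings; a stdlib stand-in for the Pydantic model in the demo."""
    instructions: str
    model: str
    max_tokens: int

    def __post_init__(self) -> None:
        # Reject bad values when the config is edited, not when a request runs.
        if not self.instructions.strip():
            raise ValueError("instructions must not be empty")
        if not self.model.strip():
            raise ValueError("model must not be empty")
        if self.max_tokens <= 0:
            raise ValueError("max_tokens must be positive")


class ManagedVariable:
    """Holds the live config; a hypothetical local substitute for a remote store."""

    def __init__(self, initial: AgentConfig) -> None:
        self._current = initial
        self.history = [initial]  # prior versions, kept so a change can be rolled back

    def get(self) -> AgentConfig:
        return self._current

    def update(self, **changes) -> AgentConfig:
        # dataclasses.replace builds a new instance, which re-runs validation.
        candidate = replace(self._current, **changes)
        self._current = candidate
        self.history.append(candidate)
        return candidate


config = ManagedVariable(
    AgentConfig(instructions="Reply in English.", model="example-model-a", max_tokens=512)
)
config.update(instructions="Reply in French.")  # prompt change, no redeploy
config.update(model="example-model-b")          # model swap, same mechanism
```

Because an invalid edit raises before it replaces the current value, a bad change never becomes the live configuration in this sketch.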

Managed variables matter because agent systems change faster than ordinary deployment cycles. If a model regresses, a prompt needs a targeted fix, or a subset of traffic should see a variant, teams need typed, observable ways to adjust behavior.
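
One common way to show a variant to a subset of traffic is deterministic hash bucketing. The sketch below is a generic illustration of that technique, not something described in the workshop; `in_variant` and its parameters are hypothetical names.

```python
import hashlib


def in_variant(user_id: str, experiment: str, percent: int) -> bool:
    """Deterministically place a user in the variant for `percent` of traffic."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    # Hashing experiment and user together gives each experiment its own split.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable bucket in 0..99
    return bucket < percent
```

The same user always lands in the same bucket for a given experiment, so a prompt or model variant stays consistent across that user’s sessions while the rollout percentage is adjusted.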

Colvin’s GEPA example showed both the promise and the limits of optimization. GEPA improved a narrow political-relations extraction benchmark from an initial-prompt score in the mid-to-high 80s to a reported best validation score of 96.69%. But the workshop also showed the familiar risks: prompt bloat, overfitting to insufficient examples, model dependence, imperfect golden data, and a live example where the optimized prompt failed to fix the visible case Colvin hoped it would.

Raindrop’s workshop widened the reliability problem beyond offline evals. Kumar argued that agents are non-deterministic, unbounded, and tool-using. They accept open-ended inputs, produce open-ended outputs, and act across external systems. That means many production failures will not appear as clean exceptions or be covered by golden datasets. A coding agent may fail only for users of a particular database provider. A task may go wrong through a strange workaround. A refusal pattern may rise after a feature change. Users may express frustration before conventional telemetry identifies the issue.

Raindrop’s taxonomy is useful because it names two different surfaces. Explicit signals are objective telemetry: tool errors, latency, cost, regenerations. Implicit signals are semantic patterns inferred from unstructured behavior: user frustration, refusals, task failure, capability gaps, jailbreak attempts, laziness, forgetting, and wins. Kumar’s point was not that teams should let LLMs vaguely judge quality. The best implicit signals, in his framing, are detectors: concrete binary or categorical signals that can be tracked over time.
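
A small sketch can show what "detector" means in practice: a function that returns yes or no per event, aggregated into a rate that can be tracked over time. This is not Raindrop’s implementation. The `Event` type, the phrase list, and the sample data are all invented, and the phrase match is only a placeholder for whatever semantic classifier a real system would use.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    day: str          # illustrative date string
    user_text: str
    tool_error: bool  # explicit signal: read straight from telemetry


FRUSTRATION_PHRASES = ("this is wrong", "not what i asked", "still broken")


def detect_frustration(event: Event) -> bool:
    """Implicit signal expressed as a binary detector (placeholder logic)."""
    text = event.user_text.lower()
    return any(phrase in text for phrase in FRUSTRATION_PHRASES)


def daily_rate(events, detector) -> dict:
    """Fraction of events per day on which the detector fired."""
    fired = defaultdict(int)
    total = defaultdict(int)
    for event in events:
        total[event.day] += 1
        fired[event.day] += int(detector(event))
    return {day: fired[day] / total[day] for day in sorted(total)}


events = [
    Event("2025-01-01", "Thanks, that worked.", tool_error=False),
    Event("2025-01-01", "Can you add a login page?", tool_error=True),
    Event("2025-01-02", "This is still broken.", tool_error=False),
    Event("2025-01-02", "Looks good.", tool_error=False),
]
frustration_by_day = daily_rate(events, detect_frustration)
tool_errors_by_day = daily_rate(events, lambda event: event.tool_error)
```

Explicit and implicit signals end up in the same shape here, a rate per day, which is what lets them be charted and compared side by side.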

| Production need | Pydantic emphasis | Raindrop emphasis |
|---|---|---|
| Know what happened | Traces through Logfire and OpenTelemetry-style observability | End-to-end trajectories, tool sequences, errors, and trace search |
| Define better behavior | Deterministic evals, golden datasets where possible, custom scoring functions | Semantic signals such as frustration, refusals, task failure, and capability gaps |
| Change the system | Typed managed variables for prompts, models, max tokens, and other configuration | Experiments comparing variants against production signals |
| Avoid false confidence | Optimization can overfit, bloat prompts, and depend on one model | Offline evals miss long-tail production behavior and fuzzy failures |
| Close the loop | Future direction: self-driving managed variables driven by evals | Alerts, clustering, self-diagnostics, metadata, and signal-based postmortems |

Gollapalli’s self-diagnostics example added another source of signal: the agent itself. He described giving an agent a generic report tool and asking it to tell creators about notable behavior, such as using a workaround after a file-write tool failed. His practical guidance was that the tool should be framed as helpful reporting, not self-incrimination, because models may avoid confessing wrongdoing if the tool name implies blame.
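
The framing point is easiest to see in a tool definition. The sketch below uses a generic JSON-Schema-style description that is not tied to any particular SDK; the tool name, description text, categories, and handler are all hypothetical, chosen to read as helpful reporting and not as an admission of fault.

```python
import json

# Hypothetical tool description with neutral, helpful wording.
REPORT_TOOL = {
    "name": "share_note_with_developers",
    "description": (
        "Send the developers a short note about anything notable in this run, "
        "such as a tool that failed or a workaround you used. "
        "Notes help improve the product."
    ),
    "parameters": {
        "type": "object",
        "properties": {
            "category": {"type": "string", "enum": ["tool_failure", "workaround", "other"]},
            "note": {"type": "string"},
        },
        "required": ["category", "note"],
    },
}

reports = []  # in a real system this would feed traces or an alerting pipeline


def handle_report(arguments_json: str) -> str:
    """Validate and store one report, then answer in a neutral tone."""
    args = json.loads(arguments_json)
    allowed = REPORT_TOOL["parameters"]["properties"]["category"]["enum"]
    category = args.get("category")
    note = args.get("note")
    if category not in allowed:
        raise ValueError(f"unknown category: {category!r}")
    if not isinstance(note, str) or not note.strip():
        raise ValueError("note must be a non-empty string")
    reports.append({"category": category, "note": note.strip()})
    return "Thanks, noted."
```

A call such as `handle_report('{"category": "workaround", "note": "File write failed; used a shell redirect."}')` would record the workaround case described above.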

Taken together, Pydantic and Raindrop are not saying “buy better observability” in the generic sense. They are saying that deployed agents need an operating discipline: representative data, explicit objectives, trace inspection, production signals, metadata, safe configuration knobs, and experiment loops. The hard question remains human and product-specific: what counts as better? Lower cost may matter. Lower latency may matter. Fewer refusals may matter. More tool use may be good or bad depending on whether it completes the task. A higher benchmark score may still fail a visible edge case.

That is the production reality behind the agent economy. If agents are sold as work, someone has to inspect the work, define quality, catch regressions, and safely change the system.

AI coding makes engineering judgment more valuable, not less

Replit’s story can be read as non-engineers gaining the ability to build software. Matt Pocock’s argument supplies the counterweight: generated code still lands inside systems, and systems still need architecture. In a conversation with Shawn Wang, Pocock said AI coding has made software-engineering fundamentals more important, not less. When he tried to ignore the code and treat prompts as the only meaningful layer, he said he ended up with “this terrible mess.”

His central claim is that codebases easy for humans to change are also easier for AI systems to change. The inverse also holds: a codebase difficult for humans to modify will be worse for AI. Pocock has therefore returned to older engineering texts and practices — Extreme Programming, The Pragmatic Programmer, John Ousterhout’s A Philosophy of Software Design, and domain-driven design — not as nostalgia, but as practical guidance for agentic development.

The important boundary is the part the human designs. Wang connected Pocock’s view to the idea of a “narrow waist”: a simple, stable interface that constrains internal complexity. Pocock mapped that to Ousterhout’s “deep modules,” where substantial functionality sits behind a simple interface. In that division of labor, the human defines the boundary and contract; the AI can fill in the internals. Delegation becomes safer when the receiving system has a clear shape.
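
A toy example of a deep module, offered as an illustration and not drawn from the conversation: the hypothetical `Cache` class below exposes a single method, while expiry and least-recently-used eviction stay hidden behind it. The human-owned contract is `get(key, load)`; everything inside could be rewritten by a person or an agent without touching call sites.

```python
import time
from collections import OrderedDict
from typing import Callable


class Cache:
    """Narrow interface: callers only ever use `get`."""

    def __init__(self, max_items: int = 128, ttl_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._max_items = max_items
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so tests can control time
        self._entries: "OrderedDict[str, tuple[float, object]]" = OrderedDict()

    def get(self, key: str, load: Callable[[], object]) -> object:
        """Return the cached value for `key`, calling `load` on a miss or expiry."""
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            self._entries.move_to_end(key)  # mark as recently used
            return entry[1]
        value = load()
        self._entries[key] = (now, value)
        self._entries.move_to_end(key)
        while len(self._entries) > self._max_items:
            self._entries.popitem(last=False)  # evict the least recently used entry
        return value
```

The interface is small enough to state in one sentence, which is the property that makes the internals safer to delegate.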

Domain language is part of that architecture. Pocock argued that domain-driven design is newly useful because it gives humans and models a shared vocabulary. His example was the word “mole”: in one system it might mean a skin feature, in another a spy, in another an animal. Mature systems accumulate this kind of local meaning. Without an explicit vocabulary, both humans and models can make wrong assumptions. Pocock’s “ubiquitous language” skill scans a codebase for terms and helps refine them into a Markdown reference that he keeps open while prompting.
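
The general idea of mining a codebase for vocabulary can be sketched in a few lines. This is not Pocock’s skill, whose internals the source does not describe; it is a rough, hypothetical approximation that counts words inside identifiers and emits a Markdown table for a human to fill in. The function names and the stopword list are assumptions.

```python
import keyword
import re
from collections import Counter

IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")
# Splits camelCase, PascalCase, and snake_case identifiers into words.
WORD = re.compile(r"[A-Z]+(?![a-z])|[A-Z]?[a-z]+")
STOPWORDS = {k.lower() for k in keyword.kwlist} | {"self"}


def candidate_terms(source: str, min_count: int = 2) -> list:
    """Return (word, count) pairs for words that recur inside identifiers."""
    counts = Counter()
    for identifier in IDENTIFIER.findall(source):
        for word in WORD.findall(identifier):
            lowered = word.lower()
            if lowered not in STOPWORDS:
                counts[lowered] += 1
    return [(word, n) for word, n in counts.most_common() if n >= min_count]


def glossary_markdown(terms) -> str:
    """Render terms as a Markdown table with the meaning left for a human."""
    lines = ["| Term | Occurrences | Meaning in this codebase |", "|---|---|---|"]
    for word, count in terms:
        lines.append(f"| {word} | {count} | TODO |")
    return "\n".join(lines)


sample = (
    "class MoleScanner:\n"
    "    def scan_mole(self, mole_image):\n"
    "        return mole_image\n"
)
terms = candidate_terms(sample)
glossary = glossary_markdown(terms)
```

The counting is the easy half. Deciding whether "mole" here means a skin feature, a spy, or an animal is the part that still needs a person, which is why the meaning column starts empty.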

This closes the loop with the day’s agent material. Replit says non-engineers can now create and deploy software through an agentic workflow. Perplexity says users can delegate work to digital labor. Pydantic and Raindrop say production agents need evals, observability, managed variables, and semantic signals. Pocock adds that the systems being modified still need coherent modules, interfaces, and domain language.

Tool-specific knowledge also decays quickly. Pocock noted that AI SDK v5 was announced during a course built around v4, and Claude Code changed while he was teaching it. That volatility led him to make a course nominally about Claude Code into one mostly about engineering fundamentals. The durable layer is not memorizing the current harness. It is understanding how to structure code, define boundaries, teach domain concepts, and preserve intent across changing tools.