AI Coding Makes Software-Engineering Fundamentals More Important

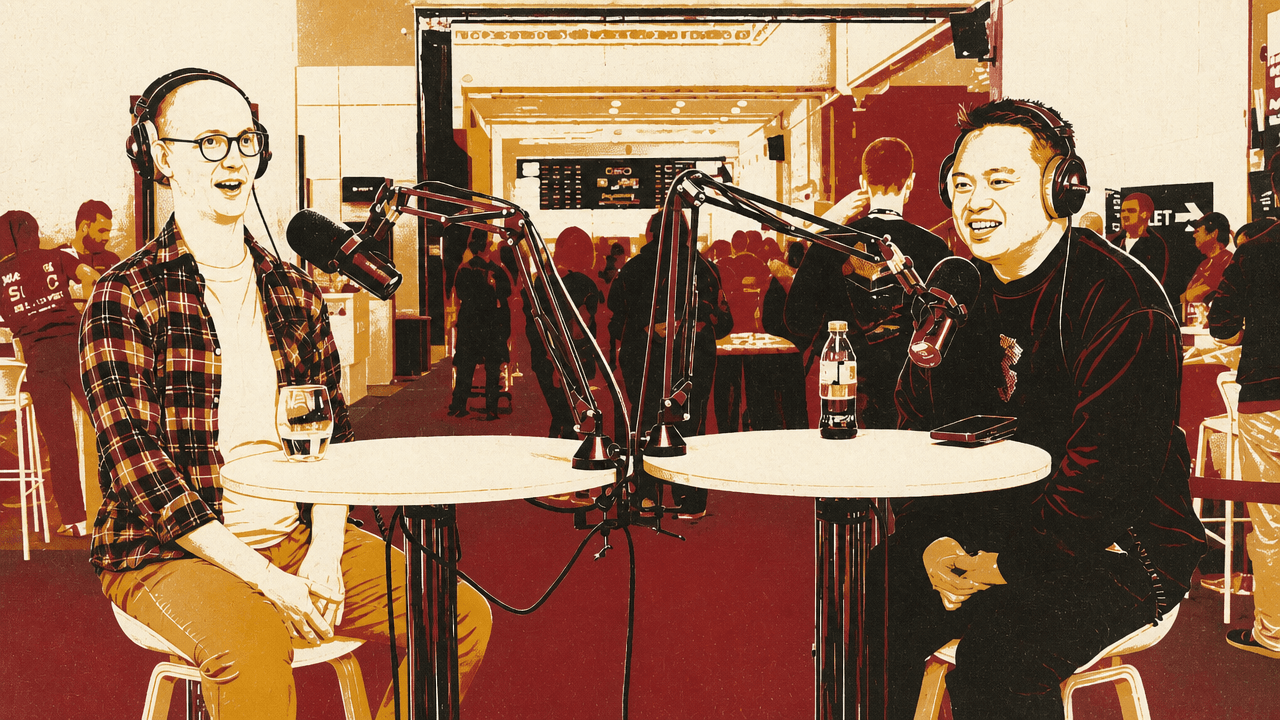

Matt Pocock, a TypeScript teacher now focused on AI engineering, argues that AI coding has made software-engineering fundamentals more important rather than less. In a conversation with Shawn Wang, Pocock says code generation works best when humans define the architecture, module boundaries and domain language that give agents a coherent system to change. The lesson he draws from Claude Code and other fast-moving tools is that tool-specific knowledge ages quickly, while engineering judgment remains the durable layer.

AI coding has made old software-engineering texts newly practical

Matt Pocock’s current position is not that code has stopped mattering, but that the ability to generate code makes engineering fundamentals harder to avoid. He framed the prevailing temptation clearly: if an AI system can take English descriptions and compile them into code, then perhaps the code itself becomes merely a target artifact. Under that view, the developer does not need to hold the codebase in mind; the prompt is the meaningful layer and the implementation can be treated as disposable output.

Pocock said his experience has pointed the other way. Whenever he tried to ignore the code, he “would just end up with this terrible mess.” That led him back to older software-engineering books and practices: Extreme Programming, The Pragmatic Programmer, John Ousterhout’s A Philosophy of Software Design, and domain-driven design. His discovery was not that these texts needed to be replaced for the AI era, but that many of their ideas could be placed directly into prompts and workflows.

The useful translation, for Pocock, is that codebases designed to be easy for humans to change are also easier for AI systems to change. The human does not necessarily need to review every generated line, but still needs a grasp of the architecture, especially the modules. That architectural intent gives the AI a better working surface.

If you have a codebase that's easy to change for humans, it's going to be easy for AI to change too.

The inverse also matters. Pocock warned that a codebase that is difficult for humans to change will be “even worse for AI.” AI does not remove the cost of poor structure; it still has to operate inside the system it is given.

That is why the course he was finishing, though nominally about Claude Code, had become “mostly about engineering and software fundamentals.” The same pattern showed up in his conference talk. The tooling changes quickly: during one previous course, AI SDK v5 was announced on day two of a course built around v4; during the Claude Code course, a “one million context window” was released around the beginning, and the Claude Code source later leaked. Pocock called himself “cursed” by timing, but also said the volatility reinforced the point. A course centered on a specific tool surface ages quickly. A course centered on engineering judgment is more durable.

The useful boundary is the part the human designs

Shawn Wang connected Pocock’s argument to the idea of a “narrow waist” in system design: the place where a broad range of internal complexity is constrained by a simple, stable interface. Wang borrowed the metaphor from internet architecture, where he described TCP/IP and HTTP as the dominant middle layers. Applied to a codebase, the point is to contain “slop”: define what goes in and what comes out, then allow more freedom inside.

Pocock mapped that directly to Ousterhout’s idea of “deep modules”: a large amount of functionality behind a simple interface. In a well-designed system, the human can be intentional about the interface, understand it, and then delegate implementation details to the AI. The AI is not asked to invent the system’s shape from scratch; it works inside a box whose contract has been designed by someone who understands the architecture.

That division of labor is where Pocock said AI coding has worked best for him. The human decides the boundary. The model fills in the internals. The more explicit the boundary, the less the developer has to trust a model’s implicit assumptions.
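
A minimal TypeScript sketch of that division of labor may help. Everything in it is hypothetical and chosen only for illustration: the `RateLimiter` interface, the `createRateLimiter` factory and the sliding-window behavior do not come from the conversation. The interface is the part a human would design and review; the body of the factory is the part that could be delegated and rewritten freely, as long as the contract keeps holding.

```typescript
// Hypothetical example: these names are illustrative, not from the conversation.

// The narrow interface: the part the human designs and understands.
interface RateLimiter {
  /** Returns true if the caller identified by `key` may proceed at time `nowMs`. */
  allow(key: string, nowMs: number): boolean;
}

// The internals: the part an agent could be asked to implement or replace
// without changing the contract above.
function createRateLimiter(maxRequests: number, windowMs: number): RateLimiter {
  const hits = new Map<string, number[]>();

  return {
    allow(key, nowMs) {
      // Keep only the timestamps that still fall inside the sliding window.
      const recent = (hits.get(key) ?? []).filter((t) => nowMs - t < windowMs);
      const allowed = recent.length < maxRequests;
      if (allowed) recent.push(nowMs);
      hits.set(key, recent);
      return allowed;
    },
  };
}

// Usage: two requests per second for each key.
const limiter = createRateLimiter(2, 1000);
const results = [
  limiter.allow("user-1", 0),
  limiter.allow("user-1", 100),
  limiter.allow("user-1", 200), // third call inside the window is rejected
  limiter.allow("user-1", 1200), // earlier calls have aged out
];
// results is [true, true, false, true]
```

Callers depend only on `allow`, so the storage strategy behind it can change without touching any code outside the module.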

Wang extended the same model beyond software. AI Engineer, he said, is now a nine-person business where different people own different “parts of the elephant.” Running the conference involves connecting website, speakers, content, AV, hotels, accommodations, and other operational details into a system where attendees do not notice the rough edges. He said it increasingly feels less like programming and more like systems design.

Pocock agreed that the same principle can apply to organizations as well as codebases: self-contained boxes, clear interfaces, and delegated implementation. The lesson is not only “use modules.” It is that delegation becomes safer when the receiving system, whether an AI agent or a person in an organization, has a clear contract.

Domain-driven design gives the model a language to share with the developer

Matt Pocock described domain-driven design as newly attractive because it is already a mature vocabulary for aligning software with language. He was careful not to present himself as a DDD expert; he said he had encountered it relatively recently. But he sees its building blocks and practices as useful because they are old enough and widely discussed enough to be present in the “latent space” of models.

That matters because the developer does not need to invent a private terminology system for every project. If a developer can say that they are using concepts from domain-driven design, the model is more likely to understand the shape of the request. Pocock described DDD as “very flexible” and “very composable,” especially because it is already concerned with aligning code to the language of the domain.

His shorthand for the AI era is that domain-driven design helps the developer and the AI speak the same language.

The concrete example was a skill in Pocock’s public mattpocock/skills repository, which he said had become “insanely popular” and had roughly 13,000 stars. One of the skills is a “ubiquitous language” skill. In DDD, ubiquitous language is the shared vocabulary used by developers and domain experts so that terms in conversation map cleanly to concepts in the system.

Pocock’s example was the word “mole.” In one application, a mole might refer to a skin feature in a health context. In another, it might refer to a spy. In another, it could be an animal in a zoo application. Mature projects accumulate this kind of domain-specific jargon, and without an explicit vocabulary, both humans and models can make wrong assumptions.

His skill scans a codebase, identifies terms, and refines them with the developer into a Markdown document. Pocock said he keeps that document open when prompting because it gives him clearer conversations with the AI. It is not only a machine instruction file; it is also a human reference that improves the prompt writer’s own precision.
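
As a rough illustration of what such a document could contain, the sketch below renders a small glossary to Markdown. It is not Pocock's skill: the `GlossaryTerm` shape, the `renderGlossary` function and the sample definition are assumptions made for this example, and the conversation does not describe the real output format.

```typescript
// Hypothetical glossary shape; the real skill's output format is not
// described in the conversation.
interface GlossaryTerm {
  term: string;
  definition: string;
  /** Meanings the word does NOT have in this project. */
  notToBeConfusedWith?: string[];
}

/** Renders the terms as a Markdown document, sorted alphabetically by term. */
function renderGlossary(title: string, terms: GlossaryTerm[]): string {
  const sorted = [...terms].sort((a, b) => a.term.localeCompare(b.term));
  const lines: string[] = [`# ${title}`, ""];
  for (const t of sorted) {
    lines.push(`## ${t.term}`, "", t.definition);
    if (t.notToBeConfusedWith && t.notToBeConfusedWith.length > 0) {
      lines.push("", `Not to be confused with: ${t.notToBeConfusedWith.join(", ")}.`);
    }
    lines.push("");
  }
  return lines.join("\n");
}

// Usage: pin down what "mole" means in a health application.
const glossary = renderGlossary("Ubiquitous language", [
  {
    term: "Mole",
    definition: "A skin feature recorded for a patient.",
    notToBeConfusedWith: ["a spy", "the animal"],
  },
]);
```

Keeping the glossary as data and rendering it on demand is one possible design; writing the Markdown by hand would serve the same purpose of giving the developer and the model one shared definition per term.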

He does keep a reference to the generated document in agents.md, but he is cautious about putting too much context directly into agent instructions. The file exists in the repository, and when the agent explores the repo, searches with the project’s own terms can surface it. The design is modest: do not overload the agent’s system context, but make the project language discoverable.

Pocock’s teaching style is built around durability, not punditry

Matt Pocock resisted the label “YouTuber” as an identity, not because he rejects video, but because he distinguishes teaching from punditry. He said there is a category of YouTubers who predict the future, chase hot takes, or change favorite tools every two weeks. That content can perform well, and he acknowledged that the numbers are tempting. But he said he is trying to teach material that is durable and that he feels good about.

His background helps explain the emphasis. Before becoming a developer, Pocock spent six years as a voice coach. When he entered software at the bottom and worked his way from junior to mid-level to senior roles, he found that communication was a “ridiculous, overpowered skill” relative to what many other developers had. Over the last few years, he has narrowed his focus around teaching rather than commentary.

That focus changes how he researches. He described his preparation process as an “explore and exploit” cycle. For a recent course, he spent about two months producing roughly four and a half hours of tightly edited video, with many exercises and around a hundred units. He begins by asking what is interesting in the environment, what is worth showing, and what his angle should be. He collects a large volume of loose notes in an Obsidian vault, in a style he compared to a Zettelkasten.

Those notes gradually “coagulate” into a plan. Pocock also uses a custom application he built to plan course material, which lets him see sections and groupings of information. From there, he prioritizes lessons as P1, P2, and P3 based on how essential they are. He records mostly the P1s, and much of the rest is left out.

Each lesson should only teach one thing. Each lesson should depend, and the dependencies between all the knowledge should be super clear.
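
One way to make those two rules concrete is to model lessons as data with explicit priorities and dependencies. The sketch below is an assumption-heavy illustration, not a description of Pocock's planning application: the `Lesson` shape, the `orderLessons` function and the sample lesson names are all hypothetical. It keeps only the P1 lessons and orders them so that every dependency comes before the lesson that needs it.

```typescript
// Hypothetical data model; not taken from Pocock's planning application.
type Priority = "P1" | "P2" | "P3";

interface Lesson {
  id: string;
  teaches: string; // the single thing this lesson teaches
  priority: Priority;
  dependsOn: string[]; // ids of lessons that must come first
}

/**
 * Returns lesson ids ordered so that every dependency precedes its dependents.
 * Throws if a dependency is missing from the list or if dependencies form a cycle.
 */
function orderLessons(lessons: Lesson[]): string[] {
  const byId = new Map<string, Lesson>();
  for (const lesson of lessons) byId.set(lesson.id, lesson);

  const ordered: string[] = [];
  const state = new Map<string, "visiting" | "done">();

  function visit(id: string): void {
    const lesson = byId.get(id);
    if (!lesson) throw new Error(`Unknown lesson: ${id}`);
    if (state.get(id) === "done") return;
    if (state.get(id) === "visiting") throw new Error(`Circular dependency at: ${id}`);
    state.set(id, "visiting");
    for (const dep of lesson.dependsOn) visit(dep);
    state.set(id, "done");
    ordered.push(id);
  }

  for (const lesson of lessons) visit(lesson.id);
  return ordered;
}

// Usage: record only the P1 lessons, in dependency order.
const allLessons: Lesson[] = [
  { id: "generics", teaches: "Generic functions", priority: "P1", dependsOn: ["functions"] },
  { id: "functions", teaches: "Typing functions", priority: "P1", dependsOn: [] },
  { id: "decorators", teaches: "Decorators", priority: "P3", dependsOn: ["generics"] },
];
const core = allLessons.filter((lesson) => lesson.priority === "P1");
const recordingOrder = orderLessons(core);
// recordingOrder is ["functions", "generics"]
```

Because a missing dependency raises an error, cutting a lower-priority lesson that a P1 lesson relies on shows up immediately instead of surfacing later as a gap for the learner.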

The constraint underneath the process is that a learner should be challenged without being overwhelmed. Pocock presented this less as a formal theory than as a set of instincts built over years of teaching. It also explains why older software texts are useful to him: they provide models and phrases he can use to explain ideas. He said he spends his time both learning the subject and finding the right phrases for it.

AI can assist learning, but Pocock still designs around doing the work

Matt Pocock separated teaching into three categories: knowledge, skills, and wisdom. Knowledge is taught through lectures: concepts, terminology, and how things work. Skills are taught through practice: interactive exercises and doing the thing. Wisdom is harder to teach; for that, he recommends small-group workshop discussions.

AI changes that third category somewhat. Pocock said working with AI can help people “get to wisdom a little bit” by talking through situations with a model. Books are mostly knowledge-based; they may contain wisdom, but the reader has to do more work to extract it. AI provides a more conversational learning mechanism than books or lectures alone.

But Pocock also reported a practical limit: the more he leans into experimental AI-native learning formats, the more it can turn people off his materials. His conclusion was not that AI tutoring is useless, but that a traditional approach still works for most people. Wang agreed from the broader creator-education world: during the cohort-course wave, interactive discussions were emphasized, but many learners still wanted lectures, perhaps with some homework, and many did not complete the homework.

Pocock’s own courses still force work. In his TypeScript material, he often thrusts learners into a problem first and gives them the knowledge afterward. That structure makes the learner confront the gap before receiving the explanation. The AI era has not removed that need.

TypeScript’s pull in AI engineering is practical, not settled

Shawn Wang raised a shift he said he had noticed in AI engineering: he had initially tried to keep AI Engineer balanced between Python and TypeScript, but now sees TypeScript gaining ground. Wang said that, in his view, TypeScript had overtaken Python in a GitHub survey this year, and that he had not foreseen it. He still described Python as expressive and especially useful on the backend, while noting the familiar dependency issues in the TypeScript world.

Matt Pocock declined to make a grand prediction. He repeatedly emphasized that he is “not a pundit,” and admitted his own echo chamber is “100% TypeScript.” But he argued that TypeScript cannot be overlooked in conversations about building applications because of its rich ecosystem of frameworks and tools. Wang pointed to Vercel, Next.js, and Cloudflare; Pocock agreed with the ecosystem point while joking about the crowded landscape.

The practical claim was narrower than “TypeScript will win.” Pocock said that when people are building chat applications and care about UX and shipping polished products, he mostly sees them doing it in TypeScript. Wang wondered aloud whether TypeScript might win AI engineering in a way he had not anticipated, and said that would affect which frameworks he promotes or bets on in his own work.

Pocock’s own experiments have touched this question. He built a TypeScript evals framework called Evalite, partly while exploring the idea of TypeScript “coming and eating Python.” But he said the framework had stalled and that, from his experience, people are “not really interested in evals” yet. Wang noted that many people at the conference would disagree. Pocock’s point was about demand for his content and conversations: evals did not seem to be attracting broad excitement in his audience.

The next agent debate is control versus convenience

Matt Pocock thinks the coding-agent debate is “sort of ending” in one respect: agents are converging toward similar shapes. He described the emerging pattern as file-based systems with a simple set of tools. His next interest is less in one particular agent than in methods that work across agents: software-factory-style practices that sit at a meta level above the harness being used.

The key design tension, as he sees it, is inversion of control. He expects one question to become more important over the next few months: how much control sits with the developer, and how much is hidden inside the agent harness.

Shawn Wang pushed on that: don’t developers want less control, not more? Pocock answered with a trade-off. With Claude Code, at least until recently, developers could not see its internals. There was a box around the tool, and that could be comforting. The developer could lean into what the harness wanted and avoid worrying about the underlying machinery. The benefit is ease of use.

The cost is reduced observability and less ability to control or tweak what is happening. Pocock contrasted Claude Code with a more primitive, composable approach: tiny pieces that the developer builds up from the ground. If someone can use that style well, they get control and observability across the system. But they also inherit more maintenance burden, more details to care about, and more places where things can go wrong.
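
The contrast can be sketched in a few lines of TypeScript. None of the names below are real agent-harness APIs: `runLoop`, `closedHarness`, the `decide` callback (a stand-in for a model call) and the `upper` tool are hypothetical, and a real harness is far more involved. The point is only where the control sits. In the composable version the caller supplies the tools and can observe every step; in the closed version those choices are fixed inside the function and only the final output comes back.

```typescript
// All names here are hypothetical; none are real agent-harness APIs.
interface Step {
  tool: string;
  input: string;
}
interface StepResult extends Step {
  output: string;
}
type Tool = (input: string) => string;
type Decide = (task: string, history: StepResult[]) => Step | null;

interface LoopOptions {
  decide: Decide; // stand-in for a model call that picks the next step
  tools: Record<string, Tool>; // the caller chooses the tool set
  onStep?: (result: StepResult) => void; // the caller can observe every step
  maxSteps?: number;
}

// Composable style: small pieces assembled, and maintained, by the developer.
function runLoop(task: string, options: LoopOptions): StepResult[] {
  const history: StepResult[] = [];
  const maxSteps = options.maxSteps ?? 10;
  while (history.length < maxSteps) {
    const step = options.decide(task, history);
    if (step === null) break;
    const tool = options.tools[step.tool];
    if (!tool) throw new Error(`Unknown tool: ${step.tool}`);
    const result: StepResult = { ...step, output: tool(step.input) };
    history.push(result);
    options.onStep?.(result);
  }
  return history;
}

// Closed style: the same loop, but tools and policy are fixed inside the box.
function closedHarness(task: string): string {
  const history = runLoop(task, {
    decide: (t, h) => (h.length === 0 ? { tool: "upper", input: t } : null),
    tools: { upper: (s) => s.toUpperCase() },
  });
  return history.map((r) => r.output).join("\n");
}

// Usage: the composable call exposes each step to the caller.
const seen: string[] = [];
runLoop("fix the bug", {
  decide: (t, h) => (h.length === 0 ? { tool: "upper", input: t } : null),
  tools: { upper: (s) => s.toUpperCase() },
  onStep: (r) => seen.push(`${r.tool}: ${r.output}`),
});
const closedOutput = closedHarness("fix the bug");
```

The closed call is shorter to write, while the composable call needs more wiring but leaves every decision visible and replaceable.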

That is the trade-off Pocock wants to explore: the closed harness that makes the easy path easy, versus the composable primitive system that gives the developer more power and more responsibility. His direction is not simply “more abstraction” or “less abstraction.” It is a search for methods that preserve developer intent while still taking advantage of agentic implementation.

Small AI skills may travel beyond software

Shawn Wang broadened the question from software engineering to general productivity: how people can live, work, run teams, and run companies more efficiently with AI. He pointed to Obsidian as an example of a surprisingly small team with outsized impact, saying the company had four people and had recently posted a job for a fifth, attracting wide attention because users were surprised by how much had been built with such a small staff.

Matt Pocock said software engineers appear to be a step ahead because they work in a domain where AI can already produce useful output. That makes software a laboratory for patterns that may later be pulled into everyday work.

One of his examples is a skill called “grill me,” from mattpocock.com/skills, which he described as only about three sentences long. Its function is to make the AI interview the user relentlessly until they reach a shared idea. Pocock said he wants that pattern in any domain where he needs the AI to align with him: the model should question its assumptions and his assumptions until they arrive at a shared design concept.

He has used that approach for generating documents and other tasks beyond narrow coding work. The important property is composability. The more Pocock can break useful AI behaviors into small, reusable parts, the more easily they can move from software engineering into other kinds of work.