Production Agents Need Evals and Managed Variables After Deployment

Samuel Colvin of Pydantic argues that production agents need more than observability after deployment: they need evals, traces, and typed configuration that can change prompts, models, and other parameters without a redeploy. Using Pydantic AI, Logfire, managed variables, and GEPA, he shows a workflow for moving from manual prompt tuning toward continuous optimization. His case is practical rather than automatic: GEPA can improve a narrow benchmark, but only if the team has representative data, sound evaluation criteria, and a clear definition of what better means.

Production agents need a way to change after they are deployed

Samuel Colvin framed the problem as what happens after an agent is already live. Deploying an agent is not the end of the engineering loop: prompts need to change, models may need to change, evaluation criteria need to improve, and behavior needs to be observed closely enough that optimization is based on failures rather than intuition.

Colvin’s stack for the demonstration was Pydantic AI, the agent framework, and Pydantic Logfire, the observability platform. Logfire is, in his description, a general observability platform under the hood: OpenTelemetry, logs, metrics, and traces. He was explicit that he does not “really believe in AI observability” as a durable standalone category. In his view, it is a feature rather than a category and will eventually be eaten either by observability products or by AI products. Pydantic currently sells Logfire as AI observability because that is what people understand they want, but the features he described go beyond viewing traces: evals, managed variables, and work underway toward agent optimization from the platform.

The practical distinction matters. Standard observability tells an engineering team what happened. The workflow Colvin demonstrated uses traces and evaluation results to change the agent itself: first manually, then through managed variables, and, in the product direction he said Pydantic is working on, through more automated optimization. Managed variables are the bridge. They make prompts and other configuration values editable from Logfire without redeploying services; in the demo, those values included a structured agent configuration with instructions, model, and max tokens.

That is the “playground in prod” idea in its operational sense: not treating production as an unstructured testing ground, but giving production systems controlled, typed, observable knobs that can be changed, targeted, and compared. Prompt management is a narrow version of this. Colvin’s version generalizes the concept: a managed variable can be any object expressible as a Pydantic model, not just a string prompt.

The second major tool was GEPA, which Colvin described as an optimization library for strings. The string might be a plain text prompt, or it might be JSON containing multiple values. The name comes from “Genetic-Pareto”: genetic because it searches for better candidates by mutating and combining successful values, and Pareto because it draws from the Pareto frontier of the best-performing examples. Colvin’s analogy was racehorse breeding: you do not usually breed in the slow horse to see what happens; you breed from the strongest candidates and hope the next generation improves.
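To make the Pareto idea concrete, here is a minimal sketch in plain Python. It is not GEPA's code. It assumes each candidate prompt has a list of per-case scores, and it keeps every candidate that no other candidate matches or beats on all cases while beating it on at least one. The candidate names and scores are invented for illustration.

```python
def dominates(a: list[float], b: list[float]) -> bool:
    """True if a is at least as good as b on every case and strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_frontier(scores: dict[str, list[float]]) -> list[str]:
    """Return the names of candidates that no other candidate dominates."""
    return [
        name
        for name, own in scores.items()
        if not any(dominates(other, own) for o, other in scores.items() if o != name)
    ]


# Hypothetical per-case scores for three candidate prompts (illustrative only).
candidates = {
    "initial": [1.0, 0.0, 1.0],
    "expert": [1.0, 1.0, 0.0],
    "weak": [1.0, 0.0, 0.0],
}
frontier = pareto_frontier(candidates)
print(frontier)  # "weak" is dropped: "initial" matches or beats it on every case
```

Both "initial" and "expert" survive because each wins a case the other loses, which is the kind of diversity a breeding step can then combine.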

This optimization technique, whilst the state of the art, is not actually that groundbreaking.

He deliberately demystified the approach. Relative to the complexity of the models themselves, the optimization loop is crude: ask an agent to generate a better prompt, evaluate whether it performs better, keep useful pieces, and continue until time or budget runs out or a target score is reached. The sophistication is not that the loop is magical. It is that the loop is tied to explicit evals and observable runs rather than to vibes.
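The loop he describes can be sketched in a few lines. This is a simplified greedy version, not GEPA's algorithm: it keeps one best candidate rather than a frontier. The `optimize`, `toy_propose`, and `toy_evaluate` names are hypothetical, and the toy proposer and evaluator stand in for an LLM agent and a real eval run.

```python
from typing import Callable


def optimize(
    seed: str,
    propose: Callable[[str, float], str],
    evaluate: Callable[[str], float],
    max_calls: int,
    target: float,
) -> tuple[str, float, int]:
    """Greedy propose/evaluate loop: keep a candidate only if it scores higher."""
    best, best_score = seed, evaluate(seed)
    calls = 1  # evaluation calls spent so far
    while calls < max_calls and best_score < target:
        candidate = propose(best, best_score)
        score = evaluate(candidate)
        calls += 1
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score, calls


# Toy stand-ins (hypothetical): the score is the share of required rules present.
RULES = ["exclude spouses", "exclude siblings", "exclude children"]


def toy_evaluate(prompt: str) -> float:
    return sum(rule in prompt for rule in RULES) / len(RULES)


def toy_propose(prompt: str, score: float) -> str:
    missing = [rule for rule in RULES if rule not in prompt]
    return prompt + "; " + missing[0] if missing else prompt


best, best_score, calls = optimize(
    "extract ancestors", toy_propose, toy_evaluate, max_calls=10, target=1.0
)
```

The loop stops on whichever comes first: the call budget or the target score. Everything interesting lives in `propose` and `evaluate`.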

The demo task exposed the exact kind of prompt failure evals are meant to catch

The structured-extraction task was deliberately narrow: identify political ancestors or parent-generation relatives of UK Members of Parliament from their Wikipedia pages. The motivating example came from a question Colvin had previously investigated after listening to a discussion on The Rest Is Politics about political dynasties. He had used Pydantic AI to analyze MPs’ Wikipedia pages and estimate the share with politician ancestors, later recalling the number as perhaps 24%. At the time, he said, he ran the analysis with whatever model he could get, submitted the question, heard it read out, and did not rigorously check how well the agent had done.

For this workshop, that old task became an optimization target. The agent takes the text of an MP’s Wikipedia page and returns structured output: a list of political relations, each with fields such as name, role, relation, and party. The HTML pages were pre-scraped into an archive so participants would not need to scrape Wikipedia during the session. BeautifulSoup was used to strip page HTML down to text before passing it to the agent. The Pydantic AI agent was configured with a structured output type: a list of political relation objects.
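A rough sketch of the two pieces in that paragraph follows, using only the standard library. The workshop used BeautifulSoup and a Pydantic output type; here `html.parser` and a dataclass are swapped in so the example runs without dependencies. The field names come from the description above, but the field types and the `TextExtractor` and `html_to_text` helpers are assumptions.

```python
from dataclasses import dataclass
from html.parser import HTMLParser
from typing import Optional


@dataclass(frozen=True)
class PoliticalRelation:
    """One extracted relative; field types are assumed, not taken from the demo."""
    name: str
    role: str
    relation: str
    party: Optional[str] = None


class TextExtractor(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def html_to_text(html: str) -> str:
    """Crude HTML-to-text: join text nodes and collapse whitespace."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join("".join(parser.parts).split())


page = "<html><style>p{}</style><p>Son of <a>Neil Kinnock</a>, former Labour leader.</p></html>"
text = html_to_text(page)
example = PoliticalRelation(name="Neil Kinnock", role="Labour leader", relation="father")
```

This version does not insert spaces between adjacent block elements, so a real pipeline would want a proper HTML library, as the workshop used.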

The subtlety was not whether models could find relations at all. Colvin said they were “pretty damn accurate” at finding political relations, even when relatively small or “dumb” models were used. The recurring failure was respecting the definition of the task. The agent was supposed to find ancestors or parent-generation relatives. It should not count spouses, siblings, children, grandchildren, cousins, or other same-generation or downstream relations, even if those people were politicians.

That distinction repeatedly confused models. Once asked for “relations,” they tended to include spouses, children, siblings, or in-laws. Colvin said that when he first solved the problem a year earlier, he effectively gave up on getting the model to obey the ancestry boundary perfectly. He let it include relations of all kinds, then post-processed the output to remove obviously invalid relation types. In the workshop, the point was to see whether prompt optimization could make the agent discount non-ancestors directly.
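The post-processing workaround can be sketched as a simple filter. The excluded-term list below is an assumption built from the relation types named in the task definition, and the matching rule (whole-word comparison on the relation string) is illustrative, not Colvin's original code.

```python
# Relation words that signal a same-generation or downstream relative (assumed list).
EXCLUDED_TERMS = {
    "wife", "husband", "spouse", "partner",
    "brother", "sister", "sibling", "cousin",
    "son", "daughter", "child",
    "grandson", "granddaughter", "grandchild",
}


def is_ancestor_relation(relation: str) -> bool:
    """Reject a relation if any whole word in it is an excluded term."""
    words = relation.lower().replace("-", " ").split()
    return not any(word in EXCLUDED_TERMS for word in words)


# Illustrative raw model output for Stephen Kinnock, before filtering.
raw = [
    {"name": "Neil Kinnock", "relation": "father"},
    {"name": "Glenys Kinnock", "relation": "mother"},
    {"name": "Helle Thorning-Schmidt", "relation": "wife"},
]
kept = [r for r in raw if is_ancestor_relation(r["relation"])]
```

Whole-word matching matters here: a substring check for "son" would wrongly reject any relation text that merely contains those letters.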

The initial prompt was intentionally sparse: “Inspect the supplied Wikipedia page text for a UK MP and extract only ancestor or parent-generation relatives who held political roles.” A more detailed “expert” prompt added rules such as excluding spouses, partners, siblings, cousins, children, and grandchildren; using the relationship stated on the page as the relation value; and keeping the political role short and specific. The expert prompt was not presented as a perfected prompt, but as the kind of better version a human might write, or might ask a model to write, after seeing the task.

The golden dataset was a JSON file of supposedly correct relations for MPs. Colvin was careful about its status. It had been generated with a similar script using Opus 4.6, then checked “quite a lot,” and he described it as appearing “pretty much correct,” not guaranteed perfect. One visible example was Stephen Kinnock. The golden relations included his father, Neil Kinnock, and mother, Glenys Kinnock, but the underlying relation data could also include his wife, the former prime minister of Denmark, which illustrated why the ancestry filter mattered.

That caveat about the golden set became important later. Evals are much easier when there is a reference answer. If the reference answer is imperfect, the optimization target is imperfect. If the reference answer fails to cover important edge cases, the optimizer can overfit around them.

Deterministic evals beat LLM-as-judge when the answer can be specified

The evaluation harness loaded the golden relations file, built a dataset with one case per MP, and registered a custom evaluator. Colvin did not claim the evaluator was perfect. He treated it as a useful start: it compared the model output to the golden data and produced multiple metrics or assertions, with accuracy as the most relevant headline measure.

He contrasted this with LLM-as-judge evaluation. Logfire and Pydantic AI support prebuilt evaluators including LLM-as-judge, but Colvin’s view was that deterministic evals are generally better when they are available. His phrase for LLM-as-judge was “the lunatics running the asylum”: using another model as the judge can be useful, but it introduces its own failure modes. If the task can be evaluated against a golden dataset, a deterministic comparison is preferable.

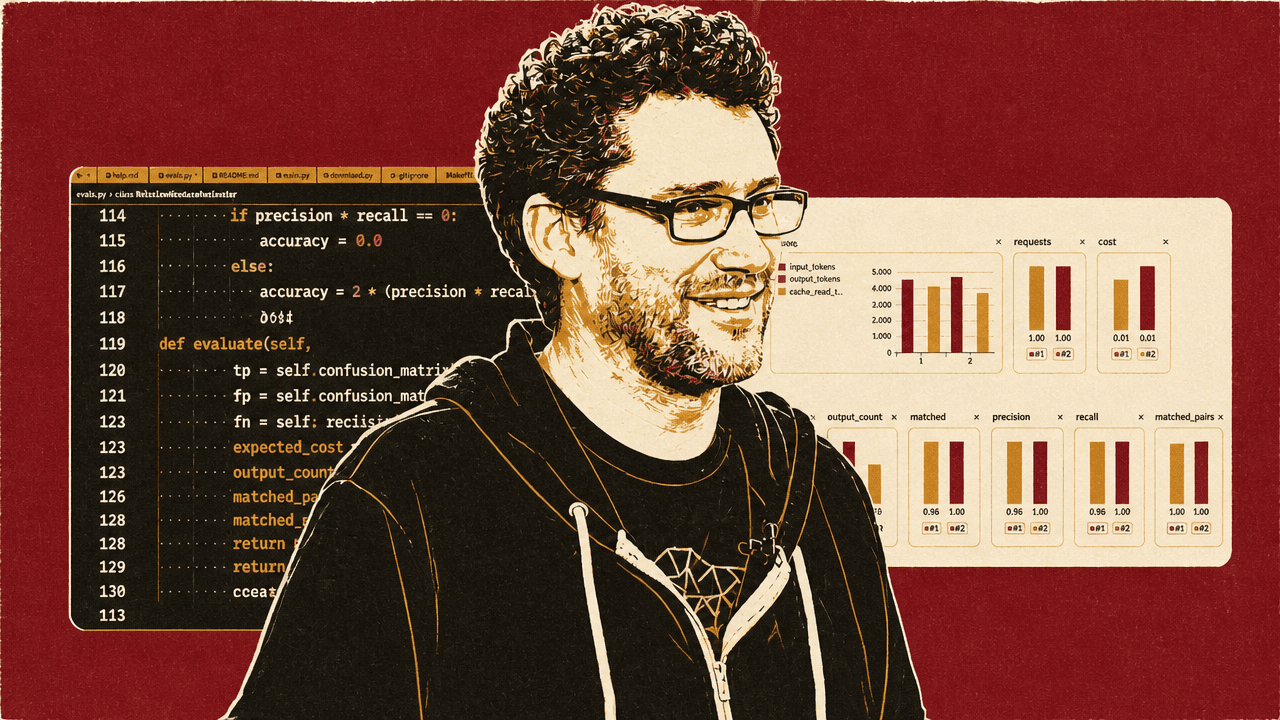

The eval runner used Pydantic AI’s override functionality to test different prompts and models against the same agent code. In the workshop, the model was held mostly constant, using gateway/openai:gpt-4.1 for speed and reasonable performance. The dataset evaluation ran in parallel with a max concurrency of five and produced traces in Logfire. The terminal showed only progress; Logfire carried the details.
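Bounded parallelism of this kind is usually a semaphore around each case. The sketch below uses plain `asyncio` rather than the eval library's own runner. `evaluate_all` and `fake_case` are hypothetical names, and the fake case only sleeps in place of an agent call.

```python
import asyncio

MAX_CONCURRENCY = 5  # the limit mentioned in the workshop


async def evaluate_all(case_names, run_case, max_concurrency=MAX_CONCURRENCY):
    """Run every case, allowing at most max_concurrency in flight at once."""
    sem = asyncio.Semaphore(max_concurrency)

    async def guarded(name):
        async with sem:
            return await run_case(name)

    # gather preserves the input order of results
    return await asyncio.gather(*(guarded(n) for n in case_names))


# Hypothetical stand-in for an agent call; it also records peak concurrency.
in_flight = 0
peak = 0


async def fake_case(name):
    global in_flight, peak
    in_flight += 1
    peak = max(peak, in_flight)
    await asyncio.sleep(0.01)
    in_flight -= 1
    return f"{name}: done"


results = asyncio.run(evaluate_all([f"mp-{i}" for i in range(12)], fake_case))
```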

One run used 65 test cases and the initial one-line prompt. In Logfire, each case could be inspected down to the system prompt, the Wikipedia-derived input text, and the final structured output. One example shown was an MP for whom the agent returned an empty list. The eval view then showed case names, expected outputs, actual outputs, and scores. Accuracy appeared as 1 for correct cases, 0 for incorrect cases, and partial values where an output was mostly right but differed in detail. Colvin pointed to one case where the model got a father’s name right but differed slightly in the description, producing a score around 0.9.
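A deterministic evaluator with partial credit might look like the following. This is an assumed scoring rule, not the workshop's evaluator: relations are matched by name, each matched relation earns the fraction of fields that agree exactly, and missing or spurious relations earn nothing. The workshop's real evaluator evidently scored small wording differences more leniently (around 0.9).

```python
FIELDS = ("name", "relation", "role")


def score_case(expected: list[dict], actual: list[dict]) -> float:
    """Accuracy in [0, 1] for one MP, compared against the golden relations."""
    if not expected and not actual:
        return 1.0  # correctly returned no relations
    exp = {r["name"]: r for r in expected}
    act = {r["name"]: r for r in actual}
    names = exp.keys() | act.keys()
    total = 0.0
    for name in names:
        if name in exp and name in act:
            total += sum(exp[name][f] == act[name][f] for f in FIELDS) / len(FIELDS)
        # a missing or spurious relation contributes 0
    return total / len(names)


# Illustrative records; the role wording is invented.
golden = [{"name": "Neil Kinnock", "relation": "father", "role": "Leader of the Labour Party"}]
reworded = [{"name": "Neil Kinnock", "relation": "father", "role": "Labour leader"}]
spouse = [{"name": "John Mann", "relation": "husband", "role": "peer"}]

exact = score_case(golden, golden)
partial = score_case(golden, reworded)
spurious = score_case([], spouse)
```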

On the first test run, the initial prompt produced about 85% accuracy in one view. In a later comparison run, the initial prompt was shown at 87% and the expert prompt at 92%. After GEPA optimization, the best validation score reported in the terminal was 96.69%.

| Prompt variant | Score shown | Context |
|---|---|---|
| Initial prompt | About 85% | Single eval view on the 65-case test split |
| Initial prompt | 87% | Comparison view against the expert prompt |
| Expert prompt | 92% | Comparison view against the initial prompt |
| GEPA-optimized prompt | 96.69% | Best validation score reported after optimization |

The comparison view was more useful than the aggregate number because it exposed the kinds of failures each prompt produced. For Jo White, the initial prompt returned a spouse, John Mann, Baron Mann, even though spouses should be excluded. The expert prompt correctly ignored that relation. For Laura Kyrke-Smith, both prompts found Sir John Pelly, 1st Baronet, described as a four-times great grandfather and Governor of the Hudson’s Bay Company. The expected output was no match, and Colvin explained the failure as confusing a public figure with a politician.

Those case-level differences are the substance GEPA uses. It is not merely told that a prompt scored 0.87 or 0.92. The proposer agent receives examples of inputs, outputs, expected answers, and feedback, then generates a new candidate prompt designed to handle the observed failures.

GEPA improved the prompt, but the improvement came with familiar optimization risks

Samuel Colvin wrapped the same task and evaluator in a GEPA optimization adapter. He described GEPA itself as “the state of the art now in agent optimization,” while also noting rough engineering edges: it does not handle async as nicely as he would like, and it is not as type-safe as he would prefer. The adapter pattern is how GEPA learns how to call and evaluate a particular agent.

In the demo, a Pydantic AI proposer agent generated new prompts for another Pydantic AI agent. The proposer used GPT-4.1 for speed. Its instructions were direct: act as an expert prompt engineer; improve system prompts based on evaluation feedback; consider current instructions, examples of inputs and outputs, and feedback; return only the improved instructions text. Colvin noted the recursive absurdity: one can “go round in circles” optimizing the prompt for the agent that optimizes prompts. But the structure was simple enough: GEPA proposes a candidate prompt, the eval harness tests it, the score feeds back into the optimizer, and candidate prompts are selected or discarded based on performance.

He ran the optimizer with uv run -m main optimize --max-calls 400, saying that with 50 calls it tended to die before getting anywhere. The terminal showed GEPA selecting programs, proposing new text, and moving to full evaluation when a subsample score beat the old score. Logfire showed the optimization trace: the evaluation agent runs, the proposer-agent runs, the proposer’s input payload, and GEPA’s printed iteration output captured through Logfire instrumentation.

The optimizer reached a best validation score of 96.69%. The optimized instructions were much longer than the human-written expert prompt, with detailed rules about family relation, political roles, and edge cases.

That gain came with tradeoffs. A participant asked whether GEPA adjusts and edits a system prompt or tends to append more and more edge cases. Colvin said that when he used GPT-4o-mini earlier, one problem was that it produced an enormous system prompt, which slowed the agent. He did not know whether it performed better; it seemed roughly similar. The failure mode is real: optimizing by free-form prompt generation can create verbose prompts.

His proposed mitigation was to change the object being optimized. GEPA works over a dict of keys and values. In the demo, there was essentially one key-value pair: instructions. But a production implementation could split a prompt into many candidate sentences and constrain the optimizer to choose, say, the best 20 out of 200. By definition, that prevents uncontrolled verbosity. It also resembles a standard approach in DSPy: select which examples or prompt components to include.
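One way to picture that constraint: the optimizer's string value holds a list of sentence indices rather than free text, and the prompt is assembled from a fixed pool with a hard cap. The pool, the `selected` key, and `build_prompt` are all hypothetical. The cap of 3 stands in for "20 out of 200".

```python
import json

# Hypothetical pool of candidate rule sentences (a real pool might hold ~200).
SENTENCES = [
    "Include parents, grandparents, aunts, and uncles.",
    "Exclude spouses and partners.",
    "Exclude siblings and cousins.",
    "Exclude children and grandchildren.",
    "Only count people who held a political role.",
]
MAX_SELECTED = 3  # stand-in for "best 20 out of 200"


def build_prompt(candidate: dict[str, str]) -> str:
    """Turn the optimizer's string value into a bounded-length prompt."""
    indices = json.loads(candidate["selected"])
    # Drop duplicates and out-of-range indices, keep first-seen order, cap the count.
    valid = [
        i for i in dict.fromkeys(indices)
        if isinstance(i, int) and 0 <= i < len(SENTENCES)
    ]
    return " ".join(SENTENCES[i] for i in valid[:MAX_SELECTED])


prompt = build_prompt({"selected": "[4, 1, 1, 3, 0, 99]"})
```

However the optimizer mutates the index list, the prompt can never exceed `MAX_SELECTED` sentences.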

Several audience questions exposed the same boundary: GEPA is an optimizer, not a guarantee. In the demonstrated setup, it proposed a whole new prompt, not a diff. Colvin said that if multiple keys are exposed—model, system prompt, tool set, prompt lines—GEPA can combine the best values across those keys rather than rewriting one monolithic string.

The most important risk was overfitting. One participant reported that their optimization run reached high accuracy but produced a final prompt that explicitly excluded aunts and uncles, even though aunts and uncles appeared in the golden relations. Colvin suspected the optimizer had been exposed to a subset that did not include those cases and learned a simpler but wrong rule. The remedy is more data, split properly: enough coverage for one full set to train on and another full set to evaluate on.
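A small coverage check can catch this before an optimization run. The sketch below assumes each case records the relation types in its golden answer; the case data and the function names are invented for illustration.

```python
import random


def split_cases(cases: list[dict], val_fraction: float = 0.5, seed: int = 0):
    """Shuffle deterministically, then split into (train, validation)."""
    shuffled = list(cases)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * (1 - val_fraction))
    return shuffled[:cut], shuffled[cut:]


def uncovered_relation_types(subset: list[dict], all_cases: list[dict]) -> set[str]:
    """Relation types present in the full dataset but absent from the subset."""
    seen = {rel for case in subset for rel in case["relations"]}
    every = {rel for case in all_cases for rel in case["relations"]}
    return every - seen


# Hypothetical cases: each lists the relation types in its golden answer.
cases = [
    {"mp": "A", "relations": ["father"]},
    {"mp": "B", "relations": ["mother", "father"]},
    {"mp": "C", "relations": ["uncle"]},
    {"mp": "D", "relations": []},
]
train, val = split_cases(cases)
gaps = uncovered_relation_types(cases[:2], cases)  # a subset with no uncle case
```

A non-empty result for the training split is a warning that the optimizer may learn a rule, such as "exclude uncles", that the held-out data contradicts.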

He also made the model-dependence explicit. A participant asked whether an optimized prompt only works with the model it was optimized against. Colvin said the usual view is yes: if the model changes, the optimization should be rerun. That is one reason evals are hard, and one reason many teams do not run them. They write a decent prompt, ask a coding assistant whether it looks good, eyeball the answer, ship it, and then a new model comes out a month later that may supersede the optimization anyway.

Optimization is most valuable when the model is cheaper than the obvious model

Colvin presented prompt and agent optimization as an economic choice. It is most compelling when the goal is to make a smaller, faster, or cheaper model perform a task that a frontier model could do more expensively. If the state-of-the-art model has all relevant information and answers correctly most of the time, prompt optimization may matter less. But if the task involves private data, internal rules, or high-volume processing, the value changes.

He cited an example from Shopify using GEPA. In his telling, Shopify had been analyzing Shopify sites for issues such as fraud or tax categorization. The expensive baseline was giving the whole website to GPT-5 and asking what it was. The optimized approach used an agent, a Qwen model, and GEPA prompt optimization. Colvin said the annual cost fell from $5 million to a much lower figure that he stated unclearly in the talk, sounding like roughly tens of thousands of dollars, while performance improved over time. He acknowledged that the savings were not only from prompt optimization: the system also moved to an agentic design and changed models. The example mattered because optimization spanned prompt, model, and workflow.

He also gave a private-equity example: a firm with 200 million invoices across its portfolio may reasonably spend weeks optimizing a specific version of a small Qwen model rather than throwing GPT-4o at the entire job. If the difference is around $10 million, then assigning an expensive analyst for several weeks to build a reliable optimization harness can make sense.

This is where he located GEPA relative to fine-tuning. A participant asked whether GEPA-style optimization and fine-tuning are competing strategies. Colvin said they are. He also argued that the big model labs discourage fine-tuning partly because the next base model often makes the fine-tune obsolete. For many applications, improving the harness, waiting for the next model, or even showing users a nicer loading indicator may be a better investment than spending tens of thousands of dollars fine-tuning. But in finance or other high-volume domains where small improvements over huge numbers of runs matter, fine-tuning can still apply.

Private data was the recurring constraint. Colvin cited a statistic he had heard that 98% of data is private, while admitting he did not know whether that number was correct. Even if it were 50/50, his argument stands: when models have not trained on the data, and when the task depends on a large internal policy or domain-specific specification, choosing the right context and instructions becomes highly valuable. Public demo tasks struggle to show that because the model may already know the answer. In the MP example, Colvin noted that the model could sometimes infer who represented a borough without truly relying on the provided data, because the information was public.

The technique is also harder when the task is broad and open-ended. A participant asked how to approach optimization for less narrowly specified agents. Colvin’s suspicion was that the wider the task, the harder this GEPA-style optimization becomes. A coding agent might be evaluated on 5,000 tests, but that could still be “a drop in the ocean” relative to the space of things users ask coding agents to do. Over-optimization against the test suite becomes a serious risk. For broad tasks, the answer may be less about prompt search and more about using a better underlying agent.

The hard part of evals is deciding what counts as right

Golden datasets make evals straightforward, but most useful production agents do not arrive with a clean answer key. Colvin repeatedly returned to the fragility of evaluation itself: optimization is only as meaningful as the thing being optimized.

A participant asked what happens if there is no golden set. Colvin’s answer was blunt: things get difficult. In practice, teams often use a human-annotated subset as a starting point. In some tasks, a loop can provide deterministic feedback: if an agent writes code, run the code and see whether it fails. If it generates an action that can be checked downstream, use the downstream result. But “working out what your judge is” is the hard part.

Binary pass/fail tests are often insufficient. If code runs, that is useful, but it does not capture whether it used unavailable libraries, violated style constraints, took the wrong approach, or solved the wrong problem. If an agent advises someone to stop smoking, Colvin gave the absurd “ultimate eval”: wait 40 years and see when the person dies. Since that is impossible, the practical eval becomes proxies: did the answer include certain required content? Did it avoid clearly bad suggestions, such as telling the user to take up cigars? “Text does not include cigar” sounds dumb, he said, but many real evals end up looking like that.
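Proxy checks of that kind are trivial to write, which is part of their appeal. In this sketch the required terms are invented, and the word-boundary pattern is a deliberate choice so that "cigarettes" does not trip the cigar check.

```python
import re

REQUIRED_TERMS = ("nicotine replacement", "doctor")  # hypothetical required content
CIGAR = re.compile(r"\bcigars?\b", re.IGNORECASE)    # matches "cigar(s)", not "cigarette"


def proxy_checks(answer: str) -> dict[str, bool]:
    """Cheap deterministic assertions about an answer's text."""
    text = answer.lower()
    return {
        "has_required_content": any(term in text for term in REQUIRED_TERMS),
        "no_cigar": CIGAR.search(answer) is None,
    }


good = proxy_checks("Talk to your doctor and cut down on cigarettes gradually.")
bad = proxy_checks("You could switch to cigars instead.")
```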

Variance is another cost. A participant said they ran the same evaluation and got different results. Colvin said the best way to reduce variance is to run cases many times. He said he knows of hedge funds that spend about $20,000 a night running their full eval suites to see whether they improve over time. If a team owns GPUs, it may treat repeated runs as effectively free and run everything 100 times overnight. But variance remains one of the challenges.
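The reason repetition helps is the usual one: the standard error of the mean shrinks with the square root of the number of runs. A minimal sketch, with made-up accuracy figures for four runs of the same eval:

```python
import statistics


def summarize(scores: list[float]) -> tuple[float, float, float]:
    """Return (mean, sample standard deviation, standard error of the mean)."""
    mean = statistics.mean(scores)
    stdev = statistics.stdev(scores) if len(scores) > 1 else 0.0
    stderr = stdev / len(scores) ** 0.5
    return mean, stdev, stderr


# Hypothetical accuracies from repeating the same eval four times.
runs = [0.85, 0.87, 0.86, 0.88]
mean, stdev, stderr = summarize(runs)
```

With four runs the standard error is half the run-to-run standard deviation; 100 runs would cut it to a tenth, which is what the overnight repetition buys.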

The scoring function can be customized. In the adapter code, evaluation returned an EvaluationBatch with scores as a list of floats. Colvin said teams can include whatever floats they care about. That could mean accuracy, variance, cost, latency, or a composite objective. Trajectories, in this context, are the sequence of steps the agent took to reach a result, similar to traces.
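A composite objective can be as simple as a weighted sum folded into one float per case. The weights, budgets, and the `CaseResult` type below are assumptions for illustration; nothing here is GEPA's API.

```python
from dataclasses import dataclass


@dataclass
class CaseResult:
    accuracy: float   # 0..1 from the evaluator
    latency_s: float  # wall-clock seconds for the agent run
    cost_usd: float   # estimated spend for the run


def composite(
    r: CaseResult,
    latency_budget_s: float = 5.0,
    cost_budget_usd: float = 0.01,
    w_acc: float = 0.8,
    w_lat: float = 0.1,
    w_cost: float = 0.1,
) -> float:
    """Weighted score in [0, 1]; latency and cost earn credit only under budget."""
    lat = max(0.0, 1 - r.latency_s / latency_budget_s)
    cost = max(0.0, 1 - r.cost_usd / cost_budget_usd)
    return w_acc * r.accuracy + w_lat * lat + w_cost * cost


accurate_slow = composite(CaseResult(accuracy=1.0, latency_s=10.0, cost_usd=0.02))
fast_wrong = composite(CaseResult(accuracy=0.0, latency_s=0.5, cost_usd=0.001))
scores = [accurate_slow, fast_wrong]  # one float per case, as the optimizer expects
```

With these weights a correct but slow answer still far outscores a fast wrong one; changing the weights changes what "better" means, which is the design decision that matters.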

For sensitive domains, teams may not want to send inputs, outputs, or system prompts into an external observability system. Colvin said Logfire can be used without recording raw input and output; a team could record only performance labels, such as good or bad, along with aggregate metrics. His “somewhat biased” answer was that enterprise self-hosted Logfire inside a customer’s VPC is the natural solution. Short of that, categorical performance can be exported without generated content. He cited legal-tech companies such as Legora and Harvey as examples of environments where client data cannot be exfiltrated; in such cases, categorical labels (good, bad, or more granular grades) can be recorded without sending private content.

User feedback is another source of labels, but Colvin was skeptical of explicit ratings. His first piece of advice: no one clicks them. If teams use them, he recommends thumbs up/down only. Logfire has an annotation system, not demonstrated, that can record annotations against prompts and help build a golden dataset. But the best feedback is implicit in the user’s next action. If the user says “no, I mean X,” that is a signal. If they say “thanks, that’s great” or leave, that is a different signal. Colvin compared this to old Google ranking behavior: if a user clicked a result, immediately returned, and clicked another, the first page was probably bad.

Managed variables turn prompt and model changes into typed production configuration

Samuel Colvin demonstrated managed variables with a small FastAPI application: one endpoint returned HTML, another handled a form submission. The page was a simple “MP Search” interface where a user could ask questions about UK Members of Parliament. The app was instrumented with Logfire, and the agent had a tool that could grep through the MP page data.

A query such as “who is the MP for Hammersmith where I live” caused the agent to search the local data and answer Andy Slaughter. Logfire showed the HTTP POST request, the search_agent_run, the tool activity, and the final answer.

The important part was how the agent was configured. In code, Colvin defined an AgentConfig Pydantic model with fields including instructions, model, and max tokens. Then he created a Logfire variable named mp_search_agent_config, with that Pydantic type and a default value. The default instructions told the agent to answer questions about UK MPs, use the mp_search tool, and provide concise factual answers. The model default used a gateway Anthropic Claude Sonnet model; max tokens were set to 1024.
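The value of typing the configuration is that a bad remote edit can be rejected before it reaches the agent. The sketch below imitates that idea with a standard-library dataclass instead of a Pydantic model and does not call Logfire at all. The `resolve` helper, the validation rules, and the placeholder default model name are assumptions.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentConfig:
    """Stand-in for the demo's Pydantic model; same three fields."""
    instructions: str
    model: str
    max_tokens: int = 1024

    def __post_init__(self) -> None:
        if not isinstance(self.instructions, str) or not self.instructions.strip():
            raise ValueError("instructions must be a non-empty string")
        if not isinstance(self.model, str) or not self.model:
            raise ValueError("model must be a non-empty string")
        if not isinstance(self.max_tokens, int) or self.max_tokens <= 0:
            raise ValueError("max_tokens must be a positive integer")


DEFAULT = AgentConfig(
    instructions="Answer questions about UK MPs using the mp_search tool. Be concise and factual.",
    model="default-model-placeholder",  # the demo's exact model string is not given
)


def resolve(remote_json: Optional[str]) -> AgentConfig:
    """Use the remotely edited value if it validates, else the code default."""
    if remote_json is None:
        return DEFAULT
    try:
        return AgentConfig(**json.loads(remote_json))
    except (ValueError, TypeError):  # bad JSON, wrong shape, or failed validation
        return DEFAULT


french = resolve('{"instructions": "Reply in French.", "model": "gateway/openai:gpt-4.1", "max_tokens": 512}')
broken = resolve('{"instructions": "", "model": "x"}')
```

Falling back to the in-code default is one possible policy; a production system might instead keep the last known-good value.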

Because the configuration was a managed variable, it could be pushed from local code to Logfire and edited in the Logfire UI. Colvin noted a rough edge: at the time of the workshop, managed variables were not configured through the same logfire projects use command used earlier. Users needed a separate API key with read/write variable permissions. He said that would be solved soon.

Once pushed, the variable appeared in Logfire’s Managed Variables dashboard. It had fields, update history, and targeting controls. Targeting lets the team define what percentage of calls use which value. Colvin compared it to A/B testing. Under the hood, managed variables use the OpenFeature standard, so in theory anything that speaks OpenFeature can connect to Logfire.
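Percentage targeting is commonly implemented by hashing a stable key into a bucket, so the same caller always sees the same version. The sketch below shows that general technique. It is not Logfire's or OpenFeature's implementation, and `bucket`, `choose_version`, and the version labels are hypothetical.

```python
import hashlib


def bucket(key: str) -> int:
    """Map a stable key (for example a user or session id) to a bucket 0..99."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def choose_version(key: str, rollout: list[tuple[str, int]]) -> str:
    """Pick a version from (version, percent) pairs whose percents sum to 100."""
    b = bucket(key)
    upper = 0
    for version, percent in rollout:
        upper += percent
        if b < upper:
            return version
    raise ValueError("rollout percentages must sum to 100")


ab_test = [("v1", 90), ("v2", 10)]   # 10% of keys get the new prompt
full_rollout = [("v2", 100)]         # the setting used in the demo: everyone on latest

first = choose_version("user-1", ab_test)
again = choose_version("user-1", ab_test)
everyone = {choose_version(f"user-{i}", full_rollout) for i in range(50)}
```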

He then edited the instructions field to “reply in French,” saved a new version, and set targeting so 100% of calls used the latest value. Running the same MP query returned a French answer: “Selon les résultats de la recherche, Andy Slaughter (Labour) est le député (MP) pour Hammersmith.” He changed the instruction again to German and got a German answer. He also changed the model field from an Anthropic model to gateway/openai:gpt-4.1, reset the instruction to English, and asked “what model are you?” The model identified itself as ChatGPT rather than as an Anthropic model.

The point was not that production systems should ask models what model they are. It was that prompt, model, and other typed values can be changed in a running service without treating application deployment as the only control surface. In a local demo, redeploying is trivial. In a production environment with CI, approvals, staging, and deployment windows, the ability to change configuration quickly can matter.

Managed variables also generalize beyond plain prompts. Because the variable type is a Pydantic model, a team can expose multiple fields safely. In the demo, those fields were instructions, model, and max tokens; elsewhere in the discussion, Colvin described model choice, prompt content, tool registration, and compaction strategy as among the broader knobs an agent system might optimize.

The optimized-prompt web example failed, which is why it mattered

Colvin did not present managed variables as the same thing as optimization. The sharper claim was about connecting pieces that still mostly require manual wiring: evals that define success, traces that show failures, optimizers such as GEPA that propose changes, and managed variables that can apply those changes in a running service. He described the future target as “self-driving for managed variables”: given evals, the platform would hill-climb toward better prompts, models, or agent configuration without a person manually pasting values into a UI. He described that as a feature Pydantic is working on, not as the thing being fully demonstrated.

The workshop only approximated that. After optimizing the MP relation-extraction prompt with GEPA, Colvin showed a second managed variable, mp_relations_instructions, intended to control the relation-extraction agent’s instructions. The web agent had two tools: the MP search tool used for general questions, and an extract_political_relations tool that called the same extraction logic optimized earlier. Asking “who are the political relations of Stephen Kinnock” caused the search agent to call the extraction tool, which returned Neil Kinnock and Glenys Kinnock. Logfire showed the nested tool call and the inner relations_agent run.

He then tried to find a case where the dumb prompt failed and the optimized prompt might visibly improve the web app. In the eval comparison, Andrew Gwynne appeared as a case where the correct answer was no political relations, but prompts had returned a father who was a sports commentator—again confusing a public figure with a political figure. Colvin ran the web query and got the wrong sports-commentator relation. He then pasted the long GEPA-optimized prompt into the managed variable and reran the query.

The moment was not a clean proof of no-redeploy production updating. During this part of the demo, Colvin noticed a code issue, made a quick tweak so the web path used the managed instruction variable, and restarted the server. That mattered: the earlier French, German, and model-switching examples showed the managed-variable mechanism; this later example was a rough prototype of how an optimized prompt could be applied through the same mechanism.

The result still went wrong. The Andrew Gwynne query again identified the sports commentator relation.

Colvin did not hide that. He joked about the “demo gods,” but the failed improvement usefully bounded the claim. The managed-variable mechanism worked. GEPA had improved aggregate validation performance. But a single visible example did not necessarily flip, and the system was not yet an end-to-end autonomous optimizer.

That was consistent with his broader argument. GEPA is useful but not magic. Managed variables are powerful but do not make a bad eval set good. Prompt optimization can improve a narrow benchmark while still failing a case the presenter hoped would improve live. Production optimization requires the full loop: typed configuration, traces, evals, feedback, enough data, and careful objective design.

Agent optimization extends beyond prompts

Several questions pushed Colvin to broaden the frame beyond prompt strings.

A participant asked whether prompt optimization is a form of context engineering and whether it should be combined with strategies such as summarization, context restarts, and compaction. Colvin agreed that compaction strategy is one of the parameters worth optimizing in more complex cases. The demo optimized something everyone could understand in a workshop, not the full space of possible agent design. But in a real agent, optimization might include how context is compressed, when fresh model calls are started, and how much history is carried forward.

He returned to the Shopify example: giving an entire website to GPT-5 is one approach; using a Qwen model requires a more agentic workflow where the system queries for particular terms or evidence. That is context engineering as much as prompt engineering, and it can let a smaller model perform a task more deterministically.

He also listed other knobs that could be optimized: model choice, compaction strategy, tool registration, and whether to include “code mode.” The next step Pydantic wants to support, as he described it, is optimization across the full range of agent choices, not just system prompts. If the optimizer can choose from multiple models and workflow strategies, the exact prompt may matter less.

Asked whether one could simply evaluate different models instead of prompts, Colvin said yes. If there are ten models under consideration, a team might run the same eval across all ten and pick the best according to performance, price, latency, or some combined objective. In the demo, the model was held constant to isolate prompt behavior and keep the workshop fast.

Internally, he said Pydantic has an agent inside Logfire that converts free-text search into SQL. They have optimized it “a fair bit,” and token count is not a major concern because the volume is not high enough. The important objective is generating good SQL. They do not use it as a public demo because user queries can contain private data—someone might ask for “invoice 12345,” and that private detail would end up in the SQL or trace.

At the end, he briefly showed that Pydantic AI can expose an agent through a web chat interface with minimal code using an integration with the Vercel AI protocol. The specific relation-extraction agent was structured-output-oriented and not designed to be chatty, but the point was that a fuller chat UI could retain context and support follow-up questions. The workshop app itself was intentionally crude: Colvin described it as the simplest example he could get running.