Voice Will Be the Primary Interface for AI Agents and Robots

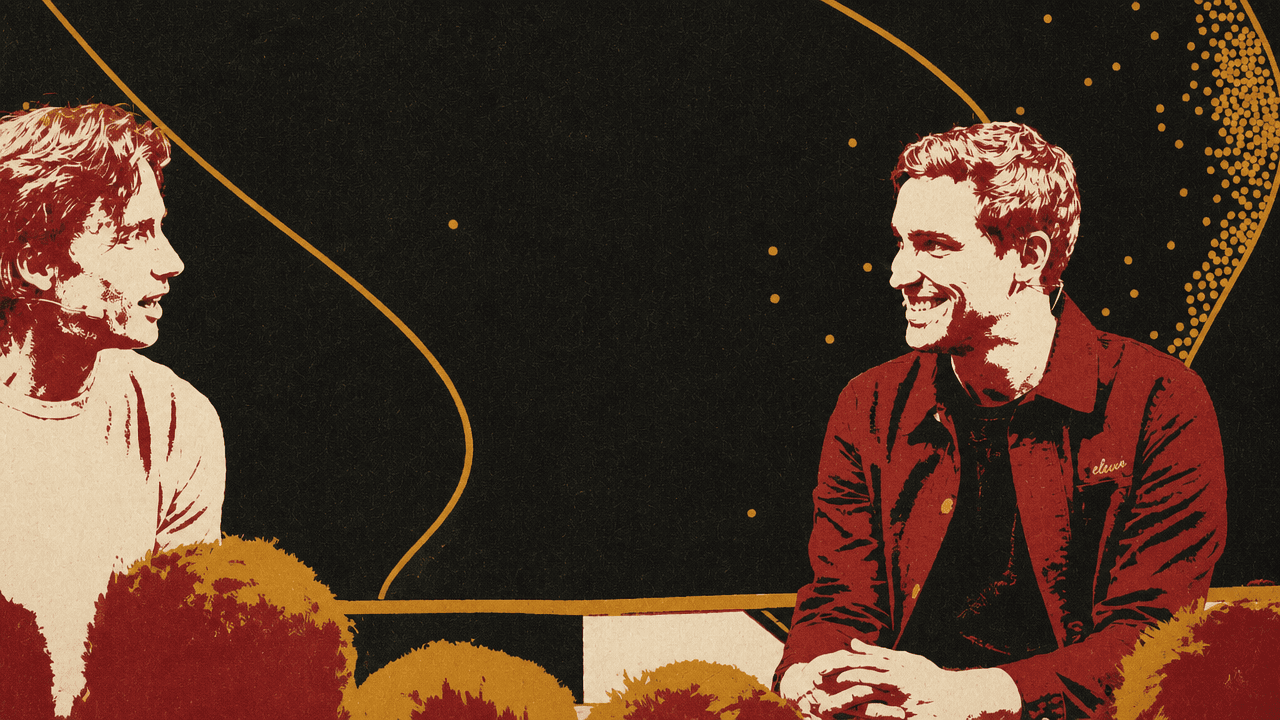

At Sequoia’s AI Ascent 2026, ElevenLabs co-founder and CEO Mati Staniszewski argued that audio was an overlooked frontier in 2022 because the AI field was focused on text and images, leaving room for a smaller company to build quickly and monetize early. His broader case is that as AI becomes more capable, the interface becomes the problem, and voice is the way people will use agents, robots, services, education, and healthcare. Staniszewski said the next hard problems are emotional intelligence, timing, authentication, and workflow, not merely making synthetic speech sound human.

Voice becomes the bottleneck when intelligence is abundant

Mati Staniszewski presented ElevenLabs’ origin as a product of both childhood experience and a bet on where AI would need an interface. He and co-founder Piotr grew up in Poland, where foreign films were commonly narrated in Polish by a single monotone voice, regardless of whether the character on screen was male or female. The experience made the failure concrete: the language changed, but the emotion, identity, and performance collapsed.

That led to a broader thesis. The missing capability was not merely speech synthesis. It was the ability for anyone to speak any language “with the same emotion, the same intonation.” From dubbing, the problem widened to books unavailable in audio form, news articles that could be listened to, language barriers, and eventually the way people will interact with robots, devices, and agents.

Staniszewski’s larger claim is that as AI systems become more capable, the constraint shifts from intelligence itself to communication with that intelligence. Voice, paired with visual input, becomes one way to make AI usable in the physical and service-heavy parts of life: instructing devices, interacting with robots, booking appointments, getting government help, learning, or moving through sales and support without forms and dropdowns.

He did not argue that voice makes human interaction less valuable. He argued the opposite may happen. As synthetic voice becomes common, events, artists, and other real human encounters may become more valuable. But for routine interaction with machines and services, he expects voice to become a primary interface.

That future changes the trust problem. Today, systems often try to detect AI-generated audio. In a world where people may use authenticated voice agents to book restaurants or give information to healthcare providers, Staniszewski expects the default to invert: systems will need to detect what is real, what is authenticated AI, and what is watermarked or opted in. Everything else may be assumed fake.
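The inverted default can be sketched as a small decision rule. This is only an illustration of the idea as described in the talk: the `AudioSignals` flags and the `classify` function are hypothetical names, and the sketch assumes that some upstream verification step (not shown, and not described in the talk) has already produced those flags.

```python
from dataclasses import dataclass
from enum import Enum


class Trust(Enum):
    VERIFIED_HUMAN = "verified human"
    AUTHENTICATED_AGENT = "authenticated AI agent"
    WATERMARKED_AI = "watermarked or opted-in AI"
    ASSUMED_FAKE = "assumed fake"


@dataclass(frozen=True)
class AudioSignals:
    # Hypothetical flags; a real system would derive them from
    # verification, authentication, and watermark checks.
    human_verified: bool = False
    agent_authenticated: bool = False
    watermark_present: bool = False


def classify(signals: AudioSignals) -> Trust:
    """Trust is granted only on positive evidence; the fallback is 'fake'."""
    if signals.human_verified:
        return Trust.VERIFIED_HUMAN
    if signals.agent_authenticated:
        return Trust.AUTHENTICATED_AGENT
    if signals.watermark_present:
        return Trust.WATERMARKED_AI
    # No positive signal at all: the inverted default applies.
    return Trust.ASSUMED_FAKE
```

The point of the sketch is the last line: audio with no positive signal is not treated as "probably real" but falls through to the untrusted category.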

Audio was overlooked enough to be a frontier wedge

Andrew Reed framed ElevenLabs as unusual among frontier-model companies because it did not begin by raising hundreds of millions or billions of dollars before finding a business. Mati Staniszewski answered with timing, domain choice, and an operating model built around early revenue.

In 2022, he said, much of the technology conversation was still about crypto and the metaverse. AI work was starting to concentrate on text and image models, but audio was still treated as a niche. There were relatively few researchers in the area. Audio models were smaller than some adjacent model categories, and the compute requirements were lower. The data needs were still large, but the hard work, as Staniszewski saw it, was transcribing and annotating audio well enough to make it useful.

That created an opening for a smaller team. ElevenLabs started in London, with many people between London and Warsaw, and operated remotely from the beginning. Instead of hiring only from obvious hubs, the company looked for researchers based on their work, including by searching GitHub and reaching out with audio samples.

The company also chose to monetize quickly. Staniszewski described early revenue as a way to fund model work, keep margins healthy, and preserve independence in development. ElevenLabs did later raise external capital as its ambitions grew, but the initial point was to build a revenue stream rather than assume indefinite financing would come first.

The lesson he offered founders was not that every foundation-model company can avoid large capital needs. It was narrower: some technical domains still look small because others have not recognized their future value. Audio gave ElevenLabs a wedge because it had a different cost structure, a thinner research labor market, and a set of customer problems that were not yet crowded.

The stack moved from speech generation to real-time interaction

ElevenLabs began with text-to-speech. Mati Staniszewski described the first model as one that could understand the context of written text and use that context to generate emotion and intonation. A happy sentence should sound happy. Dialogue should sound like dialogue.

The initial product was tied to breaking language barriers, but dubbing required more than generated speech. The first three model areas became text-to-speech, speech-to-text, and the combination of those systems for transcription, translation, and dubbing. As reasoning models became faster and more capable, ElevenLabs moved into real-time streaming audio and conversational experiences, adding the turn-taking and orchestration required for a voice engine behind a voice agent.

The company later expanded into music, which Staniszewski called one of the hardest audio modalities. As he described it, ElevenLabs now works across text-to-speech, speech-to-text, localization and dubbing, voice agents, orchestration, and music.

The early “wow” moments show what the company counted as progress. The first was internal: the model could replicate Staniszewski’s own accented voice from a good sample. The second was laughter. When the system could laugh, pause, include “ums” and “ahs,” and reproduce imperfections, the experience felt more human. Staniszewski said the company made it to the top of Hacker News with what he called the first AI model that could laugh.

Then came public examples of translated speech that preserved the speaker’s voice. He cited a Javier Milei speech that went viral in 2023 or 2024 after being translated into English while still sounding like Milei. He also mentioned examples involving Narendra Modi, President Zelenskyy, and Matthew McConaughey delivering his newsletter in Spanish and Portuguese, including for family members who speak those languages.

For Staniszewski, the next internal step change is emotional intelligence in interactive voice. A voice agent should not only speak with the right intonation; it should understand the emotional state of the person on the other side. If someone is stressed, it should respond with a soothing and reassuring tone. If someone is excited, it may match that energy. If someone speaks slowly, it should slow down.
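The three behaviors in that paragraph can be written down as a toy policy. This is a sketch under stated assumptions, not a description of how ElevenLabs models work: `CallerState`, `ReplyStyle`, `choose_style`, the emotion labels, and the 150 words-per-minute baseline are all hypothetical, and the hard part Staniszewski describes (reliably detecting the caller's state from audio) is assumed to happen upstream.

```python
from dataclasses import dataclass

DEFAULT_WPM = 150.0  # assumed baseline speaking rate for the agent


@dataclass(frozen=True)
class CallerState:
    # Hypothetical outputs of an upstream emotion and pace detector.
    emotion: str              # e.g. "stressed", "excited", "neutral"
    words_per_minute: float


@dataclass(frozen=True)
class ReplyStyle:
    tone: str
    words_per_minute: float


def choose_style(state: CallerState) -> ReplyStyle:
    """Map the caller's detected state to a delivery style for the reply."""
    if state.emotion == "stressed":
        tone = "soothing"      # reassure a stressed caller
    elif state.emotion == "excited":
        tone = "energetic"     # match the caller's energy
    else:
        tone = "neutral"
    # Slow down for slow speakers, but do not speed up past the baseline.
    pace = min(DEFAULT_WPM, state.words_per_minute)
    return ReplyStyle(tone=tone, words_per_minute=pace)
```

For example, `choose_style(CallerState("stressed", 100.0))` yields a soothing tone at the caller's slower pace, while an excited fast talker gets an energetic tone capped at the baseline rate.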

He also described a future capability he called “audio general intelligence,” where models can combine audio behaviors in one continuous stream. A model might narrate, pause, and then sing in the same continuous voice. That is extremely hard today, he said, but he expects it to become possible soon.

Voice agents are moving beyond support

Customer support is the obvious voice-agent market, and Mati Staniszewski acknowledged that it is the one most people know. But he said ElevenLabs is seeing a shift toward revenue-generating use cases, especially sales.

Deliveroo was his example of operational outreach. Voice agents can contact restaurants to capture opening times, which then helps update riders, drivers, and customers about when orders can be placed or fulfilled. Deutsche Telekom was his example of inbound sales: customers can ask about a service or product through a voice agent instead of using a dropdown or form.

ElevenLabs uses voice agents in its own inbound sales flow. Staniszewski said the company found two things. First, the process was simpler and faster than a form. Second, users volunteered more information. Instead of only submitting structured fields, people described their use cases, where the product worked, where it did not, and what else they were evaluating. That extra context could then support a better follow-up experience.

The more overlooked areas, in his view, are citizen support, education, and healthcare.

In citizen support, he argued that most people would benefit from better access to government services, whether that means help with taxes or understanding travel policy. He pointed to work with the Government of Ukraine, which he described as one of the most advanced governments in this area. Because of the war and limits on frontline access, Ukraine wanted another channel for citizens to call in and get information. Staniszewski said the resulting voice agent could provide information about the front line, deliver educational help and lectures to children, and support proactive safety engagement.

Education is his favorite example. He described the possibility of having an excellent teacher available at any time through headphones: someone like Karpathy for technical subjects or Richard Feynman for physics. He said ElevenLabs is already seeing pockets of this. MasterClass, known for static lectures from well-known teachers, recently launched interactive versions. Staniszewski mentioned Gordon Ramsay teaching cooking in the kitchen and Chris Voss helping users practice negotiation live on the phone.

The common thread is that voice is not just a different input field. The interaction changes when a user can explain context in ordinary speech, ask follow-ups, or receive coaching in the moment.

Negotiation depends on timing, interruption, and emotional intelligence

An audience question asked whether voice agents are being deployed to negotiate, whether agents are beginning to negotiate with other agents, and whether agent-to-agent communication would still sound like human speech.

Mati Staniszewski said he has seen early inklings, but not truly successful negotiation yet. Current examples are closer to order-taking: asking for a price, capturing information, and sending it back to a team. Some startups are working on organizational workflows, such as calling many venues, getting prices, and then calling again with a budget.

Real negotiation, in his view, will depend heavily on emotional intelligence. It is not only what the agent says, but how it delivers the message, when it pauses, and whether it can manage interruption. Today, many voice agents are designed so that a human can interrupt them. In negotiation, the reverse may also matter: the agent may need to interrupt the human. Staniszewski called that an extreme version of the interface problem.

On agent-to-agent communication, he described a hackathon case from roughly a year and a half earlier. One agent spoke with another agent, they detected that both sides were agents, and they switched to a different language that transmitted information more efficiently than ordinary spoken words. Staniszewski said he expects this kind of behavior to happen. The open question is whether the medium remains voice or becomes another transmission format, depending on the infrastructure built around it.

That answer sets a boundary around the voice thesis. Voice may be the human interface to agents, devices, and robots. But when agents realize they are talking to agents, human-like speech may be unnecessary.

ElevenLabs is organized to stay small inside

Andrew Reed cited more than $100 million of net new ARR in Q1 and asked for counterintuitive operating lessons. Mati Staniszewski gave a separate scale marker: ElevenLabs is just over 400 people and over $400 million in revenue, while keeping teams “extremely small.”

He described an approximate cap of fewer than 10 people per team, whether in research, product, go-to-market operations, talent, or elsewhere. Those who manage typically have around 10 direct reports, keeping the organization relatively flat. The company also has no titles, which he said helps it optimize for impact rather than tenure.

The more unusual practice is embedding engineers inside non-technical teams. Staniszewski said the people team, go-to-market team, and legal team all have engineers who build automation and help others upskill. That has become more important as non-technical employees increasingly use coding tools and “vibe coding” to create internal systems. The issue is no longer only whether employees can produce software-like outputs; it is whether those outputs are reviewed properly for security, infrastructure, and correctness.

He gave several examples. On recruiting, technical support inside the team can help with scraping and with analyzing what has worked in past hiring. In legal, engineers can help the team use tools and automate recurring decisions. Staniszewski described a scoring system for sales negotiations: depending on customer size, the sales team can spend a certain number of points on concessions such as indemnity provisions, liability caps, or other clauses. The goal is to avoid repeated ad hoc negotiations about whether the company has already given too much.
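The points idea is easy to picture as a budget check. The sketch below is illustrative only: the talk gave no actual point values, so the costs in `CONCESSION_COST`, the budgets in `BUDGET_BY_SIZE`, the size tiers, and the `Deal` class are all invented for the example.

```python
from dataclasses import dataclass, field

# Hypothetical point costs per concession (not from the talk).
CONCESSION_COST = {
    "indemnity_provision": 3,
    "liability_cap": 4,
    "custom_clause": 2,
}

# Hypothetical point budgets by customer size (not from the talk).
BUDGET_BY_SIZE = {"small": 3, "mid": 6, "enterprise": 10}


@dataclass
class Deal:
    customer_size: str
    granted: list = field(default_factory=list)

    @property
    def budget(self) -> int:
        return BUDGET_BY_SIZE[self.customer_size]

    @property
    def spent(self) -> int:
        return sum(CONCESSION_COST[name] for name in self.granted)

    def grant(self, concession: str) -> bool:
        """Grant a concession only if it fits in the remaining budget."""
        cost = CONCESSION_COST[concession]
        if self.spent + cost > self.budget:
            return False  # over budget: escalate instead of negotiating ad hoc
        self.granted.append(concession)
        return True
```

With these made-up numbers, a mid-size deal can take a liability cap (4 points) and a custom clause (2 points), but an indemnity provision on top of the liability cap would exceed the 6-point budget and be refused. The design choice is that the question "have we given too much?" is answered by arithmetic rather than by a fresh discussion on every deal.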

Staniszewski presented these choices with caution. ElevenLabs is still four years old, he said, so time will tell whether the model holds. But his operating claim is clear: small teams, flat structure, technical talent inside non-technical functions, and impact-based progression have helped the company move quickly while scaling.

Audio’s jagged edge is emotional and musical

Asked whether audio models have their own version of “jagged intelligence,” Mati Staniszewski said a lot still falls on the weak side. Voice agents work reliably in support settings and are beginning to work in early sales. But true emotional interaction is not yet working. The models do not read emotion well enough, and they are still slightly too slow.

Music has a different version of the same gap. Staniszewski said models can generate good production music, but not top-chart music, even with artist input. He expects that to change over the next year or two.

A follow-up question asked whether these gaps exist because labs train for economically valuable use cases, or because some problems are genuinely harder. Staniszewski answered that ElevenLabs tries to build models, products, and ecosystems that create the largest impact for customers and users, which should correlate with revenue over the long term. But the company often invests before short-term value appears.

The example he gave was data labeling. ElevenLabs spends time labeling not only the “what” of audio, but the “how”: what emotions were used, how a voice should be described, how music should be described. Staniszewski said the company has assembled a team of more than 1,000 people who were previously voice coaches, musicians, or artists to annotate data behind the scenes. He does not necessarily expect that work to pay off in six to 12 months, but believes it will matter over a 12-to-24-month horizon.

This is one of the places where his view of audio differs from a purely scale-driven account. The barrier is not only compute. It is preference data, emotional annotation, domain expertise, and the ability to describe and evaluate aspects of sound that are not captured by text alone.

The moat is model quality plus workflow and ecosystem

The final question asked what is defensible in frontier audio models as other labs move toward the category. Mati Staniszewski answered by distinguishing between model work and the broader product system.

He recalled meeting Jensen, who commented that ElevenLabs’ speech-to-text models are “technology” while text-to-speech is “artistry.” Staniszewski said the line won him “a client for life,” but he also used it to make a substantive point: emotional text-to-speech requires focus. To improve quality, a company must get in front of users, collect data and preferences, use that data to fine-tune models, and understand how the models perform in production.

Domain specificity matters. Healthcare is different from financial services, which is different from education or creative experiences. Staniszewski expects an advantage for companies that care deeply about quality and keep investing in model work.

But he also acknowledged that in many use cases, the model is only a small part of the stack. ElevenLabs therefore spends time on product workflow: understanding the user problem, combining audio models with knowledge, integrating with telephony systems, supporting interaction across channels, and building evaluation, testing, and monitoring.

The ecosystem layer is the final piece. Staniszewski said ElevenLabs wants to build a trusted platform where users can start from existing templates for agents, workflows in creative production, or voices. He said the company now has more than 20,000 contributed voices across languages, styles, and use cases. In his view, that diversity makes it easier for customers to begin and helps the platform serve varied workflows.

His defensibility claim is layered: focused model quality, specialized data, user preference loops, domain-specific deployment, workflow integrations, evaluation infrastructure, brand trust, distribution, and a library of voices and templates.