Personal AI Lets One Builder Do the Work of Teams

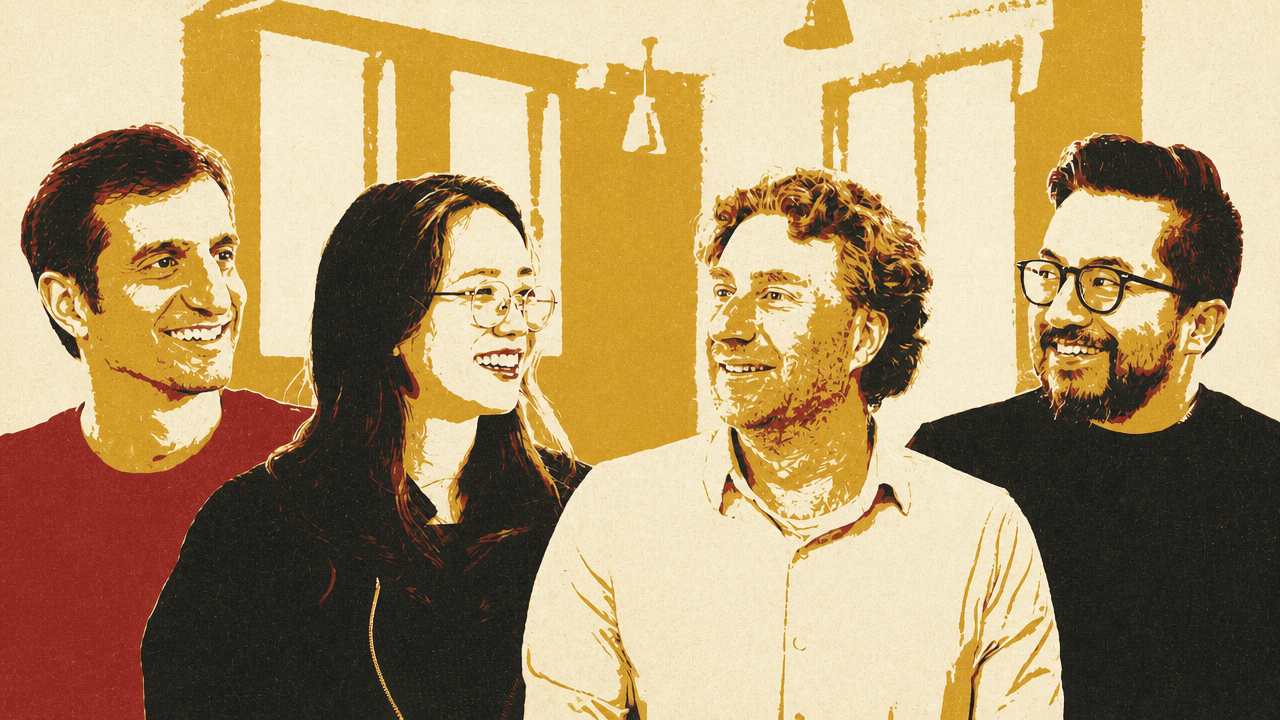

Y Combinator CEO Garry Tan argues that personal AI is reaching a stage comparable to the early personal computer: powerful enough to let one person build software that once required a team, but still brittle enough to demand technical ownership. Drawing on his work with Claude Code, OpenClaw and his GStack workflow, Tan makes the case for heavy token use, Markdown-encoded “skills” and multiple coding agents under one accountable human operator. The larger question, he says, is whether users will control their own AI tools, data and prompts, or work inside opaque systems controlled by others.

Personal AI is entering its kit-computer phase

Garry Tan frames the new AI tooling less as a productivity feature than as a control question: will individuals own their tools, data, prompts, and integrations, or will AI become another opaque hosted feed controlled by someone else?

That is the thread running through his return to building. After 13 years of mostly not coding, Tan says he is now doing “about 400x” the amount of work he did the last time he was spending significant time writing code. He later clarifies that this was not because he personally typed 400 times more code. He was “directing 15 agents at a time.”

The analogy he keeps returning to is the personal computer. Today’s personal AI, in his view, resembles the early kit-computer era: astonishing leverage for people willing to assemble, inspect, and repair their own setup. OpenClaw, he says, feels like a Ferrari — fast enough to do things a machine did not seem capable of doing, but brittle enough that the driver still needs to be a mechanic.

As Tan puts it: “Using OpenClaw these days is like driving a Ferrari and it's like exhilarating. It's insane. But then it's also like a Ferrari in that you better be a mechanic.”

The first project that pulled him back into writing software was Garry’s List, a political and civic site focused on California, San Francisco, and Los Angeles. The motivation was not a tool benchmark. Tan wanted to organize people around issues he cared about, including access to algebra in San Francisco public schools, which he tied to his own path through public school, engineering, and programming.

Garry’s List also gave him a comparison across three generations of building roughly the same product. Posterous, his 2008 YC startup, was “dead simple blogs by email,” became a top-200 website, and was bought by Twitter for about $20 million. He later rebuilt it as Posthaven with Brett Gibson after Twitter shut Posterous down. In his telling, Posterous took roughly $4 million, six or seven people, and about a year and a half. Posthaven took about $100,000, two people, and around three months. The 2025 version, built with Claude Code, cost about $200 for his Claude Code Max account and took “probably 5 days.”
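The scale of that drop is easier to see as ratios. The sketch below uses only the dollar and time figures quoted above; converting "a year and a half" and "three months" into 30-day months is an assumption made for the arithmetic, and the dictionary and function names are illustrative.

```python
# Figures as quoted in the article. Converting months to days at 30 days
# per month is an assumption made here to keep the arithmetic simple.
BUILDS = {
    "Posterous": {"cost_usd": 4_000_000, "days": 18 * 30},
    "Posthaven": {"cost_usd": 100_000, "days": 3 * 30},
    "Garry's List": {"cost_usd": 200, "days": 5},
}

LATEST = "Garry's List"


def ratio(earlier: str, later: str, field: str) -> float:
    """Return how many times larger `field` was for the earlier build."""
    return BUILDS[earlier][field] / BUILDS[later][field]


if __name__ == "__main__":
    for name in ("Posterous", "Posthaven"):
        cost = ratio(name, LATEST, "cost_usd")
        days = ratio(name, LATEST, "days")
        print(f"{name}: {cost:,.0f}x the cost, {days:,.0f}x the calendar time")
```

Under these assumptions, Posterous cost about 20,000 times as much and took about 108 times as long as the 2025 build, and Posthaven cost about 500 times as much and took about 18 times as long.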

The point is not only that a blog platform became cheaper. Tan says the new version includes “full RAG” and “agentic retrieval”: it can ingest internet material, read tweets, recursively crawl, research topics, collect arguments for and against an issue, and produce detailed sourced reports. The software is not merely a publishing surface. It does part of the reporting work.

That is where his phrase “tokenmaxxing” comes in. For the equivalent of “five or ten dollars of Opus calls,” he says, the system can do research that would otherwise require a person to go through dozens of articles, read books, annotate sources, and assemble quotable material. The ideal is not to make a single-source summary; it is to retrieve more context, cross-reference it, and preserve disagreement — “these 13 sources say this, and these seven sources disagree with that.”
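One way to picture "preserving disagreement" is to keep every source tagged with its stance instead of collapsing them into a single summary. This is a minimal sketch, not how Garry's List is built: the function names, the stance labels, and the sample sources are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def group_by_stance(findings: Iterable[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Group (source, stance) pairs so each stance keeps its own source list."""
    grouped: Dict[str, List[str]] = defaultdict(list)
    for source, stance in findings:
        grouped[stance].append(source)
    return dict(grouped)


def disagreement_summary(grouped: Dict[str, List[str]]) -> str:
    """Report how many sources take each stance, in alphabetical stance order."""
    parts = [
        f"{len(sources)} sources {stance}"
        for stance, sources in sorted(grouped.items())
    ]
    return "; ".join(parts)


# Hypothetical sample data, for illustration only.
SAMPLE = [
    ("article-a", "support"),
    ("article-b", "oppose"),
    ("report-c", "support"),
    ("book-d", "support"),
    ("thread-e", "oppose"),
]
```

Calling `disagreement_summary(group_by_stance(SAMPLE))` yields a line that names both camps, which is the shape of output Tan describes.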

“I think now it's going to permeate every part of society. Like everything that we would call knowledge work could be tokenmaxxed. And I don't think that it means that we're going to get rid of people. I think it means that people need to still supply the agency,” Tan says.

For Tan, the machine can be made to read more, test more, compare more, and search more. It cannot decide that San Francisco middle-school algebra matters. The human remains responsible for what should exist, what good looks like, and when the machine is wrong.

GStack turned repeated judgment into reusable skills

Diana Hu describes Tan’s work on Garry’s List as the place where patterns around tokenmaxxing and a new way of building crystallized into GStack. Tan says he did not begin by trying to create a framework. He simply got tired of typing the same instructions into Claude Code and started collecting them in Apple Notes.

Those repeated instructions became skills and reviews. The first pattern was plan review. Claude would sometimes get confused, write bugs, or leave work incomplete. Asking it to code directly was not enough. Asking it to diagram the system first improved the work.

The on-screen plan-review material shows how explicit the instructions became. One Markdown file gives the agent a “Priority Hierarchy,” tells it to flag repetition aggressively, prefer tests, aim for code that is “engineered enough,” give strong opinions, minimize diffs, and use ASCII diagrams for “data flow, state machines, dependency graphs, processing pipelines, and decision trees.”

Another section, “Step 0: Scope Challenge,” forces the agent to ask what subproblems already have partial solutions, whether the plan is too complex, and whether the user wants scope reduction, one-thing-at-a-time review, or a smaller compressed review. Tan’s practical explanation is that the model should load the relevant context into its own working process before doing the work. If it first maps inputs, outputs, user flows, errors, and data movement, it has, in his words, “boiled the ocean better.”

The plan-review workflow then breaks into architecture, code quality, and tests. The architecture review shown on screen covers system design, component boundaries, dependency concerns, missing pieces, security architecture, and realistic production failure scenarios. It also warns the agent not to hallucinate: “trust existing code bases over your own confidence.”

The “Pre-REVIEW SYSTEM AUDIT” makes the workflow closer to a repository inspection checklist than a loose prompt. It tells the agent to run git log --oneline -30, git diff main..HEAD, search for FIXME, TODO, HACK, and XXX, inspect recently touched files, then read CLAUDE.md, TODOS.md, and architecture docs. Before judging a plan, the agent has to map current system state, work already in flight, known pain points, and relevant TODO comments.
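The audit steps above can be sketched as a small script that gathers the same context in one pass. This is an illustration under stated assumptions, not GStack's code. The two git commands are the ones quoted in the skill, but using `git grep` for the marker search is an assumption, the function names are hypothetical, and the architecture docs are omitted because their paths are not given.

```python
import subprocess
from pathlib import Path
from typing import Callable, Dict, List

# The first two commands are quoted from the skill. The git grep call is an
# assumed way to search for the FIXME/TODO/HACK/XXX markers it mentions.
AUDIT_COMMANDS: List[List[str]] = [
    ["git", "log", "--oneline", "-30"],
    ["git", "diff", "main..HEAD"],
    ["git", "grep", "-n", "-E", "FIXME|TODO|HACK|XXX"],
]

# Context files named in the skill (architecture docs left out: paths unknown).
CONTEXT_FILES = ["CLAUDE.md", "TODOS.md"]


def run_command(cmd: List[str], cwd: str = ".") -> str:
    """Run one command and return stdout. git grep exits 1 on no matches,
    so a non-zero exit code is not treated as an error here."""
    proc = subprocess.run(cmd, cwd=cwd, capture_output=True, text=True, check=False)
    return proc.stdout


def pre_review_audit(
    repo: str = ".", run: Callable[[List[str], str], str] = run_command
) -> Dict[str, str]:
    """Collect command output and context files into one report dict."""
    report = {" ".join(cmd): run(cmd, repo) for cmd in AUDIT_COMMANDS}
    for name in CONTEXT_FILES:
        path = Path(repo) / name
        report[name] = path.read_text() if path.exists() else "(missing)"
    return report
```

Passing `run` as a parameter keeps the sketch testable without a real repository; in practice the report would be handed to the agent before it judges the plan.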

Testing became central because the first attempts failed in predictable ways. Tan says that when he wrote code himself, he did the minimum testing because tests were not fun. When he started “vibe coding,” he hit the common failure mode: “slop” that worked for the 80% case but fell over when users touched it. His initial response was to aim for 100% test coverage; he later concluded that 80% to 90% is usually the better practice.

He posted an early iteration of his Claude Code plan-review skill on X. The visible post says it “works super well to shake out all issues, shake out architecture and code smell issues, perf issues, and test cases,” and shows his instruction never to skip Step 0 or the test diagram. Tan says roughly 200,000 people saw it.

He then made a more expansive version, first called “mega plan” and later “CEO plan.” This used meta-prompting: take an existing review plan and imagine, for example, Brian Chesky sitting with the agent. Tan points to Chesky’s “10-star experience” exercise as a product-design frame. Instead of asking whether something is adequate, the prompt forces the model to imagine the more ambitious version.

The later “Nuclear Scope Challenge + Mode Selection” asks whether the plan solves the right problem, what happens if nothing is done, whether existing code can be reused, whether something is being rebuilt unnecessarily, and what the 12-month ideal state should be. In scope-expansion mode, it asks for a “10x check”: the version that is 10 times more ambitious and delivers 10 times more value for twice the effort.

Tan likes the CEO skill because, as he puts it, he is “an ADHD CEO” drawn to potential. The agent is not merely told to implement. It is told to challenge premises, expand ambition, reduce scope, and critique the plan before implementation.

The operating loop is many agents, one accountable human

Harj Taggar summarizes Tan’s setup as the workflow behind shipping “hundreds of thousands of lines of code a month.” Tan says he had dropped 13 pull requests in the preceding 48 hours and describes a loop in which ideas are queued, planned, reviewed, approved, implemented, tested, and manually checked when needed.

The workflow starts in plan mode. For a new idea, Tan runs the CEO skill to expand and sharpen the product concept, the engineering skill to make it rigorous and tested, and other GStack roles depending on the task: office hours, designer, developer experience, plan-eng, and Codex. Office hours simulates YC company-building questions: who is this for, how do you know they want it, what does it do, and what is the impact?

GStack relies heavily on AskUserQuestion because the operator still has to supply context and judgment. Tan is skeptical that software can be made well with no human involvement at all. His goal is not to leave the loop; it is to give the machine the work he does not want to do.

Manual QA became one bottleneck. Tan says he had about 15 features queued up, all passing end-to-end, integration, and unit tests, but still needing manual verification. For Garry’s List, that meant opening a Rails server, loading a user, putting the system into a particular configuration, and checking that the feature worked. Claude in Chrome MCP was too slow for him: two to three seconds per turn.

That frustration led to GStack’s browser tooling. Tan recalls typing a prompt along the lines of: “I’m so sick of using Claude in Chrome MCP it’s too slow, let’s go ahead and wrap Microsoft’s Playwright. Can we do that?” When he typed that again in a later version of GStack, the system recognized that the work already existed. GStack already had a Playwright wrapper at browse/: a long-lived HTTP daemon with roughly 70 commands, exposed as a CLI binary and used by /browse, /qa, and /scrape. It is faster than MCP because it talks HTTP to a persistent Chromium.
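The speed argument rests on paying the browser startup cost once and then sending small HTTP requests to a process that stays alive. The sketch below shows that shape using only the Python standard library. It is not GStack's implementation: `FakeBrowser` is a hypothetical stand-in for a persistent Chromium session, and the `goto` and `url` commands are invented for illustration.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class FakeBrowser:
    """Hypothetical stand-in for a long-lived browser session."""

    def __init__(self):
        self.url = "about:blank"  # state that survives between requests

    def run(self, command, arg=None):
        if command == "goto":
            self.url = arg
            return {"ok": True, "url": self.url}
        if command == "url":
            return {"ok": True, "url": self.url}
        return {"ok": False, "error": f"unknown command: {command}"}


def make_handler(browser):
    class Handler(BaseHTTPRequestHandler):
        def do_POST(self):
            length = int(self.headers.get("Content-Length", 0))
            body = json.loads(self.rfile.read(length) or b"{}")
            payload = json.dumps(browser.run(body.get("command"), body.get("arg"))).encode()
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(payload)))
            self.end_headers()
            self.wfile.write(payload)

        def log_message(self, *args):
            pass  # keep the sketch quiet

    return Handler


def start_daemon():
    """Create the browser once, then serve commands on a free local port."""
    browser = FakeBrowser()
    server = ThreadingHTTPServer(("127.0.0.1", 0), make_handler(browser))
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server, browser


def send(port, command, arg=None):
    """What a thin CLI client would do: one small HTTP request per command."""
    data = json.dumps({"command": command, "arg": arg}).encode()
    req = urllib.request.Request(
        f"http://127.0.0.1:{port}/", data=data,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=5) as resp:
        return json.loads(resp.read())
```

Because the session object outlives each request, a `goto` followed by a separate `url` call sees the same page, which is the property a persistent Chromium gives the real tool.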

QA, in Tan’s workflow, is browse plus branch context. The prompt asks what changed; if there is a UI change or a data mutation, it uses the browser to test it. When it worked, he says, it felt like having a black-box browser, as if “mini AGI” were already here, though he immediately distinguishes that from “true AGI,” which would mean he was no longer needed at all.

The setup also uses different model roles. Tan says Claude Code is “ideal for the ADHD CEO,” but Claude can sometimes “BS a bunch of stuff.” From YC alumni, he heard that some people preferred Codex for harder problems. He casts the difference as archetypes: Claude Code as the energetic CEO, Codex as a “200 IQ nearly non-verbal CTO.” In GStack, /codex can take a plan or implemented repo, run Codex from the command line with instructions to find problems and bugs, and return feedback to Claude Code. He later added the inverse: if Codex is the primary coding agent, /claude can bring Claude in as the CEO.

This is not a single omniscient tool. It is a working arrangement of roles, checks, handoffs, and decisions, with the human operator still choosing what to build and how to respond when the agents disagree or fail.

Markdown is where Tan encodes taste

Tan’s “thin harness, fat skills” idea came partly from being mocked for “peddling Markdown.” His answer is that Markdown is “actually code,” compiled differently. In his workflow, structured natural-language instructions are not documentation around the system. They are part of how the system behaves.

A harness, in Tan’s definition, is the core loop: it takes user input, gives it to the LLM, runs what the LLM does, and handles tool calls. YC had repeatedly built internal agents and called that loop the harness. After spending all day in Claude Code, Tan concluded that teams should not keep rebuilding harnesses when existing ones are already strong. The harder work is deciding what instructions, preferences, processes, and judgment should live in Markdown.
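The loop Tan describes is small enough to sketch in a few lines. The version below is an illustration, not Claude Code's or YC's harness: the message format, the `run_harness` signature, the scripted stand-in for a model, and the `word_count` tool are all assumptions. The Markdown skill enters as plain text ahead of the user's request, which is the "thin harness, fat skills" split in miniature.

```python
from typing import Any, Callable, Dict, List

Message = Dict[str, Any]


def run_harness(
    user_input: str,
    model: Callable[[List[Message]], Message],
    tools: Dict[str, Callable[..., Any]],
    skill_markdown: str = "",
    max_turns: int = 5,
) -> str:
    """Core loop: send messages to the model, run tool calls, repeat."""
    messages: List[Message] = []
    if skill_markdown:
        # The skill is just text the model reads before the request.
        messages.append({"role": "system", "content": skill_markdown})
    messages.append({"role": "user", "content": user_input})

    for _ in range(max_turns):
        reply = model(messages)
        if reply["type"] == "final":
            return reply["content"]
        # Otherwise the model asked for a tool: run it deterministically.
        tool = tools.get(reply["name"])
        if tool is None:
            result = f"error: unknown tool {reply['name']}"
        else:
            result = tool(**reply["args"])
        messages.append({"role": "tool", "name": reply["name"], "content": str(result)})
    raise RuntimeError("model did not finish within max_turns")


def scripted_model(messages: List[Message]) -> Message:
    """Hypothetical stand-in for an LLM: one tool call, then a final answer."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "final", "content": f"Result: {last['content']}"}
    return {"type": "tool_call", "name": "word_count", "args": {"text": last["content"]}}


# Hypothetical deterministic tool, standing in for a real action.
TOOLS = {"word_count": lambda text: len(text.split())}
```

Swapping `scripted_model` for a real model client would leave the loop unchanged, which is the point of the claim that the harness itself is rarely where the hard work lives.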

His analogy is a wedding planner. If an experienced planner wanted to teach the next person how to throw a wedding, the reusable judgment would be written down in plain English: checklists, preferences, sequencing, edge cases, warnings. That belongs in Markdown. But if the task is to call 20 venues, that should be a real action, perhaps a call to a service such as Twilio.

The engineering distinction is between latent-space work and deterministic code. Code executes; it does not understand who the user is, what the user wants, or what special cases mean. LLMs can use context about motivation, preference, and intent to handle generic cases. Agentic engineering, in Tan’s view, is deciding what belongs in “LLM land” and what belongs in “code land.”

The danger is misplacing work on either side. If engineers put in code what should be in Markdown, Tan says the result can be brittle because deterministic code cannot adapt to the cases the instruction was supposed to cover. If they skip tests, they are shipping “slop” that is “10x worse than human written code” because they do not know what will happen.

That is why his “boil the ocean” philosophy also applies to testing. The machine does not mind writing and running more tests. Tan argues that users should “zap the rocks more” and get to 80% or 90% test coverage, with unit tests and integration tests. The system may still not be perfect, but it can become reliable enough to change what one person can attempt.

Brittleness is tolerable when another agent can fix it

Tan compares the moment to the Homebrew Computer Club era and the Apple I: early personal computers were kit-like, fragile, and accessible mainly to technical people willing to spend time assembling and debugging them. In his current estimate, a relatively technical person may need a few hours and perhaps $500 to $1,000 in tokens and Claude usage to get a serious setup running. Once it works, it feels like a “kit car Ferrari.”

Harj Taggar adds that the need to fix the system yourself is hard to understand until someone has pushed through it. He sketches the progression: Stack Overflow once felt miraculous as a place to consult when stuck; ChatGPT made that interactive, but the user still copied code back and forth; Claude Code removed much of the copy-paste by executing and running code; OpenClaw can still “brick itself” or behave annoyingly, but another agent can be sitting there to repair it.

For Taggar, brittleness matters less when an agent can be assigned to repair the brittle tool. He does not present that as the long-term interface, but as a current working method.

Tan says his own time has shifted. He was once entirely “Claude Code pilled,” but now only about 50% to 60% of his product-building or agentic-engineering time is in Claude Code; much of the rest is in OpenClaw, especially while working on gbrain. Gbrain grew from a need he saw while experimenting with OpenClaw and a repository full of Markdown context. OpenClaw was using grep, which he found wasteful and imprecise for retrieving context.

Because he had already built Garry’s List, he had practical code for chunking, embeddings, hybrid RRF, RAG, and citations. He could point an agent at that code and ask it to extract the relevant machinery into a full RAG system for OpenClaw using Postgres and pgvector. That is part of why Tan thinks this may become a golden age of open source: a builder can carry patterns from one project into another by asking agents to inspect an existing codebase, understand the working implementation, and extract the useful system.
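Assuming "hybrid RRF" here means reciprocal rank fusion over a keyword ranking and a vector ranking, the merging step can be sketched without any database. This is not gbrain's code: the function name and sample document IDs are hypothetical, and `k=60` is the constant commonly used with this method, not a value taken from the source.

```python
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(rankings: Sequence[Sequence[str]], k: int = 60) -> List[str]:
    """Merge ranked lists: each document scores sum(1 / (k + rank)) over
    the lists it appears in, with rank starting at 1."""
    scores: Dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for stability.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from a keyword search and a vector search.
keyword_hits = ["a", "b", "c"]
vector_hits = ["b", "d", "a"]
fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
```

Documents that rank well in both lists rise to the top (here `b`, then `a`), while documents found by only one retriever still appear lower down. In a Postgres and pgvector setup, the two input rankings would come from a full-text query and a vector-similarity query respectively.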

Token spend is an input, not overhead

The speakers do not present today’s agents as uniformly reliable. Taggar says his OpenClaw agent often fails on semi-complicated programming tasks even using the same model as Claude Code, which makes it feel like the pre-Claude-Code era when tools were “not quite there.” Tan’s answer is that this pattern will recur: what feels not quite usable now may feel obvious a year later.

His larger claim is that “every single person on the planet will have their own personal AI.” The central question is ownership. In one future, people have their own AI, their own data, their own integrations, their own prompts, and visibility into what is happening. In the other, AI is corporate-controlled, more like a hosted feed whose algorithm, beneficiaries, and business model are opaque.

Tan connects this directly to the personal computer revolution. The personal computer gave individuals control over their own tools. Personal AI, he argues, raises the same choice again. If users do not write their own prompts, they live “below the API line” of some product manager or developer who does not understand their needs or what they uniquely care about.

Diana Hu names the practical barrier: the best version of this workflow depends on the latest models and heavy token use, which is expensive. People trying free models, cheaper tiers, or basic subscriptions may not see the same capabilities. Tan agrees on the present cost but answers “for now.” Hu frames the building experience as dependent on “burning lots of tokens,” the tokenmaxxing paradigm.

Taggar compares token spend to San Francisco rent for founders. YC often has to persuade founders that the rent is worth paying because the cost of not being in the right place can be higher. In the same way, he argues, token spend should not be treated like a desk or couch where economizing is harmless. For founders, model usage may be an input they should push hard on because it produces leverage.

Tan ties that back to YC’s maxim: “Live in the future and build what’s missing.” In this case, living in the future may mean being willing, in his example, to spend $500 in a day on tokens if the user is building something valuable and correct. Tokens become a way to buy machine work.

Jared Friedman raises the possibility that Tan’s constraints as CEO of Y Combinator helped produce the workflow. Because Tan’s time was scarce, he had to automate what a full-time engineer might have done manually. Tan answers by contrasting human scarcity with machine abundance. He says he envies “time billionaires,” including young people with vast uncommitted time. Tokenmaxxing, in his phrasing, lets him buy “millions of years of consciousness” — not his own time, but machine time working on causes and institutions he cares about.

Tan does not present this as a grand plan. It emerged from using the tools, talking about them, and realizing from others — including Boris Cherny, who had said his team did not write a single line of code — that he could work the same way. The access point, in his telling, is not mystical: the same prompt, the same MacBook Pro, and a willingness to draw on machine time.