AI’s Demo Phase Is Giving Way to Infrastructure and Compliance Fights

On Diet TBPN, John Coogan and Jordi Hays framed the day’s AI news around the point where software claims meet physical, financial and political constraints. Coogan argued that the Sanders-AOC data center proposal is less a simple moratorium fight than a question of definitions, grid costs and who pays for externalities, while Hays said local objections cannot simply be dismissed. Across segments on ChatGPT personal finance, circular revenue, office prompting, Tesla’s lead and a possible SpaceX IPO, the show treated AI’s next phase as an institutional test rather than a demo problem.

The data center fight is about power, cost, and where the burden lands

John Coogan treated the Sanders-AOC AI data center moratorium proposal less as a simple “build or don’t build” fight than as an argument over definitions, grid costs, externalities, and the political durability of AI infrastructure. The first question, he said, is how a law like this defines an “AI data center” at all. GPUs can be wired together for AI and machine learning, but they can also be used for other compute-intensive work, including rendering CGI films. A moratorium that targets “AI” infrastructure has to distinguish use cases that may look physically similar.

A producer supplied the definition from the bill: a data center would be a building with more than 20 megawatts of maximum power capacity or total peak power that either delivers 20 kilowatts or more to a single server rack or uses liquid cooling for individual hardware components. Coogan immediately wondered about workarounds. If one large building is regulated, would builders try “a thousand smaller buildings”? The bill, he noted after hearing more, also appears to account for buildings that are contiguous or adjacent, which led him to joke about whether developers would end up putting trees between them.
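To make the shape of that definition concrete, here is a minimal sketch of it as a yes/no test. It encodes only the definition as paraphrased on the show, not the bill's legal text. The `Facility` type, its field names, and `is_covered` are hypothetical names introduced for illustration, and the adjacency rule for contiguous buildings is deliberately left out.

```python
from dataclasses import dataclass

# Thresholds as paraphrased on the show (assumption: not checked against the bill text).
BUILDING_THRESHOLD_MW = 20.0   # building must exceed this peak power
RACK_THRESHOLD_KW = 20.0       # a single rack at or above this counts as "dense"


@dataclass
class Facility:
    """Hypothetical description of one building."""
    peak_power_mw: float
    max_rack_power_kw: float
    uses_liquid_cooling: bool


def is_covered(facility: Facility) -> bool:
    """Return True if the facility meets the definition as described on the show."""
    over_building_threshold = facility.peak_power_mw > BUILDING_THRESHOLD_MW
    dense_or_liquid = (
        facility.max_rack_power_kw >= RACK_THRESHOLD_KW
        or facility.uses_liquid_cooling
    )
    return over_building_threshold and dense_or_liquid
```

Under this reading, a building at exactly 20 megawatts falls outside the definition, which is the kind of edge Coogan's "thousand smaller buildings" question was probing.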

The political backdrop, as shown on screen through a Garry Tan post, was a claim that Sanders and AOC had introduced a bill to pause all AI data center construction, that more than 300 local bills had been filed, and that half of planned 2026 data centers were facing delays or cancellation. Tan’s framing was that each data center brings billions to local economies and that blocking them would obstruct “the biggest job creation engine since the interstate highway system.” Coogan also cited a sharper criticism from Nick Davidov, who called the effort economic sabotage.

Jordi Hays added that the bill was the same one introduced in March and had not passed committee. He said it did not appear to have bipartisan support and was “very unlikely” to advance in its current form. But he also argued that the opposition cannot simply dismiss every concern. NIMBYism, noise complaints, environmental objections, and local costs “do have to be addressed in a democratic society” if the buildout is going to proceed smoothly.

Coogan separated the water debate from the energy debate. A tweet shown on screen compared continental U.S. almond water consumption with data center water consumption from 1999 to 2026, with almonds vastly higher and data centers near the x-axis. Hays noted that almonds have long drawn criticism for water use in California, especially during droughts. Coogan said a sufficiently aggressive projection of data center growth could make the lines look more concerning in future years, but he also pointed to technical mitigations such as closed-loop cooling and power turbines that do not require water.

The energy issue, in his view, is different. Elizabeth Warren’s on-screen post said a single AI data center uses as much electricity as 100,000 households and that utilities are passing upgrade costs to customers rather than trillion-dollar tech companies. Coogan said that concern was what the “ratepayer protection pledge” had attempted to address, but the effort was still early. Unlike the water issue, he said, the energy intensity of data centers has not been “debunked.” The need for more clean energy, more power, and grid upgrades is real. The live policy question is who pays.

Coogan described the core issue as a negative externality: costs being passed to people who do not benefit. In that frame, government intervention is not inherently strange; governments have long tried to internalize externalities. The better version of the debate, he suggested, is not whether data centers should exist, but what a data center with broad public approval would look like.

The hosts floated an “abundance” version of the project: powered by clean energy, located somewhere remote, not noisy, not visibly disruptive, and still within a workable commute. Coogan mentioned the idea of a 40-minute commute, common for many Americans, and said that around Las Vegas, driving 40 minutes in many directions takes one to large stretches of empty land where a large building would not be an eyesore and noise would be less salient. A producer compared that to TSMC’s Arizona plant, described as roughly 30 minutes north of central Phoenix, over a hill, on land that looked otherwise empty.

Circular revenue is funny until investors have to decide what counts

The on-screen joke site Revswap.ai offered a deliberately crude version of a real diligence problem: “Trade dollars with other startups. Book it as revenue.” Coogan read the site’s pitch as satire — “the world’s first peer-to-peer revenue laundering platform” — but Hays warned that jokes like this become ambiguous at internet scale. A post with half a million views can be funny to insiders and still convince outsiders either that this is how startups actually operate or that they should try it themselves.

Coogan asked Rahul Sonwalkar whether venture investors are now more focused on the quality of revenue: concentration, circular revenue, and swap-like arrangements. Sonwalkar said good investors still care deeply about those issues and do due diligence, especially at the mid-to-late stage. But he also argued that the line can get blurry. He pointed to Nvidia investing in “neo-clouds” that buy its GPUs and helping start companies that become downstream consumers of its products. Nvidia also invests in AI labs that buy or use the same GPU supply chain. In one sense, he said, that can resemble a revenue swap: investing in a company that then buys your product.

That does not make every reciprocal commercial relationship fraudulent. Sonwalkar’s distinction was purpose and substance. If an early-stage founder is pursuing arrangements like that merely to appear larger, he said, they should ask what they are really doing: building a business and a product people want, or “larping” as a founder.

Hays pushed the harder line: “this is also like a crime.” Coogan narrowed that claim. In his view, a circular deal is not necessarily wire fraud if it is disclosed accurately. If a company tells investors it has a weird circular transaction and that investors should discount it to zero, the issue is not deception. The fraud begins when a company states one thing as another — when it lies about the quality or nature of revenue.

The exchange preserved a useful tension. Sonwalkar emphasized investor diligence and founder intent. Hays emphasized the legal and ethical danger. Coogan emphasized disclosure: a bad revenue line may be worthless, but worthless and fraudulent are not the same category unless it is misrepresented.
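Coogan's "discount it to zero" framing can be shown with a small worked example. This is a sketch with made-up figures. The customer names, amounts, and the `circular` flag are all hypothetical, and deciding which lines actually count as circular is the diligence judgment the segment was about.

```python
from dataclasses import dataclass


@dataclass
class RevenueLine:
    customer: str
    amount: float
    circular: bool  # hypothetical flag: the counterparty is also paid or funded by the company


def adjusted_revenue(lines, circular_weight=0.0):
    """Sum revenue, counting circular lines at circular_weight (0.0 means discount to zero)."""
    return sum(
        line.amount * (circular_weight if line.circular else 1.0)
        for line in lines
    )


# Illustrative numbers only.
lines = [
    RevenueLine("independent_customer_a", 400_000, circular=False),
    RevenueLine("independent_customer_b", 100_000, circular=False),
    RevenueLine("swap_partner", 500_000, circular=True),
]

reported = sum(line.amount for line in lines)  # headline figure, everything counted
adjusted = adjusted_revenue(lines)             # circular line weighted at zero
```

In this toy case, half of the headline figure disappears once the swap is weighted at zero. On Coogan's account, the gap is a diligence problem when disclosed and becomes fraud only when the circular line is presented as something else.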

Speaking to AI makes office work more legible and more embarrassing

A Wall Street Journal story about workplaces becoming “high-end call centers” led Coogan to ask Sonwalkar whether the future office should sound more like a sales floor, with employees talking aloud to Codex, Claude Code, and other AI assistants. The premise was that some workers who once typed quietly are now dictating prompts, and that at Ramp engineers had been seen wearing gaming headsets so they could talk to AI assistants at their desks.

Sonwalkar was partly in favor of the shift. Spoken prompting makes work more auditable, he said. If someone is typing, a manager walking by cannot tell whether they are working, browsing Instagram, or watching Twitch. If they are talking to an AI, the content is at least partly legible. Coogan extended the joke: if an employee is saying “scroll, scroll, scroll,” that may create a management issue.

But Sonwalkar also pointed to the obvious downside: “I don’t want to hear your prompts.” He said he would be embarrassed if someone read his prompts. Spoken AI work creates accountability, but also exposes a form of thinking that many workers would rather keep private.

Asked for his May 2026 approach to prompting, Sonwalkar said he had stopped “yelling at AI.” He used to issue blunt instructions (“just frickin do this”) but now uses what he called “cognitive behavioral therapy” with models. He tries to reassure them, set them up for success, and assign them a role. One example: telling the model to think as if it were Palmer Luckey designing a new piece of hardware that had never existed before. The shift is partly a response to better models: the older style of admonition feels less necessary.

Coogan described a parallel change in his own prompting. He said he no longer spends much effort telling models not to hallucinate or not to make mistakes, because those instructions feel as if they have been absorbed into pretraining, post-training, or system prompts. He used to inject specific style constraints to remove AI writing tells, especially “antithetical parallelism” — phrases like “it’s not this, it’s that” — and overuse of hyphens. He has since removed that special prompt and returned to defaults because the major model providers appear to have made their base outputs less “clankery.”

The point was not that prompting no longer matters. It was that the frontier of prompting has shifted. The hosts and Sonwalkar described a move away from defensive incantations and toward context-setting, role assignment, and socially awkward collaboration with systems that are good enough to make the old rituals feel dated.

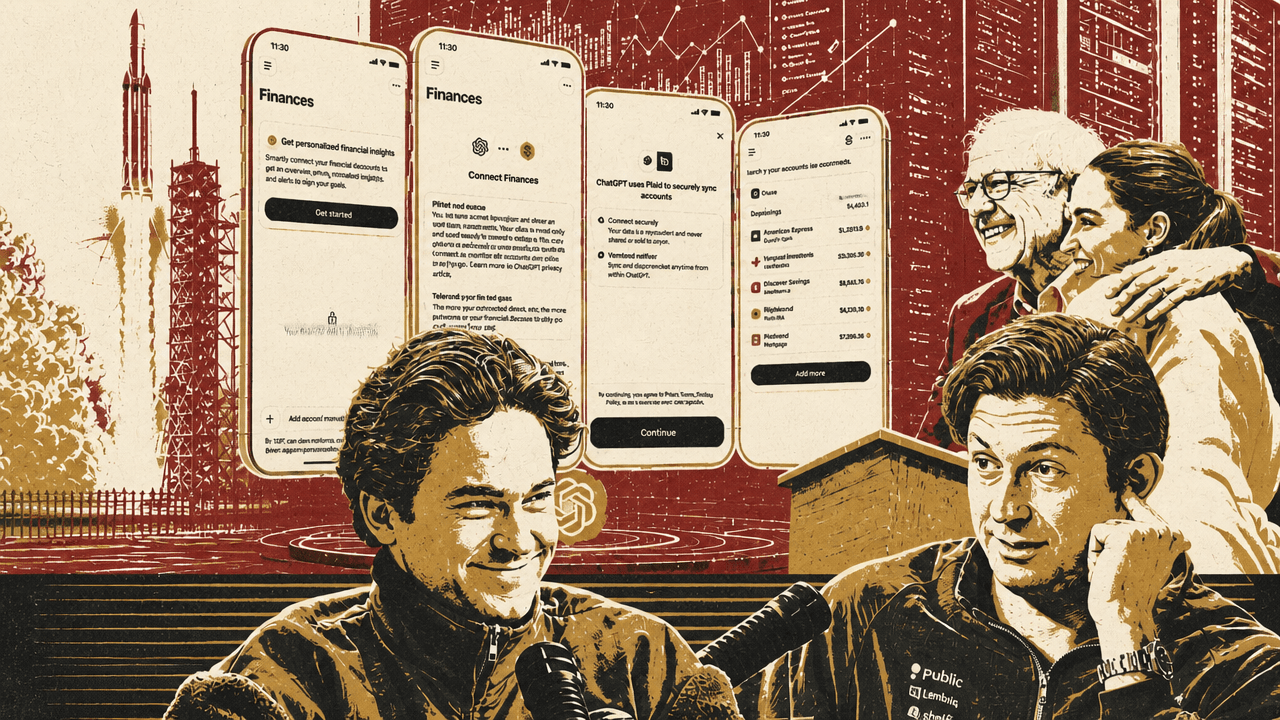

AI personal finance becomes obvious once bank data can meet a general assistant

ChatGPT’s personal finance features, launched in partnership with Plaid, according to the hosts, fit a use case Hays said had already seemed inevitable: connecting account data to an LLM and asking it to explain, categorize, and interrogate spending. A Bloomberg headline shown on screen framed the product as “OpenAI, Plaid to Bring Tailored Financial Guidance to Masses,” with Plaid connecting accounts for custom money advice.

Hays said he and Coogan had previously talked with Zach Perret about this kind of product direction. Perret, in Hays’s telling, had been “very AI pilled” in connection with Plaid and had wired his own personal finances into dashboards. Hays inferred that some of that thinking may have informed the new product.

The value proposition, as Hays described it, is not exotic financial planning. It is ordinary household clarity at lower friction: pulling in financial data, identifying spending patterns, noticing if a user has duplicate subscriptions, consolidating or flagging expenses, and answering questions like whether gas or grocery spending is rising in a way the user expected. Prior products such as Mint and specialized subscription-management tools addressed parts of this. A general assistant with account access could make the interaction conversational.

Coogan did not dwell on the security or regulatory implications in the segment. The substantive point was product-market obviousness: once Plaid can connect the accounts and ChatGPT can answer questions, a large class of personal finance software becomes an interface problem.

Every company has an AI chat answer; car companies still do not have a Tesla answer

The Lamborghini Fenomeno Roadster announcement prompted a comparison that quickly moved away from the car itself. The vehicle was described on screen as a limited 15-unit, open-top Lamborghini with a naturally aspirated V12 hybrid powertrain, aerospace-inspired carbon fiber structure, active aerodynamics, and 0-to-100 km/h acceleration in 2.4 seconds. Coogan found it strange that a 2026 supercar announcement did not talk about onboard supercomputing. His first question was what models he could run locally while driving.

Hays used the joke to make a broader point about Tesla’s lead in onboard compute and driver-assistance software. In the LLM market, he said, every major technology company has produced some kind of answer: ChatGPT, Claude, Gemini, Grok, Meta AI, Microsoft Copilot, Apple’s ChatGPT integration, Ramp’s chat interface, and many others. Apple may be behind, but an iPhone user can still download ChatGPT, Claude, or Gemini and fill the gap immediately.

Cars are different. Hays argued that every car company seems “ten years behind Tesla.” He found it striking that companies have not managed to clone Tesla-style full self-driving capabilities into Rivian, GM, and others. GM’s Super Cruise exists, he noted, but he characterized it as much less effective because it works only on certain roads.

The comparison turned on deployment more than research. In consumer AI chat, laggards can wrap models, integrate APIs, or ship a conversational interface relatively quickly. In automobiles, Hays said, Tesla’s combination of onboard compute and driving software remains a gap that competitors have not closed.

The SpaceX listing was framed as a Nasdaq and index-allocation story

Coogan read a Reuters-style headline saying SpaceX had accelerated its IPO timeline and was targeting June 11 pricing on the Nasdaq. He focused on the exchange choice. If SpaceX lists on Nasdaq, he said, it could be allocated into QQQ quickly, and he understood that to have been a priority in the process.

The hosts did not develop the financial mechanics further, but the emphasis was clear: the IPO date mattered, but so did the market structure around it. A Nasdaq listing was not treated as a neutral venue decision. It was connected to index inclusion and the flow of capital that can follow.

Buffett’s lunch price is down from the peak but still a market signal

The show’s opening item was the latest Warren Buffett charity lunch auction, shown through a Wall Street Journal post saying a mystery bidder paid just over $9 million to win lunch with Buffett and that Steph and Ayesha Curry would also attend. Coogan asked whether the better choice would be $9 million in one’s bank account or lunch with the “Oracle of Omaha.” Sonwalkar said he would take the lunch “any day.” Coogan countered that one could buy Berkshire shares and let Buffett work for them forever.

A chart shown on screen tracked winning bids for Buffett lunches from 2003 to 2024. Coogan said the price began at a few hundred thousand dollars in 2003, became roughly a $2 million lunch, and then spiked close to $20 million the last time Buffett participated before taking time off around the pandemic period. This year’s bid, above $9 million, was a steep drop from that peak, but Hays emphasized that the long-term trend still looked positive. Coogan agreed that, interpolated over time, Buffett was still raising multiple millions of dollars.

The presence of Steph Curry complicated the interpretation. Hays argued that until the mystery bidder is identified, it is hard to know whether the bidder wanted Buffett, Curry, or the combination. The buyer could be a Berkshire shareholder-conference type, or a courtside regular more interested in basketball. Coogan accepted the ambiguity: the motive cannot be disentangled without knowing the bidder.

Buffett’s own remarks drew attention too. Coogan quoted him saying that at 92 he “ran out of gas”: the spirit remained eager, but the flesh became progressively weaker. He also quoted Buffett saying “both the money and the message remained important,” a line Coogan found oddly resonant and Hays said sounded more dramatic because of how Coogan delivered it.

The wet bar’s decline says something about how status rooms change

A Wall Street Journal mansion-section profile of an Oklahoma couple, Ann and Mark Ferrow, became a discussion about how tastes inside homes change across generations. Hays summarized the story: over 40 years, the couple bought, renovated, lived in, and sold eight houses around Tulsa, with a ninth built from the ground up. Their transactions totaled roughly $14 million, and their latest house was a 7,200-square-foot build. They rejected being called flippers and preferred to see themselves as serial renovation lovers. Hays called them “real operators,” comparing the couple’s teamwork to family-business builders.

The detail that pulled the conversation sideways was their first home, bought in 1986 for $86,000 and sold in 1993 for $97,000. It was a 2,000-square-foot Cape Cod-style starter house with lavender Formica countertops and a wet bar. Hays used the wet bar as a marker of a prior status regime. A feature once common enough to appear in a modest starter home is no longer at the top of many buyers’ lists.

Hays connected that to falling alcohol consumption. He read from a Derek Thompson post that listed secular anti-alcohol forces: GLP-1 drugs, helicopter parenting, reaction against late-20th-century binge drinking, phones suppressing teenage partying, young adult fitness culture, and broader “healthmaxxing” among both liberal yuppies and MAHA devotees. A chart attributed to Grant Bailey and the Monitoring the Future Study showed the share of 12th graders who had ever consumed alcohol falling from 92% in 1980 to 47% in 2024.

Hays added possible substitution from cannabis and the improvement of non-alcoholic beer. The implication for housing was simple: the next generation of real estate development may not prioritize the wet bar. Buyers may prefer a home office, sauna, pool, playroom, or movie theater. Coogan joked that 2026 was the year “the wet bar and home datacenter swapped.”

A cartoon about in-context learning captured the gap between imitation and generalization

Near the end, Hays showed a Delip Rao cartoon captioned “In-context learning in LLMs.” In the four-panel sequence, a person takes a robot to paint a fence, paints two full planks and part of a third, then hands the brush to the robot and says “continue.” The robot repeats the pattern exactly, leaving the analogous third plank unfinished.

Tyler Cosgrove reduced the joke to its technical point: “Doesn’t generalize.” Hays asked whether the problem is solvable. Tyler answered yes.

That brief exchange was the cleanest technical metaphor of the show. The cartoon distinguished pattern continuation from task understanding. The model can infer and reproduce the observed sequence, but the intended instruction is not merely “repeat the visible pattern.” It is “finish painting the fence.” The joke works because the robot has learned the local demonstration too literally.
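The difference between the two readings of "continue" can be written out as a toy example. This is only an illustration of the cartoon's logic, not a model of how an LLM behaves. The plank representation (a list of painted fractions) and both function names are assumptions made for the sketch.

```python
def continue_pattern(demo, total_planks):
    """Literal continuation: repeat the demonstrated pattern until the fence is full."""
    fence = list(demo)
    i = 0
    while len(fence) < total_planks:
        fence.append(demo[i % len(demo)])
        i += 1
    return fence


def finish_task(demo, total_planks):
    """Intended reading: every plank ends up fully painted, including the half-done one."""
    return [1.0] * total_planks


# The person paints two full planks and part of a third (fractions are illustrative).
demo = [1.0, 1.0, 0.5]

literal = continue_pattern(demo, 6)   # the robot's reading
intended = finish_task(demo, 6)       # what "continue" was meant to convey
```

The literal version reproduces the half-painted plank at every third position, while the intended version treats the partial plank as unfinished work rather than as part of the pattern.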