AI Is Pushing Science Beyond the Paper as Its Core Artifact

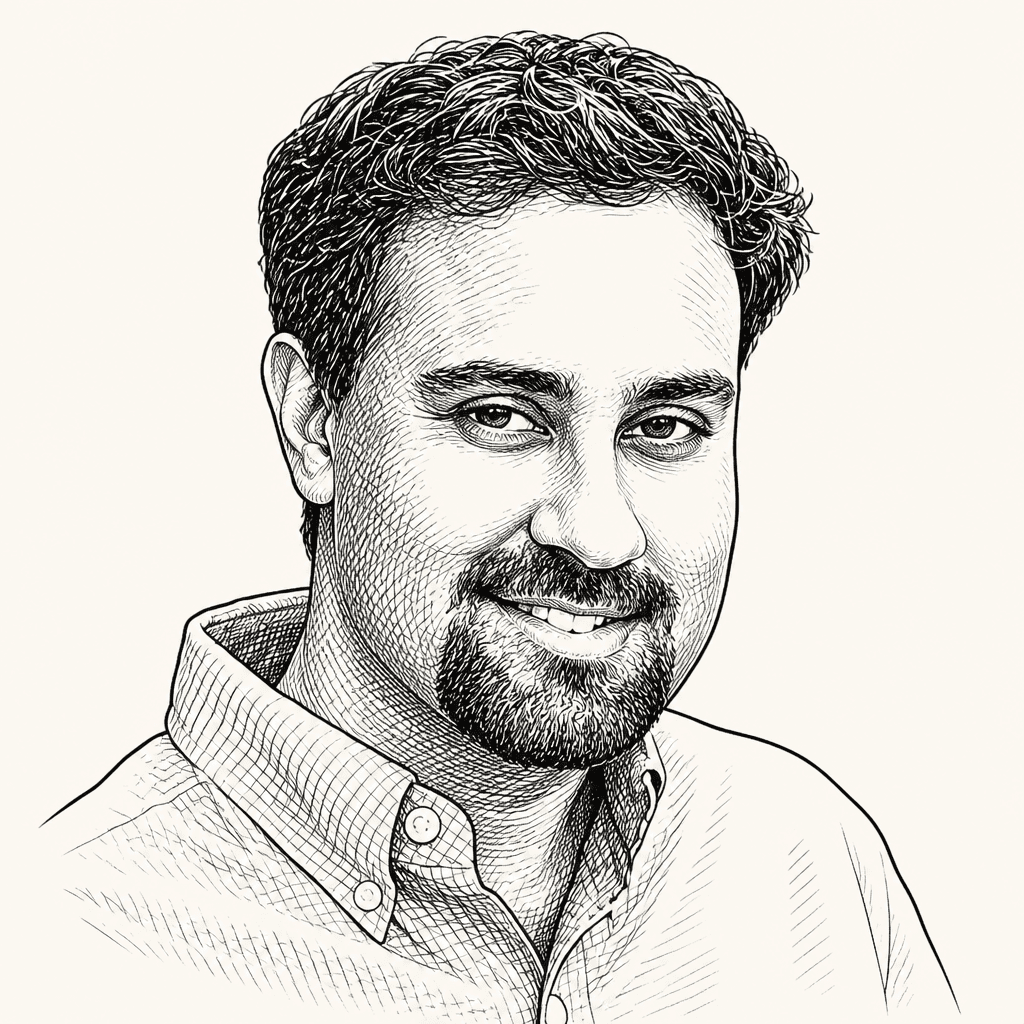

In closing remarks at an AI and science meeting, Risa Wechsler argued that AI is reshaping scientific fields unevenly, depending on their data, theory, and modes of inquiry, and that scientists should use the moment to choose structures aligned with human values. Surya Ganguli pushed the question toward scientific communication itself, suggesting that papers may be too narrow an artifact for AI-assisted science and that richer institutional records of research could better transfer knowledge. Both framed AI for science as a design problem around human purposes, not just faster automation.

AI is changing science unevenly, and that unevenness is the point

Risa Wechsler framed the day’s most useful lesson as comparative rather than universal: different scientific fields are not adopting AI in the same way, at the same speed, or for the same reasons. The differences matter because fields vary in how data-rich they are, how heavily they rely on theory, and what kinds of scientific work AI can plausibly accelerate.

For Wechsler, the value of bringing those fields into the same room was not to force a single account of “AI for science,” but to see how similarities and differences expose what each field can learn from the others. Some domains are already positioned to use AI because they have abundant data; others may be shaped by less data, stronger dependence on theory, or different modes of inquiry. The result is not a single transformation but a set of transformations occurring, in her words, “at different paces and in different ways.”

“I think it’s really interesting to see how AI is transforming those different fields at different paces and in different ways.”

She also treated the current moment as a choice point. Because AI is changing scientific practice quickly, Wechsler said scientists should think deeply about “the future we want”: what human values should guide the work, what scientists care about in science, and what structures would best support those commitments.

That responsibility was not merely defensive. In Wechsler’s account, rapid change creates an opportunity to be deliberate. If AI is going to reshape how discovery happens, scientists should not only ask what the tools can do. They should ask what kind of scientific future those tools are helping to build.

The scientific paper may no longer be the right artifact for acceleration

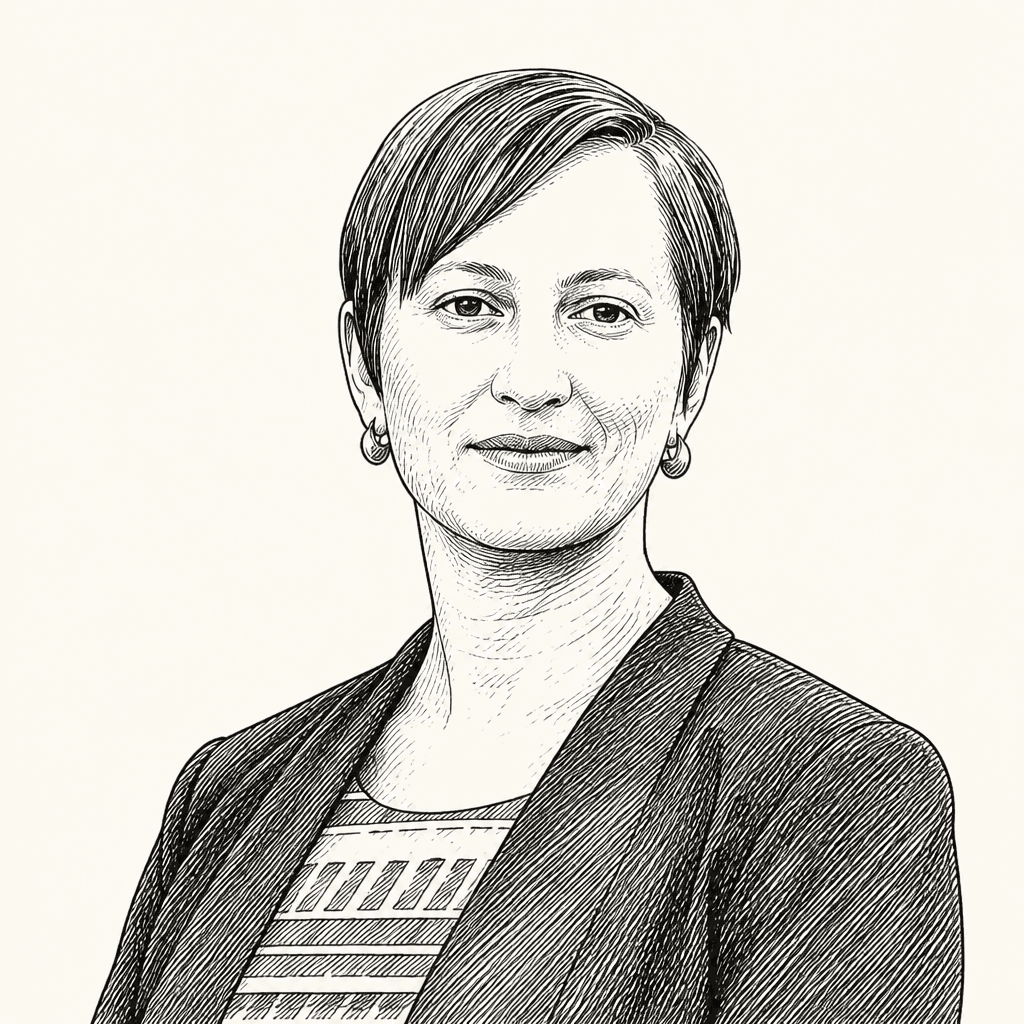

Surya Ganguli picked up the interdisciplinary thread but pushed it toward a more radical question: if the goal is to accelerate scientific impact, what should the main artifact of science be?

His answer began by challenging the paper’s status as the default unit of scientific communication. A paper, he said, is “a tied up in a bow story” that leaves out the “pain and suffering and the learnings” that happened along the way. It communicates the final narrative, not the full process.

“What should an artifact of science be to really accelerate our impact? And it may not be anymore a paper written for humans.”

Ganguli’s proposed alternative was a much richer institutional record of science. He imagined an entire institution in which scientists and AI agents document every step of the scientific process. That accumulated record could then be loaded into the context window or skill files of an AI system and transferred to another institution. In that scenario, what moves between institutions is not only a finished conclusion, but something closer to what he called a “codec” for how to solve a large institutional problem.

The claim is not simply that papers are inefficient. It is that the scientific process contains work and learning that papers routinely compress away. Ganguli’s example suggests that if AI systems can absorb and reuse a fuller record, then the relevant object of communication may become the documented process itself: the steps taken, the lessons learned, and the institutional context that helped produce a result.

His image of institutions exchanging accumulated scientific memory points to a different model of collaboration. The paper is an artifact written for human readers. The institutional record he described would also be usable by AI systems, which could take in the broader process behind a discovery rather than only the polished account that appears at the end.

AI for science still has to be designed around human purposes

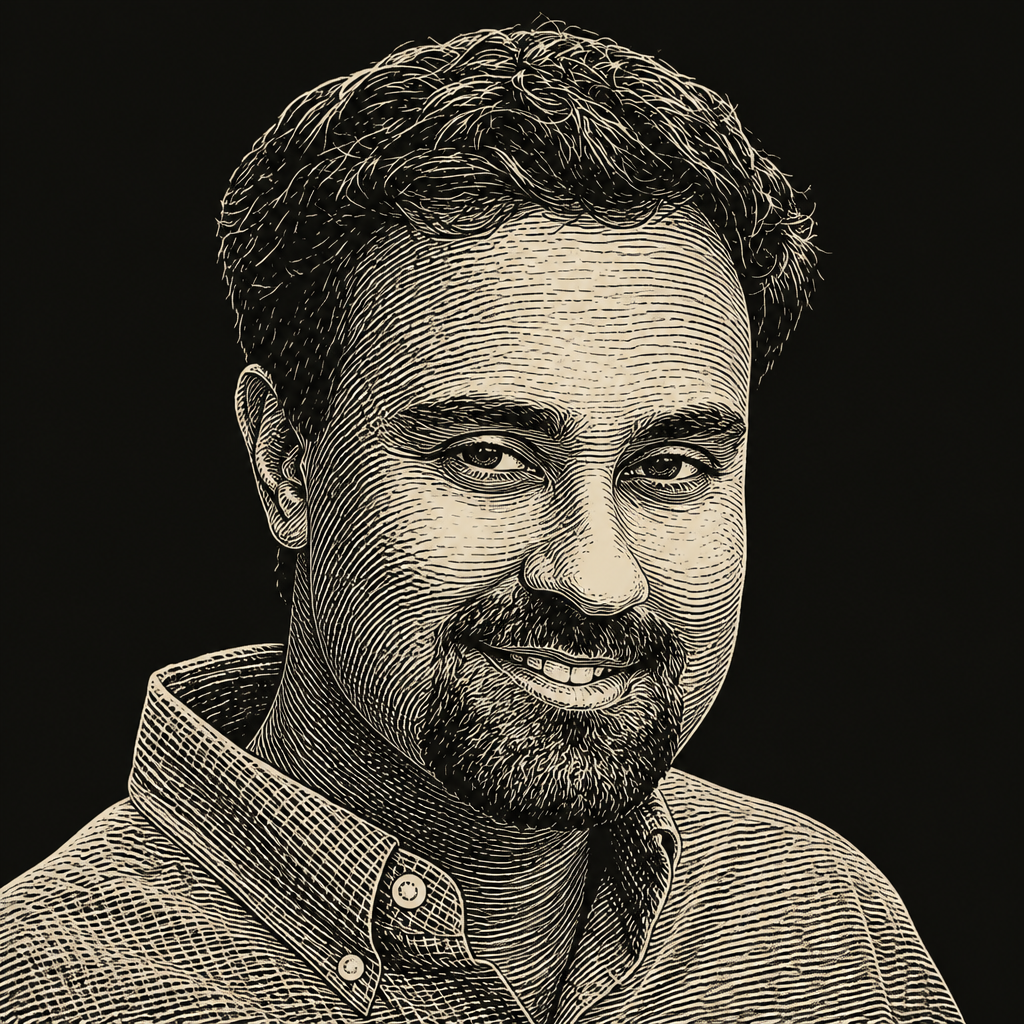

Risa Wechsler and Surya Ganguli converged on the idea that AI for science should be intentionally shaped around human purposes, not treated as a purely technical acceleration problem. Wechsler emphasized human values, scientific priorities, and the structures needed to support them. Ganguli described the joy of doing science as a force that could “sculpt” the development of AI for science toward human understanding.

The design question looks different across fields. Wechsler’s point about uneven transformation implies that AI infrastructure cannot be treated as one generic layer placed over science. Fields differ in data richness, theory dependence, and scientific practice. Ganguli made one possible design problem concrete: if the paper is too narrow an artifact for AI-assisted science, institutions may need ways to capture more of the research process and preserve enough context for that record to be useful beyond the group that produced it.

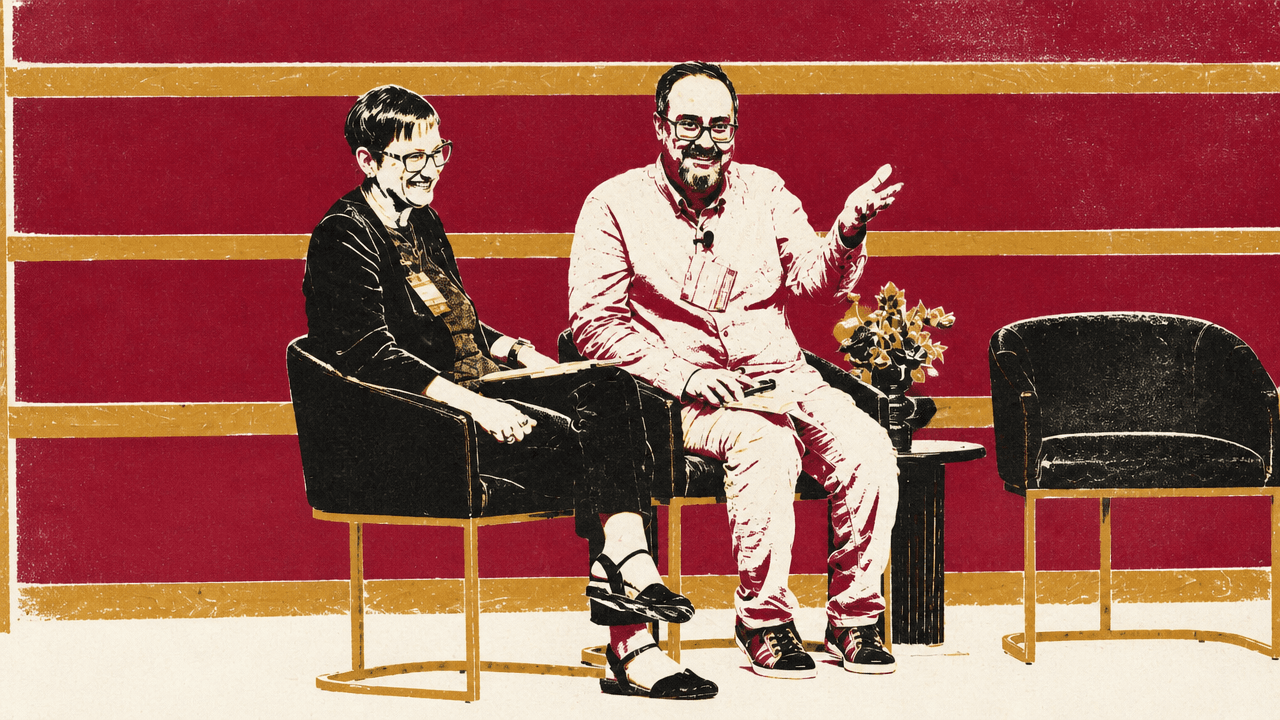

Ganguli’s closing example made the human-purpose point through culture rather than methodology. He noted that an ocean model discussed earlier by Laure was named Samudra, Sanskrit for ocean. He also mentioned a foundation model for the sun called Surya, which is his own name. Sanskrit, he said, is closely related to his native Bengali and was dominant thousands of years ago in the Indian subcontinent, where many people were farmers. For him, the fact that an ancient language now names frontier AI models in an age of enormous technological change showed a continuity of culture amid transformation.

The example did not function as a technical argument about model design. It was a reminder that scientific and technological systems carry human memory, homage, and identity. Even as scientific practice changes through AI, Ganguli suggested, the work remains tied to human origins and human meaning.

In his phrasing, “we’re doing science for humanity.” AI may help science move faster, but Wechsler’s emphasis on field differences and Ganguli’s challenge to the scientific paper both point to a more specific question: what should be preserved, shared, automated, or made legible if the goal is not just more output, but better science for human understanding?