Compute Allocation Is Anthropic’s Core Constraint as Claude Revenue Surges

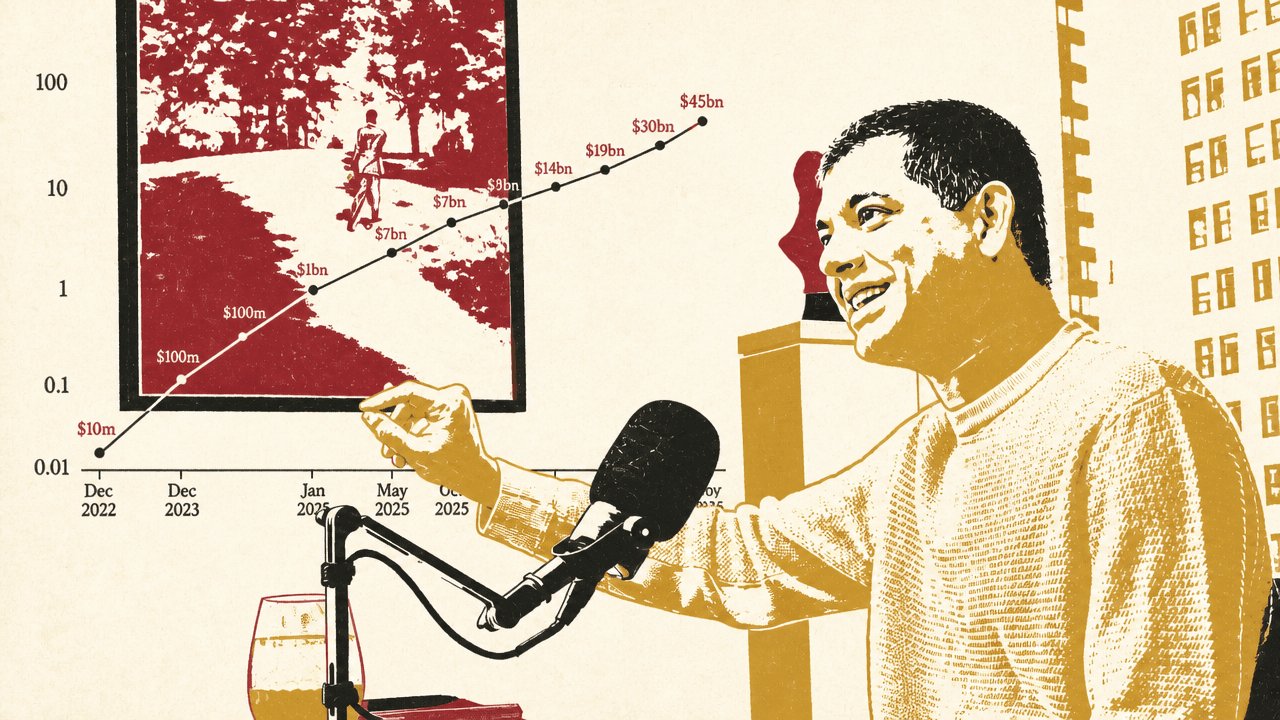

Anthropic CFO Krishna Rao argues that the company’s rise is best understood through compute: a scarce capital asset that must be bought years ahead and constantly reallocated across model training, customer demand, internal automation and future products. In an interview with Patrick O’Shaughnessy, Rao says ordinary forecasting and software-margin frameworks break down when model capability, adoption and revenue compound together, leaving Anthropic to manage growth through scenarios rather than point estimates.

Compute is Anthropic’s capital allocation problem

Krishna Rao describes compute as “the lifeblood” of Anthropic’s business: the canvas on which model development, internal acceleration, and customer service are all built. The central operating problem is not simply acquiring enough chips. It is deciding, years in advance, how much compute to buy in a business whose demand and capabilities are compounding faster than ordinary planning cycles can handle.

That makes compute both procurement and product strategy. The same scarce resource determines whether Anthropic can train the next model, serve today’s customers, accelerate its own employees, and ship the products that make the next model useful. Rao’s finance problem is therefore not a narrow infrastructure budget. It is a recurring capital-allocation decision across present revenue, future capability, and internal productivity.

The risk cuts both ways. Buy too much compute, Rao says, and “you gut a business.” Buy too little, and Anthropic cannot serve customers or remain at the frontier. Compute cannot be ordered on a weekly basis at the scale Anthropic needs; Rao’s example is a gigawatt of compute, which requires long-range planning rather than a last-minute purchase order. That forces Anthropic to work backward from scenarios rather than fixed forecasts.

Rao says he still spends 30% to 40% of his time on compute. That includes procurement, but also allocation: deciding how much goes to model development, internal use, and customer demand. Anthropic has a floor below which it will not cut model-development compute, even if that creates pressure elsewhere, because Rao sees frontier model capability as the engine of the company’s future enterprise revenue.

The company’s flexibility, according to Rao, comes from using three chip platforms: Amazon Trainium, Google TPUs, and Nvidia GPUs. Anthropic uses them interchangeably across training, internal acceleration, and customer-serving inference. That capability did not appear overnight. Anthropic began using TPUs at scale when many observers thought GPUs were the obvious default, and Rao argues that years of investment now let the company route workloads across chip types and generations more efficiently than other frontier labs.

That flexibility is technical, not just contractual. Anthropic has built an orchestration layer to use different compute types for different workloads. Rao also says the company builds its own compilers and works from the chip level up, including close collaboration with Amazon’s Annapurna Labs team to influence chip roadmaps. His claim is that this makes “a dollar of compute” go further inside Anthropic than it would elsewhere.

The reason this matters is that Anthropic’s compute base is heterogeneous and time-staggered. Some capacity is available near term; some arrives through long-term deals; some is better suited for reinforcement learning, some for leading-edge training, some for fast customer inference. The business is not optimizing a single fleet against a single use case. It is trying to place every class and generation of compute where it has the best return.

Rao calls this a “layer cake” of compute: different capabilities, different start dates, different price-performance curves, different locations, and different durations. Anthropic evaluates near-term opportunities and long-term commitments using the same basic framework: what type of compute it is, what it costs, when it lands, how efficiently Anthropic can run it, and what return the company can generate with it.

One example is Anthropic’s announced partnership with xAI for the Colossus facility in Memphis, which Rao says will help Anthropic expand, especially on the consumer and prosumer side. He also points to much larger commitments: a 5-gigawatt Google and Broadcom TPU deal beginning in 2027, a Trainium deal with Amazon for up to 5 gigawatts, and more than $100 billion of commitments, with some compute already landing and more arriving through the rest of the year and into the next.

| Compute source | Platform or facility | Scale or role Rao described |
|---|---|---|
| Amazon | Trainium | Up to 5 gigawatts; also a deeply embedded chip and capacity partnership |
| Google and Broadcom | TPUs | 5 gigawatts beginning in 2027 |
| Nvidia | GPUs | One of Anthropic’s three chip platforms |
| xAI | Colossus facility in Memphis | Near-term compute to support expansion, especially consumer and prosumer use |

The practical consequence, according to Rao, is that Anthropic can absorb additional compute much faster than he thinks it could have one or two years ago. If a large heterogeneous pool of compute were dropped on the company today, he says, it would be deployed “very rapidly” across model development, internal acceleration, and customer demand. A year or two earlier, differences among platforms and their operating idiosyncrasies would have made that harder.

This is the through-line for Anthropic’s finance logic: compute is treated less like a simple cost of goods sold and more like a fungible capital asset. The same resource can support present revenue, future model capability, internal productivity, and product development, depending on how it is allocated at a given moment.

Forecasting fails when the business compounds weekly

Krishna Rao frames Anthropic’s planning problem as a “cone of uncertainty.” Small differences in weekly or monthly growth rates produce radically different outcomes when the business is growing exponentially. Rao says he had to break his own habit of thinking linearly after joining the company.
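The arithmetic behind the cone is easy to sketch. The snippet below is an illustration only: the function names and the 1%, 2%, and 3% weekly growth rates are hypothetical, chosen to show how a small gap in weekly growth widens over a year, and are not figures from the interview.

```python
# Illustrative only: the weekly growth rates are hypothetical assumptions,
# not numbers Rao gave in the interview.

def run_rate_after(start: float, weekly_growth: float, weeks: int) -> float:
    """Compound a starting run rate at a constant weekly growth rate."""
    return start * (1.0 + weekly_growth) ** weeks


def cone(start: float, weekly_rates: list[float], weeks: int) -> dict[float, float]:
    """Map each assumed weekly growth rate to the run rate it implies."""
    return {rate: run_rate_after(start, rate, weeks) for rate in weekly_rates}


if __name__ == "__main__":
    # Start from a normalized run rate of 1.0 and look one year out.
    scenarios = cone(start=1.0, weekly_rates=[0.01, 0.02, 0.03], weeks=52)
    for rate, multiple in scenarios.items():
        print(f"{rate:.0%} per week -> {multiple:.2f}x after 52 weeks")
```

Under these assumed rates, the one-year outcomes range from under 2x to more than 4.5x, which is why a compute plan sized for the low scenario can be badly short if the high scenario arrives.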

That affects both revenue planning and compute procurement. Anthropic looks at scenarios over one- to two-year periods, then works backward to ensure it can stay at the frontier, serve customers, and reserve enough internal compute to accelerate employees. The last category is not incidental: Rao says Anthropic could serve billions of dollars of revenue with compute currently allocated to internal employee use, but chooses not to because internal acceleration is part of the long-term return.

The old cadence of quarterly forecasting and revisiting assumptions at the next board meeting does not work, in Rao’s view. A capability that was unavailable a month earlier may suddenly exist, changing the market size and invalidating the prior model. Anthropic therefore keeps a low threshold for updating assumptions.

Coding is the analogy Rao uses. Around Sonnet 3.5 and 3.6, Anthropic saw a significant jump in coding capability, followed by adoption, usage, and revenue. Rao says that pattern now helps the company think about what might happen in other parts of the economy: model capability improves, more use cases become possible, customers consume more, and revenue follows.

The underlying forecast is not a point estimate. It is a range of outcomes, and the company tries to preserve enough flexibility to operate near the high end. Rao’s warning is that trouble comes when the company finds itself at one point in the cone but has only purchased compute for a lower one. Anthropic’s compute efficiency and cross-platform flexibility are meant to narrow that operational risk.

This also shapes Rao’s view of capital raising. He says Anthropic has raised $7.5 billion since he joined, with another $50 billion to come in the future from Amazon and Google deals. But he does not characterize that capital primarily as funding for current operating losses. The reason to raise, in his description, is the cone of uncertainty: the need to support growth if the upper-end scenarios continue to materialize.

When Rao joined, Anthropic had about $250 million of run-rate revenue and a plan to reach $1 billion. His first reaction was to ask, “in what year?” He now describes that as linear thinking. Dario Amodei, Rao says, has been a much better predictor of revenue than he has been, though Rao expects the forecasting gap to narrow.

What changed Rao’s mind was not abandoning discipline. It was observing that the revenue exponential sits on top of other exponentials: model capability, customer adoption, product release velocity, internal automation, and compute efficiency. Those dynamics made the usual objections (the law of large numbers, enterprise adoption speed, customer inertia) less decisive than they first seemed.

Frontier intelligence unlocks use cases, not just benchmark points

Krishna Rao rejects the idea that model intelligence should be treated as a single IQ-like number. Anthropic publishes benchmark cards, but he says many benchmarks are saturated, and the company ultimately measures capability through customer experience: what the model can actually do in real workflows.

The gains he emphasizes are multidimensional. New models may be better at long-horizon tasks, tool use, computer use, agentic workflows, or simply doing equivalent work faster. Rao gives a human analogy: if two employees can both complete an assignment but one takes a week and the other takes a day, the faster worker may be seven times more valuable in practice even if both are “capable” in a narrow sense.

“People tend to think about model intelligence as IQ. It’s a single number. Okay, this model was at 110 and then it goes to 125. We think of it kind of differently. Intelligence for us is multidimensional,” Rao says.

That is why Rao argues that the returns to frontier intelligence are especially high in enterprise. Customers are not merely trying the newest model because it is novel. They are pushing the limits of what models can do inside coding, financial services, life sciences, security, and other workflows. Each model generation unlocks more total addressable market, makes existing tasks more efficient, and lets customers invest in more tokens.

Rao illustrates this with Anthropic’s recent revenue trajectory. He says the company started the year at about $9 billion of run-rate revenue and ended the quarter north of $30 billion. He attributes that kind of change to leaps in model intelligence and the products built around them.
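To put that trajectory in compounding terms, the sketch below backs out the constant growth rate implied by the two endpoints. The $9 billion and $30 billion figures are from Rao’s account; the function name, the use of 13 weeks and 3 months per quarter, and the treatment of “north of $30 billion” as exactly $30 billion are assumptions made for illustration.

```python
def implied_periodic_growth(start: float, end: float, periods: int) -> float:
    """Constant per-period growth rate that takes `start` to `end` in `periods` steps."""
    if start <= 0 or end <= 0 or periods <= 0:
        raise ValueError("start, end, and periods must be positive")
    return (end / start) ** (1.0 / periods) - 1.0


if __name__ == "__main__":
    # Endpoints in billions of dollars of run-rate revenue, as Rao described them.
    # Assumption: one quarter is 13 weeks or 3 months, and growth was steady.
    weekly = implied_periodic_growth(9.0, 30.0, 13)
    monthly = implied_periodic_growth(9.0, 30.0, 3)
    print(f"implied weekly growth:  {weekly:.1%}")
    print(f"implied monthly growth: {monthly:.1%}")
```

If growth had been steady, those endpoints would imply roughly 10% per week, or about 49% per month. Real growth was presumably lumpier, so this is a rough gauge rather than a reconstruction.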

The model-efficiency side matters as much as capability. Rao says the common analogy of new model generations as moving from a sedan to a sports car fails because, in Anthropic’s case, capability improvements can come with better “fuel efficiency.” From Opus 4 to 4.5, 4.6, and 4.7, he says each leap brought a multiplier in how efficiently the model processed tokens, though the leaps were not equal.

That efficiency feeds back into research. Reinforcement learning, in Rao’s description, is “basically inference within a sandbox with a reward function.” If a model becomes more efficient at inference, reinforcement learning becomes more efficient too. A new model can therefore give customers more capability while also making Anthropic’s internal development loop cheaper or faster.

Anthropic also deploys efficiency improvements between major model generations. Rao describes a continuous process: research improves model capability and serving efficiency; internal workloads use the best models, including unreleased models; customer demand informs future training targets; and better models make the next round of internal work more productive.

Recursive improvement is real, but Rao still puts researchers at the center

The possibility that frontier models help build the next generation of frontier models is no longer theoretical inside Anthropic. Krishna Rao says the company sees progress accelerating and that, for Anthropic, scaling laws remain “alive and well.” Inside the company, he says more than 90% of code is written by Claude Code, and “a lot of Claude Code’s code is written by Claude Code.”

That is one reason Anthropic allocates scarce compute internally even when that compute could otherwise serve revenue: the models are helping build the next generation of models and products. Rao does not describe this as a fully autonomous lab. The core of Anthropic remains a research organization running experiments and pushing model limits. Models assist that process today, and Rao expects them to become more helpful over time, but he emphasizes the role of human researchers in setting direction, choosing priorities, and identifying new areas of discovery.

His preferred framing is that models accentuate and accelerate high-density talent. Anthropic talks internally about “talent density” beating “talent mass,” and Rao applies that logic to research, inference engineering, product development, and finance. The combination Anthropic wants is not merely the largest group of people or the most compute. It is a dense group of capable people using the best models against the right problems.

Scaling laws are discussed internally through multiple lenses. During pre-training, Anthropic compares loss curves to prior models. The company does similar comparisons around reinforcement learning. Customer feedback then becomes another signal: where models get stuck, what capabilities customers need, and what products customers could build if the model were better in specific ways.

Rao stresses that Anthropic does not train on enterprise customer data. On the prosumer side, he says training happens only if a user opts in. But customer requests still shape targets. If a customer says a capability is not yet good enough to support a product, Rao says Anthropic often tells them to build for that future state because the R&D side is expected to improve it over time.

Rao’s view is not that scaling laws are guaranteed forever. He says Anthropic is a skeptical place, scientific in method, and willing to challenge prior assumptions. But from what Anthropic currently sees, he says, “the scaling laws are not slowing down.”

Anthropic wants to be mostly platform, with selective applications

Krishna Rao describes Anthropic’s business strategy as mostly horizontal and platform-oriented. The platform is not just raw model access. It includes prompt caching, virtual machines, Claude Code being called within broader workflows, Dispatch, the Claude Agent SDK, managed agents, and other tools that Rao sees as vectors for customers to access model intelligence.

His analogy is early AWS: a platform can accrue significant value while customers building on top of it accrue even more. Anthropic wants customers to build products and internal systems on Claude, and Rao says most of the company’s attention is directed toward that enabling layer.

Anthropic will still build applications when it believes it has a particular reason to do so. Rao gives two rationales. The first is building ahead of model capability. Claude Code, in his account, was built from a Claude-led rather than developer-led perspective: Anthropic believed the models would soon become capable enough for that form factor even if they were not fully there at launch. The second reason is to demonstrate value for the ecosystem, as with Claude for financial services, Claude for life sciences, and Claude Security.

That creates a tension with customers and would-be customers who worry Anthropic may become a competitor. The underlying model provider can move into an application layer adjacent to companies building on top of it. Rao does not deny the anxiety. He says capability progress is so fast that releases can surprise even Anthropic, and what happened over five, 10, or 20 years in prior technology waves can now happen in months.

The company’s answer, he says, is to be partner-oriented: run early-access programs, work closely with customers, listen to desired capabilities, and release products in collaboration with ecosystems where possible. But he also treats some of the tension as inherent to frontier model development. If models become much more capable quickly, product boundaries will move quickly too.

Pricing is designed for adoption, not maximum short-term scarcity rent

Krishna Rao argues that Anthropic’s pricing cannot be understood simply as scarcity pricing in a compute-constrained market. The company is still early in its commercial life. It is a little over five years old; March marked the third anniversary of its first dollar of revenue; and Rao says it only had a true frontier model for the first time in March 2024.

Within that context, Anthropic has kept pricing relatively stable across Haiku, Sonnet, Opus, and now Mythos. Rao says the biggest pricing change was lowering the price of Opus-class models when Opus 4.5 launched. The reason was underutilization. Customers were trying to force Opus-level problems into Sonnet workloads because Opus was too expensive relative to how they wanted to use it.

Efficiency improvements allowed Anthropic to serve Opus more cheaply, and lowering the price made the model more accessible. Rao says usage rose far more than expected, invoking Jevons paradox: as effective cost falls, consumption can increase enough to expand total use dramatically. Once customers built Opus into their workflows, Anthropic could release Opus 4.6 without changing price and let customers slot in the improvement.
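The Jevons effect Rao invokes can be illustrated with a toy demand model. The sketch below assumes a constant-elasticity demand curve, which is a textbook simplification and not something Rao describes; the function names, the 50% price cut, and the elasticity values are all hypothetical.

```python
# Toy model, not Anthropic's: constant-elasticity demand is an assumption,
# and every number below is hypothetical.

def usage_after_price_change(
    base_usage: float, old_price: float, new_price: float, elasticity: float
) -> float:
    """Usage implied by a constant price elasticity of demand (a positive number)."""
    return base_usage * (new_price / old_price) ** (-elasticity)


def total_spend(price: float, usage: float) -> float:
    """Total customer spend at a given price and usage level."""
    return price * usage


if __name__ == "__main__":
    old_price, new_price = 1.0, 0.5  # hypothetical 50% price cut
    for elasticity in (0.5, 1.0, 1.5):
        usage = usage_after_price_change(1.0, old_price, new_price, elasticity)
        spend = total_spend(new_price, usage)
        print(f"elasticity {elasticity}: usage {usage:.2f}x, spend {spend:.2f}x")
```

In this model, total spend rises after a price cut only when elasticity exceeds 1, meaning usage grows more than proportionally to the drop in price. That is the regime Rao’s account of Opus usage points to.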

Rao’s pricing philosophy is therefore linked to market formation. Anthropic wants customers to see high ROI, proliferate the use of frontier intelligence across startups and large enterprises, and build durable workloads around the models. Pricing stability helps customers invest.

Margins are not ignored, but Rao says Anthropic thinks less in terms of narrow per-token variable cost and more in terms of return on the full compute envelope. Compute supports revenue over different time horizons. Inference serves revenue today. Model development may unlock a capability and market six months from now. Internal acceleration may help launch a product. All of those uses draw from the same fungible resource.

This is why Rao thinks ordinary software gross-margin frameworks can mislead. Compute is not merely an incremental cost to serve the next customer. Anthropic might use the same chip for inference in the morning and model development later in the day. In a traditional company, R&D people cannot become factory cogs and factory cogs cannot become R&D people. Anthropic’s compute can move across those roles.

Rao says returns on compute spend are “robust” today. The measuring stick is the return on compute across all workloads, not a clean separation between customer-serving cost and research cost. That paradigm, he says, remains one of the hardest things for investors to understand.

Rao’s finance team is his example of the enterprise thesis

Krishna Rao uses his own organization as an example of how Claude changes knowledge work. Anthropic’s finance team began using Claude Code about a year before the interview, not only for coding tasks but as a general assistant or “digital co-worker.” Rao says that early behavior foreshadowed Cowork, Anthropic’s extension of the Claude Code pattern into broader knowledge work.

The finance team now uses Claude to produce statutory financial statements for Anthropic’s legal entities, with human review. Rao says the team has also built a real-time platform called Ant Stats and a library of more than 70 finance-specific Claude skills available through a common repository.

One of those skills produces Anthropic’s monthly financial review. Rao says the output is 90% to 95% ready, shifting the discussion from “what exactly happened” to what the business should do about it. Claude is not only reporting figures, in Rao’s description; it is helping identify drivers and reasons numbers changed.

The effect is speed. Work that used to take hours, such as a weekly report on revenue drivers or compute utilization, can now take about 30 minutes. Rao says this lets finance move faster as an “insight engine,” getting analysis to business leaders sooner and spending more time on strategic implications.

Anthropic also tracks token usage internally. Rao says some of the heaviest users on the finance team are senior people, not only younger employees with coding backgrounds. The head of tax, he says, has been the team’s top user, applying Claude to tax policy engines and automation of large workloads. Rao’s standard for his own team is explicit: if Anthropic employees are not superusers pushing the limits of Claude, they cannot expect customers to do so.

Rao’s interpretation of this shift is more optimistic than a simple “AI tells people what to do” framing. He sees a version of Jevons paradox for labor: as people become more productive, Anthropic hires more people because there is more work to do. Employees spend less time reconciling numbers or closing books and more time deciding how to allocate resources and reinvest in the business.

Investor skepticism moved from “why frontier?” to “how does compute return?”

When Krishna Rao joined Anthropic two years earlier, the company was closing its Series D. He says that fundraising was not straightforward. Anthropic only had a frontier model partway through the process, and the FTX transaction was liquidating a block of Anthropic shares near the tail end. Investors questioned why a frontier model was necessary, whether AI safety and building a large business were in tension, and whether Anthropic needed a traditional enterprise software salesforce.

By the end of 2024, Anthropic raised its Series E with close to $1 billion of run-rate revenue. But Rao says the first close happened the day DeepSeek news came out, creating volatility and causing investors to reconsider their AI assumptions. Even then, investors looked at the company’s forecasts and doubted that enterprise adoption could move fast enough to sustain growth.

Rao says the business continued to validate the thesis that returns to frontier intelligence were high. He describes Anthropic’s growth as “model-led,” enabled by products, go-to-market, and distribution. He also says investors came to understand a linkage they had initially doubted: Anthropic’s safety orientation could support, rather than undermine, enterprise sales.

The safety investments Rao highlights include interpretability, which he compares to an MRI for a model, and alignment science, which studies whether the model does what it is told and how often it strays. These were mission-driven investments, he says, but they had downstream business effects. If Anthropic can look inside models, it may be better at building them. If enterprises trust Anthropic’s safety posture, they may be more comfortable placing sensitive workflows on Claude.

Rao says Anthropic now sells to nine of the Fortune 10. Those customers entrust the company with customer information, internal data, employee interactions, and in some cases customer-facing workflows. His claim is that safety, interpretability, and alignment investments benefit those customers because trust matters when AI enters sensitive enterprise systems.

Rao says the right investor questions for frontier labs are direct: what is the ROI on compute across all uses, what is the timing and shape of that return, are customers deploying at meaningful scale rather than just testing, and where will future compute come from when suppliers may also sell to others or use compute internally?

On customer ROI, Rao says Anthropic’s annualized net dollar retention rate is more than 500%, and he argues the company is no longer dealing only with pilots. On the way to the interview, he says, he signed two double-digit-million-dollar commitments during a 20-minute Uber ride.
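For readers unfamiliar with the metric, net dollar retention compares what a fixed cohort of customers spends now with what the same cohort spent at the start of the period. The interview does not say how Anthropic computes or annualizes its figure, so the sketch below uses a common definition and a compounding annualization as assumptions, with hypothetical cohort numbers.

```python
# Common definition, used here as an assumption: the interview does not
# describe Anthropic's exact method. Cohort figures are hypothetical.

def net_dollar_retention(start_revenue: float, end_revenue_same_cohort: float) -> float:
    """Ratio of a cohort's revenue at period end to its revenue at period start."""
    if start_revenue <= 0:
        raise ValueError("start_revenue must be positive")
    return end_revenue_same_cohort / start_revenue


def annualize(ratio: float, months: int) -> float:
    """Compound a retention ratio measured over `months` to a 12-month equivalent."""
    if months <= 0:
        raise ValueError("months must be positive")
    return ratio ** (12.0 / months)


if __name__ == "__main__":
    # Hypothetical cohort: run-rate spend of 100 grows to 150 in three months.
    quarterly = net_dollar_retention(100.0, 150.0)
    yearly = annualize(quarterly, months=3)
    print(f"quarterly NDR: {quarterly:.0%}, annualized: {yearly:.0%}")
```

Under these assumptions, a cohort that expands 50% in a quarter annualizes to just over 500%, which shows how quickly expansion within existing accounts has to compound to reach the level Rao cites.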

Mythos forced a different release pattern

Krishna Rao distinguishes between Mythos’s broad capability and a specific spike in cyber performance. Mythos, in his description, should not be understood merely as a cyber model. It is highly capable across many dimensions, but cyber was one area where it “spiked.” That spike changed the release process. Anthropic chose a phased release rather than a broad immediate launch.

The reason was dual-use capability. Rao says Mythos could be used positively to patch codebases and strengthen defenses. But the same capability was also worrying. In one open-source codebase example, Rao says a prior model found 22 security vulnerabilities while Mythos found 250. Anthropic decided to begin with a group that could use the cyber capability defensively and then expand over time.

Rao sees that phased approach as a possible template for future releases. It did not mean suppressing the model indefinitely. It meant acknowledging a particular capability spike and shaping access around positive use, especially defensive cyber use.

Rao’s comments about government are related but distinct. He says Anthropic prioritizes a strong relationship with government because regulation has a role in how models develop. He describes Anthropic as “America First” in its approach, wanting the technology to support the United States and democratic countries around the world. He says the company has worked closely with the administration on Mythos.

The balance Rao wants is rapid innovation with a responsibility framework. Anthropic has long argued, in his account, that the technology has implications that should be discussed honestly, including with government. The Mythos process is his example of that principle in practice.

More broadly, Rao says the AI industry needs to do a better job explaining both opportunity and risk to the public. He refers to Dario Amodei’s “Machines of Loving Grace” essay as an articulation of AI’s potential in drug development, curing common and rare diseases, improving healthcare delivery, raising living standards, and helping places with fewer resources. But Rao says the industry should not tell people only that everything will be great. Compressed change will create bumps, and people are more likely to trust a balanced account than a purely optimistic one.

Anthropic’s culture is built to make hard allocation decisions without fiefdoms

Krishna Rao says Anthropic’s culture affects hiring, retention, compute allocation, and investor perception. The company has seven co-founders, which he says “shouldn’t work on paper” but does in practice because they set cultural norms.

Anthropic uses a culture interview as a real part of hiring, not a box-checking exercise. Rao says a candidate can be exceptional on every other dimension and still not be hired if they fail the cultural bar. He describes the culture as collaborative, humble, transparent, intellectually open, and intolerant of fiefdoms, sharp elbows, or excessive credit-seeking.

That matters for compute allocation because the resource is scarce and every team has a plausible claim. Rao says teams lay out what they would do with compute, then engage in a frank discussion about ROI. Debate can be rigorous, but once the company makes a decision, there is alignment rather than second-guessing or politics.

Transparency is another feature Rao emphasizes. Dario Amodei meets with the company every two weeks, usually after writing a short document on three or four topics, then takes open questions. Rao says the questions are not planted or soft, and the forum gives employees a window into leadership’s thinking even though it is not a decision-making body.

Rao connects this culture to retention. All seven co-founders are still at Anthropic, and most of the first 20 to 30 employees remain. During a period when Meta and others were offering large compensation packages to technical talent across large language model labs, Rao says Anthropic lost two people while other labs lost dozens.

His explanation is that many Anthropic employees want impact, talent density, collaboration, transparency, and mission alignment more than simply the highest compensation package. He also describes a “race to the top”: Anthropic does not claim to have every answer, but wants parts of its approach to be emulated so the technology is developed better across the industry.

The next frontier is the virtual collaborator

For Krishna Rao, the near frontier is not just a smarter chatbot. It is a “virtual collaborator” for enterprise knowledge work: a system with organizational context, access to company-specific tools, memory, the ability to learn from its own mistakes and users’ mistakes, and the capacity to work over long time horizons on ideas rather than isolated tasks.

That requires both model capability and product form factor. Intelligence, in Rao’s framing, is not generic smartness. It must become useful for specific organizational workflows, using the tools and context that matter inside a company.

Claude Code is the leading example. Rao expects what happened in coding to spread elsewhere in the economy. Cowork, he says, is growing faster than Claude Code did when indexed to the same point in time, which he finds striking because developers are usually fast adopters of new tools.

Anthropic’s own product development has already changed, according to Rao. He says it is no longer one product manager and two engineers shipping over three months. Product development is daily, with fleets of agents working across the company on specific tasks. That makes everyone more like a manager of agents.

This future has limits. Rao names three risks that could push Anthropic toward the low end of its cone of uncertainty. First, diffusion inside customer organizations could slow. Use cases are still catching up to model capability, and large organizations have entrenched tools, practices, and habits. Second, scaling laws could slow or stop holding, though Anthropic does not currently see that. Third, Anthropic could fail to remain at the frontier in a competitive market.

The opportunity that most excites Rao is biotechnology and healthcare. He imagines a world in which someone diagnosed with an incurable disease might live long enough for a cure to be discovered, because AI accelerates drug development and eventually drug discovery. Today, he says, AI is already helping with paperwork, clinical study reports, and related processes. The more powerful application is further upstream: molecules, proteins, and small changes with large biological implications.

If lab throughput rises 10x or 100x, Rao argues, researchers can run more experiments and get better results faster. That could affect common diseases, rare diseases, and disorders far down the chain rather than only a narrow set of high-priority targets.