Real AI Gains Are Powering Unproven Compute, IPO, and Layoff Narratives

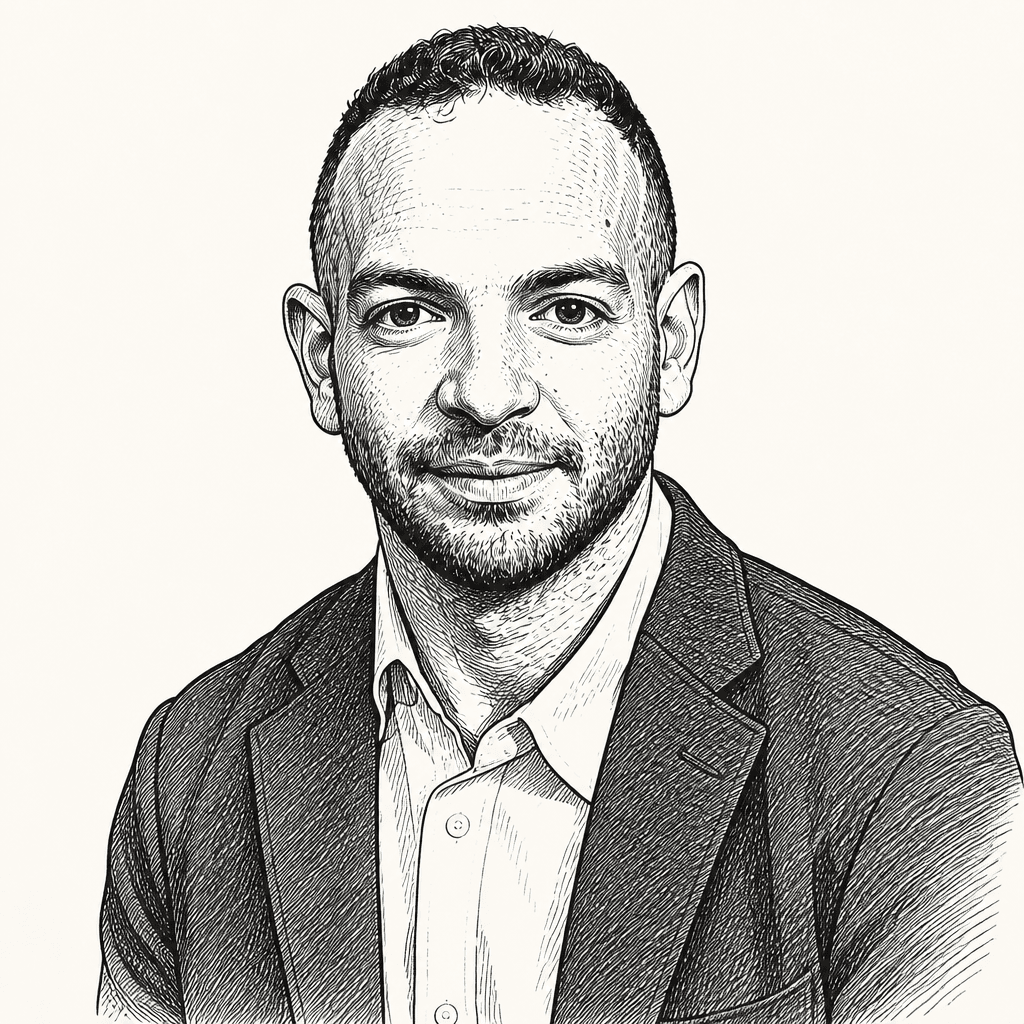

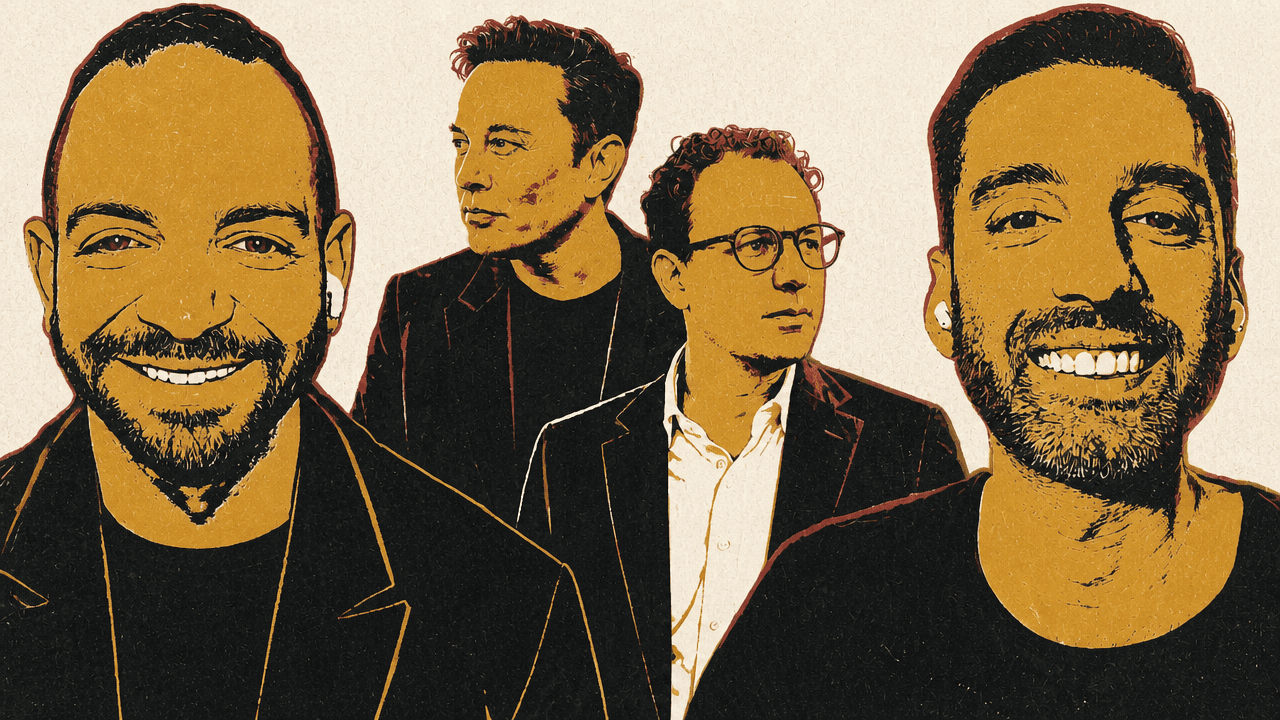

Alex Kantrowitz and Ranjan Roy read Anthropic’s SpaceX compute deal as both a real answer to Claude’s capacity constraints and a piece of market theater around AI demand, financing and IPO timing. Kantrowitz argues the Colossus 1 capacity could materially ease Anthropic’s limits and sharpen its race with OpenAI; Roy cautions that explosive usage and infrastructure announcements are also serving valuation narratives. The discussion extends that frame to OpenAI trial messages, Anthropic’s Mythos security claims and AI-linked layoffs: genuine progress, they argue, is being folded into stories that remain only partly proven.

Anthropic’s SpaceX deal is both capacity relief and market theater

Anthropic’s agreement to use SpaceX’s Colossus 1 capacity was presented with unusually concrete near-term terms: SpaceX would supply 300 megawatts of new computing capacity, using more than 220,000 Nvidia GPUs, by the end of the month, and Anthropic would use all of the computing capacity at the Colossus 1 data center. The deal also came with a more speculative flourish: Anthropic expressed interest in working with SpaceX on AI data centers in space, a long-running Musk priority.

The immediate significance is that Anthropic’s most visible constraint has not been model quality, but supply. Claude Code and the Claude API have been capacity-limited while demand has grown rapidly. Alongside the SpaceX announcement, Anthropic said it was doubling Claude Code’s five-hour rate limits for Pro, Max, Team, and seat-based Enterprise plans; removing peak-hours limit reductions on Claude Code for Pro and Max accounts; and raising API rate limits for Claude Opus models. For Alex Kantrowitz, that made the arrangement more than a long-dated infrastructure press release. It had an immediate customer-facing consequence.

The political and strategic oddity is just as important as the hardware. Elon Musk has spent months attacking Anthropic and Dario Amodei, including calling the company “misanthropic.” Now a Musk-controlled company is effectively renting Anthropic a full data center’s worth of GPUs. Kantrowitz interpreted that as a tacit concession by Musk’s side of the AI race. In his reading, if xAI could use this compute to train a frontier model that could beat or catch OpenAI and Anthropic, the capacity would not be leased away. He framed SpaceX as becoming “the new CoreWeave” rather than “the new OpenAI.”

Ranjan Roy did not dispute that Anthropic needs compute or that rate limits have hurt it. His objection was to accepting the announcement as a simple supply-demand story. Large AI infrastructure deals, he argued, increasingly function as valuation support, customer signaling, and IPO preparation as much as operational commitments. Anthropic needs a credible story about relieving compute constraints. SpaceX benefits from showing a major new revenue stream and positioning itself as infrastructure supplier to one of the fastest-growing AI companies. In Roy’s view, that does not make the agreement fake. It means the market incentives around the agreement are doing some of the work.

The disagreement sharpened around whether the demand curve can be extrapolated. Anthropic’s reported demand is hard to wave away. Kantrowitz cited reporting from Anthropic’s developer conference that Amodei said the company had planned for roughly 10x growth this year, only to reach a rate that could make it 80x as large. Amodei was quoted saying, “I hope that 80-times growth doesn’t continue because that’s just crazy and it’s too hard to handle. I’m hoping for some more normal numbers.” Kantrowitz’s point was simple: that cannot all be gamification or wasted usage.

Roy’s answer was narrower. He was not saying the growth is fake. He was asking whether 80x should be treated as a stable signal, or whether it reflects a period when Claude Code had less direct competition and enterprise teams were spending on agentic AI with little scrutiny. Over the prior six to eight months, he said, many enterprises had effectively given teams permission to experiment without asking hard questions about token consumption. Before reasoning models and agentic coding, token use was much lower. Once autonomous knowledge work entered workflows, usage exploded. But that growth may partly reflect a period when nobody cared about cost.

That is what Roy called “token maxing”: a culture in which more model usage became an implicit sign of seriousness. He described senior technology executives bragging casually about large AI spend as if it were a status marker. Nobody boasts about a cloud bill anymore, he said, but some executives now boast about AI budgets. That behavior, to him, is a warning sign that some consumption is performative or undisciplined.

Kantrowitz accepted that some usage is inefficient. He offered a concrete example from his own use of Anthropic’s Opus 4.7: he asked it to make a PDF, and it spent roughly 30 minutes searching and calling tools instead of simply exporting a file it had already created. The model eventually apologized for going “down a rabbit hole worrying about a constraint that wasn’t actually blocking us.” Kantrowitz saw that as evidence of a fixable product problem. Roy saw the same fact as a limit on today’s compute extrapolations. Either Anthropic fixes the inefficiency, which lowers token burn for the same task, or users fix their own behavior because they do not want to pay for waste. Either way, a shift from “more is better” to “what are we spending?” changes the demand curve.

The Jevons paradox argument remains the industry’s strongest reply. Kantrowitz described the case this way: when a valuable good gets cheaper, people may use more of it because cheaper capability unlocks new uses. If models become more efficient, companies may run more agents in parallel, delegate more work, and consume more aggregate compute even as the cost per task falls. Roy agreed that demand can grow as AI gets cheaper and more capable. He resisted the stronger assumption that demand must grow exponentially, especially when the companies buying compute, selling compute, and raising capital all benefit from presenting the future that way.
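The two positions can be made concrete with a toy constant-elasticity demand model. This is only a minimal sketch, not something either speaker presented: the function name, the elasticity values, and the 50% price cut are illustrative assumptions. When the assumed elasticity is above 1, cheaper tasks raise total spend (the Jevons case); when it is below 1, total spend falls, which is closer to Roy's caution.

```python
def spend_multiplier(price_ratio: float, elasticity: float) -> float:
    """Return new_total_spend / old_total_spend under constant-elasticity demand.

    price_ratio: new price per task divided by old price (0.5 = half the cost).
    elasticity:  assumed price elasticity of demand (hypothetical value).
    """
    quantity_ratio = price_ratio ** (-elasticity)  # how usage responds to cheaper tasks
    return price_ratio * quantity_ratio            # spend = price x quantity


if __name__ == "__main__":
    # Illustrative elasticities only; none of these come from the discussion.
    for elasticity in (0.5, 1.0, 1.5):
        multiplier = spend_multiplier(0.5, elasticity)
        print(f"elasticity={elasticity}: total spend x{multiplier:.2f}")
```

The sketch shows why the argument turns on an unobserved parameter: the same efficiency gain either shrinks or grows aggregate spend depending on how strongly usage responds to price.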

| Question | Kantrowitz’s reading | Roy’s caution |
|---|---|---|
| Does the SpaceX capacity matter? | Yes: Colossus 1 is already built, and Anthropic immediately tied it to higher Claude Code and API limits. | Yes, but major AI compute announcements also function as signaling and financing narratives. |
| What does 80x growth imply? | Demand is too large to dismiss as wasted usage or hype. | The rate may reflect a temporary period of weak competition and low scrutiny over token spend. |
| Will efficiency reduce compute demand? | Cheaper, better models could unlock more use and more parallel agents. | Efficiency and cost discipline could make demand growth less exponential than the industry assumes. |
| How should the deal be read competitively? | It reduces Anthropic’s central constraint and makes the OpenAI-Anthropic race more direct. | It may be real and still partly designed to support IPO and revenue stories for both sides. |

Kantrowitz also cited a Financial Times figure that Anthropic’s annualized revenue, extrapolated from recent weeks, was expected to cross $45 billion imminently, up fivefold from $9 billion at the end of the prior year. Roy objected to the looseness of “annualized revenue” based on “recent weeks.” Actual annual revenue and a run rate based on aggressive short-term AI budgets are not the same thing. Kantrowitz’s broader claim remained that a company cannot approach that kind of annualized number with nothing underneath it. Roy’s answer was that the underlying activity may still include a lot of budget being thrown at AI before the business value is fully proven.
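Roy's objection is at bottom about arithmetic. The sketch below assumes the simplest possible definition of annualized revenue, a recent weekly average multiplied by 52; the discussion does not say how the Financial Times figure was actually computed, and the function name is hypothetical. Only the $9 billion and $45 billion figures come from the discussion.

```python
WEEKS_PER_YEAR = 52


def annualized_run_rate(recent_weekly_revenue: list[float]) -> float:
    """Extrapolate a yearly figure from the mean of a few recent weeks.

    Assumed definition for illustration; not confirmed by the source.
    """
    weekly_average = sum(recent_weekly_revenue) / len(recent_weekly_revenue)
    return weekly_average * WEEKS_PER_YEAR


# Figures cited in the discussion, in billions of dollars.
prior_year_end_run_rate = 9.0
expected_run_rate = 45.0

growth_multiple = expected_run_rate / prior_year_end_run_rate
# Weekly revenue that a $45B run rate would imply under the assumed definition.
implied_weekly = expected_run_rate / WEEKS_PER_YEAR

print(f"growth multiple: {growth_multiple:.1f}x")
print(f"implied weekly revenue: ${implied_weekly:.2f}B")
```

Under this definition, a few strong weeks set the whole annual figure, which is why a run rate and a full year of booked revenue can diverge.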

The practical disagreement is not whether Anthropic needs compute. It does. It is whether the SpaceX deal should be read primarily as a capacity event, a financing event, or a competitive event. Kantrowitz sees the arrangement as reducing Anthropic’s most important constraint and turning OpenAI versus Anthropic into a more direct heavyweight fight. Roy sees the same real technology and real demand inside a financing environment where every major party benefits from making the compute shortage sound permanent and exponential.

Kantrowitz reads Musk’s move through OpenAI rivalry and IPO timing

The SpaceX-Anthropic pairing is difficult to explain only as a cloud contract. In Alex Kantrowitz’s speculative reading, it makes more sense if Musk’s greater hostility is toward Sam Altman and OpenAI, not Dario Amodei and Anthropic. Musk may dislike Amodei, Kantrowitz argued, but he appears to dislike Altman more. If Musk cannot win the frontier model race directly, he can still help OpenAI’s strongest rival.

Kantrowitz put the logic bluntly: if Musk cannot win, he may prefer “the enemy of my enemy” to win.

Ranjan Roy pointed to Musk’s own public framing as a source of risk. Musk said SpaceX would provide compute to AI companies “taking the right steps to ensure it is good for humanity” and reserved the right to reclaim compute if their AI engaged in actions that harmed humanity. Roy treated that language as a possible escape hatch. If Musk later decides Anthropic is not acting in humanity’s interest, the same public-interest justification could be used to pull back.

Roy also argued that Anthropic’s dependence on Musk-controlled systems may already be larger than the compute deal suggests. He said much of Anthropic’s power in the AI community has come from X, where Claude and Claude Code use cases circulate heavily. X, in that sense, is “the AI platform.” Musk has influence over the attention layer, and the SpaceX deal could add influence over the compute layer.

Kantrowitz accepted the leverage point but returned to the OpenAI angle. Musk is simultaneously challenging OpenAI over its move away from its nonprofit structure. Kantrowitz’s theory was that the SpaceX deal could fit into a broader competitive sequence: SpaceX goes public first; Anthropic goes public before OpenAI; Anthropic uses SpaceX capacity to support extraordinary demand growth; and OpenAI arrives later with reputational damage from the trial and a less decisive compute advantage. Kantrowitz framed that as one possible way Musk could try to damage OpenAI, not as an established motive.

That theory made the deal more legible to Roy than a pure supply-demand account. The personal animosity between Musk and Amodei becomes less important if the real target is Altman and OpenAI. Anthropic’s success would then be useful not because Musk has changed his mind about Anthropic, but because a stronger Anthropic weakens OpenAI.

The IPO calendar matters because, in the speakers’ reading, SpaceX, Anthropic, and OpenAI all have reasons to shape investor expectations before going public or raising another major round. Roy asked why OpenAI and Anthropic need to go public at all when private capital has seemed abundant. Kantrowitz offered another theory: they may have expected to raise one more massive private round from “the final boss of private capital,” the Gulf states. If political or geopolitical developments made Gulf capital more hesitant to pour money into U.S. companies, the public markets would become more important.

Roy said that explanation made the sudden urgency more coherent. The conversation referred loosely to OpenAI’s last round as “$122 billion,” in the context of valuation rather than proceeds, and compared that private-market scale with historic IPO fundraises. Kantrowitz said the largest IPO he had in mind was Saudi Aramco, around $30 billion to $39 billion in proceeds. Roy’s point was that if private-market financing remains effectively unlimited, the urgency to go public is harder to explain. If the next giant private-money source has become less certain, the public-market race looks more urgent.

Anthropic, Kantrowitz added, is still likely to raise at least one more private round. He cited reporting that pointed to a possible $50 billion raise at a $900 billion pre-money valuation, and quoted an investor saying people are ready to throw any dollar amount at Anthropic; the issue is when the company wants to “pop their heads up” and say it is ready. The discussion treated those figures as reported possibilities, not as completed financing.

The SpaceX deal therefore sits at the intersection of operational need and market positioning. Anthropic needs compute to serve demand. SpaceX benefits from a growth narrative beyond its existing businesses. In Kantrowitz’s theory, Musk has strategic reasons to help OpenAI’s strongest rival even if he remains hostile to Anthropic’s politics and leadership. The agreement can be real and still be theater.

Mythos looks useful, not magical

Anthropic’s Mythos system illustrates a recurring AI distinction: a capability can be real without proving the most expansive story told about it. The relevant claims are different. One is that Mythos represents a dramatic model leap. The other is that Mythos can find real vulnerabilities.

Kantrowitz cited TechCrunch reporting on Mozilla’s use of Mythos in Firefox security work. According to the article excerpt, Mozilla said Mythos had found a “wealth of high-severity bugs,” including some dormant in the code for more than a decade. The report contrasted this with earlier AI security tools that inundated teams with low-quality reports and false positives. Mozilla researchers said newer agentic systems had improved because they can assess their own work and filter bad results.

The numbers were striking. Firefox shipped 423 bug fixes in April 2026, compared with 31 in April 2025. The monthly Firefox security bug-fix chart across all sources and severities showed most 2025 months sitting in the teens or twenties; January 2026 was 25; February was 61; March was 76; April jumped to 423. Brian Grinstead, a distinguished engineer at Mozilla, was quoted saying, “These things are actually just suddenly very good,” based on internal scanning, external bug reports, and signals across the industry.

| Month | Firefox security bug fixes |
|---|---|
| Jan 2025 | 21 |
| Feb 2025 | 20 |
| Mar 2025 | 26 |
| Apr 2025 | 31 |
| May 2025 | 17 |
| Jun 2025 | 21 |
| Jul 2025 | 22 |
| Aug 2025 | 17 |
| Sep 2025 | 18 |
| Oct 2025 | 26 |
| Nov 2025 | 19 |
| Dec 2025 | 20 |
| Jan 2026 | 25 |
| Feb 2026 | 61 |
| Mar 2026 | 76 |
| Apr 2026 | 423 |
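The size of the April jump can be checked directly from the monthly counts above. The sketch below is a simple back-of-the-envelope calculation using only those counts; the variable names are illustrative, and the ratios say nothing about severity or about which fixes trace back to Mythos.

```python
from statistics import mean

# Monthly Firefox security bug fixes from the table (all sources and severities).
fixes_2025 = [21, 20, 26, 31, 17, 21, 22, 17, 18, 26, 19, 20]  # Jan..Dec 2025
fixes_2026 = {"Jan": 25, "Feb": 61, "Mar": 76, "Apr": 423}

baseline_2025 = mean(fixes_2025)                         # average month in 2025
april_yoy = fixes_2026["Apr"] / fixes_2025[3]            # Apr 2026 vs Apr 2025
april_vs_baseline = fixes_2026["Apr"] / baseline_2025    # Apr 2026 vs 2025 average

print(f"2025 monthly average: {baseline_2025:.1f}")
print(f"April year-over-year multiple: {april_yoy:.1f}x")
print(f"April vs 2025 average: {april_vs_baseline:.1f}x")
```

A count-based multiple like this supports the claim that volume changed sharply, but it cannot answer Roy's question about how many of the fixes were catastrophic vulnerabilities.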

Ranjan Roy remained unconvinced that this proved the broader marketing case for Mythos. He questioned how many of the bugs were truly catastrophic vulnerabilities and whether the results reflected a distinct new system or Anthropic applying existing models and attention to a security workflow. One cited example — a 15-year-old error in how the browser parses an HTML element — did not, to him, settle whether Mythos represented a fundamental shift.

Kantrowitz then cited Ethan Mollick’s framing. Mollick wrote that “Mythos as hype” means two different things to different audiences. For insiders, it means Mythos was not a magical step-change in AI ability. For outsiders, it means Mythos could not really find zero-day exploits. Mollick’s view was that the latter was wrong and the former was likely right.

Roy restated the distinction: Mythos can find zero-days, but that does not make it a magical step-change in AI ability. Kantrowitz agreed. The evidence discussed made Mythos real enough to matter for security work, but not enough to establish a wholly new model regime.

The OpenAI trial evidence made governance instability tangible

The OpenAI trial produced a different kind of evidence: not benchmarks, revenue estimates, or compute commitments, but internal messages from the weekend in November 2023 when Sam Altman was fired and then reinstated. The texts between Altman and Mira Murati, OpenAI’s former CTO and interim CEO during the crisis, made the governance fight concrete.

Altman asked Murati to officially invite him to the office for a meeting. Murati asked whether he had an update. Altman said Adam was trying to get the board to agree to a configuration, that the board now said it needed until end of day, and that he and Satya Nadella said that did not work and they needed to prepare for “plan B.”

As the day progressed, Altman repeatedly pressed for directional updates and asked whether he could come in. Murati told him the direction was “very bad,” then, “Sam, this is very bad.” When Altman asked whether the board wanted him fired or something new, Murati answered that they wanted him gone. She told him the board had walked her through the reasons and issues with him and why he could not be CEO.

The exchange’s most memorable turn came when Altman asked whether the board knew who the new CEO would be. Murati wrote, “Trying to add Satya now.” Altman asked, “Still don’t want me?” Murati answered: “New guy is rando Twitch guy. They don’t want you.” Roy noted the absurdity of that label: the person in question was an extremely successful entrepreneur, but inside the stress of the moment he became “rando Twitch guy.”

The messages mattered because they revived the unresolved question of OpenAI’s structure. Alex Kantrowitz and Ranjan Roy said they had believed at the time that the drama was not over, because the organization’s structure still lent itself to instability. Roy observed that, more than two years later, internal drama had not vanished, even though Altman now appears firmly in control.

Kantrowitz connected the trial evidence to Musk’s challenge to OpenAI’s conversion from a nonprofit-controlled structure toward a for-profit model. The texts themselves do not directly prove Musk’s case, but they relate to the larger governance question. The structure is still not settled, and because the case is being tried before a jury, emotional appeals matter alongside documents and legal arguments.

Roy was blunt about Musk’s motives. He asked whether Musk is doing this “for the good of humanity” to preserve nonprofit structures. Kantrowitz answered no: Musk is doing it because he invested more than $30 million and feels betrayed. Roy corrected an initial slip in which Kantrowitz said billion rather than million, then argued that even $30 million is unlikely to be the whole explanation for Musk. The case is strategic, tied to OpenAI, xAI, and the broader competitive landscape. Both agreed Musk dislikes Altman more than Amodei.

The Microsoft evidence added another layer. Kantrowitz cited a 2018 email chain among Microsoft executives considering whether to invest in OpenAI. Nadella wrote that he could not tell what research OpenAI was doing or how, if shared, it would help Microsoft get ahead. He noted that, according to what Musk was telling people, OpenAI believed it was on the verge of major AGI breakthroughs, and that the company was pushing AI at a level Microsoft’s first-party or third-party efforts were not.

Kevin Scott, Microsoft’s CTO, was quoted as being skeptical of imminent AGI breakthroughs and worried that OpenAI was treating Microsoft as “a bucket of undifferentiated GPUs.” Scott said there could be public-relations downsides if Microsoft did not fund OpenAI and the company stormed off to Amazon, “shit-talk[ing]” Microsoft and Azure on the way out. Another executive worried about OpenAI leaving Azure for AWS, badmouthing Microsoft, and then landing some major innovation shared with Microsoft’s competitors.

For Roy, the emails showed that “everything is comms in the end.” The investment decision involved technical differentiation, cloud capacity, AGI risk, and strategic positioning, but the internal anxiety also included how Microsoft would look if OpenAI left and attacked Azure. Kantrowitz joked that they had previously been told by a listener they talk too much about communications, but the emails suggested the communications layer is not incidental. It can sit inside multibillion-dollar strategic decisions.

AI layoffs may be real, AI-washed, and still caused by AI

Kantrowitz and Roy did not treat recent tech layoffs as a binary choice between AI displacement and ordinary cost-cutting, but as a muddier transition. Kantrowitz cited Block and Coinbase as examples of companies making cuts, then used Brian Armstrong’s explanation of the Coinbase cuts as his main example. Armstrong said the company was in a down market and needed to adjust its cost structure to emerge leaner, faster, and more efficient. He also said AI was changing how work gets done: engineers were using AI to ship in days what used to take teams weeks; nontechnical teams were shipping production code; many workflows were being automated; and the pace of what a small team can do had changed dramatically.

Roy’s answer was “both.” Many tech companies grew large and bureaucratic, especially in big tech. Entrepreneurs naturally want to flatten organizations and reduce hierarchy when companies feel bloated. Roy liked some of the ideas attached to Coinbase’s restructuring, including pushing everyone toward doing individual-contributor work rather than only managing, and limiting the number of hierarchy levels below the CEO.

But AI is not merely an excuse in his reading. If employees resist changing how they work, companies will become more aggressive. The “AI train” rhetoric has some reality behind it: the people adopting the tools can become much more productive, and organizations will not tolerate broad refusal indefinitely.

Kantrowitz introduced a counterargument from Meta engineer Arnav Gupta, summarized with commentary from The Pragmatic Engineer. Gupta’s line was that “the layoffs will continue till we learn to use AI.” The argument is subtle: many “AI layoffs” may be backwards. They may not be happening because AI is already replacing workers. They may be happening because AI spending is not yet producing better business results.

Gupta wrote that even if layoffs are AI-washing, they are still because of AI. Until the world figures out how GDP actually grows because of AI, companies have to offset large annual token spend by cutting salaries. Until people figure out how to unblock each other faster, they can be removed from the org chart.

Roy agreed with the general direction. AI can make good people great, he said, but that does not automatically transform an entire organization. The real question is what a company should look like three to five years from now. He argued that today’s corporate structure is not ancient; it has changed materially over recent decades, especially as IT grew with the internet and cloud. If AI changes workflows as substantially as claimed, organizations will have to restructure. Companies are beginning to prepare for that future before the full productivity gains have arrived.

Kantrowitz cited another bearish formulation: if AI were already working well enough, companies could keep their people and produce more by adding AI. The fact that companies are cutting workers instead may imply that productivity is not yet appearing at the organizational level. He floated the possibility that the issue is change management rather than technology, while also admitting the more skeptical possibility: perhaps AI has not really increased productivity and the industry is deluding itself.

Roy drew a distinction between aggregate productivity and individual productivity. In aggregate, especially in enterprises, he does not think agentic AI has yet been widely adopted at scale outside coding. But he compared resistance to AI tools with earlier technological refusals: writing on a notepad instead of using word processing, or sending letters instead of email. Kantrowitz argued that this shift is different because the tools are more powerful. Roy countered that people likely said similar things about prior shifts, and compared AI’s effect on knowledge work to manufacturing automation and outsourcing’s effect on blue-collar work. The difference, he said, is that knowledge-work disruption is a much louder story.

When pressed for a bottom line, Roy said the layoffs are more about preparing for an AI future than recognizing one that has fully arrived. Companies believe they must figure it out now or face problems later. Kantrowitz mostly agreed, but added a caveat: two prominent companies making this argument, Coinbase and Block, are crypto-linked businesses, and crypto is in a downturn. That made him suspect some restructuring is driven by business realities first, with AI providing the more compelling explanation.