ElevenLabs Voice Engine Wraps Existing Chat Agents Without Rebuilding Them

Luke Harries of ElevenLabs argues that the next step for chat agents is not a new orchestration stack but a voice layer around the agents companies have already built. His case for ElevenLabs’ Voice Engine is that teams can keep their existing LLM logic, RAG, tools and business rules, while offloading speech-to-text, text-to-speech, turn-taking and interruption handling to a wrapper. The product is positioned for companies that want voice interfaces across web, phone and meeting channels without rebuilding their chat agents inside a fully managed platform.

The upgrade is a voice layer, not a rebuilt agent

Luke Harries argued that 2025 was the year SaaS products added chat agents. In his account, companies either “died a SaaS” or became AI-first by putting a chat agent into their app. He pointed to product home screens becoming chat interfaces, and to gov.uk moving in the same direction, as evidence that chat became the default way to interact with AI: declarative, compatible with tool calling and RAG, and a fast way to expose an agent inside an existing product.

But chat, in Harries’s view, now feels like an intermediate interface rather than the end state. Voice is faster, more interactive, and more accessible for users who find typing difficult or who have dyslexia. It also changes where the agent can live. A chat agent is typically embedded in a web or mobile surface; a voice-enabled agent can become a phone line or join a Zoom call, where Harries imagines an analytics agent correcting inaccurate statistics as people talk.

> “Chat’s cool, but it doesn’t feel like you’re building the future though.”

The practical product claim is that teams do not necessarily lack agent logic. Many have already built and tuned it. What they lack is a separate audio layer: speech-to-text, text-to-speech, voice activity detection, turn-taking, and interruption handling.

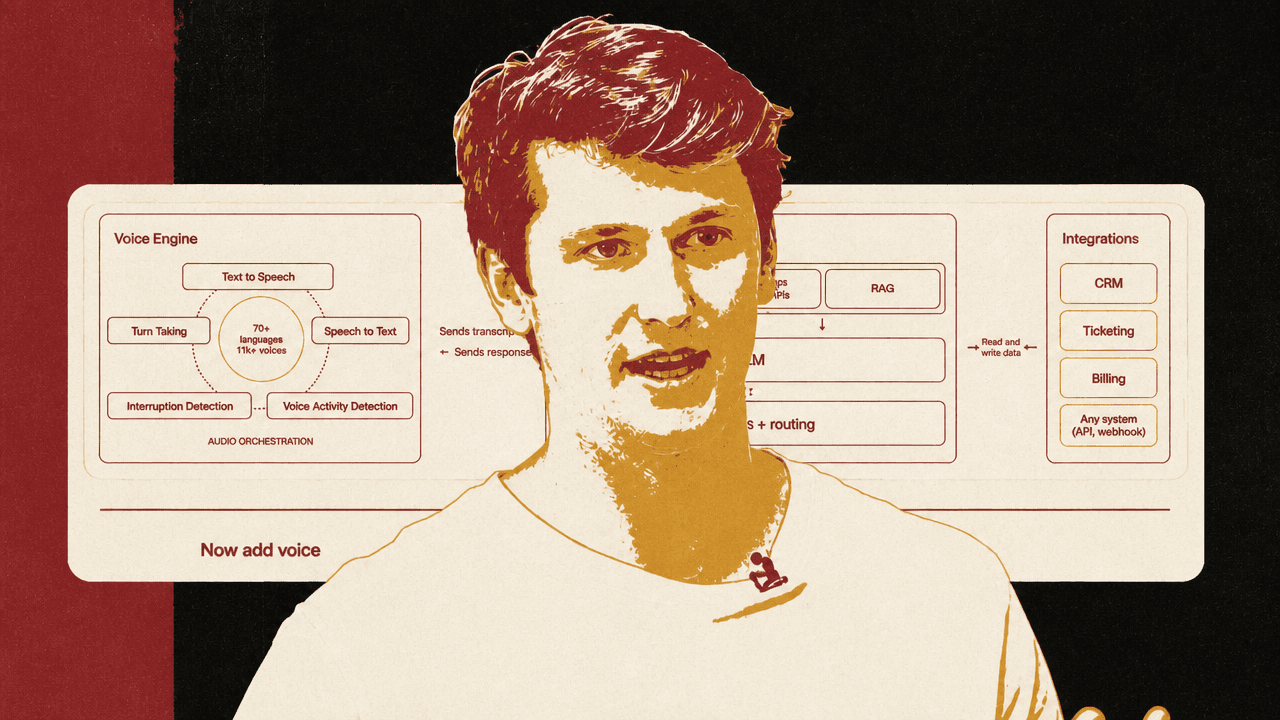

Voice agents split into an audio layer and an orchestration layer

The architecture Harries presents separates a voice agent into Voice Engine, Agent Orchestration, and Integrations, with “audio orchestration” shown between the voice and agent layers. The Voice Engine side includes text to speech, speech to text, turn taking, interruption detection, and voice activity detection. The orchestration layer contains the system prompt, knowledge base or SOPs, RAG, LLM, workflows, and routing. Integrations connect the agent to systems such as CRM, ticketing, billing, APIs, and webhooks.

| Layer | Responsibilities shown in the architecture |
|---|---|
| Voice Engine | Text to speech, speech to text, turn taking, interruption detection, and voice activity detection |
| Agent Orchestration | System prompt, knowledge base or SOPs, RAG, LLM, workflows, and routing |
| Integrations | CRM, ticketing, billing, APIs, webhooks, and other systems |
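
To make the split concrete, here is a minimal JavaScript sketch of one conversational turn passing through the three layers. Everything in it is hypothetical: `voiceLayer`, `integrations`, `createOrchestrator`, and `handleTurn` are illustrative names rather than ElevenLabs APIs, and the speech functions are stubs that pass strings where a real system would handle audio.

```javascript
// Audio layer (stubbed): a real voice layer would consume and produce audio.
// Here "audio" is an object carrying the words that were spoken.
const voiceLayer = {
  speechToText: (audio) => audio.spokenText,
  textToSpeech: (text) => ({ spokenText: text }),
};

// Integrations layer (stubbed): stands in for ticketing, CRM, billing, etc.
const integrations = {
  ticketing: {
    lookup: (id) => ({ id, status: 'open' }),
  },
};

// Orchestration layer: text in, text out. It knows about integrations,
// but nothing about audio.
function createOrchestrator(deps) {
  return {
    reply(text) {
      const match = /ticket (\d+)/i.exec(text);
      if (!match) return 'How can I help?';
      const ticket = deps.ticketing.lookup(match[1]);
      return `Ticket ${ticket.id} is ${ticket.status}.`;
    },
  };
}

// One turn: audio -> transcript -> agent reply -> audio.
function handleTurn(audioIn, voice, orchestrator) {
  const transcript = voice.speechToText(audioIn);
  const replyText = orchestrator.reply(transcript);
  return voice.textToSpeech(replyText);
}
```

The point of the sketch is the dependency direction: the orchestrator never touches audio, so the voice layer can be swapped or added without changing agent logic.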

That split matters because many ElevenLabs customers were not starting from scratch. Harries said ElevenLabs began by focusing on text-to-speech models, then was pulled into broader voice-agent systems while working with customers, including Revolut’s customer support. Some customers wanted a full out-of-the-box agent. Others pushed back: they had already built their agent, invested in evaluations and existing logic, and did not want to replace it just to add voice.

Voice Engine is Harries’s answer to that second case. He described it as an early preview of a product due “in a couple of weeks” that turns the audio side into a first-class primitive. The intent is to wrap an existing chat agent rather than displace it.

The feature list includes speech-to-text through Scribe, text-to-speech models such as V3, emotion- and context-aware ASR and TTS, turn-taking and interruption handling, “emotionally expressive” native voices, more than 11,000 voices, more than 70 languages, and a developer experience designed for teams that already have production chat agents.

The important architectural claim is that voice does not require moving the agent’s reasoning, retrieval, routing, or business logic into ElevenLabs. The wrapper proxies the user’s spoken transcript into the existing agent and sends the resulting response back through the voice layer.

The server stays text-only while Voice Engine handles speech

The server-side pattern Harries shows is intentionally small. A developer imports ElevenLabsClient, creates a client, retrieves a Voice Engine by ID, and attaches it to an HTTP server at a WebSocket path. Inside onTranscript, the slide routes the transcript into yourLLM.generate(...) and sends the response back through the session:

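    // `elevenlabs` is the ElevenLabsClient instance created earlier on the slide;
    // `httpServer` and `yourLLM` are the application's existing server and agent.
    const engine = await elevenlabs.voice_engine.get('veng_97Qi1kmb0symaaar2jnv5x8dv9n2');

    engine.attach(httpServer, '/api/voice-engine/ws', {
      // Called with the text transcript of each user turn.
      async onTranscript(transcript, signal, session) {
        const response = await yourLLM.generate(transcript, { signal });
        session.sendResponse(response);
      }
    });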

Harries’s point is the wrapper pattern: each new session starts a loop that proxies the voice interaction into the agent the team already uses. The audio-facing concerns remain in the Voice Engine; the application’s LLM call, retrieval behavior, system prompt, and business rules remain wherever they already are.
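
The wrapper loop can be exercised without any ElevenLabs code. The sketch below assumes that the `signal` passed to `onTranscript` is a standard `AbortSignal` that fires when the caller interrupts, which the talk does not confirm. `existingAgent` and `createTranscriptHandler` are hypothetical names, and the agent is a stub that echoes its input after a short delay.

```javascript
// Hypothetical stand-in for the team's existing chat agent. Its generate()
// mirrors the yourLLM.generate(transcript, { signal }) call on the slide.
const existingAgent = {
  generate(transcript, { signal } = {}) {
    return new Promise((resolve, reject) => {
      if (signal?.aborted) {
        reject(new Error('aborted'));
        return;
      }
      const timer = setTimeout(() => resolve(`You said: ${transcript}`), 20);
      // Cancel the in-flight "generation" if the signal fires.
      signal?.addEventListener('abort', () => {
        clearTimeout(timer);
        reject(new Error('aborted'));
      }, { once: true });
    });
  },
};

// Builds a text-only transcript handler around any agent exposing generate().
// Resolves to true if a reply was sent, false if the turn was interrupted.
function createTranscriptHandler(agent) {
  return async function onTranscript(transcript, signal, session) {
    try {
      const response = await agent.generate(transcript, { signal });
      if (signal.aborted) return false;
      session.sendResponse(response);
      return true;
    } catch (err) {
      if (signal.aborted) return false; // interrupted: drop the stale reply
      throw err; // any other failure is a real error
    }
  };
}
```

Under that assumption, an interrupted turn produces no reply at all, so the voice layer never speaks an answer to a question the user has already abandoned.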

The client-side example is similarly minimal. Harries shows an import from @elevenlabs/client, fetching a token from /get-token, and starting a conversation session with that token:

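    import { Conversation } from '@elevenlabs/client';

    // Fetch a conversation token from the application's own backend.
    const token = await fetch('/get-token').then(r => r.text());

    // Start the voice session with that token.
    const conversation = await Conversation.startSession({
      conversationToken: token
    });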

He said this gives a site a widget in a few lines, and that once a team has added the client SDK it can also add telephony and CCaaS-style channels “pretty much out the box” after the agent is wrapped.
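
The talk does not show the server side of `/get-token`. As a rough sketch, the handler below returns a token as plain text, matching the client's `r.text()` call. The `mintToken` function is a hypothetical placeholder for whatever server-side call exchanges the team's API key for a token; the actual Voice Engine endpoint for that is not described in the talk. The handler relies only on the `writeHead` and `end` methods that Node's HTTP response objects provide.

```javascript
// Handles GET /get-token. `mintToken` is injected so the API key and the
// token-issuing call stay on the server and out of the browser bundle.
async function handleGetToken(req, res, mintToken) {
  if (req.method !== 'GET' || req.url !== '/get-token') {
    res.writeHead(404, { 'Content-Type': 'text/plain' });
    res.end('Not found');
    return;
  }
  try {
    const token = await mintToken();
    res.writeHead(200, {
      'Content-Type': 'text/plain',
      'Cache-Control': 'no-store', // tokens should not be cached
    });
    res.end(token);
  } catch {
    // Do not leak upstream error details to the browser.
    res.writeHead(502, { 'Content-Type': 'text/plain' });
    res.end('Could not create token');
  }
}
```

With Node's built-in `http` module this could be mounted as `http.createServer((req, res) => handleGetToken(req, res, mintToken))`. A production route would normally also check that the requesting user is allowed to start a session.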

One-prompt migration changes the interface

The migration path Harries describes starts from an ordinary local chat support agent. The agent responds normally to “Hello how are you,” producing the support reply: “Hello! I am doing well, thank you. How can I assist you today?” The upgrade prompt shown on screen is direct: “Upgrade this chat agent to a voice agent, use the skill.”

The agent logic is intentionally ordinary. The claim is that the surrounding voice interface can be generated around it. Harries said the release will include a skill containing ElevenLabs’ “best in class stuff,” so a coding agent can analyze the codebase, identify the chat agent, determine how it is deployed, and wrap it with Voice Engine.

The generated code returns to the same server-side pattern: retrieve the Voice Engine, attach it to the HTTP server, receive transcripts, call the existing LLM, and send responses back to the voice session.

ElevenLabs also has UI components built around shadcn and Vercel-style design patterns. The visible examples include components labeled “Noise Fill,” “Customer Support Agent,” and “Orbs.” Harries’s framing is pragmatic: teams can point a coding agent at the components and use them as the front end for the voice experience rather than designing the whole interaction surface from scratch.

Voice Engine is for existing orchestration; Agents Platform is for starting over

Luke Harries distinguished Voice Engine from ElevenLabs’ Agents Platform by the team’s starting point. Voice Engine is for teams that already have their own LLM, orchestration, custom RAG, and business logic. It uses a text-only server interface with no audio handling required on the application side, shares the Conversation SDK, and is meant to be added to any chat agent.

Agents Platform is for teams that want a fully managed system. In the comparison slide, Harries lists a fully managed LLM, built-in tools and knowledge base, a dashboard for non-developers, telephony out of the box, the lowest possible latency, and a build-from-scratch workflow.

| Path | Best fit | What ElevenLabs handles | What the team brings |
|---|---|---|---|
| Voice Engine | Existing chat agents | Voice layer, turn-taking, speech I/O, interruption handling | Own LLM, orchestration, custom RAG, and business logic |
| Agents Platform | Build-from-scratch voice agents | Fully managed LLM, built-in tools and knowledge base, dashboard, telephony, lowest-latency setup | A new agent built inside the managed platform |

The buyer-facing distinction is not just feature packaging. Voice Engine preserves the team’s existing agent investment and adds audio as an interface layer. Agents Platform consolidates more of the stack inside ElevenLabs for teams that prefer a managed build. Harries also argued for a broader developer pattern: moving away from isolated speech-to-text and text-to-speech APIs toward higher-level bundles that match the way teams actually build agents.

Tool calling stays mostly where it already is

In the Q&A, an audience member asked how tool calling works. Harries’s answer was that, for most existing chat agents, tool calling already happens in the backend orchestration. Because Voice Engine wraps the chat agent rather than replacing it, the wrapper does not need to own most tool-call logic.

He said ElevenLabs also has the concept of client-side tools and server-side tools. Client-side tools can expose front-end capabilities, such as manipulating the DOM. Harries added that ElevenLabs plans to add a way to proxy some of those tool calls to the wrapped agent. But his main point was conservative: most teams adopting this path should not need to reimplement tool calling in the voice layer, because their chat agent already handles it.
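
A small sketch illustrates why the voice layer can stay unaware of server-side tools. All names here (`tools`, `chatAgent`, `onTranscript`) are hypothetical, and a regular expression stands in for the LLM's decision to call a tool so that the example stays runnable. The callback signature mirrors the slide's `onTranscript`, but nothing below is an ElevenLabs API.

```javascript
// Server-side tools that the existing chat backend already owns.
const tools = {
  getOrderStatus: async ({ orderId }) => ({ orderId, status: 'shipped' }),
};

// Stand-in for the existing chat agent. A real agent would let the LLM
// choose the tool; here a regex plays that role.
const chatAgent = {
  async generate(text) {
    const match = /order\s+#?(\w+)/i.exec(text);
    if (!match) return 'Which order are you asking about?';
    const result = await tools.getOrderStatus({ orderId: match[1] });
    return `Order ${result.orderId} is ${result.status}.`;
  },
};

// Voice-side handler: it forwards text and returns text. It contains no
// tool definitions and no tool-calling logic.
async function onTranscript(transcript, signal, session) {
  const response = await chatAgent.generate(transcript);
  session.sendResponse(response);
}
```

Adding or changing a tool in this arrangement touches only the agent, which is the conservative outcome Harries describes.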