Agent Observability Is Moving From Dashboards to Eval-Driven Optimization

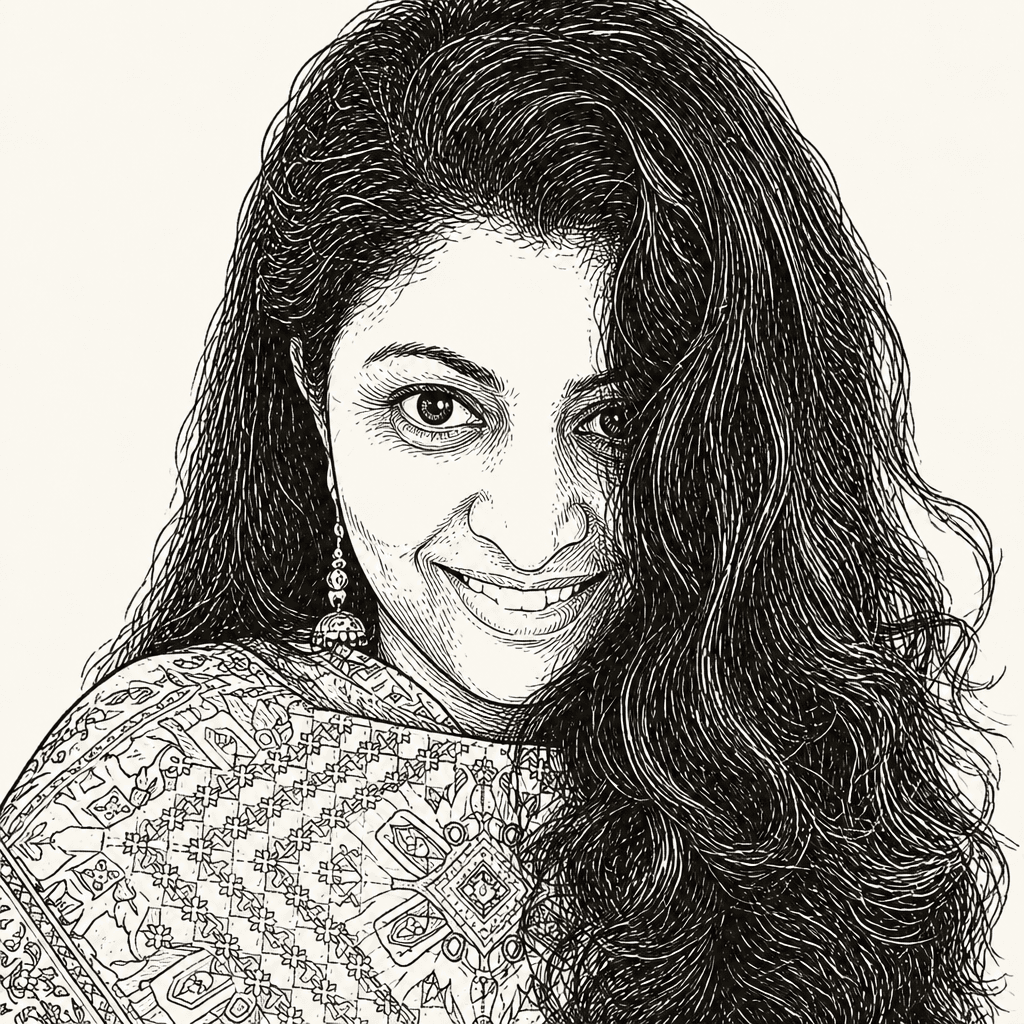

Amy Boyd and Nitya Narasimhan of Microsoft argue that agent observability has to track the widening gap between what an AI agent is meant to do and what it actually does as models, prompts, tools and user behavior change. Their walkthrough of Microsoft Foundry frames observability as a loop of OpenTelemetry tracing, trace-linked evaluations, monitoring, optimization and red teaming. The central demonstration is an observe skill that, from a sparse starting point, can generate an evaluation dataset, run batch tests, optimize prompts, compare versions and roll back to the best-performing agent version.

AI Engineer·May 14, 2026·18 min read
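
The compare-and-roll-back step in the summary above can be pictured with a small sketch. This is not the Foundry observe skill or any Microsoft API: `AgentVersion`, `batch_score`, `pick_best` and the toy `keyword_judge` are hypothetical names invented for illustration, and the dataset is made up. The sketch only assumes that each agent version can be scored against a shared evaluation dataset and that the highest-scoring version is the one to keep.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AgentVersion:
    """Hypothetical record of one agent version (name plus system prompt)."""
    name: str
    prompt: str


# A judge maps (prompt, test case) to a score in [0, 1]. Assumed shape, not a real API.
Judge = Callable[[str, dict], float]


def batch_score(version: AgentVersion, dataset: list[dict], judge: Judge) -> float:
    """Run every test case against one version and return the mean score."""
    scores = [judge(version.prompt, case) for case in dataset]
    return sum(scores) / len(scores)


def pick_best(versions: list[AgentVersion], dataset: list[dict], judge: Judge) -> AgentVersion:
    """Compare versions on the same dataset and return the highest scorer."""
    return max(versions, key=lambda v: batch_score(v, dataset, judge))


def keyword_judge(prompt: str, case: dict) -> float:
    """Toy stand-in for an evaluator: 1.0 if the prompt covers the required topic."""
    return 1.0 if case["must_mention"] in prompt.lower() else 0.0


# Made-up evaluation dataset and version history for illustration only.
dataset = [{"must_mention": "refund"}, {"must_mention": "cite"}]
versions = [
    AgentVersion("v1", "You are a helpful support agent."),
    AgentVersion("v2", "You are a support agent. Explain refund policy and cite sources."),
    AgentVersion("v3", "You are a support agent. Explain refund policy."),
]

latest = versions[-1]
best = pick_best(versions, dataset, keyword_judge)

# "Roll back" here just means deploying an earlier version when it scores higher.
deployed = best if best != latest else latest
```

In this toy run the latest version regresses on one test case, so the earlier version is selected for deployment. A real setup would replace `keyword_judge` with trace-linked evaluators and would score actual agent responses, not prompt text.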