Pretraining and Attention Infrastructure Made Vision Transformers Practical

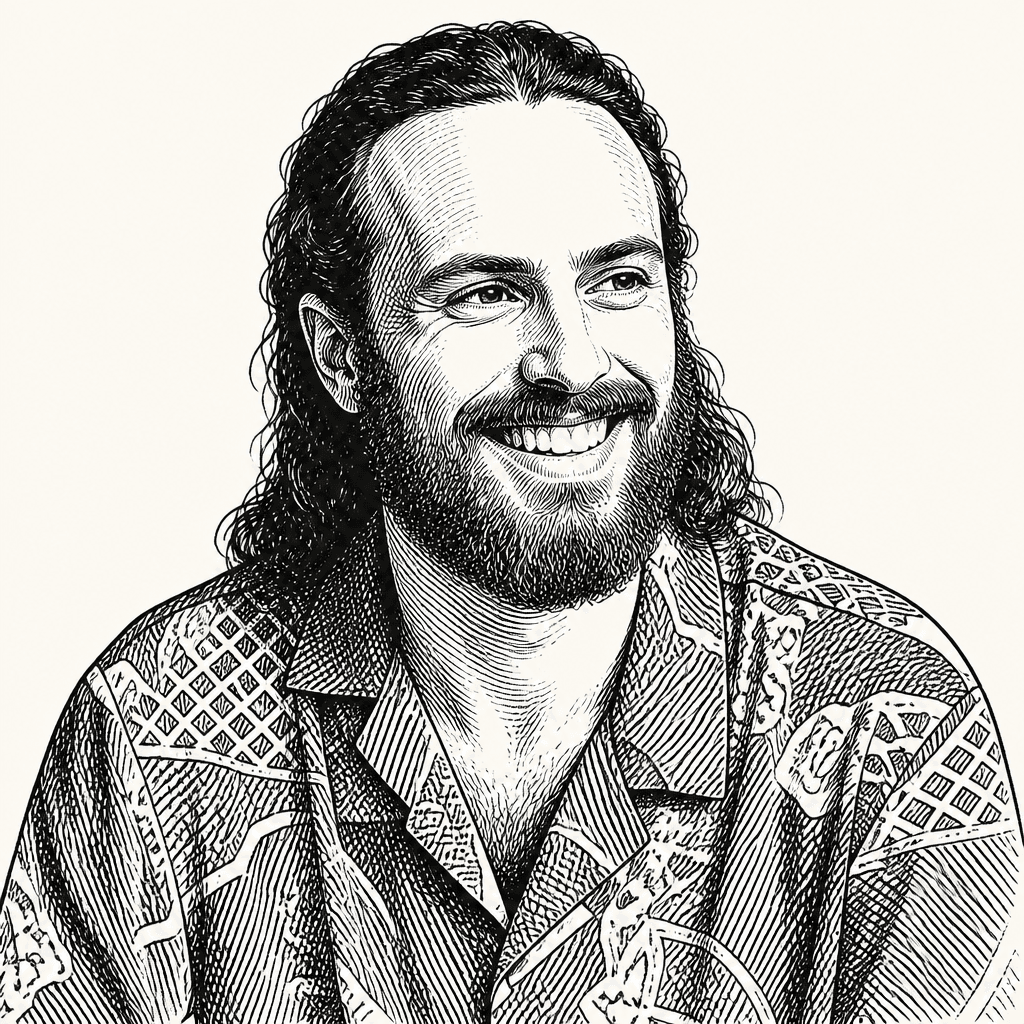

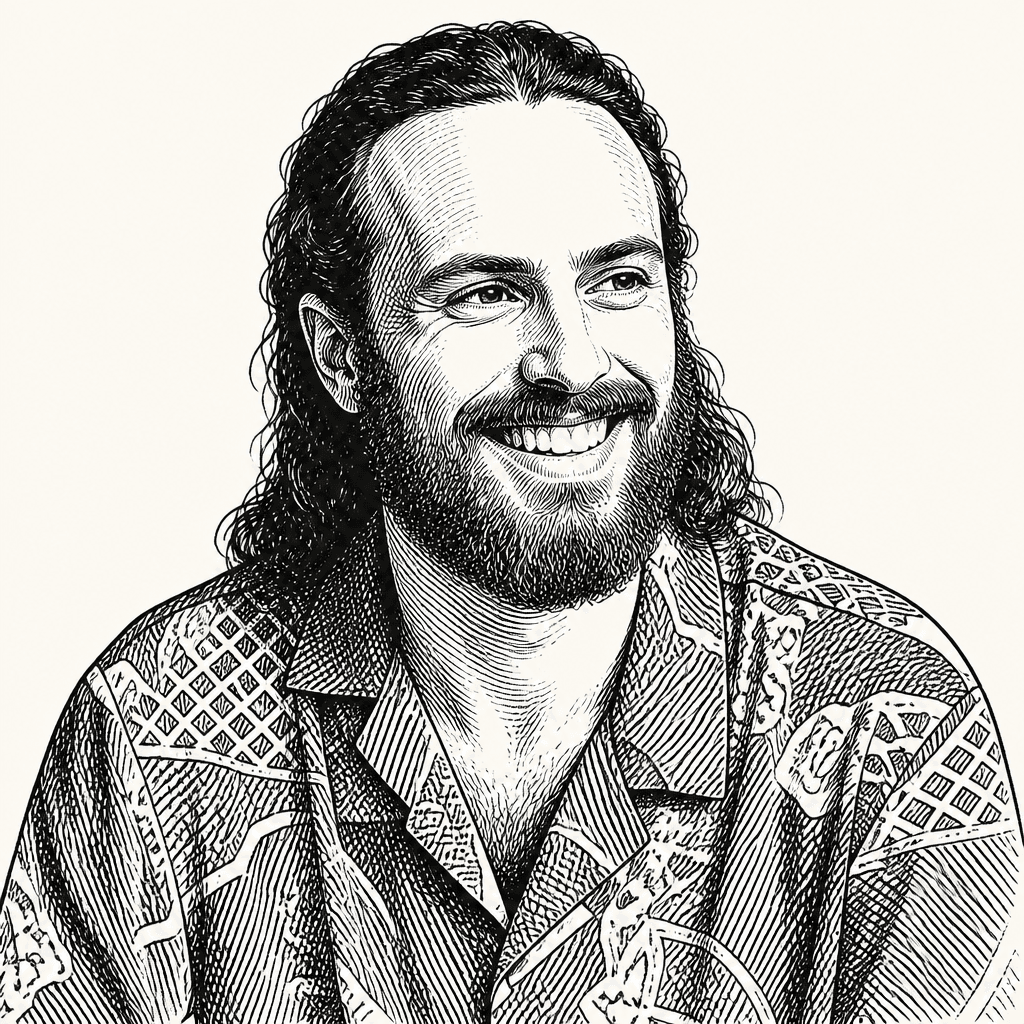

Isaac Robinson of Roboflow argues that transformers overtook convolutional networks in vision not because images stopped needing visual structure, but because that structure moved from hand-built architecture into pretraining, scaling and tooling. In his account, ViT-style models initially lacked the inductive biases and efficiency that made CNNs dominant; self-supervised vision pretraining and attention infrastructure borrowed from the LLM world then made the simpler architecture practical. Robinson frames the next problem as deployment: turning large foundation backbones into model families that can meet real latency, cost and hardware constraints.

AI Engineer·May 8, 2026·10 min read