Cerebras IPO Puts a Public Price on Fast AI Inference

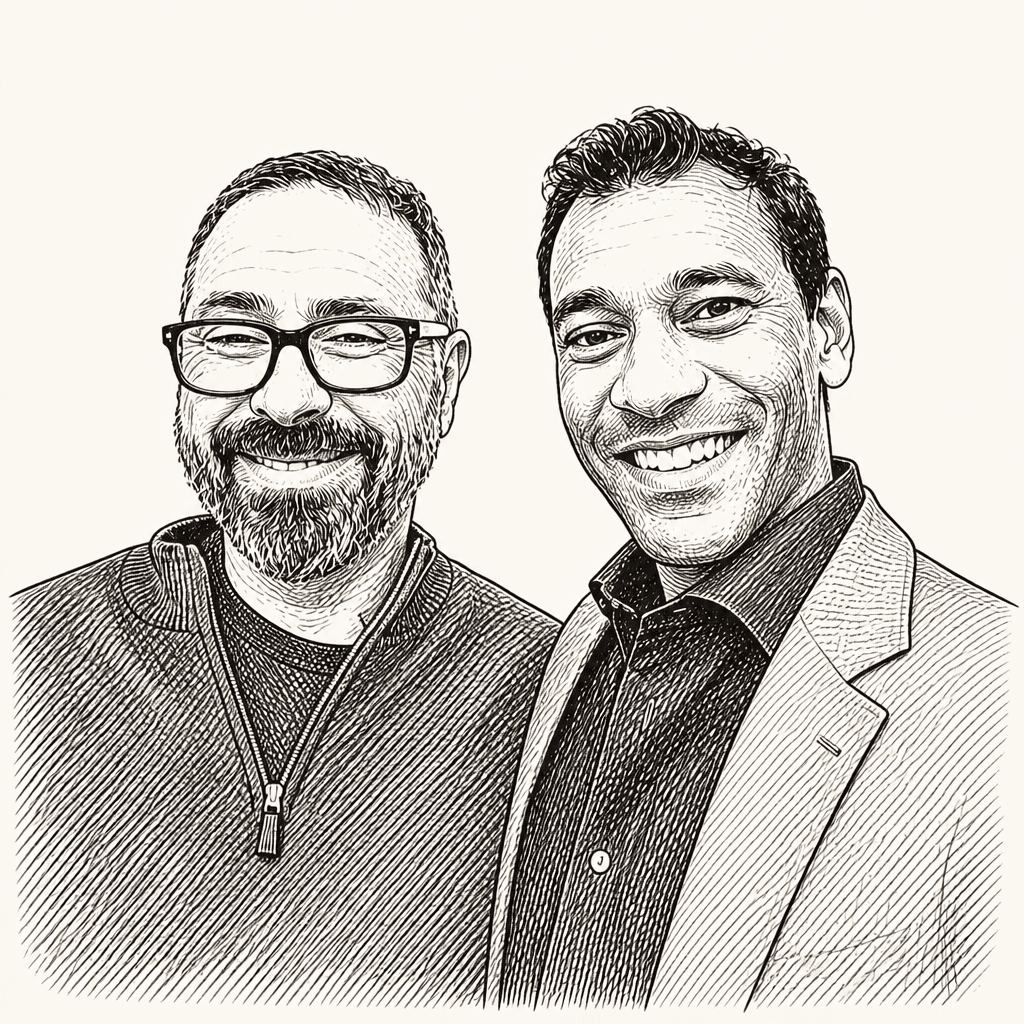

TBPN’s John Coogan and Jordi Hays use Cerebras’s first day as a public company to frame a narrower AI hardware argument: the market is beginning to price low-latency inference as a product in its own right. Cerebras founder Andrew Feldman argues that fast inference will eventually consume demand for slow AI responses, while SemiAnalysis’s Doug O’Laughlin cautions that the company’s wafer-scale SRAM architecture may be limited by memory scaling and model size. The result is a public-market test of whether owning a valuable slice of the AI compute stack is enough.

TBPN · May 14, 2026 · 33 min read