DeepSeek V4 Claims Frontier-Adjacent Open Weights With One-Million-Token Context

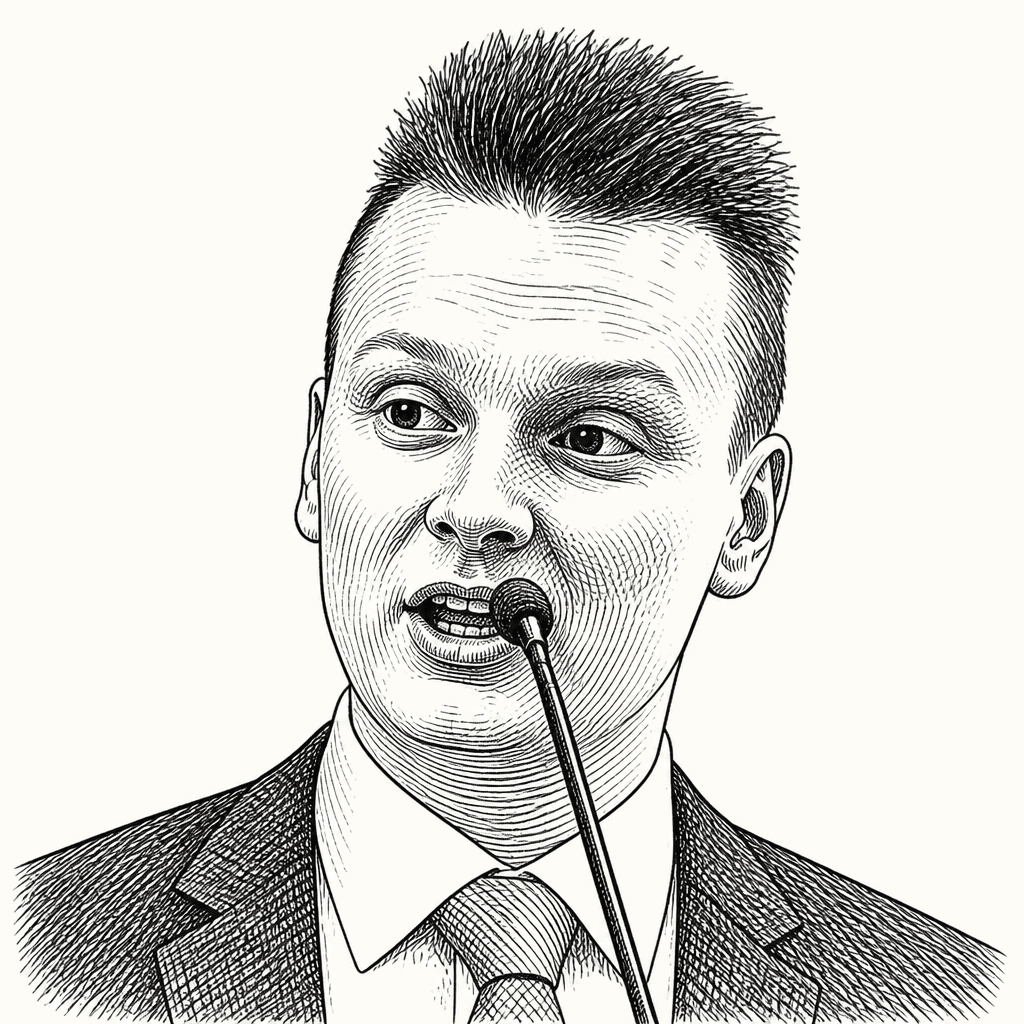

Károly Zsolnai-Fehér of Two Minute Papers argues that DeepSeek V4 Preview is a consequential open-weight AI release because it pairs frontier-adjacent benchmark results with a reported one-million-token text context window and sharply lower long-context memory costs. His case rests less on outright benchmark dominance than on access economics: a freely self-hostable model appears close enough to recent closed frontier systems to change what developers can afford to use. He also stresses the limits: DeepSeek V4 is text-only, degrades near the edge of its context window, and still needs serious hardware at full scale.

The open-weight claim is context, not just size

Károly Zsolnai-Fehér presents DeepSeek V4 Preview as an unusually consequential open-weight release: two mixture-of-experts models, a 58-page technical report, API access, web access, and open weights. The striking specification is not only that DeepSeek-V4-Pro has 1.6T total parameters with 49B active parameters, or that DeepSeek-V4-Flash has 284B total parameters with 13B active parameters. It is that both are presented as supporting a one-million-token context window.

Zsolnai-Fehér compares that to Google’s Gemini, where long context was a flagship feature not long ago. His framing is direct: an open-weight system now claims the ability to “inhale about 1,500 pages of dense documentation.” DeepSeek’s own release materials describe the Pro model as rivaling top closed-source systems, while the Flash model is positioned as the faster and cheaper option.
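The “1,500 pages” figure is consistent with a back-of-the-envelope conversion. A minimal sketch, assuming about 0.75 English words per token and about 500 words per dense page. Both ratios are common rules of thumb, not numbers from DeepSeek or from the video.

```python
# Rough conversion from a token budget to printed pages.
# ASSUMPTIONS: both ratios are rules of thumb for English prose,
# not figures published by DeepSeek.
WORDS_PER_TOKEN = 0.75  # assumed average words per token
WORDS_PER_PAGE = 500    # assumed dense, single-spaced page


def tokens_to_pages(tokens: int) -> float:
    """Estimate how many pages of text fit in a given number of tokens."""
    return tokens * WORDS_PER_TOKEN / WORDS_PER_PAGE


pages = tokens_to_pages(1_000_000)
print(f"{pages:,.0f} pages")
```

Different tokenizers and languages shift the words-per-token ratio, so the page count is an order-of-magnitude guide rather than a specification.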

| Model | Total params | Active params | Pre-training tokens | Context length | Mode |
|---|---|---|---|---|---|
| DeepSeek-V4-Pro | 1.6T | 49B | 33T | 1M | Expert |
| DeepSeek-V4-Flash | 284B | 13B | 32T | 1M | Instant |
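The gap between total and active parameters comes from the mixture-of-experts design: a router sends each token to only a few experts, so most weights sit idle for any given token. The sketch below computes the active fraction from the table and shows a generic top-k softmax router. The router is a textbook stand-in; DeepSeek’s actual expert count, shared experts, and gating function are not described here, and the eight experts in the example are hypothetical.

```python
import math


def top_k_route(router_logits: list[float], k: int) -> list[tuple[int, float]]:
    """Pick the k highest-scoring experts and softmax-normalize their weights.

    Generic top-k gating (an assumption, not DeepSeek's documented router):
    only the selected experts run for this token.
    """
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    m = max(router_logits[i] for i in top)          # subtract max for numerical stability
    exps = [math.exp(router_logits[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


# Active-to-total ratios taken from the table (billions of parameters).
for name, total_b, active_b in [("V4-Pro", 1600, 49), ("V4-Flash", 284, 13)]:
    print(f"{name}: {active_b / total_b:.1%} of parameters active per token")

# Toy router over 8 hypothetical experts, keeping the top 2.
print(top_k_route([0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.3, 0.2], k=2))
```

By this arithmetic, roughly 3% of V4-Pro’s weights and under 5% of V4-Flash’s weights participate in each token, which is why per-token compute is far below what the total parameter count suggests.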

On DeepSeek’s benchmark chart, V4-Pro-Max is placed beside Claude Opus 4.6 Max, GPT-5.4 xHigh, and Gemini 3.1 Pro High. The results vary by task: DeepSeek is shown ahead on Apex Shortlist and Codeforces, tied or near-tied on SWE Verified, behind Gemini on SimpleQA and HLE, and behind GPT on Terminal Bench 2.0 and Toolathlon. Zsolnai-Fehér’s read is that the Pro model roughly matches many-billion-dollar frontier models from only months earlier.

The important claim is not that DeepSeek wins every benchmark. It is that a freely available open-weight model is now close enough to recent closed frontier systems, with a very long text context window, that the economics of access look different.

DeepSeek’s efficiency story is compression

The technical explanation Károly Zsolnai-Fehér gives is organized around the KV cache: the stored key and value vectors for every token the model has already processed, including the prompt and any documents, so that attention over earlier context does not have to be recomputed for each new token. He is careful to say this is KV cache compression, not model compression. The full model still has to be loaded. In his words, this does not mean the full DeepSeek Pro model can be squeezed “onto a toaster.”
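To see why the KV cache matters at long context, it helps to estimate its size for ordinary, uncompressed attention. A minimal sketch, assuming a hypothetical dense-attention model (60 layers, 8 key/value heads, head dimension 128, 2-byte BF16 values). These dimensions are illustrative only and are NOT DeepSeek V4’s architecture.

```python
def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_elem: int = 2) -> int:
    """Memory for the keys and values of every cached token in standard attention.

    The leading factor of 2 counts one key vector and one value vector
    per head, per layer, per token.
    """
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem * seq_len


# HYPOTHETICAL configuration, chosen only to illustrate the scaling.
per_token = kv_cache_bytes(1, n_layers=60, n_kv_heads=8, head_dim=128)
full = kv_cache_bytes(1_000_000, n_layers=60, n_kv_heads=8, head_dim=128)
print(f"{per_token / 1024:.0f} KiB per token, {full / 1e9:.1f} GB at 1M tokens")
```

The cache grows linearly with context length and is paid per concurrent request, on top of the fixed cost of loading the weights. That is why shrinking it changes long-context economics even though the model itself stays the same size.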

DeepSeek’s paper, as shown, says that in a one-million-token context setting, DeepSeek-V4-Pro requires 27% of the single-token inference FLOPs and 10% of the KV cache compared with DeepSeek-V3.2. A DeepSeek chart labels V4-Pro as 3.7x lower in single-token FLOPs than V3.2 and V4-Flash as 9.8x lower. Another chart labels accumulated KV cache as 9.5x smaller for V4-Pro and 13.7x smaller for V4-Flash.
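The percentages in the paper and the multiplier labels on the charts describe the same comparisons from two directions. A quick check using only the numbers quoted above shows that they agree after rounding:

```python
def fraction_to_factor(fraction: float) -> float:
    """Turn 'X of the baseline' (as a fraction) into 'N times lower'."""
    return 1.0 / fraction


# Figures quoted from DeepSeek's paper and charts (V4-Pro vs V3.2 at 1M tokens).
print(f"27% of V3.2 FLOPs     -> {fraction_to_factor(0.27):.1f}x lower")
print(f"10% of V3.2 KV cache  -> {fraction_to_factor(0.10):.1f}x smaller")
print(f"9.5x smaller KV cache -> {1 / 9.5:.1%} of V3.2")
```

The 27% figure corresponds to the chart’s 3.7x label, and the 9.5x chart label works out to about 10.5%, which the paper rounds to 10%.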

Zsolnai-Fehér explains the compression stack with book metaphors. Token-level compression is like compressing each paragraph into one sentence: the book remains, but search becomes faster. Heavily Compressed Attention is closer to a table of contents, giving the model a high-level view of the whole structure. The paper excerpt shown describes a 128-to-1 compression rate for this layer. Compressed Sparse Attention is compared to a book index: if the model is looking for “a fight,” it does not need to reread everything; it needs a shortlist of relevant locations.
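The “summary” and “index” ideas can be illustrated with a toy: pool blocks of key vectors into one summary each, then score the summaries against a query and keep only the best blocks. A minimal sketch under stated assumptions. Mean pooling and dot-product top-k are generic stand-ins, not DeepSeek’s learned compressors or its actual selection rule. The 4-to-1 rate is hypothetical and much smaller than the 128-to-1 rate in the paper excerpt, so the example stays readable.

```python
def compress_blocks(keys: list[list[float]], rate: int) -> list[list[float]]:
    """Mean-pool every `rate` consecutive key vectors into one summary vector.

    Stand-in for a learned compressor ("one sentence per paragraph").
    """
    out = []
    for start in range(0, len(keys), rate):
        block = keys[start:start + rate]
        dim = len(block[0])
        out.append([sum(v[d] for v in block) / len(block) for d in range(dim)])
    return out


def top_k_blocks(query: list[float], summaries: list[list[float]], k: int) -> list[int]:
    """Score each compressed block against the query and keep the k best (the "index")."""
    scores = [sum(q * s for q, s in zip(query, summ)) for summ in summaries]
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]


# 16 toy 2-D keys; tokens 8..11 are "about a fight" (large second coordinate).
keys = [[1.0, 0.0]] * 16
keys[8:12] = [[0.0, 5.0]] * 4
summaries = compress_blocks(keys, rate=4)           # 16 tokens -> 4 summaries
chosen = top_k_blocks([0.0, 1.0], summaries, k=1)   # block 2 wins
# Only tokens inside the chosen blocks are revisited at full resolution.
selected_tokens = [t for b in chosen for t in range(b * 4, min((b + 1) * 4, len(keys)))]
print(len(summaries), chosen, selected_tokens)
```

The query scans four summaries instead of sixteen keys and then reads only the four tokens in the block that matched, which is the “shortlist of relevant locations” behavior the book-index metaphor describes.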

His summary is simple: summaries, structure, and index. Local detail remains available, but the model also gets global context without carrying the full cache burden in the old way.
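One way to picture “local detail plus global context” is to count how many cache entries a single query reads. A minimal sketch, assuming a hypothetical scheme in which a query attends to raw tokens in a recent window plus one summary per 128-token block of older text. The 4,096-token window is an invented illustrative value, and the 128-to-1 rate is borrowed from the paper excerpt. This is not a description of DeepSeek V4’s exact attention layout.

```python
def attended_entries(t: int, window: int, rate: int) -> dict[str, int]:
    """Count cache entries a query at position t reads under a HYPOTHETICAL
    near/far scheme: raw tokens in a local window, plus one summary per
    block of `rate` older tokens (a partial final block still gets a summary)."""
    local = min(window, t + 1)                    # "scan near": full-detail recent tokens
    far_tokens = t + 1 - local                    # tokens older than the window
    summaries = (far_tokens + rate - 1) // rate   # "glance far": ceiling division
    return {"local": local, "summaries": summaries, "full_attention": t + 1}


print(attended_entries(t=999_999, window=4096, rate=128))
```

Under these assumptions, the last token of a one-million-token input reads about 11,900 entries instead of one million, while the most recent 4,096 tokens remain available at full detail.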

The long-context evidence is promising, but not unlimited

Károly Zsolnai-Fehér treats the long-context tests as the point where the compression claim has to earn credibility. The test shown is MRCR 8-needle, where eight facts are hidden inside increasingly long inputs. DeepSeek’s chart shows both Pro and Flash holding high scores across shorter and mid-length contexts, then degrading as input approaches the one-million-token limit.
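The general shape of a multi-needle test is to hide several facts at random positions in a long input and measure how many the model recovers. A minimal sketch of that idea. The actual MRCR benchmark defines its own task format and grading, which this does not reproduce. The filler text, needle wording, and simple verbatim-match scorer are all hypothetical.

```python
import random


def build_haystack(n_filler: int, needles: list[str], seed: int = 0) -> list[str]:
    """Scatter needle sentences at random positions among filler sentences."""
    rng = random.Random(seed)
    doc = [f"Filler sentence {i}." for i in range(n_filler)]
    for needle in needles:
        doc.insert(rng.randrange(len(doc) + 1), needle)
    return doc


def recall_score(answer: str, expected_values: list[str]) -> float:
    """Fraction of hidden values that appear verbatim in the model's answer."""
    return sum(v in answer for v in expected_values) / len(expected_values)


values = [f"code-{i:03d}" for i in range(8)]           # 8 hidden facts
needles = [f"Remember: secret #{i} is {v}." for i, v in enumerate(values)]
doc = build_haystack(n_filler=1000, needles=needles)
# Pretend a model answered with 6 of the 8 values.
print(len(doc), recall_score(" ".join(values[:6]), values))
```

Scaling `n_filler` up while keeping eight needles is what makes the task harder: the facts stay the same, but they are spread across more context.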

| Input tokens | DeepSeek-V4-Pro-Max | DeepSeek-V4-Flash-Max |
|---|---|---|
| 8k | 0.90 | 0.91 |
| 16k | 0.85 | 0.84 |
| 32k | 0.94 | 0.87 |
| 64k | 0.90 | 0.85 |
| 128k | 0.92 | 0.87 |
| 256k | 0.82 | 0.76 |
| 512k | 0.66 | 0.60 |
| 1024k | 0.59 | 0.49 |

For DeepSeek-V4-Pro-Max, the shown Average MMR values stay between 0.85 and 0.94 through 128k tokens, then fall to 0.82 at 256k, 0.66 at 512k, and 0.59 at 1024k. Flash follows the same shape, falling to 0.49 at 1024k. Zsolnai-Fehér says DeepSeek reports that Pro recalls better than Gemini 3.1 Pro on this test, but he immediately adds the key limitation: as context windows fill up, models start to forget, drift, and hallucinate.
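One way to quantify the drop is to express each score in the MRCR table relative to that model’s 8k result. The choice of 8k as the baseline is an assumption: Pro actually peaks at 32k, so a different baseline would give slightly different percentages.

```python
# MRCR 8-needle scores from the table, keyed by input length in thousands of tokens.
pro = {8: 0.90, 16: 0.85, 32: 0.94, 64: 0.90, 128: 0.92, 256: 0.82, 512: 0.66, 1024: 0.59}
flash = {8: 0.91, 16: 0.84, 32: 0.87, 64: 0.85, 128: 0.87, 256: 0.76, 512: 0.60, 1024: 0.49}


def retention(scores: dict[int, float], baseline_k: int = 8) -> dict[int, float]:
    """Each score as a fraction of the score at the baseline context length."""
    base = scores[baseline_k]
    return {k: round(v / base, 2) for k, v in scores.items()}


print("Pro   at 1M:", retention(pro)[1024])
print("Flash at 1M:", retention(flash)[1024])
```

Measured this way, Pro keeps about two-thirds of its short-context score at one million tokens, and Flash keeps a little over half.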

DeepSeek’s broader tables show V4 improving over V3.2 on many knowledge and reasoning tests despite heavier compression. Zsolnai-Fehér’s point is that the compression does not appear, in the shown results, to destroy capability in the way one might expect.

Coding and pricing make the release feel practical

The demonstrations Károly Zsolnai-Fehér shows are mostly small programs labeled “Coded via DeepSeek”: grid games, water simulations, 3D browser scenes, a platform runner, and a simple path-tracing scene. He says the model is “fantastic at coding,” especially for producing JavaScript that can be pasted into a website and run. In some cases, he says, programs can run inside the DeepSeek interface with one click.

His own test is in an area he knows: light transport and ray tracing. The shown result is a Cornell-box-like scene with colored walls and spheres, progressively refined by samples. His verdict is positive for the basic task, but qualified: it still failed to properly implement more advanced algorithms. He presents that as a boundary of the current model, not a reason to dismiss it.

The pricing claim is aggressive. Zsolnai-Fehér says DeepSeek is available at the price of free if users can self-host it. For hosted online use, he says it is extremely cheap: “Soon, intelligence will get too cheap to meter.” A tweet shown in the piece claims 831,962,136 tokens consumed in under two days for about $10, with an on-screen note that this reflected discounted pricing and now costs more, though still much cheaper than frontier models.

He then gives ranges: with discounts, pricing can be about thirty times cheaper than Anthropic’s Claude; without discounts, he says it can still be eight to twenty times cheaper.
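The pricing claims reduce to simple arithmetic. The sketch below derives a per-million-token rate from the tweet’s figures, which reflect discounted pricing at the time and not a current list price. It then applies the quoted “times cheaper” ranges to a hypothetical $300 bill on a more expensive model. The $300 baseline is invented for illustration.

```python
TOKENS = 831_962_136   # tokens reported in the tweet
COST_USD = 10.0        # "about $10", under the discounted pricing of the time

per_million = COST_USD / (TOKENS / 1_000_000)
print(f"about ${per_million:.4f} per million tokens")


def equivalent_budget(baseline_cost: float, times_cheaper: float) -> float:
    """Cost of the same workload on a provider that is `times_cheaper` times cheaper."""
    return baseline_cost / times_cheaper


# HYPOTHETICAL $300 bill on a pricier model, under the quoted ratios.
for factor in (30, 20, 8):
    print(f"{factor}x cheaper -> ${equivalent_budget(300.0, factor):.2f}")
```

The per-million figure only describes that one workload under that discount; real bills depend on the input/output token mix and on which pricing tier applies.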

The limitations are not minor

Károly Zsolnai-Fehér explicitly warns against the version of this story that media headlines tend to produce. The first limitation is modality. DeepSeek V4, as described here, is unimodal. It can handle text, but not images or audio. His phrase is that it is “blind and deaf,” so the million-token window should not be read as “10 hours of audio” or “a full feature-length movie.”

The second limitation is scientific understanding. A highlighted excerpt from the paper says that two techniques maintain training stability, but that a comprehensive theoretical understanding of their mechanisms “remains an open question for now.” Zsolnai-Fehér says this is a common situation in research and praises the transparency, but he still treats it as a limitation: the system works in ways even its creators do not fully explain.

The third limitation is degradation near the end of the context window. The MRCR chart is not flat. At the edge of one million tokens, the model’s recall is materially worse than at shorter contexts. His warning is practical: do not get oversold.

The final analogy is about why the architecture is interesting. Walking through a forest, a person can look near to avoid tripping or far to enjoy the view. The better strategy is to do both: scan near, glance far. Zsolnai-Fehér argues that DeepSeek’s approach follows the same pattern: local detail plus global context, with compression making both affordable enough to use.