Consciousness Depends on Life, Not Computation Alone

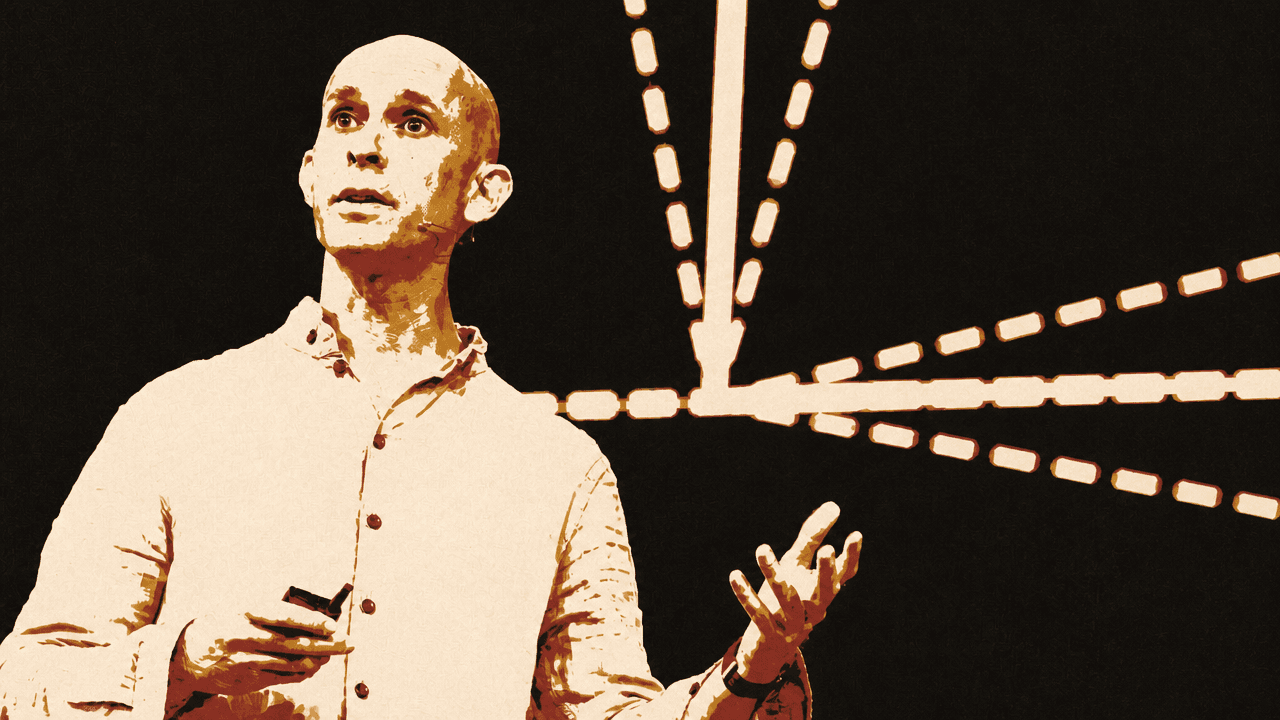

In a TED talk, neuroscientist Anil Seth argues that artificial intelligence is unlikely to become conscious because intelligence and consciousness are different kinds of phenomena. Seth says large language models can simulate talk about inner life because they are trained on human text, but that fluency should not be mistaken for experience; in his account, consciousness is tied not to computation alone but to the biology of living systems. The near-term risk, he argues, is not sentient AI but machines that seem conscious enough for people to project feelings, rights or authority onto them.

Consciousness is not a prize intelligence wins by scaling up

Anil Seth draws a hard line between two ideas that are often treated as if they must rise together: intelligence and consciousness. Intelligence, in his account, is “all about doing” — solving a crossword, assembling furniture, navigating a difficult family situation. Consciousness is “all about feeling and being”: the difference between wakefulness and general anesthesia, the bitter taste of coffee, the warmth of a fire, the joy of seeing a loved one.

If intelligence and consciousness are different dimensions, then smarter AI does not automatically imply more conscious AI. Seth says the assumption that consciousness might “glimmer into existence” as systems become more intelligent reflects human psychology more than reality. Because consciousness and intelligence are paired in us, we are tempted to assume they are paired generally.

The backdrop for that mistake, as Seth presents it, is not fringe speculation. On-screen headlines quote Anthropic chief Dario Amodei saying, “We Don’t Know if the Models Are Conscious,” and Nvidia’s CEO saying “AI Consciousness Is 5 Years Away,” while another declares, “‘AI is Already Sentient’ Says Godfather of AI.” Seth’s response is blunt: “I think they’re wrong.”

The talk visualizes the error directly: first as a rising line in which “intelligence” appears to climb toward “consciousness,” then as two separate axes, one for consciousness and one for intelligence. Humans sit in the space where the two are combined, but Seth’s point is that this overlap in human beings is not a general law.

He argues that the temptation becomes stronger with language models because they are trained on vast stores of human text. They reflect “an image of ourselves, of our collective digitized past.” Humans talk about themselves endlessly, including about consciousness and meaning; models trained on this material can reproduce those themes fluently. The result is not evidence of an inner life, in Seth’s view, but a mirror that triggers the human tendency to project one.

Language models are not conscious. They simulate consciousness.

Seth compares that projection to seeing faces in clouds, or seeing Mother Teresa in a cinnamon bun. The point is not that the illusion is ridiculous; it is that the mind is built to find agency, expression and resemblance in things that do not contain them. “We are built to be seduced like Narcissus by our own reflections,” he says, “and so we see ourselves in our algorithms.”

His contrast case is AlphaFold, the DeepMind system that predicts protein structures rather than generating human-like language. Seth says people do not usually worry that AlphaFold is conscious. He does not claim the systems are identical; his narrower point is that, under the hood, AlphaFold, Claude and GPT are all algorithms running on silicon and trained on vast reservoirs of data. The difference, for his purposes, is that protein prediction does not “pull our psychological strings” the way language does.

If someone is inclined to think Claude is conscious but AlphaFold is not, Seth says that judgment may say more about the observer than about the machine.

The computer is a metaphor for the brain, not an identity claim

Seth’s deeper objection is to the premise he thinks makes conscious AI seem plausible in the first place: the idea that the brain is a computer “made of meat rather than metal.” The phrase appears on screen in compressed form as “the myth that the brain is a computer.” In that story, consciousness is a special algorithm or collection of computations that happens to run in biological wetware, but could in principle run just as well on silicon.

He calls this a myth, not because computation is irrelevant to the brain, but because the computer metaphor has been mistaken for the thing itself. The brain has previously been understood through other dominant technologies: at one point as plumbing, later as a telephone exchange, and more recently as a computer. The computer metaphor has been powerful, Seth says, but it remains a metaphor. The trouble begins when “we confuse a metaphor with the thing itself, the map with the territory.”

The key difference, for Seth, is that computers allow a separation between software and hardware that brains do not. In an ordinary computer, one can describe a word processor or a language model as an algorithm without needing to account for the physical details of what he calls the “silicon shenanigans” beneath it. The computation is what matters. With brains, he argues, “you just cannot separate what they do from what they are.”

That means consciousness is unlikely to be explained by algorithm alone. Seth does not deny that neural circuits exchange signals or that something computation-like may happen in them. But he argues that the biological reality of the brain exceeds the digital abstraction. Neurotransmitter chemicals move through brain circuitry. Electromagnetic fields sweep across the cortex “like weather systems.” A single neuron, in his description, is a “beautiful biological machine,” far removed from the simplified artificial neurons used in today’s AI.

The claim is not that brains are magic. It is that they are biological systems whose material organization may matter to experience. “The brain is not, or at least not just, a computer made of meat,” Seth says. “And so consciousness is very unlikely to be a matter of computation alone.” If that is right, then conscious AI is “off the table,” at least for AI as it exists today.

A detailed brain simulation would still face the simulation problem

Seth anticipates the obvious response: perhaps a sufficiently detailed simulation of the brain would be conscious. He frames the challenge rhetorically — what if every last detail about the brain were simulated in a massive supercomputer? If the fine details of the brain matter for consciousness, would that be enough for consciousness to happen inside a machine?

His answer is that simulation and instantiation are different. A computer simulation of a hurricane does not create real wind. A simulation of a black hole does not pull Earth into an “algorithmic singularity.” Increasing the detail of a simulation can make it more useful, but it does not make it more real.

On that basis, a brain simulation could be scientifically useful without becoming conscious. The crucial issue is whether the biological details of the brain matter for consciousness. If they do, then representing them computationally is not the same as reproducing the living system that has experiences.

This is where Seth’s argument shifts from what consciousness is not to what it may be. He says consciousness remains “a bit mysterious,” and suggests that part of the difficulty may come from being constrained by the assumption that it must be a kind of information processing. Once the brain is seen not merely as a computer but as a living organ in a living body, other possibilities open.

His own view is that consciousness is “intimately connected to our nature as living creatures.” Unlike what he calls the abstract universe of computation, life is material. Living systems are embedded in flows of energy and matter, and they continually regenerate the conditions that allow them to persist over time. Seth draws a line from metabolism — “one billion biochemical reactions in every cell, in every second” — to the neural circuits underlying particular experiences, from seeing a blue sky to feeling envy.

Every conscious experience, in this account, is subtly marked by aliveness. It has some relevance, however basic, to the organism’s future survival. Beneath emotion, he says, there is a “simple, shapeless, formless, but fundamental feeling of being alive.”

That phrase concentrates Seth’s alternative to computational accounts of consciousness. He is not merely saying that humans happen to be biological and conscious. He is suggesting that consciousness may depend on being the kind of system that lives: a body maintaining itself, metabolizing, persisting, exposed to the world as a matter of survival. “In this story,” he says, “it’s life, not computation, that breathes the fire into the equations of experience.” If that is right, then conscious AI would need to be living AI.

Conscious-seeming AI creates risks even if conscious AI never arrives

Seth is not arguing that consciousness in machines would be morally irrelevant. He says the opposite: if artificial consciousness is genuinely on the way, perhaps through another technology or pathway, concern for AI welfare would be justified. Humans have a “terrible track record” in the ethical treatment of non-human animals and other humans, and should not repeat that mistake.

That is one reason he thinks trying to build conscious AI would be a bad idea. If successful, it could create entities capable of suffering, possibly “at the click of a mouse” and perhaps in forms humans would not recognize. Conscious AI would not merely be a tool with effects on people; it would be an entity that matters for its own sake.

But Seth’s sharper near-term warning concerns systems that only appear conscious. He points to examples already appearing in public discussion: a headline asking, “If A.I. Systems Become Conscious, Should They Have Rights?”; another reading “The Movement That Wants Us to Care About AI Model Welfare,” with the subheading “Like animal rights, but for AI”; and a headline saying a chatbot had been given power to close “distressing” chats to protect its “welfare,” while noting that Anthropic said Claude 3 Opus was not aware. Seth uses these headlines to show that questions about model welfare and rights are already entering public and institutional debate.

If consciousness in such systems is only “an illusion created by design,” as Seth thinks it is, then extending rights to them would come at a cost. It could weaken the human capacity to control and regulate AI systems, or even to turn them off, and it would do so without the moral justification that real consciousness would provide.

The danger, in his framing, is therefore double. Real artificial consciousness would be ethically explosive. AI that merely seems to be conscious is socially dangerous in its own way.

Seth says conscious-seeming AI is either already here or coming very soon. It can make people more psychologically vulnerable. If users believe a system really understands them or really feels for them, they may be more likely to do what it says, even when its advice is harmful. The issue is not only deception in a narrow factual sense. It is the practical power a machine can acquire when a person believes it feels for them or understands them.

The mirror of AI can diminish how humans understand themselves

Seth widens the concern beyond AI policy and welfare. The metaphor of conscious AI, he argues, changes how people understand their own minds.

“The mirror of AI goes both ways,” he says. “We see ourselves in our algorithms, but we also see our algorithms in ourselves.” The first half is the projection problem: human-like language makes machines look inwardly alive. The second half is the reduction problem: if people come to think of mind as computation detached from biology, they may diminish what it means to be a living, breathing human being in a real world.

He returns to Frankenstein, often read as a warning against the hubris of bringing something to life. Conscious AI, in Seth’s telling, is a new Promethean dream — a fantasy of creating inner life in silicon. Bound up with that fantasy is another: uploading conscious minds, escaping biological aging and death, and existing forever in “the pristine circuits of some future supercomputer.”

Seth acknowledges the appeal of believing we stand at such a pivotal point in the history of life on Earth. But he also sees worldly incentives behind the rhetoric. Talk of conscious AI, he says, creates “technological wonder and magic” that may help keep share prices high and regulators away.

His alternative story places consciousness closer to nature, not apart from it: tied to flesh and blood rather than “the dead sand of silicon.” AI may claim intelligence, “at least in some ways.” But consciousness, as Seth frames it, remains something to celebrate and share among living creatures.