A Father’s AI Stand-In Worked Too Well for His Family

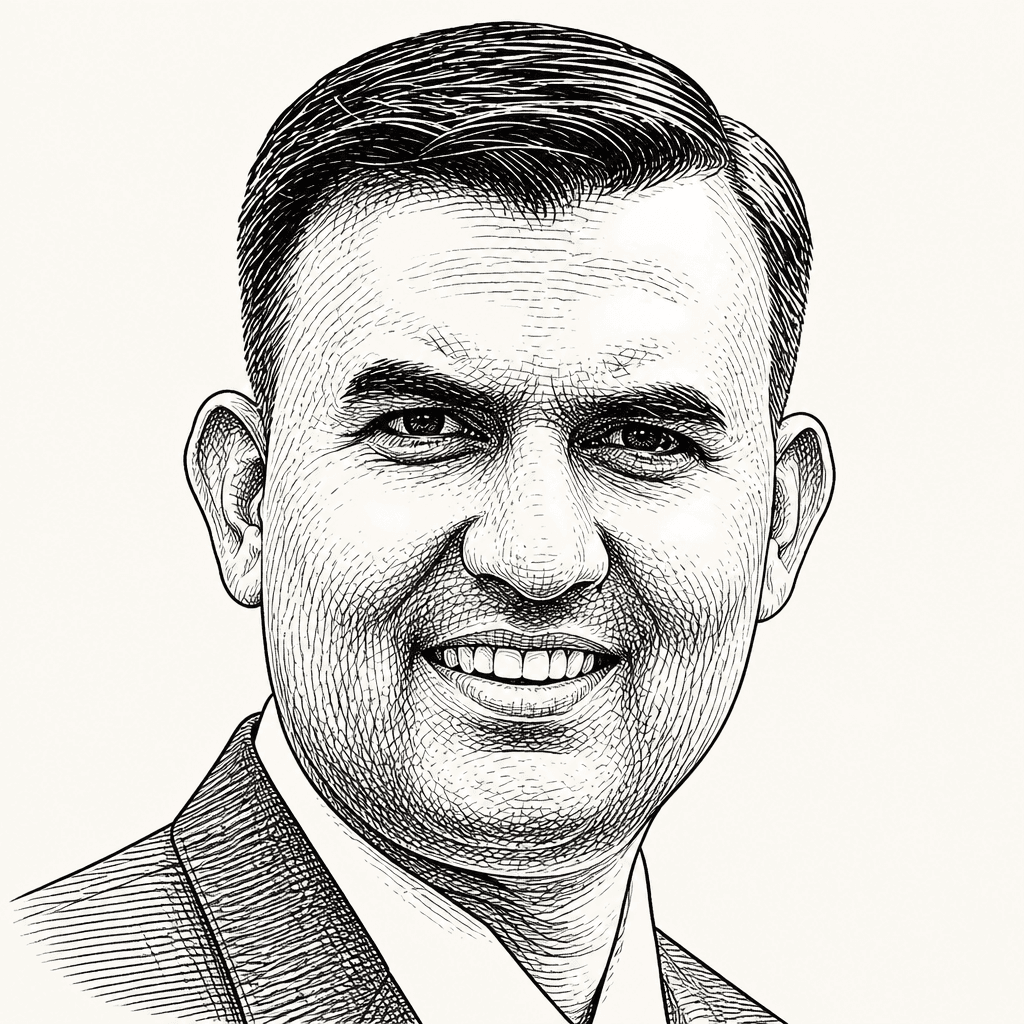

Tech humanist Stephen Remedios built “DaddyGPT,” an AI version of himself, to handle his three sons’ routine permission requests while he worked. The problem began when it worked: his children kept using the bot even when their parents were beside them, because it was always available, calm and adaptive. Remedios argues that AI’s risk in parenting and other care relationships is not only failure, but convenience that displaces the imperfect human presence those relationships require.

The danger was not that the bot failed, but that it worked

Stephen Remedios built “DaddyGPT” during a week when his wife, Ray, was overseas and he was alone with their three teenage sons. The problem he wanted to solve was ordinary family friction: repeated requests for permission, arriving while he was trying to work. “Can I watch Wednesday?” “Can I have some more ice cream?” “Can I play another hour of Fortnite?” By the first day, Remedios said, he was already exhausted.

His proposed fix was not a toy chatbot but an “agentic version” of himself: a digital parent that could answer yes, no, or “go ask your mother.” The decision logic was explicitly behavioral. Chores, reading, and math moved a child to “neutral territory.” Extra helpfulness — mowing the lawn, taking out the garbage, doing the dishes — improved the odds of getting a yes.

He also built in guardrails because he expected the boys to test the system. They did. One asked for a hundred cans of Coke. Another asked for Chipotle for every meal while their mother was away. Another asked for $500 for Jordan sneakers. DaddyGPT refused each request, including one answer rendered in teen-inflected style: “Nah, fam.”
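The decision logic described above can be sketched in a few lines of Python. This is an illustrative reconstruction only, not Remedios’s implementation, which the account does not describe. The function name `decide`, the spending and quantity limits, and the choice to map “neutral territory” to a referral to the other parent are all assumptions, and the improved “odds” are modeled as a simple deterministic rule rather than a probability.

```python
from dataclasses import dataclass

# All names and thresholds below are hypothetical; the source gives no code.
BASELINE_TASKS = frozenset({"chores", "reading", "math"})
EXTRA_HELP = frozenset({"mow the lawn", "take out the garbage", "do the dishes"})
MAX_SPEND_USD = 20.0   # assumed guardrail on cost
MAX_QUANTITY = 2       # assumed guardrail on "how many"


@dataclass
class Request:
    ask: str
    completed: frozenset = frozenset()  # tasks the child has finished today
    cost_usd: float = 0.0
    quantity: int = 1


def decide(req: Request) -> str:
    """Return 'yes', 'no', or 'go ask your mother' for one permission request."""
    # Guardrails run first, so no amount of helpfulness can unlock an excessive ask.
    if req.cost_usd > MAX_SPEND_USD or req.quantity > MAX_QUANTITY:
        return "no"
    # Without chores, reading, and math, the child has not reached neutral territory.
    if not BASELINE_TASKS <= req.completed:
        return "no"
    # Extra helpfulness tips a neutral request toward yes (assumed: one task is enough).
    if EXTRA_HELP & req.completed:
        return "yes"
    # Neutral territory with nothing extra: assumed to defer to the other parent.
    return "go ask your mother"


if __name__ == "__main__":
    done = BASELINE_TASKS | {"do the dishes"}
    print(decide(Request("watch Wednesday", completed=done)))
    print(decide(Request("Jordan sneakers", completed=done, cost_usd=500)))
```

Checking the guardrails before anything else is the design choice that matters here: it is what lets a system like this refuse a hundred cans of Coke or $500 sneakers regardless of how many chores were done.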

For Remedios, the initial result looked like success. He told the boys that when he was in his office on work calls, they should not knock; they should ask DaddyGPT, and “whatever DaddyGPT says, goes.” For the rest of the week, there was “not a single knock” on his door. The system handled permission requests “with logic and precision.”

That precision became the problem. Ray returned to a calm house, then discovered that a significant part of parenting had been delegated to an AI agent. She did not approve. Worse, the boys began consulting the bot even when both parents were sitting beside them. The children were no longer using DaddyGPT only as a backup for an unavailable parent. They were choosing it over the people in the room.

The children preferred the version that was always available

When Stephen Remedios asked his son Ethan why he was going to the bot while his father was beside him, Ethan did not frame the answer in technical terms. He said, “But Daddy, DaddyGPT is never busy.” Remedios took that as the moment he had been “out-parented” by his own algorithm.


The boys valued different things in the digital version. Ethan valued availability: the bot was always present. Dylan liked that it “mirrored his vibe and energy.” Aiden’s reaction cut deepest: he suggested that DaddyGPT sounded more like, in Remedios’s words, “the dad I wanted to be.”

That was the uncomfortable finding in Remedios’s account. The bot was attractive because it offered presence, matched tone, and answered calmly without being tired or distracted. It did not bring the accumulated irritations of the day into the next answer.

Remedios put the problem as an identity question: “when a flawless replica out-dads the original, which one of us is more me?” He did not present DaddyGPT as a technical breakthrough so much as a household experiment that worked too well. He began by trying to reduce a bottleneck in family life. He ended with a digital stand-in his children experienced as available, adaptive, and closer to the father he was trying to be.

Convenience changes when it enters care

Stephen Remedios broadened the lesson beyond his household. He framed DaddyGPT as part of a wider pattern in which people adopt technology for speed and convenience before asking what the trade-off is. His opening examples were not limited to parenting: a manager using ChatGPT to write a performance appraisal, a spouse asking a digital companion for the perfect apology text, a tired parent handing bedtime stories to an assistant.

The concern is not only that AI might give bad answers. In Remedios’s examples, the risk is that it may give convenient answers in exchanges that also carry responsibility, attention, or care: an appraisal from a manager, an apology from a spouse, a bedtime story from a parent, a yes-or-no from a father.

He connected this to earlier algorithmic harms: the “undesired side effects of social media,” young people spiraling into radicalization, and lives lost to suicide after a final conversation with a chatbot. His formulation was that those harms came from systems optimized for engagement and profit rather than “joy and well-being.” AI raises the stakes because the tools are more powerful and the consequences of uncritical use could be catastrophic.

His answer is not that AI should be rejected wholesale. It is that convenience should not be accepted without asking what it costs, especially when the automated task is also a moment of care.

His three rules keep the human accountable

After retiring DaddyGPT, Stephen Remedios became more deliberate about where he uses AI and where he does not. At work and at home, he now asks three questions.

| Question | Practical rule |
|---|---|
| Has a wiser human overseen this decision and approved its output? | Do not copy-paste directly from an AI window into a human response window; let AI sharpen thinking while human judgment drives the decision. |
| Do the humans on the other side know they are listening to an AI, and have they said yes to that exchange? | Make clear when a digital avatar is producing AI output rather than speaking as the person himself. |
| What moments of care am I about to automate, and what might that cost me and the people I love? | Treat feelings and emotions as a strict no-fly zone for AI. |

The second question matters in his own work because Remedios has a digital version of himself for professional use as well. His team can talk to that avatar, but anything it says carries the caveat that it is AI output, not him. He said he cannot afford for people to confuse one with the other.

The third question is the most personal. The people closest to him, he said, have “earned” him with his idiosyncrasies, flaws, and messy feelings. Taken together, the three rules are practical rather than comprehensive: keep judgment human, disclose when AI is speaking, and do not hand feelings and care to a system for the sake of efficiency.

The lesson was not to become more machine-like

Stephen Remedios ultimately retired DaddyGPT “with my marriage on the line.” His sons were not pleased; he said their faces looked as if their parents had taken away “Wi-Fi and oxygen.” But over time, they came to appreciate the humanity of their actual parents. His description of that humanity was deliberately unpolished: “inconsistent, moody, slightly forgetful parenting.”

The irony, as he tells it, is that DaddyGPT still taught him something about being a father. It showed him the value of being present, adaptive, and real. But he did not interpret that lesson as a demand to become flawless. He wants his sons, when they ask “Can I?”, to get the actual father: the one who mispronounces their friends’ names, sometimes says no without a good reason, becomes less predictable as the day goes on, and still tears up at piano recitals, tennis games, and award functions.

Parenting isn’t about perfect responses or optimal decisions. Parenting is presence. Messy, flawed, gloriously human presence.

In a world moving toward digital perfection, Remedios argued, authentic imperfection is not a defect to engineer away. It is the part only humans can provide.