AI Tools Are Moving Creative and Software Work Toward Specification

TBPN’s discussion uses Debater Center, AI-generated Monet-style clips, Cursor, Figma and a 67-year-old AI founder to question whether tech labels describe what is actually happening underneath. The speakers argue that ranked debate software may need an audience to create the performative pressure people associate with online debate, while AI tools such as Luma and Cursor are shifting creative and technical work from manual execution toward higher-level specification. Their shorter points on Figma and the older founder make the same corrective move: they resist premature obituaries for products, skills and founder archetypes that are still active.

Debate software has to decide whether it is hosting disagreement or performance

Debater Center is presented as ranked social software for argument. The landing page shown on screen calls it “Your platform for ranked debating” and says users can “Compete in 1v1 voice debates to climb the leaderboard.” A second screen makes the competitive frame more explicit: matches, match history, a leaderboard, usernames, ELO, wins, losses, and draws.

The premise, as described by the speakers, is simple: the product pairs a user randomly with another person, gives them a topic, and places them in a room together. The first reaction is not dismissal. One speaker calls it “an interesting idea.” But the critique comes quickly: if the product only gives two strangers a private room, it may be missing the condition that makes online debate feel like debate.

One speaker jokes that the real mechanic is that “whoever makes the other person rage quit wins.” The line is partly a joke about internet argument, but it also exposes the product question underneath. Rage quitting is an understandable win condition if the product is trying to convert argument into a game-like contest. It gives the interaction a visible endpoint. It also implies a version of debate less concerned with persuasion than with escalation, endurance, or getting the other person to break.

When another speaker asks what happens if neither person rage quits, the answer is simply: “Then I don’t know.” That uncertainty matters because the screenshots show a system built around rankings and outcomes. A leaderboard can track wins and losses, but the discussion never explains how Debater Center would judge a substantive debate if neither participant exits. The product is legible as a competitive interface; its evaluative mechanism is left unclear.
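The gap is easy to see in the standard Elo update that leaderboards of this kind commonly use. The sketch below is illustrative only: the discussion does not say how Debater Center computes its ratings, and the K-factor of 32 and the function names are assumptions. Note that the formula takes the match result as an input. It can rank players once someone has decided who won, but it cannot make that decision.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score for player A against player B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, score_a: float, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one match.

    score_a is supplied from outside: 1.0 if A won, 0.5 for a draw, 0.0 if A lost.
    k=32 is an assumed K-factor, not a value taken from Debater Center.
    """
    delta = k * (score_a - expected_score(rating_a, rating_b))
    # Whatever A gains, B loses, so the total rating pool is conserved.
    return rating_a + delta, rating_b - delta


if __name__ == "__main__":
    # Two evenly rated debaters; A is declared the winner by some external judge.
    print(update_elo(1500, 1500, 1.0))  # (1516.0, 1484.0)
    # A draw between evenly rated debaters changes nothing.
    print(update_elo(1500, 1500, 0.5))  # (1500.0, 1500.0)
```

A rage quit is attractive in this framing precisely because it produces an unambiguous `score_a` without anyone having to judge the argument.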

The speakers then separate disagreement from debate. One speaker says he often has one-on-one conversations with friends where they “completely disagree” on a topic and there is no rage. In that setting, he says, “It’s just a conversation.” The rage usually arrives when an audience is present, because people want to look good in front of others. Another speaker immediately names the dynamic: “It’s a performance.”

The rage usually comes when you want to look good in front of the audience.

That distinction is the core of their critique. In their framing, the audience is not merely a distribution channel after the debate happens. It changes the behavior inside the debate. A private room may produce disagreement, but an audience can turn disagreement into a performed contest. The speakers’ vocabulary is audience, performance, rage, and looking good; the product implication they draw is that a ranked debating app may need to think about spectators if it wants the emotional shape of online debate rather than a private argument between strangers.

The proposed fix is an “Omegle type audience”: a rotating or ambient audience that can watch the exchange. The idea is rough, but the reasoning is clear. “Debating in an empty room is no fun,” one speaker says. “I can debate the wall right now.” The line does not mean debate is impossible without spectators. It means the internet version of debate that the speakers are discussing — the ragey, performative, scoreboard-oriented version — depends heavily on being seen.

Gaming becomes the adjacent example. One speaker notes that gaming is “highly rage inducing” and says it fits the same criteria. The comparison is not developed into a full theory of games, but the connection is direct enough: gaming combines competition, performance, and emotional escalation. Debater Center’s ELO and win-loss interface borrows some of that competitive grammar. The speakers’ critique is that the live debate itself may still lack the audience pressure that makes people behave as though something is at stake.

The opening banter matters because the speakers place themselves inside the behavior they are analyzing. They begin by mocking people who spend all day debating online — “the guy tweeting from a hot tub in Dubai” — then admit that they sometimes debate that person and sometimes are that person. “Maybe we’re that guy,” one says. “We are,” the other answers. The criticism is not delivered from outside internet argument culture. It is a product observation from people who recognize the impulse in themselves.

That self-implication keeps the Debater Center critique from becoming a simple complaint about other people’s bad online behavior. The speakers are describing a social mechanic they know from use: the audience changes the stakes, and the desire to perform for that audience changes the tone. A debate platform can make matches, assign topics, and track outcomes, but the speakers’ question is whether the private interaction contains the same social voltage as the public one.

The screenshots reinforce that the product is already borrowing from competitive systems. “Leaderboard,” “ELO,” “W,” “L,” and “D” make argument measurable in the same broad way games and ladders make performance measurable. The missing piece, in the speakers’ view, is not more scoreboard language. It is the social condition that makes people care about the scoreboard in the first place. If nobody is watching, the exchange may feel less like an event and more like a conversation with a stranger.

That is why the wall joke lands as a product critique. “I can debate the wall right now” is not a claim that the software has no value. It is a claim about isolation. Debate, as the speakers are using the word, has a public-facing element. It becomes more than disagreement when there is something to win or lose socially. An empty room can host argument; it may not create the same incentive to posture, escalate, or perform.

Debater Center, as shown, has the surface elements of ranked competition: sign-up, matchmaking, ELO, wins, losses, and draws. The speakers’ objection is that debate is not just a two-player format. It is also a social situation. A disagreement in private can remain a conversation. A disagreement in front of an audience can become a performance. If the product is meant to create the latter, the audience question is not cosmetic in their framing. It is bound up with what kind of behavior the product will actually produce.

The Monet example turns on the difference between making the work and receiving it

The AI art discussion centers on a tweet shown on screen from Min Choi. The visible text reads: “1. Input: 6 Monet’s paintings. 2. Output:” The attached video is labeled “Luma Dream Machine” and shows painterly animated scenes, including a vibrant sunset over water and a blooming flower field. The post display also shows “0:26” and “230.1K views.”

The speakers use the example to separate two questions that often get treated as one: how difficult the work is to make, and what the finished output does to the viewer.

One speaker grants the craft point immediately. An actual Monet painting involves “a harder skill” and “much more skill” than generating an animation from inputs. Monet had to spend the time, develop the style, and physically execute the painting. The AI output does not carry that same process.

But the speaker’s more disruptive claim is about reception. For him, the Luma-generated output conveys “100% basically of the emotion” that looking at an actual Monet conveys. He takes care to distinguish that from saying the processes are equivalent. The original painting is harder to make and more impressive as an act of execution. The generated output can still deliver the same emotional effect to him as a viewer.

That is the tension the discussion keeps circling. If the value of the work is tied to authorship, difficulty, and physical execution, Monet’s painting remains categorically different. If the value is tied to the experience produced in the viewer, the generated output competes more directly. The speakers do not deny the higher skill involved in making the original. They emphasize that the viewer may respond primarily to the finished artifact.

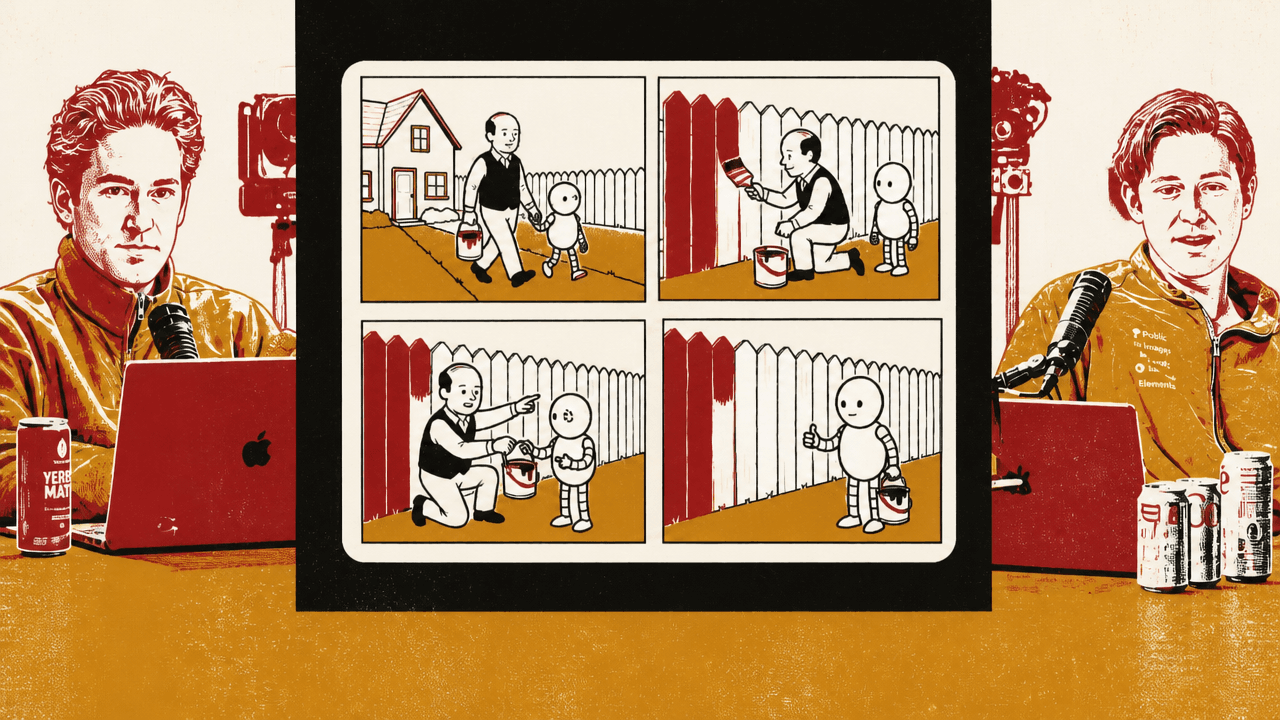

One speaker then links the art example to software. He compares Monet’s craft to working at a lower level of a technical stack. Monet had to determine how to place paint on canvas in a way that produced a particular emotional and visual result. In the AI case, the user operates through what the speaker calls a “prompt compiler”: the user asks for the desired result, and the system produces it.

The point is not that prompting is as difficult as painting. The speaker says the opposite. The point is that AI changes the level at which the person operates. Instead of executing every step directly, the person provides direction, examples, or constraints and then evaluates the output. The work shifts from direct manual production toward specification and selection.

The Min Choi example is important because the input is not described as a purely textual prompt. One speaker says the creator provided images — “exact images” and “key frames” — showing what he wanted the result to look like. Luma then generated the intermediate frames. The speakers repeatedly call those in-between frames “boilerplate.”

Boilerplate carries much of the argument here. Applying the term to animation treats the frames between key images as connective execution that the model can fill in once the target states are supplied. The speakers are not giving a detailed account of animation craft. They are making a broader claim about AI systems: when the desired endpoints are clear enough, the machine can synthesize work that previously required specialized manual execution.

The game-engine analogy makes the same point from another direction. A game engine handles intermediate calculations — geometry, rendering, movement — so the person building with it does not manually compute each step. The speakers see Luma operating similarly in the Monet example. The creator supplies references or target states; the system generates the motion and transitions between them.
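The "fill in the in-betweens" idea has a classical, non-AI counterpart in animation and game engines: keyframe interpolation, or tweening. The sketch below is a deliberately simple stand-in, not a description of how Luma works. A generative video model does something far richer than linear blending, and the function names and the representation of a frame as a flat list of numbers are assumptions made for illustration. What it shows is the division of labor the speakers describe: the person supplies the target states, and the system computes everything between them.

```python
from typing import List, Sequence

Frame = List[float]  # hypothetical stand-in for a frame, e.g. flattened pixel values


def lerp_frame(a: Frame, b: Frame, t: float) -> Frame:
    """Linearly blend two frames; t=0 gives a, t=1 gives b."""
    return [(1 - t) * x + t * y for x, y in zip(a, b)]


def fill_in_betweens(key_frames: Sequence[Frame], steps_between: int) -> List[Frame]:
    """Return the key frames with `steps_between` generated frames between each pair."""
    if len(key_frames) < 2:
        return [list(f) for f in key_frames]
    frames: List[Frame] = []
    for start, end in zip(key_frames, key_frames[1:]):
        frames.append(list(start))
        for i in range(1, steps_between + 1):
            t = i / (steps_between + 1)
            frames.append(lerp_frame(start, end, t))
    frames.append(list(key_frames[-1]))
    return frames


if __name__ == "__main__":
    # Two "key frames" supplied by the creator; three in-betweens supplied by the system.
    for frame in fill_in_betweens([[0.0, 0.0], [1.0, 2.0]], steps_between=3):
        print(frame)
```

The creator in this sketch writes two frames and receives five. The ratio is the point: the supplied endpoints carry the intent, and the generated frames are the part the speakers call boilerplate.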

That analogy also helps explain why the speakers are not primarily interested in whether the generated clip is an authentic Monet. Their attention is on the production stack. A painterly moving image once required a chain of human decisions and executions across composition, style, and motion. In the example shown, the speaker’s description is that the user supplied the reference images and Luma filled the missing continuity. The system becomes a layer that translates a desired output into the intervening visual work.

The emotional claim remains separate from the execution claim. One speaker says the clip gives him essentially the same feeling as looking at an actual Monet. That statement is personal and experiential. It is not presented as a universal theory of art value. But it does explain why the technical abstraction matters: if a generated artifact can create the intended feeling for a viewer, the lower cost of producing it becomes culturally and economically significant.

The speakers keep returning to the end result because that is where the AI pressure lands. The human maker’s effort may still matter as biography, craft, or status. But the viewer may encounter the artifact first as an experience. If the generated output delivers that experience, the production method becomes less visible at the moment of consumption. The result can feel competitive even when the process is not comparable.

That is why the Monet example becomes more than an art example. It is used as evidence for a broader abstraction shift. In the speakers’ framing, AI is not only producing content. It is absorbing intermediate labor. The user can move closer to describing the desired end state while the system handles more of the production path.

Cursor and Luma are treated as versions of the same abstraction layer

The speakers connect the Monet animation directly to front-end engineering and AI-assisted software work. The domain changes, but the pattern they see is the same: a tool absorbs execution work, and the human operates at a higher level of instruction.

Cursor is the named software reference. One speaker says that building UI with Cursor has “zero” real skill associated with it compared with traditional front-end engineering. The phrasing is deliberately blunt. Its force is in the contrast: traditional implementation required the builder to execute the interface directly; AI-assisted implementation allows the builder to ask for the interface and have the tool generate much of the result.

The analogy to prompt engineering is explicit. One speaker says the situation is “almost exactly” what he had been describing in the movement from UI engineering or front-end engineering to prompt engineering. What matters, in that framing, is the final product and the end-user experience: does the thing work, and does it evoke the intended response?

That is the same logic used in the Monet discussion. Monet had to build the emotional effect through the physical manipulation of paint. The AI user can provide examples or instructions and receive a generated result. In front-end work, the traditional developer had to implement the interface; the AI-assisted user can increasingly describe what the interface should be and let the system generate the implementation.

The compiler metaphor makes the structure of the claim clearer. A compiler lets someone express intent in a higher-level language while the machine handles lower-level translation. The speaker applies that pattern to art and UI alike. Monet worked close to the “assembly” of the medium, placing paint on canvas to create the final image. The AI user works through a prompt layer. In UI work, the person using Cursor can operate closer to the desired output instead of manually writing every part of the front end.
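For readers who have not looked inside a compiler, the metaphor can be made concrete with a toy one. The sketch below is an illustration, not anything shown in the discussion: it uses Python's standard `ast` module to translate a small arithmetic expression into instructions for a hypothetical stack machine, then runs them. The instruction names (`PUSH`, `ADD`, `SUB`, `MUL`) and both function names are invented for the example.

```python
import ast
from typing import List, Tuple

Instruction = Tuple[str, object]

# Map Python AST operator nodes to instruction names of a made-up stack machine.
_OPS = {ast.Add: "ADD", ast.Sub: "SUB", ast.Mult: "MUL"}


def compile_expr(source: str) -> List[Instruction]:
    """Translate an expression like '2 + 3 * 4' into low-level stack instructions."""
    program: List[Instruction] = []

    def emit(node: ast.AST) -> None:
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            program.append(("PUSH", node.value))
        elif isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            emit(node.left)    # operands first...
            emit(node.right)
            program.append((_OPS[type(node.op)], None))  # ...then the operation
        else:
            raise ValueError("only numbers with +, -, * are supported in this sketch")

    emit(ast.parse(source, mode="eval").body)
    return program


def run(program: List[Instruction]):
    """Execute the instructions one at a time, the way the 'lower layer' would."""
    stack = []
    for op, arg in program:
        if op == "PUSH":
            stack.append(arg)
            continue
        right, left = stack.pop(), stack.pop()
        stack.append({"ADD": left + right, "SUB": left - right, "MUL": left * right}[op])
    return stack[0]


if __name__ == "__main__":
    program = compile_expr("2 + 3 * 4")
    print(program)       # five low-level steps for one line of intent
    print(run(program))  # 14
```

The person writes `2 + 3 * 4` and never sees the push-and-multiply sequence underneath. The speakers' claim is that prompts now sit in the same position relative to paint strokes and front-end code, with the important difference that a model's translation is not deterministic in the way a compiler's is.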

The speakers’ repeated use of “boilerplate” is also central here. In the Luma case, the frames between key images are described as boilerplate. In the UI case, implementation work is treated as something the tool can increasingly supply once the desired result is specified. The argument is not that judgment disappears. The discussion still implies a role for knowing what one wants and recognizing whether the output satisfies it. But the speakers are focused on the disruptive side: the old execution layer is becoming easier to bypass.

The difference between skill and output remains unresolved but visible. In the Monet example, the speaker says the original painting is harder and more impressive to make. In the Cursor example, the speaker says AI-assisted UI work carries far less “real” skill than traditional front-end engineering. Yet in both cases, the end result is what pressures the older skill category. If the generated artifact is good enough for the viewer or user, the fact that it was produced through a simpler path may matter less to the person consuming it.

The strongest form of the claim is that AI compresses a production process. A sequence of intermediate operations that once had to be performed manually can be replaced by high-level direction plus model-generated execution. In the Monet example, Luma fills frames between visual references. In the UI example, Cursor fills implementation between a request and an interface. The domains differ, but the abstraction pattern is the same.

The speakers’ language is intentionally reductive because the point is about shock at the new level of leverage. “Zero” skill is not a careful taxonomy of design, engineering taste, maintainability, or interaction quality. It is a way of saying that the visible act of producing the thing has moved. The person no longer needs to demonstrate the same manual competence at every layer if the model can supply enough of the layer underneath.

That shift puts pressure on how skill is recognized. In the older frame, the skilled person is the one who can execute the medium directly: paint the canvas, animate the frames, write the front end. In the AI-assisted frame described by the speakers, skill moves toward specifying what should exist, providing the right references, and deciding whether the output works. The speakers do not give that new skill set a settled name, but “prompt engineering” is the closest phrase they use.

The key continuity between the examples is not art style or coding tools; it is delegation. In each case, the human supplies an intended direction and the system fills in intermediate work. The model becomes the layer between desire and artifact. That is why the speakers can move so quickly from Monet-style animation to UI generation: both are treated as cases where the “in-between” has become machine-generated.

The implications are sharper in creative and technical domains where the audience or user mainly encounters the final object. If a viewer feels the intended emotion, or a user sees the desired interface, the hidden production process may lose some of its practical importance. That does not erase the difference between Monet and Luma, or between an experienced front-end engineer and a person prompting Cursor. The speakers’ point is that the market and the viewer may not always care about those differences in the same way practitioners do.

The discussion also frames AI as an abstraction layer rather than merely a replacement tool. An abstraction layer does not simply perform one task faster. It changes what the operator has to know and where the operator has to intervene. In the speakers’ examples, the operator no longer manually constructs every frame or every line of interface code. The operator works closer to the desired output and lets the system translate that direction into production.

That is the sharper version of the speakers’ AI claim. AI reduces the amount of manual execution required between intention and result. The more of the intermediate path the model can fill, the more production begins to resemble specification. The Monet clip and Cursor-generated UI are treated as different manifestations of that same movement.

Premature obituaries are treated as a category error

The Figma and older-founder threads are smaller than the Debater Center and AI-abstraction discussions, but they share a posture: resistance to premature dismissal. In both cases, the speakers reject a familiar tech reflex — declaring that a tool is dead, or assuming that startup ambition belongs to a narrow founder archetype.

The Figma material is brief. The claim is practical rather than elaborate: “Figma is not dead.” The reason given is that people are still using it “for all their design needs,” and “the tool is just too good.”

A tweet shown on screen makes the same point in public-post form: “Figma is not dead, it’s just evolving. The community is stronger than ever.” The discussion does not develop a competitive map around Figma or spell out the product changes implied by “evolving.” The defense is simpler: continued use, tool quality, and community strength make the death framing too blunt.

That standard is intentionally modest. The speakers are not arguing that Figma is insulated from change. They are rejecting the “dead” label as premature. A tool can be under pressure, changing, or debated and still remain central to actual work. In the speakers’ telling, the decisive counterweight is that people are still using it and the product remains good.

The older-founder story is similarly framed as a counterexample. It appears through a Hacker News screenshot with the headline “67-Year-Old Founder Proves Silicon Valley Wrong.” One speaker introduces it as “a really cool story,” and another calls it “super inspiring.”

The contrast is with the familiar image of “20 year old dropouts.” The discussion mentions only in passing that the founder is building an AI data center; the developed point stays at the level of archetype rather than operating detail. The story is inspiring because it cuts against the youth-centered founder narrative.

The Hacker News headline does much of the framing: “Proves Silicon Valley Wrong.” The speakers pick up that frame as a cultural point. A 67-year-old founder building in AI infrastructure is treated as enough to puncture the assumption that serious startup ambition is primarily the province of the very young.

The connection between Figma and the founder story is not that they are the same kind of object. One is a design tool; the other is a founder anecdote. The connection is the error being resisted. “Dead” is a premature obituary for a product that people still use. “Too old” is an implied obituary for a founder who is still building. In both cases, the speakers treat the received story as too narrow for the evidence immediately in front of them.

The Figma point is about persistence under narrative pressure. The founder point is about capability outside a stereotyped age profile. Neither thread is built into a comprehensive argument, but both work as corrections to tech shorthand. A product’s reputation can swing faster than its actual utility. A founder archetype can become so familiar that it excludes people who do not match it. The speakers push back on both habits.

This also fits the broader pattern of the discussion. Debater Center prompts the speakers to ask what condition actually creates the behavior people associate with online debate. The Luma and Cursor examples prompt them to ask which parts of production are becoming abstracted away. Figma and the 67-year-old founder prompt a simpler question: what is being written off too quickly? The answer, in both cases, is that the obituary arrives before the underlying activity has stopped.

The common thread is the gap between surface format and actual behavior

The four topics look scattered: a ranked debate platform, Figma’s relevance, an older founder building in AI infrastructure, and AI-generated Monet-style animation. The through-line is not a single market thesis. It is a repeated interest in the gap between the visible label and the thing that actually makes the system work.

For Debater Center, the visible label is debate. The screenshots supply the recognizable parts of a competitive product: matchmaking, leaderboard, ELO, wins, losses, draws. The speakers’ question is whether those parts are enough to produce the behavior associated with debate online. Their answer leans toward no, because they see audience pressure as the factor that turns disagreement into performance.

For the Monet example, the visible label is art or AI art. The speakers immediately split the issue into production and reception. As production, the original Monet painting represents skill, time, style formation, and manual execution. As reception, the generated clip can still deliver the feeling one speaker associates with Monet. The label “AI-generated” does not settle the matter because the relevant question changes depending on whether one values process or effect.

For Cursor, the visible label is software work. The speakers treat AI-assisted front-end generation as a shift in where work happens. The old surface — a person building an interface — may look similar at the end: an interface exists. But the underlying behavior has changed if the person specifies the result and the system fills in much of the implementation. The speaker’s blunt claim about “zero” real skill compared with traditional front-end work is aimed at that changed production path.

For Figma, the visible label being challenged is “dead.” The speakers respond with continued use, quality, and community. The label is too final for a tool that is still part of people’s design work. For the 67-year-old founder, the visible label is the startup archetype: youth, dropout status, early-career intensity. The counterexample is an older founder attached to an AI data center story and described as inspiring.

These are not all equally developed threads. The debate and AI-abstraction sections carry the most substance. The Figma and founder items are shorter and more anecdotal. But the editorial logic is consistent enough: do not confuse the wrapper for the operative mechanism. Debate is not just a room with two opponents; in the speakers’ framing, it is shaped by an audience. AI art is not only a question of whether the process is as hard as painting; it is also a question of whether the output moves the viewer. Front-end work is not only the existence of a UI; it is the changing path by which the UI gets made. Figma is not dead simply because people are debating its future. A founder is not disqualified from the startup story by being outside the default age myth.

The discussion is loose, and the speakers often express their claims as riffs rather than as formal arguments. But the useful substance is in those distinctions. They are asking, across domains, what actually produces the effect people care about: the audience, the output, the abstraction layer, the continued use, the counterexample. The answer is usually more specific than the label.