Cerebras IPO Tests Public Demand for Faster AI Inference

John Coogan and Jordi Hays frame Cerebras’s IPO as a public-market test of whether AI customers will pay heavily for faster inference, while noting that the company’s wafer-scale architecture still faces limits around memory, context windows and large-model serving. In their account, the same standard of evidence runs through the day’s other stories: Kevin Warsh’s narrow Fed confirmation, Figure’s robot demo and Musk’s case against OpenAI all turn less on rhetoric than on whether technical, institutional or legal claims can be substantiated.

Cerebras is now a public-market bet on faster inference

John Coogan framed the Cerebras IPO as public-market validation for a company that had long carried both technical skepticism and strategic ambiguity. Cerebras, he said, had “doubled their valuation basically overnight,” with shares trading far above the already-raised IPO range. Jordi Hays put the market capitalization at roughly $64 billion and noted that some prediction markets had not even included categories above $50 billion.

The underlying company, Coogan explained, is unusual because its chip design rejects the standard pattern of cutting a wafer into many separate chips. Cerebras uses the whole wafer. He called it “a genius idea” and “one of those simple ideas taken deadly seriously,” but also emphasized why investors and observers had doubted it. In conventional manufacturing, defects on a wafer can be tolerated because bad individual chips can be discarded. If the entire wafer is one chip, a single defect appears to threaten the whole product.

Coogan said Cerebras addressed that yield problem with redundant cores: not all cores are activated, so defective areas can be routed around. That, in his account, answered one of the earliest critiques of the architecture. Another doubt was whether the AI architecture landscape itself might move away from Cerebras’s assumptions — for example, if transformers stopped being central. But Coogan’s bottom-line assessment was simple: “Cerebras chips work.” Hays added that they can be used today in “Codex 5.3 Spark.”

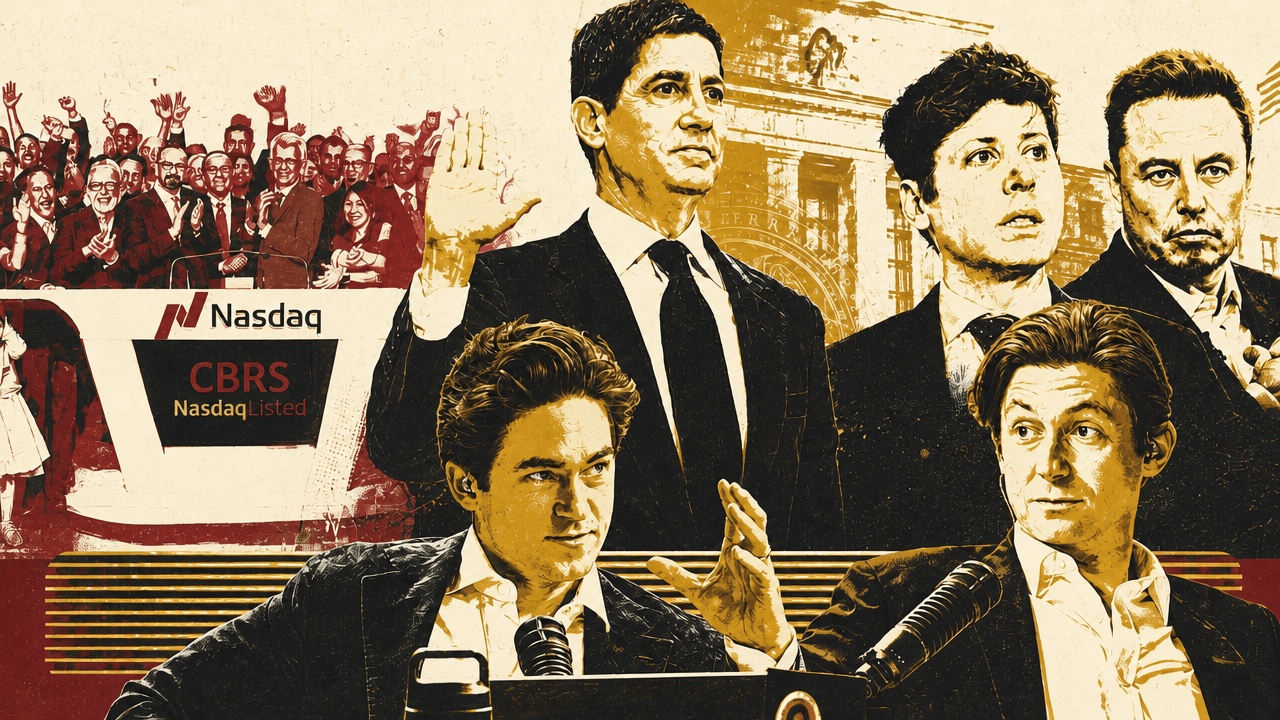

A NASDAQ visual for CBRS showed Cerebras at $307.00, up $122.00, or 65.95%, on the day. Coogan said the IPO pricing had moved sharply upward: from an earlier range of $115 to $125, to $150 to $160 on Monday, to $185 at pricing, and then much higher in public trading.
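The on-screen numbers are internally consistent. The sketch below checks them, using only figures from the segment: $307.00 minus the $122.00 gain lands exactly on the $185 pricing, so the displayed 65.95% move appears to be measured from the IPO price. The range midpoints are a simple illustrative reference point, not a figure anyone quoted.

```python
# Figures as reported in the segment (NASDAQ visual and Coogan's account).
close_price = 307.00
day_change = 122.00
ipo_price = close_price - day_change  # implied reference price for the day's change

pct_change = day_change / ipo_price * 100

# Successive marketing ranges as (low, high) in dollars.
ranges = {"initial": (115, 125), "revised Monday": (150, 160)}
midpoints = {name: (low + high) / 2 for name, (low, high) in ranges.items()}

print(f"implied reference price: ${ipo_price:.2f}")
print(f"first-day move: {pct_change:.2f}%")
for name, mid in midpoints.items():
    # How far the first-day price sits above each range's midpoint.
    print(f"close vs. {name} midpoint (${mid:.0f}): {close_price / mid:.2f}x")
```

By this measure, the first-day price was roughly 2.6 times the midpoint of the original $115 to $125 range.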

The core investment case Coogan drew from Semianalysis was not merely that Cerebras can produce AI chips, but that AI customers have revealed a willingness to pay heavily for speed. He cited Semianalysis’s own use of Anthropic’s Opus 4.6 Fast mode, which he said charged six times the price for roughly two and a half times the interactivity, with the speedup later falling below two times. Buyers, in other words, were paying disproportionately more for speed than a linear price-to-performance calculation would imply.
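The "disproportionate" premium can be made concrete as price paid per unit of speedup, where 1.0 would mean cost scales linearly with speed. This is a minimal sketch using the reported 6x price and roughly 2.5x speedup. Because the later speedup was only described as "under two times," the 2.0x input is an assumed upper bound, so the true later premium would be somewhat above the computed value.

```python
def premium_per_speedup(price_multiple: float, speed_multiple: float) -> float:
    """Price paid per unit of speedup; 1.0 means price scales linearly with speed."""
    return price_multiple / speed_multiple

# 6x price for roughly 2.5x interactivity, as reported.
early = premium_per_speedup(6.0, 2.5)
# Assumed stand-in: 2.0x is an upper bound on the later "under two times" speedup.
later = premium_per_speedup(6.0, 2.0)

print(f"{early:.1f}x, {later:.1f}x")  # 2.4x, 3.0x
```

On these inputs, buyers were paying about 2.4 times more per unit of speed at first, and at least 3 times more once the speedup slipped below 2x.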

That mattered because there had been an open question in AI product design: should models become smarter, or should they become faster? Coogan characterized Sam Altman as leaning toward speed as a “magical superpower” for productivity, while Andrej Karpathy preferred smarter models and was willing to let them run overnight. Semianalysis, by contrast, appeared to reveal through its own spending that interactivity mattered: Coogan said it was spending 80% of its AI budget on Opus 4.6 Fast.

Hays sharpened the distinction into three variables: capability, speed, and intelligence. The question, he said, is not just whether there is a “250 IQ model,” but whether there is a more capable model that uses tools more efficiently and operates quickly.

Coogan argued that users’ expectations around large language models are still conditioned by slow token streaming — a response arriving word by word, almost like a person typing. That can feel natural, but he said the better experience is closer to loading a Wikipedia page: the whole thing appears immediately, and the user scrolls. In coding, he said, users firing off a task want the code immediately. He said he had used Spark and described it as an “interesting experience” because it can be used not only in coding contexts but also as a general chatbot.

Hays compared two employees with the same skill set: if one is five times faster, that person can create way more value for an organization. Coogan extended the analogy, arguing that in some jobs, being twice as effective can command far more than twice the compensation. He also invoked the often-repeated e-commerce latency lesson — that small delays in Amazon page loads could hurt sales — while acknowledging he was not sure of the precise quote. The point, for him, was that latency causes distraction. A user waiting on an LLM can drift to Instagram Reels and forget the original task. He said this applies in business contexts as well.

Coogan put his own view plainly: “I personally think there will be huge demand for faster inference across all parts of the AI economy.”

Coogan also said OpenAI is “clearly very pilled on Cerebras,” attributing that view to a claimed 750-megawatt deal and to Cerebras chips already serving GPT-5.3 in Codex under the name Spark. In his telling, that is the practical significance of the speed thesis: faster inference is not a benchmark abstraction, but a product experience users may quickly come to expect.

The same architecture that gives Cerebras speed may constrain its scale

The bullish case for Cerebras did not erase the technical limits Coogan drew from Semianalysis. The main concern is whether Cerebras can serve larger models and larger context windows as the market’s demands grow.

Coogan said Cerebras chips are not currently as capable at holding very large models in their limited memory, or at networking multiple chips together to serve those models, compared with systems such as Nvidia’s NVL72 racks. He attributed to Semianalysis the view that the industry is trending toward larger context windows “ad infinitum,” and that 128,000-token context will not remain acceptable for long, especially as agentic workloads become more common.

The problem, as Coogan described it, is not easily solved by making the wafer larger, because TSMC uses standard wafer sizes. Nor is it straightforward to add much more memory to the current architecture. Cerebras’s design depends on large amounts of SRAM — static random access memory — on the wafer itself, and Coogan said SRAM is not shrinking much with each new semiconductor node. WSE2 had 40 gigabytes of memory; WSE3 had 44 gigabytes. Coogan emphasized that this was only about a 10% increase across an iteration where customers might hope for a doubling or more.
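The memory figures can be checked directly, and a rough calculation shows why on-wafer SRAM becomes a constraint as models grow. The sketch below makes several loud assumptions that are not from the segment. It uses hypothetical model sizes and 16-bit (2-byte) weights, treats 1 GB as 10^9 bytes, and asks only how many wafers' worth of SRAM would be needed to hold the weights alone. It ignores activations, the KV cache that grows with context length (the concern raised above), and whatever weight-placement strategy Cerebras actually uses.

```python
import math

WSE2_GB = 40  # as cited in the segment
WSE3_GB = 44

growth = WSE3_GB / WSE2_GB - 1  # fractional increase between generations
print(f"generation-over-generation SRAM growth: {growth:.0%}")  # 10%

def wafers_for_weights(params_billions: float, bytes_per_param: float, sram_gb: float) -> int:
    """Minimum wafers needed just to hold the weights (ignores KV cache and activations)."""
    weights_gb = params_billions * bytes_per_param  # 1e9 params * bytes / 1e9 bytes per GB
    return math.ceil(weights_gb / sram_gb)

# Hypothetical model sizes in billions of parameters, assuming 2-byte weights.
for size in (8, 70, 400):
    print(f"{size}B params -> {wafers_for_weights(size, 2, WSE3_GB)} wafer(s)")
```

Under these assumptions, a small model fits on one wafer, but a 70-billion-parameter model already spans four. That is where the networking question Coogan raised starts to bite.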

The trade-off is physical. On a single wafer, more memory can mean less compute area. In Coogan’s telling, that creates a structural question about how Cerebras scales as models grow larger.

His answer was not that Cerebras must own every workload. Instead, he proposed that AI infrastructure may move from an “agentic age” into an “orchestration age.” In that model, the largest and smartest models act like bosses, delegating some tasks to smaller, faster models. The top model might run on Nvidia NVL72 racks, TPUs, or another system better suited to massive memory and scale, while Cerebras serves fast delegated workloads.

Coogan compared this to how current models already use tools: a model may search the web or query a database, delegating CPU-bound tasks outside the model itself. In a similar hybrid architecture, a large “boss model” could assign sub-tasks to “Cerebras speed workers.” The implication is that Cerebras does not need to displace every other AI compute architecture to matter; it needs to dominate the latency-sensitive slices of AI execution.
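The "boss model plus speed workers" idea is essentially a routing layer. The sketch below is hypothetical. The two backends are placeholder functions rather than real model APIs, and the boss's delegation decision is reduced to a simple `latency_sensitive` flag on each sub-task. A real orchestrator would need the large model itself to plan and label the work.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class SubTask:
    prompt: str
    latency_sensitive: bool  # hypothetical label the boss model would assign

# Hypothetical backends: stand-ins for a large "boss" model and a fast "worker" model.
def boss_model(prompt: str) -> str:
    return f"[boss] {prompt}"

def fast_worker(prompt: str) -> str:
    return f"[fast] {prompt}"

def orchestrate(tasks: list[SubTask],
                boss: Callable[[str], str] = boss_model,
                worker: Callable[[str], str] = fast_worker) -> list[str]:
    """Send latency-sensitive sub-tasks to the fast worker and everything else to the boss."""
    return [worker(t.prompt) if t.latency_sensitive else boss(t.prompt) for t in tasks]

plan = [
    SubTask("draft the overall refactor plan", latency_sensitive=False),
    SubTask("rename variables in utils.py", latency_sensitive=True),
    SubTask("write unit tests for parse()", latency_sensitive=True),
]
for line in orchestrate(plan):
    print(line)
```

The design point mirrors Coogan's argument. The fast backend does not need to handle every request. It only needs to be the best place to send the latency-sensitive ones.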

Hays reduced the broader trend to a blunt formulation: “We’re gonna make big computers.” Coogan agreed. The AI supply chain, in his view, is expanding rather than collapsing into a single winner. The initial assumption that the AI age simply meant Nvidia GPUs may be giving way to a more mixed infrastructure stack that includes GPUs, CPUs, ARM, Intel, TPUs, and specialized systems such as Cerebras.

The IPO also became a venture-capital story about individuals, allocation, and timing

The Cerebras IPO was also presented as a test of venture judgment. A tweet by Ho Nam, shown on screen and read by Hays, argued that the IPO illustrated “the power of an individual partner over the brand name firm in VC.” Nam wrote that Pierre Lamond had been a partner at both Sequoia and Khosla, but that Cerebras was backed in its early years by Eclipse, the firm Lamond joined at age 84. Hays called it “an awesome story,” emphasizing that Lamond was born in 1930, the same year as Warren Buffett.

Another visible post, from Matthew Sigel, described IPO order-book dynamics: one-third of the book got zero allocation, and the top 25 investors took 60%. Hays interpreted that as likely meaning large funds — “the Fidelitys, the State Streets, the BlackRocks” — received major allocations and had done well on the first day.

Coogan then walked through the valuation history: Series A in 2016 at $100 million with Foundation, Benchmark, and Eclipse; Series B in 2016 led by Co-2; Series C in 2017 led by VY Capital; $1.6 billion valuation in 2018; $2.4 billion in 2019; $4 billion in 2021; $8 billion in 2025 with Atreides and Fidelity; $23 billion with Tiger; and an IPO valuation in May 2026 of $48.8 billion.

| Stage or year | Valuation or event | Named investors or notes |
|---|---|---|
| Series A, 2016 | $100 million | Foundation, Benchmark, Eclipse |
| Series B, 2016 | Not specified | Co-2 led |
| Series C, 2017 | Not specified | VY Capital led |
| 2018 | $1.6 billion | Not specified |
| 2019 | $2.4 billion | Not specified |
| 2021 | $4 billion | Not specified |
| 2025 | $8 billion | Atreides and Fidelity |
| Later round | $23 billion | Tiger |
| May 2026 IPO | $48.8 billion | IPO valuation cited by Coogan |
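To put the valuation history on one scale, the sketch below expresses each stated figure as a multiple of the $100 million Series A. It uses only rows that state a number, plus the roughly $64 billion first-day market capitalization Hays cited. These are valuation multiples, not investor returns, because dilution and round terms were not discussed.

```python
# (label, valuation in millions of dollars) for every row that states a figure,
# plus the first-day market cap Hays cited. Integer millions keep the ratios exact.
valuations = [
    ("Series A, 2016", 100),
    ("2018", 1_600),
    ("2019", 2_400),
    ("2021", 4_000),
    ("2025", 8_000),
    ("Later round (Tiger)", 23_000),
    ("May 2026 IPO", 48_800),
    ("First-day market cap", 64_000),
]

def multiples(rows: list[tuple[str, int]]) -> dict[str, float]:
    """Each valuation as a multiple of the first (Series A) valuation."""
    base = rows[0][1]
    return {label: value / base for label, value in rows}

for label, multiple in multiples(valuations).items():
    print(f"{label:<22} {multiple:>6.0f}x Series A")
```

On this basis, the IPO valuation is 488 times the Series A figure, and the first-day market cap is about 640 times.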

Warsh’s confirmation exposed the politics around rate cuts

Kevin Warsh’s confirmation as Federal Reserve chair was narrow enough, in John Coogan’s view, to signal immediate political difficulty. Warsh was confirmed as the Federal Reserve’s 17th chair in a 54–45 Senate vote. The visual shown on screen listed 54 yes votes, 45 no votes, one senator not voting, and highlighted John Fetterman under “Senators who didn’t vote with their party” with a “Yes” vote.

The contrast with Jerome Powell was stark. Coogan said Powell had received at least 80 Senate votes for each of his two confirmations as Fed chair. For Coogan, Warsh’s narrower vote reflected how tensions with the White House had pulled the Fed deeper into political conflict, particularly because President Trump had demanded rate cuts from a Fed committee that Coogan described as skeptical of them.

The economic risk Coogan emphasized was stagflation. In a low-inflation stagnation, he said, the Fed can cut rates more easily. In a hot economy with high GDP growth and high inflation, raising rates can pull back on both growth and prices. Stagflation is harder because it combines weak growth with inflation: cutting rates to support growth risks fueling inflation, while raising rates to fight inflation risks deepening the slowdown. Coogan said that may be the task facing Warsh.

The ensuing Powell discussion stayed within that frame: how to judge a Fed chair partly depends on the crisis they inherited. Hays suggested Paul Volcker as a favorite chair; Coogan put Volcker and Ben Bernanke in the top tier, but hesitated to rank Powell with Bernanke because Powell, in his view, had not faced a 2008-style moment in which the Fed had to “save the economy.”

Hays pushed back by pointing to the global pandemic. The transcript becomes muddled around speaker attribution, but the counterargument was that the U.S. economy entered 2020 from a position of relative strength: strong consumer balance sheets, low debt, and no shadow banking bomb waiting to explode. While unemployment spiked and stimulus was needed, the argument continued, setting rates was Powell’s core job, and the Fed was not forced into the same rescue posture as during the financial crisis.

Hays’s final point was that the absence of disaster may itself be evidence of competence. If Powell had performed worse and COVID had produced a more severe crash, observers might treat the pandemic as proof that he faced a massive challenge. The disagreement was less about whether Powell did well than about whether his record belongs in the same category as chairs who confronted more visibly systemic failures.

Figure’s robot demo turned on what “fully autonomous” means

The Figure robot discussion treated two claims as simultaneously possible: the demo could be impressive, and the autonomy claim could still require clarification. John Coogan said Brett Adcock had described the system as “fully autonomous running Helix 2,” and that the 24-hour stream drew 3.4 million views. A clip circulating on X raised doubts because the humanoid robot missed packages, touched the side of its head, and then appeared to stop missing.

Coogan called the demonstration “remarkable” and “extremely impressive” even if it had been teleoperated. The robot, in his view, was “clearly working.” But if the company says it is not teleoperation, the head-touching moment still needs a plausible explanation.

Hays offered the explanation Adcock had apparently given: when the robot reaches across its body to the right, it raises the other hand to get it out of the way. Coogan said a related possibility occurred to him: the hand might be blocking a sensor or camera, so the robot raises it to clear its field of view before looking at the next package.

The harder question was whether there was a human in the loop. To Coogan, “fully autonomous” seemed to imply no human involvement. A mocking X post from teortaxes presented an “artist’s impression” of Helix-02 as a human wearing a VR headset and motion-capture gear, capturing the suspicion that the robot might be externally driven.

Coogan’s distinction was less a formal taxonomy than a test of the language being used. “Fully autonomous,” in his reading, should mean no human in the loop. “Teleoperated” would mean something else. He also floated a deliberately absurd edge case: if an orangutan in a VR headset were puppeteering the robot, one could technically claim there was no human in the loop while still falling short of the ordinary meaning of autonomy. Hays played along, saying the chimpanzee would be running its own neural network. The joke clarified the serious point: autonomy claims depend on whether the system is actually doing the work, not merely on whether the operator is literally human.

Musk v. OpenAI came down to credibility versus contract

The Musk-OpenAI trial was entering its final phase with prediction markets moving against Musk. Jordi Hays said a Kalshi market asking whether Elon would win his case against OpenAI had peaked at 58% and was then sitting at 30%.

The legal hinge, as John Coogan presented it through courtroom updates, was straightforward: Musk’s side was arguing credibility and betrayal of OpenAI’s charitable purpose; OpenAI’s side was arguing that Musk could not show a specific enforceable agreement that had been breached.

Coogan read from Mike Isaac’s courtroom thread, which said the judge was instructing the jury on the criteria for deciding the case. Coogan emphasized that this matters because it narrows the lens through which the jury evaluates the evidence: “where theater ends,” as he put it.

Musk’s side, in Coogan’s summary of Isaac’s thread, focused on witness credibility and character. Counsel attacked OpenAI executives Sam Altman and Greg Brockman, repeatedly trying to paint Altman as a liar. Musk’s lawyers also used a broader populist framing: OpenAI executives as wealthy people making large amounts of money while running a charity supposedly dedicated to the good of the world. Isaac, as read by Coogan, wondered whether a jury would register that argument when it comes from Musk, “the world’s richest man.”

OpenAI’s side framed the credibility attacks as a sideshow. Coogan quoted an update saying OpenAI lawyer Sarah Eddy argued there was never a firm agreement among the founders that could have been breached. He also read the argument from William Savitt, OpenAI’s lead counsel: Musk does not have a claim unless there was a specific agreement between Musk and OpenAI describing how his donations to the nonprofit should be spent. “That agreement does not exist,” Savitt said, according to the account Coogan read.

The stakes were described as enormous. Coogan read that Musk was asking for more than $150 billion in damages, seeking to remove Altman from OpenAI’s board, and trying to stop the company’s shift to a for-profit structure.

Coogan distilled the trial’s closing posture this way: Musk’s camp argued that OpenAI executives are rich and lying; OpenAI’s camp argued that none of Musk’s claims can be sustained under the actual law. Microsoft, he joked, “disappears into the bushes.”

The courtroom’s technical glitches served as a smaller illustration of how much of the trial was theater and logistics as well as law. Wired’s Max Zeff reported that Musk’s lawyers brought a large monitor, OpenAI asked to use it, Musk’s lawyers initially refused, and the judge said they had to share. OpenAI then said it might not be possible to connect their laptops. Coogan’s conclusion was dry: “AGI is here, but we’ll still need a dongle.”