Altman Testimony Casts Musk’s OpenAI Claims as a Fight Over Control

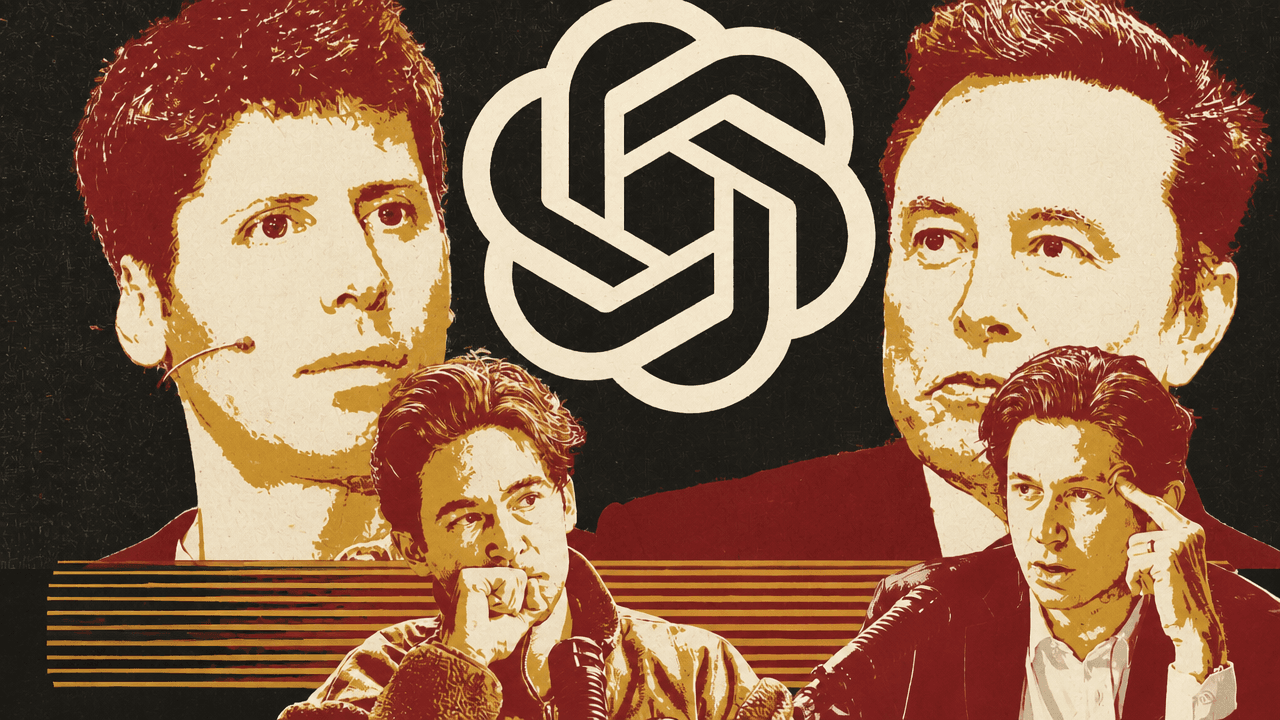

OpenAI’s trial, Anthropic’s secondary-market flare-up, and two deals are read on Diet TBPN as fights over control, enforceability, and credibility. John Coogan argues that Musk v. OpenAI is increasingly not only about whether OpenAI betrayed its nonprofit mission, but whether Elon Musk accepted a for-profit path only if he controlled it; Jordi Hays frames the Anthropic panic as a test of whether private-company transfer restrictions can hold against demand for AI exposure. Coogan and Hays treat Thinking Machines’ demo separately, as a bet that real-time interaction should be native to AI models, while eBay’s rejection of GameStop’s bid and Byron Allen’s BuzzFeed investment turn on market confidence.

OpenAI’s trial turns on motive and control

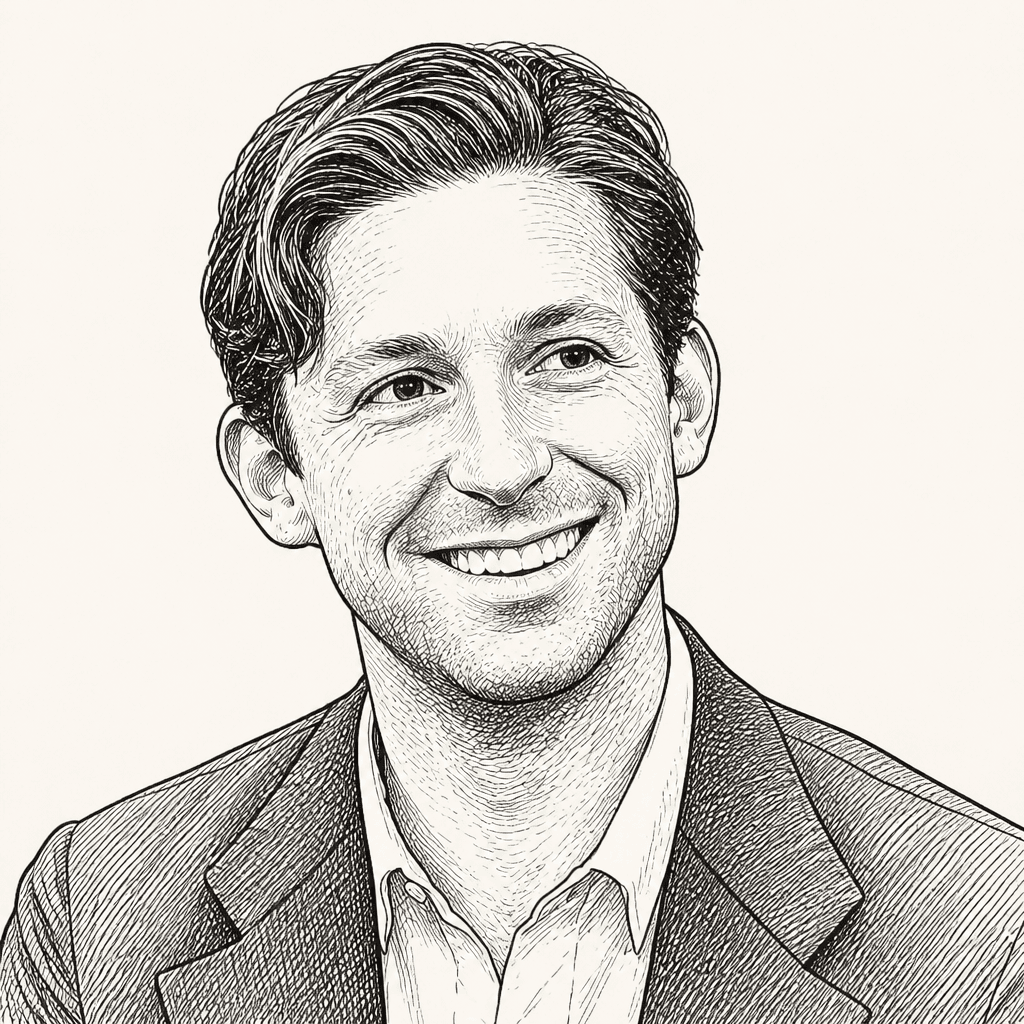

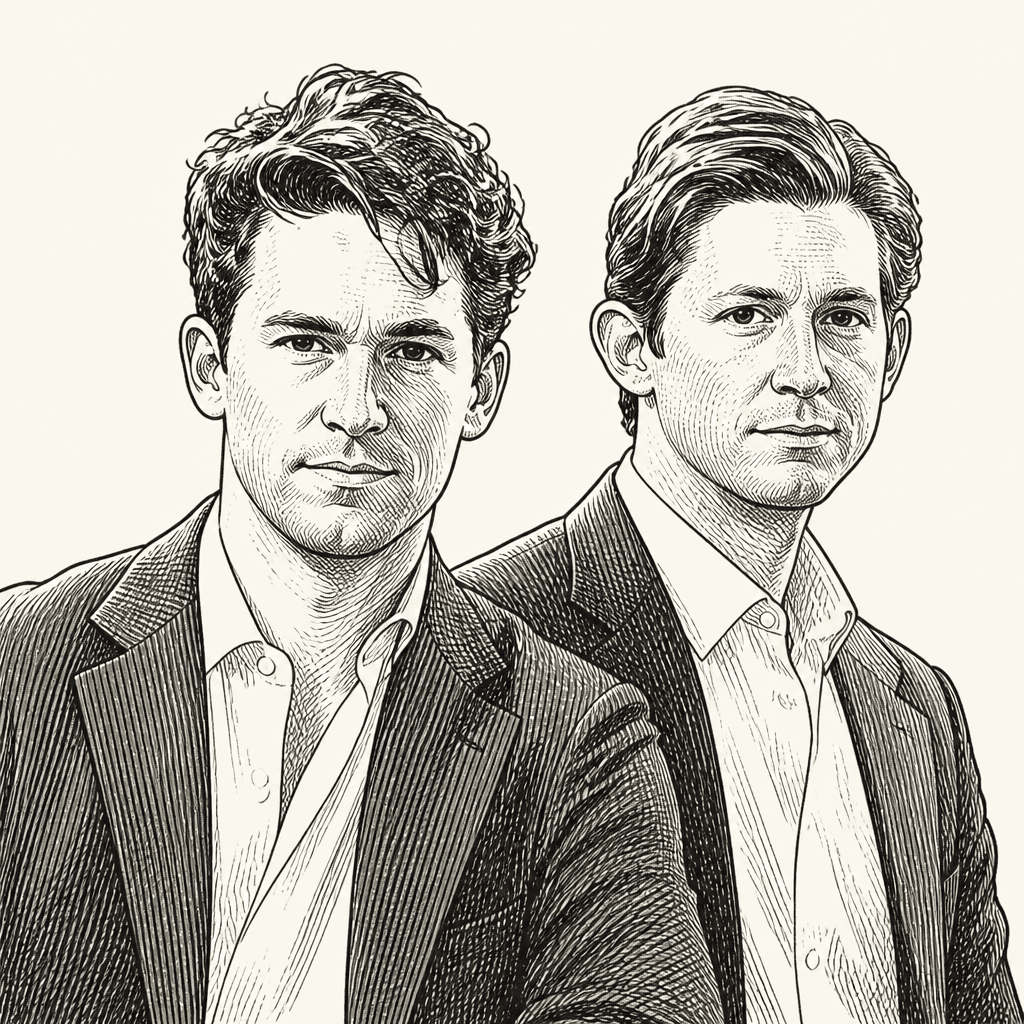

John Coogan framed Sam Altman’s testimony in Musk v. OpenAI as the point where the trial’s two competing stories had to be reconciled. Elon Musk’s case, as Coogan summarized it from The Wall Street Journal, is that OpenAI, Altman, and Greg Brockman allegedly manipulated Musk into contributing tens of millions of dollars to a nonprofit, only to later turn the lab into a for-profit venture. Musk is also suing Microsoft, OpenAI’s largest investor, for allegedly aiding Altman and Brockman in that deception.

By the time Altman took the stand, the trial had already moved through several phases. Coogan described testimony and depositions concerning the failed November 2023 board coup, including from former OpenAI CTO Mira Murati and former board member Helen Toner, as critical of Altman’s leadership style and candor. Later, Alex Shan clarified that Murati’s appearance, as he understood it, was a deposition rather than live testimony, and that he did not believe she had yet testified live in the trial.

OpenAI’s side, meanwhile, had used testimony from Shivon Zilis and Brockman to complicate Musk’s account of himself as defending a pure nonprofit vision. Coogan said earlier testimony indicated Musk had explored scenarios in which OpenAI could become part of Tesla, Altman might help lead Tesla AI, and Musk could retain deep control. Brockman, in Coogan’s summary, also testified that Musk supported a for-profit conversion if Musk could control it, including a version tied to raising money for Musk’s Mars ambitions.

That distinction changes the shape of the dispute. The question is not only whether OpenAI abandoned a nonprofit mission. It is whether the for-profit turn was a betrayal of what Musk funded, or a practical adaptation to frontier AI economics that Musk himself was willing to accept if he controlled the outcome.

Jordi Hays highlighted the simplest version of that economics through a quote Max Zeff pulled from Ilya Sutskever’s testimony. Zeff’s post quoted Sutskever explaining OpenAI’s for-profit entity under oath: “if there’s no funding, there’s no big computer.” Zeff called it “in the running for quote of the year.” Coogan extended the line.

“If there’s no funding, there’s no big computer, and you need big computer if you want big AI.”

Sutskever’s testimony cut in more than one direction. Coogan said Sutskever testified that he spent a year compiling a 50-page document detailing Altman’s manipulative behavior. But Sutskever also said he never promised Musk that OpenAI would remain permanently nonprofit. Coogan added that it emerged at trial that Sutskever’s OpenAI stake is probably worth around $7 billion, which he said could complicate how the judge and jury view Sutskever’s motivations.

Microsoft’s role, as described by Coogan, was to present itself as a stabilizing partner rather than a participant in deception. Satya Nadella testified that Musk never contacted him to complain that Microsoft’s deal violated any agreement Musk had with OpenAI’s nonprofit, even though Musk had Nadella’s number. Nadella also characterized OpenAI’s former directors as operating from “Amateur City,” a line Coogan treated as part of OpenAI’s broader rebuttal to the board’s account.

Altman’s own testimony, as relayed through Mike Isaac’s live posting, gave OpenAI’s side a chance to recast the company’s origins. OpenAI began questioning Altman much as the plaintiff’s side had questioned Musk, establishing that Altman, like Musk, had been intensely interested in AI for years and wanted to build useful things with it. Coogan noted a frequently clipped 2015 Altman line — “AI will probably destroy the world” — and said the fuller context was an answer to a question about key problems to solve, followed by Altman saying he was starting a company, “well it’s more of a nonprofit,” to work on that exact problem.

The early OpenAI framing, in Coogan’s reading of the testimony, involved fear of Google as much as abstract safety. He quoted a 2015 Altman email: “Been thinking a lot whether it’s possible to stop humanity from developing AI. I think the answer is almost definitely not. If it’s going to happen anyway, it seems like it would be good for someone other than Google to do it first.”

Musk’s control demands complicate the nonprofit story

A major part of OpenAI’s answer, as John Coogan described the testimony, is to show that Musk’s objection to OpenAI’s evolution was conditional: he opposed a structure he did not control, not necessarily a for-profit or corporate structure as such.

Altman, according to Mike Isaac’s account as read by Coogan, testified that Musk once said he would potentially pass control of OpenAI to his children upon his death. Coogan lingered on the succession implications, joking that fragmenting control among “20 different children” could create a different dynamic, but the substantive point was that control was not incidental to the record being built.

The Tesla discussions were especially important in that framing. Coogan said an old email suggested Altman might have joined Tesla’s board as part of earlier AI discussions. Musk, in Altman’s telling, offered a board seat partly to assuage Altman’s concern that he would have no direction over AI development if OpenAI were folded into Tesla. But Altman also said the offer came with what felt like a “nascent threat”: if Altman did not accept, Musk hinted he might do AI work inside Tesla regardless.

Coogan compared that move to a familiar Silicon Valley acquisition-threat pattern. He cited Isaac’s contextual note that Mark Zuckerberg has made overtures to companies Meta wants to acquire, sometimes more explicitly: if they do not take the deal, Meta will come for them. Coogan called it “Tony Soprano vibes.”

Altman’s testimony also contrasted Musk’s management style with the culture Altman says AI labs need. Coogan relayed Altman criticizing the stack ranking of engineers at AI labs, a practice Musk reportedly favors across his companies and one common at large companies like Meta, Amazon, and Microsoft. Altman’s alternative, as Coogan summarized it, is “psychological safety”: researchers need permission to go “try something random in the corner.” Coogan said Altman linked that bottom-up environment to projects such as deep research and other AI breakthroughs.

The courtroom fight over Altman’s trustworthiness remained central. Coogan relayed Isaac’s live observations of Altman describing the November 2023 firing and reinstatement — internally known as “the blip” — as one of the hardest periods of his life. Altman said, “I had poured the last years of my life into this. I was watching it about to be destroyed.” He also said there was something appealing about going to work at Microsoft, but that he was “very angry, hurt, and upset” and felt “an incredible betrayal.”

Altman addressed the allegation directly. According to Isaac’s feed as read by Coogan, Altman said: “Clearly there were misunderstandings and a breakdown of trust,” and then, “I was not trying to deceive the board. I feel badly for the misunderstandings, but that was never my intent.”

For Coogan, that answer went to the heart of the case made by Musk’s counsel: portraying Altman as “a fundamentally slippery operator who says one thing to one party and something else entirely to others.” OpenAI’s rebuttal, as Coogan presented it, is that the board was dysfunctional and that misunderstandings were being recast as deception.

When cross-examination began, Musk’s lead counsel Steven Molo moved directly to character. “Are you completely trustworthy?” he asked. Altman answered, “I believe so.” Molo then asked, “Do you always tell the truth?” Coogan described the exchange as “brutal” and said Altman appeared more muted and humble than Musk, who had appeared combative on the stand.

There were also surreal details, but they mattered mostly as illustrations of the cultural collision inside the courtroom. Altman described a Tesla meeting about folding OpenAI into Tesla for AI research, followed by “a long, long period of time with Elon showing us memes on his phone.” Separately, an email exchange between Altman and Zilis about how to tell Musk of a Microsoft investment required Altman to read “cross fingers emoji” aloud.

Those moments were comic, but they were embedded in a serious evidentiary argument. Musk’s side, as Coogan described it, wants the jury focused on Altman’s truthfulness. OpenAI’s side wants the jury focused on Musk’s desire for control and the practical need for funding.

Anthropic’s SPV panic is really about transfer restrictions

A deleted post set off the AI secondary-market discussion. According to Jordi Hays, the post claimed: “Simply brokering an Anthropic secondary deal made me more money than my entire net worth from working in my 20s. This is insane.” Hays added: “It is especially insane because this is not legal. It is insane to post.”

John Coogan asked whether it was actually illegal. Hays’s answer was that brokering securities requires a broker-dealer license. He emphasized that getting one is burdensome enough that even people who do secondary transactions often work under a firm that has the license, roughly like a real-estate agent working under a broker.

Coogan introduced a distinction that mattered for the rest of the analysis: “brokering” a deal is not the only structure people use. An SPV can take investor money and buy secondary shares from someone who has the right to sell. SPVs often charge fees, and Coogan said that structure does not necessarily require a broker-dealer license. Hays accepted the distinction, but said the straightforward interpretation of the deleted post sounded more like a side arrangement: if the intermediary found a buyer for $100 million of shares, the seller would pay $5 million. That kind of thing happens, he said, but “usually and hopefully” through proper channels.

The immediate backdrop was Anthropic publishing more specific language about unauthorized stock sales and investment scams. Gabriel Shapiro’s post called Anthropic’s update a “potential bombshell” and said the company appeared to be calling out active secondary-market activity in Anthropic stock or derivatives, including on well-known platforms, and “essentially” saying these trades were illegal. Hays summarized Anthropic’s own position as saying that any transfer or sale of Anthropic stock, or any interest in Anthropic stock, not approved by its board of directors is void and will not be recognized on its books and records.

Coogan and Hays treated the public notice not as a new rule, but as a public reassertion of restrictions that likely already exist in stock documents. Coogan said these blog posts are “not new rules.” Hays agreed: if someone invested earlier or was an employee, they should already know the transfer restrictions. Companies set this up, Hays explained, because part of being private is controlling who owns the company’s stock; without restrictions, an early angel investor in a successful company could sell to someone the company does not want on the cap table.

Coogan allowed that every deal is negotiated and there could be exceptions somewhere — an early investor or employee might have bargained for unusual terms — but Hays thought that would be very limited, perhaps “one percent of the stock.”

Market demand may have outrun the compliance assumptions. Coogan said people are going “crazy for Anthropic right now” and cited stories such as someone selling a house for Anthropic secondary exposure. Hays pointed out that a house-for-shares transaction creates a public-record problem: deed updates could reveal the new owner and connect the real-estate transfer to someone with early Anthropic exposure, making the transaction harder to hide or sanitize.

The cleanest explanation came through a Frog and Toad joke posted by Frankie, which Coogan read because it captured both the SPV workaround logic and its limit: “Frog put the shares in an SPV. ‘There,’ he said. ‘Now we can transfer these shares freely.’ ‘But Anthropic can still exercise its transfer restrictions,’ said Toad. ‘That is true,’ said Frog.”

Putting restricted shares into an SPV may create a wrapper that feels transferable, but Coogan and Hays did not treat that as necessarily defeating the company’s underlying transfer restrictions.

Coogan also read Ankur Nagpal’s question: if Anthropic deems all secondary sales of its stock void, does the original buyer retain the financial interest even after selling it away? Nagpal called it “lawsuit territory.” Coogan and Hays agreed it could get messy. Hays said it could become “almost a billion dollar industry,” referring to the legal and transactional fallout.

Another category is contracts that claim to provide economic exposure without technically transferring shares. In Coogan’s version of the argument, the “economic exposure enthusiasts” would say there was no sale of stock, no transfer of stock, and no direct interest in stock, so no board approval was needed. The board, he said, would likely say “absolutely not” — that the attempted workaround does not count.

Hays’s view was that internet reaction may be more dramatic than necessary in the short term because parties to past side deals may not be eager to surface them. A seller may fear shares being voided or reclaimed; a buyer who purchased exposure at a lower valuation may want the position to keep riding. But if appreciation becomes large enough, incentives can change. A seller might prefer to return the money and reclaim upside. Once that dispute becomes a complaint or lawsuit, Anthropic becomes a third party with a clear reason to object that people have been “messing around” against agreements and terms.

Coogan added a moderating view from Mike Isaac, whose post said private companies draft this kind of language to scare employees who “don’t know better” from trading on secondary markets and to scare buyers seeking those shares, “and yet SPVs, uh, find a way.” The open question, Coogan said, is how far the legal implications actually go.

Thinking Machines wants real-time AI to be native

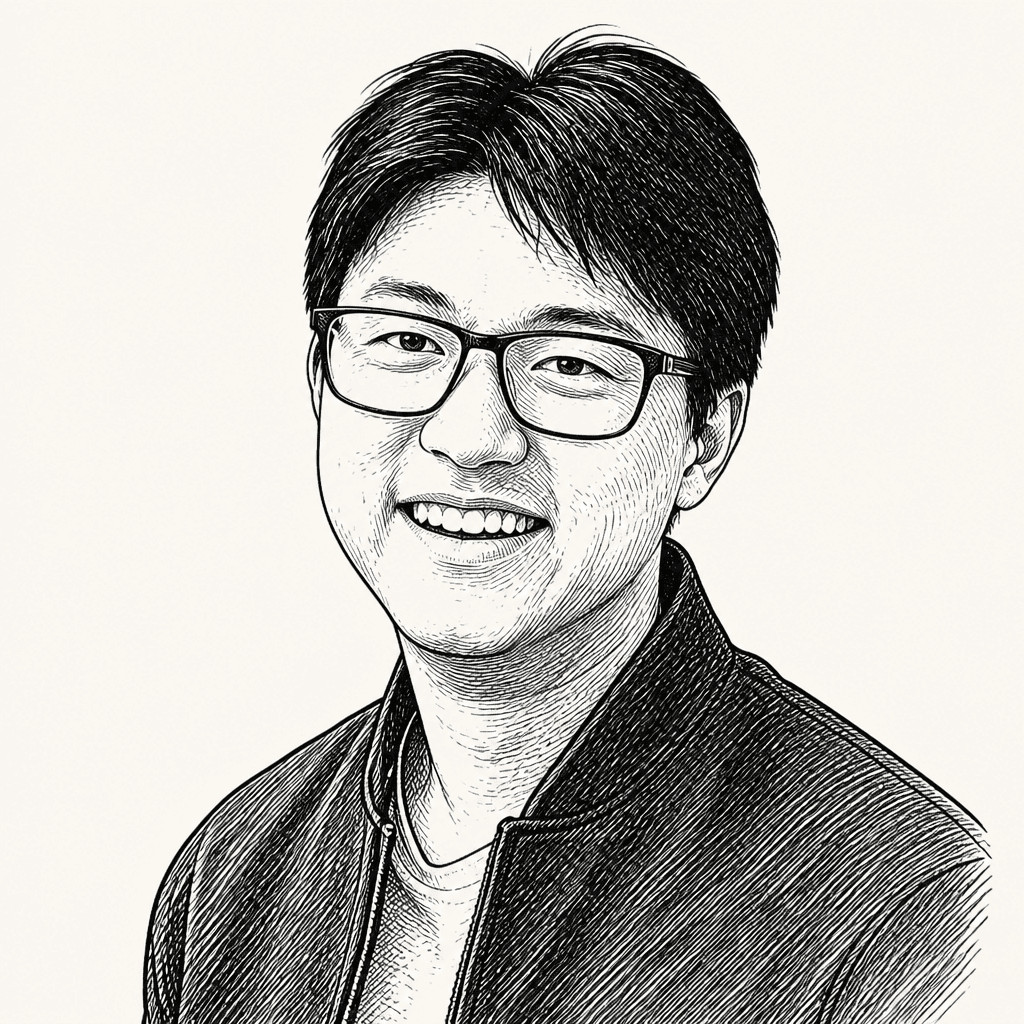

Mira Murati’s Thinking Machines Lab announcement gave the AI discussion a product-architecture turn. John Coogan said Murati’s lab launched “interaction models” with a concise video demonstration. Murati’s post described them as “a new class of model trained from scratch to handle real-time interaction natively, instead of gluing it onto a turn-based one.”

The demo showed a system handling live audio and video. A person told the model that two friends would enter the frame and asked it to say “friend” whenever one appeared. The model agreed, then responded “Friend” when someone entered. The demonstrator described the system as “full duplex audio and video,” meaning input can stream in real time and the model can respond while the user is still speaking.

Coogan’s immediate reaction was that it seemed faster than the original voice mode that had been mocked in viral Instagram reels. The demo then shifted to live translation. A speaker asked whether the system could translate into English in real time for a friend and the audience. The model said it would translate as the speaker went, and began rendering the Hindi-language speech into English.

That led Coogan to describe another translation product he had heard about in China: a mask, which he said looked like a Bane mask, that translates what the wearer says out of a speaker on the front. The example he gave was a mother in China using it to speak English continuously with her children even though she did not know enough English to teach them herself. Her children would speak back in English, while headphones translated for her.

Coogan presented the story as part of a broader contrast he had heard in a New York Times Daily segment about AI optimism in China: everyday people seeing AI as immediately useful, such as helping teach children English, rather than primarily as a threat. He extrapolated to the show itself: in theory, he and Jordi Hays could speak Chinese to one another while hearing English back, with the audience hearing the translated output.

Alex Shan pushed back on the mask story’s technical plausibility, or at least on how good the audio would be. Shan said real-time translation models remain expensive through APIs, so the product likely could not run locally if it used the best models. If it ran in the cloud, latency would be an issue. Even the best models, he said, are only now reaching the point where they sound like real people rather than a “super computer” voice like the one in the demo.

Coogan asked whether it could be done on-device. Shan said eventually, perhaps, but it is still difficult. The jokes moved to custom ASICs, battery packs, H100s in backpacks, and an NVLink 72 being dragged like a washing machine. Underneath the comedy, the disagreement was clear: Coogan was excited by the user-facing possibility of live translation; Shan stressed that latency, quality, cost, and on-device inference remain hard constraints.

One rejected bid, one uncertain media bet

John Coogan treated eBay’s rejection of GameStop’s $55 billion takeover bid as unsurprising. The New York Times reported that eBay called the cash-and-stock proposal “neither credible nor attractive.” Coogan’s view was blunt: the odds eBay would accept GameStop’s “brash offer” were “infinitesimally remote.”

The more interesting media transaction, to Coogan, was Byron Allen’s proposed BuzzFeed investment. Variety reported that Allen is buying BuzzFeed, investing $120 million in the digital media company and becoming CEO. He would succeed BuzzFeed founder and CEO Jonah Peretti, who would move into a newly created role as president of BuzzFeed AI.

Coogan gave a short account of Allen’s media career. Allen began as a late-night talk show host, then built a business buying rights to broadcast in certain TV slots and independently selling advertising against the programming he put together. Jordi Hays clarified the economics: Allen was on the hook for airtime, and any spread between ad revenue and airtime cost was his. Coogan said Allen later bought the Weather Channel and other traditional television assets.

The BuzzFeed deal terms, as Coogan stated them, were that Allen Media Digital would pay $120 million for a 52% stake. Coogan said that was a huge jump from where BuzzFeed had been trading, which he recalled as roughly a $40 million to $80 million market cap — “almost a 3x premium.”
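The arithmetic behind that comparison can be sketched in a few lines. This is a rough illustration, not a valuation: it assumes a simple pro-rata reading in which $120 million for 52% prices the whole company proportionally, and it ignores deal structure, debt, and whether new shares are issued. The function and variable names are hypothetical, and the market-cap range is Coogan’s recollection rather than a checked figure.

```python
# Illustrative arithmetic only, using the figures Coogan cited on the show.
INVESTMENT_USD_M = 120.0                # reported price for the stake, in $ millions
STAKE_FRACTION = 0.52                   # reported ownership stake
MARKET_CAP_RANGE_USD_M = (40.0, 80.0)   # Coogan's recollection of the prior market cap


def implied_equity_value(investment: float, stake: float) -> float:
    """Value of 100% of the company if `stake` of it costs `investment`.

    Assumption: a simple pro-rata valuation with no adjustment for deal terms.
    """
    return investment / stake


implied_value = implied_equity_value(INVESTMENT_USD_M, STAKE_FRACTION)
multiples = [implied_value / cap for cap in MARKET_CAP_RANGE_USD_M]

print(f"Implied equity value: ${implied_value:.1f}M")
for cap, multiple in zip(MARKET_CAP_RANGE_USD_M, multiples):
    print(f"vs ${cap:.0f}M market cap: {multiple:.2f}x")
```

Under those assumptions the implied value is about $231 million, or roughly 2.9 times the top of the recalled range and 5.8 times the bottom, so “almost 3x” matches the $80 million end of Coogan’s estimate.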

Hays was skeptical of the asset. BuzzFeed has name recognition, he said, but he does not know anyone who wakes up wanting to know what BuzzFeed is talking about that day. He allowed that Allen is a media tycoon and may see something others do not, but questioned the play: if someone had $120 million to build a new media property, what else could they do? Is there a large audience still “sneakily more engaged”? Could Allen see a pivot to prediction markets?

Coogan pulled up BuzzFeed’s homepage to test the brand in real time. The top trending article, he said, was “50 ‘why would you put that thing in writing’ photos that prove people are the worst.” Other examples included “41 celebs people used to love and now can’t even stand to look at” and “22 stories about boy moms and their sons that will make you cringe into oblivion.” BuzzFeed still loves the listicle, he noted, and the lists appear to have gotten longer. A “5 key reasons” headline, he joked, would not make the cut at modern BuzzFeed.