AI-for-Science Advances Depend on Evaluation, Not Just Generation

Aishwarya Mandyam, Surya Ganguli, Aldís Elfarsdóttir, Amar Venugopal, Steven Dillmann

Stanford HAI · Friday, May 15, 2026 · 7 min read

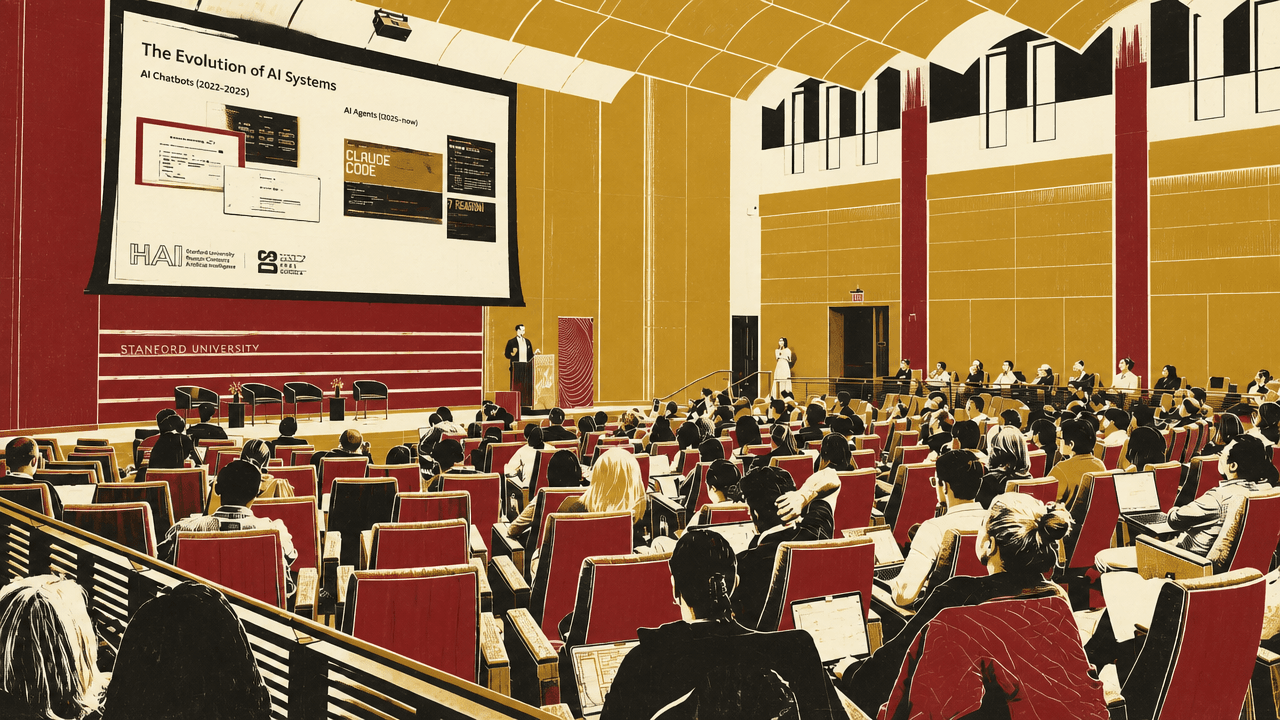

In a Stanford AI+Science lightning-talk session introduced by Surya Ganguli, four young researchers made a common case: AI-for-science is useful only when paired with rigorous evaluation. Aishwarya Mandyam, Amar Venugopal, Steven Dillmann, and Aldís Elfarsdóttir each treated AI systems or outputs as claims to be tested, whether through uncertainty estimates for clinical policies, causal checks on generated text, executable benchmarks for scientific agents, or empirical links between corporate climate language and later emissions.

AI-for-science lives or dies by evaluation

AI was presented here less as a generic assistant than as a measurement problem. The projects asked how to know whether an automated clinical policy is safe before deployment, whether a generated textual counterfactual isolates the feature it claims to test, whether a coding agent can complete real scientific workflows, and whether corporate climate language predicts future emissions.

Surya Ganguli introduced the talks as a rapid view into work by young Stanford researchers, with more detailed discussion reserved for poster sessions. The common thread across the research was narrower than “AI accelerates science.” Each project used AI-enabled methods only in combination with a way to evaluate reliability: statistical uncertainty for policies, causal identification for text, executable benchmarks for agents, and empirical links between disclosure language and future outcomes.

Clinical reinforcement learning needs policy evaluation before deployment

Aishwarya Mandyam described healthcare as a repeated decision process: a patient is observed, a clinician or clinical team chooses a treatment, and the cycle can repeat many times in a week depending on the care setting. At a higher level of abstraction, the clinician becomes an agent, the treatment becomes an action, the patient becomes the environment, and the patient response becomes a reward signal. That mapping makes healthcare a natural setting for reinforcement learning, but also a high-stakes one.

The central question in Mandyam’s talk was not how to learn a clinical policy, but how to know whether a learned policy is good before putting it in a hospital. She focused on off-policy evaluation, which uses an offline behavior dataset to estimate the value of a candidate policy. Higher estimated policy value means the policy is expected to perform better.

The difficulty is coverage. Prior off-policy evaluation methods rely on behavior datasets with finite size and limited support. If the new policy takes an action that was not observed in the dataset, the evaluator has little basis for estimating its value. Mandyam’s work asks whether synthetic data can be combined with real offline behavior data to produce better policy value estimates.
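To make the coverage problem concrete, here is a minimal sketch using ordinary importance sampling, a textbook off-policy estimator and not the method presented in the talk. The policies, state and action names, and rewards are all hypothetical, chosen only for illustration.

```python
# Minimal sketch: ordinary importance sampling for off-policy evaluation.
# All policies, states, actions, and rewards below are hypothetical.

def importance_sampling_value(episodes, target_policy, behavior_policy):
    """Estimate the target policy's value from episodes logged under the behavior policy.

    episodes: list of episodes, each a list of (state, action, reward) tuples.
    target_policy, behavior_policy: dicts mapping state -> {action: probability}.
    """
    total = 0.0
    for episode in episodes:
        weight = 1.0          # product of per-step probability ratios
        episode_return = 0.0  # undiscounted sum of rewards
        for state, action, reward in episode:
            target_prob = target_policy[state].get(action, 0.0)
            weight *= target_prob / behavior_policy[state][action]
            episode_return += reward
        total += weight * episode_return
    return total / len(episodes)


def unsupported_actions(episodes, target_policy):
    """List (state, action) pairs the target policy can take but the log never contains."""
    observed = {(s, a) for episode in episodes for s, a, _ in episode}
    return sorted(
        (s, a)
        for s, dist in target_policy.items()
        for a, prob in dist.items()
        if prob > 0 and (s, a) not in observed
    )


# One-step episodes logged by clinicians who choose between two treatments.
behavior = {"stable": {"drug_a": 0.5, "drug_b": 0.5}}
log = [[("stable", "drug_a", 1.0)], [("stable", "drug_b", 0.0)]]

covered = {"stable": {"drug_a": 1.0}}    # only takes actions present in the log
uncovered = {"stable": {"drug_c": 1.0}}  # takes an action the log never contains

print(importance_sampling_value(log, covered, behavior))    # 1.0
print(importance_sampling_value(log, uncovered, behavior))  # 0.0, uninformative
print(unsupported_actions(log, uncovered))                  # [('stable', 'drug_c')]
```

The second estimate is zero because every importance weight is zero, not because the uncovered policy performs badly. The logged data simply say nothing about an action that was never taken, which is the gap that additional data would have to fill.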

She separated the work into two estimation tasks. The first is initial-state-conditioned policy value estimation: for example, estimating the value of a treatment policy for patients in the same disease stage. For that case, her team introduced CP-Gen, an estimator designed to learn valid conformal prediction intervals. The second is unconditioned policy value estimation: estimating the value of a policy across patients regardless of initial state. For that task, the team introduced DR-PPI, which she said asymptotically learns valid confidence intervals.
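For intuition about what a valid conformal prediction interval involves, here is a minimal sketch of standard split conformal prediction. It is not CP-Gen, whose details were not described in the talk summary; the function name, the absolute-residual score, and the calibration numbers are assumptions for illustration.

```python
import math

# Minimal sketch of split conformal prediction with absolute-residual scores.
# This is the generic textbook procedure, not the CP-Gen estimator.

def split_conformal_interval(calib_predictions, calib_outcomes, new_prediction, alpha=0.1):
    """Return an interval meant to cover a new outcome with probability >= 1 - alpha,
    assuming calibration and new points are exchangeable."""
    scores = sorted(abs(y - p) for p, y in zip(calib_predictions, calib_outcomes))
    n = len(scores)
    # Finite-sample correction: use the ceil((n + 1)(1 - alpha))-th smallest score.
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        # Too few calibration points for this alpha: only the trivial interval is valid.
        return (-math.inf, math.inf)
    q = scores[rank - 1]
    return (new_prediction - q, new_prediction + q)


# Hypothetical calibration set: predicted vs. realized policy values.
predictions = [10.0] * 10
outcomes = [11, 8, 13, 6, 15, 4, 17, 2, 19, 0]

print(split_conformal_interval(predictions, outcomes, new_prediction=12.0, alpha=0.2))
# (3.0, 21.0)
```

The interval width comes entirely from how wrong the predictions were on held-out calibration data, so a poorly calibrated value model produces wide intervals rather than silently overconfident ones.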

Mandyam’s closing claim was that reliable policy evaluation can enable safe automated clinical decision-making. The point was therefore narrower and more demanding than “AI can help doctors”: automated clinical decision systems require a pre-deployment statistical account of uncertainty, especially when real-world data do not cover the decisions the learned policy might make.

Text experiments need counterfactuals that change the right thing

Amar Venugopal presented a pipeline for causal experimentation with text data, aimed at settings where researchers observe documents paired with outcome variables and want to know which textual qualities affect those outcomes. His motivating example was political speech: a politician gives speeches, each speech is associated with polling or approval numbers, and a social scientist wants to identify which aspects of the speech drive variation in approval.

The proposed pipeline has three parts. First, derive a hypothesis from a labeled dataset by featurizing the text and summarizing the qualities likely to matter. Second, modify a new piece of text in line with that hypothesis, producing a quasi-counterfactual version that differs from the original only along the identified textual dimension. Third, run a randomized experiment with respondents and estimate the causal effect of the modification.

The concrete example used California city council meeting public comments, with civility as the outcome. The generated hypothesis was that “insults using ‘you’” are a textual feature likely to affect civility. Given an anodyne comment about leaf blowers — “Thank you for your passionate advocacy... I fully concur with your concerns regarding leaf blowers” — the method produced a more aggressive version: “You continue to recycle your failed attempts at relevance, hiding behind your leaf blower fiasco.”

That example also showed why the causal problem is not solved by text generation alone. The intended modification was to add insults using “you,” but the generated text may also change other attributes, such as introducing disagreement rather than agreement. Venugopal said a naive control strategy fails to recover the true conditional average treatment effects shown in the example, while the team’s residualization-based technique more accurately recovers them.
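The confounding problem can be illustrated with a generic partialling-out regression. This is a minimal sketch of residualization in the Frisch-Waugh-Lovell sense, not the team's estimator: it recovers a single average effect under a linear model, whereas the talk concerned conditional effects. The variable names and the noise-free toy data are hypothetical.

```python
# Minimal sketch: residualizing out an unintended text change before
# estimating a treatment effect. Toy data; not the authors' method.

def mean(values):
    return sum(values) / len(values)


def slope_and_intercept(x, y):
    """Ordinary least squares fit of y on a single regressor x."""
    mx, my = mean(x), mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def residualize(target, nuisance):
    """Remove the part of `target` that is linearly predictable from `nuisance`."""
    slope, intercept = slope_and_intercept(nuisance, target)
    return [t - (intercept + slope * n) for t, n in zip(target, nuisance)]


# insult: 1 if the rewritten comment contains a "you" insult (intended change).
# disagreement: 1 if the rewrite also flipped agreement to disagreement (unintended).
insult = [0, 0, 0, 1, 1, 1]
disagreement = [0, 0, 1, 0, 1, 1]
# Toy civility ratings generated as 5 - 2 * insult - 3 * disagreement.
civility = [5 - 2 * t - 3 * d for t, d in zip(insult, disagreement)]

naive_effect, _ = slope_and_intercept(insult, civility)
adjusted_effect, _ = slope_and_intercept(
    residualize(insult, disagreement), residualize(civility, disagreement)
)

print(round(naive_effect, 6))     # -3.0: mixes the insult effect with disagreement
print(round(adjusted_effect, 6))  # -2.0: the effect built into the toy data
```

In the toy data the rewrites that add insults also tend to add disagreement, so the naive comparison overstates the insult effect; removing the disagreement-related variation from both treatment and outcome recovers the value used to generate the data.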

The methodological claim was that AI can help generate and manipulate textual treatments, but valid causal estimation still depends on accounting for correlated changes introduced during generation. The pipeline, as shown in the final slide, links labeled data, SAE probing, hypothesis generation, steering interventions, experimentation, and CATE estimation via residualization.

Scientific agents need benchmarks built from real workflows, not open-ended ambitions

Steven Dillmann argued that the next stage of AI-for-science progress depends on benchmarks that evaluate agents on executable scientific work. His reference point was Terminal-Bench, a benchmark for terminal-based AI agents such as Claude Code, OpenAI Codex, and Gemini CLI. Unlike chatbots, these agents write, execute, debug, and iterate on code through a computer terminal.

Dillmann said Terminal-Bench became a standard evaluation for terminal-based agents because it was built as a community effort with high-quality tasks and rigorous review. The slide described 89 tasks across software engineering, system administration, and other computer-use settings, along with more than 300 hours of manual task verification.

The progress curve was presented as steep: the best model in March 2025 scored about 20% on Terminal-Bench; by the end of 2025, scores were around 60%; and as of the week of the talk, frontier models such as ChatGPT 5.5, Gemini 3.1 Pro, and Opus 4.6 were around 80%.

Terminal-Bench Science is intended to apply the same benchmark logic to scientific workflows. The target is more than 100 tasks across life sciences, physical sciences, earth sciences, and mathematical sciences. Dillmann emphasized three criteria: tasks must be scientifically grounded, objectively verifiable, and genuinely difficult. The benchmark is not aimed at open-ended hypothesis generation or literature review, because those are too ambiguous to verify. It is aimed at real-world execution-based workflows that current agents cannot yet solve reliably.
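As an illustration of what "objectively verifiable" can mean for an execution-based task, here is a minimal sketch of an automated check on an agent's output. It does not reflect the actual Terminal-Bench or Terminal-Bench Science task format; the function, the JSON result convention, the quantity name, and the tolerance are all hypothetical.

```python
import json
import math

# Hypothetical verifier: passes only if the agent reports every reference
# quantity as a number within a relative tolerance. Not a real benchmark API.

def verify_result(result_json, reference, rel_tol=1e-3):
    """Return True if the agent's JSON output matches all reference values."""
    try:
        reported = json.loads(result_json)
    except json.JSONDecodeError:
        return False  # unparseable output fails the task
    if not isinstance(reported, dict):
        return False
    for name, expected in reference.items():
        value = reported.get(name)
        # Reject missing values and non-numeric types (bool is a subclass of int).
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            return False
        if not math.isclose(value, expected, rel_tol=rel_tol):
            return False
    return True


# Hypothetical reference answer for a made-up analysis task.
reference = {"orbital_period_days": 365.256}

print(verify_result('{"orbital_period_days": 365.3}', reference))  # True
print(verify_result('{"orbital_period_days": 400}', reference))    # False
print(verify_result("I think it is about a year", reference))      # False
```

A check like this has a single unambiguous pass or fail outcome, which is what separates execution-based tasks from open-ended ones such as hypothesis generation, where no comparable reference value exists.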

The desired loop is explicit: scientists contribute real workflows as benchmark tasks; frontier labs evaluate and improve agents on those tasks; better agents then help scientists accelerate research. Dillmann called this a path toward a “Claude Code/Codex for Science” moment, with scientists shaping the problems on which AI systems are evaluated.

Climate disclosure credibility may be visible in how firms write, not just what they promise

Aldís Elfarsdóttir used corporate climate disclosures as a setting where language itself may contain signals of credible intent. She described a dataset covering a decade of disclosures from 11,000 companies, which together correspond to 50% of global market capitalization. The disclosure surveys are voluntary: companies may respond because they want to, because investors want them to, or because supply-chain buyers want them to. The question is whether companies are likely to do what they say they will do.

Her team evaluates credibility using a regression with future three-year emissions as the outcome and communication signals, controls, and fixed effects as predictors.
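Schematically, the regression can be written as follows. The notation is an assumption made here for illustration: the talk summary does not specify the functional form, whether emissions enter in levels or logs, or which fixed effects were used.

```latex
\[
E_{i,\,t+3} \;=\; \beta^{\top} C_{i,t} \;+\; \gamma^{\top} X_{i,t} \;+\; \phi_{i,t} \;+\; \varepsilon_{i,t}
\]
% E_{i,t+3} : emissions of company i three years after disclosure year t
% C_{i,t}   : communication signals extracted from the disclosure text
% X_{i,t}   : control variables
% \phi_{i,t}: fixed effects (type unspecified in the source)
% \varepsilon_{i,t} : error term
```

Under this reading, the coefficients in \(\beta\) are the quantities of interest: they describe how later emissions vary with the way a company communicates, holding the controls and fixed effects constant.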

Elfarsdóttir described four contributions. The team used state-of-the-art language models on a novel dataset to produce new features from complex text. It linked credible intent to interactions between language and targets, including specificity multiplied by target type and climate language multiplied by target audience. It controlled for a richer set of information than prior work, including reporting history, initiative types, and audience. It also included private companies, whereas she said the literature has focused on public companies.

The main empirical distinction was between specific language and vague climate language. Specificity — granular, detailed language with quantitative elements, proper nouns, and action items — added credibility to climate targets. Elfarsdóttir compared it to asking an AI agent for a plan before it carries out an objective: if the plan is good, one can have more confidence in the objective. In practical terms, she said the coefficient was like reducing Scope 2, or indirect purchased electricity emissions, by about 20% at the three-year mark.

By contrast, high use of vague climate language around terms such as “net zero,” “renewable,” “sustainable,” “transition risk,” and “climate opportunities” was associated with higher three-year emissions when interacted with a target audience that included investors. Elfarsdóttir interpreted this as a potential signal of greenwashing, while also noting a possible selection explanation: richer companies may emit more and also be able to spend more on climate reports. Compared with baseline, she said the Scope 1 coefficient was like adding two natural gas plants’ worth of direct emissions by the three-year mark.

The conclusion slide put the claim succinctly: “It’s not what firms say, it’s how they say it.” The practical instruction was to look for a specific, detailed path to a cleaner future rather than relying on the existence of a target or on broad climate vocabulary.