AI Is Moving Deeper Into Science, but Validation Remains the Bottleneck

Kyle Cranmer

Kyle Cranmer Douglas Finkbeiner

Douglas Finkbeiner Benjamin NachmanCarina HongStanford HAIFriday, May 15, 202623 min read

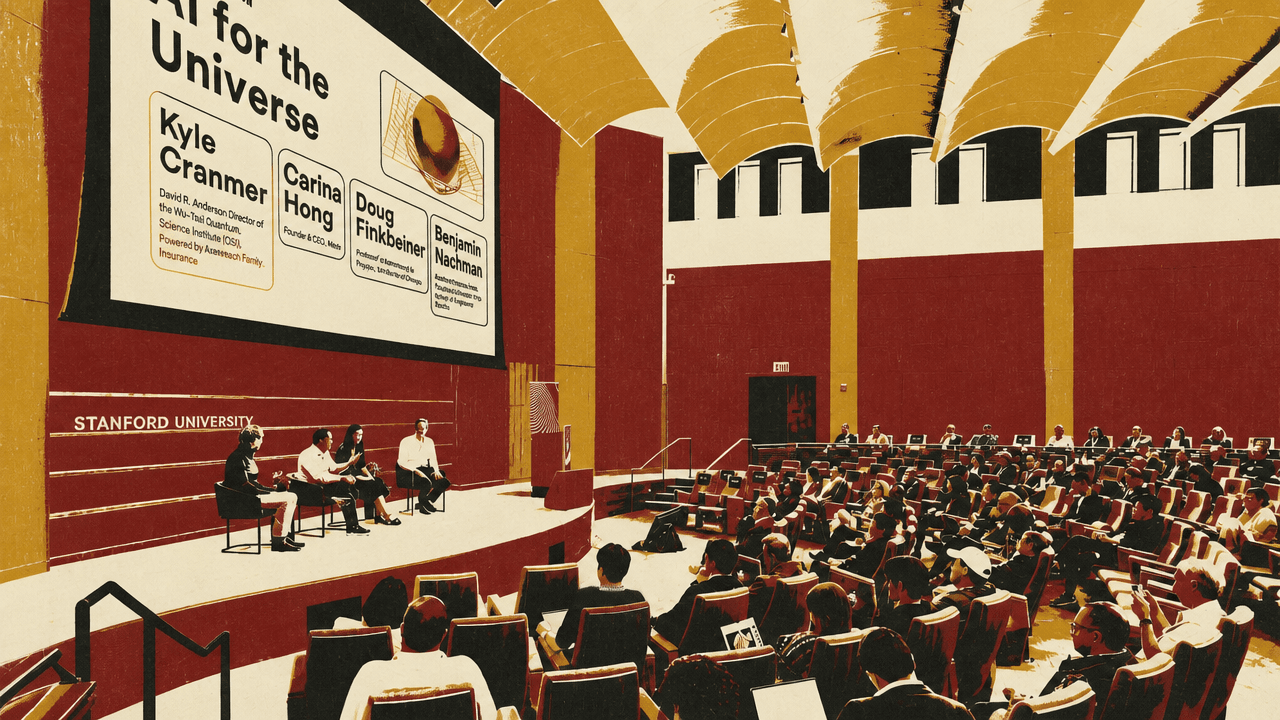

Benjamin NachmanCarina HongStanford HAIFriday, May 15, 202623 min readAt AI+Science: AI for the Universe, Kyle Cranmer, Carina Hong and Douglas Finkbeiner argued that AI is already embedded in scientific work, but its value depends on where validation happens. Cranmer framed physics applications around prediction and inference, where formal checks, simulator calibration or uncertainty correction determine whether model output can support scientific claims. Hong made the parallel case in mathematics, where Lean-style formal proof gives some AI results a clean score but leaves problem selection and theory-building with experts. Finkbeiner said astronomy’s newer disruption is the desk-level AI collaborator, which can improve research work while increasing the need for verification and scientific judgment.

AI’s scientific value depends on where the checking happens

Kyle Cranmer framed AI for science less as a collection of domains or methods than as a set of roles inside the scientific process. The relevant question, in his view, is not simply whether a system is a generative model, a density estimator, or a theorem prover. It is where the system enters the work: forming hypotheses, making predictions, gathering data, inferring theory from observations, designing experiments, controlling instruments, or executing workflows. Just as important is what kind of deficiency the system is expected to have, and what mechanism makes the science trustworthy anyway.

That framing makes AI in physics look less like a single capability and more like a set of recurring verification regimes. Cranmer focused on prediction, where a theory is used to generate expected data, and inference, where observed data is used to estimate or test theory. In both cases, AI can be powerful, but only under very different contracts.

In symbolic theoretical physics, the contract can be unusually clean. Physicists often work inside an accepted framework such as quantum mechanics and try to derive the exact symbolic formula for a specific situation. Modern calculations can involve millions or billions of terms; the blackboard image of deriving everything by hand no longer matches the computational reality. Cranmer described work that treats some theoretical calculations as search over a discrete space of candidate answers, with generative AI proposing possibilities and formal verification checking them.

The important point is that the AI model does not have to be correct by itself. It has to be good at guessing candidates that a separate verification process can accept or reject. Cranmer compared the pattern to AI for mathematics, where a system may try to produce a proof that a proof assistant can verify. He also compared it to drug and materials discovery, where a model proposes a molecule or material that still has to be synthesized and tested. In this pattern, AI accelerates exploration; correctness lives elsewhere.

We don't need the model to be correct, it just needs to be good at guessing.

Carina Hong described formal theorem proving in mathematics as another version of that contract, but with a sharper scoring mechanism than informal mathematical prose. In her account, AxiomProver produces Lean output. If the proof compiles in Lean, the system has proved the statement as formalized; the score is not a fuzzy expert consensus but “zero or perfect.” That property matters because, in Hong’s telling, recent AI-generated informal proofs have often been difficult for mathematicians to grade: sentences can feel partly right, partly wrong, or hand-wavy, making consensus hard.

Formal verification does not remove mathematical judgment. Hong’s strongest caveat was that expert knowledge remains central. AI for mathematics is “here,” she said, but the hype headlines miss how much success comes from experts selecting the right problems, formalizing them in the right way, and knowing whether a target sits inside the available formal library. In Lean-based proving, the Mathlib library defines the boundary of what can be formalized efficiently. Hong said choosing a problem whose statement or proof lies within Mathlib is extremely difficult; she cited First Proof as an example where, in her account, only one problem met that criterion, limiting participation by formal math startups.

Cranmer’s second prediction example had a different kind of guarantee. In numerical prediction, especially in particle physics, approximate errors can contaminate results unless they are explicitly corrected. He used magnetic moments as an example of prediction at extreme precision. For the electron, the theory and experiment values shown on his slide were given to many decimal places, with a level of precision he likened to hitting a hole-in-one from here to China. For the muon, he pointed to the Fermilab Muon g-2 experiment, where data show oscillations corresponding to the muon’s precession in a magnetic field, and theory and experiment are compared at roughly parts-per-billion precision.

Part of that theoretical prediction relies on lattice field theory, a computational approach for studying quantum chromodynamics on a discretized space-time lattice. The task is to estimate expected quantities over distributions of quantum field configurations. Cranmer described these objects as four-dimensional boxes with enormous numbers of degrees of freedom; one slide gave a lattice example with roughly 10^9 numbers, and another contrasted image generation with quantum-field generation: about one million degrees of freedom for a 512-by-512 RGB image versus roughly 100 billion degrees of freedom for a 256-by-256-by-256-by-512 lattice with SU(3) link variables.

Hamiltonian Monte Carlo was invented for this class of problem, Cranmer said, and lattice QCD remains a major user of national-lab supercomputers. But the traditional methods have limitations. The AI-enhanced alternative is to train generative models, such as normalizing flows, to approximate the target distribution and sample from the model rather than rely only on traditional Monte Carlo procedures.

Here again, the central issue is not whether the learned model is perfect. Cranmer emphasized that it will not be. If predictions were made directly from an imperfect learned distribution, the answer could be wrong. But for certain AI models, the mismatch can be evaluated and corrected. Those corrections can produce unbiased estimators and sensible uncertainty estimates. The guarantee is not the same as a formal proof; Cranmer compared it to the guarantees of applied mathematics. In his terminology, this falls under the broader “computational microscope” pattern, with analogues in molecular dynamics, statistical physics, and related fields.

Across these examples, the shared pattern was not that AI replaces scientific validation. It was that different scientific settings require different checking mechanisms: formal proof for symbolic candidates, applied-mathematical correction for learned samplers, experimental synthesis for molecules and materials, and expert review when no mechanical checker exists.

Inference is where a model error can become a scientific claim

When Cranmer turned from prediction to inference, the risk model changed. In experimental particle physics, astrophysics, and cosmology, AI is often embedded in a pipeline that begins with raw detector or observational data and ends with scientific claims. If the model is wrong inside that pipeline, the published conclusion may be wrong. In this setting, he said, “mistakes really matter,” and uncertainty quantification, systematic uncertainties, calibration, and robustness to distribution shift become front and center.

A major reason AI is now central to inference is the role of high-fidelity scientific simulators. Cranmer argued that the forefront of scientific knowledge is often encapsulated in such simulators: particle colliders, neuronal activity, epidemics, gravitational lensing, and the evolution of the universe were all shown on one scale-spanning slide. In AI language, simulators are generative models because they generate data. But Cranmer stressed that they are also causal models: they contain mechanisms that allow intervention-like reasoning, which is crucial for science.

The difficulty is that simulators are often poorly suited to downstream tasks such as statistical inference, experimental design, and decision-making. Cranmer illustrated this with a particle-physics simulation chain. One begins with theory parameters theta. Those parameters feed a model of parton-level momenta, then a shower-splitting model, then a detector-interaction model, and eventually observables x, the raw data. Software packages such as MadGraph, Pythia, and GEANT4 model different pieces of that chain, and each can be viewed as a probability distribution of output given input.

The likelihood needed for inference is a large integral over latent variables: p(x|theta) equals the integral over detector, shower, and parton-level latent variables of p(x|z_d), p(z_d|z_s), p(z_s|z_p), and p(z_p|theta). Cranmer’s point was that this object is central and intractable. The integral spans an enormous latent space; one cannot evaluate it directly.

Simulation-based inference addresses that problem by using deep learning and neural density estimation to approximate likelihoods, likelihood ratios, or posterior distributions from simulator output. The simulator produces training data; a neural network learns a surrogate; the surrogate supports statistical inference. Calibration and verification remain tied to the simulator.

Cranmer contrasted this with older “spherical cow” statistical modeling, where the data-generating process had to be simplified enough to make inference tractable. Simulation-based inference allows researchers to keep much more of the simulator’s complexity while still producing principled statistical claims.

He described the field as rapidly growing, with one slide showing 1,185 simulation-based inference papers across 33 categories, including statistics, astrophysics, computer science, physics, evolutionary biology, mathematics, and ecology.

Douglas Finkbeiner placed astronomy squarely inside this broader simulator-and-inference shift. AI is already infrastructure in astronomy, not an imminent novelty. Survey pipelines use machine learning to classify alerts; Rubin/LSST alert brokers were shown as handling around 10^7 transients per night. Cosmological inference uses simulation-based methods. Image analysis uses convolutional networks for galaxy morphology, strong-lensing detection, and deblending. Literature workflows use domain LLMs and arXiv summarizers. Telescope operations use reinforcement-learning scheduling and machine learning for active optics.

The broader conclusion from the physics and astronomy examples was not that AI has solved scientific inference. It was that AI is changing the shape of the pipeline. Cranmer said AI is now moving beyond assisting predictions and powering inference into hypothesis generation, experiment design, and execution of scientific workflows. That shift, he said, reflects a tightening integration of the scientific process itself.

The new disruption is not survey-scale AI but the collaborator at every desk

Finkbeiner’s distinction was between the “project layer” and the “desk layer.” The project layer consists of survey-scale machine learning built by specialists and embedded at the level of the instrument or survey. That has been maturing for five to ten years. The desk layer is newer: an AI collaborator at every graduate student’s desk, helping with code, plots, fitting, writing, and everyday research work. That layer, he said, is reshaping training and research and is the one that most worries young scientists.

Finkbeiner, a professor of astronomy and physics at Harvard who has been on leave at Anthropic working with the mechanistic interpretability group, said he moved there because he became convinced that the transition to powerful AI, done safely and aligned with human values, was a high priority. He described it as a “drop everything” moment. But his message to scientists was not panic. The capabilities are real, the risks are real, and the work is changing; still, he argued that the work of human scientists is not disappearing.

The fear he addressed was straightforward: if an AI agent can take an abstract assignment, write code, debug it, run analysis, and write it up, perhaps students and postdocs become unnecessary. He rejected both extremes he attributed to a recent framing by David Hogg: “let the AIs cook” without restraint, or ban and punish their use, especially for students. He argued instead for a middle path already emerging in research groups.

His examples were deliberately mundane. One student, Theo O’Neill, wrote a paper on gas clouds using a 3D dust map and other datasets. The science, Finkbeiner said, was done in a relatively traditional way. AI did not primarily make the paper faster. It made the visualizations much better: an interactive 3D rendering of high- and intermediate-velocity clouds in the local interstellar medium, with browser-based controls for spinning, filtering by cloud type, recoloring by velocity or dust, and clicking to inspect. The parts a busy graduate student would normally cut—interactivity, sliders, polish—became feasible.

Another student was searching for a machine-learning architecture related to photon pileup in Chandra X-ray data. Before AI assistance, a student might try two or three architectures, choose the most promising, tune it, and ship before the paper deadline while wondering what was missed. With AI assistance, Finkbeiner said, the student could sweep a broader design space, test variants she would not have had time to write, and find a stronger model. The deadline did not change. The number of papers did not explode. The result inside the paper improved.

This produced what Finkbeiner called a productivity paradox. The feared outcome was 100 papers per year per scientist, an explosion of output count, and redundancy for many science jobs. What he sees instead, at least in his hallway at the Center for Astrophysics, is roughly the same number of papers, but sharper, deeper, and more thorough. Time saved in one place is reinvested into doing the science better.

His caution was that this may differ by field. Astronomy, he said, is often driven by the desire to understand the universe. If an AI claimed to know the answer but could not explain it, that would not satisfy the purpose. In some applied domains, such as finding a drug that works, a black-box answer may be more acceptable. He expects a split between sciences where human-understandable explanation remains central and domains where a working answer may be enough.

The risks he named were not cosmetic. AI systems hallucinate references, equations, and results, so verification must be a deliberate workflow step. They can be brittle. They can induce deskilling. “The doom here isn’t mushroom clouds,” he said; “it’s we all get lazy and stupid.” He compared it to other technological conveniences that reduce physical effort. The risk is that scientists lose the literal and metaphorical muscle of doing things themselves. Over-reliance is related: outsourced judgment remains outsourced judgment, and humans need to stay in the loop on the questions that matter.

Finkbeiner’s final posture was conditional optimism. Humans are not about to know what dark energy and dark matter are “by September.” The questions remain open. The tools are unprecedented. The allocation of scientific labor may change substantially—perhaps more or less emphasis on data analysis, theory, or instrument-building—but he did not see humans cut out of the loop on a grand scale soon.

Math progress is easiest to celebrate where the scoring is clean

Hong described AI for mathematics as moving extremely quickly, especially over the prior year and a half. But her central distinction was between problems whose scoring is clear and research mathematics, where the community still lacks a stable way to measure progress.

On competitions, the headline progress in Hong’s account was dramatic. She traced a timeline beginning in July 2024, when Google DeepMind’s AlphaProof achieved an International Mathematical Olympiad silver medal, one point short of gold. In December 2024, there was no official major-lab attempt at the Putnam, and model solutions were hard to score because answers seemed partly right, partly wrong, and hand-wavy. By July 2025, she said multiple labs and startups in the United States and China participated in the IMO and “everyone got a gold medal,” with a 35 out of 42 performance. By December 2025, according to Hong and her slide, Axiom and Numina achieved perfect scores on the Putnam, an undergraduate exam whose median score she described as zero out of 120.

| Area | What is easier to measure | What remains hard |

|---|---|---|

| Olympiad and Putnam problems | Known exams provide repeatable benchmarks and clear scoring targets. | Informal model solutions can still be hard to grade when reasoning is hand-wavy. |

| Open research problems | Specific wins can be documented after expert review or formal verification. | There is no broadly agreed benchmark, and hard informal proofs are difficult to score. |

| Formal theorem proving | Lean or another proof assistant can check whether a formal proof compiles. | Choosing problems within existing formal libraries is itself difficult. |

Research mathematics is harder to measure. Hong said very few benchmarks exist for AI performance on open research problems. Frontier Math Tier 4, as she described it, is a private benchmark owned by OpenAI, with problems never solved before, numerical answers, and a narrative that each would take an entire math department to solve. Because it is private, she said, other groups cannot use it to track progress. Google DeepMind’s Formal Conjectures formalizes known open conjectures, but Hong said it spans too wide a difficulty range, from Riemann Hypothesis-level problems to more trivial open problems, making the distribution hard to interpret. A more recent “First Proof” effort had only ten PhD-level problems made by famous mathematicians, and, in her account, grading AI-generated informal proofs proved harder than expected.

Without a benchmark, the field has turned to specific problem wins. Hong called this, half-jokingly, “mathematical Pokémon hunting.” Erdos problems have become one hunting ground: a repository maintained by Thomas Bloom of combinatorial problems proposed by Paul Erdos, not to be confused with the harder Erdos Prize problems. The difficulty is that several apparent AI solutions to “open” Erdos problems turned out to be rediscoveries of solutions already in the literature. Hong said large language models proved good at literature search inside their training data, and the resulting mishaps decreased trust among mathematicians who had been promised open-problem solutions.

Still, continued attempts have produced movement, as Hong described it. A community GitHub repository tracking AI contributions to Erdos problems lists multiple problems solved in standalone ways and others closed because previous solutions were found. Hong said well-resourced groups began to “just fold everything,” borrowing the AlphaFold-style lesson: try the whole repository. The result, in her account, was that the number of truly solved Erdos problems rose from zero to roughly a dozen, while many more were marked closed because the literature already contained solutions.

Hong’s own examples were not claims of solving famous conjectures outright, but partial research progress. One result, shown as accepted by Archiv der Mathematik, concerned “almost all primes are partially regular.” Hong explained that in a certain decomposition of class groups, odd-indexed subspaces are well understood, while even parts are not; a longstanding conjecture says the even subspaces should vanish. AxiomProver, in her account, proved a theorem showing that almost all primes have part of these even-indexed subspaces vanish. She described this as a case where a mathematician had an interesting target, was not confident AI could solve it fully, gave it to AI anyway, and obtained partial progress.

Another result, shown as accepted by Indagationes Mathematicae, concerned the Ramanujan tau function. Assuming the ABC conjecture, Hong said, the work showed that the Ramanujan tau function misses 100% of primes in a specific asymptotic sense, with S(X)=O(X^(2/3)) and S(X)/pi(X) tending to zero. Hong emphasized that even whether the tau function vanishes is the famous Lehmer conjecture, so partial results around its values are meaningful. Here again, she stressed that Lean verification made the proof checkable as code.

Autoformalization is another important middle ground. If a mathematician already has a proof or proof sketch, AI may translate it into Lean so that a proof assistant can verify it. If some steps are hand-wavy or wrong, formalization can expose that. Hong also pointed to journal review: math and adjacent journals may lack enough human referees, and a system that can turn a paper into Lean could reduce reviewer burden. She cited examples accepted by Research in the Mathematical Sciences where, in her account, Lean formalization sped up review.

She described Google DeepMind’s Aletheia as an informal system powered by Gemini Deep Think. Unlike AxiomProver, which she presented as formal, Aletheia lets users prompt in natural language and includes a generator, candidate solution, verifier, and revision loop. Hong said DeepMind had a useful way of labeling contributions from negligible novelty through publishable research and landmark breakthroughs, while also identifying the degree of human-AI collaboration. In Hong’s description, Aletheia had solved some problems across lower levels and produced publishable research at level two; she also said the inference-scaling behavior observed at Olympiad level appeared to transfer to research-level problems.

Prompting general LLMs is also part of the field. Hong mentioned mathematicians ranging from Donald Knuth to Timothy Gowers using systems such as Claude, OpenAI models, and Grok to work on research questions. Her message was not that all mathematicians should wait for specialized systems. It was that they should try the available models and agents on real research targets, while recognizing that expert judgment is not optional.

The system-performance ingredients she named were concrete: scaling inference-time compute, especially beyond AlphaProof-style Monte Carlo tree search; agents that recursively decompose problems and learn to backtrack; very good tool calls; strong Lean infrastructure, including AXLE; high-quality data; and expert knowledge whether or not interaction is allowed. She also argued that formal theorem proving’s machine-learning gains have not been fully harvested, and that combining AI for construction or discovery with AI for proving may produce better workflows.

Hong also drew a line between proving and broader mathematical research. AI has shown strength at theorem proving, proof refinement, and in some cases solving selected research problems. It has not yet clearly shown the ability to function like a research mathematician in theory-building: choosing the problem to solve, generating interesting conjectures, inventing key definitions, or producing new frameworks. Whether there has been a “genuinely new idea” from AI remains hard to define.

The paper is becoming one artifact among many

Finkbeiner used the AI-heavy workflow to question academic publishing at a deeper level than manuscript drafting. The old pipeline was data to analysis to paper to reader. The emerging pipeline, as he drew it, includes code, data, a “claude.md” or similar context artifact, AI systems interacting with papers and humans, and AI systems both writing and reading. If AI writes much of the paper and AI reads much of the paper, he asked whether a paper in a journal is still the right API for scientific communication.

He did not offer a replacement. He floated possibilities: GitHub, Zenodo, model memory, skill files, or letting one researcher’s AI interact with another researcher’s AI. His own reading habits have already shifted. He said he reads AI summaries of papers more often than papers themselves, and reads the paper only when he needs the details to reproduce or inspect the work.

But he separated reading from writing. Papers have served multiple functions: communication, search and discovery, and pedagogy among them. Some of those functions may move. AI can summarize on demand, search artifacts by embedding, and explain a paper at different levels. The writing function is different. Constructing an argument, defending each claim, and situating it against prior work catches errors and sharpens thinking. Even if the only immediate reader is an AI, Finkbeiner argued, writing may remain valuable for the writer, especially for students learning how scientific claims are built.

Benjamin Nachman extended the question beyond papers: if the paper may no longer be the right unit, are talks the right unit? Are classes? The question matters because scientific artifacts now train not only people but tools. Researchers want the next generation to use AI systems well, but also to develop them. The format of knowledge transfer becomes part of the research infrastructure.

Hong suggested a “GitHub for math” model, pointing out that software projects can involve hundreds or thousands of collaborators while mathematics papers are often written alone or with a few coauthors. She cited polymath-style efforts and large-scale formalization projects as early signs that mathematical work can be decomposed into many subtasks. Finkbeiner declined to prescribe the new interface, saying he had meant to raise the question rather than answer it.

Cranmer, discussing publishing and trust, separated infrastructure from editorial restraint. If science produces many more artifacts, the infrastructure should scale to handle them. But more artifacts do not automatically solve reproducibility or trust. He noted that methods sections were once narrative instructions for reproducing wet-lab experiments. As computational work became more complex, exact computational reproducibility often meant packaging an environment so that it could be rerun bit for bit. That has value, but it can become little more than zipping and unzipping the same files.

AI may make narrative reproducibility newly important. If a paper or artifact specifies what needs to be done, what checks matter, and what conclusions should follow, perhaps another AI system—possibly a future, stronger one—can redo the work independently. The test becomes whether the conclusion survives re-execution, not merely whether the original container runs.

Trust, Cranmer said, is also social. Large collaborations already contain many people testing clever ideas. A new method is not accepted just because it exists; colleagues must be convinced that it is trustworthy. That process of walking others through the method and building confidence may remain a rate limiter on scientific output as long as science remains a human endeavor.

Open data becomes more fragile when AI can turn it into papers quickly

One of the sharper tensions concerned openness. Finkbeiner said he fears for open data. Science and AI have benefited from open datasets, but AI changes the cost of converting data into publishable results.

His example was the Fermi Gamma-ray Space Telescope, a project he worked on 10 to 15 years earlier and in which Stanford played a large role. He said its photons are still posted online daily as a list of photons. NASA required openness, and the collaboration was comfortable with it because producing the key papers required the knowledge of a 200- or 300-person project. Outsiders could access the data, but only insiders could realistically use it at the same level.

Finkbeiner argued that assumption may no longer hold. With a sufficiently large cloud instance and enough Claude usage, he said, one might reproduce many of those papers quickly. That raises a new question: is it safe to release data openly if someone can instantly scoop the collaboration that produced it?

Hong added that in the AI era, the valuable artifact is not only final data or final solutions. It is the trail of attempts: the failures, backtracks, skills, context, and experience that systems can learn from. Targets matter because they generate those trajectories. The process data around how a solution was reached may become as important as the final answer.

Finkbeiner’s validation rule was deliberately familiar: validate AI results the way scientists validate results from graduate students. A graduate student is also, in his phrase, a black box whose weights and biases cannot be inspected. The advisor asks: have you tried this test, how do you know the claim is true, does it match the asymptotic limit, what checks have you run? The same style of interrogation applies to AI.

Hong, approaching verification from mathematics and code, said standalone LLM judges are not reliable enough if one simply asks one model to grade another model’s answer. Better agent-loop harnesses may help. But when the object being verified is step-by-step reasoning or code rather than a numerical answer, formal verification becomes more important. Math is “verifiable” only in limited ways when the benchmark asks for a number. If the goal is to check proof steps or software behavior, formal systems become much more central.

A new discipline is less important than a new habit of abstraction

Nachman raised the question of whether AI for physics and AI for math require their own training path, career path, or disciplinary identity. The answers were cautious and leaned away from creating a wholly separate discipline.

Cranmer said the sciences differ sharply. Some are experimental; some are observational. Astronomy and particle physics are often hard to intervene in: one cannot touch stars, and even particle colliders offer limited flexibility compared with a tabletop experiment. Other fields can change experimental conditions much more rapidly. Physics often has a clear mechanism and causal story, while in social science or health care the causal structure may be more contested and must be teased apart by careful experimental design. Still, Cranmer saw a unifying thread in mathematical framing, mechanisms, and causality.

Hong connected AI for mathematical discovery to Cranmer’s work in theoretical physics. Some math systems search for outlier patterns, predict structures, or solve puzzle-like formal systems, and she saw that as close to parts of AI for theoretical physics: the same playbook can sometimes move across domains.

Finkbeiner was skeptical that a new department is the answer. He relayed a colleague’s analogy: when differential equations became important, universities did not create departments of differential equations; they started using them. AI, in that sense, becomes part of what scientists do. He later clarified that this does not mean nothing changes; departments did add classes on differential equations.

Cranmer’s answer was more about skill formation than administrative boundaries. He argued for use-inspired research: real scientific problems expose constraints that would be hard to imagine in the abstract. The key skill is to identify what is unique about a scientific problem, articulate it in the language of applied mathematics or AI, abstract it correctly, and make it portable. Simulation-based inference is his example: motivated by particle physics, abstracted properly, and then useful across many domains. That ability to extract and transfer patterns is not something most physicists are trained to do deliberately, but Cranmer thinks more people should.

Hong added that open-source software and documentation matter for training. When tools such as AlphaEvolve are closed, researchers may build open approximations, but usability depends on detailed manuals, code templates, demos, and notebooks that let mathematicians or physicists drive the process themselves. She said compute and high-quality data are needed to push the frontier in formal theorem proving, but academics can still build effective agent loops around available models and open-source provers, sometimes outperforming stronger base models through better systems and data.

An audience question about the role of the research university returned the discussion to training, discipline, and the risk of atrophy. Cranmer said universities still need to walk students through core concepts, critical thinking, and the kinds of questions scientists ask. These skills will remain important and may become more important, though in a different way. The institution has to adapt, but its core function is not obsolete.

Hong said training gives discipline, execution, and agency. It teaches people to think rigorously about science, and rigor can lead to out-of-distribution innovation. Since AI has not yet figured out how to conjecture in the full mathematical sense, she argued, learning fundamentals and rigorous proving may still be what produces the “creativity spike.”

Finkbeiner addressed the worry that students will spend more of their careers doing exercises where the answer is already roughly known. He noted that universities already do this: senior theses, junior theses, and other training projects often have a known general shape even if the exact answer is not predetermined. That pattern may extend further into graduate school. But he rejected the simple story that the frontier will recede exponentially beyond human reach. AI also accelerates the student’s ability to learn and work. For a while, he said, humans may be able to keep up with the receding frontier precisely because the same tools empower them.