Autonomous AI Hackers Are Already Beating Humans on HackerOne

Oege de Moor, founder and CEO of XBOW, argues that autonomous AI hacking has moved from assistance to real exploitation. In an AI Ascent 2026 talk, he says XBOW’s system reached the top of HackerOne using only black-box access, found a remote code execution flaw in Bing Image Search from a URL alone, and would have been three times more effective with GPT-5. His warning is that defenders have six to nine months before comparable open-weight models make the same capabilities broadly available, including to attackers.

XBOW’s evidence is black-box, autonomous exploitation

Oege de Moor’s most consequential claim was not that AI can assist hackers. It was that an AI system can now do the work autonomously, with no human operator steering the attack.

He opened with a contrast. In the Mexican government breach he referenced, human hackers used OpenAI and Anthropic models as assistants. His subject was different, Moor said: autonomous hacking, “where the AI does all the work without any human assistance.”

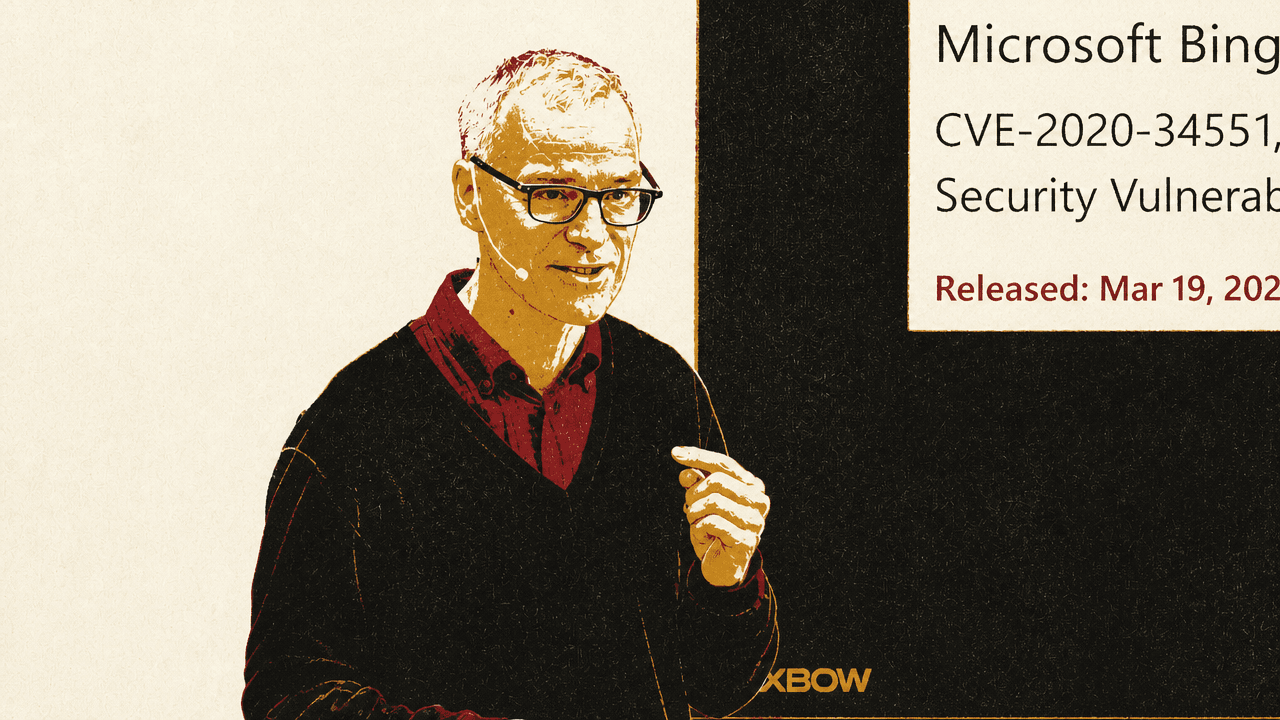

The case study was Microsoft Bing Image Search. Moor said Microsoft had announced a remote code execution vulnerability in the product, shown on his slide as CVE-2026-32191 with a March 19, 2026 release date. He described Bing Image Search as one of the best-secured systems in the world: engineered by Microsoft and “hammered by thousands of hackers” trying to find flaws.

XBOW’s product found the vulnerability, he said, with only the URL as input. “Nothing else.” He described remote code execution as “the very worst kind of vulnerability,” because it allows arbitrary code to run on the target system. He gave the list price for the run as $3,000, adding that this was not what XBOW itself paid.

For Moor, the operational point was the combination: black-box access, no source code, no human assistance, a severe vulnerability, and low cost. His claim was that this is already fast, cheap, and effective enough to change the balance between attackers and defenders.

That was also the point of his historical analogy. Moor compared cybersecurity to the Battle of Nagashino in 1575, where Oda Nobunaga’s army, using newer weapons and treating warfare as a system to optimize, defeated the Takeda clan’s famous cavalry. His conclusion was simple: “those with AI will win.”

The HackerOne result was meant to answer the skeptics

Moor said XBOW works “very much like a human hacker.” It begins with reconnaissance, sends out agents to discover the attack surface, prioritizes endpoints that look promising, and then tries relevant attack types. The difference, in his telling, is that the whole process is autonomous.
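The loop described above can be sketched in Python. This is a minimal, hypothetical illustration, not XBOW’s implementation: the endpoint list, the scoring heuristic, the attack mapping, and every function name (`discover_endpoints`, `score_endpoint`, `relevant_attacks`, `try_attack`, `autonomous_run`) are assumptions made for the example, and the reconnaissance and exploitation agents are replaced by offline stubs against a made-up target.

```python
from dataclasses import dataclass, field


@dataclass
class Endpoint:
    path: str
    params: list[str] = field(default_factory=list)


def discover_endpoints(start_url: str) -> list[Endpoint]:
    """Stub for reconnaissance agents mapping the attack surface.

    A real system would crawl and probe start_url; this hypothetical stub
    returns a fixed, made-up surface so the loop runs offline.
    """
    return [
        Endpoint("/"),
        Endpoint("/search", ["q"]),
        Endpoint("/image", ["url"]),
        Endpoint("/static/logo.png"),
    ]


def score_endpoint(ep: Endpoint) -> int:
    """Toy prioritization: endpoints that accept input look more promising."""
    return len(ep.params)


def relevant_attacks(ep: Endpoint) -> list[str]:
    """Toy mapping from parameter names to attack types worth trying."""
    attacks = []
    for p in ep.params:
        if p == "url":
            attacks.append("ssrf")
        else:
            attacks.extend(["sqli", "xss"])
    return attacks


def try_attack(ep: Endpoint, attack: str) -> bool:
    """Stub exploitation agent; pretends only SSRF on /image succeeds."""
    return ep.path == "/image" and attack == "ssrf"


def autonomous_run(start_url: str, budget: int = 10) -> list[tuple[str, str]]:
    """Recon, prioritize, attack: no human decision anywhere in the loop."""
    surface = discover_endpoints(start_url)
    ranked = sorted(surface, key=score_endpoint, reverse=True)
    findings = []
    attempts = 0
    for ep in ranked:
        for attack in relevant_attacks(ep):
            if attempts >= budget:  # stop when the attempt budget is spent
                return findings
            attempts += 1
            if try_attack(ep, attack):
                findings.append((ep.path, attack))
    return findings


if __name__ == "__main__":
    print(autonomous_run("https://example.com"))
```

Running it prints `[('/image', 'ssrf')]`: the stubbed endpoints that take parameters are tried first, and only the stub’s single planted weakness is reported. The `budget` parameter stands in for a cost cap; the talk cites a $3,000 list price for the Bing run but does not describe how runs are bounded.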

He acknowledged that many human security researchers believe a machine cannot completely carry out this task on its own. To answer that skepticism, Oege de Moor said, XBOW entered its bot on HackerOne, the platform where companies invite ethical hackers to test systems, report vulnerabilities, receive bounties, and earn points.

Within weeks, according to Moor, XBOW became the top-ranked hacker in the United States. In August, it became the number-one hacker in the world. He emphasized that this was black-box testing: as with the Bing example, the system received only a URL and then operated autonomously.

The leaderboard shown during the talk placed “xbow” first by reputation, ahead of “v1ser” and “m0chan”:

| Rank | User | Reputation | Signal | Impact |
|---|---|---|---|---|
| 1 | xbow | 2463 | 6.79 | 20.12 |
| 2 | v1ser | 2403 | 7.00 | 40.39 |
| 3 | m0chan | 2199 | 6.57 | 14.62 |

The proof point was not merely that an AI system could find bugs. It was that, under the same kind of outside-only conditions faced by attackers, XBOW could compete with and surpass human hackers on a live vulnerability platform.

Model progress compounds the same attack loop

Moor said the foundation models XBOW builds on have “enormously progressed” since the August HackerOne result. He showed a chart of solver success rates across real open-source web applications, which he contrasted with weaker “Mickey Mouse cyber benchmarks.” The models listed moved from Sonnet 3.7 through Gemini 2.5, Sonnet 4.0, model “alloys,” Opus-4.1, GPT-5, Gemini-3, GPT-5.2, Opus-4.6, and Alloy 4.6/3.1.

The August HackerOne result, Oege de Moor said, used an alloy of Sonnet 4.0 and Gemini 2.5. By “alloy,” he meant a system that treats an attack as a sequence of actions and, at each step, chooses which model to ask. His example was asking either Gemini or Sonnet. He said this outperforms either model separately because the models compensate for one another’s mistakes, comparing the effect to pair programming.
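The alloy idea can be sketched as a single attack conversation in which a different model may answer at each step. This is a hedged illustration rather than XBOW’s code: the talk does not say how the model is chosen, so uniform random choice per step is an assumption, and `run_alloy`, `fake_model`, and the model labels are hypothetical stand-ins for real model API calls.

```python
import random
from typing import Callable

# Hypothetical model interface: reads the shared transcript, returns the next action.
Model = Callable[[list[str]], str]


def run_alloy(models: dict[str, Model], task: str, steps: int,
              seed: int = 0) -> list[tuple[str, str]]:
    """Run one attack conversation, choosing which model to ask at each step.

    Every model reads the same transcript, including actions proposed by the
    other models, which is the property the pair-programming comparison relies on.
    """
    rng = random.Random(seed)
    names = sorted(models)
    transcript = [task]
    trace = []
    for _ in range(steps):
        name = rng.choice(names)  # assumption: uniform random choice per step
        action = models[name](transcript)
        transcript.append(action)
        trace.append((name, action))
    return trace


def fake_model(label: str) -> Model:
    """Offline stand-in for an LLM call; a real system would call an API here."""
    def step(transcript: list[str]) -> str:
        return f"{label} step {len(transcript)}"
    return step


if __name__ == "__main__":
    models = {"gemini": fake_model("gemini"), "sonnet": fake_model("sonnet")}
    for name, action in run_alloy(models, "probe https://example.com", steps=4):
        print(name, "->", action)
```

The design choice that matters in this sketch is the shared transcript: each model continues from the other’s work instead of running a separate, independent attempt.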

Shortly after XBOW topped the HackerOne leaderboard, GPT-5 came out. In August, Moor said, XBOW was “a little bit better than the best human on HackerOne”; extrapolating from GPT-5’s performance, he said the same system running on GPT-5 would have done at least three times better.

He added that models have continued to improve and that the benchmark set shown was nearly saturated, meaning XBOW would need a harder test set to measure further progress.

The distinction from Mythos is exploitability and impact

Moor’s comparison with Anthropic’s Mythos was narrow. He noted that Mythos has mostly been reported as a tool that reads source code extremely well and points out potential flaws. That is white-box testing. XBOW, in the cases he emphasized, is doing black-box testing.

The distinction Oege de Moor drew was not a broad comparison of companies or products. It was about what defenders still need to know after a code-reading system identifies a weakness: whether the weakness is exploitable in the wild, whether it matters, and what impact it has.

He used the Bing example again. If an attacker can execute remote code on a Bing server, where else can they go? Moor said he could not answer that publicly. He also noted that many vulnerabilities are configuration or deployment problems that cannot be understood from source code alone.

In Moor’s framing, XBOW’s role is to answer the practical exploitation questions: which bugs bite, how they can be used, and what their impact is likely to be.
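The prioritization Moor describes can be illustrated as a sort that puts findings confirmed against the live system ahead of unconfirmed code-reading hits, then orders by impact. This is a minimal sketch under stated assumptions: the `Finding` fields, the four-level `Impact` scale, and the example findings are all hypothetical, and real programs would use their own severity scheme (CVSS, for instance).

```python
from dataclasses import dataclass
from enum import IntEnum


class Impact(IntEnum):
    """Hypothetical four-level impact scale."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4  # e.g. remote code execution


@dataclass
class Finding:
    title: str
    source: str              # "white-box" (code reading) or "black-box" (live system)
    exploit_confirmed: bool  # was a working exploit demonstrated?
    impact: Impact


def triage(findings: list[Finding]) -> list[Finding]:
    """Confirmed exploits first, then by impact; unconfirmed hits are kept but ranked lower."""
    return sorted(findings, key=lambda f: (f.exploit_confirmed, f.impact), reverse=True)


if __name__ == "__main__":
    findings = [
        Finding("possible SQL injection in report query", "white-box", False, Impact.HIGH),
        Finding("debug endpoint left enabled in production", "black-box", True, Impact.MEDIUM),
        Finding("RCE via image fetch", "black-box", True, Impact.CRITICAL),
    ]
    for f in triage(findings):
        print(f.impact.name, f.exploit_confirmed, f.title)
```

The made-up debug-endpoint finding is a deployment problem of the kind Moor says source code alone cannot reveal; in this sketch it outranks the unconfirmed white-box hit because it was shown to work against the running system.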

The deadline is six to nine months

Moor argued that waiting for public vulnerability disclosure is no longer enough. In the traditional pattern, a CVE announces that a vulnerability exists. He said that in 2018 the delay between CVE publication and exploitation by bad actors was almost two and a half years. The chart he showed defined time-to-exploit as the gap between CVE disclosure and confirmed exploitation; for 2026 it showed a value of -2.6 days.
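The chart’s definition reduces to a date subtraction, which makes the sign easy to read: a negative result means exploitation was confirmed before disclosure. Below is a minimal Python sketch; the function names and sample dates are invented for illustration, and the talk does not say whether the -2.6-day figure is a mean, a median, or another statistic.

```python
from datetime import date
from statistics import median


def time_to_exploit(disclosed: date, exploited: date) -> int:
    """Days from CVE disclosure to confirmed exploitation (negative = exploited first)."""
    return (exploited - disclosed).days


def median_time_to_exploit(pairs: list[tuple[date, date]]) -> float:
    """Median time-to-exploit over hypothetical (disclosed, exploited) date pairs."""
    return median(time_to_exploit(d, e) for d, e in pairs)


if __name__ == "__main__":
    # Made-up dates, not real CVE data.
    print(time_to_exploit(date(2018, 1, 1), date(2020, 6, 1)))  # 882 days, about 2.4 years
    sample = [
        (date(2026, 3, 10), date(2026, 3, 7)),  # exploited 3 days before disclosure
        (date(2026, 3, 1), date(2026, 2, 27)),  # 2 days before
        (date(2026, 2, 1), date(2026, 2, 5)),   # 4 days after
    ]
    print(median_time_to_exploit(sample))  # -2
```

A median is used here because a negative median means at least half of the sampled CVEs were exploited before publication; a negative mean alone would not show that.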

His conclusion was that, for most CVEs today, exploitation is already happening before the CVE is published.

That is why Oege de Moor said he finds it “incomprehensible” when traditional cybersecurity stocks drop on AI-security news. His view is that autonomous AI-powered attacks increase, not decrease, the need for every possible defense.

His prescription had three parts. First, everyone working on frontier models should maximize their cyber capabilities. Moor explicitly rejected further debate over whether doing so is safe, saying, “we’re in an arms race.” Second, the security community should be enabled to express its expertise through specialized agents, using AI as an extension of human security research. Third, defenders should prioritize what matters by determining which bugs are truly exploitable and what impact they have.

The deadline, in Moor’s view, is short. Extrapolating from progress in software engineering agents, he said open-weight models will be as good as Mythos and similar systems in roughly six to nine months. At that point, the same class of capability will be broadly available, including to bad actors.