Ricursive Wants AI to Design the Chips That Train AI

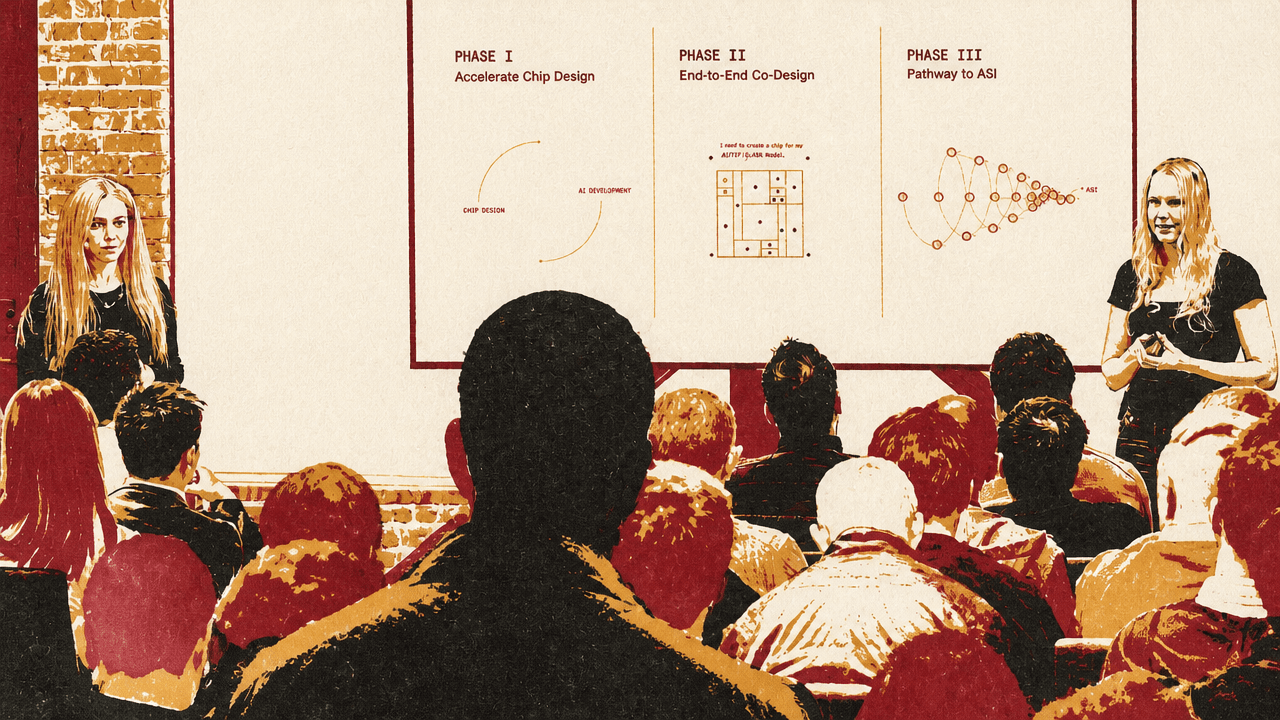

At AI Ascent 2026, Ricursive Intelligence co-founders Anna Goldie and Azalia Mirhoseini argued that the next bottleneck in AI is the chip-design process itself, and that AI should be used to design the hardware that trains and serves it. Drawing on their AlphaChip work, which Goldie said has shipped in four generations of Google TPUs, they described Ricursive’s plan to rebuild chip-design tools for fast AI feedback loops and turn that tooling into a platform for custom silicon. Their larger claim is that workload-specific chips, and eventually co-designed chips and models, require moving chip design from yearlong expert workflows to automated optimization.

The loop Ricursive wants to close

Anna Goldie and Azalia Mirhoseini framed Ricursive Intelligence around a recursion: AI needs better chips, and AI should help design those chips. Goldie called chips “the fuel for AI,” and said the company’s aim is to use AI to design and optimize chips and to automate chip design, closing a self-improving loop between AI development and its physical substrate.


Goldie said the work began in 2018 with AlphaChip, a deep reinforcement learning system she and Mirhoseini helped create. She described it as capable of generating “superhuman chip layouts,” and emphasized that the important part was not only publication in Nature but use in real tapeouts. According to Goldie, AlphaChip has been used in the last four generations of Google TPU AI accelerator chips, in Google’s Axion data center CPUs, in Pixel phones, in autonomous vehicle chips, and by external companies including MediaTek.

The presentation also showed press coverage: a Wall Street Journal screenshot reporting that the startup had raised $35 million with backing from Sequoia, and a New York Times screenshot describing it as valued at $4 billion.

Ricursive cited wirelength reductions versus human experts across multiple TPU results, including 3.2% for TPU v5e, 4.5% for TPU v5p, and 6.2% for Trillium, with another displayed TPU result at 4.0%.

| AlphaChip result | Wirelength reduction versus human experts |
|---|---|
| TPU v5e | 3.2% |
| Other displayed TPU result | 4.0% |
| TPU v5p | 4.5% |
| Trillium | 6.2% |
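The talk did not define how these wirelength figures were measured. Placement tools and research commonly use half-perimeter wirelength (HPWL) as a proxy. For each net, HPWL takes the bounding box of its pins and adds the box's width and height. The sketch below assumes HPWL and shows how a "percent reduction versus a baseline" figure is computed. The `Pin` class, function names, and toy coordinates are hypothetical and are not AlphaChip's or Ricursive's code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pin:
    x: float
    y: float


def hpwl(net: list[Pin]) -> float:
    """Half-perimeter wirelength of one net: width plus height of its pins' bounding box."""
    xs = [p.x for p in net]
    ys = [p.y for p in net]
    return (max(xs) - min(xs)) + (max(ys) - min(ys))


def total_hpwl(nets: list[list[Pin]]) -> float:
    """Sum of HPWL over all nets in a placement."""
    return sum(hpwl(net) for net in nets)


def percent_reduction(baseline: float, candidate: float) -> float:
    """How much shorter the candidate wirelength is than the baseline, in percent."""
    return 100.0 * (baseline - candidate) / baseline


# Hypothetical toy placements of the same two nets (arbitrary units).
human = [[Pin(0, 0), Pin(4, 3)], [Pin(1, 1), Pin(5, 1), Pin(3, 6)]]
ai = [[Pin(0, 0), Pin(3, 3)], [Pin(1, 1), Pin(4, 1), Pin(3, 5)]]
print(percent_reduction(total_hpwl(human), total_hpwl(ai)))  # 18.75
```

Real reductions on production chips are far smaller than this toy's 18.75%, as the table shows. On a design with billions of connections, even a few percent is significant.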

The first target is the slowest part of chip design

Anna Goldie said Ricursive is in the first of three planned phases: accelerating chip design. In her description, the two “long poles” are physical design and design verification. Physical design means placing billions of standard cells or transistors on the chip canvas and routing the wires that connect them. Design verification means checking that the chip’s logic is correct. Each process, she said, can take up to a year and involve hundreds or thousands of human experts.
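Here is a toy illustration of "checking that the chip's logic is correct". It is not Ricursive's method. A gate-level 4-bit ripple-carry adder is compared exhaustively against a reference model, Python's integer addition. The function names are hypothetical. Exhaustive checking covers 2^(2w) input pairs for a w-bit adder: that is 256 cases at 4 bits but about 1.8 × 10^19 at 32 bits. This hints at why verification at chip scale becomes a long pole.

```python
from itertools import product


def full_adder(a: int, b: int, cin: int) -> tuple[int, int]:
    """Gate-level full adder built from XOR, AND, and OR: returns (sum, carry_out)."""
    s = a ^ b ^ cin
    cout = (a & b) | (cin & (a ^ b))
    return s, cout


def ripple_carry_add(x: int, y: int, width: int) -> int:
    """Design under test: chain `width` full adders, as the hardware would."""
    carry, result = 0, 0
    for i in range(width):
        s, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= s << i
    return result | (carry << width)  # final carry becomes the top bit


def verify_adder(width: int) -> list[tuple[int, int]]:
    """Compare the design against a reference model on every input pair; return mismatches."""
    limit = 1 << width
    return [
        (x, y)
        for x, y in product(range(limit), repeat=2)
        if ripple_carry_add(x, y, width) != x + y
    ]


print(verify_adder(4))  # [] means no mismatches across all 256 input pairs
```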

The stakes, in Goldie’s telling, are large enough that even small schedule shifts matter. She said the team has heard estimates that a single day of delay for an Nvidia Blackwell chip could cost roughly $225 million in lost opportunity. Ricursive’s phase-one goal is to help existing chip makers get to market faster and build faster, cheaper, more environmentally friendly chips.

| Phase | Goal | What Ricursive says it enables |
|---|---|---|
| Phase I | Accelerate chip design | Help existing chip makers build faster, cheaper, more environmentally friendly chips. |
| Phase II | End-to-end co-design | Take a workload as input and produce a manufacturable chip design. |
| Phase III | Vertical integration | Design chips, train models, and co-evolve them together. |

In phase two, Goldie said Ricursive wants to become a platform for new hardware design. The input would be a workload — she gave “the next Claude model” as an example — and the output would be an architecture that accelerates that workload, followed by the full design process to GDSII. Her claim was that this would expand the customer base for custom chips beyond companies that can employ hundreds or thousands of chip-design specialists.

Phase three is vertical integration. If Ricursive can quickly design high-performing chips, Goldie asked, why not build its own chips, train its own models, co-evolve the two, and serve intelligence at a price or capability that competitors could not match?

Fast tools are the prerequisite for AI design loops

Azalia Mirhoseini focused on Ricursive’s method: redesign the underlying chip-design tools so they run fast enough for AI systems to use them inside optimization loops. She described the traditional chip-design flow from architecture design through floorplanning, verification, synthesis, placement, routing, post-route optimization, signoff, and final GDSII. Today, she said, these phases are handled by human experts working with commercial tools that can take days for a single optimization iteration.

Ricursive’s approach has an “inner loop” and an “outer loop.” The inner loop consists of chip-design tools that the company says can run 100 to 1,000 times faster than leading commercial tools. The outer loop is a self-improving AI system that learns as it solves more instances of the problem using those tools.
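Mirhoseini described the two loops only at a high level. The sketch below is a toy stand-in built on two assumptions. The inner loop is a fast cost function: total half-perimeter wirelength on a small grid. The outer loop is plain greedy swap search rather than a self-improving learning system. The names `evaluate` and `optimize` are hypothetical. The point is structural: the outer loop calls the inner loop once per proposal, so a faster inner loop directly raises the number of proposals the outer loop can try.

```python
import random

# Hypothetical types for a toy grid placement.
Placement = dict[str, tuple[int, int]]  # cell name -> (x, y) slot
Netlist = list[list[str]]               # each net lists the cells it connects


def evaluate(placement: Placement, nets: Netlist) -> int:
    """Inner-loop stand-in: a fast cost signal (total half-perimeter wirelength)."""
    total = 0
    for net in nets:
        xs = [placement[cell][0] for cell in net]
        ys = [placement[cell][1] for cell in net]
        total += (max(xs) - min(xs)) + (max(ys) - min(ys))
    return total


def optimize(placement: Placement, nets: Netlist, steps: int, seed: int = 0) -> tuple[Placement, int]:
    """Outer-loop stand-in: swap two cells and keep any move that does not raise cost."""
    rng = random.Random(seed)
    best = dict(placement)
    best_cost = evaluate(best, nets)
    cells = list(best)
    for _ in range(steps):
        a, b = rng.sample(cells, 2)
        candidate = dict(best)
        candidate[a], candidate[b] = best[b], best[a]
        cost = evaluate(candidate, nets)  # one inner-loop call per proposal
        if cost <= best_cost:
            best, best_cost = candidate, cost
    return best, best_cost


start = {"A": (0, 0), "B": (1, 1), "C": (0, 1), "D": (1, 0)}
nets = [["A", "B"], ["C", "D"]]
placed, cost = optimize(start, nets, steps=200)
print(evaluate(start, nets), "->", cost)
```

A learning-based outer loop would replace the random swaps with a policy that improves across problem instances. The dependence on a fast evaluator stays the same.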

Mirhoseini said this matters because AI systems benefit from fast iteration. If the toolchain can produce feedback quickly enough, AI can explore a much larger co-design space and optimize across more of the stack. In her framing, the performance gains come from both automation and co-design.
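The iteration argument is simple arithmetic. Assume, purely for illustration, a fixed schedule budget $B$ and a per-iteration tool runtime $t$. The number of design iterations that fit is $N = B/t$. A speedup $s$ shrinks $t$ to $t/s$ and so multiplies $N$ by $s$. With a hypothetical $B = 30$ days and $t = 2$ days:

$$
N(s) = \frac{B}{t/s} = \frac{sB}{t}, \qquad
N(1) = \frac{30}{2} = 15, \quad
N(100) = 1{,}500, \quad
N(1000) = 15{,}000.
$$

This treats the speedup as applying to the whole iteration. The 100 to 1,000 times figure was stated for Ricursive's tools, and the talk did not specify how much of an end-to-end iteration those tools cover.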

The concrete example she gave was static timing analysis, a difficult part of physical design. Ricursive said its engine performs full-graph timing analysis in milliseconds, with a correlation above 0.999 against leading commercial tools. In the benchmark example shown, the run took 162.7 milliseconds, with a correlation coefficient (R) of 0.999728 and a mean absolute error of 0.83 picoseconds.

Mirhoseini’s claim was not just that the static timing tool is faster. It is that a high-fidelity, millisecond-scale feedback signal changes what AI can do. In an AI tool-use or reinforcement-learning loop, she said, that signal can be used directly for optimization.

From fabless to designless

Azalia Mirhoseini described Ricursive’s intended market position as “designless,” by analogy to the fabless era. In her account, TSMC and similar manufacturing platforms let companies such as Nvidia and Apple focus on designing chips while sending fabrication elsewhere. Ricursive wants to occupy the analogous layer for chip design: customers specify requirements, Ricursive delivers optimized, ready-to-build chips, and customers focus on applications and products.

The proposed shift is from separating design and manufacturing to separating requirements and design. The impact Ricursive claims for this model is market expansion, democratized high-performance silicon, and more specialized chips.

Mirhoseini tied the need for that model to AI inference. Today, she said, there are only a few mainstream chips for AI inference. But she expects demand for much more performance in the coming years, and argued that customization is one route to unlocking it. If chips are tailored to the workload they serve, the result could be more variation by application: frontier models, drones, autonomous vehicles, defense, wearables, robotics, low-power systems, high-throughput systems, and other specialized needs.

The economic question came up directly in Q&A. An audience member asked whether thousands of specialized chips could be made as cheaply as a single broad architecture. Mirhoseini answered that Ricursive is introducing compute as a new knob: by scaling compute, the company aims to reduce chip-design runtimes and improve chip performance. She added that the economics would vary by workload and scale, and that even a 1% improvement for a chip serving a frontier model would be a large gain.

AI layouts do not look like human layouts

In response to a question about the shape of AI-generated placements, Anna Goldie said Ricursive is seeing the same pattern as in AlphaChip: curved, organic-looking placements rather than the aligned, regular placements human experts tend to produce. She said those layouts minimize wire length and improve performance, but were initially shocking to physical design engineers.

That detail is a small example of the larger claim Ricursive is making. The company is not only trying to automate human chip-design practice. It expects AI to search physical-design space differently from human engineers, including in ways that may look unfamiliar.

Azalia Mirhoseini also pointed to the team’s composition as part of the company’s bet. Ricursive combines people with LLM experience (she named Claude, Gemini, and Grok among the systems team members had previously worked on) with chip-design experts. A team slide showed logos including Google DeepMind, X, Cadence, Apple, Nvidia, Stanford, Harvard, and MIT.