MagenticLite Brings Full Agent Workflows to Small Language Models

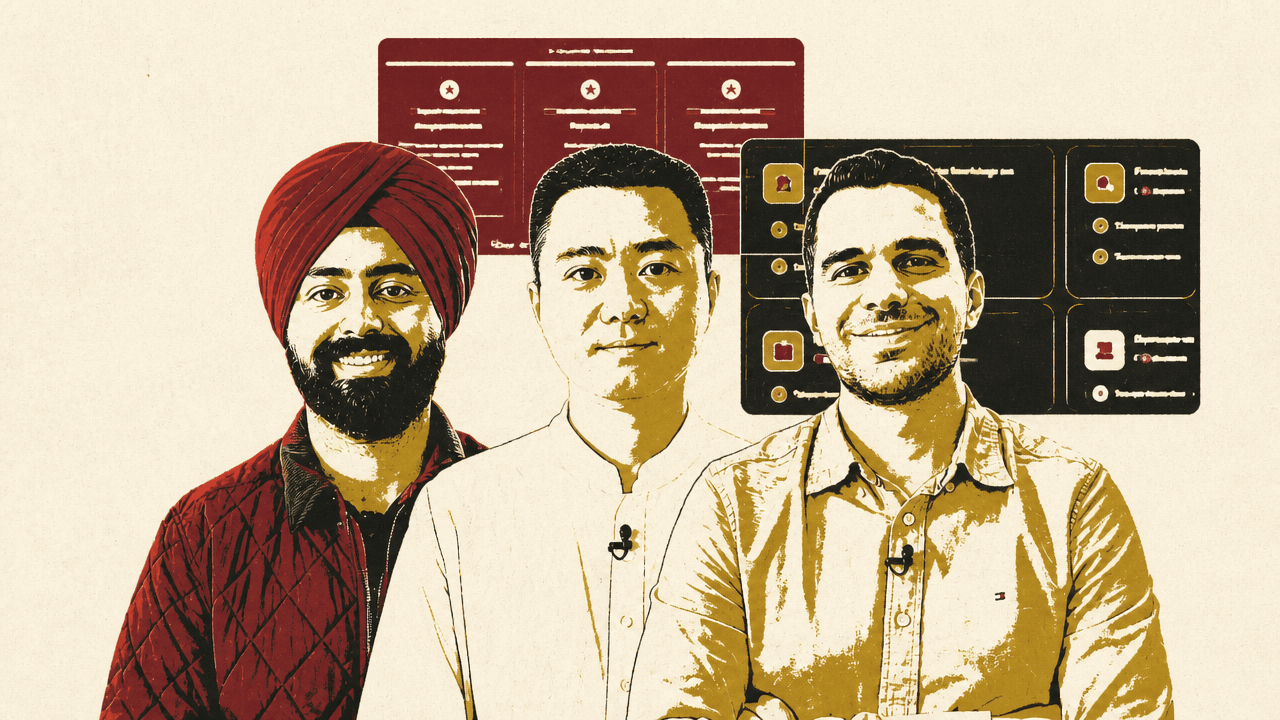

Microsoft Research is presenting MagenticLite as a full-stack agentic system designed to make small language models usable for multi-step work across a browser and local files. Weili Shi, Harkirat Behl and Hussein Mozannar argue that the capability comes from specializing the stack rather than relying on frontier-scale models: MagenticBrain handles planning, coding and delegation, while Fara 1.5 controls the browser. The release also emphasizes user oversight, with the agent pausing for credentials, approvals or other points where the user needs to take control.

MagenticLite is designed to make small models useful in full agent workflows

MagenticLite is the next generation of Magentic-UI: an agentic application rebuilt to work with small language models rather than relying on frontier-scale models. The team described the release as a full-stack redesign spanning training data, model design, orchestration, harness behavior, and user experience.

Weili Shi described the application as reworked to run efficiently on small language models, with a refreshed interface shaped by community feedback. Its operating surface is broader than a browser agent alone: MagenticLite works across a browser and a local file system in one workflow. The user can steer, approve, or take over at any point, and the application stops before critical actions.

The release has three connected pieces. MagenticLite was shown as an “open-source agentic experience optimized for SLMs.” Fara 1.5 is the computer-use model for web navigation and delegation, described as state of the art in its weight class for web tasks. MagenticBrain, referred to in one slide as the renamed Magentic Orchestrator, is the reasoning, coding, and delegation model that coordinates the work. The on-screen release graphic described Fara 1.5 and MagenticBrain as “coming soon on Microsoft Foundry.”

| Release | Role | Capabilities described |
|---|---|---|
| MagenticLite | Agentic application | Open-source experience optimized for small language models; works across browser and file system |
| Fara 1.5 | Computer-use model | Web navigation and delegation; described as state of the art in its weight class for web tasks |
| MagenticBrain | Orchestrator model | Reasoning, coding, planning, and delegation |

The browser-plus-files workflow was illustrated with a task grounded in local notes from the previous Microsoft Build conference. Shi gave MagenticLite access to a folder on her computer and asked it to identify what had shipped, changed, or been announced since then, then create a catch-up document for the next conference. The agent used the local files as context and opened its own browser, running in a virtual machine, to gather additional information. Shi said the virtualized browser helps minimize the risk of data leakage while allowing Fara, the browser-use model, to operate quickly.

The resulting markdown file was titled “Build-2025-what-you-missed.md” and covered updates to GitHub Copilot, Azure AI Foundry, and the Phi-4 model family since May 2025. When Shi asked MagenticLite to email the document through Outlook, the application notified her that input was required to log in. During sign-in, the interface stated that the user was in control of the browser and that the agent was paused and would not observe those actions. Once unblocked, MagenticLite resumed control and drafted the email.

The control pattern is explicit: the agent can pursue a long-running, multi-step task, pause when credentials or user input are needed, hand control to the user, and resume after being unblocked.
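The pause-and-resume pattern can be pictured as a small state machine. The following is a minimal sketch, not MagenticLite's actual harness: the `AgentState` and `HandoffSession` names and the log format are hypothetical, chosen only to show that the agent cannot act while the user holds control and that user actions are not recorded.

```python
from enum import Enum, auto


class AgentState(Enum):
    RUNNING = auto()           # agent is driving the task
    WAITING_FOR_USER = auto()  # agent paused; user controls the browser
    DONE = auto()


class HandoffSession:
    """Hypothetical harness state tracking who controls the browser."""

    def __init__(self):
        self.state = AgentState.RUNNING
        self.log = []

    def agent_step(self, description, needs_user=False):
        # The agent may only act while it holds control.
        if self.state is not AgentState.RUNNING:
            raise RuntimeError("agent cannot act while paused or finished")
        if needs_user:
            self.state = AgentState.WAITING_FOR_USER
            self.log.append(f"paused: {description}")
        else:
            self.log.append(f"agent: {description}")

    def user_unblocks(self):
        if self.state is not AgentState.WAITING_FOR_USER:
            raise RuntimeError("nothing to unblock")
        # User actions are deliberately not logged: the agent does not observe them.
        self.state = AgentState.RUNNING
        self.log.append("resumed")

    def finish(self):
        self.state = AgentState.DONE
```

In the Outlook example, the sign-in step would be an `agent_step(..., needs_user=True)` call, followed by `user_unblocks()` once the user has logged in.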

MagenticBrain pushes orchestration into a small model

Harkirat Behl described MagenticBrain as “the brain that ties it all together”: the planner, coder, and delegator behind MagenticLite. Its job is to take a messy request, such as “Book me a dentist appointment Tuesday afternoon and add it to my calendar,” and turn it into a concrete plan. That means breaking the task into steps, selecting tools or sub-agents, writing code where code is the right solution, delegating browser work to Fara, and recovering when a task breaks midstream.

Orchestration is usually where developers reach for the largest available model, Behl argued, because reasoning is hard and failure modes are expensive. The team’s goal was to show that orchestration could be handled by a small model “without giving up on capability.” The training recipe is deliberately simple: a small language model trained with plain supervised fine-tuning, without a complicated multi-stage pipeline. The main unlock, in his account, is the data mix.

That mix combines two complementary styles of agentic behavior.

The first is standard tool-calling data. The team built an in-house pipeline called Vulcan, where tools are represented as Python objects with their arguments and return types defined in class structure. Those tools become nodes in a graph, and a training trajectory is an ordered path through that graph: search, book, calendar, email, and so on. The point is to teach multi-turn tool composition rather than isolated function calls. The paths are validated with rules and sandboxed execution, then mixed with other data, including public and in-house synthetic MCP trajectories, to broaden tool coverage.
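The graph idea can be sketched in a few lines. This is an illustration under stated assumptions, not the Vulcan pipeline itself: the `Tool` class, the four example tools, and the rule that an edge exists when one tool's return type matches another's argument type are all hypothetical, and the rule check stands in for the validation and sandboxed execution the team described.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    arg_types: tuple   # types the tool consumes
    return_type: str   # type the tool produces


# Hypothetical tools; types are plain strings to keep the sketch small.
TOOLS = [
    Tool("search_dentists", ("query",), "clinic"),
    Tool("book_appointment", ("clinic",), "booking"),
    Tool("add_calendar_event", ("booking",), "event"),
    Tool("send_email", ("event",), "receipt"),
]


def build_graph(tools):
    """Add an edge a -> b when a's return type is one of b's argument types."""
    return {
        a.name: [b.name for b in tools if a.return_type in b.arg_types]
        for a in tools
    }


def sample_path(graph, start, max_len, rng):
    """Random walk through the tool graph; one walk is one candidate trajectory."""
    path = [start]
    while len(path) < max_len and graph[path[-1]]:
        path.append(rng.choice(graph[path[-1]]))
    return path


def is_valid(path, graph):
    """Rule-based check: every consecutive pair of tools must be an edge."""
    return all(b in graph[a] for a, b in zip(path, path[1:]))
```

A path sampled this way is an ordered, multi-turn composition (search, book, calendar, email) rather than an isolated call, which is the property the training data is meant to teach.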

The second is terminal data. Behl showed a CoAct-style trajectory for a request such as optimizing blog images to WebP under 250 KB and renaming them to match post slugs. In that format, the agent thinks through the task, takes terminal actions such as ls, reads execution results, and proceeds step by step. The team generates these trajectories through a four-stage pipeline: sample a scenario, create a task and environment, run an agent to produce a full trajectory, and filter for verified trajectories.
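The four stages can be sketched as a generate-then-filter loop. Everything here is a toy stand-in: the environment is an in-memory dictionary rather than a real terminal, `run_agent` is a scripted placeholder for an actual agent, its `convert` action string is not a real command, and the function names are hypothetical.

```python
import random

SCENARIOS = ["blog image optimization"]  # hypothetical scenario pool


def sample_scenario(rng):
    """Stage 1: pick a scenario."""
    return rng.choice(SCENARIOS)


def create_task(scenario):
    """Stage 2: build a task prompt and a toy environment (file name -> size in KB)."""
    env = {"hero.png": 900, "team.jpg": 400}
    return f"{scenario}: convert images to WebP under 250 KB", env


def run_agent(task, env, shrink_to):
    """Stage 3: scripted stand-in for an agent; records thought/action/observation."""
    env = dict(env)  # work on a copy so the input environment is untouched
    steps = [{"thought": "Inspect the folder first.",
              "action": "ls",
              "observation": sorted(env)}]
    for name in sorted(env):
        new_name = name.rsplit(".", 1)[0] + ".webp"
        del env[name]
        env[new_name] = shrink_to
        steps.append({"thought": f"Convert {name}.",
                      "action": f"convert {name} -> {new_name}",  # placeholder action
                      "observation": f"{new_name}: {shrink_to} KB"})
    return {"task": task, "steps": steps, "final_env": env}


def verify(trajectory, limit_kb=250):
    """Stage 4: keep a trajectory only if the final state satisfies the task."""
    return all(name.endswith(".webp") and kb <= limit_kb
               for name, kb in trajectory["final_env"].items())


def generate(n, rng):
    """Run all four stages n times and return only verified trajectories."""
    kept = []
    for _ in range(n):
        task, env = create_task(sample_scenario(rng))
        trajectory = run_agent(task, env, shrink_to=rng.choice([200, 300]))
        if verify(trajectory):
            kept.append(trajectory)
    return kept
```

The check inspects the final environment state rather than the agent's own claims, so a run that ends with oversized files is dropped.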

The distinction matters because many real requests do not map cleanly to a function call. Behl argued that sometimes “the right answer is five lines of Python.” A model trained only on function-calling data may try to force every problem into tool use. Mixing function-calling trajectories with terminal and code-oriented data is meant to teach MagenticBrain when to call a tool and when to write code instead.

Behl said the result competes with much larger general-purpose models while staying small enough to run locally on the user's machine alongside Fara. He also described MagenticBrain as open weight, so developers can plug it into their own harnesses.

Fara 1.5 learns browser control from synthetic computer-use data

Hussein Mozannar framed AI Frontiers’ computer-use work around a specific bet: agentic models can be trained to complete computer-use tasks through end-to-end synthetic data generation, without human interaction data. Fara 1.5 is the successor to Fara-7B, which the team released the previous November as a small agentic model for computer use. The new release is a family of computer-use agent models at 4B, 9B, and 27B parameters, all based on Qwen 2.5. Mozannar described Fara 1.5 as setting state-of-the-art results for models at its size on web tasks.

Fara operates through a browser-control loop. For a task like applying to the latest intern opening at MSR AI Frontiers, it first captures a browser screenshot. That screenshot enters the model context along with prior screenshots, prior actions, and user messages. The model then predicts an action to perform in the browser, observes the result, and continues.

Its action space includes native manipulation actions such as clicking coordinates and pressing keyboard keys. It also includes tools for managing its own context, such as memorizing facts so it can act across hundreds of steps. Finally, it can ask the user for approval, permission, or preferences when needed.
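The loop and its three kinds of actions can be sketched as follows. This is a simplified illustration, not Fara's interface: the `Context` fields, the action dictionaries, and the `capture`, `policy`, `execute`, and `ask_user` callables are all hypothetical stand-ins for the browser, the model, and the user.

```python
from dataclasses import dataclass, field


@dataclass
class Context:
    user_messages: list
    screenshots: list = field(default_factory=list)
    actions: list = field(default_factory=list)
    memory: list = field(default_factory=list)  # facts the agent chose to keep


def run_loop(task, capture, policy, execute, ask_user, max_steps=50):
    """Screenshot -> predict action -> act -> observe, until the policy stops."""
    ctx = Context(user_messages=[task])
    for _ in range(max_steps):
        ctx.screenshots.append(capture())  # observe the current browser state
        action = policy(ctx)               # model predicts the next action
        ctx.actions.append(action)
        kind = action["type"]
        if kind == "stop":
            break
        if kind == "memorize":             # context-management tool
            ctx.memory.append(action["fact"])
        elif kind == "ask_user":           # approval, permission, or preference
            ctx.user_messages.append(ask_user(action["question"]))
        else:                              # native action, e.g. click or key press
            execute(action)
    return ctx
```

Only native actions reach the browser through `execute`; memorizing and asking the user change the context the model sees on the next step.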

On OnlineMind2Web, described as 300 tasks across 100 online URLs, Microsoft compared Fara-7B, MolmoWeb-8B, GUI-Owl-1.5-8B, and Fara-1.5-9B. Mozannar said Fara 1.5 9B nearly doubled the performance of Fara-7B, rising from 35 percent to almost 65 percent accuracy. The slide labeled the result “SOTA performance at model size for web tasks.”

| Benchmark | Model | Reported accuracy |
|---|---|---|
| OnlineMind2Web | Fara-7B | 35% |
| OnlineMind2Web | Fara-1.5-9B | Almost 65% |

Benchmark performance was not the only target. Fara was trained for tasks people might actually want to complete: form filling, booking, shopping, and repetitive web work. The central training system is FaraGen 2.0, described as a scalable synthetic data engine for computer-use agents.

FaraGen 2.0 begins with environments. The team trains on a mix of live web domains across popular websites and synthetic domains. Mozannar said synthetic websites are generated with the help of a coding agent in a tight debugging loop, enabling scenarios that cannot easily be performed on the live web, such as sending an email or booking a flight.

Given environments and tasks, the solver system pairs a stronger teacher agent, identified here as GPT-4, with a user simulator. The two operate in a loop to complete tasks and generate trajectories. Those trajectories are then evaluated by three verifiers.

| Verifier | Question it answers |
|---|---|
| Correctness | Did the agent perform the task correctly, judged with LLM-generated rubrics? |
| Efficiency | How many steps did the agent take, and did it find the most straightforward path? |
| User interaction | Did the agent make up information, take irreversible actions without approval, or fail to ask for clarification? |

The verified data is then used to train the model with supervised fine-tuning. In Mozannar’s account, Fara’s capability comes not from human interaction logs but from synthetic environments, solver-generated trajectories, and verifier-filtered training data.
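The verifier stage amounts to keeping only trajectories that pass all three checks. The sketch below assumes each trajectory has already been scored; the `Trajectory` fields, the thresholds, and the `reference_steps` notion of a straightforward path are hypothetical choices for illustration, not FaraGen 2.0's actual criteria.

```python
from dataclasses import dataclass


@dataclass
class Trajectory:
    rubric_score: float                  # fraction of rubric items satisfied (0 to 1)
    steps: int                           # steps the agent actually took
    reference_steps: int                 # assumed length of a straightforward path
    fabricated_info: bool
    irreversible_without_approval: bool
    skipped_needed_clarification: bool


def passes_correctness(t, threshold=1.0):
    return t.rubric_score >= threshold


def passes_efficiency(t, slack=1.5):
    # Allow some detours, but reject paths far longer than the reference.
    return t.steps <= slack * t.reference_steps


def passes_user_interaction(t):
    return not (t.fabricated_info
                or t.irreversible_without_approval
                or t.skipped_needed_clarification)


VERIFIERS = (passes_correctness, passes_efficiency, passes_user_interaction)


def filter_for_sft(trajectories):
    """Keep only trajectories that every verifier accepts."""
    return [t for t in trajectories if all(check(t) for check in VERIFIERS)]
```

A trajectory that completes the task but submits a form without approval would fail the third check and be excluded from the fine-tuning set.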

The roadmap extends computer use beyond the browser

Mozannar said the work on computer-use agents will continue beyond Fara 1.5. The plans he listed point toward longer-horizon and broader-surface agents: always-on capabilities to monitor the live web, acting in additional environments such as Windows and Linux, and using the terminal alongside visual manipulation to complete tasks more efficiently.

Those plans align with the release’s broader design. MagenticLite is presented as a coordinated system in which a small orchestrator model decides how to plan, code, and delegate; a browser-use model acts through screenshots and native actions; and the application harness keeps the user able to intervene.

The release’s approach is to specialize the stack around agent workflows: local file access for grounded work, a virtualized browser for web action, sandboxed and verified trajectories for training, explicit delegation between models, and user handoffs at points of risk.