Language-Agnostic Analysis Finds 15 Vulnerabilities in ZKP Projects

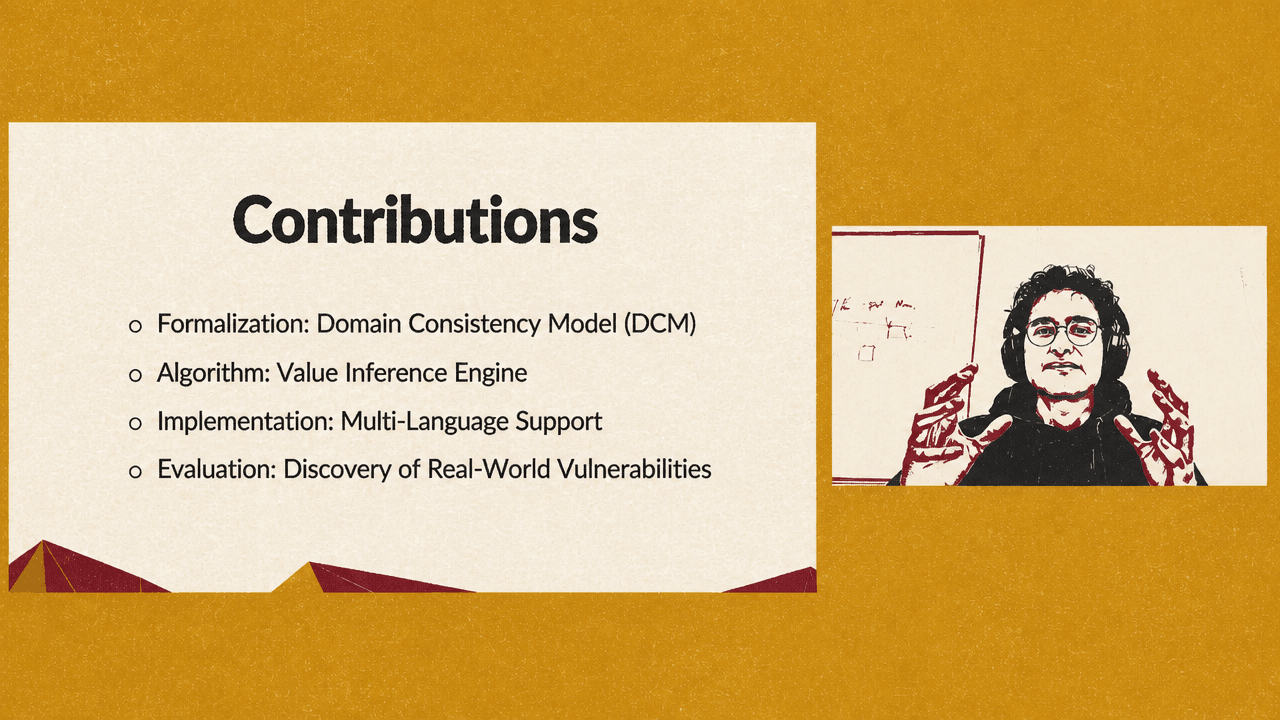

Arman Kolozyan of the Max Planck Institute for Security and Privacy argues that many zero-knowledge proof bugs arise from a mismatch between what a prover’s computation can produce and what a verifier’s constraints will accept. Presenting the paper “Language-Agnostic Detection of Bugs in Zero-Knowledge Proof Programs,” he describes a formal model, the Domain Consistency Model, and a static-analysis tool, CCC-Check, designed to detect those mismatches across ZKP languages. The work reports two-orders-of-magnitude speedups over SMT-based approaches on benchmarks and 15 previously unknown vulnerabilities in six ZKP projects, most of them outside the reach of existing models.

The bug class lives in the gap between proving and checking

Arman Kolozyan’s starting point is that zero-knowledge proof programs are not ordinary programs with an ordinary verifier generated as a byproduct. In the programming model he describes, the developer writes both what the prover computes and what the verifier checks. That split is the source of the security problem.

A zero-knowledge proof lets a prover convince a verifier that a statement is true without revealing selected secret data. Kolozyan illustrates the idea with a factoring claim: a prover knows primes p and q such that N = pq, but does not want to disclose p and q. Instead of sending the factors, the prover sends a proof that can be checked without revealing them. Two properties drive the applications he emphasizes: privacy and fast verification. A user might prove being over 18 without revealing name or date of birth; a blockchain application might move a complex computation off-chain and verify only a succinct proof on-chain.

At the abstraction level of the talk, the cryptographic machinery is treated as given. The programming problem is what matters. A developer writes a ZKP program in a domain-specific language, with constructs such as templates and signals, and with some inputs marked private. From that program come the prover’s work and the verifier’s checks. But Kolozyan stresses that the verifier’s side is not simply an automatic restatement of the prover’s side. The developer must explicitly express the constraints that establish that the prover did the computation correctly.

That matters because the prover and verifier do not have the same instruction set. The prover’s computation may use addition, multiplication, assignment, division, comparisons, and other operations. The verifier’s constraints, by contrast, are restricted to addition, multiplication, and equality checks. Kolozyan attributes that restriction to the underlying cryptography: fast verification requires a constrained arithmetic form.

The result is a translation burden. If the prover computes out <-- a / b, the verifier cannot directly check division. A developer may encode the check as out * b === a. For ordinary values such as a = 4, b = 2, and out = 2, that works: 2 * 2 = 4. But the encoding misses a condition implicit in the prover’s computation. Division by zero would crash the prover; it is not a valid computation. Yet the verifier constraint accepts fabricated values such as a = 0, b = 0, and out = 5, because 5 * 0 = 0.

That example is the core vulnerability pattern. The verifier is not necessarily checking what the prover was supposed to have done. It may be checking a weaker arithmetic condition that admits fake proofs.

Signal tags document assumptions, but the compiler does not enforce them

ZKP language designers already have mechanisms for some of these cases, according to Arman Kolozyan. In the division example, a template can tag b as nonZero, turning the missing precondition into an explicit assumption on callers. The template is effectively saying: if you use this function, ensure that b has already been constrained to be nonzero.

The reason this is not always checked inside the template is performance. ZKP developers try to minimize the number of constraints, because the constraint count has a large performance impact. If a caller has already checked that b is nonzero, and the callee checks it again, the program pays twice. Signal tags allow libraries to record assumptions without duplicating constraints.

But Kolozyan says these tags often amount to documentation. The compiler does not verify that callers actually satisfy them. If a library function assumes a nonzero denominator, a bounded range, or another condition, a caller can omit the needed constraint without receiving a compiler error. He connects this to real-world bug patterns visible in public trackers and issue reports: missing checks, missing bit-length constraints, mismatching bit lengths, arithmetic underflows, and out-of-range errors.

That is why the research frames the problem as a computation–constraint mismatch rather than merely a bad constraint. A constraint can be internally well formed and still fail to match the computation it is supposed to verify.

Existing detectors mostly see the verifier, not the intended computation

Arman Kolozyan’s survey of related work separates two limitations: language dependence and semantic scope. Related tools remain concentrated around Circom and other narrow targets: Circomspect, ZKAP, CIVER, and ZEQUAL are presented as Circom-focused; zkFuzz is shown as covering Circom and Noir; Picus targets R1CS. That narrowness motivates a language-agnostic detector.

The deeper limitation is that many tools analyze constraints without fully comparing them to the prover’s intended computation. Constraint-only analysis can still find important bugs. In response to a question from the audience, Kolozyan explains that existing work often detects under-constrainedness as nondeterminism: for the same input, the verifier may accept multiple outputs. That can be dangerous. If a verifier accepts both an under-18 and over-18 result for the same underlying facts, an attacker can present the favorable output and the verifier cannot distinguish it.

The questioner pushes on whether nondeterminism alone can define correctness, since a checker that sees only constraints does not know what behavior is intended. Kolozyan agrees that some nondeterminism may be expected, giving roots of a number as an example where multiple outputs can be legitimate. But he says nondeterminism in real-world ZKP programs often leads to severe vulnerabilities, which explains why prior work focused there.

His critique is not that constraint-only analysis is useless. It is that it cannot answer the central semantic question: what was the verifier supposed to check? In the division example, the only way to know that b = 0 should be rejected is to inspect the computation a / b. A constraint-only tool sees out * b === a; it does not see the division operation whose domain excludes zero.

Kolozyan’s concluding formulation is direct: “Analyzing the constraints alone leaves many ZKP bugs undetected.” The proposed alternative is to detect computation–constraint mismatches.

The Domain Consistency Model compares what can be produced with what can be accepted

The formal contribution Arman Kolozyan presents is the Domain Consistency Model, or DCM. Its basic idea is simple: for possible assignments of values to signals, compare whether the computation side could produce them and whether the constraint side would accept them.

In the division template, the assignment (a = 4, b = 2, out = 2) is consistent. The computation can produce it, and the verifier accepts it. The assignment (a = 0, b = 0, out = 5) is inconsistent. The computation cannot produce it, because division by zero would fail, but the verifier accepts it under out * b === a.

The model also accounts for explicit specifications such as signal tags. If a template declares b as nonZero, then the absence of an internal nonzero constraint is not automatically a bug in that template. The assumption is part of the specification: callers must supply the check. But if a caller uses the template without enforcing the nonzero requirement, the mismatch reappears at the call site.

Kolozyan says this framework lets the authors formalize multiple vulnerability classes found in practice. He explains division by zero in detail and gives arithmetic overflow as another concrete case: a function may only be valid for values in a fixed range, such as 0 through 15, and larger values can violate the function’s assumptions. The presentation lists the full set of semantic mismatch classes as follows.

| Vulnerability class | What is grounded in the presentation |

|---|---|

| Division by zero | Explained through the `out <-- a / b` example, where the computation excludes zero denominators but the constraint `out * b === a` can accept them. |

| Circuit logic assumptions | Listed as a semantic mismatch class. |

| Out-of-bounds indexing | Listed as a semantic mismatch class. |

| Arithmetic overflows | Explained as a range-assumption problem, where a function can only handle inputs within a specified range. |

| Special signals | Listed as a semantic mismatch class. |

| Conditionals with non-binary guard | Listed as a semantic mismatch class. |

Kolozyan says the authors managed to exploit arithmetic overflow in this setting to create fake proofs. The larger point is that the DCM gives a formal way to express when the verifier accepts assignments outside the domain of values the honest computation could have produced, taking explicit assumptions into account.

The detector trades exact enumeration for abstract interpretation

A naïve implementation of the DCM would be infeasible. In ZKP programs, operations happen modulo a large prime p. Instantiating the model literally would mean reasoning over all possible values of every variable, from 0 to p - 1. Kolozyan describes that as too slow.

The tool’s algorithmic contribution is an approximate “Value Inference Engine” based on static analysis, specifically abstract interpretation. Kolozyan contrasts this with formal verification approaches based on SMT solving. SMT-based methods are precise, but he says they are slow in ZKP settings because SMT solvers struggle with the nonlinear equations common in these programs. Static analysis is faster, but may report false positives because it over-approximates program behavior.

The approximation has two sides. On the computation side, the tool tracks specifications such as signal tags. Instead of enumerating all values an honest prover could compute, it records constraints such as “b is nonzero” or “a has maximum value 15.” Those tags summarize what the computation expects.

On the verifier side, the tool infers abstract values from constraints. It might approximate that a is accepted in [0, 15], b in [0, 5], and out in [1, 10]. If a computation-side tag requires b to be nonzero but the verifier-side approximation still admits zero, the tool can flag a potential mismatch.

The inference uses rule patterns from prior work. Kolozyan cites work by Shankara Pailoor and colleagues using inference rules that exploit recurring patterns in ZKP programs. A simple example is the constraint x * (x - 2) == 0: from that pattern, one can infer that accepted values are 0 and 2; under over-approximation, the analyzer might conservatively treat the range as 0, 1, 2. The authors reused rules from the literature, added new categories, and extended existing categories to catch vulnerabilities they found in real programs. Kolozyan notes that the paper contains the full algorithm and proofs of soundness and termination.

An audience member asks how this differs from refinement-type approaches such as Coda. Kolozyan answers cautiously, saying he had seen the Coda paper some time earlier and might be mistaken. His understanding is that Coda introduced a new programming language, whereas this work tries to support languages developers already use. He also suggests Coda may formally verify refinement types with an SMT-like approach, which could make it more precise but potentially slower than the abstract-interpretation approach presented here.

Language agnosticism comes from targeting an intermediate representation

The implementation question is whether a detector should support one ZKP language or many. Arman Kolozyan argues for many. The ZKP ecosystem has moved from libraries to domain-specific languages and, more recently, zkVMs. His work focuses on domain-specific languages rather than zkVMs, because zkVMs aim to hide cryptographic details from programmers and, in his account, currently have limitations that keep them from being widely used in real-world applications.

To justify multi-language support, the authors studied ZKP applications on GitHub and the languages used by new repositories over time. Circom has been highly popular, but the chart Kolozyan shows suggests Noir has been gaining traction in recent years. A detector built only for Circom risks not being future-proof.

Their solution is to target an intermediate representation rather than each source language directly. Kolozyan points to CirC, a shared compiler infrastructure for cryptographic tools. CirC translates programs written in different ZKP languages into an intermediate representation that can be consumed by solvers or proof systems. For this work, the important feature is that the IR already separates computations from constraints.

By operating on that representation, the detector can support multiple languages once those languages are translated into the IR. Kolozyan says the authors also added support for Noir and Circom to the CirC compiler. In the conclusion, he notes that another ZKP auditing company, Veridise, had recently proposed a new intermediate representation intended to be more readable, which he presents as further evidence that IR-level analysis is a useful direction.

The evaluation shows speed gains, false positives, and real vulnerabilities

The evaluation has two parts: benchmark comparison and real-world vulnerability discovery.

On 35 tagged benchmark programs from CIVER, an SMT-based verifier, the authors compared effectiveness and runtime. Arman Kolozyan says the tool was faster on all 35 programs. On average, it was two orders of magnitude faster. The reason, in his account, is that the abstract interpretation engine pattern-matches inference rules; it does not ask an SMT solver to reason through nonlinear equations.

The cost is the expected imprecision of over-approximation. Kolozyan says the tool produced false positives, especially for division-by-zero cases. The source description characterizes the broader result as comparable precision with substantially better speed; the talk itself emphasizes both the speedup and the presence of false positives.

The real-world evaluation described in the talk looked at ZKP projects with some existing recognition on GitHub. Kolozyan names a Microsoft project for proving possession of a valid mobile driver’s license, a TikTok project used in the context of trusted execution environments and attestations, and Self, an application for proving attributes such as being over 18 or having a nationality. Across these applications, the authors found cases where assumptions of templates or functions were not met by the corresponding verification side.

The source description supplies the broader quantitative result: using the DCM, the authors examined six widely adopted ZKP projects and found 15 previously unknown vulnerabilities. They reported the bugs to maintainers; 13 were patched. Of the 15, 12 could not be captured by existing models.

Kolozyan gives one concrete example from Self, which he says had been missed during an audit by a major ZKP auditing company. The relevant code is a LessThan(n) template that checks whether one input is less than another. In the tagged version, the function assumes its inputs are representable in n bits, meaning it has range restrictions on the inputs. The vulnerability arose because the function was used without adding the constraints needed to satisfy those assumptions. The authors were able to create a fake proof exploiting that gap.