GitHub Agentic Workflows Turn Actions Into AI-Run Development Processes

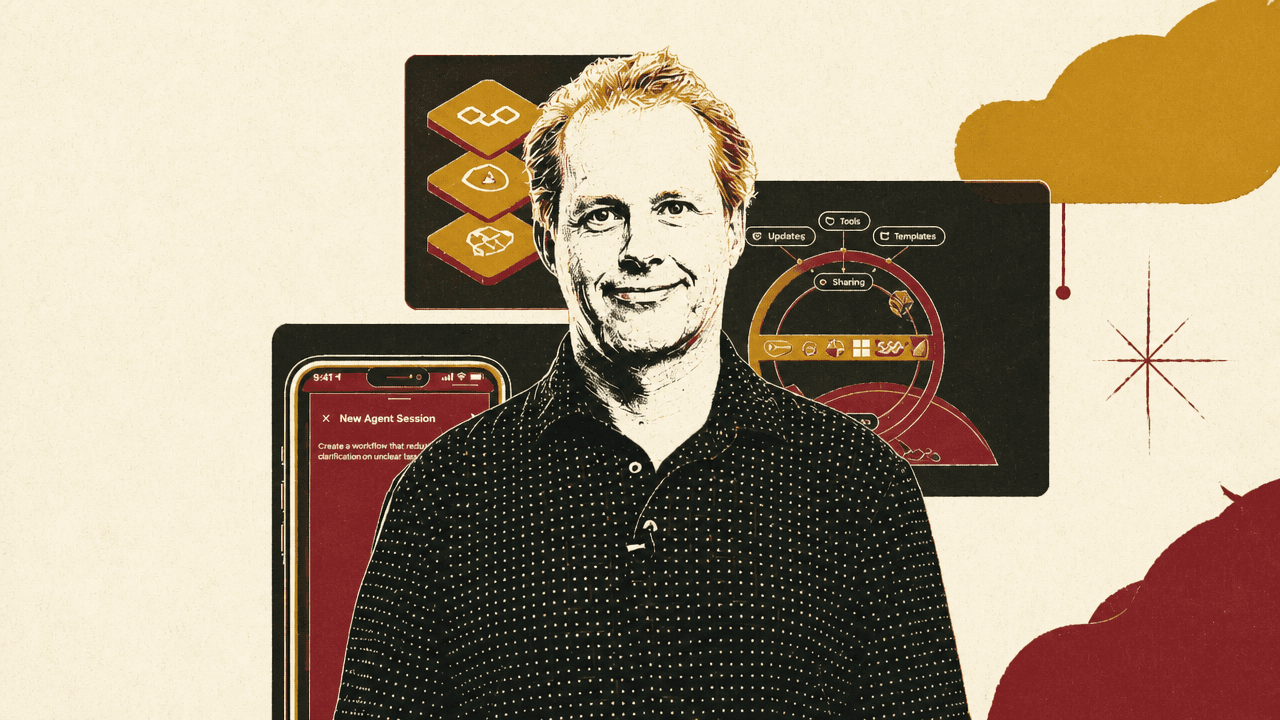

Microsoft Research’s Peli Halleux and Yash Lara present GitHub Agentic Workflows as a move from AI-assisted coding to repository-level process automation. Their argument is that agents should be embedded inside GitHub Actions to research, plan, assign, and open pull requests under human review, rather than operate as unconstrained swarms. The system’s promised scale depends on orchestration, sandboxing, limited permissions, and Microsoft-hosted models on Azure.

The claim is not faster coding; it is process automation at repository scale

Yash Lara introduced GitHub Agentic Workflows as an attempt to make a repository “run itself”: handle issues, automation, and workflows end to end without brittle scripts or manual glue. The system brings AI agents into GitHub Actions, uses built-in guardrails, and runs Microsoft-hosted models on Azure.

Peli Halleux described the project as a collaboration among Microsoft Research, GitHub, GitHub Next, and Azure Core. Its ambition is broad: use agents to automate “the entire spectrum of the software development lifecycle,” including testing, code generation, documentation, and other repository work.

The important distinction is between individual productivity and process multiplication. A slide contrasted three levels of acceleration: completion at “1.4x,” a solo developer at “10x,” and automation at “100-1000x.” Microsoft Research’s case is that automation, not assisted coding alone, is “the true accelerator of agentic transformation.”

That scale is not supposed to come from unleashing many independent agents into a repository. Halleux explicitly rejected “swarms and chaos” as the model. The intended model is “order and orchestration,” built around processes.

The repository becomes an orchestrated process, not just a place to answer prompts

GitHub Agentic Workflows combines three layers: automation through GitHub Actions, safety through a sandbox, and reasoning through GitHub Copilot CLI. Together, those layers are meant to support “agentic human processes powered by GitHub.”

The phrase “agentic human processes” matters. The system is not presented as a fully autonomous replacement for development teams. The example Halleux walked through includes multiple agents and multiple human decision points. Agents research, transform reports into work items, assign tasks, and open pull requests. Humans inspect findings, decide whether to continue, comment, review, and merge.

The concrete example was a “Research - Plan - Assign” workflow. It begins with Deep Research running on a schedule inside the repository. In Halleux’s example, the agent tries to solve a problem such as finding duplicate code and produces a report.

A developer reviews the report. If the findings look useful, the developer starts another agent to turn the report into work items. An automated agent called Issue Monster then picks up issues tagged with “cookie” (Halleux noted that it “loves to eat cookies”) and assigns them to Copilot. Copilot turns each task into code and opens a pull request, which goes through the usual review loop: the developer can comment and review, and the work may eventually be merged.
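Issue Monster’s role, picking up issues that carry a given label and handing them to Copilot, amounts to a filter-and-assign pass. The following is a minimal sketch over an in-memory list, assuming a simple rule that already-assigned issues are skipped. The `Issue` class, the `issue_monster` function, and the `"copilot"` assignee string are hypothetical stand-ins, not the GitHub API or the project’s implementation.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Issue:
    """Hypothetical in-memory issue record, not the GitHub API object."""
    number: int
    title: str
    labels: set[str] = field(default_factory=set)
    assignee: str | None = None


def issue_monster(issues: list[Issue], label: str = "cookie", assignee: str = "copilot") -> list[int]:
    """Assign every unassigned issue carrying `label` to `assignee`; return their numbers."""
    assigned = []
    for issue in issues:
        # Only pick up labeled issues that nobody owns yet (an assumed rule).
        if label in issue.labels and issue.assignee is None:
            issue.assignee = assignee
            assigned.append(issue.number)
    return assigned


issues = [
    Issue(1, "Deduplicate parser helpers", {"cookie"}),
    Issue(2, "Question about the build", {"question"}),
    Issue(3, "Merge duplicate retry loops", {"cookie"}, assignee="alice"),
]
print(issue_monster(issues))  # [1]
```

Running the pass a second time assigns nothing, because issue 1 now has an owner; that idempotence is what makes a scheduled, repeated pickup safe in this sketch.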

| Stage | Actor | Output or action |
|---|---|---|
| Research | Deep Research | Researches in the repository and creates an artifact |
| Plan | Developer and another agent | Developer inspects the artifact; if useful, another agent turns the report into work items |
| Assign | Issue Monster, Copilot, and developer | Issue Monster picks up tagged issues and assigns them to Copilot; Copilot works on the task, opens a pull request, and the developer reviews and merges |
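The control flow in the table can be sketched as a pipeline in which agents produce artifacts and a human gates each transition. This is a minimal Python sketch, assuming plain function calls in place of GitHub Actions triggers: `deep_research`, `plan`, and `run_pipeline` are hypothetical names, the agents are stubs returning fixed data, and the two approval callbacks stand in for the developer’s decisions.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Report:
    """Artifact produced by the research stage."""
    findings: list[str]


def deep_research() -> Report:
    # Stub for the scheduled research agent; a real run would inspect the repository.
    return Report(findings=["duplicate retry loop", "copy-pasted config parser"])


def plan(report: Report) -> list[str]:
    # Stub for the planning agent: one work item per finding.
    return [f"Refactor: {finding}" for finding in report.findings]


def run_pipeline(
    approve_report: Callable[[Report], bool],
    approve_pr: Callable[[str], bool],
) -> list[str]:
    """Run Research -> Plan -> Assign and return the pull requests that were merged."""
    report = deep_research()
    # Human checkpoint 1: the developer decides whether the report is worth acting on.
    if not approve_report(report):
        return []
    merged = []
    for item in plan(report):
        pr = f"PR: {item}"  # stand-in for Copilot opening a pull request
        # Human checkpoint 2: the developer reviews and decides whether to merge.
        if approve_pr(pr):
            merged.append(pr)
    return merged


# Example: accept the report, but merge only the config-parser change.
print(run_pipeline(lambda r: bool(r.findings), lambda pr: "config" in pr))
# ['PR: Refactor: copy-pasted config parser']
```

The design point the sketch tries to capture is that no agent output reaches the main branch without passing through both human gates; rejecting the report stops the whole pipeline before any work items exist.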

The example shows the intended unit of automation. The agent is not just answering a prompt. It is embedded in a repository process with triggers, artifacts, issue labels, assignments, pull requests, and human checkpoints. GitHub Actions supplies the event-driven automation layer; the agent supplies reasoning over the task; and the sandbox constrains what the agent can access, do, and affect.

Safety is treated as a first-order design constraint

Peli Halleux emphasized that agents are dangerous when left unattended. In an agentic repository process, untrusted content can enter through pull requests, issues, web pages, web queries, or other inputs. Those inputs can contain adversarial strings intended to take over an agent.

Agents are dangerous if they are left unattended.

The security diagram named several threat categories: prompt injection, permissions abuse, data exfiltration, and malware injection. It also identified the kinds of incoming material that can carry risk: pull requests, issues, web pages, and other inputs.

The proposed response is a sandbox that enforces what the agent can “access, do, and affect.” The diagram described a set of constraints and controls: read-only permissions, zero secrets, containers and firewalls, and integrity filtering. Safe outputs included creating an issue, creating a pull request, and sending a message.

| Area | Risk, control, or boundary shown |
|---|---|
| Threats | Prompt injection, permissions abuse, data exfiltration, and malware injection |
| Untrusted inputs | Pull requests, issues, web pages, and other inputs can carry adversarial strings |
| Sandbox enforcement | Constrains what the agent can access, do, and affect |
| Permissions and secrets | Read-only permissions and zero secrets |
| Execution and filtering | Containers, firewalls, and integrity filtering |
| Allowed outputs | Create issue, create pull request, and send message |
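One way to read the “read-only permissions, zero secrets, safe outputs” combination is that the agent never writes directly: it only proposes outputs, and a separate trusted step performs those on an allow-list. The sketch below models that reading. The deferred-write design, the `SandboxPolicy` and `ProposedOutput` types, and the output-kind strings are assumptions for illustration, not the project’s actual mechanism.

```python
from __future__ import annotations

from dataclasses import dataclass

# Allowed output kinds from the slide; these string names are assumptions.
SAFE_OUTPUTS = frozenset({"create_issue", "create_pull_request", "send_message"})


@dataclass(frozen=True)
class ProposedOutput:
    """Something the agent asks to do; nothing happens until the sandbox allows it."""
    kind: str
    payload: str


@dataclass(frozen=True)
class SandboxPolicy:
    permissions: str = "read"        # the agent job itself runs read-only
    secrets: tuple[str, ...] = ()    # zero secrets exposed to the agent
    allowed_outputs: frozenset[str] = SAFE_OUTPUTS


def apply_outputs(
    policy: SandboxPolicy, proposed: list[ProposedOutput]
) -> tuple[list[ProposedOutput], list[ProposedOutput]]:
    """Split proposals into those a trusted step may execute and those it drops."""
    if policy.permissions != "read" or policy.secrets:
        raise ValueError("agent job must run read-only with no secrets")
    accepted = [p for p in proposed if p.kind in policy.allowed_outputs]
    rejected = [p for p in proposed if p.kind not in policy.allowed_outputs]
    return accepted, rejected


proposals = [
    ProposedOutput("create_issue", "Duplicate code in parser"),
    ProposedOutput("push_to_main", "force-push refactor"),  # e.g. induced by a prompt injection
]
ok, blocked = apply_outputs(SandboxPolicy(), proposals)
print([p.kind for p in ok], [p.kind for p in blocked])
# ['create_issue'] ['push_to_main']
```

Under this assumption, a successful prompt injection can at worst produce an allow-listed output such as a new issue, which still lands in front of a human, rather than an arbitrary write to the repository.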

Halleux said Microsoft Research had invested in “a lot of layers of security” and described safety as a central aspect of agentic workflows.

Authoring moves from YAML to Markdown prompts

To support agents, the project changes the workflow authoring format: instead of GitHub Actions YAML, workflows are written in Markdown, a format Halleux described as “very popular for agents.”

The format shown places front matter at the top, holding the existing Actions-style configuration plus what Halleux called “agentic stuff,” followed by a prompt. The screenshot used an issue-clarifier.md file under .github/workflows. Its visible content showed a trigger for opened issues, read-only permissions by default, one allowed write operation (adding a comment), and a prompt instructing the agent to analyze the current issue and ask for additional details if the issue is unclear.
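The two-part layout, configuration on top and prompt underneath, can be made concrete with a small splitter. The sample file in this Python sketch is illustrative: it is modeled on the screenshot’s description rather than copied from it, and its key names (`on`, `permissions`, `safe-outputs`, `add-comment`) are assumptions. The sketch does not parse the YAML itself or model how Actions executes the workflow.

```python
from __future__ import annotations

# Illustrative workflow file; the front-matter keys are assumptions, not a spec.
ISSUE_CLARIFIER = """\
---
on:
  issues:
    types: [opened]
permissions: read-all
safe-outputs:
  add-comment:
---
Analyze the current issue. If it is unclear, ask the author for additional details.
"""


def split_workflow(text: str) -> tuple[str, str]:
    """Split a Markdown workflow into (front matter, prompt body).

    Expects the first line to be '---' and the front matter to end at the
    next '---' line; everything after that is treated as the prompt.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("missing front matter")
    try:
        end = next(i for i in range(1, len(lines)) if lines[i].strip() == "---")
    except StopIteration:
        raise ValueError("unterminated front matter") from None
    front = "\n".join(lines[1:end])
    prompt = "\n".join(lines[end + 1:]).strip()
    return front, prompt


front, prompt = split_workflow(ISSUE_CLARIFIER)
print(prompt)
```

Keeping the permissions and allowed writes in the front matter, separate from the prompt, means an agent editing the natural-language instructions does not by that act widen what the workflow may do.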

The same screenshot showed a mobile chat interface with the request: “Create a workflow that asks for clarification on unclear issues.” Users are not expected to edit these files by hand; the workflow itself can be authored by an agent from a natural-language request.

Halleux also suggested that interaction with agents will not require a laptop. It could happen from a phone or through voice. The development artifact remains a repository workflow, but the interface for creating and modifying it becomes conversational.

The larger target is information work, not only software development

The repository is the first setting for the model rather than the boundary of the ambition. Peli Halleux widened the scope beyond software development, arguing that agentic human processes will change “any process that uses information and uses reasoning.”

That includes non-developers in marketing, sales, and operations. The claim is that anyone who uses a Copilot for reasoning today may eventually want to automate it. The visual connected agents to organizational functions such as marketing, sales, and operations, alongside platform logos including GitHub, Salesforce, SAP, and Dynamics 365. It also included the constraint: “Agents can only reason about what’s accessible.”

GitHub Agentic Workflows is presented as open source, with the project available at github.github.com/gh-aw.