AI’s Biggest Disruption Requires Rebuilding Markets Around Agents

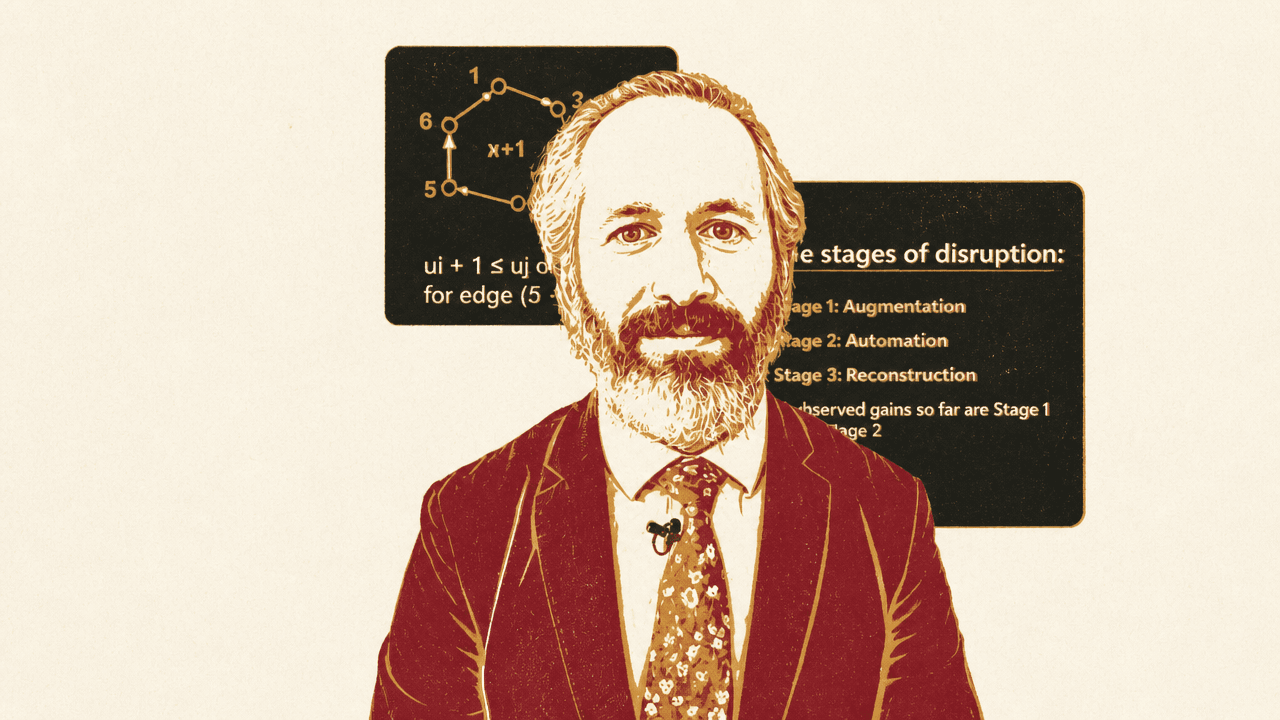

David Rothschild argues that AI’s largest economic effects will come less from better models than from whether workflows and markets are rebuilt for agents rather than humans. In his Microsoft Research Forum talk and related work on agentic markets, he says the key question is architectural: open systems could reduce communication friction and spread welfare gains, while closed platforms could use the same capabilities to reinforce incumbency. The transition, in his account, depends on choices about delegation, monitoring, auditability, and market access that are being made before the full disruption is visible.

Open agent markets or entrenched platforms

David Rothschild argues that the largest economic and social consequences of AI will not be determined only by model capability. They will depend on the architecture of the markets and workflows into which agents are deployed: whether agents can coordinate broadly across systems, or whether their interactions are constrained by incumbent platforms.

The central market claim is direct. Lower communication friction means lower lock-in. If agents can coordinate micro-tasks and payments across a wider ecosystem, the returns to innovation increase and the welfare gains are more likely to reach businesses and consumers rather than accrue mainly to central platforms. If interaction is constrained, the same AI capabilities can reinforce existing market power.

Incumbents benefit from constraining agent interaction, while society benefits from reducing the friction that constrains it.

That is why Rothschild describes the “default trajectory” as entrenchment. Firms with existing consumer access have incentives to preserve control over market share. By contrast, open and safe agentic ecosystems require investment by organizations and governments, and those investments are costly and difficult to coordinate. Without them, AI may produce “speed without restructuring”: faster versions of today’s platforms rather than redesigned markets.

Rothschild makes a narrower point about workers: worker surplus depends on how delegation, monitoring, and entry are governed. As agents take on more tasks and possibly more authority, the distributional consequences depend not only on what agents can do, but on the rules that determine who may delegate, how that delegation is monitored, and how entry into agentic markets is handled.

The visible gains are not where the largest disruption lives

The current moment presents a puzzle: AI capabilities feel extraordinary, yet large-scale economic disruption still appears muted. David Rothschild’s explanation is not that model quality is irrelevant, or that processing power no longer matters. It is that most AI is still being inserted into workflows built for humans.

He divides disruption into three stages. Stage 1 is augmentation: AI helps with individual tasks such as writing, summarizing, and coding inside existing processes. Stage 2 is automation: routine tasks move “under the hood,” while humans monitor at a higher level. But even then, the basic architecture remains the same: forms, approvals, queues, and human-centered handoffs.

| Stage | What changes | What remains constrained |
|---|---|---|
| Stage 1: Augmentation | AI speeds up individual tasks such as writing, summarizing, and coding. | Workflows are still built for humans. |
| Stage 2: Automation | Routine tasks move under the hood and humans monitor at a higher level. | Forms, approvals, queues, and human-centered architecture remain. |
| Stage 3: Reconstruction | Workflows and markets are rebuilt around AI’s native strengths. | The constraint shifts to institutions, incentives, trust, and governance. |

Stage 3 is reconstruction. That is where Rothschild locates the largest welfare gains. In this phase, workflows and markets are rebuilt around AI’s native strengths: parallelism, memory, continuous monitoring, and machine-to-machine interaction. Systems no longer need to be organized around a user experience designed primarily for humans.

Most observed gains so far, in his view, are Stage 1 and early Stage 2. The “things we’re going to remember about this disruption” are in Stage 3. But that stage is hard to reach because it is not merely a software upgrade. It requires changing the surrounding institutions, incentives, and organizational machinery.

Stage 3 is blocked by authority and accountability, not just capability

David Rothschild’s second claim is that the binding constraints on Stage 3 adoption are “not necessarily technical.” Reconstruction requires organizations to delegate real authority to agents, create trust and accountability infrastructure, make data and constraints machine-legible, and reward redesign rather than local optimization.

That last distinction matters. If organizations use AI only to optimize existing processes, they remain inside the same human-centered architecture. Reaching Stage 3 means, in Rothschild’s phrase, “getting through that innovator’s dilemma”: being willing to “kill what you have” and create something new.

He compares AI’s adoption arc to earlier general-purpose technologies, including electricity, computers, and the internet. The implication is not that AI disruption is absent. It is that major disruption requires complementary changes in organizations and governments. The bottleneck is not just raw capability; it is the infrastructure that makes delegation safe, accountable, and economically attractive.

This is where coordination frictions become a policy and design choice rather than a technical footnote. Agentic systems need rules for delegation, monitoring, auditability, entry, and machine-to-machine interaction. Those rules determine whether agents can participate in open markets or whether they remain bound to closed intermediaries that preserve existing bottlenecks.

Synthetic agentic markets make the fork testable

To study these choices, David Rothschild describes work on synthetic agentic marketplaces: controlled environments where consumer assistant agents interact with business service agents through markets. The goal is to study, and potentially influence, the paths by which Stage 3 systems evolve.

One reason he values this approach is that “for the most part, agents don’t know they’re in a lab.” In his view, that gives the experiments external validity: researchers can observe agent behavior in market-like settings while still controlling the conditions.

These environments let researchers examine questions that would be confounded in the wild: how market outcomes change when interactions move from human-to-agent to agent-to-agent coordination; what breaks when agents receive real authority rather than advisory roles; what governance mechanisms are necessary for humans to delegate safely; and what forms of constraint, monitoring, and auditability are required.

The synthetic markets also allow red teaming by adding malicious actors. That matters because a reconstructed market cannot be evaluated only under benign conditions. It has to be tested under adversarial pressure as well.
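The red-teaming idea can be sketched in a few lines. This is a toy illustration only, not the research environment Rothschild describes: the `BusinessAgent` type, the `choose` function, the agent names, and all quality numbers are hypothetical. It assumes a consumer agent that simply picks the highest quality claim, and an audit mechanism that perfectly reveals true quality.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BusinessAgent:
    name: str
    true_quality: float        # what the consumer actually receives
    advertised_quality: float  # what the agent claims; a malicious agent overstates it


def choose(businesses: list[BusinessAgent], audit: bool) -> BusinessAgent:
    """Consumer agent picks the best offer.

    Without auditing it trusts advertised quality; with auditing
    (assumed perfect here) it ranks by true quality instead.
    """
    if audit:
        return max(businesses, key=lambda b: b.true_quality)
    return max(businesses, key=lambda b: b.advertised_quality)


# Hypothetical market: two honest sellers, plus one malicious entrant.
honest = [BusinessAgent("a", 0.7, 0.7), BusinessAgent("b", 0.8, 0.8)]
attacked = honest + [BusinessAgent("mallory", 0.2, 0.99)]

benign_outcome = choose(honest, audit=False).true_quality
attacked_outcome = choose(attacked, audit=False).true_quality
audited_outcome = choose(attacked, audit=True).true_quality

print(benign_outcome, attacked_outcome, audited_outcome)
```

Under these assumptions the market looks healthy when tested only with honest sellers, degrades once the malicious entrant is added, and recovers when claims are audited. Real agents and real audits are far noisier, which is the reason for testing in a controlled marketplace rather than reasoning from a sketch like this one.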

The claim is not that adding AI on top of existing workflows has no value. It is that it does not produce the same results as rebuilding the interaction layer around what AI can actually do. The lab environments are meant to make that difference concrete.

Surplus allocation changes even when AI capability is fixed

Alongside the lab work, David Rothschild says the research uses formal theoretical models to study agentic markets at a higher level of abstraction. The purpose of theory is not fine prediction. It is comparative statics: isolating how different design choices change outcomes.

Those choices include open versus closed ecosystems, centralized versus decentralized discovery, varying feedback mechanisms, and different degrees of delegation. Theory also guides experimentation by identifying which design variables are worth testing.

The consistent result, according to Rothschild, is that architectural choices shape equilibrium behavior and surplus allocation even when AI capability is held fixed. Better models are therefore not the whole story. The distribution of gains also depends on how markets are structured: whether discovery is centralized or decentralized, whether ecosystems are open or closed, how much authority agents receive, and what feedback and governance mechanisms constrain them.
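A minimal comparative-statics sketch shows the shape of that argument. It is not Rothschild's model: the `Market` type, the `split_surplus` function, and the numbers are hypothetical, and the key assumption (an intermediary can extract a fee up to the cost of switching away from it, but never more than the total value created) is a deliberately crude stand-in for lock-in.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Market:
    value: float           # total surplus per transaction; held fixed as a proxy for AI capability
    switching_cost: float  # friction a participant pays to reach a rival intermediary


def split_surplus(m: Market) -> dict[str, float]:
    """Divide surplus between the intermediary and market participants.

    Toy assumption: the intermediary charges a fee equal to the
    switching cost, capped at the total value of the transaction.
    """
    fee = min(m.switching_cost, m.value)
    return {"platform": fee, "participants": m.value - fee}


# Same capability (value) in both cases; only the architecture differs.
closed = Market(value=10.0, switching_cost=6.0)       # high friction, strong lock-in
open_market = Market(value=10.0, switching_cost=1.0)  # low friction, weak lock-in

closed_split = split_surplus(closed)
open_split = split_surplus(open_market)

print(closed_split)
print(open_split)
```

Total surplus is identical in both cases, but the share reaching businesses and consumers rises as switching costs fall. A fuller model would let discovery, feedback, and delegation rules change the total as well as the split.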

For Rothschild, delay is itself a decision. Early architectural choices can harden quickly, with long-term consequences for the economy and society. The task is not only to anticipate where AI is going, but to shape the systems into which agents are being deployed so the gains are larger and more equitably distributed.