AI Cyber Models Push Trump Administration Toward Pre-Release Safety Reviews

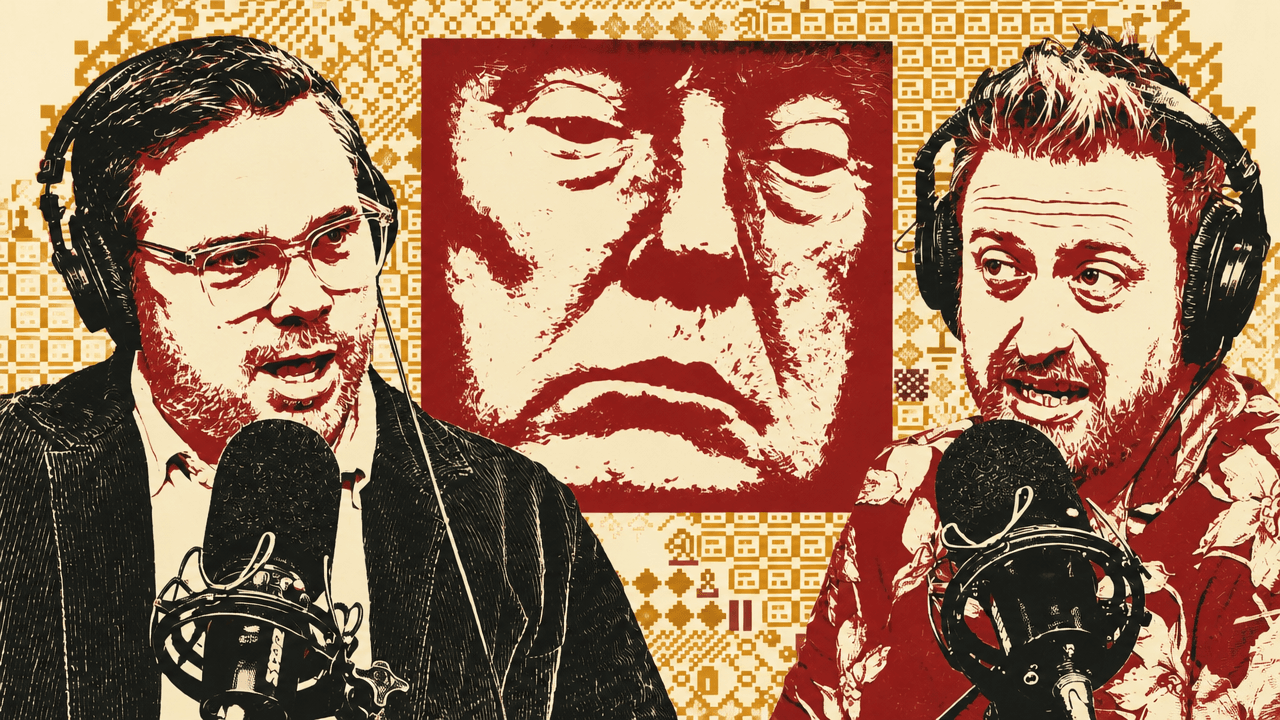

Kevin Roose and Casey Newton argue that the Trump administration’s shift toward AI safety is being driven by frontier models that can find and chain software vulnerabilities, not by a broad ideological conversion. Drawing on New York Times reporting about a possible executive order for pre-release model review, they describe a policy scramble over Anthropic’s Mythos, chip access to China and which federal agency should judge dangerous models. Nikesh Arora, Palo Alto Networks’ chief executive, says the cyber problem is already operational: attacks that once unfolded over days may soon move in minutes.

Washington is reconsidering pre-release AI review because the models changed

The Trump administration’s AI-safety reversal is being driven less by an abstract change in ideology than by a concrete capability: frontier models that can find novel software vulnerabilities and help chain them into exploitable attacks. The reported policy response, as Kevin Roose described from New York Times reporting by his colleagues, is a possible executive order creating an AI working group of technology executives and government officials. One option under discussion is a formal government review process for new AI models before they are released.

The on-screen New York Times headline framed the shift plainly: “White House Considers Vetting A.I. Models Before They Are Released.” Its subhead said the Trump administration, after taking a noninterventionist approach to artificial intelligence, was now discussing oversight of AI models before public release, and that the shift began after Anthropic introduced a powerful model called Mythos.

That would be a striking turn. Casey Newton noted that on President Trump’s first day back in office, he canceled President Biden’s AI executive order, which among other things included a similar review process for new frontier models. The Biden team, Newton said, believed models might eventually be used to commit great harm and wanted a handle on that before release. Many Republicans had attacked that approach as anti-innovation and as a path to losing ground to China. Roose added that during the Biden administration and the fight over California’s SB 1047, parts of the tech right and the more libertarian technology world denounced pre-release testing and submission of results to the government as “communist” and as a threat to free enterprise. Now, according to the reporting discussed, a similar premise is back under consideration.

Newton gave the blunt explanation: the administration’s earlier view “did not survive contact with reality.” The reality, in his account, is Anthropic’s Claude Mythos, a preview model released only to a limited group that includes federal agencies and selected companies. Mythos is described as unusually capable at finding novel vulnerabilities in code across many programs. Newton said serious people in the administration appear to have looked at that capability and concluded that, whatever their views on free trade or competition with China, a publicly released model of this kind could create “vast amounts of harm.”

The Trump administration’s view of AI just did not survive contact with reality.

The shift is not clean. Roose called the federal posture “entirely confused and incoherent.” On one side, President Trump traveled to China with technology executives, including Nvidia chief Jensen Huang, amid questions about whether the administration would make it easier for Chinese buyers to get Nvidia’s most powerful AI chips. On the other, senior officials are discussing safety regimes because frontier models may be dangerous.

The contradiction is also visible inside the Pentagon. Newton said the Pentagon has designated Anthropic as a supply-chain risk because the company refused to amend its contract to allow any “lawful use” of its technology, and that the Pentagon is still arguing for that designation in court. At the same time, during the period when the Pentagon is supposed to be unwinding Anthropic’s technology, Newton said it is also implementing Mythos to scan for vulnerabilities.

The policy question is not only whether to review models, but who gets to do it. Roose identified a turf fight between the Center for AI Standards and Innovation, or CASI, and parts of the intelligence community such as the NSA. CASI, formerly the U.S. AI Safety Institute, sits inside the Commerce Department and includes AI researchers and safety experts. The Trump administration removed “safety” from the name after taking office, which Newton found darkly funny given the administration’s later rediscovery of safety concerns.

Newton favored CASI as the natural home for model evaluation because, in his view, it was created for exactly this problem: models getting stronger, becoming dangerous, and requiring evaluation before release. But he also pointed to the unresolved enforcement question. If a company produces a model that CASI or a similar body considers too dangerous to release, and the company wants to release it anyway, what happens? Newton expects that scenario soon: sometime within the next six months, he said, one of these companies may decide that a risky model is commercially important, talk itself into release, and force the government to decide whether review has teeth.

Roose’s broader concern is that AI safety was polarized before the technology forced a practical reckoning. Talking about safety, he said, became “vaguely woke coded,” with some in the Trump administration treating safety advocates as hysterical liberals seeking heavy-handed regulation. He said that framing was never true and is now especially hard to sustain as senior Republicans begin talking about restraining powerful systems. He pointed to catastrophic and existential risk language emerging on the right, including Ted Cruz discussing catastrophic risk that week, after figures such as Bernie Sanders had raised similar concerns on the left.

Newton argued that the administration’s laissez-faire posture was never broadly representative of public opinion. He said surveys have shown Republicans and Democrats largely aligned in skepticism or hostility toward AI, and that Republican state legislatures have been racing to regulate it. In his view, the “all gas, no brakes” position was held by a minority of Republicans who happened to be running the country. Mythos forced the bill to come due.

The China strategy does not yet reconcile chips, models, and access

The China trip sharpened a basic contradiction in the posture Roose and Newton described: the United States is trying to deny adversaries access to dangerous frontier models while also considering choices around chip exports that, in Roose’s view, could help China build models of similar caliber. Roose said AI was on the agenda for Trump’s meetings with Xi Jinping, and the source showed a Wall Street Journal headline reporting that the United States and China were pursuing guardrails to stop AI rivalry from spiraling into crisis. The visible subhead said Washington and Beijing recognized that powerful AI models could trigger crises neither side was prepared to manage.

China, meanwhile, has been seeking access to Mythos. Kevin Roose cited New York Times reporting that a representative from a Chinese think tank approached Anthropic officials at a meeting in Singapore and lobbied for China to receive access to the model. The on-screen Times headline put the matter directly: “China Sought Access to Anthropic’s Newest A.I. The Answer Was No.” Newton called the request an act of “shooting your shot,” given the unlikelihood that Anthropic would hand over a model at the center of American national-security concern.

The business delegation reflected the commercial side of the policy conflict. Roose said Huang “finagled” a last-minute invitation on Air Force One after reports that he would not attend. Elon Musk, Tim Cook, and Dina Powell McCormick of Meta were also on the trip. Casey Newton characterized the executives as aligned with the administration and willing to flatter Trump in pursuit of favorable treatment for their companies.

Roose saw the central contradiction in Nvidia’s chip business. Trump may want deals in China, and Nvidia wants to sell powerful AI chips there. But if the administration gives Chinese AI companies expanded access to American chips while also blocking China from the American model that exists today, Roose said the strategy is hard to reconcile: it would mean selling China the means to make its own Mythos-caliber models while trying to keep Mythos itself away from them. Newton said this is where a coherent strategy would help; instead, he argued, the administration appears highly susceptible to whichever pressure is strongest at a given moment.

The “AI is just a normal technology” position also came under pressure. Roose recounted a recent conversation with a federal official who made the familiar argument that AI is like the internet or the PC and does not require special rules. Roose said that view has become untenable when models can find zero-day exploits. Military and intelligence agencies, he argued, clearly do not treat AI as ordinary software; they treat it as a step change requiring different behavior.

The access debate is not limited to China. Roose said Germany’s digital-affairs and cybersecurity authorities were discussing a proposal for their own version of something like CASI and were demanding access to state-of-the-art models such as Mythos. The model has forced governments to ask who should have access, when, and under what restrictions: the public, domestic agencies, allied governments, adversaries, or selected companies. Roose called the emerging situation “a new era of AI brinksmanship.”

Newton hoped the next phase would include more cooperation among Western powers. He pointed to prior AI action summits as attempts to build that cooperation, and said the United States had more recently taken a winner-take-all posture: America is winning the AI race, and others can accept it. In his view, allied governments may have a legitimate case for access because finding and fixing internet-scale vulnerabilities is not a problem the United States can solve alone.

A necessary safety regime could still become a speech-control regime

Newton treated the administration’s reversal as a rare piece of good news in AI regulation. He has long worried that the government’s approach amounted to “let’s just see what happens,” even though, in his view, the trajectory was visible. Now that a powerful cyber model exists, he credited the administration for seeming to admit its earlier assumptions about model capability were wrong and for considering changes.

Kevin Roose agreed in broad terms but raised the risk of regulatory misuse. He compared the situation to social media, where some people wanted regulation but then saw Trump-era pressure campaigns that Roose and Newton viewed as censorship or coercion of platforms. AI pre-release testing could similarly test for the wrong things or become a tool for political control.

Casey Newton accepted that danger. He said he is sympathetic to arguments that pre-release model review could become a kind of prior restraint on speech. He specifically warned that officials might block a model not because it is dangerous but because they deem it “woke and gay.” That possibility, he said, would need to be watched and eventually litigated. But for the moment, he preferred a government willing to say that “the crazy cyber model” should not be given to everyone.

Roose landed in a similar place. The regulatory push from the right may be confused, sudden, or overreaching, and it could drift toward censorship. But he was glad that, after years of denying how consequential the technology could become, the government appears open to stepping in.

Cyber defense was built for days; AI attacks may operate in minutes

Nikesh Arora, chief executive and chairman of Palo Alto Networks, rejected panic as a posture but not the underlying concern. The major change in cybersecurity, he said, is speed. Over the past seven years, the time between a breach and the extraction of an organization’s “crown jewels” was measured in days. With AI and related technologies, he said, that timeframe has shrunk to minutes.

That shift breaks the assumptions under which much cybersecurity infrastructure was built. Defensive systems designed for days must now operate in minutes, and the best components need to move in seconds. Arora said backend infrastructure has to be overhauled so defenders can “fight AI with AI.” Anthropic’s Mythos and OpenAI’s GPT-5.5 Cyber, in his view, show “the art of the possible” from a bad-actor perspective; defenders have to move at least as fast.

The broader context is already visible in vulnerability disclosures. Casey Newton pointed to a Mozilla chart shown in the source: Firefox security bug fixes rose to 423 in April 2026, after monthly counts mostly in the teens and twenties through 2025, with 61 in February 2026 and 76 in March 2026. He also cited Google’s announcement that, for the first time, its threat-intelligence group had identified an attacker using a zero-day exploit it believed was developed with AI. Canvas, the learning platform, was also hit by a cyberattack that forced the site down for several hours and led Instructure to negotiate with hackers for the return and destruction of stolen data.

Palo Alto Networks had its own spike. The company disclosed 26 critical CVEs representing 75 issues in a May “Patch Wednesday” advisory, compared with its usual volume of fewer than five CVEs in a month. The on-screen Palo Alto Networks advisory said this was the first time the majority of findings came from frontier AI models scanning its code, and that none were being exploited in the wild. It also said the company had patched all important vulnerabilities in its SaaS-delivered products and made patches available for all customer-operated products.

Arora said the company found five to seven times its normal volume, but he framed this as a “great cleansing” rather than a permanent monthly baseline. AI models have become good at coding, and as they learn what good code looks like, they also learn what bad code looks like. When pointed at a repository and asked to find bad code, they will find it. Humans, he added, have been writing bad code for a long time.

He cautioned that the process still required substantial human work. Palo Alto ran a concerted effort, with hundreds of engineers checking under every rock and running products through the models. The hope is that, after clearing a backlog of “tech debt” or “vulnerability debt,” the same spike will not recur. But he expects many organizations to face a similar reckoning as they test old code with more capable models.

The harder problem may be open source. Proprietary code can be patched by the company that owns it; open-source components are widely used and often remediated more slowly. Arora also said Mythos and other models were especially good at “daisy chaining” vulnerabilities — finding ways to connect weaknesses into practical exploit paths. That is one of the capabilities defenders must now contend with.

Newton asked whether there is enough time to fix critical infrastructure before adversaries gain access to similarly capable systems. Arora said that is the question that “should keep us up at night.” Not every organization has the resources to fix code written 20 years ago. Major cyber defenders have seen the models and understand part of the problem, and systems integrators such as IBM, PricewaterhouseCoopers, Deloitte, and Accenture are being enlisted to help customers patch.

Palo Alto is also testing a temporary defense: once it knows a customer has a vulnerability, it can write perimeter firewall signatures to block attempts to exploit that path, giving the organization more time to fix the underlying code. That scaffolding does not eliminate the race. Arora’s worry is that open-source projects, nation states, or third parties build models similar to Anthropic’s or OpenAI’s before enterprises have patched the vulnerabilities those models can find.
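The temporary defense Arora describes is often called virtual patching: traffic that matches a known exploit path is blocked at the perimeter while the underlying code is still unpatched. The sketch below is only an illustration of that idea, not Palo Alto's signature format. The `Signature` type, the `EXAMPLE-0001` identifier, and the path-traversal pattern are all hypothetical, and real firewall signatures inspect far more than a request line.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Signature:
    vuln_id: str          # hypothetical identifier for a known, unpatched flaw
    pattern: re.Pattern   # what an exploit attempt against it looks like


# Hypothetical signature: a path-traversal attempt against an export endpoint.
SIGNATURES = [
    Signature("EXAMPLE-0001", re.compile(r"/admin/export\?.*\bfile=.*\.\./")),
]


def matching_signature(request_line: str, signatures=SIGNATURES) -> Optional[str]:
    """Return the id of the first signature the request matches, else None."""
    for sig in signatures:
        if sig.pattern.search(request_line):
            return sig.vuln_id
    return None


def should_block(request_line: str) -> bool:
    """Block at the perimeter if the request matches any known exploit path."""
    return matching_signature(request_line) is not None
```

A request such as `/admin/export?file=../../etc/passwd` would be blocked, while `/admin/export?file=report.csv` would pass. The vulnerable code is untouched in both cases, which is why Arora treats this as scaffolding rather than a fix.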

Mythos improves when it is given context, threat history, and time

Roose asked what Mythos is actually like to use. Nikesh Arora said that at first it was not as impressive as expected because, when asked to look for bad code, it flagged everything. He estimated that roughly 30 percent of its findings were false positives, and each finding had to be tested.

The model improved as Palo Alto gave it more context. A model shown a piece of code does not inherently know what the code is trying to achieve. Engineers had to explain the purpose of the code, what normal behavior should look like, and how the relevant product works. They also fed in threat research. Palo Alto holds large stores of data about past attacks, used to write machine-learning defenses. Arora described arming the model with known techniques from thousands of attacks over the past five years and asking whether those techniques could apply in a new scenario.

GPT-5.5 Cyber and Mythos did not simply duplicate each other. Arora said the most interesting finding was that the two models found different things. That suggests differences in training or grounding, and also implies that much remains undiscovered. If two frontier models surface different weaknesses, one model’s scan is not the end of the audit.
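The point about non-overlapping results can be made concrete with a small set comparison. This is a minimal sketch with made-up finding identifiers. Nothing here reflects the actual findings or their counts.

```python
def compare_findings(findings_a: set[str], findings_b: set[str]) -> dict[str, set[str]]:
    """Split two scanners' findings into shared, unique, and combined sets."""
    return {
        "both": findings_a & findings_b,
        "only_a": findings_a - findings_b,
        "only_b": findings_b - findings_a,
        "combined": findings_a | findings_b,
    }


# Hypothetical finding ids from two different models scanning the same code.
model_a = {"F-1", "F-2", "F-3"}
model_b = {"F-3", "F-4"}
report = compare_findings(model_a, model_b)
```

Whenever `only_a` or `only_b` is non-empty, stopping after a single model's scan would have left known weaknesses unreported, which is the inference Arora draws.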

Kevin Roose pressed on what Palo Alto’s experience implies for less technical organizations. If a major cybersecurity firm finds five to seven times more vulnerabilities using these models, what about a bank, an insurance company, or an ordinary website? Arora did not say the average institution is safe. He added that these systems are not only good at finding software vulnerabilities; they can also detect misconfigurations. A product may not have a flaw in its code, but a company may have exposed a control panel to the internet because remote access seemed convenient. If the owner can find it from home, an attacker can find it too.

Arora rejected the view that Mythos was merely marketing hype. He said it offered a window into what will become possible when compute constraints ease or future models are trained better. He credited Anthropic and OpenAI for trying to handle release responsibly, even if some details were fumbled. There is no easy solution, he said, but both companies are trying to ensure AI is not used badly.

The old responsible-disclosure timeline is also under strain. Casey Newton raised the 90-day window that has long structured vulnerability reporting: a researcher privately notifies a company, which has a fixed period to investigate and fix before disclosure. Arora said the principle remains — owners need time to investigate, patch, and secure customers — but the window will shrink. The exact new interval remains unsettled.

The distinction between SaaS and customer-operated systems matters. Palo Alto had known about its recent vulnerabilities for two or three weeks, tested them, built patches, and deployed fixes for SaaS products. SaaS can be found, fixed, and deployed centrally. The harder problem is software or devices controlled by customers: laptops, servers, switches, routers, and other boxes that require updates in the field. Arora said consumers and enterprises should expect three to six months of increased patching as the accumulated vulnerability backlog is cleared. When Newton asked whether it is now especially important to install software updates promptly, Arora said he “highly” recommends it.

Attackers benefit first because one missed vulnerability is enough

Roose asked the core strategic question: do these models favor attackers or defenders? Nikesh Arora answered with the asymmetry that has always shaped cybersecurity. Defenders have to be right 100 percent of the time; attackers have to be right once. If a model finds five vulnerabilities and an attacker can exploit one, the attacker wins. Defenders do not receive an 80 percent grade for blocking the other four.
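The asymmetry is easy to put in numbers. The sketch below assumes, purely for illustration, that each vulnerability is blocked independently with the same probability. That independence assumption is not something Arora stated, and real defenses are correlated.

```python
def is_breached(total_vulns: int, blocked: int) -> bool:
    """One unblocked vulnerability is enough for the attacker to win."""
    return blocked < total_vulns


def attacker_success_probability(n_vulns: int, p_block: float) -> float:
    """Chance that at least one of n vulnerabilities gets through,
    assuming each is blocked independently with probability p_block."""
    return 1.0 - p_block ** n_vulns
```

Blocking four of five findings still counts as a breach under `is_breached(5, 4)`. Under the independence assumption, a defender who stops each attempt 80 percent of the time still loses to five attempts about two times in three, since 1 - 0.8^5 is roughly 0.67.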

For now, Arora said, bad actors are likely able to use the models more effectively than good actors. That is not because of a model flaw. Models do not themselves protect systems. Sensors, perimeter tools, and defensive infrastructure protect systems, and those sensors must become smart enough to understand what the model will find. Palo Alto’s four-to-six-week window with the models is being used to build defensive techniques before a “tsunami” of AI-based attacks arrives.

The sectors that worry Arora most are not the heavily resourced financial institutions. Banks and other financial firms have large engineering teams and long experience defending themselves. He is more concerned about organizations whose core business is not technology but that depend on it: small businesses, industrial and manufacturing firms, infrastructure companies, hospitals, and doctors’ offices. He cited the Change Healthcare breach, which disrupted the physician ecosystem and left doctors unsure what to do.

Arora also explained why Mythos’s release status matters. He said Opus 4.7 Cyber and OpenAI’s GPT-5.5 had both been released with cyber capabilities and guardrails, but Mythos had not been released broadly. Its distinctive feature, in his account, is that Mythos runs in “Ultra mode,” a compute-intensive mode that allows the model to persist much longer than the “Flash mode” typical of released models. That persistence enables more effective daisy chaining because the system can try more techniques and determine which are most likely to work.

He said it is good that the average person does not have access to that level of capability right now, though he immediately complicated the phrase “average person.” If “average person” means every company that needs to fix its systems, those organizations do need access. If it means the average bad actor, access would allow attacks to move much faster. Arora did not expect the nature of cybercrime to change: ransomware, economic harm, and nation-state operations would remain familiar. What changes is pace and volume.

Consumers are exposed where enterprise gatekeepers do not exist

Newton used his own experience with repeated phishing emails — messages asking him to reset his X password from addresses unrelated to x.com — to ask whether the usual consumer advice still holds. Strong passwords and multifactor authentication are standard recommendations, but increasingly convincing AI-assisted phishing could make individual judgment less reliable.

Nikesh Arora’s frustration is that enterprise users receive stronger protection than consumers. In corporate email, a suspicious sender or phishing attempt seen at one customer can be blocked everywhere else. Enterprise cybersecurity vendors act as gatekeepers. In the consumer world, the gatekeepers are email providers and telecom networks, and Arora argued they are not implementing sufficient controls.

He treated Newton’s phishing example as something a major email provider should be able to classify. If an email purports to be from X but is not from an X address, that should be catchable. Arora noted that the same companies building AI systems to anticipate user needs should be capable of paying attention to such basic phishing signals.
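The signal Arora has in mind, a message that claims to come from a brand but is sent from an unrelated domain, can be checked in a few lines. This is a rough sketch, not how any email provider actually classifies mail. The `BRAND_DOMAINS` table and the function names are hypothetical, and production filters also rely on authentication results such as SPF, DKIM, and DMARC.

```python
from email.utils import parseaddr

# Hypothetical table of brand names and the domains they legitimately send from.
BRAND_DOMAINS = {"x": {"x.com"}}


def sender_domain(from_header: str) -> str:
    """Extract the lowercase domain from a From header."""
    _, address = parseaddr(from_header)
    return address.rpartition("@")[2].lower()


def looks_spoofed(from_header: str, brand_domains=BRAND_DOMAINS) -> bool:
    """Flag mail whose display name cites a brand but whose domain does not match."""
    display_name, _ = parseaddr(from_header)
    domain = sender_domain(from_header)
    words = display_name.lower().split()
    for brand, domains in brand_domains.items():
        if brand in words:
            # Accept the brand's own domain or any subdomain of it.
            if not any(domain == d or domain.endswith("." + d) for d in domains):
                return True
    return False
```

A header like `X <security@x-reset.example>` would be flagged, while `"X Support" <no-reply@x.com>` would not. The check is deliberately crude, which is close to Arora's point: the basic mismatch is visible without any sophisticated model.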

The agentic AI layer introduces a new risk. Arora described Open Claw as “scary” from a security perspective because it can take permissions and credentials and act across a user’s accounts, while governance and control remain unclear. Early adopters may find it useful or exciting, but a tool with access to schedules, emails, social accounts, and other services could create security nightmares. Someone at his house had installed Open Claw on a phone, named it Zara, and let it do many tasks. To a security-minded observer, Arora said, that kind of autonomy raises obvious concerns.

Arora experiments with these systems only on a segregated device disconnected from important accounts, which makes it “totally useless” for practical work because it cannot book meetings or answer email. For normal enterprise AI use, he said he uses Gemini. He described sending an earnings script to Gemini and receiving feedback that he sounded too enthusiastic and overused words like “momentum” and “excited,” causing him to tone down the language.

AI is increasing technical demand before it reduces head count

Roose asked whether AI is changing Palo Alto’s hiring plans. Nikesh Arora said he needs more people, not fewer. He called it a fallacy that because organizations may become 30, 40, 50, or 60 percent more productive in development and testing, they will simply need fewer workers. Every technologist has a feature-request list longer than their arm, and product roadmaps often stretch six to 12 months because teams lack capacity or cannot serialize all the work.

In Arora’s view, new AI-driven capacity will first go toward clearing backlogs and building more. Companies announcing head-count reductions may be “creating capacity” to hire people with newer skill sets rather than merely swapping salaries for tokens.

He described a decade-long business transformation ahead. Every function wants AI: finance, human resources, engineering, and beyond. His CFO, Arora said, does not want AI for its own sake; the goal is to transform the finance team and do the work more efficiently. His head of HR wants an AI interviewer and assessor. The money for tokens and new systems is likely to come from efficiencies inside those same teams and functions.

Casey Newton objected that nobody wants to be interviewed by an AI assessor. Arora disagreed in part, saying AI may be better than a human interviewer at assessing domain skills for certain roles. If a company is hiring a strong coder or someone skilled in agentic AI, conversation may reveal less than watching them build. He described a Zoom interview in which he asked a candidate who claimed AI fluency to show what he had built. The candidate’s agent made a shopping list from a recipe, an example Arora clearly did not find sufficient.

The week’s smaller messes were mostly about bad defaults and bad metrics

The closing roundup was lighter, but several items still turned on familiar product and management failures: privacy defaults that should have changed long ago, usage metrics that employees learned to game, and AI messaging that met an openly hostile audience.

Venmo is changing its default privacy settings for new users so posts are visible only to friends, according to a Verge story summarized by Casey Newton. That marks the end of public-by-default payment feeds that had long allowed users, journalists, and anyone with enough curiosity to observe social and financial patterns. Newton and Kevin Roose joked about losing an easy source of gossip and stories, but they also cited public Venmo trails involving Joe Biden, JD Vance, and Matt Gaetz as examples of how the old default created investigative material. Roose called the change a cleanup rather than a mess: the hot mess was the previous design.

Amazon’s internal AI incentives produced a different problem. Newton described Financial Times reporting that Amazon employees were using Meshclaw, an internal agent tool, to automate unnecessary AI activity in order to increase token consumption and look better to bosses. Roose invoked Goodhart’s Law: when a measure becomes a target, it ceases to be a good measure. The problem was not simply AI use; it was a metric that turned usage itself into the apparent goal.

At the University of Central Florida, commencement speaker Gloria Caulfield told arts, humanities, and communications graduates that “the rise of artificial intelligence is the next industrial revolution,” and the crowd booed. Someone yelled “AI sucks.” Roose argued that students can feel however they want about AI, but if they used ChatGPT for exams, problem sets, or academic work, they should not boo AI at commencement. Newton thought the humanities audience had reason to be hostile. Roose drew a broader conclusion from his own campus visits: people are underestimating how mobilized young people are against AI. He sees a small group excited about tools like Open Claw and a much larger group that hates the technology.

The most serious of the roundup stories involved Grindr. Newton described a Madonna album promotion that, according to the story he discussed, played audio when users opened the app, even if their phone volume was off. The sound announced, “Hi Grindr, it’s mother,” creating the possibility that users could be outed to people nearby. Newton called it a dangerous mess, not merely an embarrassing one, because it is not always safe for people to be outed in their immediate surroundings.

Other items remained quick hits rather than central arguments: Dua Lipa suing Samsung for using her face on TV packaging; GameStop making an unsolicited $55 billion bid for eBay that eBay called “neither credible nor attractive”; Shein and Temu accusing each other of copyright abuse over fast-fashion images; and Sam Altman testifying that Elon Musk once suggested OpenAI control might pass to Musk’s children if he died. The hosts treated them as absurdities, legal messes, or examples of institutional sloppiness, not as evidence for a single grand theory.