OpenAI Trial Records Show Founders Anticipated an AGI Governance Fight

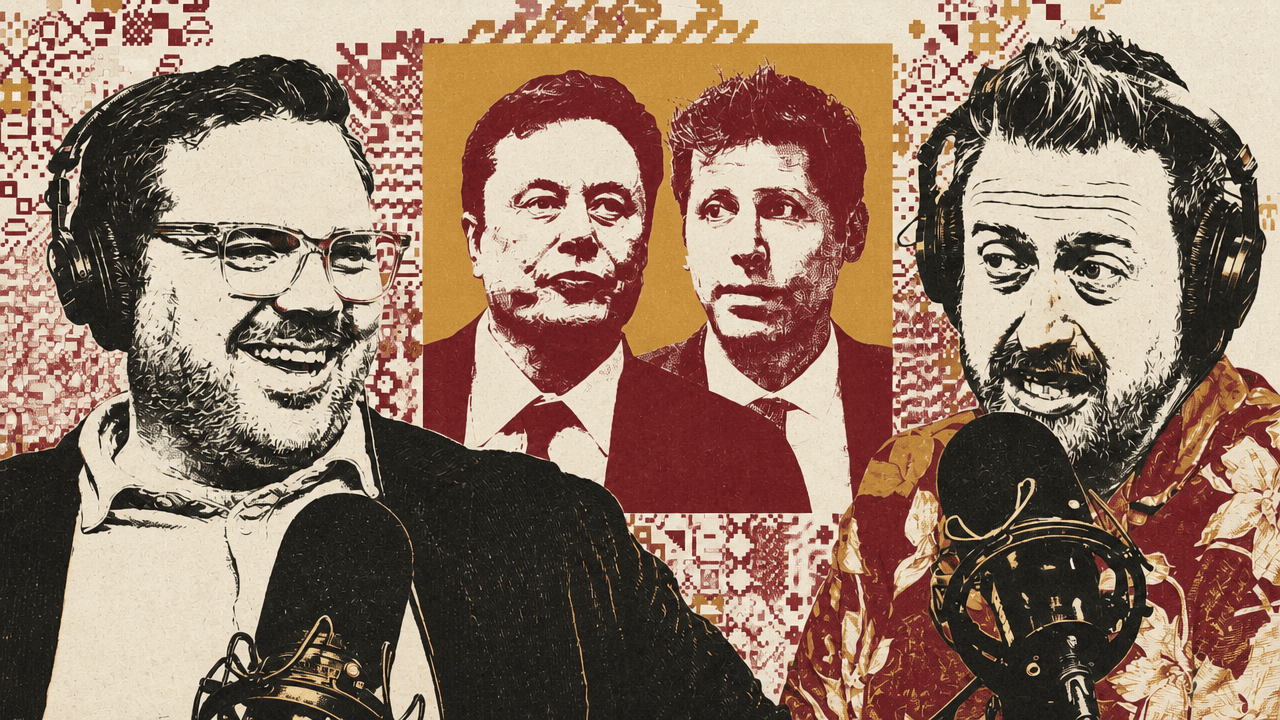

Kevin Roose and Casey Newton argue that the Musk v. OpenAI trial is notable less for its personal theatrics than for the written record it has exposed from OpenAI’s early years. In their reading, the evidence shows founders and executives anticipating fights over the governance, financing and control of artificial general intelligence before the technology appeared capable of justifying those stakes. The trial’s stranger artifacts — journals, trophies, succession questions and private channels — matter because they illuminate how closely OpenAI’s mission was tied from the start to power.

The written record shows OpenAI’s founders anticipated a governance fight before the technology had arrived

The most consequential material from the Musk v. OpenAI trial goes beyond the personal drama. Kevin Roose said the record from OpenAI’s early years shows that people around the company were already treating artificial general intelligence as a world-shaping technology in 2017, before the systems looked capable by today’s standards.

Roose’s formulation was blunt: they were writing as if AI would “become the most important technology in the world,” trigger “huge fights over who governs it,” and raise the question of whether someone could become a “global AGI dictator.” His point was that the early participants were not retrofitting grand language onto later success. They put fears about governance, power, politics, and economic transformation in writing while AI still “could basically barely complete a sentence.”

Casey Newton agreed that the record is striking because of how much the participants were right about. But he resisted Roose’s suggestion that they were almost “playing a board game.” Newton’s distinction was that the ideas still felt like science fiction a decade ago, but many of the people involved did appear to believe them. The fight was speculative, but not casual.

That makes the trial evidence unusually revealing. Roose suggested it may be “the last great deposit of receipts” from this world, because founders and executives have since learned not to put sensitive material in durable written form. Newton put the same point more bluntly: companies are getting better at destroying evidence. Roose sharpened it further: “Can’t destroy evidence if it never exists.”

Sutskever’s “big computer” line made the funding problem plain

Ilya Sutskever, OpenAI’s former chief scientist and co-founder, gave the trial one of its cleanest reductions of the company’s technical needs. Kevin Roose described Sutskever as “a very quotable man” who speaks in riddles and parables, and pointed to two answers that compressed the stakes.

Asked by the judge about the difference between AI systems from a decade ago and AI systems today, Sutskever compared it to the difference between “an ant and a cat.” Asked about OpenAI’s computing needs, he said the company needed more funding to build what he called “a big computer.”

Casey Newton singled out the phrasing because it made OpenAI’s need sound almost childlike in its simplicity. He recalled the line as: “If there is no funding, there is no big computer.” For Newton, the force of the testimony was that Sutskever “was really determined for there to be a big computer.” Roose agreed, and Newton added: “And now we have it.”

The exchange connected the mission question to the infrastructure question without much abstraction. In the trial record as Roose and Newton described it, OpenAI’s ambitions depended on computing resources that required funding, and that need sat beside disputes over control, governance, and who would decide the organization’s future.

Altman’s account cast Musk’s control proposal as dynastic

Sam Altman testified about what he called a “hair-raising” chat with Elon Musk, according to Kevin Roose. In that exchange, Altman asked Musk what would happen if Musk controlled OpenAI and then died. Musk reportedly answered that he had not thought about it much, but perhaps he should pass control to his children.

The source showed a Bloomberg headline by Madlin Mekelburg and Rachel Metz describing the testimony as: “Altman Testifies About ‘Hair-Raising’ OpenAI Chat With Musk.”

Casey Newton said the account undercut one theory of the trial: that Musk was pursuing the case even if he knew he might not win, partly to embarrass Altman. “I don’t know what could be more embarrassing for Elon,” Newton said, “than the news that he was trying to turn OpenAI into a hereditary monarchy.” Roose joked that a new season of “Succession” had arrived; Newton extended the image to Musk’s children “vying for control of OpenAI.”

The substantive question inside the joke was succession. If a person sought control over OpenAI, and the people around OpenAI believed the technology could become globally important, Altman’s account raised the question of what would happen when that person died. In the version Roose described, Musk’s answer was either undeveloped or dynastic.

Murati’s role in Altman’s ouster was presented as pivotal and ambiguous

Helen Toner’s deposition about Mira Murati focused on the board fight that saw Sam Altman fired and then rehired. The source showed a Verge headline: “Mira Murati’s deposition pulled back the curtain on Sam Altman’s ouster,” with the subheading, “The former OpenAI CTO had receipts. But they mostly confuse her own story.”

Kevin Roose said Toner “essentially accused” Murati, then OpenAI’s chief technology officer, of being either dense or insincere. Toner described Murati as “strikingly unsupportive” and “remarkably passive,” and said Murati “seemed totally uninterested in telling her team that her conversations with us had been a significant factor in our decision to fire Sam.”

Casey Newton explained the tension this way: according to the testimony, Murati had quietly supplied the board with substantial information about Altman, which the board then used in deciding to remove him. She also agreed to become interim CEO. But after the removal triggered internal backlash, Newton said, Murati became quiet, then eventually said she opposed Altman’s removal.

The source twice showed Toner’s line: “She was waiting to see which way the wind would blow, and she didn’t realize that she was the wind.” Roose called it a great quote and said it belonged on a bumper sticker. The underlying issue was less decorative: the testimony portrayed Murati not as a passive bystander to the board’s action, but as someone whose conversations materially influenced it, even if she later presented herself differently to employees.

Private artifacts became evidence, sometimes with unclear legal force

Some of the evidence Kevin Roose and Casey Newton discussed had documentary value even when its legal relevance was treated as uncertain or absurd.

One example was Greg Brockman’s private writing. Roose said OpenAI’s president and co-founder was required to submit personal journal entries from OpenAI’s early years as trial exhibits. The entries included Brockman’s thoughts about his ambition, his frustrations with Musk, his desire to succeed at building AGI, his aim to get to a billion dollars — Roose presumed in personal net worth — and his wish to be respected for “what I do.” The source showed a Wall Street Journal headline: “The Secret Diary That Has Spilled Into the Musk vs. OpenAI Feud.”

Newton said the use of the journal made him uncomfortable. He keeps a journal himself, he said, and the thought of Musk’s lawyers questioning him about it on a witness stand “sends a shiver down my spine.” He also noted that Musk’s lawyers and some press coverage had called Brockman’s writing a “diary,” while he and Roose insisted it should be called a journal. Roose found it bizarre that such writing would be kept somewhere discoverable, such as Apple Notes or a Google Doc.

The other artifact was the “Jackass” trophy. Roose said it was presented to an OpenAI employee early in the company’s history after Musk called him a jackass during a meeting. According to Roose, the trophy was given by Dario Amodei, then a safety executive, and showed the back half of a donkey with the inscription: “never stop being a jackass for safety.” The source showed a New York Magazine headline: “OpenAI Futurist Received a Gold ‘Jackass’ Trophy for Challenging Elon Musk.”

Newton asked why this was trial evidence at all. Roose thought OpenAI’s side was trying to suggest that Musk did not care about safety as much as he claimed, but he conceded the logic did not fully add up. Newton summarized the evidentiary move skeptically: “You can tell he didn’t care about safety. Look at this jackass trophy that we made.”

That uncertainty became part of Roose’s broader theory: the trial may be a mechanism for smuggling large amounts of gossip into the public record. Newton said he and Roose supported that aspect of it. Roose called it “a true gift to journalists and historians.”

Zilis’s role added a private-channel subplot to the boardroom fight

Another of Casey Newton’s favorite moments involved Shivon Zilis, whom he described as a longtime Musk employee, mother to four of Musk’s children, and a former OpenAI board member. During the period in question, Newton said, Zilis became “a kind of covert liaison” between Musk and people at OpenAI.

According to Newton’s account, once it came out that Zilis was the mother of Musk’s children, OpenAI was, in his phrasing, “like, um, you have to leave the board now.” Kevin Roose described her as “the mole for Elon inside OpenAI” who was “ferreting him information,” and said her departure from the board was understandable.

Newton called the sequence “a true Hollywood twist.” Within the trial record described by Roose and Newton, it fits the broader pattern: formal governance questions kept appearing alongside personal relationships, private channels, old grievances, and early written predictions about how important OpenAI’s technology might become.