Figure Claims 50-Hour Autonomous Humanoid Test Was Not Teleoperated

Figure chief executive Brett Adcock told Bloomberg that the company’s livestreamed humanoid package-sorting test is fully autonomous and not remotely operated, rejecting viewer claims that repeated hand motions suggested teleoperation. Adcock said the robots were running on Figure’s onboard Helix 2 neural network, had operated for close to 50 hours with little downtime, and had pushed nearly 60,000 packages through the line. He framed the demonstration as evidence that Figure is moving toward commercially useful, human-speed humanoid robots built through a vertically integrated hardware, manufacturing, data and AI stack.

Figure’s central claim is autonomy, not remote control

Brett Adcock’s answer to the teleoperation allegation was categorical: “There’s absolutely no teleoperation involved in this.” The robots in Figure’s package-sorting livestream, he said, are “operating fully autonomously” on an onboard neural network the company calls Helix 2.

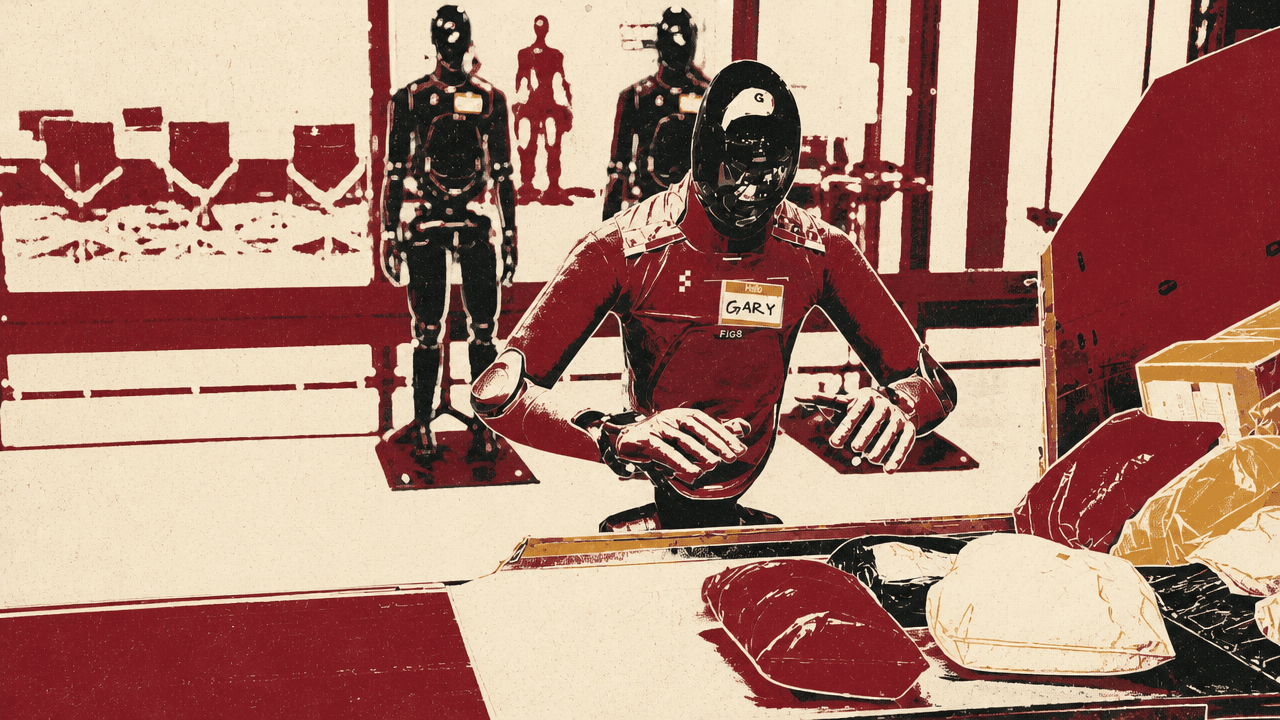

The skepticism came from a specific observed behavior. Ed Ludlow cited comments from viewers who interpreted repeated gestures near the robot’s head as a “telltale sign in robotics of teleoperation” and asked Adcock for a direct pledge that no remote operation was involved. Bloomberg showed Figure footage of a black-and-gray humanoid labeled “FRANK” sorting packages on a conveyor belt. The on-screen frame carried the labels “LIVE,” “FRANK,” “F.03,” and “real-time package sorting capability.”

Adcock’s explanation was mechanical rather than an admission of operator signaling. When the robot turns left to grab packages, he said, it moves its left hand upward and out of the way. Viewers should see that same behavior “every single time the robot turns for packages,” he said. In Adcock’s account, the repeated motion is part of the robot’s autonomous manipulation pattern, not evidence of teleoperation.

Figure had been running the system autonomously for close to 50 hours, Adcock said, with robots operating in shifts, “basically almost no downtime on the belt,” and close to 60,000 packages pushed through the line.

Bloomberg also showed an X post by Adcock saying Figure’s original goal had been an eight-hour run, but that the company was “now over 24 hours of continuous autonomous operation without a failure,” calling it “uncharted territory.” The post, written earlier in the run, made the same endurance claim in Adcock’s own words.

Continuous operation depends on robot-to-robot shift changes

The public feed showed package sorting, but Adcock’s continuous-operation claim also depends on what happens when a robot needs to leave the line. Figure’s robots generally run about four hours on a battery charge, he said. When a battery runs low, the robot “messages another robot” to come take its place. The outgoing robot leaves the conveyor system and goes to a wireless charging stand while the replacement continues the work.

If there is a hardware or software issue, Adcock said, the robot can walk off into maintenance and call another robot to replace it. Figure’s target is not only a single robot sorting packages, but 24/7 operation with no failures in the use case itself. He said the conveyor system had been running around the clock since the middle of the week and that, approaching 50 hours, the robots had been doing work “every single hour” since launch.

The operating model Adcock described includes autonomous package handling, four-hour battery shifts, robot-to-robot replacement when a battery runs low, wireless recharging, and substitution when hardware or software issues arise. The footage showed both FRANK and another black-and-gray robot labeled “GARY” working on the same kind of conveyor setup.
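A rough back-of-the-envelope sketch of that rotation follows. The four-hour battery life and the roughly 50-hour run come from Adcock’s account; the recharge time was not stated, so `CHARGE_HOURS` is a purely hypothetical placeholder, and the model ignores walking time and fault-driven swaps.

```python
import math

BATTERY_HOURS = 4.0  # from the interview: roughly four hours per charge
RUN_HOURS = 50.0     # from the interview: approximate run length
CHARGE_HOURS = 2.0   # ASSUMPTION: recharge time was not given; illustrative only


def battery_handoffs(run_hours: float, battery_hours: float) -> int:
    """Battery-driven swaps at one station over the run (faults ignored)."""
    return math.ceil(run_hours / battery_hours) - 1


def min_robots_per_station(battery_hours: float, charge_hours: float) -> int:
    """Smallest rotation that always has a charged robot ready to step in."""
    return math.ceil((battery_hours + charge_hours) / battery_hours)


print(battery_handoffs(RUN_HOURS, BATTERY_HOURS))           # 12
print(min_robots_per_station(BATTERY_HOURS, CHARGE_HOURS))  # 2
```

Under these assumptions, a single station would see about a dozen battery handoffs over 50 hours. The minimum rotation size depends entirely on the assumed recharge time, so it should not be read as a figure Figure has reported.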

Bloomberg’s visuals also included Figure footage of two humanoid robots making a bed under on-screen labels about Figure’s “push into everyday robotics.” The image broadened the company’s positioning beyond logistics, but Adcock treated the package-sorting line as the immediate commercial proof point. Figure, he said, wants robots “everywhere in the world in the commercial market,” and the package-sorting run is “the first large step” toward that.

The benchmark is human-speed sorting with barcode-ready handling

Caroline Hyde framed the next constraint as a choice between speed and reliability, but Adcock said Figure is trying to demonstrate both. The robot in the livestream is operating at roughly human speed, he said: about three seconds per package. That, he said, is the requirement for the logistics line.

He also gave a second performance threshold: a 90% success rate on package flips for barcode scanning. Figure is “in that requirement” as well, he said. The combination of speed and barcode-facing success rate is how he framed “human parity” for this particular warehouse task.

| Operating measure | Adcock’s description |
|---|---|
| Sorting speed | Roughly human speed, about three seconds per package |
| Barcode handling | 90% success rate required on package flips for barcode scanning |
| Battery operation | About four hours before a robot swaps out to charge |
| Run duration | Approaching 50 hours of continuous line operation at the time of the interview |
| Package volume | Close to 60,000 packages pushed through the line |
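The quoted figures can be checked against one another with simple arithmetic. The sketch below assumes a single sorting position running with no downtime, which the interview did not specify, and treats “close to 50 hours” and “close to 60,000 packages” as round numbers.

```python
# Figures quoted in the interview; both are approximate ("close to").
RUN_HOURS = 50
PACKAGES = 60_000


def seconds_per_package(run_hours: float, packages: int) -> float:
    """Average time per package, assuming one station and no downtime."""
    return run_hours * 3600 / packages


def packages_per_hour(run_hours: float, packages: int) -> float:
    """Average hourly throughput over the whole run."""
    return packages / run_hours


cycle = seconds_per_package(RUN_HOURS, PACKAGES)
hourly = packages_per_hour(RUN_HOURS, PACKAGES)
print(f"{cycle:.1f} s per package, {hourly:.0f} packages per hour")
# prints "3.0 s per package, 1200 packages per hour"
```

On those rounded inputs, the implied average matches the three-seconds-per-package pace Adcock cited. If more than one robot were sorting at the same time, the per-robot cycle time would be proportionally longer.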

Reliability is the larger shift Adcock emphasized. When he started the company four years ago, he said, humanoid robots were falling and were “extremely unreliable systems.” Figure has engineered its systems to the point where, in his view, the robots are “extremely reliable” and can be shown publicly under continuous workload.

The demonstrated scope in this interview was a specific, measurable logistics task: package sorting with barcode-facing manipulation on a conveyor line. Adcock did not present that task as the full realization of general-purpose robotics. He said the bigger focus is solving for a “truly general purpose machine” and then manufacturing at “unprecedented volumes similar to cell phones today.”

Adcock says humanoid robotics requires owning the full stack

Figure’s view of the technical stack is vertically integrated. Adcock was asked whether a large AI company might want to own the entire stack powering humanoid robots, a question raised amid speculation about OpenAI returning to robotics and in light of Figure’s own prior OpenAI partnership. He said humanoid robotics requires control from hardware through training.

To “really build like iRobot,” he said, Figure has to design nearly the entire hardware system itself: motors, stators, rotors, electromagnetics, batteries, actuators, sensors, kinematics, and structures. He said Figure now does those functions in-house, along with manufacturing, testing, AI data collection, and neural-network training.

Adcock did not frame robotics as a problem solved by placing an AI model into third-party hardware. He described the product as a coupled system in which hardware design, manufacturing, data collection, and model training have to be controlled together.

In the package-sorting example, that full-stack approach is supposed to show up as a robot doing “real use case work like humans do,” at human speeds, for periods longer than a normal shift. Adcock said most logistics shifts of this kind run about eight hours a day, while Figure is running the test 24/7 to show the systems are reliable and “mission-ready” for scale.

The bottlenecks are data and manufacturing, not capital

Figure’s two largest bottlenecks, according to Adcock, are data for pre-training Helix and manufacturing. Asked about capital, he did not offer an IPO plan. Instead, he said Figure has “well over a billion dollars of cash on the balance sheet,” which he described as a good financial position.

On manufacturing, Adcock said Figure’s facility, BotQ, is scaling up and is already at a production run rate of several thousand units annually. He also said the facility would manufacture between 60 and 70 humanoid robots in the current week, on Figure’s campus.
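The weekly and annual figures can be compared directly. The sketch below simply annualizes the 60-to-70-unit week Adcock described, assuming (hypothetically) that the same weekly output holds for all 52 weeks of a year.

```python
WEEKS_PER_YEAR = 52  # ASSUMPTION: constant weekly output all year


def annual_run_rate(units_per_week: int) -> int:
    """Annualized output if one week's production were sustained."""
    return units_per_week * WEEKS_PER_YEAR


low = annual_run_rate(60)
high = annual_run_rate(70)
print(low, high)  # 3120 3640
```

An annualized range of roughly 3,100 to 3,600 units is consistent with the “several thousand units annually” run rate Adcock cited, though it is an extrapolation rather than a production figure he reported.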

On data, Adcock said Figure is collecting data and training models for its internal AI stack at a scale the company has not reached before. He described those next-generation AI models as “completely mind-blowing,” while keeping the bottleneck definition practical: the data problem and the manufacturing problem have to be solved to get humanoid robots out at scale.

The manufacturing comparison he chose was cell phones. Figure’s goal is not merely to make a working humanoid, but to manufacture at “unprecedented volumes similar to cell phones today.” That is the scale implied by Adcock’s operating model: a fleet of humanoids that can rotate, recharge, recover from issues, and keep a commercial line moving.