Agents Can Now Fine-Tune Open Models Through Prompted Workflows

Merve Noyan argues that open models have moved from downloadable artifacts into an operational stack for selection, serving, inspection, training and deployment. In her Hugging Face presentation, she makes the case that access to model weights now matters because developers can quantize, fine-tune and run models locally or at the edge, while Hub benchmarks, inference providers, traces, MCP and Skills let agents act directly on those workflows. Her strongest example is a coding agent that can size hardware, choose infrastructure and launch a fine-tuning job from a prompt.

Open models now sit inside an operational stack

Merve Noyan’s central claim is practical: open models are no longer just artifacts to download from a model hub. In the Hugging Face ecosystem she presented, they can be selected through benchmarks, tested through inference providers, served locally through model-card workflows, inspected through agent traces, and trained or deployed by coding agents equipped with Hugging Face Skills and MCP access.

That framing depends on openness mattering for more than ideology. Noyan drew a distinction between open-weight models, which can come with non-commercial licenses; open-source models released under commercially permissive licenses such as MIT or Apache 2.0; and releases where more of the surrounding system is open as well, including code and agent harnesses. She did not present this as a legal taxonomy so much as a set of practical differences: the more of the system is available, the more control a developer has over what changes, what can be modified, and where the system can run.

Access to weights changes the operating model. If the weights are available, users can shrink, quantize, fine-tune, and deploy models in places where a hosted API may not be acceptable. Her slide summarized the case for open source as control over models, cost reduction “in multipliers,” customization and shrinking, and privacy for end users because inference can happen on edge devices or in browsers without sending data to another server.

Noyan also tied openness to predictability. Citing, without further detail, something she said had been revealed “yesterday or the other day,” she said closed-model performance was going down. Her point was that when the system is open, “nothing changes without you knowing.” The argument was not only about transparency in the abstract, but about control over whether a dependency has silently changed.

The older objection, in her telling, is that open models were not good enough. Noyan rejected that outright. She pointed to an Artificial Analysis Intelligence Index chart in which open-weight models, shown in green, were competitive with proprietary models, shown in black. The chart listed GLM-5.1, GPT-4o, Claude 3.5 Sonnet, Gemini 2.5 Pro, and DeepSeek-V3, with GLM-5.1 presented as leading the index.

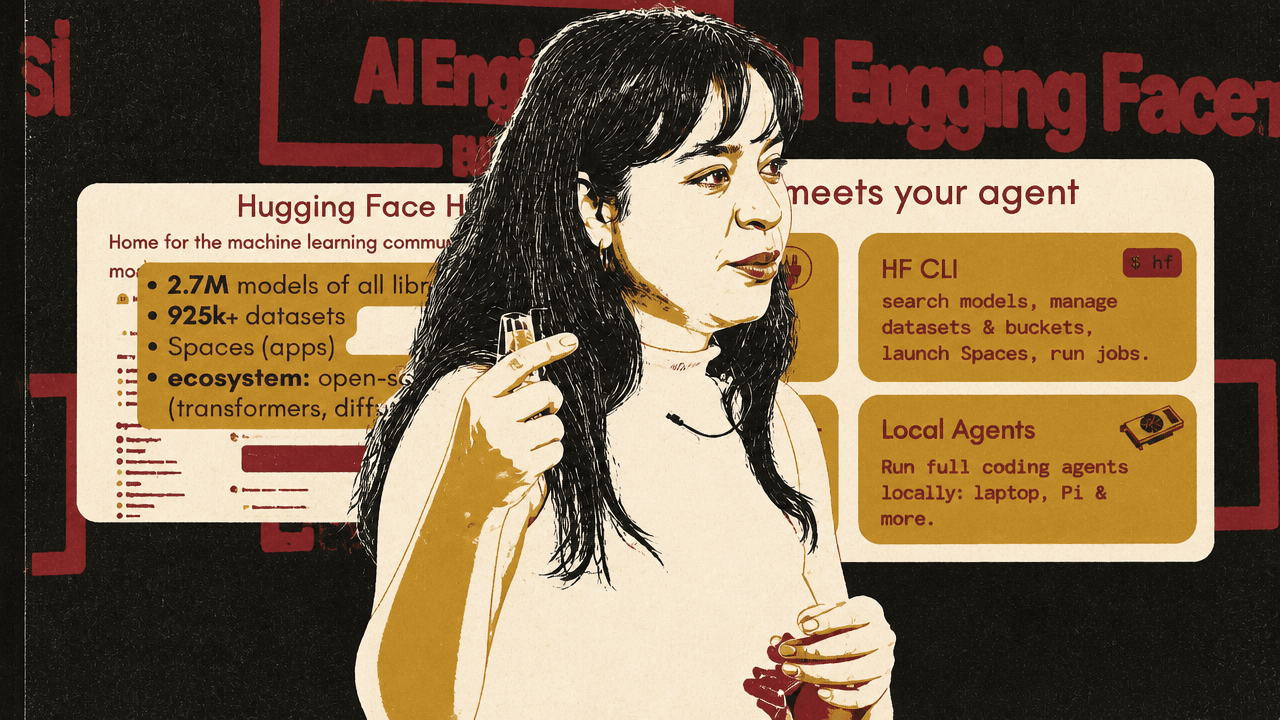

She said she uses GLM-5.1 in her own coding setup and described open models as having “caught up,” with further gains expected as new releases arrive. The Hugging Face Hub, in this account, is the infrastructure layer around that shift: a home for models, datasets, apps, community tooling, and open-source libraries such as Transformers and Diffusers. The Hub overview listed 2.7 million models and more than 925,000 datasets, though she noted the model count had likely already moved closer to 3 million.

Choosing an agentic model starts with task-specific evidence

Agentic models, as Noyan organized them, fall into two broad categories: LLMs with thinking and tool-calling behavior, and vision-language models that can act over screenshots as computer-use agents, including deciding where to click. Her examples included gpt-oss, Gemma-4, MiniMax M2.7, GLM-5, and Nemotron3-Super for agentic LLMs, and Qwen3.5 and Kimi-K2.5 for agentic vision models.

She expects more models to launch with vision capabilities from day zero. Gemma-4 was cited as an “omni model,” alongside Qwen3.5 and Kimi-K2.5 as vision-language models. The implication is that vision support is becoming part of the default agentic model surface rather than an add-on released later.

Running these models locally has become less burdensome, in her telling. The examples showed commands for MLX, vLLM, and llama-server, including serving Qwen/Qwen3-8B through vLLM and querying it with an OpenAI-compatible completion interface. What used to be a more friction-heavy serving problem is now often a few lines of setup.
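As a minimal sketch of the querying step, assume a vLLM server was started separately (for instance with `vllm serve Qwen/Qwen3-8B`) and is listening on its default port 8000. The base URL, prompt, and helper names below are illustrative assumptions, not the commands from the slides.

```python
import json
import urllib.request

# Assumed local endpoint: vLLM's OpenAI-compatible server uses port 8000 by default.
BASE_URL = "http://localhost:8000/v1"


def build_completion_request(model: str, prompt: str, max_tokens: int = 64) -> dict:
    """Build the JSON body for an OpenAI-style /completions call."""
    return {"model": model, "prompt": prompt, "max_tokens": max_tokens}


def complete(model: str, prompt: str, base_url: str = BASE_URL) -> str:
    """POST the request to the server and return the first completion's text."""
    body = json.dumps(build_completion_request(model, prompt)).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/completions",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        payload = json.load(response)
    return payload["choices"][0]["text"]


# Example call (needs a running server, so it is left commented out):
# print(complete("Qwen/Qwen3-8B", "Open models are"))
```

Because the interface is OpenAI-compatible, the same request shape should work against other local servers that expose it, such as llama-server, by changing only the base URL.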

For model selection, Hugging Face has added benchmark datasets to the Hub. The datasets page includes a “Benchmark” filter and examples such as SWE-bench Pro, Humanity’s Last Exam, and AIME. A SWE-bench Pro leaderboard on the Hub ranked open models by score, with GLM-5.1 shown at 50.4, MiniMax-M2.5 at 50.1, Kimi-K2.5 at 44.3, and Qwen3-Coder-Next at 44.1.

| Model | SWE-bench Pro score shown |
|---|---|
| zai-org/GLM-5.1 | 50.4 |
| MiniMaxAI/MiniMax-M2.5 | 50.1 |
| moonshotai/kimi-k2.5 | 44.3 |
| Qwen/Qwen3-Coder-Next | 44.1 |

The benchmark feature addresses a concrete failure mode of abundance. When the Hub contains millions of models, choosing among them becomes its own engineering task. Leaderboards let users filter by the capability that matters for the task: coding, math, OCR, or another benchmarked domain.
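The filtering idea can be sketched in a few lines. This is an illustration over the four SWE-bench Pro scores quoted in the article, held in a local list; the `Entry` type and `top_models` helper are hypothetical names and do not correspond to any Hub API.

```python
from typing import NamedTuple


class Entry(NamedTuple):
    model: str
    benchmark: str
    score: float


# Scores as quoted from the SWE-bench Pro leaderboard, deliberately unsorted.
LEADERBOARD = [
    Entry("Qwen/Qwen3-Coder-Next", "SWE-bench Pro", 44.1),
    Entry("zai-org/GLM-5.1", "SWE-bench Pro", 50.4),
    Entry("moonshotai/kimi-k2.5", "SWE-bench Pro", 44.3),
    Entry("MiniMaxAI/MiniMax-M2.5", "SWE-bench Pro", 50.1),
]


def top_models(entries, benchmark, k=3):
    """Return the k highest-scoring entries for a single benchmark."""
    relevant = [e for e in entries if e.benchmark == benchmark]
    return sorted(relevant, key=lambda e: e.score, reverse=True)[:k]


for entry in top_models(LEADERBOARD, "SWE-bench Pro"):
    print(f"{entry.model}: {entry.score}")
```

Keying each entry by benchmark name keeps the same helper usable for math or OCR leaderboards once their scores are added to the list.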

For quick testing before deployment, Noyan pointed to Hugging Face Inference Providers. The service routes a model to providers such as Groq, Cerebras, Novita, and others, and lets users compare cost and performance across providers. The comparison she showed included input and output pricing per million tokens, context length, latency, and throughput. She noted that the full table also included tool-use support, which matters for agentic workloads.
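To make the comparison concrete, here is a small sketch of how per-million-token pricing turns into a workload cost, with tool-use support as a filter. The provider names and prices are placeholders invented for the example, not figures from the talk or from any real provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProviderQuote:
    name: str
    usd_per_m_input: float   # price per million input tokens
    usd_per_m_output: float  # price per million output tokens
    supports_tools: bool


def workload_cost(quote, input_tokens, output_tokens):
    """Total USD cost for a workload at the quoted per-million-token prices."""
    return (
        input_tokens * quote.usd_per_m_input
        + output_tokens * quote.usd_per_m_output
    ) / 1_000_000


def cheapest_with_tools(quotes, input_tokens, output_tokens):
    """Cheapest provider among those that support tool use, or None."""
    candidates = [q for q in quotes if q.supports_tools]
    if not candidates:
        return None
    return min(candidates, key=lambda q: workload_cost(q, input_tokens, output_tokens))


# Hypothetical quotes, for illustration only.
QUOTES = [
    ProviderQuote("provider-a", 0.50, 1.50, True),
    ProviderQuote("provider-b", 0.25, 2.00, True),
    ProviderQuote("provider-c", 0.10, 0.40, False),
]

choice = cheapest_with_tools(QUOTES, input_tokens=4_000_000, output_tokens=1_000_000)
print(choice.name, workload_cost(choice, 4_000_000, 1_000_000))
```

The cheapest quote overall here lacks tool support, which is why the filter runs before the price comparison for agentic workloads.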

Agents are being given direct access to Hub workflows

The agent layer in Noyan’s talk centered on four Hugging Face surfaces: the MCP server, the HF CLI, Skills, and local agents. MCP was presented as a way to “plug Hub in to your favorite LLM.” The CLI lets agents search models, manage datasets and buckets, launch Spaces, and run jobs. Skills give agents Hugging Face ecosystem capabilities. Local agents make it possible to run coding agents with tools such as llama.cpp and Pi.

The strongest claim was that an agent can now be asked to train a model, not merely generate code that describes how one might train it. Noyan called this “vibe train models”: telling an agent, for example, “Train Qwen 3.5 on this dataset for me,” and having it work through backend details that used to require manual planning.

You just go to your agent and say, “Train Qwen 3.5 on this dataset for me,” and then it just trains.

For local coding agents, she highlighted Pi as a simple option. Pi can consume a local llama.cpp server, and she also pointed to llama-agent, which is baked into llama.cpp as a binary. In the setup she showed, a user can execute llama-agent with a Hugging Face Hub ID, or install Pi, configure it against a local OpenAI-compatible endpoint, and have the agent operate over local files and the terminal.

Hermes Agent was her strongest personal recommendation. Noyan said she would “die on this hill” because Hermes pushes one step beyond local-vs-closed model choice into memory management and self-improvement: after a task, the agent saves the approach as a reusable skill and persists memory across sessions. It can run through Hugging Face Inference Providers or against a locally served endpoint such as llama.cpp. She described its setup wizard as handling keys and setup, and casually mentioned integration targets such as Slack or WhatsApp.

Her example was concrete. She said she initially failed to integrate Hermes Agent into Slack, with colleagues present as witnesses. She then asked GLM-5.1, through Hermes Agent, to fix the integration, and it did. She recommended GLM-5.1 for open-model use and said she was looking forward to using Hermes with Gemma-4 and a rumored upcoming MiniMax model.

Agent traces become inspection data, and possibly training data

Hugging Face has added a repository type for agent traces, which Noyan described as a way to host Codex, Claude Code, or Pi sessions on the Hub. The interface placed “Traces” alongside benchmark datasets, with repositories such as badlogicgames/pi-nono, judieati/agent-traces-weixal, lishua/agent-traces-example, and vishwa90/vscode-sessions.

Once uploaded, traces appear in the dataset viewer as parsed session data. The example view included fields such as harness, session ID, traces, and file name. A later example showed an uploaded agent session rendered with user and assistant turns, a “Thinking” entry, and bash tool calls, including commands run inside a local workspace.

The upload workflow is deliberately low ceremony. Local directories such as ~/.claude/projects, ~/.codex/sessions, and ~/.pi/agent/sessions can be uploaded directly, with “nothing else needed.” Noyan added that Hermes Agent support for traces would likely arrive soon.

The significance is that traces are not only logs for debugging. Once trace data is hosted and explorable, Noyan said, a user can later train a model on it. Agent sessions become reusable data rather than disposable terminal history.

Local serving is moving into the model card

For users who want to run the LLM behind an agent locally, the Hub now surfaces compatibility with local apps. The models page includes an “Other” tab, and under that an “Apps” filter for tools such as LM Studio, Jan, Ollama, and llama.cpp. Filtering by those apps yields models supported by local serving environments.

On a model repository, the Hub can also show GGUF availability and hardware compatibility. Noyan explained GGUF as the llama.cpp file format supported by many local tools, including Ollama and LM Studio. Her example was a Gemma-4 26B GGUF model. The card showed an L4 GPU compatibility note, a 4-bit Q4_K_M option at 16.8 GB, and an 8-bit Q8_0 option at 26.5 GB.

The point was not only that quantized files exist, but that the model card can tell a user whether a given quantization fits available hardware. Noyan noted that if the larger Gemma-4 model is quantized to 4-bit, it fits within an L4 GPU with 24 GB of VRAM. She also said similar metadata is served for MLX repositories.
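The fit check itself is simple arithmetic. The sketch below uses the two file sizes and the 24 GB figure quoted in the article; the 2 GB headroom for KV cache and runtime buffers is an assumed allowance, and this is not how the Hub computes its compatibility note.

```python
# File sizes in GB as shown on the model card example.
QUANT_SIZES_GB = {"Q4_K_M": 16.8, "Q8_0": 26.5}
L4_VRAM_GB = 24.0


def fits_in_vram(file_size_gb, vram_gb, headroom_gb=2.0):
    """True if the weights plus an assumed runtime margin fit in GPU memory."""
    return file_size_gb + headroom_gb <= vram_gb


for quant, size_gb in QUANT_SIZES_GB.items():
    verdict = "fits" if fits_in_vram(size_gb, L4_VRAM_GB) else "does not fit"
    print(f"{quant} ({size_gb} GB) {verdict} on a 24 GB L4")
```

In practice the needed headroom grows with context length, so the margin is better treated as a tunable input than a constant.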

If the user does not know how to run the model, the “Use this model” menu provides local app instructions. Noyan showed a modal for Pi that included installing llama.cpp with Homebrew, starting a local OpenAI-compatible server with llama-server, configuring the model in Pi, and then running Pi.

Skills turn coding agents into operators for training, evals, demos, and datasets

Hugging Face Skills are designed to give coding agents structured access to Hub workflows. The HF CLI Skill lets agents search models, manage datasets, launch Spaces, run jobs, and manage repositories. Installation was shown for Claude, Codex, Cursor, and Opencode, including global and per-project installation for Claude.

Other skills extend that surface. Noyan listed llm-trainer for “vibe-training” with TRL, PEFT, and Jobs; community-evals for running evaluations; gradio for building demos; and huggingface-datasets for deeper dataset exploration through the Dataset Viewer API. Installation was shown through Claude’s plugin system and through Gemini extensions, and more integrations exist beyond the two shown.

The LLM Trainer Skill is broader than its name suggests. Noyan said it works not only for LLMs but also for vision-language models. The user can ask the agent to train a model on a dataset, and the agent can kick off the job remotely on Hugging Face infrastructure or locally, depending on the chosen setup.

Her example prompt was: “train Qwen2-VL on llava-instruct mix.” The agent asked follow-up questions about infrastructure and training preferences, including which instance to use and what validation split to apply. According to Noyan, the agent calculates the VRAM required to fine-tune the model at a given batch size and handles the setup details before launching the job. The resulting model can be found on the Hub.
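The kind of sizing the agent takes over can be approximated with a back-of-the-envelope estimate. The sketch below is not the skill's actual calculation: it assumes full fine-tuning with an Adam-style optimizer in mixed precision, a common rule of thumb of roughly 16 bytes per parameter for weights, gradients, and optimizer state, and a caller-supplied activation cost per sample. The GPU table and function names are illustrative.

```python
from typing import Optional

# Illustrative GPU memory sizes in GB (assumed values for the example).
GPU_VRAM_GB = {"L4": 24.0, "A100-80GB": 80.0}


def full_finetune_vram_gb(num_params, batch_size, activation_gb_per_sample,
                          bytes_per_param=16.0):
    """Rough VRAM need: static state plus activations that scale with batch size."""
    static_gb = num_params * bytes_per_param / 1e9
    return static_gb + batch_size * activation_gb_per_sample


def smallest_gpu_that_fits(required_gb, gpus=GPU_VRAM_GB) -> Optional[str]:
    """Name of the smallest GPU with enough memory, or None if none fits."""
    fitting = [(vram, name) for name, vram in gpus.items() if vram >= required_gb]
    return min(fitting)[1] if fitting else None


# Hypothetical inputs: a 3B-parameter model, batch size 4, 1.5 GB of activations per sample.
need = full_finetune_vram_gb(3e9, batch_size=4, activation_gb_per_sample=1.5)
print(f"~{need:.0f} GB needed -> {smallest_gpu_that_fits(need)}")
```

Parameter-efficient methods such as LoRA change the picture substantially, because most weights stay frozen and carry no gradient or optimizer state, so this estimate is best read as an upper bound for that case.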

The example showed a Qwen2.5-VL-3B-Instruct fine-tuning job using supervised fine-tuning on a vision-language dataset, with the dataset reduced from 3 million examples to 2,274 examples and two epochs specified. Noyan’s emphasis was that the agent handled the kind of infrastructure planning she has had to do manually for years.

Skills are not limited to language or vision-language models. A later job summary showed object-detection training for model ID PekingU/rtdetr_v2_r50vd, listed at 43 million parameters, on the dataset mrtoy/mobile-ui-design. The run was a 10% quick test with 705 train and 200 eval examples at an image size of 640 pixels, and the hardware line was shown as “L4-small (1x T4, 16 GB VRAM, $0.40/hr).” Noyan said she had recently shipped skills for training object detectors, segmentation models, and other vision models, including handling details such as different bounding-box formats.

MCP makes hosted Hub capabilities callable by an LLM

The Hugging Face MCP server gives agents access to model, dataset, and Spaces search for a task; semantic search over Spaces and docs; job creation and management; and querying Spaces through LLMs. Noyan described Spaces as “the App Store of AI,” a place where many hosted apps can be discovered and used.

Jobs, in her description, are one-off workloads that terminate when they fail or succeed, and users pay for the time a job is running. That makes them a natural execution target for agent-generated scripts or data-processing tasks that do not need persistent infrastructure.

The MCP integration works across familiar platforms. Noyan listed VS Code, Cursor, ChatGPT, Gemini CLI, Claude, Claude Code, and LM Studio, and showed a VS Code settings snippet pointing an hf-mcp-server entry at https://huggingface.co/mcp?login.
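For readers who want to reproduce the settings entry, the sketch below renders a configuration of that shape as JSON. Only the entry name and URL come from the talk; the surrounding key layout (`servers`, then the entry name, then `url`) is an assumption about the VS Code MCP settings format and should be checked against current documentation.

```python
import json


def mcp_server_config(name, url):
    """Build a settings fragment with one remote MCP server entry (assumed layout)."""
    return {"servers": {name: {"url": url}}}


config = mcp_server_config("hf-mcp-server", "https://huggingface.co/mcp?login")
print(json.dumps(config, indent=2))
```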

For a simple demonstration, she asked HuggingChat to “generate image of a baklava made of yarn.” The model called a Hugging Face Space running a Qwen image-generation model, returned the tool output, and presented the generated image. Noyan noted that users need to enable a setting called dynamic spaces to access “absolutely all” Spaces, and described that option as somewhat experimental.

The broader use cases she named included image generation or editing, text-to-speech, document parsing, and using any app on Spaces.

The OCR pipeline shows the stack as a system, not a feature list

Noyan closed with a project built by her colleague Nils: OCR’ing roughly 30,000 AI-related papers on the Hugging Face Hub using Codex, open OCR models, and Jobs. The motivation was that Hub papers are meant to be searchable and discussable, but not every paper arrives with markdown that can be indexed.

The workflow combined the components she had been describing separately. First, pick an OCR model. Then ask an LLM to write and kick off a processing job on Hugging Face infrastructure. Then let the skill set up the right instance for the selected model, avoiding the manual “napkin math” of matching model, hardware, cost, and runtime.

For model selection, she showed the allenai/olmOCR-bench benchmark dataset. The leaderboard placed datalab-to/chandra-ocr-2 first with a score of 86.9 and sedasta-dots-acc-community/dots-ocr-1.5 second with 83.0. In that project, they used Chandra OCR.

As of the day of the talk, Hugging Face had also shipped a skill for model picking from benchmarks. Rather than manually checking a leaderboard, a user can ask the agent to “pick the best model to OCR French documents for fine-tuning/inference.” The agent reads the skill instructions, runs the relevant benchmark script, and can make recommendations that include fine-tuning considerations or smaller model constraints.

After model selection, the LLM wrote the script. The generated command used uv run with an L4 instance flavor and a vLLM OpenAI image, passed an HF token, and ran a Python OCR script against an output repository. The agent also calculated runtime and cost. One estimate shown on the slide was that processing 27,584 papers with 16 jobs in parallel, at the current L40S benchmark pace, would take around 29 to 30 hours of wall-clock time and cost about $883 to $895.
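The shape of that estimate is easy to sketch. In the example below only the paper count and the parallelism come from the slide; the per-paper time and hourly price are hypothetical inputs, so the output is not meant to reproduce the slide's figures. It also assumes every job is billed for the full wall-clock duration.

```python
import math


def estimate_batch_job(num_items, seconds_per_item, parallel_jobs, usd_per_job_hour):
    """Return (wall_clock_hours, total_cost_usd) for an evenly split batch."""
    items_per_job = math.ceil(num_items / parallel_jobs)
    wall_clock_hours = items_per_job * seconds_per_item / 3600
    total_cost = wall_clock_hours * parallel_jobs * usd_per_job_hour
    return wall_clock_hours, total_cost


# 27,584 papers and 16 jobs are from the slide; 60 s per paper and $2.00 per hour are assumed.
hours, cost = estimate_batch_job(27_584, seconds_per_item=60.0,
                                 parallel_jobs=16, usd_per_job_hour=2.0)
print(f"~{hours:.1f} h wall-clock, ~${cost:.0f}")
```

Doubling the number of parallel jobs roughly halves the wall-clock time while leaving the total cost about the same, which is the trade-off the agent is weighing when it proposes a job count.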

Noyan also mentioned Buckets, a recently launched Hugging Face product she described as similar to S3 buckets but cheaper and faster, and mountable through hf-mount. For jobs that will be rerun, Buckets provide fast read-write storage, so data does not have to be moved repeatedly through ad hoc paths.