Slack-Native AI Coworkers Turn Memory and Permissions Into Product Risks

Fryderyk Wiatrowski argues that building Viktor as an AI coworker inside Slack is not a matter of scaling a personal assistant to more users. A company-level agent gains value from shared context, shared integrations, and the ability to act where work is discussed, but those same features create harder problems around memory isolation, permissions, fragmented Slack conversations, proactivity, and tone. His case is that an “AI employee” has to be designed less like a chatbot and more like a new hire entering the company’s communication layer.

A company agent is not a personal agent with more users

Fryderyk Wiatrowski describes Viktor as an “AI employee” rather than an app: it lives in Slack, participates in channels and threads, and uses the tools a company already uses. The distinction matters because the design target is not a personal assistant that each employee configures separately. It is a company-level agent with shared context, shared integrations, and shared access boundaries.

Viktor has no separate web interface. The premise is that a coworker should be reachable where work already happens. In this case, that means Slack: DMs, public channels, threads, replies, reactions, edits, and deleted messages. A slide introduced Viktor as “an AI coworker that lives natively in Slack, not a separate app to adopt,” one that connects to “3,000+ business tools” and ships “real deliverables” such as PDFs, dashboards, code, and campaigns rather than only chat responses. Wiatrowski says that if an integration is not available, Viktor can build its own connection. The intended result is an agent that can operate across roles and functions rather than sit inside one workflow.

He frames the ambition in broad terms: Viktor is supposed to bring “universal PhD-level understanding” to company work. His example is a CMO who would be more effective with access to the codebase and the ability to contribute to it. Viktor, in his account, can cross those functional boundaries because it has company-wide tool context rather than the narrower context of a single role.

The company-level model changes the integration problem. With a personal agent, every employee may need to connect their own tools. With Viktor, one person can connect an integration and the team can use it, subject to permission tuning. A Growth team does not need 20 people to separately connect Meta Ads or analytics. The same shared setup also reduces ambiguity: if everyone connects their own version of a tool, the agent may not know which account is the right one to use.

That shared model is also where the hard problems begin. Wiatrowski’s core distinction is between “1 user” and “N users.” A memory system that is manageable for one person becomes more fragile when it is serving a hundred people. It can fill faster, accumulate conflicting context, and create leakage risks across channels, teams, and private messages.

Imagine that you have the same architecture and the same memory, but now for 100 users and not 1 user. So it's probably running out of the memory 100 times faster.

The harder issue is not only scale, but organizational structure. Slack channels map to teams, functions, hierarchies, informal politics, and access expectations. Viktor may be present in a Growth channel, an Engineering channel, an Executive channel, and individual DMs. The system has to prevent context from the Growth or Executive channel leaking into Engineering or Support, and it must not use Growth context in a private DM unless the person has the relevant access.
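
One way to picture that isolation rule is a memory store that tags every memory with the channel where it was learned and filters on recall. The sketch below is illustrative only and is not Viktor's implementation: `Memory`, `MemoryStore`, the `members` map, and the rule that both the requester and the whole reply audience must have access to the source channel are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Memory:
    text: str
    source_channel: str  # channel or DM id where the memory was learned


@dataclass
class MemoryStore:
    # Hypothetical membership map: channel id -> set of user ids.
    members: dict[str, set[str]]
    memories: list[Memory] = field(default_factory=list)

    def remember(self, text: str, source_channel: str) -> None:
        self.memories.append(Memory(text, source_channel))

    def recall(self, requester: str, reply_channel: str) -> list[str]:
        """Return memories that are safe to use when replying in reply_channel."""
        audience = self.members.get(reply_channel, set())
        visible = []
        for m in self.memories:
            source_members = self.members.get(m.source_channel, set())
            # Assumed rule: the requester must belong to the source channel,
            # and everyone who can read the reply must belong to it too.
            if requester in source_members and audience <= source_members:
                visible.append(m.text)
        return visible


store = MemoryStore(members={
    "growth": {"ana", "ben"},
    "eng": {"ben", "cy"},
    "dm-ana": {"ana"},
    "dm-cy": {"cy"},
})
store.remember("Q3 ad budget doubled", "growth")
```

Under these assumptions, a Growth member asking in a private DM can draw on Growth context, while the same question asked in Engineering, or by someone outside Growth, comes back empty.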

A slide called this an “organizational layer”: norms, politics, permissions, and the way work actually happens. Wiatrowski’s phrase for the product principle is that Viktor is “not a tool,” but “a hire.” That analogy is doing real work. If a company hires a new employee, it does not hand that employee every private account in the company by default. The same principle has to apply to an agent that acts on behalf of a team.

Slack makes slow work feel fast, but turns every interaction into input

Wiatrowski gives two reasons for choosing Slack as the interface. First, Viktor is meant to feel like a human employee, and people do not usually interact with employees through a separate web app. They message them. Second, the kinds of tasks Viktor is meant to perform may naturally take time. A powerful agent may need 10 minutes to complete a task.

In a web app, that latency feels bad. The user has switched context, issued a request, and is now waiting inside an interface. Wiatrowski contrasts that with expectations formed by ChatGPT, where a useful response may arrive in seconds. In Slack, the same 10-minute latency is perceived differently. If a teammate builds an app in 10 minutes after being asked in Slack, that feels extremely fast, not slow.

If you ping someone on Slack and tell them to build an app for you, and get an answer in 10 minutes, you are shocked.

The tradeoff is that Slack is not a clean, single-threaded interaction surface. A web app can present a new conversation with a clear beginning and a controlled context. Slack is messier. Users DM the agent, mention it in public channels, reply in threads, add emoji reactions, edit messages, and delete messages. Wiatrowski says all of this becomes input to the agent, and all of it somehow has to fit into a linear context even though the human conversation is not actually linear.

Message deletion is one example. If someone deletes a message, a human often infers that the task should not continue or is no longer relevant. If someone edits a message, the agent should respond to the edited version rather than blindly continuing from the original. Slack threads add another ambiguity. A user may begin in a thread with a coworker, then forget the thread and continue in a fresh DM. A human coworker would often carry over the prior context. To the agent, Wiatrowski says, that can look like a new task in a new area.

The system therefore needs to look back at previous messages and “roll them over” into existing conversations when appropriate. It must decide whether a new DM is really new, whether it belongs to a prior thread, whether a deleted message cancels an instruction, and whether an edit changes the task. These are not model-intelligence questions alone. They are product and context-management problems created by using the same interface as human coworkers.
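
The edit and delete cases can be sketched as a fold over an event stream. This is a simplified illustration, not the Slack Events API payload format and not Viktor's code: the `Event` shape and the `linearize` function are hypothetical, and the harder question of rolling a fresh DM into an earlier thread is left out.

```python
from dataclasses import dataclass


@dataclass
class Event:
    kind: str        # "post", "edit", or "delete" (simplified, hypothetical)
    message_id: str
    user: str
    text: str = ""


def linearize(events: list[Event]) -> list[str]:
    """Fold posts, edits, and deletions into the context the agent should see."""
    current: dict[str, tuple[str, str]] = {}  # message_id -> (user, latest text)
    for ev in events:
        if ev.kind == "post":
            current[ev.message_id] = (ev.user, ev.text)
        elif ev.kind == "edit" and ev.message_id in current:
            # Keep the original position in the conversation, swap the text.
            user, _ = current[ev.message_id]
            current[ev.message_id] = (user, ev.text)
        elif ev.kind == "delete":
            # A deleted message no longer counts as an instruction.
            current.pop(ev.message_id, None)
    return [f"{user}: {text}" for user, text in current.values()]


events = [
    Event("post", "m1", "ana", "build a landing page"),
    Event("post", "m2", "ana", "use blue"),
    Event("edit", "m2", "ana", "use green"),
    Event("post", "m3", "ana", "also email Bob"),
    Event("delete", "m3", "ana"),
]
context = linearize(events)
```

Here the agent would see the edited color and would not see the deleted request, which matches the inference a human coworker would usually make.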

Memory must respect teams, permissions, and private context

Wiatrowski frames memory as the challenge he focused on in moving from personal agents to company agents. A personal agent can accumulate clutter over time, but the context is at least anchored to one person. A company agent has to learn from many users while not allowing one group’s information to contaminate another group’s work.

One slide reduced the team-agent problem to three forces: “social hierarchy,” “conflicting instructions,” and “context fragmentation.” A company is not one user with one preference set. Managers and ICs may ask for incompatible things. Teams may work from different assumptions. Channels may have different sensitivity levels. DMs may contain private information that should not be used elsewhere.

The permissions problem becomes most visible in Wiatrowski’s customer story. A team admin at a large U.S. e-commerce brand connected Viktor, and the first team integration he connected was his personal Gmail. Teammates then began asking Viktor about his emails, and the admin complained that Viktor was leaking his data. Wiatrowski’s response was that the admin had given Viktor access to a personal account in a team context: “If you hire a new employee, do you give them access to your personal email? Probably not.”

The incident led the team to add integration scoping, so integrations are no longer always shared. A user can connect a personal integration, such as a personal email account, and allow Viktor to use it only in the right context, such as in DMs or when explicitly called. The broader point is that shared context is valuable only if the sharing rules match organizational expectations.
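
A minimal sketch of what such scoping could look like follows. It is an assumption-laden illustration rather than Viktor's actual permission model: the `Scope` enum, the `Integration` record, and the `can_use` rule (personal integrations work only for their owner, and only in a DM or on explicit request) are hypothetical names and policies chosen to mirror the Gmail story.

```python
from dataclasses import dataclass
from enum import Enum


class Scope(Enum):
    TEAM = "team"          # usable by anyone in the workspace
    PERSONAL = "personal"  # usable only on behalf of its owner


@dataclass(frozen=True)
class Integration:
    name: str
    owner: str
    scope: Scope


def can_use(integration: Integration, requester: str, is_dm: bool,
            explicitly_invoked: bool = False) -> bool:
    """Decide whether the agent may call this integration for this request."""
    if integration.scope is Scope.TEAM:
        return True
    # Assumed rule for personal integrations: owner only, and only in a DM
    # or when the owner explicitly asks for it.
    return requester == integration.owner and (is_dm or explicitly_invoked)


gmail = Integration("gmail", owner="admin", scope=Scope.PERSONAL)
meta_ads = Integration("meta_ads", owner="admin", scope=Scope.TEAM)
```

With this rule, a teammate asking about the admin's inbox in a public channel is refused, while the shared Meta Ads connection stays available to the whole team.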

That same tension appears in the comparison with desktop-based agents such as Claude Code or Claude Cowork, as Wiatrowski describes them. Viktor’s advantage, in his account, is that it runs in the cloud and does not require the user’s computer to remain open. It also has shared company context and shared integrations. But those advantages create the need for stronger access design, because the agent is not simply acting inside one person’s desktop boundary.

The design target is a coworker that knows the company without becoming a surveillance system or a permissions hazard. Wiatrowski treats those as inseparable. The agent’s usefulness depends on company-wide context; its acceptability depends on not using that context in the wrong room.

Proactivity is valuable only after trust is earned

Proactivity is one of Viktor’s most powerful features and one of its most sensitive. The valuable version, in Wiatrowski’s account, is straightforward: Viktor can observe a discussion, use connected tools, and contribute when it can check or automate something useful.

His example comes from growth work. A team may be discussing an A/B test and concluding that one option is performing better. If Viktor has access to PostHog or another analytics tool, it can check whether the claim is true. Wiatrowski says Viktor has intervened in discussions by confirming a result but noting that it was not statistically significant, then running the calculation to explain why the human conclusion was weak.
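
The source does not say which calculation Viktor ran. A common choice for comparing two conversion rates is a two-proportion z-test, sketched here with the standard library only. The function name and the sample counts are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # No variation at all (every visitor converted, or none did).
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: variant B "looks" better, 6.0% vs 5.0%.
z, p = two_proportion_z_test(conv_a=50, n_a=1000, conv_b=60, n_b=1000)
```

With these invented numbers, z comes out a little under 1 and the p-value is roughly 0.33, far above the usual 0.05 threshold. B looks better, but the evidence is too weak to call it a winner.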

That is the upside of a company agent with shared context and tools. It can join the conversation at the moment a decision is being formed, not after someone opens a separate analytics dashboard and asks for a report. It can also expand usage: if Viktor is helpful in more parts of the workspace, people activate it more broadly.

The same behavior can feel invasive if it happens too early. Wiatrowski says that when Viktor starts DMing people and participating in threads on day one, security teams get alarmed. A newly installed agent that immediately watches and comments across the workspace can look less like a helpful coworker and more like surveillance.

The slide put the point this way: “Proactivity is the future, but it feels like surveillance until the agent earns trust.” Wiatrowski’s implementation principle follows from it: earn broader proactivity with a few users first, then roll it out more widely. The agent should not assume that installation grants social permission to participate everywhere.
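
One simple way to encode that principle is a gate that always allows replies to direct mentions but allows unprompted contributions only for a pilot group. This is a hypothetical sketch. `should_respond` and `pilot_users` are names invented for the example, and the talk does not describe how Viktor actually stages its rollout.

```python
def should_respond(mentioned: bool, author: str, pilot_users: set[str]) -> bool:
    """Always answer direct mentions; speak unprompted only for pilot users."""
    if mentioned:
        return True
    # Assumed policy: proactivity is limited to users who opted into the pilot.
    return author in pilot_users


pilot = {"ana"}
```

Widening the rollout then means growing `pilot_users` as trust builds, rather than switching on workspace-wide proactivity at install time.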

Users noticed model personality, not only task quality

One unexpected lesson was the importance of tone. Wiatrowski says Viktor was using Opus as its main model, and the team tested GPT-4o as a possible replacement. On tool calling and code generation, he says GPT-4o was strong, and it was cheaper. On a narrow performance-and-cost view, the substitution looked plausible.

The test did not work as expected. Wiatrowski says there were “a couple” of reasons the team did not go with GPT-4o, but the most interesting one was personality. Users “started raging” during the A/B test because they preferred Opus. He leaves open whether this was caused by Viktor’s architecture or by the model itself, but he says users loved Opus and that Viktor’s version of it was “a bit sassy.” In his account, the difference was not reducible to whether the agent could complete the task.

This point is easy to understate because it sounds cosmetic. Wiatrowski treats it as a product constraint. If the agent is positioned as a coworker and lives in Slack, then its communication style is not just a wrapper around work. It is part of how users decide whether they want it in the workspace. A model swap that preserves functional capability but changes tone may still be rejected.

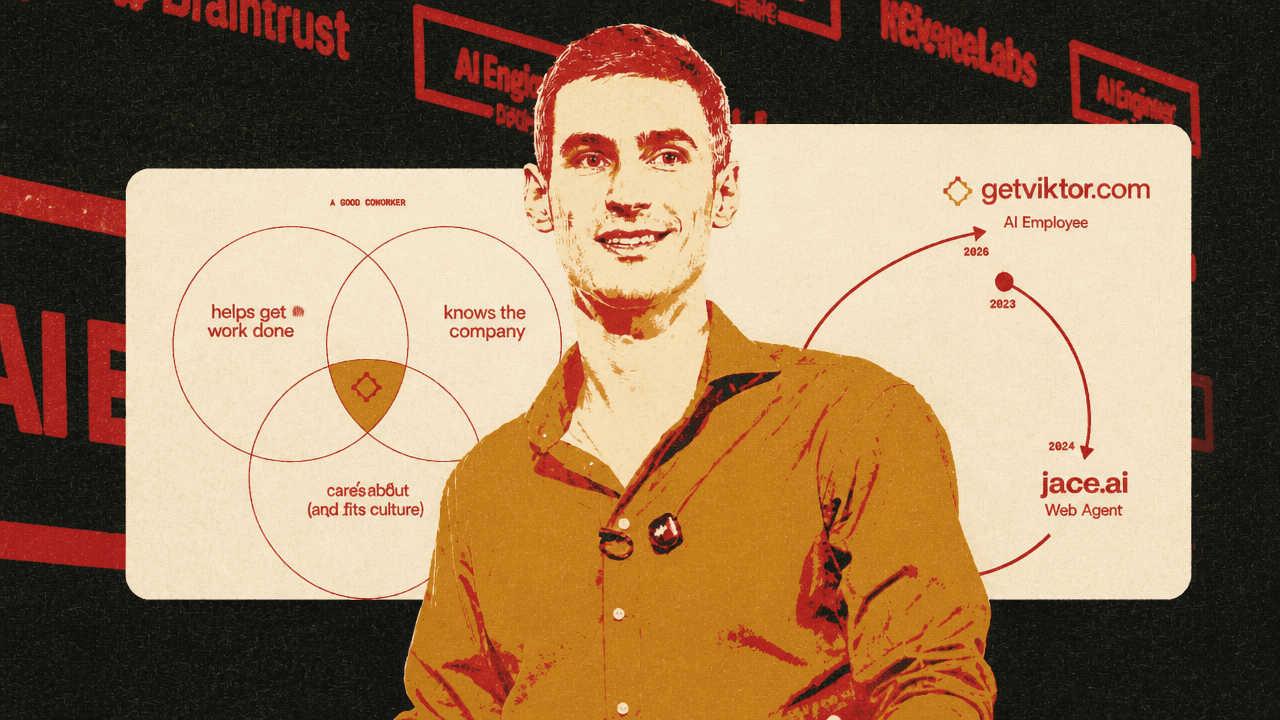

The final framework he gives for a good AI coworker includes friendliness alongside capability and company knowledge. The agent must help get work done; it must know the company; and it must be friendly, or in the slide’s phrasing, “not a douche.” Wiatrowski’s point is not that friendliness substitutes for utility, but that a coworker-like agent is evaluated socially as well as instrumentally.

The path to Viktor ran through browser and email agents

The company’s mission to build AI employees dates back to 2023, when its first approach was browser automation. At the time, Wiatrowski says, tool calling and strong code-generating models were not yet available in the same way. Browsers looked like the universal interface: most software had a web app, and a sufficiently capable agent could interact with any tool through the browser.

The product was then called Jace AI. It worked by taking a snapshot of the page’s DOM, minifying that representation in a lossless way, and using the user’s goal plus the page representation to choose the next action. The agent might decide whether to type in a Google search bar or click a login button. Wiatrowski says the approach did work, but only within the reliability limits of the models available at the time.

His estimate for 2023 browser-agent reliability was blunt: three to five steps at roughly 60% reliability, with failures compounding at each step. He says Jace AI was still state of the art on WebArena, which he called the most popular agentic benchmark, but benchmark performance did not make the system easy to turn into a useful product. Speed was also painful. A web agent might take a minute before failing, whereas tool calling can return an output immediately.
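
The compounding effect is easy to quantify under one reading of that estimate. The sketch below assumes the 60% figure is a per-step success rate and that steps fail independently. The source does not specify either point, so treat the numbers as illustrative rather than as Wiatrowski's own calculation.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Probability that every step in a chain succeeds, assuming independence."""
    return per_step ** steps


for n in (3, 5):
    print(n, round(chain_success(0.6, n), 3))
# prints:
# 3 0.216
# 5 0.078
```

On that reading, a three-step task finishes about one time in five and a five-step task fewer than one time in ten, which helps explain why benchmark leadership did not translate into a dependable product.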

The company then moved from a browser agent to an email agent. Wiatrowski says Sonnet 3.5 enabled their first agent loop, and the team wanted an experience where the user did not have to open a web app to ask for help. The agent would instead have context and proactively identify work to do. In the Jace email product, an arriving email triggered an agent loop. The agent could connect to tools and respond not only with a draft email, but also with actions. His example was a refund request: the agent could perform the refund, with approvals if needed.

That progression explains Viktor’s interface choice. The company moved from browser automation, to an agent embedded in email, to an agent embedded in the company’s communication environment. The technical center of gravity shifted from “can the agent manipulate software?” to “can the agent behave like a coworker inside the environment where work is discussed, delegated, and corrected?”

The coworker standard is higher than the chatbot standard

Wiatrowski closes the technical argument with three requirements for anyone building an AI coworker. First, it must help get work done. He treats this as increasingly tractable because current models are capable and integrations can be connected through systems such as Pipedream. Second, it must know the company, which means using Slack context well and getting through Slack’s approval process, a step Wiatrowski described as difficult and potentially boring. Third, it must be friendly enough that the team likes interacting with it.

The “AI employee” label carries a higher burden than “copilot” or “tool” in Wiatrowski’s framing. A tool can be awkward as long as it performs a function. A coworker needs role awareness, access discipline, communication judgment, and some social fit. It also needs to fit the medium it inhabits: Slack is where work is delegated, corrected, fragmented, forgotten, resumed, and socially negotiated.

His five-year vision is direct: every company has AI employees, not merely tools or copilots, and Viktor becomes the go-to AI employee. He connects that ambition to a longer history of automating cognitive labor, citing Gottfried Wilhelm Leibniz’s 1685 line: “It is unworthy of excellent men to lose hours like slaves in the labor of calculation... Let us leave that to machines.” Wiatrowski’s extension is that calculation was not the only cognitive task machines could take over.

In Wiatrowski’s account, the company agent becomes useful when it lives in the communication layer, inherits the right tools, and behaves enough like a coworker to be trusted there. That makes the problem larger than tool access. It includes where the agent lives, how it inherits permissions, how it handles fragmented conversations, how it earns the right to be proactive, and how it sounds when it speaks.