Coding Agents Need Library Source Code, Not Longer Prompts

Michael Arnaldi, of Effectful, argues that coding agents use Effect better when the project gives them the Effect source code, not just better prompts or documentation. In a workshop starting from an empty repository, he demonstrates cloning the Effect repo into the project, having the agent extract local pattern files, and then using strict TypeScript diagnostics, tests, lint rules and persistent instructions to steer the agent toward a working Effect HTTP API.

The agent needs the library’s code more than it needs another prompt

Michael Arnaldi’s central recommendation for making coding agents useful with Effect was deliberately blunt: put the library repository inside the project and make it visible to the agent as part of the working codebase.

As he put it: “This session should just be called ‘just clone the fucking repo,’ and be done with it.”

The reason was not that documentation is useless, or that prompting has no effect. It was that coding agents have been trained primarily to consume, modify, and reproduce patterns from codebases. They are not continuously learning systems. A model may feel conversational, but Arnaldi described the interaction as appending messages to a fixed-size array: the context window. The model’s durable knowledge comes from pretraining and post-training, not from yesterday’s chat. If a team teaches a model something today, the model does not remember it tomorrow unless the relevant information is supplied again.

That creates a practical problem for libraries and frameworks whose best practices are new, under-documented, or not well represented in training data. Arnaldi said much of his own work is library-level development: low-level TypeScript and Rust, complex type machinery, and codebases with little or no public documentation. He had expected AI assistance to be most useful in application development, not library development. He said that expectation was wrong. He has not been writing code by hand for months, including in library-level work.

The mechanism he settled on was simple. If the agent needs to use Effect, put the Effect repository in the project. In the project he built, he added the effect-ts/effect-smol repository, shown on GitHub as “Core libraries and experimental work for Effect v4,” as a Git subtree under .repos/effect. Then he instructed the agent, through AGENTS.md, to use that repository to extract patterns and best practices.
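The subtree step can be sketched as a small TypeScript helper. This is a minimal sketch, not Arnaldi's script. The upstream URL `https://github.com/effect-ts/effect-smol.git` and the `main` ref are assumptions to check against the repository. The helper only builds the arguments for the standard `git subtree add --prefix=<dir> <repository> <ref> --squash` command, so they can be inspected or handed to a process runner.

```typescript
// Describes one library whose source should live inside the visible project tree.
interface VendoredLibrary {
  prefix: string; // non-ignored directory, e.g. ".repos/effect"
  url: string; // upstream Git URL
  ref: string; // branch or tag to import
}

// Arguments for: git subtree add --prefix=<prefix> <url> <ref> --squash
function subtreeAddArgs(lib: VendoredLibrary): string[] {
  return ["subtree", "add", `--prefix=${lib.prefix}`, lib.url, lib.ref, "--squash"];
}

// Arguments for refreshing the same subtree when upstream moves on.
function subtreePullArgs(lib: VendoredLibrary): string[] {
  return ["subtree", "pull", `--prefix=${lib.prefix}`, lib.url, lib.ref, "--squash"];
}

const effectSource: VendoredLibrary = {
  prefix: ".repos/effect",
  url: "https://github.com/effect-ts/effect-smol.git", // assumed URL
  ref: "main", // assumed branch
};

console.log(["git", ...subtreeAddArgs(effectSource)].join(" "));
```

Passing the array to `spawnSync("git", args)` from `node:child_process` would run the command. `git subtree add` refuses to run when tracked files have uncommitted changes, so committing the initial workspace first, as Arnaldi did, avoids that error. The prefix must also stay out of `.gitignore`, otherwise the agent's tooling may skip it.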

Arnaldi distinguished this from relying on node_modules. In his view, agents have been trained to focus on “your own code,” not dependency internals. If the library lives only in node_modules, the agent is less likely to inspect it. If the code lives in a .gitignored directory, many tools will avoid indexing or searching it. Cursor, he noted, does not index ignored files. The workaround is to masquerade the library source as part of the project by putting it in a normal repository directory that the agent can see.

He started from an empty repository and used the process to assemble a Bun project with Vitest tests, a TypeScript check script, strict diagnostics, and a simple Effect-based HTTP API with OpenAPI documentation. He had wanted to go further into workflows and clustering, but those remained an architectural discussion rather than part of the implemented code.

The broader operating loop was to make the codebase legible to the agent, make library patterns locally discoverable, create persistent pattern documents, enforce constraints through type checking and linting, and keep context narrow enough that the model can act effectively.

The model is capable, forgetful, and easily misdirected

Michael Arnaldi’s workflow depends on a particular mental model of LLMs. He rejected the idea that they should be treated like human learners. Humans continuously convert experience into long-term memory; models used through coding agents do not. A coding model has learned general programming patterns during training and has been reinforced to operate on codebases, but its knowledge is fixed after training. It can generalize, sometimes impressively, but it is also operating with compressed and often outdated knowledge.

That is why he did not frame the problem as simply writing a better prompt. He framed it as arranging the repository so the model can see the current knowledge it needs. If the model has stale knowledge, the project must supply fresh knowledge in the form the model is best at using: code.

He also cautioned against assuming that larger context windows automatically solve the problem. A million-token context window can carry more information, but pushing more information into the network can confuse the model, especially when the same session is used for multiple unrelated tasks. Arnaldi repeatedly restarted the coding-agent session to avoid “context pollution.” He described this as a normal part of his workflow, not an exceptional cleanup step.

For more automated work, he uses what he called a “Ralph loop”: a simple bash-driven loop that repeatedly starts the agent, asks it to pick one small task, implement it, and exit. The point is not sophistication. It is to prevent a single bloated context from accumulating planning, mistakes, obsolete assumptions, and implementation details across too many steps.
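The loop itself can be sketched in TypeScript. This is a hypothetical version, not Arnaldi's script. `runAgent` stands in for launching your agent CLI non-interactively as a fresh process, and the `ALL_TASKS_DONE` sentinel and the prompt wording are assumptions. The property that matters is that every iteration starts with an empty context. The only state carried forward is whatever the agent wrote to the repository.

```typescript
// Result of one non-interactive agent run (a fresh process with a fresh context).
interface AgentResult {
  exitCode: number;
  output: string;
}

// Hypothetical adapter: in practice this would spawn the agent CLI with the prompt.
type RunAgent = (prompt: string) => AgentResult;

const DONE_SENTINEL = "ALL_TASKS_DONE"; // assumed convention, not a tool feature

const LOOP_PROMPT = [
  "Read AGENTS.md and the plan in plans/.",
  "Pick one unfinished task, implement it, and run bun run typecheck and bun run test.",
  "Mark the task as done in the plan, commit, and exit.",
  `If no unfinished tasks remain, print ${DONE_SENTINEL} and exit.`,
].join("\n");

// Runs small tasks until the agent reports completion or the iteration cap is hit.
// Returns the number of iterations actually executed.
function ralphLoop(runAgent: RunAgent, maxIterations: number): number {
  for (let i = 1; i <= maxIterations; i++) {
    const { exitCode, output } = runAgent(LOOP_PROMPT);
    if (output.includes(DONE_SENTINEL)) return i;
    if (exitCode !== 0) {
      // No retry inside the same context: the next iteration starts clean.
      console.warn(`iteration ${i} exited with code ${exitCode}`);
    }
  }
  return maxIterations;
}
```

The iteration cap is a design choice: it bounds cost if the agent never converges. A synchronous `RunAgent` matches how a shell loop waits for each process to exit. `spawnSync` from `node:child_process` could back it.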

He contrasted this with plan modes in coding tools. Asked whether he uses OpenCode’s plan mode, Arnaldi said he does not rely on it heavily because the model’s access to tools is constrained. His preferred equivalent is spec-driven development: ask the model to discuss and write a specification into a Markdown file, persist that file, then ask a fresh or narrower agent context to implement it. The spec becomes the plan because it is in the repository and can be pointed to again.

This was a recurring pattern. Knowledge that mattered became files: AGENTS.md, patterns/http-api.md, patterns/sql.md, patterns/testing.md, and task-specific plans such as plans/todo-api.md. The model could then be pointed at these files, and the files could be committed, reviewed, edited, and evolved.

Arnaldi also argued that less tool access can improve model behavior. He described experiments with a coding agent that has only one tool call, execute, which can run arbitrary TypeScript code, including calling bash through TypeScript. In that setup, the model cannot directly patch files; it must write a TypeScript script that changes code. Arnaldi said this led the model to use TypeScript transformers and AST-based transformations, and that reducing the number of available actions sometimes improved the output.

His summary of the pattern was that many AI workflows work better when they are simpler than expected. Complex context-management architectures can lose to a loop that gives the model a small task, enough local knowledge, and a hard exit.

A useful AI repository starts with boring constraints

Before adding Effect-specific code, Arnaldi had the agent bootstrap a minimal Bun project. The initial setup included Vitest, a TypeScript check script, a src directory, a test directory, a tsconfig.json, and basic smoke tests. He verified the setup by running bun run test and bun run typecheck.

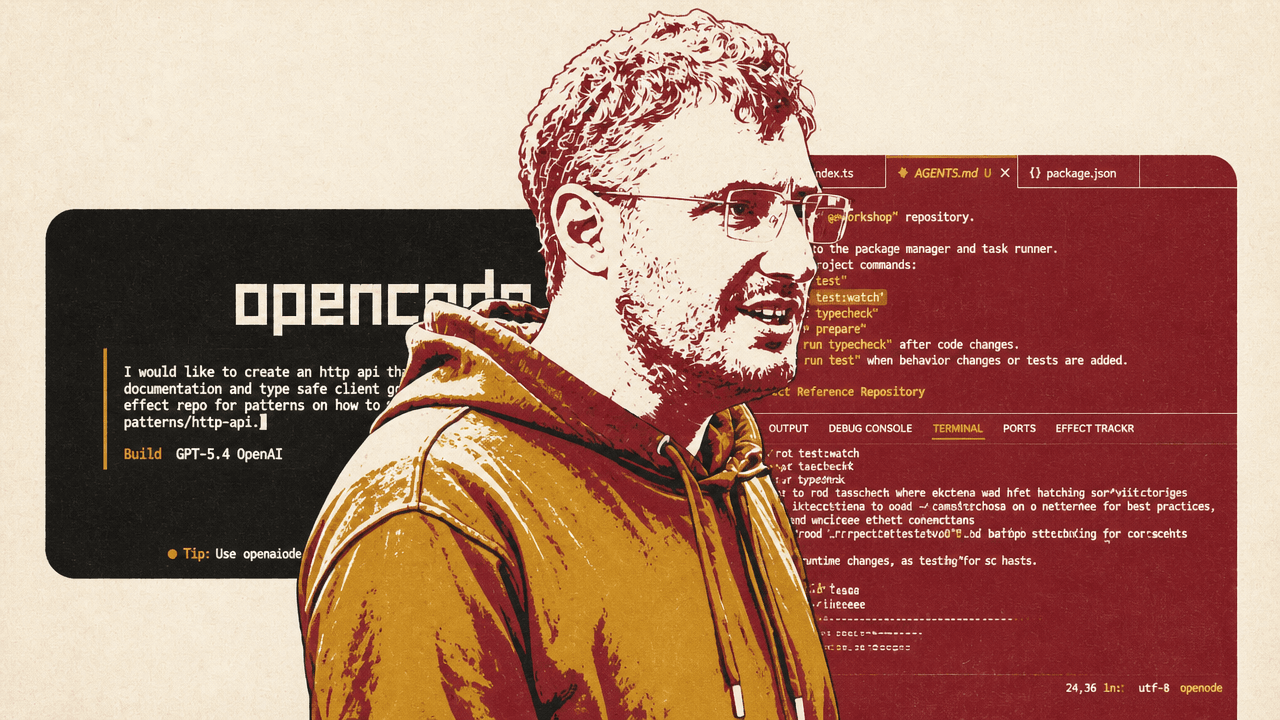

He used GPT-5.4 through OpenCode. He said that when he began working this way he used Sonnet 4, which he described, borrowing Geoffrey Huntley’s phrase, as “a kid with a knife running through the house.” Even that was good enough for coding work, but he said models such as Opus 4.5 and GPT-5.4 are much better.

Arnaldi also said open-weight models appear to lag frontier models by three to six months. That raised a question for him: how long before open-weight models become good enough for the operations he does every day? His interest in that possibility was sharpened by his dislike of model-provider restrictions, especially Anthropic policies that he said limit how he can use models through tools such as OpenCode or his own TypeScript files that interact with the AI SDK.

The setup was not frictionless. He tried to use Microsoft’s native TypeScript preview, tsgo, as the type checker. The agent first looked for the wrong npm package, typescript-go; the npm page shown on screen described it as a security holding package after malicious code was removed. The correct preview instructions, shown in Microsoft’s GitHub documentation, used @typescript/native-preview and npx tsgo as the tsc equivalent. After installing the TypeScript Native Preview extension in VS Code and configuring settings such as js/ts.experimental.useTsgo and typescript.native-preview.tsdk, the editor showed the TSGo language server active.

That mattered because Arnaldi wanted the Effect language-service diagnostics active and strict. He demonstrated a diagnostic for an unused Effect value: Effect.succeed(100) produced a warning that the Effect value was neither yielded nor used in an assignment. For a human developer, a warning may be enough. For an agent-heavy project, he wanted every available diagnostic escalated to an error.

His reasoning was direct: if AI is going to write much of the code, the project should not allow anything with “any remote resemblance of an error” to pass. He asked the agent to set all available diagnostics to error, and the resulting tsconfig.json changed Effect diagnostics such as globalFetch and globalRandomEffect from warnings to errors.
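Escalating everything to errors can be scripted rather than edited by hand. The sketch assumes the Effect language service is configured as a `compilerOptions.plugins` entry named `@effect/language-service`, with a `diagnosticSeverity` map from rule name to severity. That shape, the severity names, and the `floatingEffect` rule name are assumptions to verify against the plugin's README. `globalFetch` and `globalRandomEffect` are the rule names from the session.

```typescript
type Severity = "off" | "suggestion" | "message" | "warning" | "error";

// Assumed shape of the plugin entry inside tsconfig.json compilerOptions.plugins.
interface EffectPluginEntry {
  name: "@effect/language-service";
  diagnosticSeverity?: Record<string, Severity>;
}

// Returns a copy of the plugin entry with every known or configured rule set to "error".
// Rules absent from the config are added explicitly so none stay at a softer default.
function escalateAll(plugin: EffectPluginEntry, knownRules: readonly string[]): EffectPluginEntry {
  const configured = Object.keys(plugin.diagnosticSeverity ?? {});
  const rules = Array.from(new Set([...knownRules, ...configured]));
  const diagnosticSeverity: Record<string, Severity> = {};
  for (const rule of rules) diagnosticSeverity[rule] = "error";
  return { ...plugin, diagnosticSeverity };
}

const before: EffectPluginEntry = {
  name: "@effect/language-service",
  diagnosticSeverity: { globalFetch: "warning", globalRandomEffect: "warning" },
};
const after = escalateAll(before, ["floatingEffect", "globalFetch", "globalRandomEffect"]);

// Print the fragment to paste into tsconfig.json.
console.log(JSON.stringify({ compilerOptions: { plugins: [after] } }, null, 2));
```

Listing the rules explicitly matters because a rule that is never mentioned keeps whatever default severity the plugin ships with.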

This was the first form of back pressure in the system. The repository should push against the model’s shortcuts. TypeScript diagnostics, tests, and later lint rules are not just quality checks for humans; they are steering mechanisms for agents.

He then committed the initial workspace and added the Effect repository as a squashed Git subtree under .repos/effect. The terminal showed a subtree commit for .repos/effect, confirming that the library source had become part of the visible project tree rather than a dependency hidden in node_modules.

| Repository artifact | Purpose in Arnaldi’s workflow |
|---|---|
| `.repos/effect` | Local copy of the Effect source for the agent to inspect and imitate. |
| `AGENTS.md` | Persistent instructions listing commands, constraints, and where to find Effect source and pattern files. |
| `patterns/http-api.md` | Research extracted from the Effect repo on building HTTP APIs and OpenAPI support. |
| `patterns/sql.md` | Research extracted from the Effect repo on using Effect SQL with SQLite. |
| `patterns/testing.md` | Project-specific testing guidance, including use of `@effect/vitest` and `it.layer`; later visuals showed the testing pattern file inside a `.patterns` folder. |
| `plans/todo-api.md` | Task-specific implementation plan for the todo HTTP API. |

Patterns are generated on demand, not installed wholesale

After adding Effect’s source, Michael Arnaldi did not immediately ask the model to implement an API. He opened a new session and asked it to research the codebase.

The initial prompt told the agent it had access to .repos/effect, then asked it to explore patterns for an HTTP API with OpenAPI documentation and a type-safe client. More specifically, Arnaldi asked the agent to explore the Effect repository and save the research into patterns/http-api.md, asking questions if needed. The model’s conclusion was that the strongest default Effect pattern was to define a shared HTTP API, derive OpenAPI from it, and mount documentation. Arnaldi accepted a shared HTTP API but said he did not need committed generated client artifacts in the repository.

He did not want to dump every Effect pattern into the project up front. If the repository contains patterns for every part of Effect, the agent may overuse them. Instead, he wanted patterns created when the project actually needed a capability.

That became clear when the todo API needed persistence. Arnaldi paused the HTTP plan and asked the agent to research Effect SQL and SQLite, again by exploring .repos/effect, and to create patterns/sql.md. He explained that this self-selecting approach is important in brownfield projects. A team may want to introduce Effect for one layer without refactoring the entire system. If the project uses Drizzle for persistence, for example, the persistence pattern could remain Drizzle rather than Effect SQL. The model should be given the patterns the team intends to use, not the entire universe of possible patterns.

An audience member asked whether reusable patterns could be distributed through Git subtrees or similar mechanisms, perhaps by framework authors. Arnaldi said Effect’s team will likely develop a CLI to prefetch available patterns while still letting users pick and choose. He also wants to automate the “explore source, create patterns” process, because centrally authored best practices may not fit a specific project.

Another audience member raised a related concern: different teams use different model qualities. A free or lower-tier model may generate worse code and worse documentation than the frontier model used here. Would it help for framework authors to ship official pattern libraries, colocated with the package?

Arnaldi said the idea is generally good, but model-specific prompting makes it harder than it appears. Even AGENTS.md is not really a universal standard, because Claude and GPT respond differently. He gave a concrete example: he avoids uppercase imperatives with GPT because, in his experience, GPT “gets scared” if yelled at and becomes passive or overly agreeable. With Claude, uppercase emphasis can make the model pay attention to a sentence. That means reusable patterns may need to be generated or adapted for the model family in use.

His preferred direction is to make the source code, examples, and documentation sufficiently self-explanatory that any capable model can generate appropriate patterns from them. A CLI could then generate model-optimized context on the spot. He acknowledged that this approach may fail and that the team may eventually provide prewritten patterns for common model families instead.

He also mentioned another possibility: fine-tuning an open-source model to use Effect patterns by default. Effect’s team has considered that, but doing it well would require strong evaluations.

The agent must be boxed in where it tends to cheat

Michael Arnaldi repeatedly returned to the same operational principle: watch what the model does, and when it takes a shortcut, turn that shortcut into a rule, a diagnostic, a lint check, or a pattern.

He showed his own accountability repository as an example of a more mature setup. It contains an ESLint configuration with custom rules intended to stop agents from doing things that made his code worse. The rules are opinionated and specific because they came from actual model behavior. He bans explicit type assertions, discourages any and unknown, and prohibits casts of the form `as X`. Initially, he banned unknown because models would write `as unknown as X`. The model then discovered that never is a bottom type and wrote `as never as X`. Arnaldi’s response was to ban `as` entirely.
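A rule of that kind can be sketched as a custom ESLint rule. This is a hypothetical version, not the rule from Arnaldi's repository. The existing rule `@typescript-eslint/consistent-type-assertions`, configured with `assertionStyle: "never"`, covers similar ground. The sketch uses minimal structural types instead of importing `@typescript-eslint/utils`, so it runs standalone. `TSAsExpression` and `TSTypeAssertion` are the node types `@typescript-eslint/parser` produces for `x as T` and `<T>x`.

```typescript
// Minimal structural types; a real plugin would use @typescript-eslint/utils.
interface AstNode {
  type: string;
}
interface RuleContext {
  report(descriptor: { node: AstNode; message: string }): void;
}
interface RuleModule {
  meta: { type: "problem"; docs: { description: string }; schema: [] };
  create(context: RuleContext): Record<string, (node: AstNode) => void>;
}

const assertionMessage =
  "Type assertions satisfy the compiler without validating anything. " +
  "Decode with a Schema or use a constructor instead.";

// Flags both assertion syntaxes. Chains such as `x as unknown as T` or
// `x as never as T` are nested TSAsExpression nodes, so each link is reported.
const noTypeAssertions: RuleModule = {
  meta: {
    type: "problem",
    docs: { description: "Disallow type assertions in agent-written code" },
    schema: [],
  },
  create(context) {
    const report = (node: AstNode) => context.report({ node, message: assertionMessage });
    return { TSAsExpression: report, TSTypeAssertion: report };
  },
};
```

In a flat config, the rule could be registered as `plugins: { local: { rules: { "no-type-assertions": noTypeAssertions } } }` with `rules: { "local/no-type-assertions": "error" }`. As written, it also flags `as const`. A team that wants to keep const assertions would need to exempt them. The message matters as much as the match, because it is what the agent reads when the lint run fails.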

This was not framed as purity. It was about preventing the agent from satisfying the compiler while bypassing runtime validation and domain modeling. One ESLint rule he showed bans the `sql<type>...` form, because a type parameter on an SQL template literal provides no runtime validation. The rule's message instructs the model to use SqlSchema.findOne, findAll, single, or void with a Schema for type-safe queries.
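The SQL rule follows the same pattern. In this hypothetical sketch, a `TaggedTemplateExpression` is reported when its tag is the identifier `sql` and it carries type arguments. Recent `@typescript-eslint` versions expose those as `typeArguments` and older ones as `typeParameters`, so both are checked. Matching only a bare `sql` identifier is a simplifying assumption.

```typescript
interface TemplateTag {
  type: string;
  name?: string;
}
interface TaggedTemplateNode {
  type: "TaggedTemplateExpression";
  tag: TemplateTag;
  typeArguments?: object | null; // current @typescript-eslint AST
  typeParameters?: object | null; // older @typescript-eslint AST
}
interface SqlRuleContext {
  report(descriptor: { node: TaggedTemplateNode; message: string }): void;
}

const typedSqlMessage =
  "A type argument on sql only changes the static type; rows are not validated at runtime. " +
  "Use SqlSchema.findOne, findAll, single, or void with a Schema instead.";

const noTypedSqlTemplate = {
  meta: {
    type: "problem" as const,
    docs: { description: "Disallow type arguments on sql tagged templates" },
    schema: [],
  },
  create(context: SqlRuleContext) {
    return {
      TaggedTemplateExpression(node: TaggedTemplateNode) {
        const isSqlTag = node.tag.type === "Identifier" && node.tag.name === "sql";
        // `!= null` covers both absent and explicitly null properties.
        const hasTypeArguments = node.typeArguments != null || node.typeParameters != null;
        if (isSqlTag && hasTypeArguments) context.report({ node, message: typedSqlMessage });
      },
    };
  },
};
```

Untyped `sql` templates and other tags pass through. The rule only blocks the form that looks type-safe without being so.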

He gave a similar example with identifiers. If a schema uses plain strings for both UserId and another kind of ID, the compiler cannot prevent accidentally passing one as the other. Arnaldi’s preferred approach is branded types, with constructors and validation at the edge. But if a model can write `as UserId`, it may satisfy the type checker without validating anything. So the rules need to prevent the cast and force the model toward constructors or schema validation.
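Branded identifiers can be sketched in plain TypeScript. Effect provides its own branding through `Brand` and `Schema`. This dependency-free version is a swapped-in illustration, and the UUID format is a hypothetical choice. A type guard narrows the validated string, so the constructor needs no `as` cast and a ban on `as` can stay absolute.

```typescript
// Phantom property that only exists at the type level.
declare const brand: unique symbol;
type Branded<T, B extends string> = T & { readonly [brand]: B };

type UserId = Branded<string, "UserId">;

type Result<T> = { ok: true; value: T } | { ok: false; error: string };

const UUID_PATTERN = /^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}$/i;

// The type guard is the only way a plain string becomes a UserId.
function isUserId(raw: string): raw is UserId {
  return UUID_PATTERN.test(raw);
}

// Constructor meant for the edge (request decoding), not for handlers.
function makeUserId(raw: string): Result<UserId> {
  return isUserId(raw) ? { ok: true, value: raw } : { ok: false, error: `invalid UserId: ${raw}` };
}

// Handlers accept only the branded type: loadUser("abc") does not compile.
function loadUser(id: UserId): string {
  return `loading user ${id}`;
}
```

With this split, the only path to a `UserId` runs through validation. In an Effect HTTP API, that decoding would live in the request schema rather than in the handler.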

He described another failure mode: the model may accept API input as plain strings and then call constructors inside handlers, defeating edge validation. In that case, he would write rules that push validation into schemas and flag constructors inside handlers as suspicious.

The style is reactive, and intentionally so. Arnaldi compared it to reading a rule book with strangely specific prohibitions: those rules exist because somebody did the thing. In an AI codebase, the same dynamic applies. A rule such as “avoid custom wrappers that call Layer.build in tests” exists because the model created that wrapper.

The same principle applied to AGENTS.md. The first generated file listed commands, including watch-mode commands. Arnaldi immediately removed or constrained those, because agents will run watch tasks or dev servers and get stuck. He added an explicit instruction never to run commands in watch mode, including bun run test:watch or a dev server.

The result is not a single perfect prompt. It is a feedback loop. The model proposes code. Tests, diagnostics, lint rules, and human review reveal bad tendencies. The repository is updated so the model is less likely to repeat them.

The todo API worked because the agent had source, patterns, and tests

With the Effect source cloned and HTTP and SQL patterns written, Michael Arnaldi asked the agent to implement a todo API. The API needed to create todos with title and description, update todos, flag todos as done or not done, and list todos. He asked the model to discuss the plan and create plans/todo-api.md.

The model generated a SQLite-backed implementation using Effect SQL. In client.ts, it created a live SQL layer with a .todos.db file and a migrations layer using SqliteMigrator.layer and SqliteMigrator.fromRecord, including a 1_create_todos migration. Arnaldi said the layer looked basically correct and that inline migrations were a valid choice, but he noticed duplication.

In the HTTP code, the model created schemas including TodoNotFound as a Schema.TaggedError with an id field and a message getter, and a Todo schema using Schema.Struct with fields such as id, title, and done. Arnaldi called the result decent and noted that the model correctly added a schema annotation so TodoNotFound would map to a 404. But he also saw design choices he would tighten later: identifiers were plain strings rather than branded types, and the model used Schema.Struct where he personally prefers classes. He said he might later add a best-practice file preferring classes or a lint rule prohibiting Schema.Struct in specific files.

That is how he evaluated the generated code. He did not demand perfection from the first pass. He looked for whether the code followed the extracted patterns closely enough, type checked, ran, and exposed the expected functionality. Then he identified the next back-pressure points.

The agent also wrote tests. Some of them passed unexpectedly when Arnaldi ran bun run test. But he disliked the test structure. The model had created helper functions that manually built and provided layers, wrapping effects in Effect.scoped. Arnaldi said this pattern is used in parts of the Effect codebase to test layer internals, but it was unnecessary for application-level tests. Even without deep Effect knowledge, he said, the repeated wrapper “stinks.”

His preferred test pattern was it.layer from @effect/vitest: provide a layer directly to the test rather than building custom wrappers around layer provisioning. He asked the agent to clean up the tests, move utilities into their own folder, and use it.layer.

The cleanup produced a new patterns/testing.md, which captured the testing guidance for future work: use @effect/vitest for Effect-based tests, use it.effect, use it.layer, and avoid custom wrappers around Layer.build. Arnaldi then updated AGENTS.md to reference all pattern files. In speech he referred to .patterns at that point, while earlier prompts and outputs had used patterns/; the important operational step was that the pattern documents were registered where the agent could find them.

The API itself ran. He asked the agent to add a start command and identify where to find the OpenAPI docs. Running bun run start brought up Swagger UI for the Todo API at localhost:3000/docs. Arnaldi then checked the generated OpenAPI spec and said it showed schemas properly.

The todo API was not presented as production-ready. It had a local SQLite file that needed to be ignored, some duplication, plain-string identifiers, and testing patterns that needed correction. But starting from an empty repository, the agent produced a functioning Effect-based HTTP API with OpenAPI docs after being given the library source and a pattern-extraction workflow.

Good agent work is repository preparation, not zero-shot luck

Arnaldi challenged a common way of judging models: whether they produce good output by default on the first prompt. He said the zero-to-one problem matters for the first hours or days of a project, but it is not the core measure of usefulness. A model is useful when it can operate in a large-scale codebase using its patterns and continue to work without failing at scale.

That shifts the programmer’s job. If the job is no longer primarily to write code by hand, then the job becomes setting up repositories so models can act well inside them.

For a brownfield codebase, Arnaldi said his first step is to let the model explore the code, then clone the main libraries and frameworks the project uses. If the project uses TanStack Router, clone TanStack Router. If it uses Svelte, clone Svelte. Then ask the model to extract best-practice files. Once those local references exist, the model becomes more effective because it can imitate real code rather than rely on stale or generic memory.

He also uses semantic code search in experiments because models often reimplement existing features when they cannot find them. Semantic search helps the model locate similar code instead of producing duplicates. An audience member mentioned Knip as another tool for catching unused or duplicated code paths, especially after refactors where generated code leaves dead files or unused exports behind. Arnaldi accepted that as useful information, though he did not integrate it into the project.

The same principle applies to commands. AGENTS.md should list available project commands such as bun run typecheck and test commands, but should also say which commands not to run. In this project, watch modes and dev servers were explicitly forbidden for the agent because they can block a session. Arnaldi liked that GPT-5.4 generated a relatively concise AGENTS.md; he said Opus would likely have written hundreds of lines for the same task.

He was clear that these files evolve. The initial AGENTS.md is only a prototype. As the model creates bad patterns, new rules are added. As the project adopts more libraries or subsystems, new pattern files are created. As commands change, the command list is updated.

He mentioned slash commands and skills as ways to automate repeated tasks such as “create a new pattern.” OpenCode and Claude Code both allow slash commands. Skills can be more portable across teams where different developers use Cursor, OpenCode, Claude Code, or other agents. Arnaldi is skeptical of skills as a universal answer (putting a skill for every Next.js internal into context will pollute the model rather than make it competent), but he sees them as useful for bounded tasks like pattern generation.

Model choice changes the harness, the prompt, and the guardrails

Michael Arnaldi’s comments on model choice were pragmatic rather than tribal. He said GPT and Claude-family models are both exceptional, and sometimes one will fail where the other succeeds. For many coding tasks, he considers them broadly comparable.

He currently uses OpenAI models more often because he wants freedom in the harness, meaning the CLI or integration layer he uses to work with the model. His examples were OpenCode and his own TypeScript files that interact with the AI SDK. Arnaldi said Anthropic prohibits the usage he wants in those contexts, and that this is why he switched more of his work to OpenAI models. Before those restrictions, he used Opus more often. He still sees areas where Opus is better; UI work was his example.

The operational consequence is that repository instructions and guardrails need to account for model behavior. GPT tends to ask for confirmation more often, especially on complex tasks. After producing SQL research and updating the HTTP plan, the model asked whether to continue implementing. Arnaldi found that annoying and said Opus would likely have continued on its own. But the trade-off is that Opus sometimes takes shortcuts. If a single `any` slips into a codebase, Arnaldi said, Opus may repeat `as any` everywhere because it has discovered that the pattern compiles.

That is why his mature Opus-heavy projects needed large lint configurations. The lint file becomes a wall against the model’s shortcuts.

Prompt tone also differs. Arnaldi said he does not use uppercase imperatives with GPT because it can become passive and overly agreeable. With Claude, uppercase can focus attention. This was part of his argument that universal pattern files are hard to maintain: the same instruction style may not work equally well across model families.

He did not present a deterministic routing rule such as “use GPT for backend and Claude for frontend.” One audience member suggested programmatically falling back from one model to another if it fails or takes too long. Arnaldi said that could be done. His actual practice is simpler: if one model drives him nuts, he tries the other.
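The audience suggestion can be sketched without committing to any provider SDK. In this hypothetical version, `ModelCall` stands in for a real client call and each candidate gets a fixed time budget. A thrown error or a timeout moves on to the next model. The slow call is not cancelled; a production version would pass an `AbortSignal` through to the client.

```typescript
// Stand-in for a real model client call; nothing here is a provider API.
type ModelCall = (prompt: string) => Promise<string>;

interface Candidate {
  name: string;
  call: ModelCall;
}

// Rejects if `work` does not settle within `ms`, and always clears the timer.
function withTimeout<T>(work: Promise<T>, ms: number): Promise<T> {
  let timer: ReturnType<typeof setTimeout> | undefined;
  const timeout = new Promise<never>((_, reject) => {
    timer = setTimeout(() => reject(new Error(`timed out after ${ms} ms`)), ms);
  });
  return Promise.race([work, timeout]).finally(() => clearTimeout(timer));
}

// Tries each candidate in order; a thrown error or a timeout moves on to the next one.
async function completeWithFallback(
  candidates: readonly Candidate[],
  prompt: string,
  budgetMs: number,
): Promise<{ model: string; text: string }> {
  const failures: string[] = [];
  for (const candidate of candidates) {
    try {
      const text = await withTimeout(candidate.call(prompt), budgetMs);
      return { model: candidate.name, text };
    } catch (err) {
      failures.push(`${candidate.name}: ${err instanceof Error ? err.message : String(err)}`);
    }
  }
  throw new Error(`all models failed (${failures.join("; ")})`);
}
```

Arnaldi's own practice stays manual. The sketch only shows that the suggestion is straightforward to automate once each model sits behind the same call signature.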

Evaluation is necessary, but style still needs humans

Michael Arnaldi connected pattern generation and model-specific context to a larger research problem: how to evaluate whether generated Effect code is good.

Effect’s team is experimenting with evals. The setup he described uses human-written best-practice code and generated code, then asks an LLM judge to compare them and score whether the generated code is too different. He did not present this as a solved or especially elegant method. It is part of their research, especially if they pursue fine-tuning an open-source model on Effect patterns and need reinforcement-learning evaluations.
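The eval shape can be sketched as a small harness. Everything here is hypothetical. `Judge` stands in for an LLM call that compares generated code to a human-written reference. The 0 to 1 score scale and the 0.7 threshold are arbitrary. The synchronous signature keeps the sketch short, where a real judge would be asynchronous.

```typescript
interface EvalCase {
  id: string;
  reference: string; // human-written best-practice code
  generated: string; // model output for the same task
}

// Stand-in for an LLM judge: 1 means "matches the reference's approach", 0 means "too different".
type Judge = (c: EvalCase) => number;

interface EvalReport {
  passed: string[];
  flagged: { id: string; score: number }[];
  mean: number;
}

function runEvals(cases: readonly EvalCase[], judge: Judge, threshold = 0.7): EvalReport {
  const passed: string[] = [];
  const flagged: { id: string; score: number }[] = [];
  let total = 0;
  for (const c of cases) {
    // Clamp so a misbehaving judge cannot push scores outside 0..1.
    const score = Math.min(1, Math.max(0, judge(c)));
    total += score;
    if (score >= threshold) passed.push(c.id);
    else flagged.push({ id: c.id, score });
  }
  return { passed, flagged, mean: cases.length === 0 ? 0 : total / cases.length };
}
```

The reference code and the threshold are where a team's stylistic opinions enter the score. The harness cannot make those opinions objective.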

The hard part is that many quality judgments are not binary. Code that type checks is better than code that does not; that is a relatively safe property to evaluate. But questions of file structure, verbosity, and style are harder. Is terse code better? It depends. Is verbose code better? It depends. If two file structures both convey the meaning, a human may still prefer one over the other. Among 100 humans, Arnaldi suggested, the preference may split 80/20 rather than converge on an absolute truth.

This creates a problem for official Effect patterns. The evals necessarily encode the team’s opinions about what is good. Those opinions may be reasonable, but they are not universal truth.

An audience member asked about testing patterns directly. Could a pattern include executable snippets or references that can be checked? Arnaldi said this could work in some cases, perhaps with an explicit tag such as “TS execute this” for snippets intended to run. But many pattern files contain illustrative file names or examples that are not meant as real references. A naïve test would fail because it treated an example as a concrete path.
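An explicit marker makes that distinction mechanical. The sketch is hypothetical. It treats a fenced block as executable only when its info string contains the word `execute` (for example `ts execute`), and leaves unmarked, illustrative blocks alone. It handles only unindented backtick fences. The fence string is built at runtime so the example can itself live inside Markdown.

```typescript
// Built at runtime so this example can itself sit inside a Markdown code fence.
const FENCE = "`".repeat(3);

interface Snippet {
  lang: string;
  code: string;
  line: number; // 1-based line of the opening fence
}

interface OpenBlock {
  lang: string;
  executable: boolean;
  line: number;
  body: string[];
}

// Collects fenced blocks whose info string contains `flag` (hypothetical marker, e.g. "ts execute").
// Handles unindented backtick fences only; unmarked blocks are treated as illustrative and skipped.
function executableSnippets(markdown: string, flag = "execute"): Snippet[] {
  const lines = markdown.split("\n");
  const snippets: Snippet[] = [];
  let open: OpenBlock | null = null;
  for (let i = 0; i < lines.length; i++) {
    const raw = lines[i] ?? "";
    const trimmed = raw.trimEnd();
    if (open === null) {
      if (trimmed.startsWith(FENCE)) {
        const info = trimmed.slice(FENCE.length).trim().split(/\s+/);
        open = { lang: info[0] ?? "", executable: info.includes(flag), line: i + 1, body: [] };
      }
    } else if (trimmed === FENCE) {
      if (open.executable) snippets.push({ lang: open.lang, code: open.body.join("\n"), line: open.line });
      open = null;
    } else {
      open.body.push(raw);
    }
  }
  return snippets;
}
```

The extracted snippets could then be written to temporary files and run through the project's `bun run typecheck`. Illustrative paths in unmarked blocks never reach the checker, so they cannot cause false failures.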

Another possibility is to run a command that asks a model to write code using a pattern, then test and evaluate the output. Arnaldi said that moves toward evals and makes sense at scale, but on a per-project basis it can become too much overhead. You may spend more time curating evaluations than writing or shipping code.

For the Effect repository itself, he said the team is thinking about running evals daily and generating reports. That would let them see whether changes to the library, documentation, or examples improve or degrade model output.

Workflows matter more when AI makes operations long-running

A simple todo API does not expose the failure modes that made Michael Arnaldi want to discuss Effect Cluster and workflows. A more realistic backend might include authentication and registration. Registration commonly requires two unrelated operations: writing something to a database and sending an email, or sending an email code and waiting for confirmation. There is no ordinary database transaction that spans those unrelated operations. If the server crashes between them, it may be hard to know whether the email was sent.

Arnaldi pointed to the common user-facing message “if the email did not arrive in 30 minutes, please retry” as a symptom of this kind of design. His objection was practical: the system is forcing the user to retry because the application cannot guarantee that both operations happened.

Queues are one way to address this class of problem. Workflows are another. Arnaldi said Effect has a workflow solution implemented on top of Effect Cluster, where multiple Bun, Node, or other runtime instances can cooperate so that, in his description, once a procedure starts the system is designed to carry it through even if one server crashes and the work moves elsewhere. He compared the category to systems such as Temporal and Inngest. He also said this part of Effect remains unstable, but is expected to become stable very soon.
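The core mechanism behind such engines can be sketched as step journaling. This is an in-memory illustration, not Effect's workflow API. Each step's result is recorded under a stable key, and re-running the workflow after a crash replays recorded steps instead of executing them again. A real engine persists the journal and can resume on another node. The window between a step finishing and its result being recorded still requires the step itself to be idempotent, for example through an idempotency key sent to the email provider. Steps return strings only to keep the sketch free of casts.

```typescript
// Completed step results, keyed by workflow and step name. A real engine stores this durably.
type Journal = Map<string, string>;

function step(journal: Journal, workflowId: string, name: string, run: () => string): string {
  const key = `${workflowId}/${name}`;
  const recorded = journal.get(key);
  if (recorded !== undefined) return recorded; // replay: do not repeat the side effect
  const result = run();
  journal.set(key, result); // record before moving to the next step
  return result;
}

interface RegistrationDeps {
  insertUser: (email: string) => string; // returns the new user id
  sendEmail: (to: string) => string; // returns a message id
}

// Two unrelated side effects that no single database transaction can span.
function register(journal: Journal, email: string, deps: RegistrationDeps): string {
  const workflowId = `register:${email}`;
  const userId = step(journal, workflowId, "insert-user", () => deps.insertUser(email));
  const messageId = step(journal, workflowId, "send-email", () => deps.sendEmail(email));
  return `${userId}:${messageId}`;
}
```

When the first attempt crashes before the email step completes, the retry skips the already-recorded user insert and runs only the missing step. The user no longer has to do that bookkeeping by hand.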

His recommended workflow for learning those Effect subsystems was the same as for HTTP and SQL: ask the model to explore the Effect repository, extract patterns for Effect Cluster and workflows, write those patterns into the project, and then implement from them.

He also argued that AI features make workflows relevant to smaller systems. Historically, workflow engines were more obviously valuable at larger companies because at scale every edge case happens regularly. With AI, response times are longer. A normal web request might take 10 milliseconds, a window in which a server is unlikely to fail. An LLM-backed process may take a minute, and many more things can go wrong during that minute. Even with only 10 users, long-running AI operations can produce disruptions that shorter request-response systems rarely expose.
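A back-of-the-envelope model makes the point concrete. Assume, purely for illustration, that crashes arrive at a constant rate of one per server per day, modeled as a Poisson process. The probability of at least one crash during an operation of length t is then 1 - e^(-rate * t). The rate is a hypothetical figure, not a measurement.

```typescript
// Probability of at least one crash during a window, assuming a constant crash rate.
// -expm1(-x) computes 1 - e^(-x) accurately even when x is tiny.
function crashProbability(crashesPerSecond: number, durationSeconds: number): number {
  return -Math.expm1(-crashesPerSecond * durationSeconds);
}

const rate = 1 / 86_400; // assumed: one crash per server per day
const webRequest = crashProbability(rate, 0.01); // 10 ms request
const llmCall = crashProbability(rate, 60); // one-minute LLM-backed operation

console.log(webRequest.toExponential(2), llmCall.toExponential(2), Math.round(llmCall / webRequest));
```

Under this assumption, the risk scales almost linearly with duration. The one-minute operation is roughly 6,000 times more likely to be interrupted than the 10 ms request (60 s / 10 ms = 6,000). An event that is negligible per request becomes routine once many long operations are in flight.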

In Arnaldi’s view, this is part of Effect’s appeal: workflows, clustering, AI integrations, and integrations such as Discord and Slack compose within the same system. The models, when given the source and patterns, are “pretty decent” at using it.