Multi-Agent Software Systems Need Contracts and Handoffs to Run for Days

Factory’s Luke Alvoeiro argues that long-running software agents will not be built by stretching chat sessions, but by organizing agents into roles with explicit contracts, handoffs and validation. In a talk on Factory’s Missions system, he presents a three-part architecture — orchestrator, workers and validators — designed to run software work for hours or days while humans supervise scope and acceptance rather than every step. The case rests on Factory’s production experience, including missions Alvoeiro says have run as long as 16 days, and on a claim that serial execution, adversarial verification and model selection by role matter more than default parallelism.

Missions are built for days, not long chats

Factory’s strongest claim is operational: Luke Alvoeiro says its longest Mission has run for 16 days, and Factory believes the system can run for 30 days. The point is not that a single agent session can stay coherent for that long. It is that long-running software work needs an ecosystem of agents, structured handoffs, validation checkpoints, and shared state.

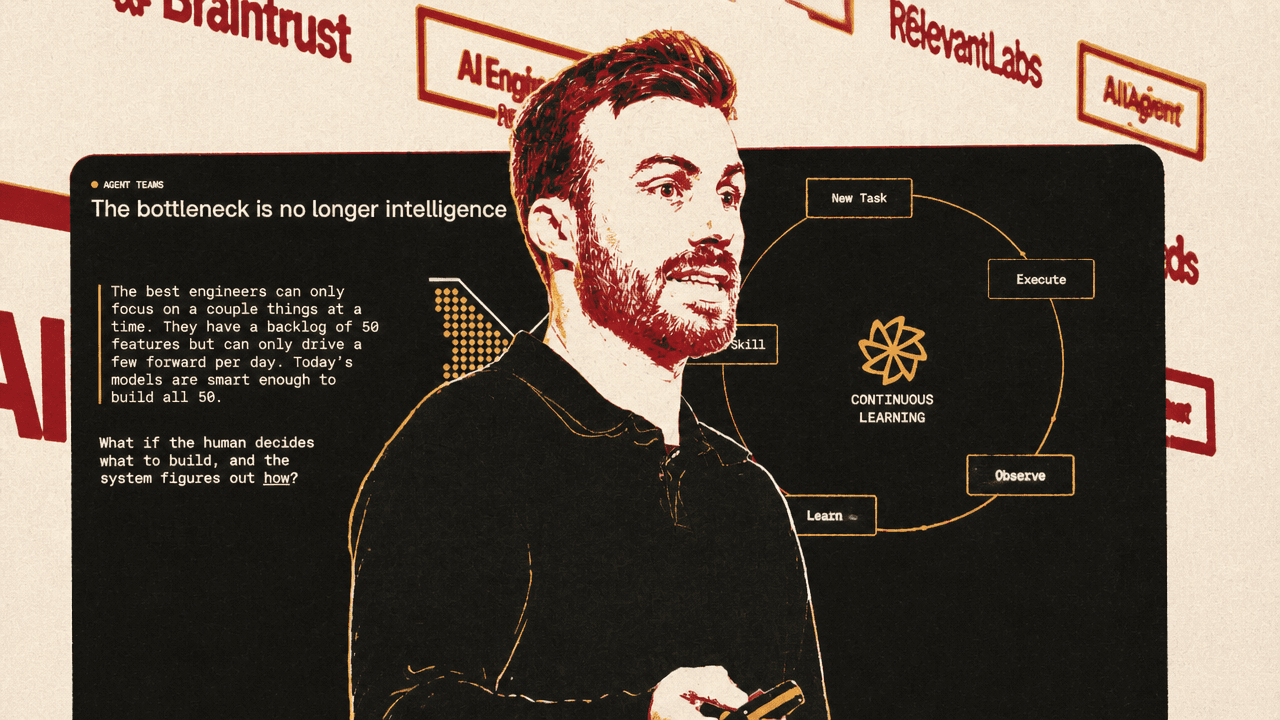

That architecture starts from Alvoeiro’s premise that software engineering is no longer limited mainly by whether models are intelligent enough to attempt the backlog. It is limited by whether humans have enough attention to supervise what models can already try to do.

A strong engineering team may have dozens of features or fixes in view, but every task still demands review, prioritization, and intervention. Alvoeiro’s question is what changes if the human decides what to build and the system figures out how to build it. In his formulation, an agent system should be able to work for hours or days while the human returns to completed work rather than continuously steering each step.

That is the design target behind Factory’s “Missions”: not a larger prompt, a longer context window, or a more patient chatbot, but a system for decomposing work, enforcing verification, and carrying state across many agent runs.

Five multi-agent patterns, four composed into Missions

The taxonomy begins with a complaint: multi-agent systems are hard to reason about because frameworks use different terminology and encode different assumptions about what works. Luke Alvoeiro proposes five patterns.

Delegation is the simplest: one agent spawns another for a subtask. Creator-verifier separates building from checking; the agent that wrote the code is invested in the implementation, while a fresh agent with fresh context is more likely to find problems. Alvoeiro compares the pattern to human code review.

Direct communication is peer-to-peer messaging between agents without a coordinator. Alvoeiro treats it cautiously because state can fragment across conversations and the system lacks a single source of truth. Negotiation applies when agents coordinate around a shared resource, such as an API or a section of the codebase; he says the best case is positive-sum coordination rather than adversarial conflict. Broadcast is one agent sending shared status, context, or constraints to many others, which he calls critical for long-running coherence.

Missions combines four of those patterns: delegation, creator-verifier, broadcast, and negotiation. The user describes a software goal, scopes it through conversation, approves a plan, and then Missions handles execution. The omitted pattern is direct peer-to-peer communication; the architecture Alvoeiro describes relies instead on shared state and structured handoffs.

| Strategy | How Alvoeiro defines it | Role in Missions |
|---|---|---|
| Delegation | One agent spawns another for a subtask. | The orchestrator spawns workers and research sub-agents. |
| Creator-verifier | One agent builds; a different agent checks. | Implementation and validation are always separate agents. |
| Broadcast | One agent sends shared context or status to many. | The validation contract and shared mission state are referenced by agents. |
| Negotiation | Agents coordinate over shared resources or decisions. | The orchestrator reviews handoffs and decides whether to accept, rescope, or create follow-up work. |
| Direct communication | Agents talk peer-to-peer without a coordinator. | Presented as hard to get right because state fragments; not part of the stated Missions composition. |

The three roles are planner, implementer, and adversarial checker

Missions are organized around three roles: the orchestrator plans, workers implement, and validators verify. For Luke Alvoeiro, the separation among those roles is the foundation for making multi-day agent work possible.

The orchestrator is the user’s sounding board during scoping. It asks strategic questions, looks for unclear requirements, and produces a plan with features, milestones, and a validation contract. That contract defines what “done” means before any code is written.

Workers receive feature assignments with clean context. They read the spec, implement the feature, commit through git, and hand off to the next step. The point of committing through git is that each later worker inherits a clean slate and a working codebase rather than a long, degraded conversational context.

Validators are separate from workers and are designed to be adversarial. Missions still runs familiar checks such as tests, type checking, linting, and code review. But Alvoeiro says that is insufficient for long-running autonomy. The system also validates behavior: whether the work functions end-to-end, not only whether the code appears correct.

Tests written after implementation don't catch bugs. They confirm decisions.

That is why the validation contract is written during planning, before implementation. In Alvoeiro’s account, an agent that writes a feature and then writes tests for it will often produce tests shaped by the implementation rather than by the intended behavior. The tests may pass and show coverage, but they mostly confirm the decisions already made. A validation contract defines correctness independently. For a complex project, he says it can contain hundreds of assertions, with each feature assigned one or more assertions and the sum of all features expected to cover the full contract.
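The coverage rule in that description (every feature carries at least one assertion, and the features together cover the whole contract) can be checked mechanically. The following is a minimal sketch of such a check, not Factory's implementation: the `Assertion` and `Feature` types, the function names, and the sample contract are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Assertion:
    """One behavioral claim, written during planning before any code exists."""
    id: str
    description: str


@dataclass
class Feature:
    """A unit of work plus the ids of the contract assertions it must satisfy."""
    name: str
    covers: set[str] = field(default_factory=set)


def uncovered_assertions(contract: list[Assertion], features: list[Feature]) -> set[str]:
    """Ids of contract assertions that no feature has been assigned."""
    claimed = set().union(*(f.covers for f in features))
    return {a.id for a in contract} - claimed


def features_without_assertions(features: list[Feature]) -> list[str]:
    """Names of features that were not given at least one assertion."""
    return [f.name for f in features if not f.covers]


# Hypothetical contract for a small chat application.
contract = [
    Assertion("auth-1", "A registered user can log in with valid credentials"),
    Assertion("auth-2", "Login with a wrong password shows an error"),
    Assertion("msg-1", "A sent message appears in the channel for other members"),
]
features = [
    Feature("login", {"auth-1", "auth-2"}),
    Feature("send-message"),  # the plan forgot to attach msg-1 here
]

print(uncovered_assertions(contract, features))   # {'msg-1'}
print(features_without_assertions(features))      # ['send-message']
```

Because the assertions exist before any implementation does, a gap like the one above shows up at planning time rather than after a worker has already shaped tests around its own code.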

After each milestone, Missions runs two validators. The scrutiny validator performs the more traditional checks: test suite, type checking, linting, and dedicated code review agents for each completed feature in the milestone. The user-testing validator acts more like a QA engineer. It launches the application, interacts with it through computer-use-like capabilities, fills out forms, checks pages, clicks buttons, and verifies functional flows holistically.

Alvoeiro says the user-testing validator takes significantly longer because it interacts with a live application. He also says most of a mission’s wall-clock time is spent waiting for this kind of real-world execution rather than generating tokens. The validators have not seen the code before, which is the point: they are not invested in the implementation.

Handoffs make the system coherent across days

Validation alone is not enough for work that lasts days. The system also has to prevent context from disappearing between agents. Luke Alvoeiro describes structured handoffs as the mechanism for keeping agents coherent “over days, not just minutes.”

A worker does not simply report that it is done. It fills out a structured handoff with what was implemented, what was left undone, commands run, exit codes, issues discovered, and whether it followed the procedures the orchestrator set.
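One way to picture such a handoff is as a typed record rather than free text. The sketch below is an assumption about shape only: the field names mirror the items listed above, but the `Handoff` and `CommandResult` classes and the `open_items` helper are hypothetical and are not taken from Factory's system.

```python
from dataclasses import dataclass, field


@dataclass
class CommandResult:
    """A command the worker ran and the exit code it returned."""
    command: str
    exit_code: int


@dataclass
class Handoff:
    """Structured report a worker fills out when it finishes a feature."""
    feature: str
    implemented: list[str]
    left_undone: list[str] = field(default_factory=list)
    commands: list[CommandResult] = field(default_factory=list)
    issues: list[str] = field(default_factory=list)
    followed_procedures: bool = True

    def open_items(self) -> list[str]:
        """Everything a reviewer would need to address before moving on."""
        items = [f"undone: {x}" for x in self.left_undone]
        items += [f"issue: {x}" for x in self.issues]
        items += [
            f"failed command: {c.command} (exit {c.exit_code})"
            for c in self.commands
            if c.exit_code != 0
        ]
        if not self.followed_procedures:
            items.append("procedures not followed")
        return items


# Hypothetical handoff for a login feature.
handoff = Handoff(
    feature="login",
    implemented=["login form", "session cookie"],
    left_undone=["password reset"],
    commands=[CommandResult("pytest tests/auth", 1), CommandResult("ruff check .", 0)],
)

for item in handoff.open_items():
    print(item)
# undone: password reset
# failed command: pytest tests/auth (exit 1)
```

Recording exit codes alongside commands means a later agent does not have to trust a summary such as "tests pass"; the evidence travels with the claim.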

The handoff is also part of the self-healing loop. Errors are caught at milestone boundaries. Corrective work is scoped. The mission pulls itself back on track not by assuming that agents remember what happened, but by forcing the relevant facts to be written down and then addressed.

This is also why Alvoeiro rejects default parallelism for software implementation. The obvious expectation is that 10 agents running at once should produce 10 times the throughput. Factory tried that, he says, and it did not work well for software development tasks. Agents conflicted, stepped on each other’s changes, duplicated work, made inconsistent architectural choices, and consumed tokens while coordination overhead ate the apparent speed gains.

Missions instead executes features serially. One worker or validator runs at a given time. Parallelism is reserved for operations that cannot conflict: codebase search, API research, documentation reads, and validator-side read-only reviews such as code review. Alvoeiro calls this “serial execution with targeted internal parallelization.” It is slower on paper, but he says the error rate drops dramatically, and for multi-day runs correctness compounds.
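The scheduling idea can be sketched in a few lines: features run one at a time, and only read-only lookups fan out in parallel. This is an illustrative sketch under stated assumptions, not Factory's scheduler. The `run_mission` function, its parameters, and the stand-in research callables are hypothetical; real workers would be agent runs rather than plain functions.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def run_mission(
    features: list[str],
    research_tasks: dict[str, list[Callable[[], str]]],
    implement: Callable[[str, list[str]], str],
) -> list[str]:
    """Run features strictly in order; parallelize only read-only research."""
    results = []
    for feature in features:  # serial: only one writer touches the codebase
        tasks = research_tasks.get(feature, [])
        with ThreadPoolExecutor(max_workers=4) as pool:
            # Parallel: these callables only read (search, docs), so they
            # cannot conflict with each other. map() preserves task order.
            findings = list(pool.map(lambda task: task(), tasks))
        results.append(implement(feature, findings))
    return results


# Hypothetical stand-ins for research sub-agents and a worker.
order = []


def implement(feature: str, findings: list[str]) -> str:
    order.append(feature)
    return f"{feature}: used {len(findings)} findings"


out = run_mission(
    ["auth", "channels"],
    {"auth": [lambda: "OAuth docs", lambda: "existing session code"]},
    implement,
)
print(out)    # ['auth: used 2 findings', 'channels: used 0 findings']
print(order)  # ['auth', 'channels']
```

The thread pool is closed before `implement` is called, so no research task is still running while the codebase is being modified.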

Mission Control turns supervision into operations

A standard chat interface does not fit a system that runs for days. The user needs a quick view of project completion, budget consumption, the active worker, handoff summaries, and how the system is changing course after discoveries.

Factory built Mission Control as that dedicated view. In Luke Alvoeiro’s description, it is an interface for asynchronous oversight: the user can monitor the mission as a project manager, redirect it when necessary, or close the laptop and return later.

The displayed Mission Control view shows an active feature, milestone, worker model, procedures, expected behavior, elapsed time, input and output token counts, feature status, validation logs, and controls such as features, workers, pause, mission directory, and quit to orchestrator. The example on screen is a terminal-like interface for a mission in a development directory, with an active authentication feature, a Claude 3.5 Sonnet worker profile, feature progress, and a log that alternates between worker starts, worker completions, and validation milestones.

| Mission Control area | What it exposes |
|---|---|
| Current execution | Active feature, milestone, worker, procedures, and expected behavior. |
| Progress | Completed and pending features, plus a progress log of workers and validators. |
| Cost and runtime | Elapsed time and token counts, including input, cached, and output tokens. |
| Operator controls | Options to inspect features and workers, pause, open the mission directory, or return to the orchestrator. |

The interface matters because the system changes the nature of supervision. The human still scopes, approves, and can redirect. But the running work is represented as features, milestones, workers, validators, logs, and handoffs rather than a single stream of conversational turns.

Model choice becomes an architectural skill

Missions assumes that the right model is assigned to the right role. Planning, implementation, and validation place different demands on a model.

Planning benefits from slow, careful reasoning: strategic questions and constraint analysis. Implementation benefits from code fluency, creativity, fast generation, and tool use. Validation benefits from strict instruction following. Luke Alvoeiro says no single model or provider is best at all three.

Inside Factory, he says, they call the necessary skill “droid whispering”: the ability to mentally model how different LLMs interact, where they fail, how those failures compound over a multi-day run, and which model should sit in which seat. He credits Theo, the engineer who built the Missions prototype, with the model defaults, but says users are encouraged to customize them for the needs of a project.

One example is validation. Alvoeiro suggests using a different model provider for validation to reduce the chance that the validator shares the same training-data bias as the model that implemented the feature. That is part of the claimed advantage of a model-agnostic architecture. If a system is locked into one model family, it is constrained by that family’s weakest capability. If models specialize, the ability to place the right model in the right role becomes a compounding advantage.
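Role-based assignment lends itself to a small configuration check. The sketch below assumes a mapping from role to a provider and model pair and treats the cross-provider suggestion as a warning rather than a hard rule. The `ModelChoice` type, the `check_assignment` function, and the provider and model names are all hypothetical placeholders, not real products or Factory defaults.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelChoice:
    """Which provider and model sit in a given seat."""
    provider: str
    model: str


REQUIRED_ROLES = ("orchestrator", "worker", "validator")


def check_assignment(roles: dict[str, ModelChoice]) -> list[str]:
    """Return human-readable problems with a role-to-model mapping."""
    problems = [f"no model assigned to {r}" for r in REQUIRED_ROLES if r not in roles]
    worker, validator = roles.get("worker"), roles.get("validator")
    if worker and validator and worker.provider == validator.provider:
        # Same provider for building and checking: the validator may share
        # the implementer's blind spots.
        problems.append("validator shares a provider with the worker")
    return problems


# Placeholder names; substitute whatever models a project actually uses.
roles = {
    "orchestrator": ModelChoice("provider-a", "planner-model"),
    "worker": ModelChoice("provider-a", "coder-model"),
    "validator": ModelChoice("provider-a", "checker-model"),
}
print(check_assignment(roles))
# ['validator shares a provider with the worker']

roles["validator"] = ModelChoice("provider-b", "checker-model")
print(check_assignment(roles))
# []
```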

He also says the structure can compensate for models that are not frontier-level. Validation contracts and milestone checkpoints allow Missions to run successfully even with open-weight models, in his account, because the architecture supplies discipline around the model’s work.

A Slack-clone mission shows where the time and tokens go

Factory’s production example is a Slack clone. The dashboard reports an 18.5-hour total runtime, 185 total agent runs, and 778.5 million total tokens. It breaks time into orchestration, implementation, and validation, and tokens by type and role.

| Measure | Value shown |
|---|---|
| Total runtime | 18.5 hours |
| Orchestration time | 0:30 (h:mm), 2.3% |
| Implementation time | 9:06 (h:mm), 49.5% |
| Validation time | 8:14 (h:mm), 37.2% |
| Agent runs | 185 total runs |
| Total tokens | 778.5M |
| Token split by type | 30.1M input, 744.9M cache read, 3.4M output |
| Token split by role | 29.2M orchestration, 455.5M implementation, 293.8M validation |
| Code size | 35.5k lines |
| Test share | 52.0% tests |
| Statement coverage | 89.25% covered |

Luke Alvoeiro summarizes the example as implementation accounting for about 60% of the time and tokens. The displayed dashboard gives the more granular view: implementation is shown as 9:06 hours, or 49.5% of runtime, and 455.5 million of 778.5 million total tokens, or about 58.5%. Validation is also a major share of the run, at 8:14 hours and 293.8 million tokens, about 37.7% of the total.

Alvoeiro says validation “never succeeds on the first go” in these missions. Follow-up features are almost always created. That is his evidence for the value of the QA loop: the system is not expected to get everything right in one pass; it is designed to discover failures, create corrective work, and continue.

The Slack-clone mission ended with roughly half of the code lines in tests and about 90% statement coverage, according to the dashboard and Alvoeiro’s narration. He also notes that Factory relies heavily on prompt caching to offset the cost of such long tasks. In the displayed token breakdown, cache reads dominate the token total: 744.9 million of 778.5 million tokens are cache reads.
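The token shares follow directly from the dashboard figures quoted above. The short calculation below uses only those reported numbers (in millions of tokens); the variable and function names are illustrative.

```python
# Dashboard figures from the Slack-clone mission, in millions of tokens.
TOTAL_M = 778.5
by_role = {"orchestration": 29.2, "implementation": 455.5, "validation": 293.8}
by_type = {"input": 30.1, "cache_read": 744.9, "output": 3.4}


def shares(parts: dict[str, float], total: float) -> dict[str, float]:
    """Each part as a percentage of the total, rounded to one decimal."""
    return {name: round(100 * value / total, 1) for name, value in parts.items()}


role_shares = shares(by_role, TOTAL_M)
type_shares = shares(by_type, TOTAL_M)

print(role_shares["implementation"])  # 58.5
print(role_shares["validation"])      # 37.7
print(type_shares["cache_read"])      # 95.7
```

On these figures, implementation is close to the "about 60%" of tokens in Alvoeiro's summary, and cache reads make up roughly 96% of all tokens, which is consistent with his remark that prompt caching carries much of the cost of a long run.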

He then broadens the examples. Users have applied Missions to overnight prototypes, large features, internal tools, major refactors and migrations, ML research, and codebase modernization intended to make agents more productive in the future.

The architecture is designed not to be obsolete after the next model release

One design pressure is the fear that a new model release will obsolete today’s agent architecture. Factory’s response, according to Luke Alvoeiro, was to make the system get better with model improvements rather than break because of them.

Almost all orchestration logic lives in prompts and skills rather than in a hard-coded state machine. Alvoeiro says the logic for decomposition and failure handling is contained in about 700 lines of text, and that changing four sentences can alter execution strategy significantly. Worker behavior is driven by skills that the orchestrator defines per mission, which allows customized behavior for the specific job.

The deterministic layer is intentionally thin. It handles bookkeeping: triggering validation, blocking progress when handoff issues have not been addressed, and enforcing the discipline of the process. In Alvoeiro’s phrasing, Missions ensures discipline while the models provide intelligence, using primitives the models already know how to work with, such as AGENTS.md and skills.
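A thin bookkeeping layer of this kind can be pictured as a single function that decides the next step from recorded state and contains no judgment of its own. The sketch below is a guess at the shape of such a gate, not Factory's code: `MissionState`, `next_action`, and the action strings are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class MissionState:
    """Recorded facts about the current milestone; no model judgment here."""
    milestone_features: list[str]
    completed: set[str] = field(default_factory=set)
    open_issues: dict[str, list[str]] = field(default_factory=dict)
    validated: bool = False


def next_action(state: MissionState) -> str:
    """Pick the next step purely from bookkeeping."""
    unresolved = sorted(f for f, issues in state.open_issues.items() if issues)
    if unresolved:
        # Block progress until handoff issues have been addressed.
        return "blocked: address handoff issues for " + ", ".join(unresolved)
    remaining = [f for f in state.milestone_features if f not in state.completed]
    if remaining:
        return f"run worker: {remaining[0]}"
    if not state.validated:
        # All features are done, so the milestone boundary triggers validation.
        return "run validators"
    return "milestone complete"


state = MissionState(milestone_features=["login", "signup"])
print(next_action(state))  # run worker: login

state.completed.add("login")
state.open_issues["login"] = ["password reset left undone"]
print(next_action(state))  # blocked: address handoff issues for login

state.open_issues["login"] = []
state.completed.add("signup")
print(next_action(state))  # run validators
```

How an issue gets resolved, whether by accepting it, rescoping, or creating follow-up work, stays with the orchestrating model; the gate only refuses to advance until something has been decided.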

That design choice matters because the goal is not to encode all judgment into software. The system leaves judgment-heavy work to models and uses deterministic code to maintain the workflow boundaries that make long-running autonomy possible.

What Factory thinks this changes for engineering teams

The final implication returns to the initial bottleneck: human attention. Luke Alvoeiro suggests the economics of engineering work change if a five-engineer team can move from roughly 10 concurrent workstreams to perhaps 30 with Missions. The exact figure is framed as a possibility, not a measured universal result.

The role of the engineer shifts toward architecture, product decisions, and genuinely hard problems, rather than the execution of every implementation step. Alvoeiro also argues that the codebase should end up in a better state than before: more end-to-end tests, more unit tests, more skills, more structure, and configuration that makes both agents and humans more productive later.

He closes by restating Missions as a composition. Delegation appears when the orchestrator spawns workers and research agents. Creator-verifier appears because implementation and validation are always separate. Broadcast appears through the shared validation contract and mission state. Negotiation appears when the orchestrator reviews handoffs and decides whether to accept, rescope, or create follow-up work.

But Alvoeiro says the strategies are not sufficient by themselves. The connective tissue is structured handoffs, model assignment by role, and an architecture that improves as models improve. He identifies open questions: how to parallelize Missions further so they run faster, and how to orchestrate missions themselves into more complex workflows. His production claim is direct: this works on real projects at scale today.