Vertical AI Teams Need Domain Experts Who Own Quality Loops

Chris Lovejoy of Notius Labs argues that vertical AI companies increasingly fail or succeed on whether they can turn domain judgment into product quality, not simply on access to better models. He proposes three operating models for that expertise: an Oracle who both judges and changes outputs, an Evaluator who defines and measures quality while engineers implement fixes, and an Architect who designs systems that improve from use. His case studies of Granola, Tandem and Anterior show why the right model depends on whether quality is subjective, measurable, or too variable for manual iteration.

Vertical AI is an organizational problem before it is a model problem

Chris Lovejoy argues that the hard part of building vertical AI products is no longer simply finding a better model. In his view, frontier models are good enough for many vertical applications; the gap is whether organizations can turn domain judgment into product quality.

Lovejoy’s background explains the emphasis. He trained as a medical doctor at the University of Cambridge, worked in the NHS, and moved into AI in 2018, building models and products across organizations including Tandem, Anterior, and Notius Labs. Across those roles, he says, the repeated challenge was how a company could “bake in” domain expertise in a way that produced a differentiated AI product.

His earlier thesis, presented at a previous AI Engineer conference, was that “the system for incorporating domain insights is more important than the sophistication of your models” or pipelines. He described this as the “last mile problem”: getting an AI product to understand the specific nuances of the workflows, use cases, and customers it is supposed to serve.

The follow-up question he kept receiving was organizational: if domain expertise matters, how should a company actually be built around it? What kind of expert should be hired? Where should that person sit? What authority should they have?

Lovejoy’s answer is that vertical AI teams should become “domain-native AI organizations.” In his framing, that means designing the company so that expert judgment is not an occasional consultation layer, but part of the system by which AI quality is assessed, improved, and eventually made self-improving.

The frontier models are good enough, but the gap is now how do organizations operationalize the expert judgment around them?

He points to the scale of the opportunity as one reason this question matters. Lovejoy says venture investors are treating vertical AI as a major opportunity, and cites Bessemer’s argument that vertical AI could move beyond vertical SaaS because AI addresses labor, which he frames as a multi-trillion-dollar market. But he also notes that broad enthusiasm has not yet translated into consistent success at scale. According to a Gartner-attributed statistic he presents, roughly 50% of generative AI projects were abandoned in 2025. Lovejoy’s interpretation is that many AI products are being built without deep enough understanding of the workflows they are automating or how domain experts would perform those processes.

He identifies three common mistakes: not hiring domain experts, or hiring them too late; hiring the wrong kind of domain expert; and hiring them without fitting them into the organization in a way that lets their expertise change the product.

AI quality cannot be separated from judgment

Lovejoy starts from a simple premise: appraising AI quality requires judgment, and good judgment requires domain expertise.

That expertise can be formal. A healthcare product may need doctors; a legal product may need lawyers. But Chris Lovejoy stresses that it can also be informal. The relevant question is not whether someone has a professional credential, but whether they understand what quality means for the product’s actual output and use case.

This matters because AI teams constantly make choices among approaches based on outputs. They need to decide what good looks like, what failure looks like, and what kind of product behavior is acceptable. In Lovejoy’s account, those decisions cannot be fully outsourced to generic model benchmarks or engineering intuition. They require the organization to contain, empower, and operationalize the right kind of judgment.

He is not making a blanket argument that every company must go hire a credentialed domain expert. In some cases, the right person may already be inside the organization, informally performing the role. The point is to recognize the function: someone must be able to assess output quality with the kind of taste, experience, and specificity that the product domain demands.

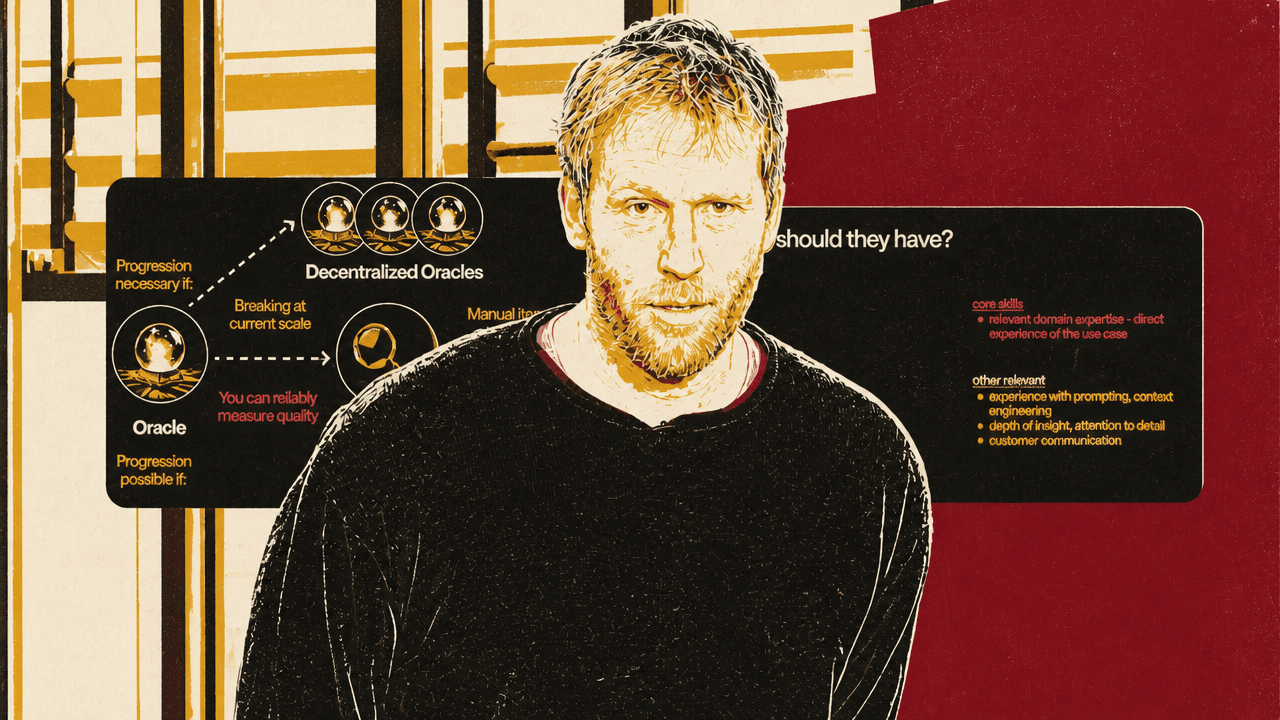

From there, Lovejoy proposes three organizational roles for domain expertise: the Oracle, the Evaluator, and the Architect.

The Oracle directly improves the application. The domain expert inspects AI outputs, uses the product, reads traces, identifies failures, and then changes the system. Often that means modifying prompts. It can also mean adding documents, tools, context, or other mechanisms that encode domain knowledge. In this model, the expert owns both sides of the loop: assessment and improvement.

The Evaluator separates assessment from implementation. The domain expert defines what quality means, builds or specifies systems to measure it, and gives engineers the information needed to improve the product. The measurement system might rely on user behavior, customer feedback, expert review, LLM-as-judge techniques, or internal review workflows. Engineers then do most of the implementation work, with the domain expert guiding what matters and where performance is failing.

The Architect steps back further. Instead of repeatedly assessing outputs and handing fixes to engineers, the domain expert designs a system that can assess and improve from usage with less human intervention inside the core loop. Lovejoy does not detail the mechanics in this talk, but describes the role as creating the architecture for automated improvement.

| Role | What the domain expert does | What changes in the product loop |

|---|---|---|

| Oracle | Reviews outputs, identifies failures, and directly modifies prompts, context, tools, or other product behavior. | Assessment and improvement both sit with the domain expert. |

| Evaluator | Defines quality, metrics, review systems, and failure modes so performance can be measured. | The expert owns assessment; engineers primarily implement improvements. |

| Architect | Designs systems that learn from usage and improve over time with less direct human intervention. | The expert designs the automated assessment-and-improvement system. |

The right model depends on measurability and iteration speed

The first decision is whether performance can be measured with meaningful metrics. If quality cannot be measured objectively, or if “taste” is central, Lovejoy says the organization should usually start with an Oracle approach. The domain expert’s judgment is the quality system.

If one person can review the core output and make the necessary improvements, a single Oracle may be enough. If the product has too many variations, customers, specialties, or geographies for one person to cover, the company may need “decentralized Oracles”: multiple domain experts responsible for different subsets of the output or customer base.

If quality can be measured, the next question is whether manual iteration is fast enough. If engineers can take the expert-defined metrics and feedback, make product changes, and keep up with the pace of customer and product variation, then the Evaluator model can work. The expert’s main job becomes defining quality and building a measurement system that engineering can act on.

If manual iteration is too slow, and if improvement can be automated, Chris Lovejoy says the organization should move toward the Architect model. The need for this model emerges when there is too much local variation, too much customer-specific behavior, or too much ongoing adaptation for humans to keep manually translating expert feedback into product changes.

| Question | If yes | If no |

|---|---|---|

| Can performance be measured in meaningful metrics? | Ask whether manual iteration is fast enough. | Use an Oracle; if one person cannot cover the variation, use decentralized Oracles. |

| Is manual iteration fast enough? | Use an Evaluator: the expert defines and measures quality while engineers improve the system. | Use an Architect, if improvement can be automated. |

He frames these roles as an evolution path rather than fixed job descriptions. A small startup may begin with a single Oracle because that is the fastest way to understand failures and improve the product. As the product scales, the same person may need to help build decentralized review and customization systems, move into an Evaluator role, or design Architect-style systems for automated adaptation.

Progression becomes necessary, in Lovejoy’s view, when the current model starts breaking at scale. But progression is only possible under certain conditions. A company can move from Oracle to Evaluator only if it can reliably measure quality. It can move from Evaluator to Architect only if manual iteration is too slow and there are viable mechanisms for automating improvement.

Granola works as an Oracle case because meeting notes require taste

Granola, the AI meeting-notes company, is Lovejoy’s first case study. He says the company had recently passed a billion-dollar valuation, but the important point for his framework is the shape of the product: it has one core output, and that output depends heavily on judgment.

Chris Lovejoy describes Jo Barrow, a friend at Granola, as a writer and journalist who joined as the company’s first employee, wrote all the prompts, and performed extensive research into what makes good meeting notes. According to Lovejoy, that research included many hours reading papers and speaking with “hundreds, thousands” of users.

Barrow’s role, in his telling, has been as the primary gatekeeper of AI quality. She reviews the meeting notes produced by the product, assesses what is working, and directly changes the prompts and behavior of the system. In Lovejoy’s framework, that is an Oracle role: the same person assesses and improves.

The reason this works is that there is no objectively perfect meeting note. A note can be more or less useful, clear, faithful, concise, structured, or suited to a user’s expectations, but Lovejoy’s point is that those judgments are mediated by taste. A purely objective metric cannot fully determine the best output.

Granola also has a single core output: the meeting note. That makes the product unusually amenable to direct human review and improvement even as the company scales. Lovejoy says there are nuances: the company also runs evals and has internal tooling that lets others contribute to prompts. But the basic mechanism remains Oracle-like because the key quality loop still depends on expert judgment applied directly to the output.

The domain expertise in this example is not a licensed profession. Barrow is not presented as a doctor or lawyer. Her expertise comes from writing, journalism, research, user understanding, and detailed taste about what makes meeting notes good. For Lovejoy, that is enough to count as domain expertise because it maps directly to the product’s core output.

Tandem needed decentralized Oracles because medical notes vary by specialty and geography

Tandem, a medical AI scribe company, is Lovejoy’s second case. Like Granola, Tandem generates notes from spoken conversations. But in this case the notes are medical, which changes the domain requirements.

Tandem’s early domain expert was Roy Pekny, whose background Chris Lovejoy describes as medical doctor and then McKinsey. Pekny reviewed medical notes and updated prompt templates. That again began as an Oracle model: one domain expert assessing outputs and directly improving the system.

The model broke as Tandem scaled. According to Lovejoy, one person could not cover the full range of specialties, countries, note types, and use cases. The company therefore moved toward decentralized Oracles, hiring doctors from different countries and specialties and updating the platform to support a long tail of prompt customizations.

This example clarifies when “more domain expertise” does not mean a bigger advisory panel, but a different operating model. Tandem did not simply need medical review in general. It needed people with context about specific clinical settings and customer needs. One doctor might work with customers in a particular subspecialty and geography, understand what they needed, and make prompt changes for that setting. Many other versions could exist for other contexts.

Lovejoy says the move worked because medical note quality requires medical expertise, but still contains subjectivity. As with ordinary meeting notes, there is no single perfect medical note. But unlike Granola’s case, the variation is too broad for one person’s taste and expertise to handle alone. The organization therefore needs multiple Oracles, each with ownership over a subset of outputs or relationships.

Anterior moved from Oracle to Evaluator to Architect because prior authorization is measurable but highly variable

Anterior’s first product addressed prior authorization in the United States. As Chris Lovejoy explains it, a doctor may request a scan such as an MRI; the request goes to an insurance company; nurses and doctors inside the insurer determine whether the treatment should be approved as appropriate.

At Anterior, Lovejoy was the first technical employee. He built the initial product, including prompts and code, and reviewed AI outputs by applying clinical judgment to whether the AI was making an appropriate decision. He then updated prompts and code based on those reviews. That was the Oracle stage.

As the company gained more customers and demand, the Oracle role stopped scaling. Lovejoy then moved into an Evaluator role. He defined metrics and failure modes, built a review dashboard, and hired clinicians to review outputs. That gave the company performance data it could use with the engineering team.

But he says that also failed to scale from the “fixing” side. The problem was not merely knowing whether outputs were correct; it was handling variation in how different organizations interpreted their policies and rules. Manual engineering iteration could not keep up with all the local differences. That pushed the work toward an Architect model: designing methods for automated improvement.

Anterior is the cleanest example of Lovejoy’s full progression because its quality target was more measurable than meeting notes. The AI output, as he describes it, was either approval of the prior authorization decision or escalation for clinician review, and correctness was determined based on medical evidence. Clinical reasoning is required to assess it, but the outcome is more objectively measurable than whether a meeting note has the right taste.

At the same time, the product had large variation across customers in how prior authorization rules were interpreted. That combination — measurable quality, expert reasoning, and high customer-level variation — made it suitable for evolution from Oracle to Evaluator to Architect.

Lovejoy also emphasizes that the early Oracle experience made the later roles better. By personally reviewing outputs and fixing failures, the domain expert learns how the AI performs and where it fails. That understanding helps define metrics, identify failure modes, design review systems, and eventually architect automated improvement loops.

The job specification changes with the role

Lovejoy warns against hiring for a generic domain-expert label. The relevant expertise has to match the use case directly.

For an Oracle, the core skill is direct experience with the task the product performs. “Just being a doctor might not actually be sufficient,” Chris Lovejoy says. If the product is medical coding, the relevant question is whether the person understands medical coding: what it involves, where it goes wrong, and how quality should be judged. The same logic applies to other domains. The company should not stop at “doctor,” “lawyer,” or another broad category if the product depends on a narrower workflow.

For Oracles, adjacent skills include prompting, context engineering, depth of insight, attention to detail, and customer communication. Lovejoy treats prompting and context engineering as useful but more learnable. What is harder to fake is the combination of direct domain exposure and careful taste about outputs. He points back to Barrow at Granola as an example of someone who went deeply into the details.

For Evaluators, the skill set shifts toward data science intuition. The domain expert is defining metrics, building systems to collect those metrics, and making them usable. Lovejoy describes this as fundamentally data science work. Statistics can matter, especially at scale. Industry connections can matter if the company needs to recruit expert reviewers. Leadership experience helps if the expert will manage a review team. Product management skills help because the Evaluator must collaborate with engineers and translate quality signals into product improvements.

For Architects, relevant domain expertise remains necessary, but Lovejoy adds experience working on LLM-powered products. The Architect needs to understand what levers are available for improving performance. Engineering skills can also help, either to implement improvements directly or to steer their development.

The practical implication is that a company should hire for breadth where possible. Lovejoy acknowledges that it is a lot to ask for one person to have all the skills listed across Oracle, Evaluator, and Architect. But he recommends finding someone with the necessary domain expertise plus as many adjacent skills as possible, then pairing them with complementary specialists when needed. If the domain expert lacks statistical depth, for example, pair them with a statistician.

The failure mode is hiring someone who is “just a domain expert” in a narrow sense and cannot grow with the organization. Lovejoy does not minimize the difficulty of domain expertise itself, but argues that a person who cannot evolve from Oracle to Evaluator or Architect may force the company to bring in someone else later. That is less desirable than having someone who builds context from the beginning and can carry it into later organizational forms.

Ownership matters because advisory experts do not change the system

The operating principles are straightforward: define a principal domain expert, give that person ownership, and hire for breadth so the role can evolve.

The “principal domain expert” is a single person accountable for the quality of AI performance. Chris Lovejoy argues this is partly about speed. When everyone is responsible, no one is truly responsible, and decisions slow down into consensus by committee. A single accountable owner can make calls, invest deeply in understanding how the AI performs, and feed that understanding into better decisions.

Ownership is distinct from advice. Lovejoy says companies should not treat the domain expert as a consultant who reviews outputs and hands opinions to someone else. The expert needs to be in the room when product decisions are made. Otherwise the company is unlikely to build the systems required to measure and improve AI quality accurately.

He describes a failure mode from a company where two senior clinicians were present, but neither was clearly the principal domain expert. Final authority was ambiguous, their role was more advisory, and progress on the broader AI quality system was slow. Both clinicians eventually left after roughly 12 to 18 months. Lovejoy suggests that lack of ownership may have been part of the reason. For the company, the loss was not just headcount; it was the departure of people with substantial organizational and domain context in their heads.

This is the organizational core of Lovejoy’s thesis. Domain expertise does not create advantage merely by existing somewhere inside the company. It has to be connected to authority, measurement, iteration, and product change. A reviewer who flags problems but cannot shape the improvement system is not enough. A credentialed expert outside the decision loop is not enough. A committee of experts without accountability may move too slowly.

The recommended starting point is early hiring of a principal domain expert with relevant use-case expertise and broad adjacent skills. Lovejoy expects that person to begin as an Oracle in many organizations, because direct review and direct improvement are the fastest ways to learn the product’s failure modes. As the product and company scale, the role should evolve along the axis the use case demands: toward decentralized Oracles, toward an Evaluator system, or toward Architect-led automated improvement.