BFL Is Moving FLUX From Image Generation Toward Physical AI

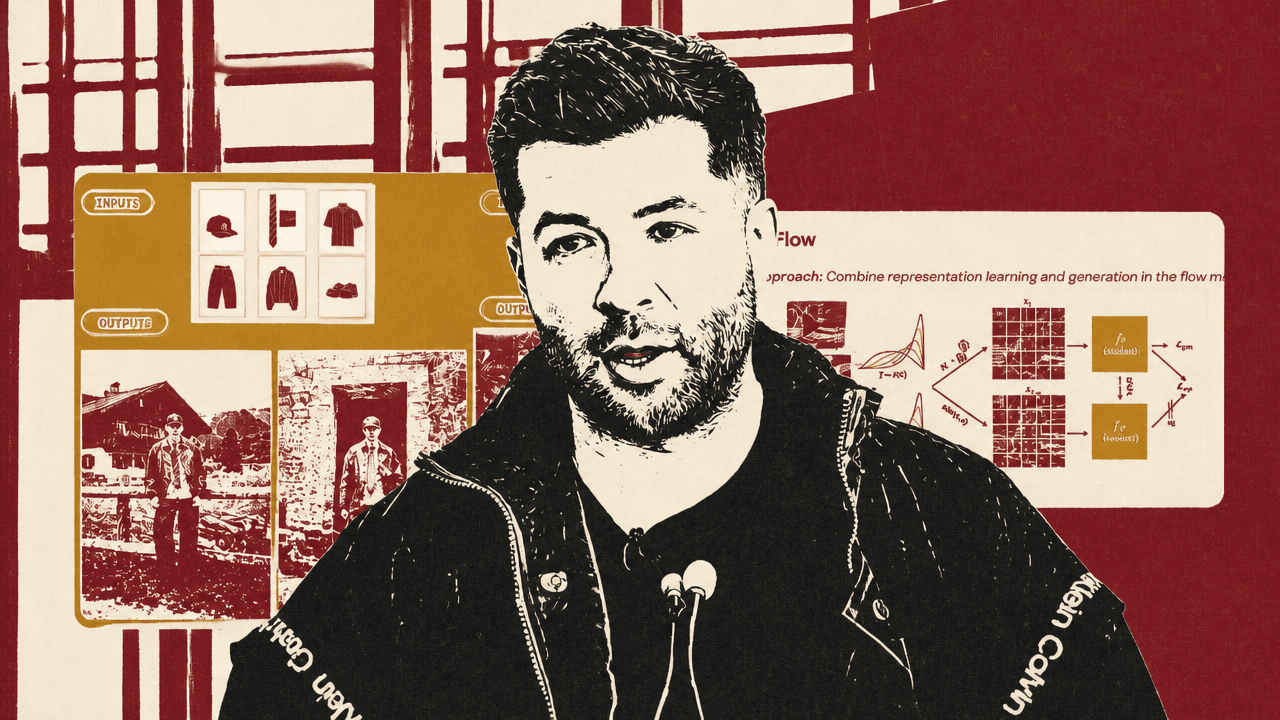

Stephen Batifol of Black Forest Labs argues that FLUX is no longer just an image-generation line but the start of a broader push toward visual intelligence: models that can generate, edit, understand, and eventually act across images, video, audio, and physical environments. In the talk, he presents FLUX.1, Kontext, FLUX.2, and FLUX.2 Klein as product steps toward that goal, while BFL’s Self-Flow research is framed as the mechanism for moving representation learning inside multimodal generative models rather than relying on external encoders.

BFL’s image models are being aimed at multimodal and physical-world systems

Stephen Batifol framed Black Forest Labs’ current work as a progression from open image generation toward what the company calls visual intelligence: models that generate and edit images quickly, learn stronger internal representations, and eventually extend across video, audio, actions, world models, and robotics.

Batifol introduced BFL using the company’s own slide language: “built by the team behind Stable Diffusion, Latent Diffusion & FLUX.” The slide described BFL as having a $3.3 billion valuation, more than 75 employees, and more than 200,000 academic citations, with backers including Andreessen Horowitz, General Catalyst, NVIDIA, Salesforce Ventures, and Samsung Next. Enterprise customers and partners shown on the slide included Microsoft, Adobe, Canva, Mistral AI, Gamma, and Picsart. He also positioned BFL as a research company first: one that publishes papers and open work because, in his framing, it wants the field to move forward with it.

The first FLUX release, FLUX.1, arrived on August 1, 2024, according to BFL’s timeline slide. Batifol called it BFL’s “first breakthrough”: an open-source, text-to-image model that could run on a laptop and that he described as a major competitor to Stable Diffusion at the time. He emphasized anatomy quality relative to other models, including larger ones. A slide showed a post from Hugging Face co-founder and CEO Clem Delangue saying FLUX had become the most-liked model on Hugging Face at the time, out of more than one million models. Batifol noted that this was no longer true, but said it mattered for a company “coming out of nowhere” with a new model.

FLUX.1 Kontext extended the model from text-to-image into editing. Batifol described it as the first open-source editing model, combining generation and image editing in one system. The examples shown were simple but important: an input portrait could be edited to remove an object from the subject’s face, move the same person into Freiburg, and then change the environment to snow while preserving the character. The model, in his account, supported character consistency, local editing, and speed: around seven to eight seconds by his recollection, compared with roughly 40 to 50 seconds for early GPT image editing.

That editing capability mattered beyond one-off retouching. Batifol showed a storyboard built from a recurring cartoon seagull character wearing a VR headset and drinking beer, then adding another character, hats, and outdoor scenes. The point was continuity. Partners and customers, he said, used this kind of image sequence as input frames or end frames for video and animation models.

FLUX.2, shown on BFL’s timeline as released on November 25, 2025, was presented as BFL’s current best image model and a step toward “visual intelligence.” Batifol used generated photographs of hands, animals, people, sports, and product-style food imagery to make a quality claim: some outputs, particularly around hands, veins, bracelets, animals, and product detail, would be very difficult for him to identify as AI-generated.

The more consequential FLUX.2 feature was multi-reference editing. In one example, the model received six product or clothing images and a prompt asking it to “create an outfit with those images.” Batifol said the model composed the items coherently: the jacket and tie were worn properly rather than simply pasted into the scene. In another example, a sofa was placed into an interior room, a use case he connected to e-commerce and furniture visualization. FLUX.2 could take up to 10 images simultaneously, with what he described as strong character, product, and style consistency.

| Release | Date shown (DD/MM/YYYY) | Capability Batifol emphasized |
|---|---|---|
| FLUX.1 | 01/08/2024 | First text-to-image model; open-source release; runnable on a laptop |
| FLUX.1 Kontext | 29/08/2025 | In-context image generation and editing; character consistency and local edits |
| FLUX.2 | 25/11/2025 | BFL’s best image model to date; first multi-reference model; state-of-the-art open-source text-to-image and editing |
| FLUX.2 [klein] | 26/01/2026 | Interactive image generation and editing; sub-second speed |

The bottleneck is not only generation quality, but whether the model understands what it makes

For Stephen Batifol, the technical limitation of conventional generative training is that a model can learn to denoise without learning the structure of the scene it is denoising. He used a physical example: a model trained only to generate images does not inherently learn that a glass should sit on a table rather than pass through it, or that a person sitting on a chair should not fall through the chair.

The standard training setup he described begins with images, adds random noise, and trains the model to remove that noise. This denoising objective can produce strong images, but it does not directly teach the model the relationships and physical constraints inside the scene. Representation alignment is the workaround he presented: an external visual encoder, trained for tasks such as image segmentation, gives the generative model richer visual representations. He cited DINOv2 as an example of such an external encoder.
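The two objectives described above can be sketched in a few lines of NumPy. This is a minimal illustration, not BFL's training code: the linear interpolation between data and noise, the velocity target `eps - x`, the cosine-similarity alignment term, and the weight `0.5` are all assumptions chosen because they are common in flow-matching and representation-alignment setups. The arrays standing in for network outputs are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

def noisy_sample(x, t, eps):
    """Linear interpolation between clean data x (t=0) and pure noise eps (t=1)."""
    return (1.0 - t) * x + t * eps

def denoising_loss(pred_velocity, x, eps):
    """Flow-matching-style regression; the assumed target velocity is eps - x."""
    return float(np.mean((pred_velocity - (eps - x)) ** 2))

def alignment_loss(hidden, encoder_feats):
    """Negative mean cosine similarity between model and external-encoder features."""
    h = hidden / np.linalg.norm(hidden, axis=-1, keepdims=True)
    e = encoder_feats / np.linalg.norm(encoder_feats, axis=-1, keepdims=True)
    return float(-np.mean(np.sum(h * e, axis=-1)))

# Toy data: 4 "images", each with 16 patches of 8 features.
x = rng.normal(size=(4, 16, 8))
eps = rng.normal(size=x.shape)
x_t = noisy_sample(x, 0.7, eps)  # what the generative model would see as input

# Hypothetical stand-ins for network outputs: a perfect velocity prediction
# and hidden features that exactly match the frozen external encoder.
perfect_velocity = eps - x
encoder_feats = rng.normal(size=(4, 16, 8))
hidden = encoder_feats.copy()

gen = denoising_loss(perfect_velocity, x, eps)   # zero for a perfect prediction
align = alignment_loss(hidden, encoder_feats)    # reaches -1 when features match
total = gen + 0.5 * align                        # 0.5 is an arbitrary weight
```

Note that the denoising term never looks at `encoder_feats`: the structure of the scene enters training only through the alignment term, which is why the quality of the external encoder matters so much in this setup.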

The attraction is clear. A slide titled “A Tale of a Representation Gap” stated that generative models improve dramatically when aligned with external encoders, and its chart showed 17.5x faster convergence. Batifol said alignment can reduce loss much faster.

But the same alignment setup introduces structural costs.

First, it creates a scaling ceiling. If the encoder is a fixed checkpoint, scaling the generative model does not automatically scale the representation system it depends on. The generative model remains limited by the side model.

Second, many encoders are modality-specific. A visual encoder may work for images, but if a lab wants one model to generate images, audio, video, and more, it would need separate encoders for each modality. Batifol described that as a “Frankenstein setup” where the pieces do not naturally fit together.

Third, the objectives are misaligned. The generative model is trained to create content; the encoder may have been trained to segment images. They can be made to work together, but they are not optimizing the same thing.

Batifol also showed that “better” encoders do not reliably yield better generative models. A chart comparing DINOv2 and DINOv3 showed worse generation performance with DINOv3, despite his description of DINOv3 as technically the better model. The point was not that DINOv3 is worse in general, but that there is no simple rule guaranteeing that a stronger encoder, by its own benchmark, will improve the generative system.

Self-Flow puts representation learning inside the generative model

Stephen Batifol presented Self-Flow as BFL’s research route around the external-encoder problem. He said the company released the research in the open about a month and a half before the talk, describing it as “a scalable approach for training multi-modal generative models” that combines representation learning and generation inside the same flow model.

In the conventional setup, the model receives noisy data, tries to denoise it, and aligns with an external encoder. Self-Flow instead adds two different random noise levels to the same underlying asset. One branch receives a high-noise version. Another receives a lower-noise version. Batifol described the high-noise branch as the student, which must denoise more difficult inputs, and the lower-noise branch as the teacher, a more stable version of the student.

The student learns two things at once: a generation loss and a representation loss. The loss shown on the slide combined the two terms into a single training objective.
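A rough sketch of the two-branch setup may make it easier to follow. It is written under stated assumptions and is not BFL's implementation: the exact loss from the slide is not reproduced here, so the weight `gamma`, the mean-squared-error form of both terms, the shared noise sample, and the exponential-moving-average teacher update are all guesses at one plausible reading of "a more stable version of the student." `toy_model` is a hypothetical stand-in network, and the code only computes the loss; it performs no gradient step.

```python
import numpy as np

rng = np.random.default_rng(0)

def toy_model(params, x_t):
    """Hypothetical stand-in network: returns (hidden features, predicted velocity)."""
    hidden = np.tanh(x_t @ params["w_in"])
    velocity = hidden @ params["w_out"]
    return hidden, velocity

def ema_update(teacher, student, decay=0.99):
    """Assumed teacher update: exponential moving average of the student weights."""
    return {k: decay * teacher[k] + (1.0 - decay) * student[k] for k in student}

def self_flow_style_loss(student, teacher, x, gamma=0.5):
    eps = rng.normal(size=x.shape)  # assumption: both branches share one noise sample
    # Two random noise levels for the same asset; the student gets the higher one.
    t_low, t_high = np.sort(rng.uniform(0.0, 1.0, size=2))
    x_student = (1.0 - t_high) * x + t_high * eps
    x_teacher = (1.0 - t_low) * x + t_low * eps

    h_student, v_student = toy_model(student, x_student)
    h_teacher, _ = toy_model(teacher, x_teacher)  # treated as a fixed target

    gen_loss = np.mean((v_student - (eps - x)) ** 2)  # generation term
    rep_loss = np.mean((h_student - h_teacher) ** 2)  # representation term
    return float(gen_loss + gamma * rep_loss), (float(t_low), float(t_high))

dim = 8
student = {
    "w_in": rng.normal(size=(dim, dim)) * 0.1,
    "w_out": rng.normal(size=(dim, dim)) * 0.1,
}
teacher = {k: v.copy() for k, v in student.items()}
x = rng.normal(size=(4, dim))

loss, (t_low, t_high) = self_flow_style_loss(student, teacher, x)
teacher = ema_update(teacher, student)
```

The point of the sketch is structural: the representation target comes from the same network family as the student, so no external encoder appears anywhere in the loss.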

The significance, in Batifol’s framing, is that the model no longer needs an external encoder to acquire stronger representations. If the model scales, the student and teacher scale together. The same mechanism can be applied across images, video, and audio. He said this is already being used for different models BFL is training, and that the company sees it as the direction for getting rid of side encoders.

Batifol was careful to frame the examples as research models, not production releases. In multimodal training, he said, the Self-Flow results shown were better than the baseline across audio, image, and video. A chart also showed the baseline converging and plateauing while the Self-Flow line continued improving. He speculated that if training continued toward two million steps, the baseline would likely plateau further or possibly worsen, while Self-Flow would continue lowering loss.

The qualitative examples made the claim more legible than the charts. In text rendering, a baseline vanilla flow-matching model produced misspellings such as “The future is FlLUx,” “FUX was here,” and “From worls to worlds: FLUX.” Self-Flow produced the intended versions: “The future is FLUX,” “FLUX was here,” and “From words to worlds: FLUX.” Another side-by-side neon sign example showed the baseline rendering “FLUx is nutlimolal,” while Self-Flow rendered “FLUX is multimodal.”

| Example | Baseline output shown | Self-Flow output shown |
|---|---|---|
| Text on a surface | The future is FlLUx | The future is FLUX |
| Short phrase | FUX was here. | FLUX was here... |
| Phrase with repeated letters | From worls to worlds: FLUX | From words to worlds: FLUX |
| Neon sign | FLUx is nutlimolal | FLUX is multimodal |

Batifol tied this to representation learning: the model has learned that letters in “FLUX” should appear in order and in relation to one another. The text examples were not merely typography demos; they were evidence, in his argument, that a model trained this way forms more useful internal structure.

The same pattern appeared in anatomy. A baseline portrait with complex makeup showed distorted facial anatomy; the Self-Flow version had more natural proportions. Batifol cautioned that this was not a production model expected to be perfect, but argued that the anatomy was visibly better.

Video examples extended the claim. In one side-by-side comparison, a generated woman performing a push-up moved unnaturally in the baseline, while the Self-Flow version showed more accurate motion and form. In another, a puffin walking in the baseline flickered and produced visual artifacts, while the Self-Flow version was more stable. Batifol contrasted the baseline’s flicker with Self-Flow’s stability as another example of the qualitative difference he wanted the audience to see.

The approach also extended to joint video-audio generation. Batifol showed a person saying “Hello from the Black Forest.” In the baseline, the audio degraded into strange sounds at the end; in the Self-Flow version, the phrase ended cleanly. He emphasized that it was the same model trained across the image, video, and audio examples, not separate systems stitched together.

Actions and robotics turn visual intelligence into a physical-world problem

Stephen Batifol’s most expansive claim was that the same multimodal representation approach can move beyond media generation into action prediction. He showed a robotic arm attempting to pick up a soda can and bring it closer. The baseline had flickering and odd arm movement. In the Self-Flow example, trained for the same number of steps, the arm picked up the can and brought it closer.

That example served as the bridge from image models to physical AI. BFL, in Batifol’s account, is interested not only in image or video generation, but in actions and “doing more things towards physical AI.” Later, when he described the company’s road to visual intelligence, robotics was the reason world models mattered.

World models, as he framed them, are models trained to understand and simulate geometry, relationships, and interactions in the physical world. The research goal is not just better synthetic video. It is the ability to train agents inside generated worlds, scale self-driving, and automate manufacturing. A slide summarized the intended path as three focus areas: real-time generation, world models, and robotics and automation.

Batifol put it bluntly: “The reason is robots. That’s why we care.”

A question from the audience pressed on the training basis for world models. Batifol declined to share details of the data, calling it a trade secret and saying data is sensitive. He did say that BFL is working with many partners to obtain it.

Another audience question asked how the state of the world is stored in action-prediction models. Batifol answered that this is what the model learns: representations that include the state and “some kind of memory.” When asked whether that memory sits in the context window or an external system, he said it is the context window: the model has tokens and can infer, in effect, “I’ve moved, here is where I should be then next.”

Asked whether such a system could run indefinitely, Batifol was cautious. “Indefinitely” was not something he was sure about. There would always be a limit, he said, and one might use a sliding window.
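The sliding-window idea can be illustrated with a bounded buffer. This is a generic sketch, not a description of BFL's models: the class name `SlidingContext`, the window size, and the string tokens are hypothetical, and a real system would hold model tokens rather than labels.

```python
from collections import deque

class SlidingContext:
    """Keeps only the most recent `max_tokens` tokens as working memory."""

    def __init__(self, max_tokens):
        self.tokens = deque(maxlen=max_tokens)

    def append(self, token):
        # Once the deque is full, appending drops the oldest token automatically.
        self.tokens.append(token)

    def window(self):
        return list(self.tokens)

ctx = SlidingContext(max_tokens=4)
for step in range(6):
    ctx.append(f"state_{step}")

print(ctx.window())  # ['state_2', 'state_3', 'state_4', 'state_5']
```

The trade-off is visible in the output: the rollout can continue for as many steps as needed, but anything older than the window (`state_0` and `state_1` here) is no longer available to the model.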

Real-time generation changes the interaction model

Stephen Batifol centered the speed thread on FLUX.2 [klein], which he described as a move toward interactive editing and interactive generation. He said the model generates and edits images in less than a second, recalling best-case figures of roughly 500 milliseconds for editing and 300 milliseconds for generation. In the demo, a user sketched a crude chair-like shape with a stylus while an image-generation interface updated high-fidelity chair images in near real time. Batifol stressed that this was not a video model; it was a sequence of image edits happening interactively.

The performance comparison shown for FLUX.2 [klein] plotted Elo rating against end-to-end latency for text-to-image, single-reference image-to-image, and multi-reference image-to-image tasks. Batifol said Klein’s 4B and 9B models were at least on par with other open-source models while being dramatically faster. In text-to-image, he described Klein latency as around 0.5 seconds, compared with Qwen around 15 seconds. In single-reference image-to-image, he said Klein 9B was slightly above 0.5 seconds, again compared with Qwen around 15 seconds. In multi-reference editing, Klein remained under a second while Qwen was closer to 20 seconds.

| Task | FLUX.2 [klein] latency described | Qwen latency described | Batifol’s conclusion |
|---|---|---|---|
| Text-to-image | About 0.5 seconds | Around 15 seconds | At least on par in performance while much faster |
| Image-to-image, single reference | A little above 0.5 seconds for Klein 9B | Around 15 seconds | Fast enough for interactive editing |
| Image-to-image, multi-reference | Less than 1 second | Closer to 20 seconds | Multi-reference editing can remain near real time |
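To put the approximate figures above in proportion, the short calculation below turns them into speedup ratios and update rates. The inputs are the rounded numbers Batifol described, not measured benchmarks, and the multi-reference Klein latency uses the stated upper bound of one second, so that speedup is a lower bound.

```python
# Approximate latencies in seconds, as described in the talk (not measurements).
latencies = {
    "text-to-image": {"klein": 0.5, "qwen": 15.0},
    "image-to-image, multi-reference": {"klein": 1.0, "qwen": 20.0},  # klein: upper bound
}

# Ratio of the two latencies, and how many results per second Klein could return.
speedups = {task: v["qwen"] / v["klein"] for task, v in latencies.items()}
updates_per_second = {task: 1.0 / v["klein"] for task, v in latencies.items()}

for task in latencies:
    print(f"{task}: ~{speedups[task]:.0f}x faster, ~{updates_per_second[task]:.0f} updates/s")
```

On these rounded inputs the gap is roughly 30x for text-to-image and at least 20x for multi-reference editing, which is the difference between one or two updates per second and one update every 15 to 20 seconds.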

The reason this mattered, Batifol said, is that users should be able to generate and guide outputs in real time. He described mockups rendered “as fast as you think,” design choices browsed with persistent memory, and interactive visual engines for gaming and film. In the most expansive version, a film could be rendered as it is prompted.

That framing puts speed in the same category as multimodal representation: a prerequisite for a different class of system. Waiting 10 or 20 seconds, or a minute or two, makes generation a batch process. Sub-second generation makes it an interactive process, where prompting, editing, and visual feedback can collapse into one loop.