Context Graphs Make AI Decision Trails Queryable

Stephen Chin of Neo4j argues that enterprise AI systems need context graphs because retrieval alone can surface relevant facts while missing the relationships that make them usable. In his examples, a graph-augmented system can connect a patient’s emphysema care plan to smoking history or a credit decision to prior rejections, policies, margin trades and fraud signals. Chin’s case is that agents should preserve not only documents and answers, but the decision traces, tool calls, causal chains and outcomes that let humans inspect and reuse prior reasoning.

Retrieval is not enough when the missing part is the relationship

Stephen Chin frames context graphs as an answer to a specific failure mode in enterprise AI: the information needed for a good decision may exist, but it is scattered across Slack discussions, customer threads, CRM records, Jira boards, PagerDuty alerts, Zendesk tickets, Zoom calls, and people’s heads. In that state, an agent asked to make a critical business decision is operating without the relationships that would make the facts usable.

His distinction is not simply between “more data” and “less data.” It is between isolated retrieval and connected context. In the “blue pill” version of the enterprise, reasoning is lost across silos: why a choice was made, which alternatives were considered, what precedent existed, which approval chain applied. In the “red pill” version, the enterprise has a system of reasoning that connects data sources, decision traces, policies, exceptions, evidence, approvers, and outcomes.

The context graph Chin describes is a knowledge graph designed around that second state. It does not only store entities such as customers, accounts, products, employees, policies, or tickets. It also stores decisions as first-class objects: what was decided, what evidence supported it, what policy applied, what exception was invoked, who approved it, and what happened afterward.

Context graphs are really powerful for this because unlike a traditional audit log, they're capturing the why, the decision traces that happens while you're evaluating your models.

Chin points to two signals that, in his telling, show the term moving beyond an internal vendor framing. A slide places “Context Graphs” on Gartner’s “Hype cycle for Agentic AI” as of April 2026, and Chin says Foundation Capital “started this thread” with a post about a “3 trillion dollar startup opportunity” in context graphs. The article does not independently establish either claim beyond the talk and visible slide; the substance of Chin’s argument is practical: agents cannot make reliable, explainable decisions across domains if the reasoning and relationships behind prior decisions are not available for traversal.

Knowledge graphs give LLMs a structure they can traverse

Knowledge graphs are the base layer for the context graphs Stephen Chin describes. At the simplest level, a graph holds nodes, relationships, and properties. Nodes represent entities: people, companies, objects, accounts, events. Relationships represent associations or interactions between them. Properties attach attributes to either nodes or relationships, and those properties can include vectors.

His example slide shows a small graph connecting “Dan,” “Ann,” and a “Car.” Dan and Ann are person nodes with names and birth dates; Dan “lives with” Ann and “drives” a car; Ann “owns” the car. The car has properties such as brand, model, description, a description embedding, and a description source. Chin’s point is that graphs can combine explicit structure with embeddings: similarity search can help locate relevant content, while graph traversal can preserve the relationships that make the content meaningful.

He describes LLMs and knowledge graphs as complementary. LLMs contribute language, reasoning, and creativity. Knowledge graphs contribute knowledge, context, and enrichment. Together, they support storing relationships, visualizing the data that matters, finding hidden patterns, and analyzing the context that drives agent performance.

The production objective, in Chin’s formulation, is explainable AI. A slide breaks that down into three graph-enabled activities: storing learnings from user-agent interactions as context; visualizing conversations, flows, and reasoning; and analyzing context data to identify and apply improvements to agent systems. The graph is not only a data store. It becomes a representation of how the agent interacted with the world.

The healthcare example shows why similarity search can be grounded but incomplete

The retrieval problem becomes concrete in a healthcare question: “What was in the Care Plans associated with Andrea Jenkins’s emphysema?”

Asked directly, an LLM gives a broad answer about preventing further lung damage. Stephen Chin characterizes this as generic: the model knows what emphysema is and can describe standard practice, but it is “not connected to real data.”

A baseline RAG system improves the answer. The slide describes it as “LlamaIndex-RAG backed by GPT 4.0 and Chroma vector database.” It returns that Andrea Jenkins’s emphysema care plan included respiratory therapy, specifically deep breathing and coughing exercises. Chin calls this more grounded, because it is drawing on patient-related information, but still incomplete and generic.

GraphRAG changes the answer by bringing in more of the patient-specific context. In the slide’s GraphRAG version, built with Neo4j, LangChain, and OpenAI, the answer becomes: “The Care Plan for Andrea Jenkins’s emphysema included medication management, smoking cessation counseling, and pulmonary rehabilitation exercises.” Chin says the smoking cessation recommendation clearly reflects a history of smoking, and he says operation history is the kind of background information that was lost in the similarity search.

| Approach | What it returns | Chin’s characterization |

|---|---|---|

| LLM direct | Generic emphysema care guidance such as preventing further lung damage | Not connected to real data |

| Baseline RAG | Respiratory therapy, deep breathing, and coughing exercises | Grounded but incomplete |

| GraphRAG | Medication management, smoking cessation counseling, and pulmonary rehabilitation exercises | Complete, accurate answer |

The important claim is not that vector retrieval is useless. Chin’s claim is narrower: similarity search may retrieve some relevant text and still miss information that matters because it sits in connected background context. The missing piece is not always in the nearest chunk. It may be one or more hops away through the patient’s history and related records.

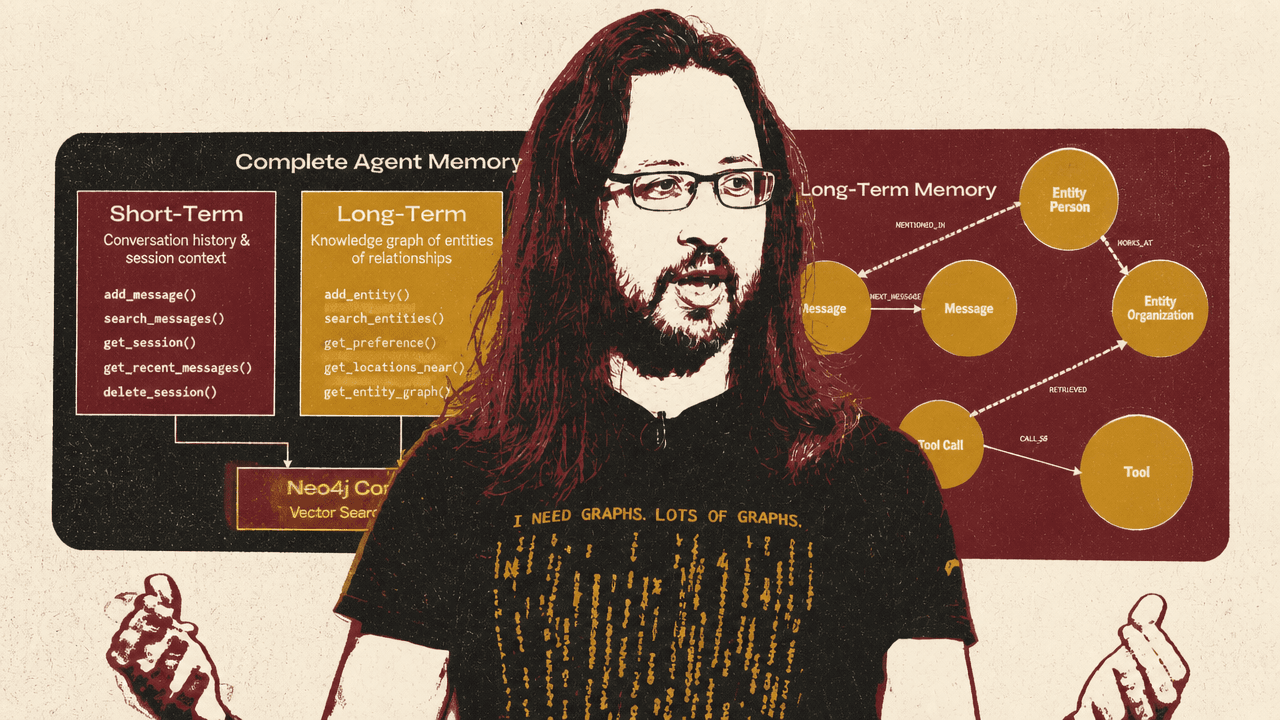

Agent memory needs short-term state, long-term knowledge, and reasoning traces

Agent memory is separated into three layers: short-term memory, long-term memory, and reasoning memory. GraphRAG supplies grounding and retrieval, but Stephen Chin says agents also need a memory structure that records what is happening now, what has been learned over time, and why prior decisions were made.

Short-term memory is the current context: conversation history, session state, current activities in the agent pipeline, tool results, compression, and relevancy handling. A slide describes short-term memory as conversation history and session state with automatic entity extraction. It shows sessions and messages stored as graph nodes with metadata, entity extraction through a multi-stage pipeline, deduplication through exact, fuzzy, and semantic matching, and enrichment of extracted entities with labels and properties. In the example, the message “Review Jessica Norris’ account” yields entities such as “Jessica Norris” as a person and “Account” as an object.

Long-term memory is a persistent knowledge graph of entities, relationships, and learned preferences. Chin says this layer must be organized well because “there is so much of it.” His slide uses a POLE+O entity model: person, organization, location, event, and object. People include customers, employees, and stakeholders; organizations include companies, teams, and departments; locations include addresses and regions; events include transactions, decisions, and meetings; objects include accounts, products, and documents. The key capabilities listed include cross-conversation knowledge persistence, temporal relationships with validity windows, configurable entity subtypes, automatic extraction from conversations, and vector search across entity types.

Reasoning memory is the layer Chin emphasizes for explainability. It records tool call traces, reasoning traces, and previous decisions. Instead of only keeping the final answer, the system stores the tool invocations, parameters, results, causal chain, and provenance that led to the answer. That enables an agent to check whether it solved a similar problem before, reuse successful patterns, and provide hooks for compliance and debugging.

Typically what we get from LLMs is we get the result, right? They'll tell us, well this is what I recommend, this is you know advise this. But to get to that result, there's thinking, there's reasoning which happens behind the scenes.

Chin argues that graphs are well-suited to this memory structure because relationships are first-class. The connections between entities, decisions, and events are not metadata attached after the fact; they are the structure being queried. He also points to structural similarity through graph embeddings such as FastRP, multi-hop traversal across chains like customer to account to transaction to decision to policy to employee, and community grouping through algorithms such as Louvain.

Neo4j’s open source “agent memory” package is presented as the implementation that brings these pieces together. Its API maps to the three memory layers: short-term operations for messages and sessions; long-term operations for entities, preferences, locations, and entity graphs; and reasoning operations for traces, tool calls, similar traces, and provenance. The method names matter less than the design: the same graph can preserve the live conversation, the durable domain model, and the decision trail an agent may need to reuse or explain.

The podcast demo treats dense media as a graph of people, topics, companies, and tool calls

The podcast application shows how short-term, long-term, and reasoning memory can work together outside a regulated business workflow. The demo loads more than 300 episodes of Lenny’s podcast into a memory architecture with entity extraction, a knowledge graph, and a Pydantic AI agent.

Stephen Chin says podcasts are dense and hard to search because they contain many connected topics, people, companies, and references. The application gives the agent tools for accessing graph memory. It can traverse entities and relationships across episodes, check whether it has solved a similar question before, remember conversation history and learned preferences, and show tool-call result cards for interactive visualization.

The on-screen architecture starts with a short-term conversation chain: a conversation node connected through first and next messages. Long-term memory adds entity nodes such as person and organization, connected by relationships like WORKS_AT and MENTIONED_IN. Reasoning memory adds a reasoning trace connected to reasoning steps, tool calls, tools, and retrieved information.

In the demo screenshot, the user asks: “Show me locations mentioned in the Brian Chesky episode.” The assistant searches podcasts and returns a successful memory result in 3.09 seconds, with 13 locations. The interface displays a map on the right and exposes the agent configuration on the side: multi-step reasoning, conversation memory, preference learning, graph context, 21 available tools, and 7 test call cards.

Chin’s interpretation is that the graph format lets the system produce a more holistic view of the dataset than a similarity search over isolated chunks. It is not merely pulling “some similar locations.” It is aggregating context and letting the user navigate and query the dataset dynamically.

A context graph captures the decision trail an audit log misses

Stephen Chin defines a context graph as “a knowledge graph specifically designed to capture decision traces — the full context, reasoning, and causal relationships behind every significant decision.” He contrasts it with a traditional audit log.

A traditional audit log records actions: for example, “Transaction rejected at 14:32.” It does not, in Chin’s comparison, preserve relationships between events, causal chains, or reasoning. It is flat and disconnected. A context graph captures the “why”: decision traces, causal chains, entities, relationships, events, and tribal knowledge made queryable.

| Traditional audit log | Context graph |

|---|---|

| Records actions only | Captures the full “why” |

| No relationships between events | Decision traces and causal chains |

| No causal chain or reasoning | Entities, relationships, and events |

| Flat, disconnected records | Connected, traversable structure |

| Example: “Transaction rejected at 14:32” | Tribal knowledge made queryable |

The architecture he describes starts with context graph retrieval tools in an agentic system. These tools use knowledge graphs, vector search, and graph data science algorithms to retrieve relevant information. During the agent loop, the system pushes contextual memory back into the graph. Later queries can retrieve those reasoning traces as part of their own output.

This is the key shift from retrieval to reusable decision context. A context graph is not only a way to find supporting documents. It is a way to ask whether the organization has seen a similar situation before, how it handled it, what policy governed it, what exception mattered, and what happened afterward.

The financial services demo makes the credit decision trail visible to the human approver

The financial services application grounds the context graph argument in a business workflow where the system must explain a recommendation. The domain model has three layers: entities, events, and context.

Entities include people, organizations, accounts, and transactions. Events include decisions, transactions, approvals, and rejections. Context supplies “the why”: policies applied, risk factors, and employee reasoning. The graph connects these into a financial services context graph where a person has an account, an account makes transactions, decisions are applied to policies, and reasoning links back to the facts and policies that informed the decision.

The demo architecture draws from a support ticket system, a CRM, and internal business data. A FastAPI backend uses a Claude agent, 10 MCP tools, OpenAI embeddings, and Neo4j context graphs and graph data science. The frontend is a Next.js application with graph visualization. Stephen Chin says the project is open source, has a hosted version, and can be run locally from GitHub.

The user question in the demo is direct: “Should we approve a credit limit increase for Jessica Norris? She’s requesting a $25,000 limit increase.”

The visible agent tools include search_customer, get_customer_decisions, find_similar_decisions, find_precedent, get_causal_chain, record_decision, detect_fraud_patterns, get_closed_account_decision, find_accounts_with_high_shared_transaction_volume, get_policy, and detect_fraud_pattern_by_customer. A tool call searches for “Jessica Norris” and returns a customer record with a medium risk profile, one account, and two decisions.

The screenshot is doing important work because it shows what Chin means by auditable traversal. The interface displays the chat and tool calls on the left and a node graph on the right. The selected node is a prior decision tied to account number ACC88990055, account type margin, and decision type credit_limit_increase. Its visible reasoning says the application was rejected because a credit score of 620 was below a required minimum of 650, recent derogatory marks included one late payment in the last 12 months, and the debt-to-income ratio was 48%, above a maximum allowed 45%.

| Evidence visible in the financial-services demo | Value shown |

|---|---|

| Customer | Jessica Norris |

| Risk profile | Medium |

| Accounts | 1 |

| Prior decisions | 2 |

| Prior decision type | Credit limit increase |

| Prior decision status | Rejected |

| Credit score | 620, below required minimum of 650 |

| Debt-to-income ratio | 48%, above maximum allowed 45% |

| Recent derogatory marks | 1 late payment in last 12 months |

Chin points out that the system knows Jessica’s bank account, related margin trades, and prior decisions. The model recommends not approving the request. Chin describes the outcome in loan terms while the visible prompt concerns a credit-limit increase; in both cases, the demo is about a financial approval decision. The recommendation is accompanied by reasons: risk factors, previous decisions that influenced the outcome, and fraud detection patterns indicating potential organizational risk.

For Chin, the important feature is that a human user can inspect the information being populated and used. In ordinary enterprise tooling, the relevant data could be spread across separate systems and informal discussions. In the context graph, prior rejection, policy constraints, related trades, fraud signals, tool calls, and causal relationships are brought into a queryable structure.

The developer promise is grounded business decisions with visible reasoning

The final claim returns to the human user and the developer. For the user making a financial decision, the context graph supplies the information needed to stand behind a recommendation and justify it to the organization. For developers building agentic applications, it supplies a way to show that the application is solving a real business problem with grounded information rather than producing an opaque answer.

That emphasis follows from the demo’s design: the system supports a human user who can inspect the recommendation, the graph, and the underlying decision trail. The agent retrieves, reasons, records, and recommends, but the value comes from the graph’s ability to show what the system drew on: the customer, accounts, transactions, policies, prior decisions, similar cases, tool calls, and outcomes.

Chin points attendees to Neo4j Graph Academy’s context graph course and says the course spins up a free Aura instance in the background so learners can try graph techniques before using them in a production or enterprise instance. The displayed Graph Academy slide describes the goal as combining generative AI and knowledge graphs to produce “highly accurate responses, with rich context and deep explainability.”