Supabase Says Skills and MCP Close the Agent Context Gap

Pedro Rodrigues of Supabase argues that agents fail on production systems less because they cannot reason than because they lack product-specific judgment. In a test using the same Postgres task, Supabase found that Claude with MCP alone created a view that could bypass row-level security, while MCP plus a Supabase skill added the required `security_invoker = true` flag. Rodrigues’s case is that MCP gives agents tools, but skills supply the rules, workflows, and current documentation paths needed to use those tools safely.

The failure mode is not weak reasoning, but missing product judgment

Pedro Rodrigues framed Supabase’s agent problem as a context problem with concrete security consequences. Agents can complete ordinary implementation tasks, he said, but they become unreliable when the task depends on product-specific rules that are new, absent from their training data, or easy to overlook. For Supabase, the recurring categories were security pitfalls, stale knowledge, and missing workflows.

“We can all agree that agents are already smart enough, right? They're very capable of doing mundane tasks by themselves. But when you present a task about something that they haven't seen yet, or you've updated since they were trained, like your product for example, they need the right guidance.”

The security example was a Postgres view over a table with row-level security enabled. Rodrigues described a collaborative app in which users should only see data belonging to them. Supabase gave the same agent, which Rodrigues identified as Claude Sonnet 4.6, the same task under two conditions: MCP only, and MCP plus a Supabase skill. The prompt shown in the test was: “Create a team_task_summary view so team members can see how their team is doing.”

The resulting SQL patterns diverged at the security rule that mattered.

| Condition | SQL pattern shown | Annotation |
|---|---|---|
| MCP only | `CREATE VIEW team_summary_view AS SELECT ...` | AI issues: data exposed |
| MCP + skill | `CREATE VIEW team_summary_view WITH (security_invoker = true) AS SELECT ...` | Proper data isolation |

Rodrigues said that in Postgres, a view created over a table with row-level security can bypass RLS unless `security_invoker = true` is explicitly set. The result is not a syntax error or a failed task; it is a working query that exposes data the underlying table would not expose by default.
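The rule is mechanical enough to check automatically. The following is a minimal sketch of such a check, not Supabase's advisor or any real tool: the function name `views_missing_security_invoker` and the regular expressions are hypothetical, and a regex is only a rough stand-in for a real SQL parser.

```python
import re

# Hypothetical lint: find CREATE VIEW statements and capture whatever sits
# between the view name and the AS keyword (where WITH (...) options live).
CREATE_VIEW = re.compile(
    r"create\s+(?:or\s+replace\s+)?view\s+(?P<name>[\w.\"]+)(?P<rest>.*?)\bas\b",
    re.IGNORECASE | re.DOTALL,
)
SECURITY_INVOKER = re.compile(r"security_invoker\s*=\s*(?:true|on)\b", re.IGNORECASE)


def views_missing_security_invoker(sql: str) -> list[str]:
    """Return the names of views that do not enable security_invoker."""
    missing = []
    for match in CREATE_VIEW.finditer(sql):
        if not SECURITY_INVOKER.search(match.group("rest")):
            missing.append(match.group("name"))
    return missing


# The two SQL patterns from the comparison, with the SELECT body left elided.
mcp_only = "CREATE VIEW team_summary_view AS SELECT ..."
with_skill = "CREATE VIEW team_summary_view WITH (security_invoker = true) AS SELECT ..."

print(views_missing_security_invoker(mcp_only))    # ['team_summary_view']
print(views_missing_security_invoker(with_skill))  # []
```

A check like this only catches the omission after the fact; the skill's role in Rodrigues's account is to keep the agent from writing the unsafe view in the first place.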

That difference is the failure mode Rodrigues used to motivate the rest of the talk. MCP gave the agent a way to interact with Supabase. It did not, by itself, tell the agent the product-specific judgment call that mattered. The skill supplied the instruction the agent otherwise lacked.

Rodrigues did not present skills as a replacement for MCP. He said the earlier “MCP versus skills” debate had largely settled into a recognition that the two have different roles. The more useful question, in his view, is how to write a product skill that gives agents the missing product knowledge without turning the skill into a stale duplicate of the documentation.

A skill is an index, a brief, and a set of optional resources

Pedro Rodrigues defined skills as folders containing instructions, scripts, and resources that agents can discover and load dynamically when they appear relevant to a task. The important mechanism is progressive loading. The agent first sees the skill’s frontmatter — its name and description — and uses that information to decide whether to load the skill.

Once loaded, the main instruction file, `skill.md`, carries the natural-language guidance: recommended knowledge, best practices, and domain expertise. A skill can also include bundled resources, such as scripts, reference files, templates, or other assets that the agent may need while executing the task.
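Progressive loading can be illustrated with a small sketch. Everything here is an assumption made for illustration: the example frontmatter text, the `SkillHeader` type, and especially `looks_relevant`, which uses naive keyword overlap where a real agent would apply its own judgment.

```python
from dataclasses import dataclass


@dataclass
class SkillHeader:
    name: str
    description: str


def read_frontmatter(skill_text: str) -> SkillHeader:
    """Parse only the leading '---' block; the body below it is not read."""
    lines = skill_text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("skill file has no frontmatter block")
    fields = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, _, value = line.partition(":")
        fields[key.strip()] = value.strip()
    return SkillHeader(fields.get("name", ""), fields.get("description", ""))


def looks_relevant(header: SkillHeader, task: str) -> bool:
    """Naive keyword overlap, standing in for the agent's relevance decision."""
    words = {w.lower().strip(".,") for w in header.description.split()}
    return any(w.lower().strip(".,") in words for w in task.split() if len(w) > 3)


# Hypothetical skill file; the real Supabase frontmatter may read differently.
EXAMPLE_SKILL = """---
name: supabase
description: Guidance for building on Supabase with Postgres, views, and row-level security.
---
# Body: loaded only after the skill is selected
"""

header = read_frontmatter(EXAMPLE_SKILL)
print(looks_relevant(header, "Create a Postgres view for team tasks"))  # True
print(looks_relevant(header, "Write a haiku about autumn"))             # False
```

The point of the sketch is the ordering: the description is the only text the agent is guaranteed to see, so it has to carry enough signal for the skill to be chosen at all.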

That structure creates a design problem. A product team has to decide what belongs in the main file, what should remain in reference files, and what should simply point back to the company’s live documentation. Rodrigues said writing the Supabase skill was deceptively complex because Supabase itself is a complex product. The work was not just “write down what Supabase is.” It was deciding which instructions were stable enough, important enough, and short enough to put directly in the agent’s path.

Supabase’s skill was announced as an open-source set of instructions for AI coding agents. It was described as covering “18+ integrations” with “~100 tests,” installable with:

`npx skills add supabase/agent-skills --skill supabase`

Rodrigues said the point was to teach agents how to build on Supabase correctly, not merely to give them a broader API surface.

Do not duplicate the docs; force the agent toward the current source of truth

The first design principle Rodrigues offered was to describe intent rather than means. His shorthand was: do not duplicate information. A product skill should not become a parallel documentation site, because that creates the same maintenance problem every software team already tries to avoid.

For Supabase, the skill should point the agent to the current documentation and tell it how to look there. Rodrigues said agents tend to fall back to training data and can be “lazy” or “stubborn” about admitting they need fresh information. The skill therefore has to be explicit and persistent: search the documentation, use the right source, and do not rely on stale patterns.

Supabase’s documentation lookup path had three routes. The first choice was an MCP `search_docs` tool: a structured search against current documentation. The first fallback was appending `.md` to a documentation URL to retrieve raw Markdown from the same source as supabase.com/docs. The final fallback, when MCP is unavailable, was native web search.
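The three routes amount to an ordered fallback list. The sketch below only plans the order and makes no network or tool calls; `DocsRoute`, `plan_docs_lookup`, the method labels, and the example URL path are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DocsRoute:
    method: str  # "mcp_search_docs", "raw_markdown", or "web_search"
    target: str  # the query or URL handed to that method


def plan_docs_lookup(
    query: str, docs_url: Optional[str], mcp_available: bool
) -> list[DocsRoute]:
    """Return the lookup routes to try, in priority order."""
    routes = []
    if mcp_available:
        # First choice: structured search through the MCP search_docs tool.
        routes.append(DocsRoute("mcp_search_docs", query))
    if docs_url:
        # First fallback: append .md to a docs URL to get raw Markdown.
        routes.append(DocsRoute("raw_markdown", docs_url.rstrip("/") + ".md"))
    # Final fallback: the agent's native web search.
    routes.append(DocsRoute("web_search", query))
    return routes


# Hypothetical page path, used only to show the .md rewrite.
plan = plan_docs_lookup(
    query="row level security on views",
    docs_url="https://supabase.com/docs/guides/example-page",
    mcp_available=False,
)
for route in plan:
    print(route.method, "->", route.target)
```

Writing the order down explicitly mirrors what the skill has to do in prose: tell the agent which source to try first and what to do when that source is missing.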

Supabase is also experimenting with exposing documentation over SSH. Rodrigues described the premise as giving agents a file-system-like interface to the docs, because agents are already competent at navigating files and using Linux-style tools such as `grep`, `ls`, and `cat`. Instead of presenting documentation only as web pages, Supabase is testing a remote interface that lets agents treat the documentation as something they can inspect like a filesystem.

He briefly connected that idea to semantic search during the Q&A. Asked about demand for vector databases, Rodrigues said customers have been exploring vectors more, especially for embeddings. The use case he was most interested in was semantic search. Applied to docs-over-SSH, that could augment familiar shell-like navigation with richer retrieval, rather than leaving agents to search documentation naively with bash tools alone.

If a rule can be skipped, put it in the main skill file

Pedro Rodrigues said Supabase learned that reference files are not reliable places for critical rules. Even after an agent loads a skill, it may not load the reference files. If a task requires one reference file, the agent may skip it. If it requires two, Rodrigues said it will “most likely” not load both. Three or four reference files are, in his telling, effectively out of reach for anything the agent cannot afford to miss.

That observation changed how Supabase structured the skill. Rodrigues said Supabase initially put its security checklist in a reference file and saw agents miss it. The team moved those rules into `skill.md`.

The inline checklist shown in the talk included:

- use `security_invoker = true` on views;
- remember that `UPDATE` requires a `SELECT` policy;
- never expose the `service_role` key.

The reason was not that these rules are the whole of Supabase security. It was that missing them can cause real damage, and they are unlikely to change often enough to justify hiding them in optional material.

Rodrigues’s principle was blunt: “If something can get skipped, it will be skipped.” For product teams writing skills, that means the main file should contain the stable, high-severity rules that define safe use of the product. Reference files are for material that can be loaded opportunistically. They are not for instructions where omission produces a security exposure.
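A team could guard against critical rules drifting back into optional files with a small check in CI. This is a sketch under assumptions: the rule identifiers and the exact phrases searched for are hypothetical, and a real skill may word each rule differently.

```python
# Hypothetical rule ids mapped to a phrase expected in the main skill file.
CRITICAL_RULES = {
    "security_invoker": "security_invoker = true",
    "update_needs_select": "UPDATE requires a SELECT policy",
    "service_role": "service_role",
}


def missing_critical_rules(main_file_text: str) -> list[str]:
    """Return ids of critical rules that the main skill file does not mention."""
    text = main_file_text.lower()
    return [
        rule_id
        for rule_id, phrase in CRITICAL_RULES.items()
        if phrase.lower() not in text
    ]


draft = "Always use security_invoker = true on views."
print(missing_critical_rules(draft))  # ['update_needs_select', 'service_role']
```

Phrase matching is crude, but it turns "this rule must live in the main file" into something a build can fail on.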

Opinionated workflows matter more than comprehensive product coverage

Rodrigues’s third principle was to be opinionated. Product teams know how their product should be used, and the skill should encode those workflow judgments rather than merely enumerate capabilities.

His example was Supabase schema management. Supabase, which Rodrigues described for unfamiliar listeners as a backend-as-a-service providing database, storage, authentication, and other backend features, lets agents interact with and modify database schemas. The workflow Supabase chose for the skill was deliberately staged.

First, in development or staging, the agent should use direct DDL operations and iterate freely with `execute_sql` or `supabase db query`. Second, once the schema changes look right, the agent should run Supabase advisors, which Rodrigues described as lint-like checks for security and performance issues. Third, only after those advisors pass should the agent formalize the change into one clean migration file.

The workflow is meant to prevent an agent from creating a migration file for every minor schema change while it is still exploring. Rodrigues presented this not as a universal database rule, but as the workflow Supabase believes works best for its platform. That is why it belongs in the skill.
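The staged workflow can be pictured as a gate in front of migration creation. The sketch below is an illustration only: `SchemaSession` and its methods are hypothetical, the advisor results are passed in as a plain list rather than fetched from Supabase, and the DDL statement is an invented example.

```python
from dataclasses import dataclass, field


@dataclass
class SchemaSession:
    """Tracks one round of schema exploration in a dev or staging project."""

    pending_ddl: list[str] = field(default_factory=list)
    advisors_passed: bool = False

    def apply_ddl(self, statement: str) -> None:
        # Step 1: iterate freely. Any new change invalidates earlier advisor results.
        self.pending_ddl.append(statement)
        self.advisors_passed = False

    def record_advisor_run(self, issues: list[str]) -> None:
        # Step 2: advisors pass only when they report no issues.
        self.advisors_passed = not issues

    def build_migration(self) -> str:
        # Step 3: one migration, and only after the advisors have passed.
        if not self.pending_ddl:
            raise RuntimeError("nothing to migrate")
        if not self.advisors_passed:
            raise RuntimeError("run advisors and fix issues before writing a migration")
        return "\n".join(self.pending_ddl) + "\n"


session = SchemaSession()
session.apply_ddl("ALTER TABLE tasks ADD COLUMN done boolean;")  # invented example DDL
session.record_advisor_run(issues=[])
migration_sql = session.build_migration()
print(migration_sql)
```

Resetting `advisors_passed` on every new change is the design choice that matters: it keeps a stale advisor run from authorizing a migration that includes later, unchecked edits.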

This is also where the skill’s purpose differs from a tool definition. MCP can let the agent call database tools. The skill can say when to call them, in what order, and what “good” looks like before the agent moves from exploration to a formal migration.

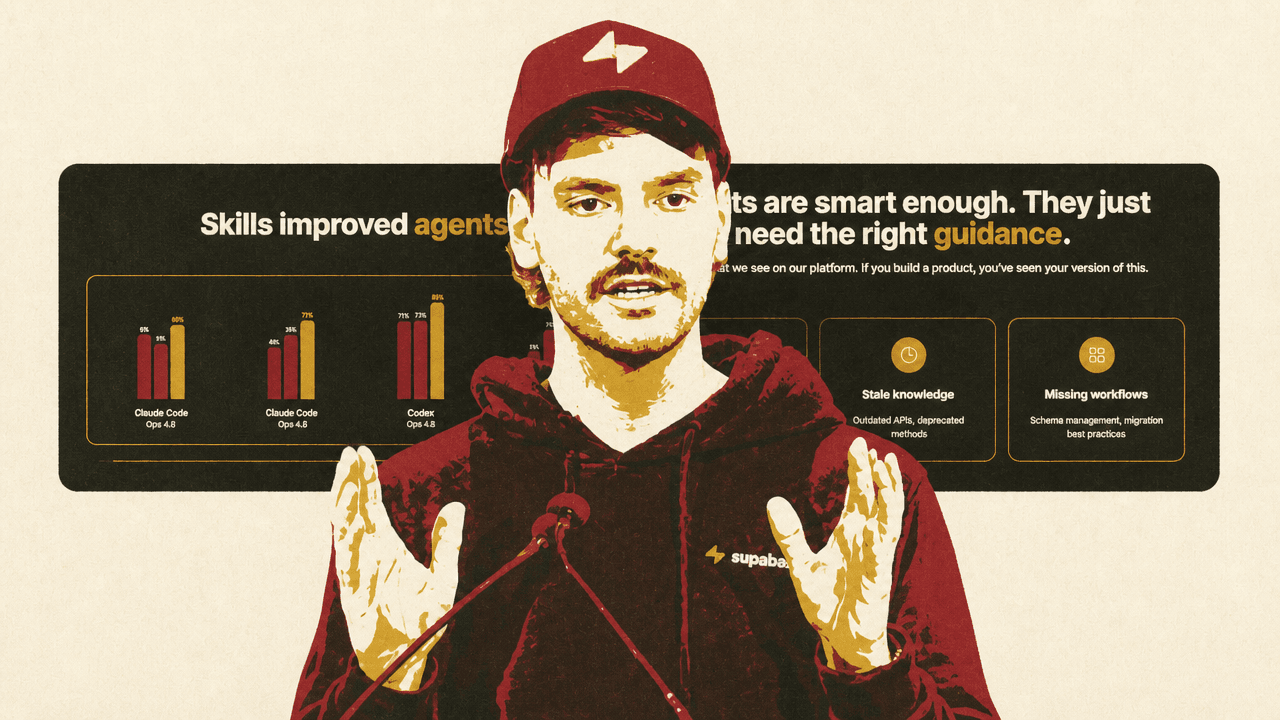

The evals showed MCP and skills solving different parts of the problem

Supabase tested the skill with evals across six Supabase scenarios, four agent-and-model combinations, and three conditions: baseline, MCP only, and MCP plus skill. Rodrigues described evals as tests for agents and LLM behavior — including tool calls and reasoning — analogous to CI tests for code. Supabase scored task completeness from 0 to 100 using Braintrust.

The results showed MCP plus skill scoring higher than MCP only, and MCP only scoring higher than baseline, in every model combination displayed.

| Agent and model combination displayed | Baseline | MCP only | MCP + skill |
|---|---|---|---|
| Claude Code / Claude 3.5 Sonnet | 42% | 62% | 82% |
| Claude Code / Claude 3.5 Opus | 42% | 70% | 87% |
| Codex / GPT-4o | 52% | 62% | 82% |
| Codex / GPT-4o Mini | 58% | 62% | 78% |

There was a naming discrepancy between the displayed chart and Rodrigues’s narration. The table above uses the labels displayed with the numbers: Claude 3.5 Sonnet, Claude 3.5 Opus, GPT-4o, and GPT-4o Mini. In speech, Rodrigues referred to Claude Code with Opus 4.6 and Sonnet 4.6, and Codex with GPT-5.4 and GPT-5.4 Mini.

Rodrigues’s interpretation of the evals was that tools alone are not enough. Agents need behavioral guidance, not just capabilities. The lift appeared across Claude and Codex in the displayed results, which he described as evidence that the pattern is agent-agnostic. The bottleneck, in his phrasing, was guidance: smart models need the right context and the right tools.
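The size of each step can be read directly off the displayed scores. The short calculation below uses only the numbers in the table; the variable and function names are illustrative.

```python
from statistics import mean

# Scores as displayed: (baseline, MCP only, MCP + skill), in percent.
SCORES = {
    "Claude Code / Claude 3.5 Sonnet": (42, 62, 82),
    "Claude Code / Claude 3.5 Opus": (42, 70, 87),
    "Codex / GPT-4o": (52, 62, 82),
    "Codex / GPT-4o Mini": (58, 62, 78),
}


def lifts(scores):
    """Point gains from adding MCP to baseline, then the skill to MCP."""
    return {
        label: {"mcp_over_baseline": mcp - base, "skill_over_mcp": skill - mcp}
        for label, (base, mcp, skill) in scores.items()
    }


result = lifts(SCORES)
mcp_gain = [row["mcp_over_baseline"] for row in result.values()]
skill_gain = [row["skill_over_mcp"] for row in result.values()]

print(mcp_gain, mean(mcp_gain))      # [20, 28, 10, 4] 15.5
print(skill_gain, mean(skill_gain))  # [20, 17, 20, 16] 18.25
```

In these figures the skill adds 16 to 20 points on top of MCP in every row, while the gain from MCP alone ranges from 4 to 28 points. The skill's contribution is therefore the more consistent of the two across the displayed combinations.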

That interpretation matches the pattern in the row-level-security example. MCP exposed capabilities; the skill carried environment-specific assumptions about how to use those capabilities safely.

Distribution is still a constraint on whether skills work in practice

The Q&A surfaced one constraint Rodrigues did not treat as solved: skill distribution. Asked how Supabase distributes skills inside the organization (by pasting, a shared repository, a Git repository, or a package manager), he said distribution is one of the current downsides of skills.

There are competing approaches. Rodrigues mentioned Vercel’s skills package and plugins bundled with MCP servers or other systems, but said some approaches are model-specific. In his view, distributing skills in general remains an unsolved problem, with different players trying to define the registry or distribution mechanism.

Internally, Supabase packages skills in repositories themselves. A team can create a `.claude` plugin, `.cursor` plugin, or equivalent inside the repo, making the skill available or discoverable to the relevant agent environment. If the repository is open source or otherwise accessible, a skills package can fetch it.

The constraint ties back to the central claim. A skill only helps if the agent can find it, load it, and use the right version in the relevant working environment. Rodrigues’s answer was practical rather than final: for now, Supabase uses repositories and environment-specific plugin conventions while the broader packaging layer remains unsettled.

The practical recipe is small, live, and tested

Rodrigues ended with three implementation rules for teams building product skills.

Use the docs. Point to the single source of truth instead of copying it into the skill. The skill should tell the agent where to look and how to retrieve current information.

Be opinionated. Encode the product team’s judgment calls, especially around workflows that agents are likely to get wrong if left to infer from APIs alone.

Start minimal. Rodrigues said model vendors and blog posts about skills tend to converge on the same advice: begin with fewer than 100 lines, test, iterate, expand, and do not be afraid to create new versions.

The Supabase lesson is that a product skill is not valuable because it contains everything. It is valuable because it contains the right stable rules, points to living sources for changing knowledge, and gives the agent a workflow that reflects how the product should actually be used.