Continuous Agents Need Stateful Compute, Not Traditional CI/CD

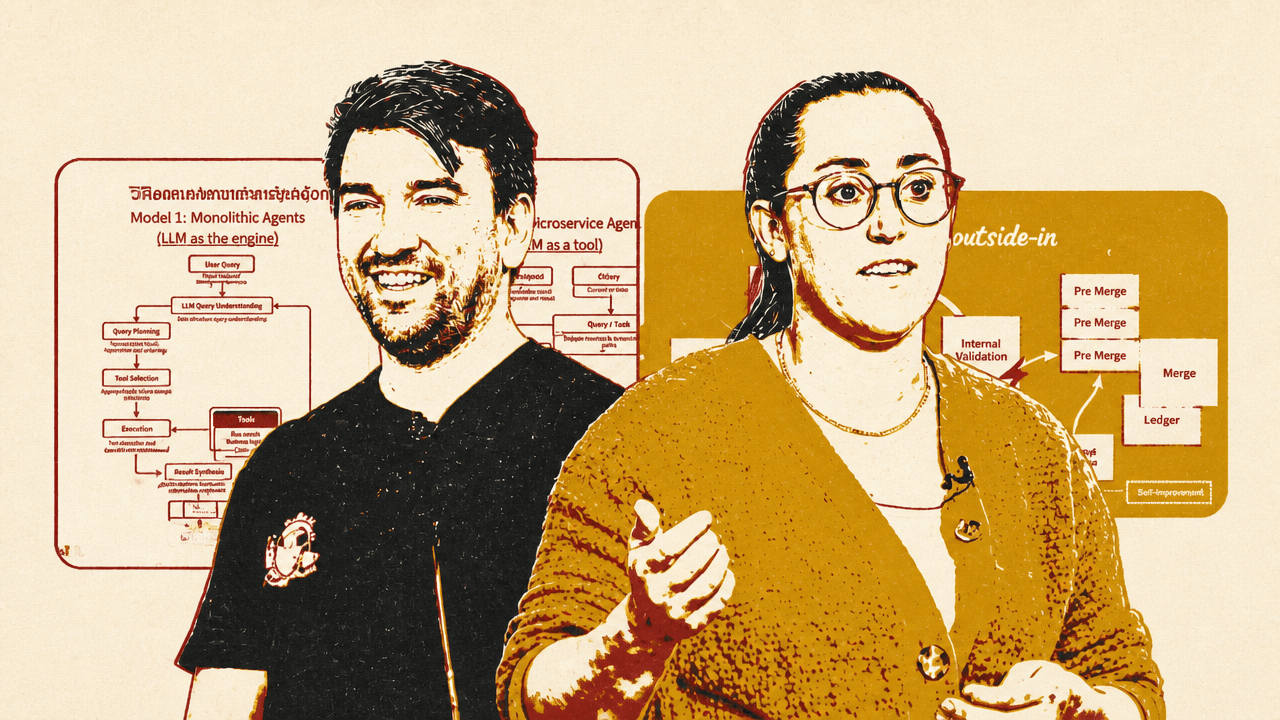

Madison Faulkner of NEA and Hugo Santos of Namespace argue that traditional CI/CD is organized around human-paced pull requests and starts to fail when autonomous agents generate continuous, overlapping streams of code. Their proposed replacement keeps validation inside a stateful agent loop, uses caching and orchestration to avoid cold starts, and moves completed work into a pre-merge layer where humans review intent and outcome rather than every diff. The underlying CI functions remain, but the pull request stops being the system’s basic unit of work.

CI/CD was built around human latency; agents remove the hiding place

Madison Faulkner frames the problem as a mismatch between the assumptions of traditional CI/CD and the behavior of agentic software. The old system assumes a human-paced sequence: a developer submits a small number of changes as pull requests, colleagues review them, GitHub Actions or an equivalent system runs build, test, and deploy steps, and the developer iterates on any failures. In the workflow Faulkner describes, a human developer with repository permissions submits roughly one diff a day to one repository, and each pull request takes about ten minutes to verify.

That design can tolerate slowness because human work is slow enough to mask it. Machines may take time to restore caches, run tests, and coordinate merges, but the developer is already waiting on review, context switching, or making the next change. Hugo Santos later makes the same point more explicitly: “machine latency hides behind human latency.” When humans write code slowly, package changes as PRs, and validate after push, delayed feedback is survivable.

Agentic development changes the traffic pattern. Faulkner describes agents that use the same CI/CD systems but generate N PRs across N repositories. The verification time per change may stay about the same, but the arrival pattern does not. Instead of a predictable flow of one or two changes, the system sees bursts, fan-out, and short-lived branches.

| Mode | Change pattern | Queue and cache behavior |
|---|---|---|
| Human developer | One PR per day | Predictable queues; local caches often warm |
| Agent workflow | Thousands of short-lived branches and PRs | Runners queue; cache miss rates spike |
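A back-of-the-envelope sketch shows why the same per-change verification time feels so different under bursty arrivals. It assumes the roughly ten-minute verification from Faulkner’s example, a fixed pool of identical runners, and a burst in which every change arrives at once. The function names and the runner count are illustrative, not figures from the talk.

```python
import math

def burst_makespan_minutes(changes: int, runners: int, verify_minutes: float = 10.0) -> float:
    """Minutes until the last change in a simultaneous burst finishes verifying.

    Identical jobs on identical runners run in ceil(changes / runners) waves.
    """
    if runners <= 0:
        raise ValueError("need at least one runner")
    if changes <= 0:
        return 0.0
    return math.ceil(changes / runners) * verify_minutes

def mean_wait_minutes(changes: int, runners: int, verify_minutes: float = 10.0) -> float:
    """Average minutes a change sits in the queue before a runner picks it up."""
    if runners <= 0:
        raise ValueError("need at least one runner")
    if changes <= 0:
        return 0.0
    # Change i (0-based) starts in wave i // runners.
    total = sum((i // runners) * verify_minutes for i in range(changes))
    return total / changes

print(burst_makespan_minutes(1, runners=20))     # 10.0   (one human PR)
print(burst_makespan_minutes(1000, runners=20))  # 500.0  (burst of agent PRs)
print(mean_wait_minutes(1000, runners=20))       # 245.0
```

With a hypothetical pool of 20 runners, a single human PR finishes in 10 minutes, while a burst of 1,000 agent PRs takes 500 minutes to clear and each change waits 245 minutes on average before a runner picks it up. The model ignores caching entirely; cache misses would lengthen every wave.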

The issue is not merely that there are more commits. It is that the existing pipeline’s bottlenecks compound under agent behavior. Branches become ephemeral. Caches are thrashed. Runners saturate. Different agents push the same codebase in several directions at once. Faulkner says that at some point “merging all these different versions together is really impossible,” and identifies that as the moment the current CI/CD model breaks down.

A Namespace slide showing GitHub activity over the prior three months displayed a sharp rise in commits, alongside lines added and deleted. Faulkner points to that pattern as evidence that activity has “gotten absolutely crazy,” emphasizing the spike in commits and churn.

The first replacement layer is acceleration, but the target is orchestration

Madison Faulkner’s proposed starting point is not to throw away existing CI/CD immediately. It is to accelerate it. She describes an “agent cache” inserted over existing infrastructure such as GitHub Actions, runners, repositories, build tools, and source-code management systems. In the near term, that cache speeds up slow build, test, and deploy stages — a problem she describes as common among current teams.

But the cache is not only a performance optimization. It becomes an orchestration layer. In Faulkner’s architecture, the CI/CD cache sits between agents, repositories, runners, build tools, and source-code management, with hardware-software co-design directing parallelizable or real-time tasks. She calls that hardware and software co-design “critical.”
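Faulkner does not specify how the agent cache keys its entries. One common technique from build systems is content addressing: hash every input that determines a step’s result and reuse the stored result whenever the hash matches, so many agents requesting the same build share a single execution. The sketch assumes a step is fully determined by a commit, a command, and a toolchain version; `StepCache`, its fields, and the example values are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class StepCache:
    """Hypothetical content-addressed cache shared by many agents."""
    entries: dict[str, str] = field(default_factory=dict)
    hits: int = 0
    misses: int = 0

    @staticmethod
    def key(inputs: dict[str, str]) -> str:
        # Canonical JSON (sorted keys) so identical inputs always hash identically.
        blob = json.dumps(inputs, sort_keys=True).encode()
        return hashlib.sha256(blob).hexdigest()

    def run(self, inputs: dict[str, str], execute: Callable[[], str]) -> str:
        k = self.key(inputs)
        if k in self.entries:
            self.hits += 1
            return self.entries[k]
        self.misses += 1
        result = execute()  # only the first requester pays for the work
        self.entries[k] = result
        return result

cache = StepCache()
inputs = {"commit": "abc123", "command": "make test", "toolchain": "gcc-13"}
for _ in range(3):  # three agents ask for the same step
    cache.run(inputs, lambda: "tests passed")
print(cache.hits, cache.misses)  # 2 1
```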

The architecture she sketches expands from caching into intake, orchestration, identity, and retries. Intake requires ingress shaping and rate limiting. The cache requires global orchestration. The orchestration layer routes work to the right runners. Identity introduces short-lived credentials for agents. Retries include parsing logs and feeding failures back into the system at scale. In other words, the acceleration layer begins as a way to make existing CI faster, but it grows into the orchestration layer for agentic development.
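The intake stage’s ingress shaping and rate limiting could take many forms; a token bucket is one standard choice, sketched here under stated assumptions. Each agent identity gets its own bucket, which permits a short burst up to `capacity` and then admits work at `refill_per_sec`. `Intake`, `TokenBucket`, and both parameters are hypothetical, and time is passed in explicitly so the behavior is deterministic.

```python
from dataclasses import dataclass

@dataclass
class TokenBucket:
    """Per-agent admission control: allow short bursts, cap the sustained rate."""
    capacity: float        # maximum burst size (jobs)
    refill_per_sec: float  # sustained admission rate (jobs per second)
    tokens: float = 0.0
    last: float = 0.0

    def __post_init__(self) -> None:
        self.tokens = self.capacity  # start with a full bucket

    def try_admit(self, now: float, cost: float = 1.0) -> bool:
        # Refill for the elapsed time, never above capacity.
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # caller should queue or defer the job

class Intake:
    """Hypothetical intake layer: one token bucket per agent identity."""

    def __init__(self, capacity: float, refill_per_sec: float) -> None:
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.buckets: dict[str, TokenBucket] = {}

    def submit(self, agent_id: str, now: float) -> bool:
        if agent_id not in self.buckets:
            self.buckets[agent_id] = TokenBucket(self.capacity, self.refill_per_sec, last=now)
        return self.buckets[agent_id].try_admit(now)

intake = Intake(capacity=5, refill_per_sec=0.5)
print([intake.submit("agent-a", now=0.0) for _ in range(8)])
# [True, True, True, True, True, False, False, False]
print([intake.submit("agent-a", now=4.0) for _ in range(3)])
# [True, True, False]: four seconds refilled two tokens
```

A `False` result means the job should be queued or deferred rather than sent to a runner immediately.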

That shift matters because agents are not just faster versions of human developers. They create a different traffic pattern against development infrastructure. A human may work locally, keep caches warm, and submit occasional PRs. An agent fleet may create many short-lived branches, retry failures, request many builds, and generate overlapping changes. Faulkner’s claim is that the middle of the software development lifecycle — build, test, integrate, release, deploy — is where the next infrastructure wave will be forced to innovate.

She situates this within a broader change in agent architecture. NEA’s framing contrasts “monolithic agents,” where the LLM acts as the engine, with “microservice agents,” where the LLM is one tool inside a broader orchestration layer with subtasks, memory updates, data, and other tools. Faulkner argues that agentic software is moving toward the microservice model. Software development infrastructure, under that model, cannot assume one assistant operating like one person at a keyboard. It has to coordinate many agentic services acting continuously.

The pull request is the wrong unit of work for continuous agents

Hugo Santos takes Faulkner’s diagnosis further by treating the pull request as the wrong unit of work. He argues that up to now, “the human is the agent.” A developer starts with an intent, writes code, opens a pull request, waits for CI, receives human review, and eventually merges. But each stage can send the developer back through the loop. A PR may fail formatting. Tests may fail. A reviewer may object to an API choice. The merge queue may reject the change because another colleague landed code first.

That loop is tolerable at human scale. The “opportunity to merge” — the time between working on code and getting it into the repository — can be large because the total number of concurrent changes is limited. As the rate of change increases, Santos says, that opportunity becomes “really, really important.”

The PR was designed for human review. It assumes scarce attention, delayed feedback, and discrete handoffs. A developer packages up work, sends it to another person, waits, and responds. Agents invert those assumptions. Code generation is cheap. Work is continuous. Validation needs to move into the inner loop rather than run as a later phase after push. Latency becomes a first-class problem.

In the old model, CI performs four necessary functions. Santos lists validation (does the code work?), enforcement of invariants, coordination across changes, and governance over whether a change is allowed. CI checks for regressions. It verifies that code is compiled and built from a known source. It determines whether concurrent changes conflict. It helps decide whether a change is permitted.

But when agents generate many changes continuously, those functions cannot remain attached to the PR boundary. Human reviewers become overwhelmed by volume. Santos says reviewers need to focus on intent rather than implementation. The merge itself also becomes a systems problem. He compares it to a high-performance database: there is serialization, a single ledger, and a need to lock the database in order to commit. With humans, the lock interval can be large relative to the rate of change. With machines, the required interval becomes short, because there are many more transactions competing to land.
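A simple queueing approximation makes the lock-interval point concrete. Assume every merge holds the ledger lock for `lock_seconds` while it is validated and committed, and treat the merge queue as an M/M/1 queue (Poisson arrivals, exponential service); that model is a simplifying assumption, not something Santos specifies. The lock can then serve at most 86,400 / `lock_seconds` merges a day, and the mean wait grows as ρ/(1 − ρ) times the lock interval, where ρ is the lock’s utilization.

```python
SECONDS_PER_DAY = 86_400

def max_merges_per_day(lock_seconds: float) -> float:
    """Upper bound on serialized merges if each one holds the lock this long."""
    return SECONDS_PER_DAY / lock_seconds

def mm1_mean_queue_wait(arrivals_per_day: float, lock_seconds: float) -> float:
    """Mean seconds a change waits for the lock under an M/M/1 approximation."""
    rho = arrivals_per_day * lock_seconds / SECONDS_PER_DAY  # lock utilization
    if rho >= 1:
        return float("inf")  # arrivals outpace the lock; the queue grows without bound
    return rho * lock_seconds / (1 - rho)

print(max_merges_per_day(600))               # 144.0
print(round(mm1_mean_queue_wait(20, 600)))   # 97
print(round(mm1_mean_queue_wait(140, 600)))  # 21000
print(mm1_mean_queue_wait(1000, 600))        # inf
```

With a ten-minute lock, the ceiling is 144 merges a day. Twenty engineers merging once a day each keep utilization near 14% and wait about 97 seconds on average; 140 agent merges a day push utilization to about 97% and the mean wait to 21,000 seconds (almost six hours); 1,000 a day exceed the ceiling entirely. Under this model, the remaining lever is a shorter lock interval.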

The act of merging is starting to look a lot like high-performance database problems.

That analogy is central to his argument. The repository becomes a ledger. The merge queue becomes a serialization mechanism. At high transaction counts, rollbacks increase. The bottleneck is no longer only whether a test passes, but whether a change can be reconciled into a moving shared history quickly enough.
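Santos’s analogy maps naturally onto optimistic concurrency control, sketched here as one possible reading rather than Namespace’s design. Each change records the ledger position it started from and the files it writes; at commit time it is checked against everything that landed after its base, and any overlap forces a rollback (rebase and revalidate). `Ledger` and `Change` are hypothetical names, and file-level conflict detection is a simplifying assumption.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Change:
    change_id: str
    base: int              # ledger position the agent started from
    files: frozenset[str]  # paths the change writes

@dataclass
class Ledger:
    """Append-only history; every commit is serialized through try_commit."""
    entries: list[Change] = field(default_factory=list)

    @property
    def tip(self) -> int:
        return len(self.entries)

    def try_commit(self, change: Change) -> bool:
        # Validate against everything that landed after the change's base.
        for landed in self.entries[change.base:]:
            if landed.files & change.files:
                return False  # conflict: roll back, rebase, revalidate
        self.entries.append(change)
        return True

ledger = Ledger()
a = Change("a", base=0, files=frozenset({"api.py"}))
b = Change("b", base=0, files=frozenset({"db.py"}))
c = Change("c", base=0, files=frozenset({"api.py", "docs.md"}))
print([ledger.try_commit(x) for x in (a, b, c)])  # [True, True, False]
rebased = Change("c", base=ledger.tip, files=c.files)
print(ledger.try_commit(rebased), ledger.tip)     # True 3
```

Two changes that start from the same base and touch disjoint files both land; a third that also edits `api.py` is rolled back and succeeds only after rebasing onto the new tip. The more changes start from the same base, the more of them collide.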

The near-term architecture removes PRs and keeps validation inside the agent loop

Hugo Santos says the architecture his team already uses, and that he sees among companies “at the forefront,” has no PRs. It begins with intent and plan. The goal is written down somewhere: a Linear ticket, a Slack message, or another codified spec. That intent enters an agent harness; he mentions Claude Code, Aider, Cursor, and Factory as examples of tools that might occupy this role.

The agent checks out code and begins implementing the plan. Invariants are applied from the beginning. For example, the agent starts from a well-known commit rather than an arbitrary local state. Internal validation then uses repository assets to determine whether the change is correct: it builds, tests, and evaluates the work inside the loop. The agent reports back to the human — “Hey, I just finished. Does it look good? Should I change something else?” — and the human often replies with the instruction Santos says his team uses constantly: continue.
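The loop Santos describes can be sketched as a small driver function. Everything here is an assumption for illustration: `generate` stands in for the agent harness producing a patch, `validate` stands in for building and testing with repository assets (returning `None` on success or a failure message otherwise), and `vetted_commits` models the invariant of starting from a well-known commit. All names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class Attempt:
    base_commit: str
    patch: str
    passed: bool
    iterations: int

def run_inner_loop(
    plan: str,
    base_commit: str,
    vetted_commits: set[str],
    generate: Callable[[str, str, Optional[str]], str],
    validate: Callable[[str, str], Optional[str]],
    max_iterations: int = 5,
) -> Attempt:
    # Invariant applied up front: only start from a known, vetted commit.
    if base_commit not in vetted_commits:
        raise ValueError(f"refusing to start from unvetted commit {base_commit}")
    feedback: Optional[str] = None
    patch = ""
    for i in range(1, max_iterations + 1):
        patch = generate(plan, base_commit, feedback)  # code generation
        feedback = validate(base_commit, patch)        # internal build + test
        if feedback is None:
            return Attempt(base_commit, patch, True, i)  # report back to the human
    return Attempt(base_commit, patch, False, max_iterations)

# Stubs: the first patch fails a test, the revision passes.
def fake_generate(plan: str, base: str, feedback: Optional[str]) -> str:
    return "patch-v2" if feedback else "patch-v1"

def fake_validate(base: str, patch: str) -> Optional[str]:
    return None if patch == "patch-v2" else "test_login failed"

result = run_inner_loop("add login", "c0ffee", {"c0ffee"}, fake_generate, fake_validate)
print(result)
# Attempt(base_commit='c0ffee', patch='patch-v2', passed=True, iterations=2)
```

Returning the `Attempt` is the point where the agent reports back; a human reply of “continue” would simply start another run with an updated plan.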

The “tomorrow: human outside the loop” diagram from the talk makes the shift explicit. Intent and plan feed code generation inside a stateful environment; internal and external validation sit inside the automated loop; world signals can update the plan; completed work enters a pre-merge queue before merge and ledger; and human approval moves outside the loop rather than sitting at every validation point.

This is already faster than the PR-centered model, but Santos argues it is still not fast enough because external validation still has a human in the loop. The next step, which he places in “weeks to months, not years,” removes that human from the inner validation loop. Code generation gets faster as inference improves. Builds and tests must become extremely fast as well. “You cannot go and spend 15 minutes running your tests or 45 minutes or any sort of minutes,” he says, because every delay slows the loop.

External validation is then performed by other agents. Santos gives examples: a security-focused LLM, or an API-conformance LLM, providing feedback that the main harness incorporates back into the code. These evaluators are not described as replacements for all human judgment; rather, they move routine validation and feedback into the automated loop so that humans are not required at every iteration.
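External validation by other agents fits the loop if each evaluator is treated as an independent reviewer whose findings are merged into feedback for the main harness. In this sketch the two reviewers are keyword-matching stand-ins for the security-focused and API-conformance LLMs Santos mentions; real versions would call a model. The reviewer and function names are hypothetical; `concurrent.futures.ThreadPoolExecutor` is the standard-library API used to run the reviewers in parallel.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# A reviewer takes a patch and returns findings; an empty list means approve.
Reviewer = Callable[[str], list[str]]

def security_reviewer(patch: str) -> list[str]:
    # Stand-in for a security-focused LLM call (hypothetical).
    return ["possible hard-coded secret"] if "API_KEY=" in patch else []

def api_conformance_reviewer(patch: str) -> list[str]:
    # Stand-in for an API-conformance LLM call (hypothetical).
    return ["endpoint not in API spec"] if "/v0/" in patch else []

def external_validation(patch: str, reviewers: dict[str, Reviewer]) -> list[str]:
    """Run every reviewer concurrently and merge findings into one feedback list."""
    if not reviewers:
        return []
    with ThreadPoolExecutor(max_workers=len(reviewers)) as pool:
        futures = {name: pool.submit(fn, patch) for name, fn in reviewers.items()}
        return [f"{name}: {msg}" for name, fut in futures.items() for msg in fut.result()]

reviewers = {"security": security_reviewer, "api": api_conformance_reviewer}
print(external_validation("GET /v1/users", reviewers))  # []
print(external_validation("API_KEY=abc; GET /v0/users", reviewers))
# ['security: possible hard-coded secret', 'api: endpoint not in API spec']
```

An empty list means every reviewer approved; otherwise the merged findings go back to the harness as the next round of feedback, with no human in that iteration.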

For that loop to work, Santos argues, the environment has to be stateful. Memory and state matter because starting from scratch each time compounds delay. The system must preserve enough context and build state to behave more like an engineer’s workstation: incremental, warm, and efficient. The loop also receives “world signals.” The plan may change. Another change may land. The harness has to adapt its intent and plan accordingly, creating a new loop. The target is therefore not a static CI job but a continuously running development process that can update its assumptions as the repository and surrounding requirements move.
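Statefulness is what lets each iteration skip work that has not changed. A minimal sketch of the idea: the workspace keeps a fingerprint for every build target across iterations, where a target’s fingerprint hashes its own source plus its dependencies’ fingerprints, and only targets whose fingerprint changed are rebuilt. `IncrementalWorkspace` is hypothetical, assumes an acyclic dependency graph with every dependency present in `sources`, and records rebuilds instead of running a compiler.

```python
import hashlib

class IncrementalWorkspace:
    """Keeps build fingerprints between agent iterations (hypothetical sketch)."""

    def __init__(self, deps: dict[str, list[str]]) -> None:
        self.deps = deps                        # target -> targets it depends on
        self.fingerprints: dict[str, str] = {}  # state that survives across iterations

    def _fingerprint(self, target: str, sources: dict[str, str], memo: dict[str, str]) -> str:
        if target not in memo:
            h = hashlib.sha256(sources[target].encode())
            for dep in sorted(self.deps.get(target, [])):
                h.update(self._fingerprint(dep, sources, memo).encode())
            memo[target] = h.hexdigest()
        return memo[target]

    def build(self, sources: dict[str, str]) -> list[str]:
        """Return the targets that actually had to be rebuilt."""
        memo: dict[str, str] = {}
        rebuilt = []
        for target in sorted(sources):
            fp = self._fingerprint(target, sources, memo)
            if self.fingerprints.get(target) != fp:
                rebuilt.append(target)  # a real system would compile and test here
                self.fingerprints[target] = fp
        return rebuilt

ws = IncrementalWorkspace({"app": ["lib"], "lib": [], "docs": []})
src = {"app": "main()", "lib": "def f(): ...", "docs": "# readme"}
print(ws.build(src))  # ['app', 'docs', 'lib']  (cold start)
print(ws.build(src))  # []                      (warm, nothing changed)
src["lib"] = "def f(): return 1"
print(ws.build(src))  # ['app', 'lib']          (lib and its dependent)
```

A world signal such as another change landing would arrive the same way, as new `sources` passed to `build`, and would cost only the targets it touches.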

Pre-merge separates machine completion from human acceptance

In Hugo Santos’s proposed architecture, completed changes do not go straight into the repository. They enter a pre-merge layer: a queue of changes that would have been merged if merging were fast enough and if acceptance had already been resolved. The reason for the intermediate layer is concurrency. Many agents may be operating in parallel on the same parts of the codebase. The system needs a reconciliation process that preserves serializability before changes enter the repository ledger.
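The reconciliation step can be sketched as a function that turns a batch from the pre-merge queue into a serial merge order. This is a greedy heuristic under stated assumptions, not Santos’s algorithm: candidates are considered in arrival order, and one is merged only if it has been approved and writes no file already claimed earlier in the batch. Because the accepted changes touch disjoint files, any order among them yields the same result, which keeps the merge serializable at this file-level granularity. `Candidate` and `reconcile` are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Candidate:
    change_id: str
    files: frozenset[str]
    approved: bool = False  # outcome of pre-merge / external approval

def reconcile(queue: list[Candidate]) -> tuple[list[str], list[str]]:
    """Split a pre-merge batch into a serial merge order and a deferred set.

    Deferred candidates must rebase onto the new tip and revalidate.
    """
    claimed: set[str] = set()
    merge_order: list[str] = []
    deferred: list[str] = []
    for cand in queue:
        if cand.approved and not (cand.files & claimed):
            merge_order.append(cand.change_id)
            claimed |= cand.files  # later candidates may not touch these files
        else:
            deferred.append(cand.change_id)
    return merge_order, deferred

batch = [
    Candidate("auth-1", frozenset({"auth.py"}), approved=True),
    Candidate("auth-2", frozenset({"auth.py", "ui.py"}), approved=True),
    Candidate("billing", frozenset({"billing.py"}), approved=True),
    Candidate("ui", frozenset({"ui.py"}), approved=False),
]
print(reconcile(batch))  # (['auth-1', 'billing'], ['auth-2', 'ui'])
```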

The human role moves to this pre-merge and external approval layer. Santos does not describe humans reviewing every diff. He describes humans reviewing intent and outcome. The approval artifact might be a video showing that a feature works. It might be the output of a security-focused LLM. It might summarize multiple commits or multiple agent attempts, rather than correspond to one PR. Several independent agents may work on related features and have their outputs semantically grouped into something a human can manage.
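Grouping can be sketched as collapsing many agent results into one review item per intent. Santos describes semantic grouping; this sketch substitutes the simplest possible key, an explicit intent identifier such as a ticket ID, so the grouping logic stays visible. `AgentResult`, `review_units`, and the example IDs and evidence names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class AgentResult:
    intent_id: str           # the ticket or spec the work came from
    agent: str
    commits: tuple[str, ...]
    evidence: str            # e.g. a demo video or a reviewer-agent report

def review_units(results: list[AgentResult]) -> list[dict]:
    """Collapse many agent results into one review item per intent."""
    groups: dict[str, list[AgentResult]] = defaultdict(list)
    for r in results:
        groups[r.intent_id].append(r)
    return [
        {
            "intent": intent,
            "agents": sorted({r.agent for r in rs}),
            "commit_count": sum(len(r.commits) for r in rs),
            "evidence": [r.evidence for r in rs],
        }
        for intent, rs in groups.items()
    ]

results = [
    AgentResult("LIN-42", "agent-a", ("c1", "c2"), "demo.mp4"),
    AgentResult("LIN-42", "agent-b", ("c3",), "security-report.txt"),
    AgentResult("LIN-43", "agent-a", ("c4",), "api-report.txt"),
]
for unit in review_units(results):
    print(unit["intent"], unit["agents"], unit["commit_count"])
# LIN-42 ['agent-a', 'agent-b'] 3
# LIN-43 ['agent-a'] 1
```

Three agent results become two review items; whoever reviews `LIN-42` sees two agents, three commits, and two pieces of evidence rather than separate diffs.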

That grouping is important because Santos says the volume is already too high. Within Namespace, he says, the volume of what would historically have been called PRs is four times larger than before. “It’s impossible for a human reviewer to look at every single PR,” he says.

The proposed system therefore changes what humans review. Instead of reading implementation details for every change, they review whether the stated intent was satisfied and whether the produced evidence is acceptable. Governance does not disappear, but it shifts upward into the harness and the rules the team has codified. The harness, in Santos’s phrasing, coerces the change toward following those processes. External approval remains, but it is no longer the synchronous checkpoint for every iteration.

This is also where Santos’s database analogy becomes operational. The pre-merge layer is not just a different review queue. It is the place where many candidate changes are reconciled into a serial order before being appended to the repository ledger. If CI/CD was organized around validating discrete PRs, this architecture is organized around managing concurrent streams of agent work.

The multiverse appears when the repository tip is no longer a stable starting point

Hugo Santos’s more speculative endpoint is what he calls “the multiverse.” If the inner loop becomes extremely fast, agents may not apply a plan only to the latest commit at the tip of the repository. The tip is moving. There may be multiple candidate starting points. Agents may work on multiple commits at the same time to address the same plan.

That possibility increases both compute demand and coordination complexity. Exploring several candidate histories in parallel consumes more resources. It also requires the inner loop to be extremely quick; otherwise, the cost of evaluating many possibilities becomes prohibitive. Santos says resource usage will “blow up” because of the number of candidates being explored at the same time.
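The fan-out Santos describes can be sketched as trying one plan against several candidate base commits in parallel. The sketch evaluates every candidate rather than stopping early, which makes the cost explicit: the number of evaluations grows linearly with the number of bases and multiplies again across concurrent plans. `explore_bases`, `try_plan`, and the base names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Optional

def explore_bases(
    plan: str,
    bases: list[str],
    try_plan: Callable[[str, str], bool],
    max_workers: int = 4,
) -> tuple[Optional[str], int]:
    """Try `plan` on every candidate base in parallel.

    Returns the first base (in the given preference order) where the plan
    validated, plus the number of evaluations spent.
    """
    if not bases:
        return None, 0
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        outcomes = list(pool.map(lambda base: try_plan(plan, base), bases))
    winner = next((b for b, ok in zip(bases, outcomes) if ok), None)
    return winner, len(bases)

def try_plan(plan: str, base: str) -> bool:
    # Stub: pretend the plan only validates on the parent of the tip.
    return base == "tip~1"

print(explore_bases("add login", ["tip", "tip~1", "tip~2"], try_plan))  # ('tip~1', 3)
```

Ten plans explored across five bases each would cost fifty evaluations, which is why the multiverse depends on a fast, incremental inner loop.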

His answer is performance and efficiency: do not do unnecessary work, do not start things from scratch, and make agents work more like engineers on their own workstations, where state is incremental and local feedback is fast. This is the same underlying claim in another form. CI/CD was built around discrete submissions into shared infrastructure. Agentic development needs continuous compute, warm state, and orchestration that can keep up with branching, validation, and reconciliation.

The result is not that CI’s underlying concerns vanish. Santos explicitly says CI still matters, but “it’s just shifted.” Validation no longer lives as a separate phase; every iteration goes through it. Invariants still have to be enforced, including compliance-relevant guarantees such as starting from a known checkout rather than unvetted code. Coordination moves out of CI as a separate gate and into the overall agent loop. Governance remains important, but it is lifted into the harness and into the policies that shape how agents make changes.

The claim, then, is more precise than the slogan “CI/CD is dead.” What dies is the PR-centered, delayed-feedback, human-paced pipeline as the organizing model. The functions of CI/CD survive: validation, invariant enforcement, coordination, and governance. But Faulkner and Santos argue that those functions are redistributed into caches, stateful environments, agent harnesses, pre-merge queues, and human review focused on intent and outcome rather than every diff.