PFF’s Two-Engineer Agent Team Shipped 10x More Output

PFF CTO Mike Spitz argues that AI agents change the basic operating constraint of an engineering organization: the question is no longer how to make engineers faster, but how to make agents faster. In a three-month case study, he says two agent-heavy engineers shipped far more frequently than a ten-person team on the same codebase, with PFF measuring a 10x output gain per engineer and higher customer satisfaction. The result, in his account, was not the end of engineers but the removal of Scrum-era coordination rituals and a sharper split between agent-executed work and human judgment.

The unit of optimization moved from engineers to agents

Mike Spitz said PFF’s three-month case study began with a change in the question the engineering organization was asking. Instead of asking how to help engineers output more, he asked how to make the agents faster.

That mattered because, in his framing, much of the modern software organization had been built around the engineer as the bottleneck. Spitz pointed to the long history of agile practice, software craftsmanship, and even the perks associated with engineering teams as signs of an industry structured to attract, retain, and optimize scarce human engineering capacity. At PFF, he argued, that assumption had started to break.

PFF is a sports data company serving NFL and NCAA teams, with a consumer business around fantasy football, sports betting, and draft tools. Spitz described a distributed engineering organization with engineers in India, Spain, and across the United States. The company had about 200 employees and around 20 engineers. It was falling behind competitors in an area where consumers were showing demand: draft-related tooling.

The company’s consumer draft product already had scale: Spitz cited more than 100 million pageviews per year and more than 9 million mock drafts started annually.

He began experimenting personally around November after Claude Opus became available, then spun the work out to two engineers: one of PFF’s strongest front-end engineers and one of its strongest full-stack engineers.

The case study compared that two-person, agent-heavy team with a roughly ten-person team. Spitz emphasized the obvious caveat: small teams are normally faster than large teams. Some part of the multiplier, in his view, came simply from the smaller group having less internal coordination overhead. But he also said the two-person team still had to coordinate its daily deployments with the larger team, so the comparison was not isolated from the real organizational friction around shipping.

The two-person team shipped far more often, and PFF tried to measure whether that output mattered

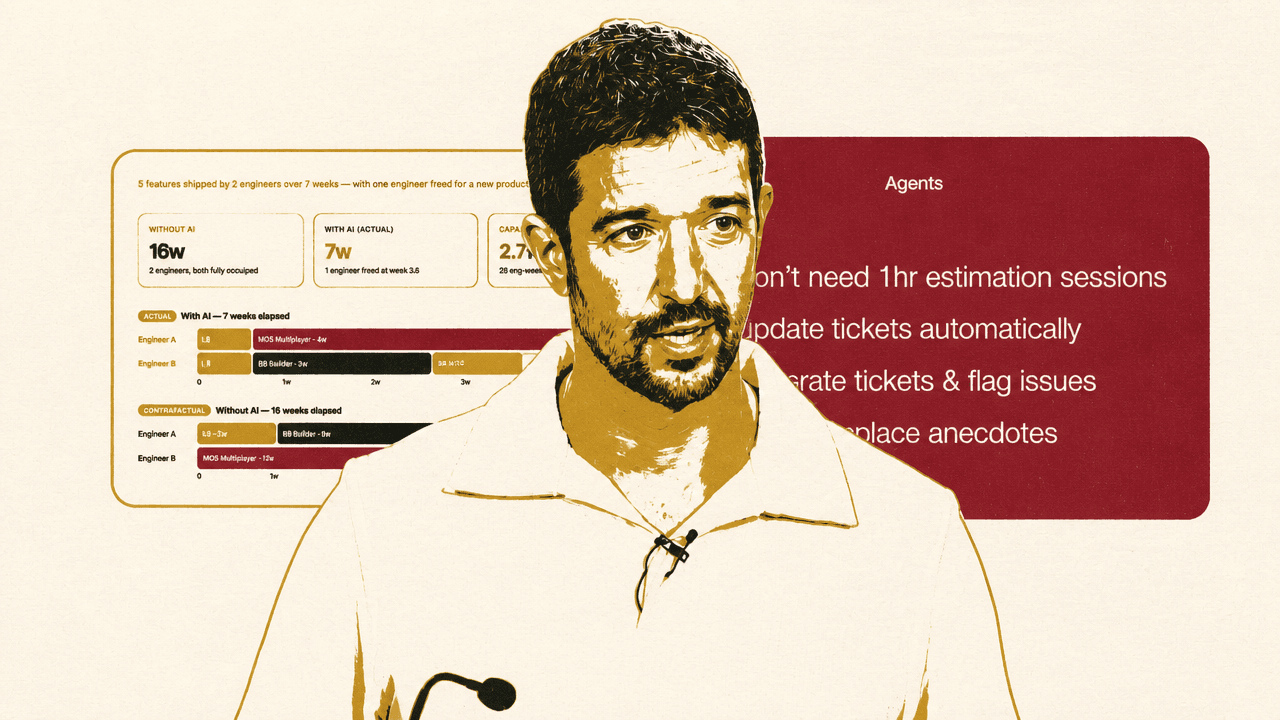

The most dramatic result Mike Spitz presented was deployment frequency. The two engineers were deploying about five times per day. In his spoken comparison, the roughly ten-person team was deploying about once every five days. The slide summarized the comparison as “five per day vs one per week” and reported a 25x difference against the team average.

Raw activity was not the measure. Spitz said pull requests and code volume were not useful ways to judge whether the work mattered. PFF instead blended ticket count with code complexity and found about 10x output per engineer compared with the ten-person team.

The counterfactual comparison was more revealing than the deploy count alone. PFF showed five features shipped by two engineers over seven weeks: PFF+ Multiplayer, My Drafts, BB Builder, BB MCs, and a new product one engineer could begin after being freed. In the actual AI-augmented version, one engineer was freed for new product work at week 3.5. In PFF’s non-AI estimate, the same work would have taken 16 weeks, with both engineers fully occupied.

| Measure | With AI | Without AI / prior baseline |

|---|---|---|

| Elapsed time for five features | 7 weeks | 16 weeks |

| Engineering allocation | One engineer freed at week 3.5 | Two engineers fully occupied |

| Capacity framing | 28 engineering-weeks of output from 10.2 input; 2.7x multiplier | Both engineers blocked for the projected feature set |

| Average quality score | 8.6/10, n=248 | Previously around 7 to 7.5, according to Spitz |

The operational claim was compounding capacity. When one engineer becomes unblocked in under a month rather than remaining tied up for three months, the organization can start work that previously would have waited.

Customer satisfaction was the control on the productivity story. Higher deployments and more output did not matter, Spitz said, unless customers were happier with what shipped. PFF ran statistically significant surveys and reported an average quality score of 8.6 out of 10 for the five features in the case study, with n=248. Before AI, he said, PFF’s average had probably been around 7 to 7.5, which he took as evidence that the company had not been delivering what customers wanted.

Scrum rituals fell away because their original job disappeared

For Mike Spitz, the process conclusion was blunt: Scrum did not survive. The company was not just measuring delivery gains from AI-assisted engineering; it was also removing process built for a human bottleneck.

The workflow that remained had two main parts. First, PFF replaced recurring ceremonies with huddle sessions. These huddles happened every other day, lasted roughly 30 minutes to an hour, and included engineers, product, and design. The purpose was immediate feedback on what had been built in the prior couple of days. The team was trying to deploy to production as quickly as possible in an MVP state and gather feedback.

Second, PFF built a flow from spec to lightweight design document, then to generated tickets and pull requests. The spec itself was not simply handed to an agent. Spitz said PFF had the agent interview the team and provide feedback on the spec. The agent then produced a lightweight design document using a skill that analyzed previous LDDs so new work followed the same ethos as prior work. Those LDDs were distributed for engineering feedback. After that, tickets were automatically created, followed by pull requests.

Several standard agile rituals lost their old function. Sprint planning disappeared because PFF no longer needed hour-long estimation sessions. The point was not that planning has no value; it was that estimates no longer mattered in the same way when the operating constraint changed. Spitz added one future exception: once token subsidization ends, teams may need to estimate token expenditure to decide whether the work is worth the cost.

Daily standups disappeared because tickets update automatically from pull request state. If a PR is open, the corresponding ticket moves to in progress. If the PR goes into review, the ticket updates. If the PR merges, the ticket closes. Spitz acknowledged that teams could have managed this before, but said it had become easier and more natural in the new workflow.

Sprint refinement moved upstream into the spec and LDD process. When PFF automatically creates tickets, Spitz said, it structures them so they do not block each other; where dependencies exist, the system flags them.

Retrospectives were displaced by a combination of immediate issue-flagging, customer satisfaction surveys, and development metrics such as deployment frequency. Spitz conceded that this might be controversial. His preference was for engineers to flag issues immediately rather than waiting until the end of a sprint. He contrasted that with a common retrospective failure mode: feedback raised a sprint later can feel unheard or stale.

The first adopters were deliberately the strongest engineers, not the average case

Mike Spitz’s rollout advice did not start with universal access. Teams should begin with engineers who have deep, broad system knowledge — the people others already go to when they are stuck. PFF chose two of its strongest engineers for the case study, one front-end and one full-stack.

Agent-heavy engineering, in this account, requires judgment about the system, not just willingness to use tools. The engineers most likely to thrive are curious and self-directed: the type who will spend time figuring out how something was built if they do not already understand it. Spitz contrasted them with an older style of engineer who needs highly prescriptive specs and said those engineers are likely to struggle.

The phrasing was deliberately sharp: “Not everyone can drive a sports car.” Spitz did not present that as a moral judgment. He said engineering organizations need to be honest about who can operate well in this mode and who may find it hard.

That caution also shaped his rollout advice. Teams should scale slowly, not give every engineer every coding assistant at once and declare the transition complete. Spitz criticized the pattern of handing out Claude Code or Codex, running a hackathon, and assuming the organization has changed. Every engineering organization has a different style, he said, and the transition needs to respect that.

He also recommended beginning in non-critical systems. Before the formal case study, he spent roughly two months building small proof-of-concept features and pushing them to production in areas where low traction meant bugs would not matter much. Only after that did PFF move the approach into a product area with 100 million annual pageviews.

The review model split what agents can check from what humans must judge

PFF’s guardrail architecture began with verifiable, deterministic tasks. Mike Spitz listed unit tests, end-to-end tests, linters, and PR prerequisites, but also emphasized organization-specific checks. For PFF, those included feature flags because the company uses trunk-based development, and potentially generated analytics for interactive elements.

The code-review model also changed. PFF did not want to rely on agents for system-design review — the kind of review engineers normally perform when they are reasoning about architecture and tradeoffs. Instead, agents took over the code-review feedback engineers dislike receiving: variable names, style mismatches, and other opinionated but relatively mechanical issues.

That split mattered culturally as well as technically. By offloading style and naming feedback to agents, PFF could remove some of the emotional charge from code review. Engineers could then focus on the larger questions.

Human review remained heavy at the front of the process. Spitz said people were still deeply involved in the spec and the lightweight design document, where the team decides how something will be built. Human review was lighter at the code level, except for security, and heavy again at the end for product feel.

Product feel was one of his recurring constraints. He said the current tooling makes it possible for people to create almost anything in an hour, but many outputs have the “brand feel and the product feel” of something created by Claude Code. For PFF, getting the best result meant having the engineering team spend time making sure shipped work still felt like part of the company’s existing product.

Security was another explicit human responsibility. Spitz said agents like to take shortcuts, so people need to ensure the security side is behaving. Humans also need to judge scale and engineering complexity: whether the team is spending tokens on work it does not need, whether the agent has over-engineered something, and whether the implementation will hold up. He said the LDD is meant to prevent problems such as an agent producing 1,000 lines of code for something that should have been simpler.

Autonomy required encoding the company’s engineering habits as composable skills

Mike Spitz described the development lifecycle as something like a factory. The point was not to make engineers interchangeable; it was to decompose engineering work into small, repeatable elements that agents can execute consistently.

In a car factory, he said, one part of the process builds a door and another fits a steering wheel. In software, the analogous pieces might include branch naming, feature-flag creation for trunk-based development, or the way a team builds APIs using a specific design pattern. PFF, for example, uses a service-repository pattern when building APIs. Spitz argued that those patterns should be abstracted into composable skills.

He warned against importing other people’s skills when they carry strong software opinions that conflict with the organization’s own. If external skills encode patterns at odds with the engineering culture, he said, teams will end up with issues. The skills need to match the organization’s own style.

PFF’s target loop involved agents at every stage: spec, lightweight design document, ticket creation, branch and PR creation, self-testing, and self-QA. Spitz said “pretty much everything” in that listed flow was fully autonomous at the time of the talk, but the boundary matters: the repair loop after QA failure was not yet built.

The QA loop was the most concrete example. Whenever PFF merges a PR, it automatically deploys to staging. Once staging deployment completes, a QA agent spins up. That agent looks at the tickets involved, reads the acceptance criteria, and performs QA against them. If everything passes, the flow proceeds. If not, the agent flags the failed items.

The next step Spitz wanted to build was automatic repair. Within the following couple of months, he wanted an agent to review the tickets, identify where acceptance criteria had not been met, and automatically create PRs to fix the issues. That is the “self-healing” flow he described: agents able to fix their own issues at each stage.

The benefit, in his framing, is not simply automation for its own sake. Once the team trusts agents, humans can parallelize more work. That is where the multiplier comes from: a small number of humans steering a larger number of agent-executed workflows.

The playbook was aggressive, but not indiscriminate

Mike Spitz’s recommendations had two sides. Teams should start with boring, repetitive tasks, ideally the work engineers already hate. That creates buy-in and removes obvious friction. They should remove as much redundant process as possible, asking what each meeting or process is actually for rather than preserving it because the industry has done it for two decades.

They should encode the team’s own engineering culture and patterns as skills. They should build functioning guardrails before moving toward autonomy. And they should use their best engineers to build the system out.

The warnings were just as strong. Do not onboard everyone at once. Do not try to create a one-size-fits-all approach. Do not try to do too much at once. The transition needs to be phased.

But excessive caution was also a risk. Spitz argued that other companies are moving quickly, and falling a few months behind can compound into being six months behind, then twelve. He said he already felt a few months behind from PFF’s perspective.

Small companies, he argued, have an advantage. Scaling this approach across 20 engineers is much easier than scaling it across 100, 1,000, or 10,000. That does not remove the need for a careful rollout. It changes the urgency. Teams should move in phases, but rely on their engineers to tell them whether the organization can scale faster or needs more time.