AI Will Expand Work, Not Replace It, Andreessen Argues

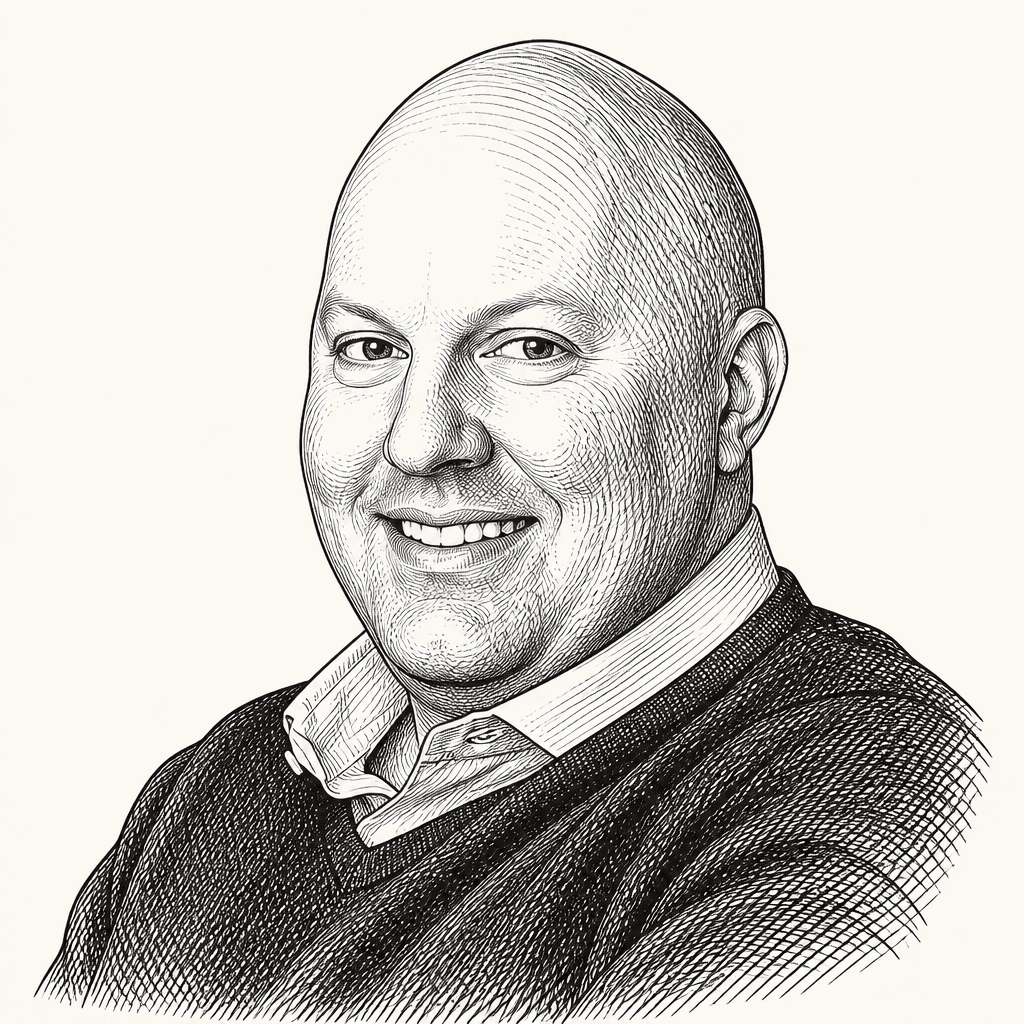

Marc Andreessen argues to Erik Torenberg that AI is more likely to expand work than eliminate it, turning coders, product managers and designers into more generalist “builders” whose productivity and bargaining power rise with the tools. He treats the current wave of AI anxiety as driven partly by stale experience with older models, hostile media narratives and institutions with incentives to preserve fear. His “golden age” thesis is conditional: the upside arrives where companies, workers and governments allow AI-driven capability to become more output, new roles and new firms.

Andreessen’s AI claim is not fewer workers, but much more work

Marc Andreessen’s central claim is that AI is expanding the amount of economically valuable work people can do, not simply substituting for labor one task at a time. He treats software development as the first visible test case: a domain where the tools are already good enough, adoption is already underway, and the effects can be observed directly.

The visible pattern, he says, is not programmers becoming idle. It is programmers working more.

Andreessen calls the leading adopters “AI vampires”: people using systems like Codex, Claude Code, and other AI coding tools so intensely that they “stop sleeping.” They are exhausted, “bleary-eyed,” and carrying “huge bags under their eyes,” but also euphoric. They are not acting like people whose market value has collapsed. They are acting like people who have discovered a sudden increase in what they can produce.

They've got these huge bags under their eyes, they're completely exhausted. But they're like euphoric. They're thrilled.

The “AI vampire” image is reinforced by stylized visuals: classical-painting-style workers at laptops, close-ups of tired eyes, and an “EUPHORIC” text overlay against a gold-and-binary background. The imagery is theatrical, but it matches the substantive claim Andreessen returns to later: the most aggressive adopters are exhausted because they can now do more, not because they have become irrelevant.

Andreessen says this is not limited to professional programmers. He describes former programmers who had stopped coding and have now picked it back up, as well as partners at a16z who had never coded and are now “ripping out software like crazy.” One partner, he says, built an entire AI system for his own work by “vibe-coding” it. When Andreessen asked whether he had looked at the code, the partner answered, “hell no.” Asked whether he had ever looked at any software code, the answer was also “hell no.”

The point is not only that AI makes coders faster. It is that AI lets non-coders produce working software at all. Andreessen’s answer is that it does, and that the consequence is a new class of hyper-productive workers, not merely a reduction in headcount.

His economic claim is conventional in form: when the marginal productivity of a worker rises, work expands. The worker becomes more valuable, not less. He says he is seeing this in compensation as well as behavior: the most productive AI-augmented coders are becoming more in demand, gaining more bargaining power, and seeing compensation rise.

He puts the increase in programmer productivity at the extreme edge as “the most dramatic increase in programmer productivity in like ever.” At leading-edge companies, he says, estimates are that leading-edge programmers are “like 20x more productive than they were a year ago.”

That does not mean he denies layoffs. He draws a distinction between AI-driven productivity gains and what he calls longstanding corporate bloat. Erik Torenberg raises Andreessen’s recent claim that companies have been “2 to 4X bloated” for a long time. Andreessen says the response he has received has often not been disagreement, but escalation: people telling him their former company was more like “8X bloated.”

Twitter/X is treated as the visible case. Torenberg says Twitter proved the point by cutting 70% or 80% of its workforce and still running “better or as good as it was before.” Andreessen says he does not know the exact number and would not say if he did, but believes “for sure the number has a 9 on it,” perhaps “high 9s.” His interpretation is that Elon Musk “forecasted the future through his own actions.” Supporting visuals include an article excerpt about Twitter layoffs and a displayed Elon Musk post claiming X traffic had reached an all-time high.

The bloat argument is separate from the productivity argument, but they intersect. Andreessen says every major Silicon Valley company is overstaffed and has been “basically forever.” He extends that beyond tech to corporate America broadly, and rejects the premise that companies are optimized for profitability. When companies want to make large cuts, he says, they need a rationale; AI is now a convenient one.

He does not call that rationale wholly false. If a company wants to generate the same amount of code, and each AI-augmented programmer can produce much more, then fewer programmers are needed for that fixed amount of output. But that framing misses the adjustment he considers decisive: companies will not keep output fixed. They will generate more code, build more products, and move faster. That, he predicts, produces “enormous amounts of employment growth on the other side.”

So he advises reading company layoff announcements “in code.” Some reductions may reflect actual productivity gains from AI. Some may reflect overdue correction of overstaffing. The bigger story, as he sees it, is that output expands when capability expands.

The tech job may collapse into the builder

The role Marc Andreessen expects to change most is not simply “coder,” but the split between programming, product management, and design. Torenberg cites a viral formulation that the future tech company might consist of product engineers or vibe coders, infrastructure/security people, “the adults in the room” such as legal and finance, and “hot people” or personality hires for functions such as sales and customer support.

Andreessen jokes about the “hot people” category as the familiar pharmaceutical or Oracle sales rep, but takes the broader job-structure question seriously. The emerging title he sees in leading Valley companies is “builder.”

A builder, in his description, is not just a programmer. It is a person responsible for creating complete products, using AI to fill gaps across coding, design, and product judgment. The old configuration had separate jobs: programmer, product manager, designer. Andreessen describes the current moment as a three-way standoff in which each group increasingly thinks it may not need the other two. Programmers think AI can help them with product and design. Product managers think AI can help them generate code and design. Designers can use AI to move into product and code.

Andreessen’s prediction: “they’re all correct.”

The consequence is not that all work disappears, but that the old occupational categories may. A person might enter the builder track from coding, product management, design, customer service, or another function. What matters is the ability to use AI to build a complete thing.

He situates this in a longer pattern of job transformation. Jobs disappear, new jobs appear, and the new jobs are often better. He points to the United States 200 years ago, when, in his comparison, almost everyone farmed; today, he says, only a small share does. Having grown up in farm country, he argues that people worried about job change would not want to go back and farm as people did in 1800.

This is the core of his “golden age” thesis. AI is not just a tool for a narrow technical class; it is a general augmentation layer that could become available to everyone. Opening imagery makes the thesis literal: “Golden Age” text over classical scenes, a robot in Roman armor addressing a crowd, and a robotic hand reaching toward a human hand in a Creation of Adam-style composition.

AI is going to be a superpower that everybody in the country and everybody on the planet is going to have access to.

Andreessen argues that if people become more productive in their line of work, they will be compensated accordingly, because the economy “naturally compensates according to productivity.” He expects a “rapidly rising ladder” of both income and jobs, provided the process is allowed to happen.

The political economy matters. Andreessen says the upside depends on “the degree to which it’s actually allowed to happen.” He contrasts the United States with Europe, which he predicts will “try to prevent all this from happening.” He says Europe has been falling behind economically and will continue to do so because of what he calls a “100% self-inflicted” wound.

The forecast is expansive, but conditional: the golden age arrives where AI adoption is permitted, normalized, and rewarded.

The junior-worker panic gets the direction wrong

Marc Andreessen’s advice to young people follows directly from the builder thesis: gain “AI superpowers.” For college students and new graduates, he frames AI as a historical accident in their favor. They have arrived at the moment when a new capability for augmenting human ability across “a thousand fronts” has appeared, and it will improve from here.

Older and supposedly wiser people, he says, will dig in, get angry, and resist. That resistance creates an opening for younger workers who make AI central to their professional and creative identity. His practical advice is to walk into interviews with evidence: not just a resume or portfolio, but a demonstration of how they use the technology and what capabilities that gives them.

He invokes a Douglas Adams observation about age cohorts and technology. If a new technology arrives when someone is under 15, it feels like how the world has always worked. Between 15 and 35, it is “cool and nifty” and can become a career. Above 35, it is “unholy” and should be destroyed. Andreessen says he is especially jealous of people between 15 and 25 right now. He does not generally wish he could go back in time, but says it would be “really, really fun” to be 18, 20, or 22 with these tools and figure out what to do with them.

Erik Torenberg adds that a16z is trying to hire more AI-native people because they can help the firm become more AI-native. Andreessen says that cuts against a current doomer narrative: that companies will stop hiring junior employees because entry-level work is easiest to replace with AI. He thinks the opposite is true.

Companies, in his view, should want “AI native kids.” They will outperform older “Luddite” peers “gigantically, titanically,” though older workers who adopt the tools can also do well. The result, he predicts, will be “super producers” unlike any seen before — including 18-year-olds, 24-year-olds, and even 14-year-olds using AI.

He closes the point with a joke that captures the tension: “the children yearn for the AI mines.” The joke is deliberately flippant, but the underlying claim is serious. AI-native young people are not merely candidates to be protected from automation; they may be the first large cohort whose default working style is built around it.

AI skepticism, in Andreessen’s account, is often based on stale contact with the technology

Marc Andreessen distinguishes several phenomena that get conflated in public discussion: genuine risks around sycophantic models, pejorative claims of “AI psychosis,” dismissive “AI cope,” and what he calls “AI psychosis psychosis.”

“AI psychosis,” as he describes the pejorative, refers to people being “whammied” by AI, especially through sycophancy. A user tells a model they have discovered an anti-gravity machine, and the model praises them as an unrecognized genius who has made a breakthrough in physics. Andreessen concedes there is a serious version of the concern: if people are prone to delusion and a model is overly sycophantic, the model can feed the delusion.

But he objects when the term is expanded to cover anyone reporting a positive or productive experience with AI. If someone says their productivity rose, that they have a thought partner for the first time, or that they made something they could not otherwise have made, critics may classify that as “AI psychosis.” Andreessen’s counter-label is “AI cope”: the move of treating positive AI experiences as delusional because one is committed to the belief that AI is fake, fraudulent, or merely a “stochastic parrot.”

“AI psychosis psychosis,” his further coinage, is for the angrier version — people who “froth at the mouth” in reaction to AI optimism.

The reason this is intensifying, he says, is that many people formed their view from earlier models. From roughly GPT-2 through GPT-4 or GPT-4.5, the systems were interesting and fun: they could produce Shakespearean rap lyrics or sustain late-night conversations. But they hallucinated, reasoned poorly, wrote code unevenly, struggled with math, and were too prone to sycophancy. Skeptics who used those systems got an accurate impression of that period, but now have a lagging view of the technology.

Andreessen says the current systems are “stellar.” He points to GPT-4.5, reasoning models, reinforcement-learning post-training across domains, agents, long-lived agents, and a new Codex “goal” feature that lets people have Codex work on projects for 24 hours or longer without human intervention. From the vantage point of his work, he says, the actual utility of these systems is “ramping incredibly quickly,” and serious companies expect capability to rise dramatically over the next couple of years.

His advice to skeptics is empirical: try the state of the art. If someone tried AI two years ago, six months ago, a free version, or a bundled add-on, they may not understand what the tools can do now. He says meaningful contact may require paying for a premium package — “literally” something like $200 — but that the point is to be “face-to-face with the actual technology” rather than operating from a stale impression.

The same distinction between talk and behavior appears in his critique of AI sentiment polling. Torenberg notes a tension: people use AI, benefit from it, and cannot live without it, while polls may show anxiety or hostility. Andreessen says this is why social science does not simply ask people what they think; it watches what they do.

People’s stated preferences often differ from their demonstrated preferences. He uses dating as an analogy: people may state a criteria list and then marry someone very different. Polls are also vulnerable to question framing. He invokes “push polls,” where wording is designed to generate a desired response or change respondents’ thinking, such as asking whether they would still support a candidate if they knew he killed kittens.

His claim is that AI polling is being shaped by a hostile media environment. He says the press “hates AI with the fury of a thousand suns” as part of a broader hostility to tech, and that a sustained fear campaign plus loaded polling can manufacture negative results on almost anything. His preferred evidence is usage: people are using AI heavily, he says; they love it; Net Promoter Scores are high; churn is shrinking; recurring usage and consumption are rising; company growth rates in usage and revenue are speaking for themselves. He calls AI “the fastest category of technology in the entire history of the world” in terms of growth rate of usage and revenue.

He also criticizes AI companies themselves for contributing to public fear. Some companies, he says, have run fear campaigns while building the very systems they warn people about. The public should therefore watch what they do, not only what they say.

As a corrective to panic, he cites a poll by David Shor that asked Americans to rank the issues they care about. Andreessen says, from memory, that AI ranked around number 29. His interpretation is that outside the AI discourse bubble, Americans have more immediate concerns: energy costs, crime, drug addiction, mortgage payments, children’s schools, health. AI is not yet central to most daily worries.

The Anthropic blackmail story becomes an allegory about doomer literature

Erik Torenberg opens the AI-risk discussion with what he calls the Anthropic blackmailing incident and connects it to a concept from Joe Hudson called the “golden algorithm”: whatever a person is scared about, they bring about in exactly the way they feared. If someone fears abandonment, they become insecure, and their insecurity drives people away. Torenberg suggests the AI version is that people afraid AI will become evil have written extensively about the ways it could become evil, possibly seeding the behavior they fear.

Marc Andreessen says he has not studied the incident in detail and has only seen Anthropic’s thread, not the underlying material. With that caveat, he says the thread appeared to trace blackmail behavior to AI-doomer literature present in the training data. His interpretation is that the movement’s own scenarios of rogue AI — written over decades — may have contributed to behavior the same movement says it wants to prevent.

He adds a pointed criticism of Anthropic as, in his words, “half doomer.” If the goal is not to build a killer AI, he says, “step one would be don’t build the AI,” and step two would be not to train it on literature saying it is supposed to be a killer AI. Torenberg’s “golden algorithm,” Andreessen says, combines with “the snake eating its tail.” He likens the situation to the horror-movie line: “the call is coming from inside the house.”

The argument is less a technical diagnosis than a cultural one. Andreessen is saying that AI risk discourse is not outside the system it describes. If its texts enter training corpora, they can become part of model behavior. If its institutions build models while warning of catastrophic tendencies, they create paradoxes between advocacy, practice, and incentives.

Moralized institutions are judged by incentives, not labels

Erik Torenberg introduces “suicidal empathy” through Gad Saad’s forthcoming book and a quote from Matt Kramer: if one’s empathy does not produce forgiveness, acceptance of others’ “spiritual sovereignty,” or understanding of people who think and live differently, then it is not empathy but “empathy TM.”

Marc Andreessen summarizes Saad’s concept as a critique of social reform movements that claim to produce positive change but generate severe negative consequences. He connects it to Thomas Sowell’s long-running critique of reform movements and to recent progressive activism around criminal justice, policing, and “harm reduction.” In San Francisco, he says, harm reduction ended up handing out drug paraphernalia and, in some cases, free drugs to people “literally dying in the street from drug addiction.” Reformers claimed compassion, but in his account helped kill both addicts and the city while innocent people were harmed.

Still, Andreessen argues that the phrase “suicidal empathy” lets the actors off the hook. If activists were truly governed by overwhelming empathy, he says, they would show empathy toward enemies. Instead, he argues, they often delight in destroying ideological opponents. And if they were suicidal, they would not use the movements to gain power, status, and money.

His San Francisco example is the nonprofit ecosystem: organizations that, in his account, damage the city while being lavishly funded by city and state government. He concludes that the phenomenon is not really empathy or self-destruction. It is, in his words, hateful, greedy, self-aggrandizing, and oriented toward accumulating power and resources.

Torenberg then asks about what he calls the “SPLC incident,” framing it as a possible example of groups secretly funding the threats they publicly fight. Andreessen’s answer is sweeping and explicitly allegation-based. He says the Southern Poverty Law Center matters because, in his view, it played a dominant role in debanking, censorship, and cancellation over the last 15 years. In many meetings with companies, he says, “the SPLC’s word was gospel.” If the SPLC labeled someone bad, he says, that could mean removal from social platforms, debanking, inability to get a job, and “social and economic death.”

Andreessen says the organization occupies a “twilight world”: not a government agency subject to government oversight, not a conventional company, and operating as a nonprofit that can raise tax-advantaged money. In his account, that status gave it unusually intense power in the business world, the financial sector, and Silicon Valley.

On the alleged legal matter, Andreessen says the SPLC has not yet presented a defense, that the material he is describing consists of allegations, and that the presumption of innocence applies. He then describes what he says are allegations in a Justice Department indictment: alleged misuse of donor funds, alleged money laundering and other crimes, and alleged support for extremist groups. His remarks include an unclear sequence in which he starts to refer to January 6, corrects himself to Charlottesville, and then again refers to January 6-related allegations. The uncertainty remains part of the source.

The broader argument is about incentives. If a group’s purpose and fundraising depend on fighting an enemy, Andreessen argues, it has an incentive for that enemy to remain visible. Torenberg compares the idea to a Nathan Fielder-style premise: if the business model is fighting racism, fund more racism to get more business. Andreessen agrees and returns to his objection to “suicidal empathy”: the label is too flattering if the actors are actually gaining power, money, and institutional authority.

His conclusion is not that such groups necessarily should be banned. He says perhaps, in America, they should be allowed to do all this. But he objects to being lied to and to having deplatforming, censorship, and debanking dressed up as moral necessity.

Official narratives are weakest where behavior and evidence diverge

On UFOs, Marc Andreessen begins with a disclaimer: he does not know anything others do not know. His disposition is open — “I want to believe” — because the number of galaxies, stars, planets, and Earth-like planets makes extraterrestrial life seem plausible to him. But he says UFO examples tend to fall apart as one gets closer to the details. Military camera footage of strange moving objects may resolve into parallax effects, instrument artifacts, camera artifacts, digital imagery artifacts, weather balloons, ball lightning, or other explanations. He has not yet seen the case that “tipped” him over into belief.

At the same time, he acknowledges government secrecy around aerospace programs. Erik Torenberg asks why the government would hide materials if there were nothing to worry about. Andreessen’s answer is that classified aircraft development provides a plausible explanation. Stealth fighters and bombers were highly classified; test flights required cover stories and suppression of information. Area 51, he says, was the classic example, tied to classified test flights for new aircraft.

He also entertains the possibility that UFO stories may at times have served as overt cover stories. If a military intelligence officer wanted to protect a classified flight program, a UFO cult could be useful. It gives observers a false story to believe instead of recognizing a breakthrough military technology. More subtly, it stigmatizes investigation. If pilots fear being viewed as UFO nuts, they may avoid reporting strange things — a problem if the objects are truly anomalous, and also a problem if they are foreign drones or other national-security concerns.

The media environment changes what happens next. In the old system, Andreessen says, UFO culture developed through broadcast television, official programming, mimeographed newsletters, and paperback books. In the new system, the old walls collapse and the Overton window disintegrates. Every UFO theory can spread. So can propaganda campaigns intended to hide real information. Pressure builds until someone in power decides to “rip the Band-Aid off” — assuming the details are not still being fudged.

Andreessen’s generational argument gives the same media shift a human form. Torenberg asks about Chris Arnade’s point that the major divide is not only educational but generational: boomers are more confident in inherited truth, while younger people are more post-truth, relativistic, or pluralist.

Andreessen says boomers believed what was on television. He cites the line that a baby boomer is someone who believes the talking head on the TV set. They grew up with Walter Cronkite and the idea that the television or the New York Times told them the truth. Andreessen says this was “always BS,” but it was what boomers believed. People under 40, and especially people around 20, have seen too many examples to take that seriously.

The second part is moral relativism. Andreessen says a key part of “Boomer Truth” is the belief that there is no fixed morality: values are self-chosen, all cultures are equal, Western society is not superior, and if anything the West is worse. Before “woke,” he says, there was political correctness; during his college years, that took the form of multiculturalism or “multi-culti.” Torenberg mentions Peter Thiel and David Sacks’s 1995 book The Diversity Myth. Andreessen also invokes The Closing of the American Mind, which he describes as a major book arguing that colleges were teaching students there was no morality, only choose-your-own-adventure values.

His formulation is that boomers had “a fixed received belief that there is no fixed morality.” The media apparatus, cultural program, and educational system were then built around that belief. Younger people inherited the downstream consequences: COVID, woke politics, school impositions, and institutional messaging that taught them both not to trust authority and not to accept any stable moral framework from that authority.

The result, in Andreessen’s view, is a worldview that is more open-minded and more critical at once. Zoomers are more interested in ideas, more skeptical of authority and received wisdom, more cynical about manipulation, and more sensitive to the media environment. They are aware, he says, that “psychological warfare” is happening and that they have been on the receiving end of it. Their contempt for authority figures is, in many cases, “very well earned.”

That cultural backdrop also explains his interest in internet-native slogans. Torenberg asks whether “retardmaxxing” can be summarized as “stoicism meets you can just do things.” Andreessen says no: it is just “you can just do things.” Stoics, he argues, put a great deal of effort into being stoic. The point of retardmaxxing is not to put effort into becoming a certain kind of person; it is simply to do the thing.

Asked how he monitors so many situations, Andreessen gives a deliberately half-comic, half-serious answer. Being plugged into the MTS fire hose is “absolutely critical,” he says, and he praises the tools the team is developing. He had been following coverage of the OpenAI trial on MTS that week. More broadly, he says he long ago “wire jacked” the back of his skull into social media: a continuous X feed, Substack feed, and YouTube feed. But he adds the counterweight: reading enough old books to balance the daily fire hose.

Andreessen’s operating preference is visible across the discussion: workers using AI until they stop sleeping matter more than labor-market panic; users returning to AI products matter more than negative polls; young people’s distrust of official narratives comes from lived experience; and institutions should be judged less by their mission statements than by their incentives and effects.